Web scraping is a technique that lets programmers connect to a website through code and download the HTML and JavaScript it serves. The downloaded code is then analyzed with a few libraries that help extract the data we want.

The benefit of web scraping with a programming language like Python is that we are not restricted to extracting data from one page: if a website's structure is consistent enough, we can iterate through all of its pages and scrape as much data as possible.

Web scraping is not a foolproof method. Like any other tool, it has limitations and situations where it does not work correctly. If we are fortunate, we may not face these problems when scraping. Every website has its own quirky structure and protection systems, so each one is a new challenge.

You can't download the code for BeautifulSoup

Unfortunately, there is often no workaround in code when this problem arises. A site may have enabled protections that prevent your script from connecting and downloading the page for BeautifulSoup to parse. If a site detects that you are sending GET requests without using a browser interface, it might block you. In that scenario, it is unlikely that any other library would work either.
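As a minimal sketch of what such a request looks like, the snippet below sends a GET request with browser-like headers and reports when the site refuses it. It uses only Python's standard `urllib`; the header values and the helper names `build_request` and `fetch_html` are assumptions made for illustration, and nothing here is guaranteed to get past a site's protections.

```python
import urllib.error
import urllib.request

# Hypothetical browser-like headers; the exact values are an assumption.
BROWSER_HEADERS = {
    "User-Agent": (
        "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 "
        "(KHTML, like Gecko) Chrome/120.0 Safari/537.36"
    ),
    "Accept-Language": "en-US,en;q=0.9",
}


def build_request(url):
    """Create a GET request that carries browser-like headers."""
    return urllib.request.Request(url, headers=BROWSER_HEADERS, method="GET")


def fetch_html(url, timeout=10):
    """Return the page HTML, or None if the site refuses or cannot be reached."""
    try:
        with urllib.request.urlopen(build_request(url), timeout=timeout) as resp:
            return resp.read().decode("utf-8", errors="replace")
    except urllib.error.HTTPError as err:
        # 403 and 429 are common responses when a site blocks automated clients.
        print(f"Request refused with status {err.code}")
        return None
    except urllib.error.URLError as err:
        print(f"Could not connect: {err.reason}")
        return None
```

If `fetch_html` returns `None`, there is nothing to hand to the parser, which is exactly the situation described above.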

You can't parse the code

At times, the software can still download the HTML, but for some reason the code can't be parsed, that is, converted into well-structured BeautifulSoup objects. If we can't parse it, we can't use any of the methods the BeautifulSoup library provides for scraping information, which makes automating the process impossible.
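One way to notice this early is to check whether parsing actually yields the elements we expect. The sketch below deliberately uses the standard library's `html.parser` instead of BeautifulSoup so that it runs without extra installs; the `<p class="review">` markup and the names `ReviewParser` and `extract_reviews` are hypothetical.

```python
from html.parser import HTMLParser


class ReviewParser(HTMLParser):
    """Collect the text of every <p class="review"> element (hypothetical markup)."""

    def __init__(self):
        super().__init__()
        self.reviews = []
        self._inside = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "p" and "review" in classes:
            self._inside = True
            self._buffer = []

    def handle_data(self, data):
        if self._inside:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "p" and self._inside:
            self.reviews.append("".join(self._buffer).strip())
            self._inside = False


def extract_reviews(html):
    """Return the review texts found in the HTML; an empty list means none were found."""
    parser = ReviewParser()
    parser.feed(html)
    parser.close()
    return parser.reviews
```

An empty list for a page that should contain reviews is a sign that the markup did not parse into the structure we assumed, and that the automation cannot continue as written.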

Website structure without any logic

At other times, the code is correctly accessed, downloaded, and parsed, but the website is so poorly designed that no general structure can be recognized across similar pages. This rarely happens, but we have had to cope with it occasionally, and it results in many pieces of data being lost because the retrieval procedure cannot be properly automated.

It is too difficult

We hope you never deal with this problem, but a few websites can overwhelm you with quantities of code that are impossible to decode correctly. At times, the information is buried in structures generated by JavaScript and obscured by hashes, and although all the data you need is somewhere in the code, there is no simple way to extract it.

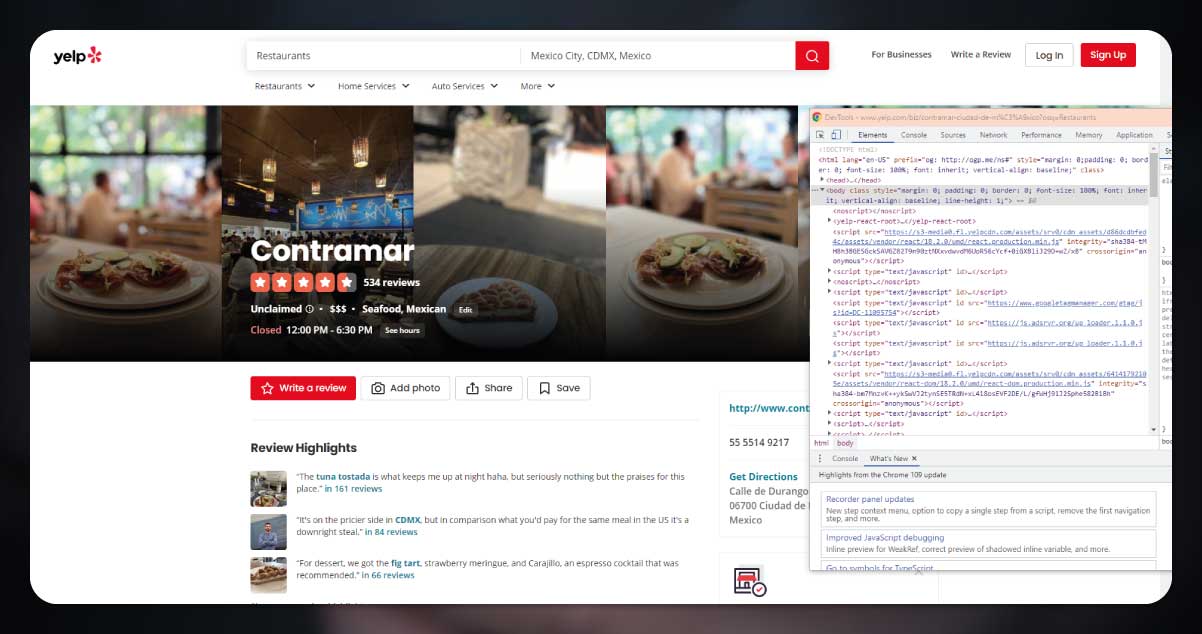

In this blog, we will concentrate on extracting the reviews of a single restaurant.

To scrape the data correctly, we will follow these steps:

The procedure is intuitive and can be summarized as follows: first, we check whether web scraping is possible; if it is, we scrape a single page, and then we extend the code to many pages.

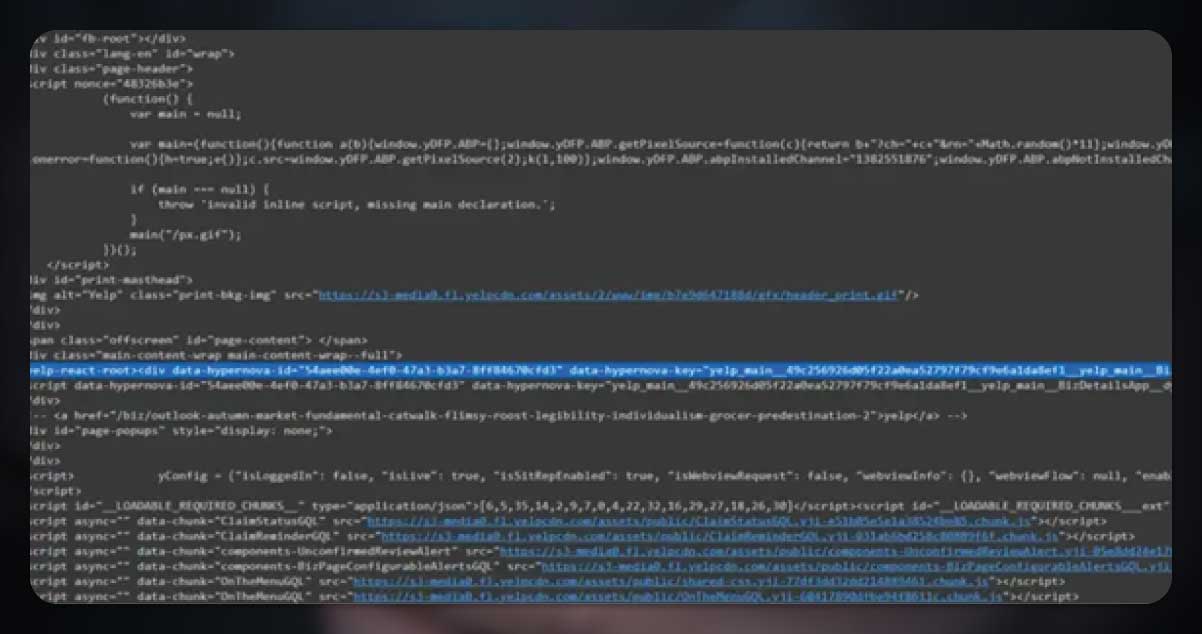

At first, we thought there was no hope. It took us a while to realize that all the reviews were contained in a single line of HTML code, which we needed to analyze.

The next challenge was to see whether there was any evidence of a logic that would let us iterate through the different review pages of the same restaurant.

Luckily, the logic is straightforward. Once we have identified the restaurant we want to scrape, we can change the number in the link, which, divided by 10, acts as an index for the review page. Iterating through the pages is then very easy.
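The pagination logic can be sketched as follows. The URL template is a made-up placeholder, not the real site's link format, and `review_page_urls` is a hypothetical helper; the only thing taken from the text is that the number in the link grows in steps of 10.

```python
def review_page_urls(template, n_pages, step=10):
    """Build one URL per review page; page k uses the offset k * step."""
    return [template.format(offset=page * step) for page in range(n_pages)]


# Hypothetical link format: the real site's URLs will look different.
TEMPLATE = "https://example.com/restaurant-reviews-or{offset}.html"

urls = review_page_urls(TEMPLATE, 3)
for url in urls:
    print(url)
```

Keeping the offset arithmetic in one small function makes it easy to adjust if a site uses a different step or starts counting from another value.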

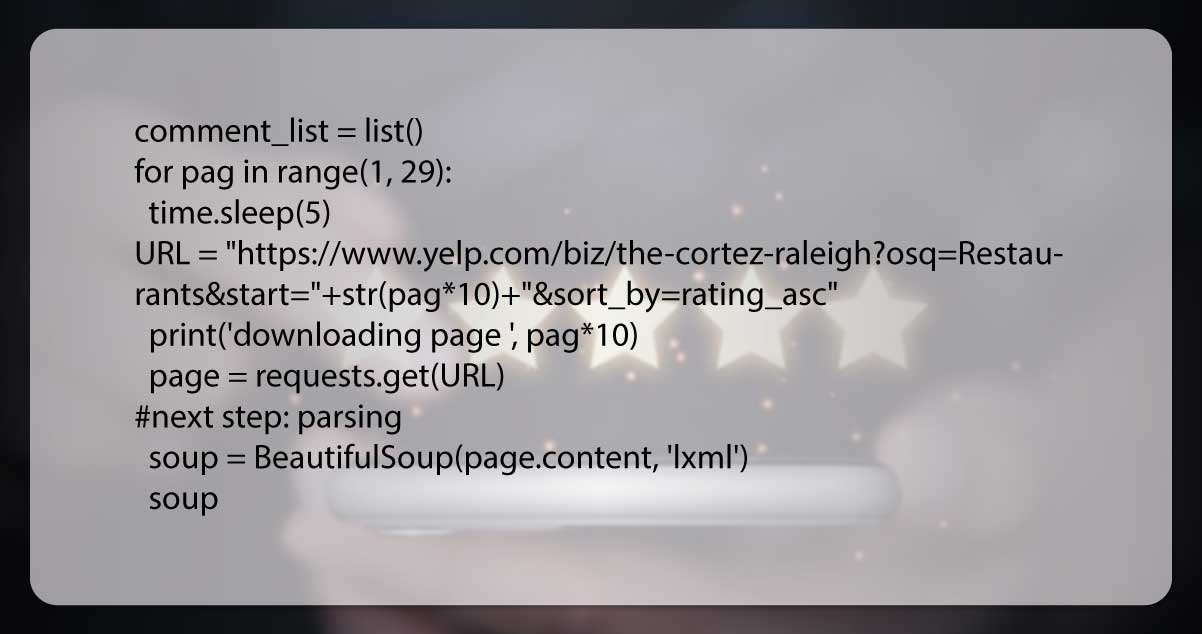

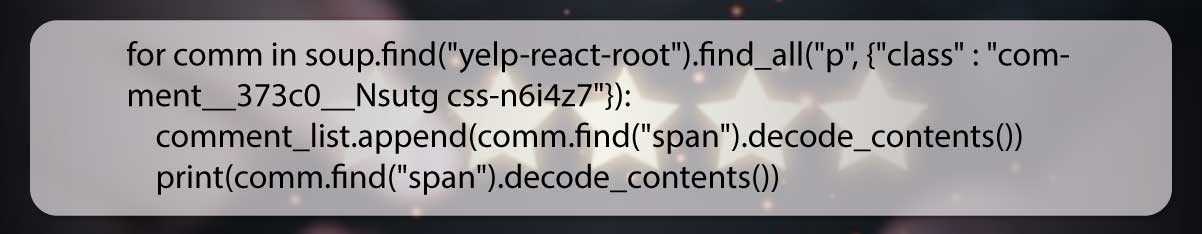

Here is the Python code to follow:

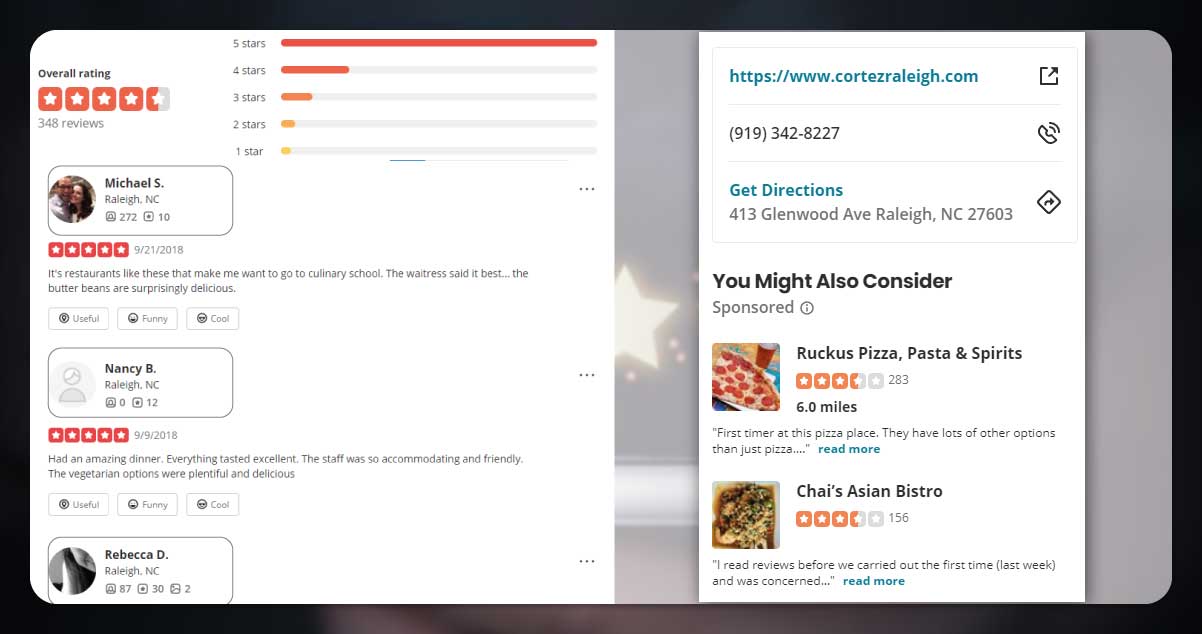

While downloading the data, the code shows us the progress made and the data downloaded.
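A loop with this kind of progress output might look like the sketch below. It is not the code from the screenshot: `scrape_all_pages` is a hypothetical helper, and `fake_fetch` stands in for whatever function downloads and parses one page.

```python
def scrape_all_pages(urls, fetch_reviews):
    """Call fetch_reviews on every URL, printing progress after each page."""
    all_reviews = []
    for index, url in enumerate(urls, start=1):
        page_reviews = fetch_reviews(url)
        all_reviews.extend(page_reviews)
        print(
            f"Page {index}/{len(urls)}: {len(page_reviews)} reviews "
            f"(total so far: {len(all_reviews)})"
        )
    return all_reviews


def fake_fetch(url):
    """Stand-in for a real download-and-parse step (assumption for the demo)."""
    return [f"review from {url}"]


reviews = scrape_all_pages(["page-0", "page-10"], fake_fetch)
```

Passing the fetch function as a parameter keeps the loop independent of how a single page is downloaded, so it can be tried out without touching the network.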

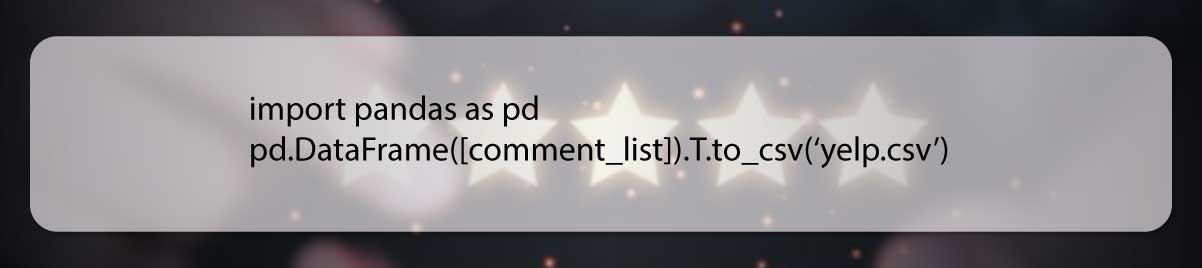

Exporting the results from a list is straightforward. We could use a text file; however, we prefer CSV because it makes it easier to move the data into other software.
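A minimal sketch of the export step, assuming the reviews are held in a plain list of strings, could use Python's built-in `csv` module. The file name `reviews.csv`, the single `review` column, and the sample texts are assumptions for illustration.

```python
import csv


def export_reviews(reviews, path):
    """Write one review per row under a single 'review' header."""
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.writer(handle)
        writer.writerow(["review"])
        for text in reviews:
            writer.writerow([text])


# Made-up sample data; commas and quotes are escaped by the csv module.
export_reviews(["Great food, friendly staff", 'He said "wow"'], "reviews.csv")
```

Letting the `csv` module handle quoting matters here because review text often contains commas, quotes, and line breaks that would corrupt a hand-built file.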

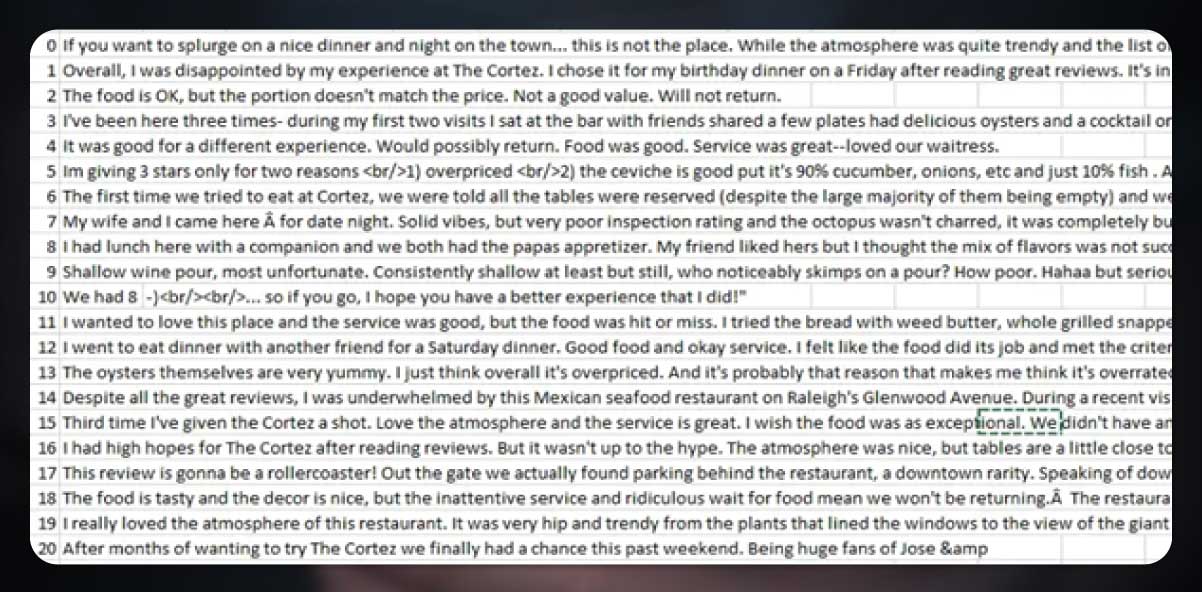

As you can see in the screenshot, we have successfully exported all the reviews in a single CSV file.

We can do many interesting things to get the most value out of the data we have just downloaded. We could clean it and then run a sentiment analysis on it. After that, we could download data from different restaurants and visualize the best places in the area; it depends entirely on our imagination.
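As one possible first step, a simple cleaning pass could normalize whitespace and drop empty or duplicate reviews before any analysis. This is only a sketch: `clean_reviews` is a hypothetical helper, and real review data may need more careful handling.

```python
import re


def clean_reviews(reviews):
    """Collapse whitespace and drop empty or case-insensitive duplicate reviews."""
    cleaned, seen = [], set()
    for text in reviews:
        normalized = re.sub(r"\s+", " ", text).strip()
        key = normalized.lower()
        if normalized and key not in seen:
            seen.add(key)
            cleaned.append(normalized)
    return cleaned
```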

