Introduction

Web Scraping with Python has become a widely used technique for extracting data from websites for competitive analysis, market research, and automation. Python’s powerful libraries, including BeautifulSoup, Scrapy, and Selenium, allow businesses to extract structured and unstructured data efficiently. However, raw scraped data often contains errors, duplicates, and inconsistencies, making it difficult to analyze directly. This is where Scraped Data Transformation plays a critical role.

According to industry reports:

| Statistic | Details |
| --- | --- |
| 85% of businesses | Use web scraping for market intelligence. |
| 60% of scraped data | Requires transformation before use. |
| Data cleaning errors | Can lead to a 40% drop in decision-making accuracy. |

Without Data Cleaning in Python, businesses risk basing decisions on flawed data. By applying Data Mapping with Pandas, organizations can clean and structure the extracted information to ensure its usability. Implementing an ETL Process for Web Scraping enhances workflow efficiency, enabling companies to make data-driven decisions based on accurate, structured information.

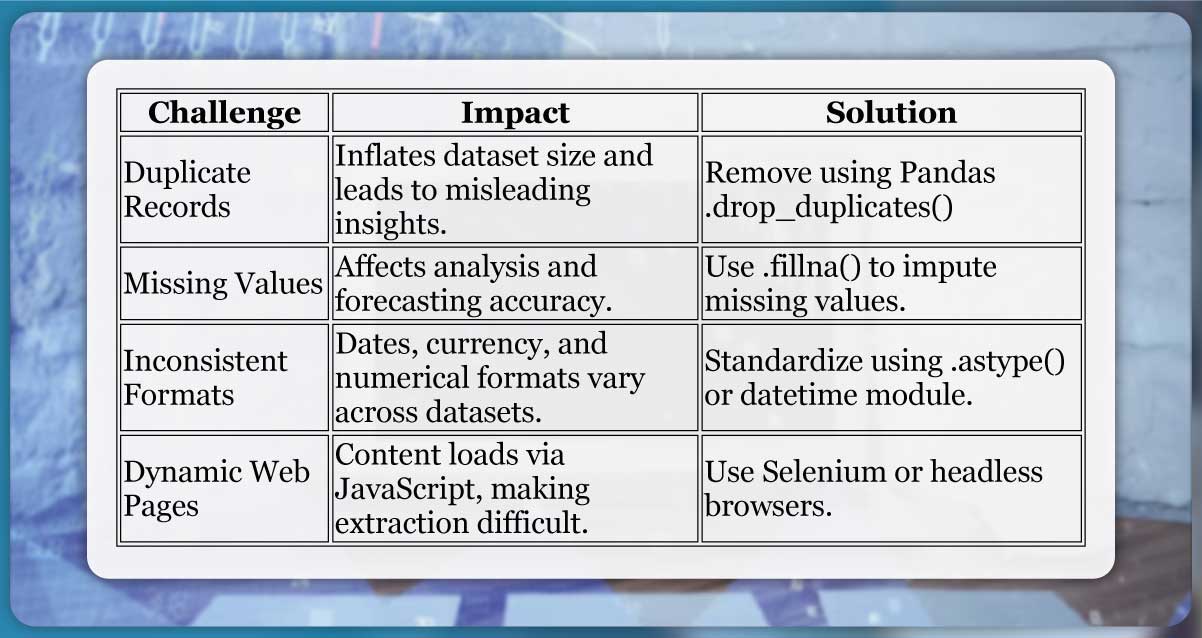

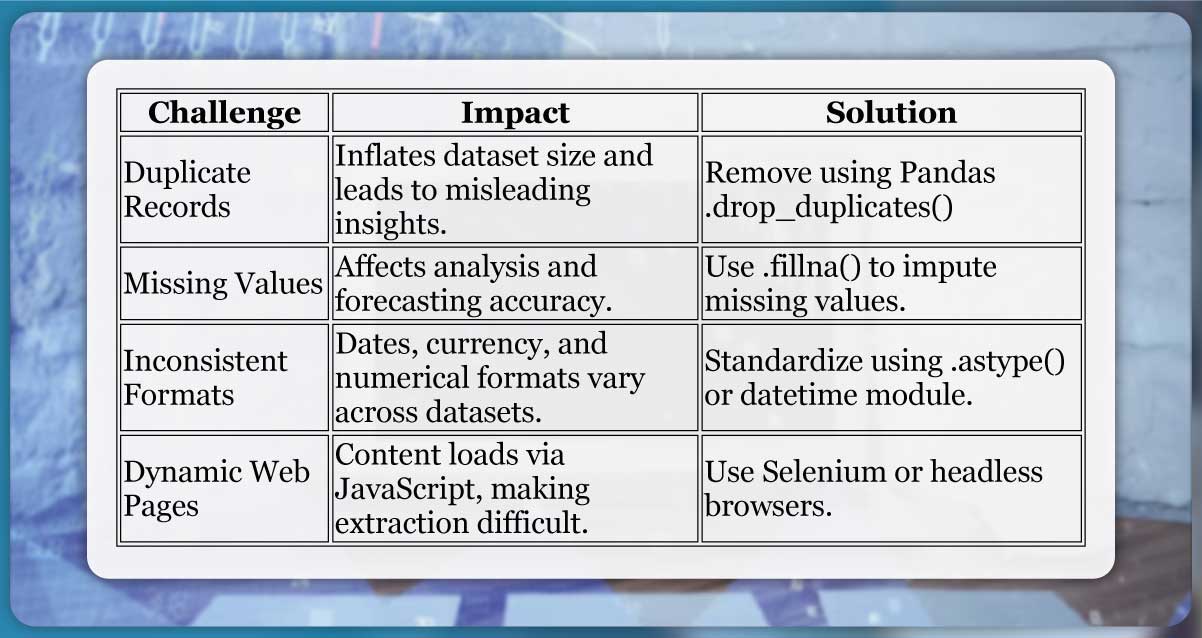

Challenges of Handling Raw Scraped Data

Extracting data is only the first step; processing and refining it is where most difficulties arise. Common challenges with raw scraped data include:

| Challenge | Impact | Solution |
| --- | --- | --- |
| Duplicate Records | Inflates dataset size and leads to misleading insights. | Remove using Pandas .drop_duplicates(). |
| Missing Values | Affects analysis and forecasting accuracy. | Use .fillna() to impute missing values. |
| Inconsistent Formats | Dates, currency, and numerical formats vary across datasets. | Standardize using .astype() or the datetime module. |
| Dynamic Web Pages | Content loads via JavaScript, making extraction difficult. | Use Selenium or headless browsers. |

Without proper Data Cleaning in Python, these challenges can lead to incorrect analysis and flawed decision-making. Proper Scraped Data Transformation ensures that data is structured, standardized, and reliable.

Why Data Transformation and Mapping Are Crucial for Analysis

Once data is scraped, it must be transformed and mapped into a structured format to be useful. Poorly mapped data can lead to inaccurate insights and inefficiencies in business processes. Data Mapping with Pandas ensures datasets are correctly structured and aligned with industry standards.

| Aspect | Impact of Poor Transformation | Benefit of Proper Mapping |
| --- | --- | --- |
| Price Monitoring | Incorrect product-price mapping leads to wrong competitor analysis. | Accurate pricing insights for competitive advantage. |
| Sentiment Analysis | Scraped reviews with missing sentiment labels distort results. | Reliable customer sentiment tracking. |
| Predictive Analytics | Unstructured data affects model accuracy. | Clean, structured data improves forecasting. |

By following an ETL Process for Web Scraping, businesses ensure that raw data undergoes systematic cleaning, transformation, and storage, making it ready for advanced analysis and decision-making.

Understanding Scraped Data

What Raw Scraped Data Looks Like (Unstructured, Inconsistent Formats)

Raw data obtained from Web Scraping with Python is often unstructured and needs Python Data Processing before it becomes useful. Websites display information in various formats, including HTML, JSON, XML, and dynamically generated JavaScript content. This causes inconsistencies when extracting data, as the same type of information may appear in different structures across pages.

For example, a product’s price might appear in different ways:

| Source | Price Format |
| --- | --- |
| Website A | ₹1,299 |
| Website B | Rs. 1,299/- |
| Website C | 1299 INR |

These inconsistencies make direct comparison difficult. Proper Data Structuring with Python ensures that all extracted values are converted into a uniform format for better analysis.
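As a minimal sketch of that conversion, the three price strings from the table above can be reduced to one numeric value with a regular expression. The helper name `parse_price` is hypothetical, and the pattern assumes the amount is the first run of digits in the string, with commas used only as thousands separators.

```python
import re


def parse_price(raw: str) -> float:
    """Extract the numeric amount from a scraped price string."""
    # Match the first run of digits, allowing commas and an optional decimal part.
    # Currency markers such as ₹, Rs., INR and /- are simply not matched.
    match = re.search(r"\d[\d,]*(?:\.\d+)?", raw)
    if match is None:
        raise ValueError(f"no numeric amount found in {raw!r}")
    # Assumption: commas are thousands separators, never decimal marks
    return float(match.group().replace(",", ""))


samples = ["₹1,299", "Rs. 1,299/-", "1299 INR"]
prices = [parse_price(s) for s in samples]
print(prices)  # [1299.0, 1299.0, 1299.0]
```

Sites that use a comma as the decimal mark would need a different rule, so the separator assumption is worth checking per source.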

Common Issues: Missing Values, Duplicate Records, Incorrect Data Types

Raw data from scraping often contains missing values, duplicates, and incorrect data types, which can lead to errors in Big Data Analytics with Python.

| Issue | Impact | Solution |
| --- | --- | --- |
| Missing Values | Incomplete datasets lead to inaccurate analysis. | Use .fillna() or drop empty values. |
| Duplicate Records | Inflates dataset size and affects machine learning models. | Use .drop_duplicates() to remove redundancy. |
| Incorrect Data Types | Numeric values stored as text can break calculations. | Convert using .astype(int) or .astype(float). |

For Visualizing Scraped Data to be effective, proper cleaning is crucial. Without preprocessing, graphs and models based on raw data may produce misleading results.

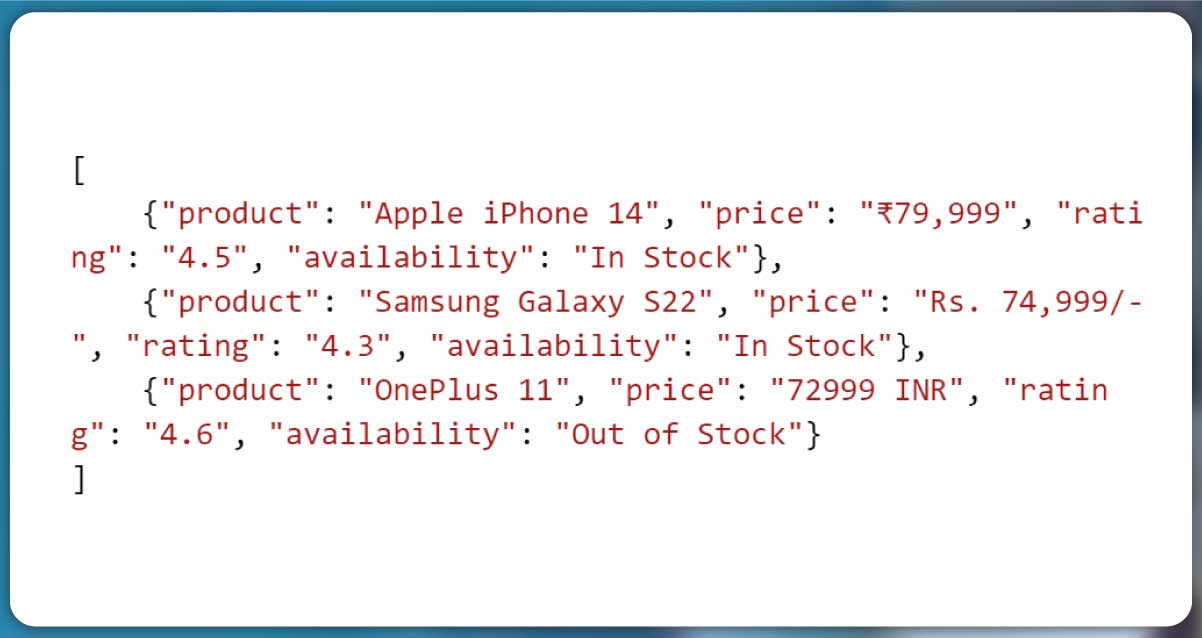

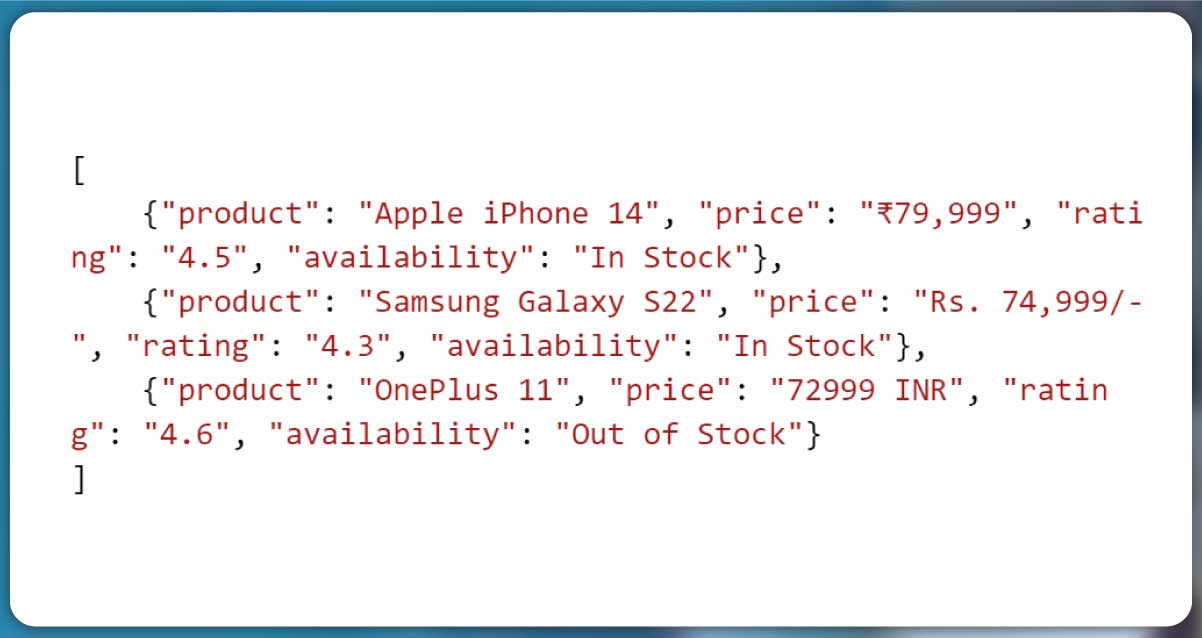

Examples of Raw Data from Web Scraping

Here’s an example of unprocessed scraped data:

Problems:

1. Inconsistent price formats (₹, Rs., INR).
2. Availability not standardized (some products are in stock, others not).
3. Different rating scales (some sites may use 1-10 instead of 1-5).
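To make these three problems concrete, the following sketch builds a small set of hypothetical records (invented for illustration, not taken from any real site) and standardizes availability and ratings. The column names, the `rating_to_five` helper, and the list of "in stock" spellings are all assumptions; prices are left as text here.

```python
import pandas as pd

# Hypothetical records, invented purely for illustration
raw = pd.DataFrame(
    {
        "product": ["Laptop", "Phone", "Tablet"],
        "price": ["₹50,000", "Rs. 30,000/-", "20000 INR"],
        "availability": ["In Stock", "out of stock", "Available"],
        "rating": ["4.5/5", "8/10", "4"],
    }
)


def rating_to_five(raw_rating: str) -> float:
    """Rescale a rating such as '8/10' to a 5-point scale."""
    value, _, scale = raw_rating.partition("/")
    # Assumption: a rating with no explicit scale is already out of 5
    scale_max = float(scale) if scale else 5.0
    return round(float(value) / scale_max * 5, 2)


# Assumption: these two spellings are the only ones that mean "in stock"
in_stock_labels = {"in stock", "available"}

clean = raw.copy()
clean["in_stock"] = clean["availability"].str.strip().str.lower().isin(in_stock_labels)
clean["rating"] = clean["rating"].map(rating_to_five)
print(clean[["product", "in_stock", "rating"]])
```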

Using Geospatial Data Mapping, businesses can structure this information based on location-based pricing and availability trends. Data Structuring with Python helps convert this messy data into clean, usable datasets, essential for Big Data Analytics with Python.

Essential Python Libraries for Data Transformation

Processing scraped data efficiently requires powerful Python Data Processing tools. Python offers several libraries that help clean, structure, and transform raw data into an analyzable format. Below are some essential libraries for Data Structuring with Python and their key use cases.

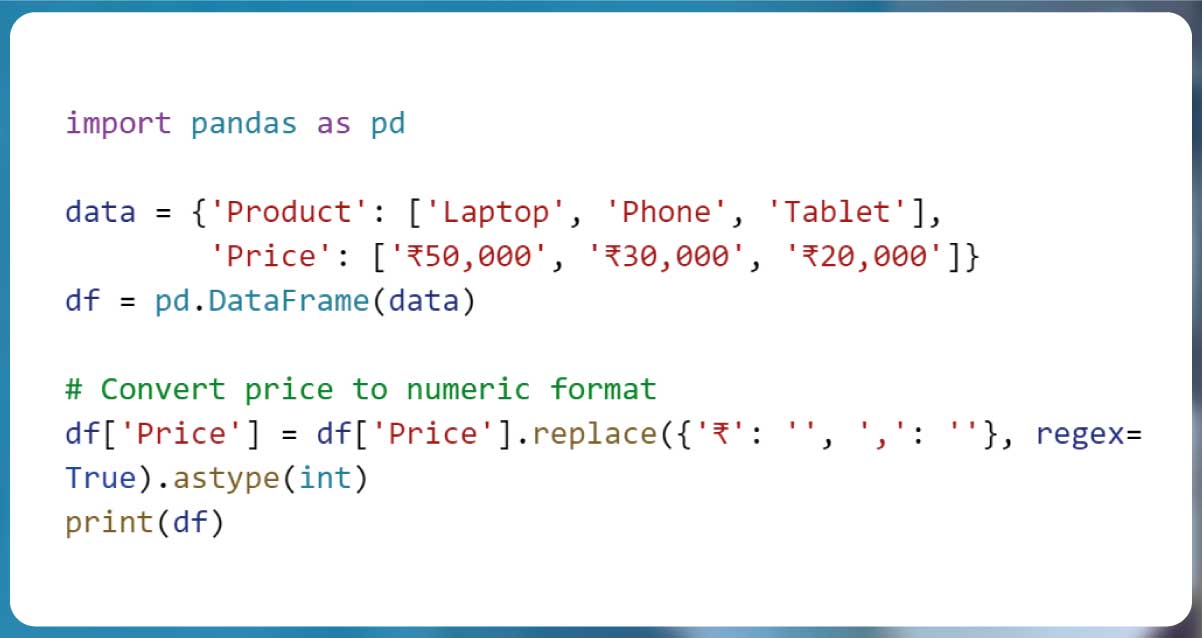

1. Pandas – Cleaning, Structuring, and Analyzing Scraped Data

Pandas is one of the most widely used libraries for cleaning, structuring, and analyzing scraped data. It provides DataFrame and Series objects to organize data efficiently.

| Feature | Use Case |
| --- | --- |
| .dropna() | Removes missing values. |
| .fillna(value) | Fills missing values with default values. |
| .drop_duplicates() | Eliminates duplicate entries. |
| .astype(dtype) | Converts data types (e.g., str → int). |

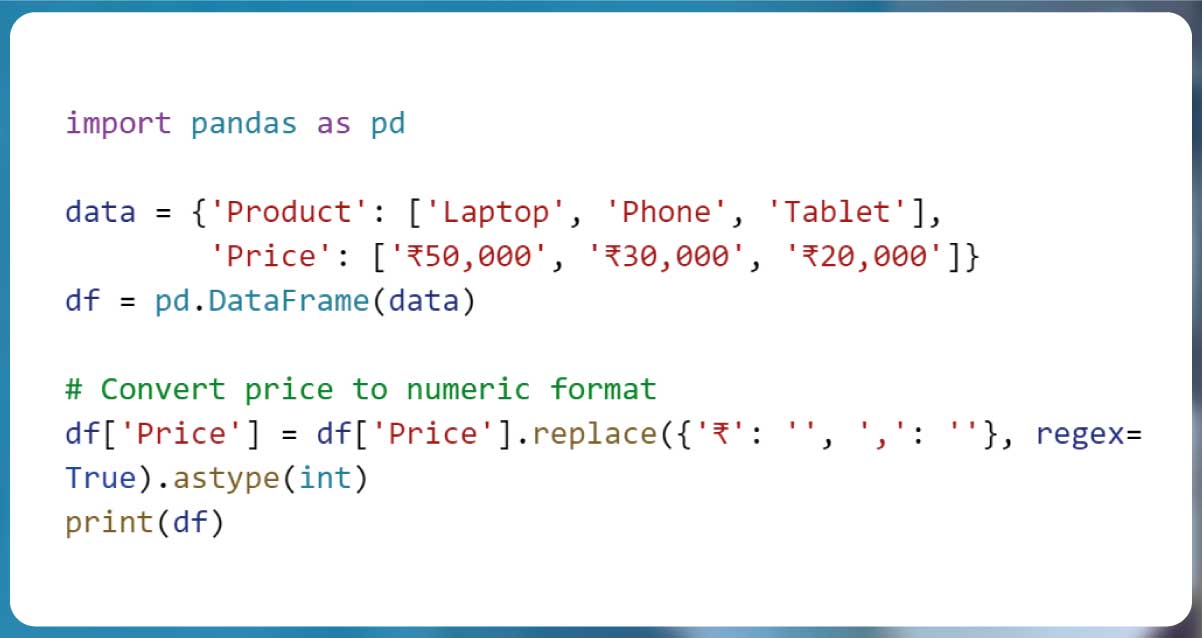

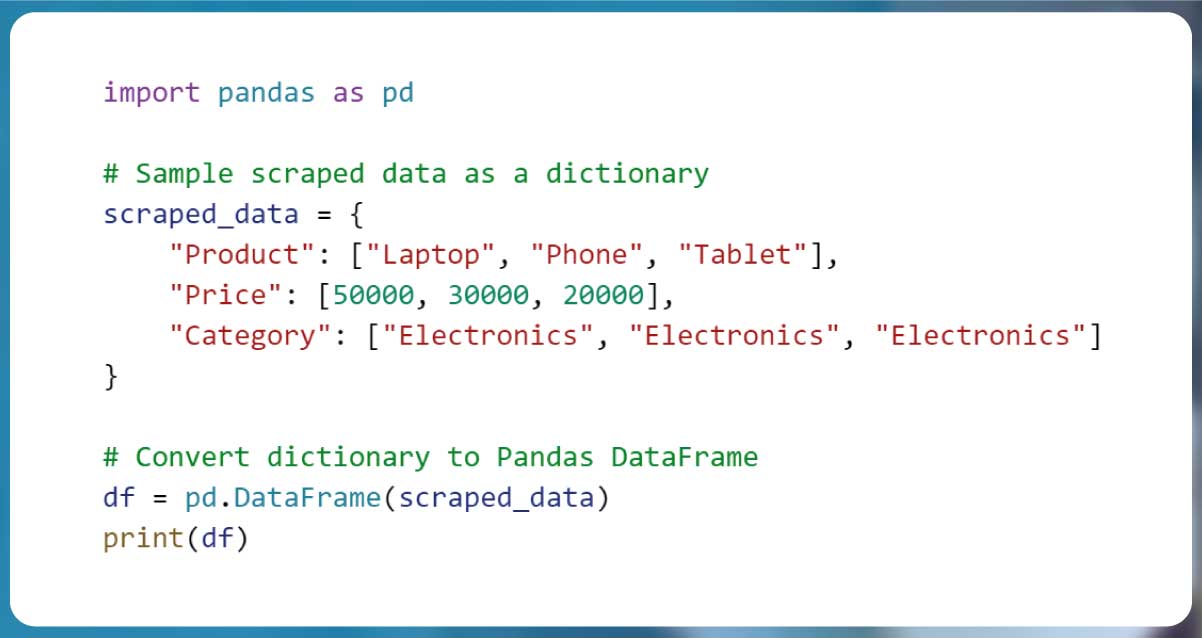

Example:
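A minimal sketch of the methods in the table, applied to a few hypothetical rows (the product names and prices are invented for illustration):

```python
import pandas as pd

# Hypothetical scraped rows: one exact duplicate and one missing price
df = pd.DataFrame(
    {
        "product": ["Laptop", "Laptop", "Phone", "Tablet"],
        "price": ["50000", "50000", "30000", None],
    }
)

df = df.drop_duplicates()              # remove the repeated Laptop row
df = df.dropna(subset=["price"])       # drop rows that have no price
df["price"] = df["price"].astype(int)  # convert price text to integers
print(df)
```

Whether a missing price should be dropped or filled depends on the analysis; dropping is used here only to keep the sketch short.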

Applying these methods before plotting keeps the Visualizing Scraped Data process accurate.

2. NumPy – Handling Numerical Data Efficiently

NumPy is used for efficient numerical computation in Big Data Analytics with Python. It supports multi-dimensional arrays and functions for statistical analysis.

| Feature | Use Case |
| --- | --- |
| np.array() | Converts lists to numerical arrays. |
| np.mean() | Calculates the average of numerical data. |
| np.median() | Computes the median of a dataset. |
| np.std() | Finds the standard deviation. |

Example:

    import numpy as np

    # Prices that have already been converted to numbers
    prices = np.array([50000, 30000, 20000])

    print("Average Price:", np.mean(prices))

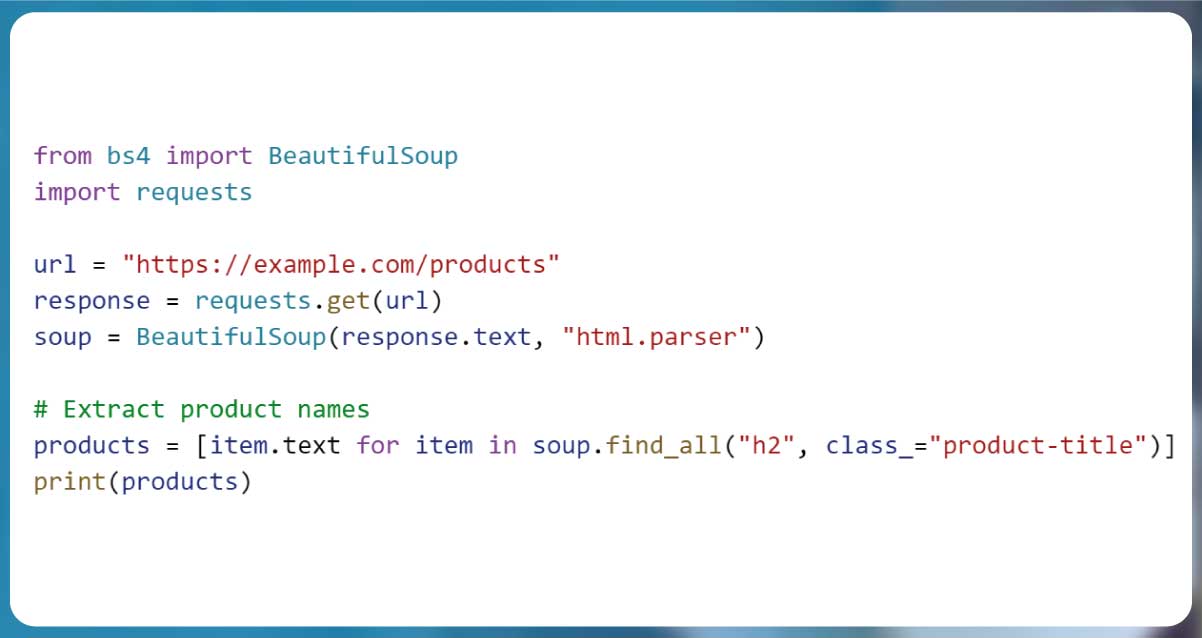

3. BeautifulSoup & Scrapy – Extracting Structured Data

For web scraping, BeautifulSoup and Scrapy help extract structured data from HTML pages.

| Library | Purpose |
| --- | --- |
| BeautifulSoup | Parses static HTML data. |
| Scrapy | Extracts large-scale data efficiently. |

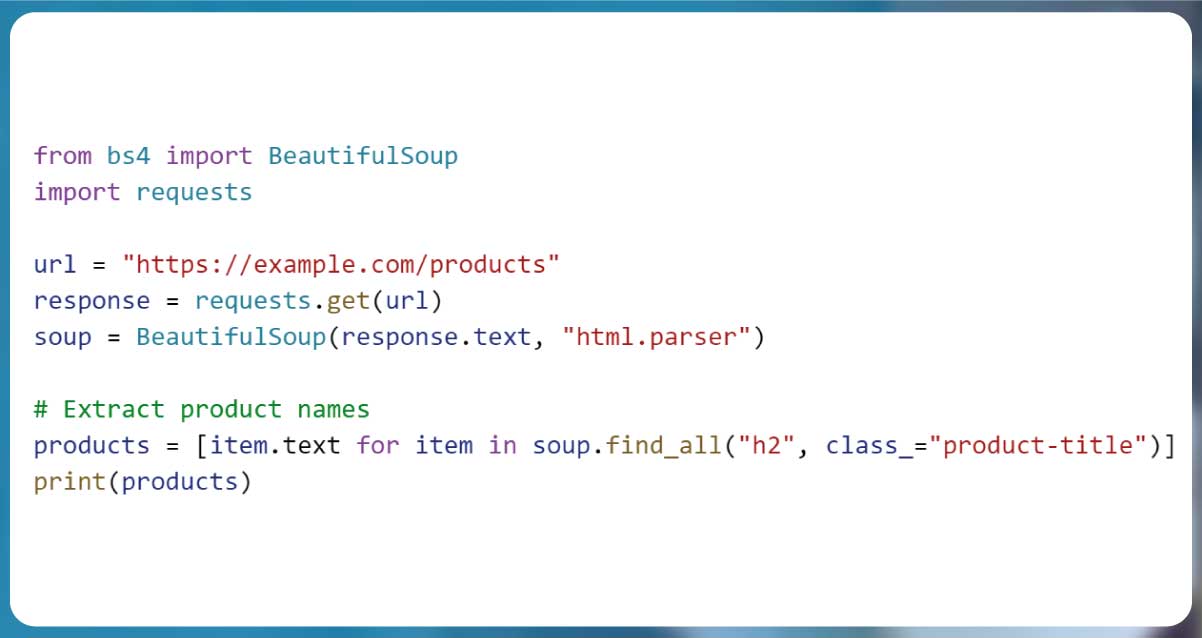

Example using BeautifulSoup:
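The sketch below parses a hypothetical HTML fragment rather than a live page, so the tag names and CSS classes are assumptions; a real site will need its own selectors. It relies on the third-party `beautifulsoup4` package.

```python
from bs4 import BeautifulSoup

# Hypothetical HTML fragment standing in for a downloaded page
html = """
<div class="product">
  <h2 class="name">Laptop</h2>
  <span class="price">₹50,000</span>
</div>
"""

soup = BeautifulSoup(html, "html.parser")

# find() returns the first matching tag; get_text(strip=True) trims whitespace
name = soup.find("h2", class_="name").get_text(strip=True)
price = soup.find("span", class_="price").get_text(strip=True)
print(name, price)
```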

4. JSON & CSV Modules – Storing and Exporting Cleaned Data

Data extracted and transformed should be stored in structured formats like JSON or CSV.

| Format | Use Case |
| --- | --- |
| CSV | Best for tabular data (Excel, spreadsheets). |
| JSON | Ideal for nested, hierarchical data. |

Example:

    import json

    data = {'Product': 'Laptop', 'Price': 50000}

    # Write the cleaned record to a JSON file
    with open("output.json", "w") as file:
        json.dump(data, file)

Storing cleaned data in a structured format keeps it ready for later steps such as Geospatial Data Mapping and Big Data Analytics with Python.

Cleaning Scraped Data

Raw data extracted through Web Scraping with Python is often messy and requires thorough Data Cleaning in Python before analysis. This step is crucial in the ETL Process for Web Scraping, ensuring that data is structured and ready for further processing. Below are key methods for Scraped Data Transformation using Data Mapping with Pandas and other Python tools.

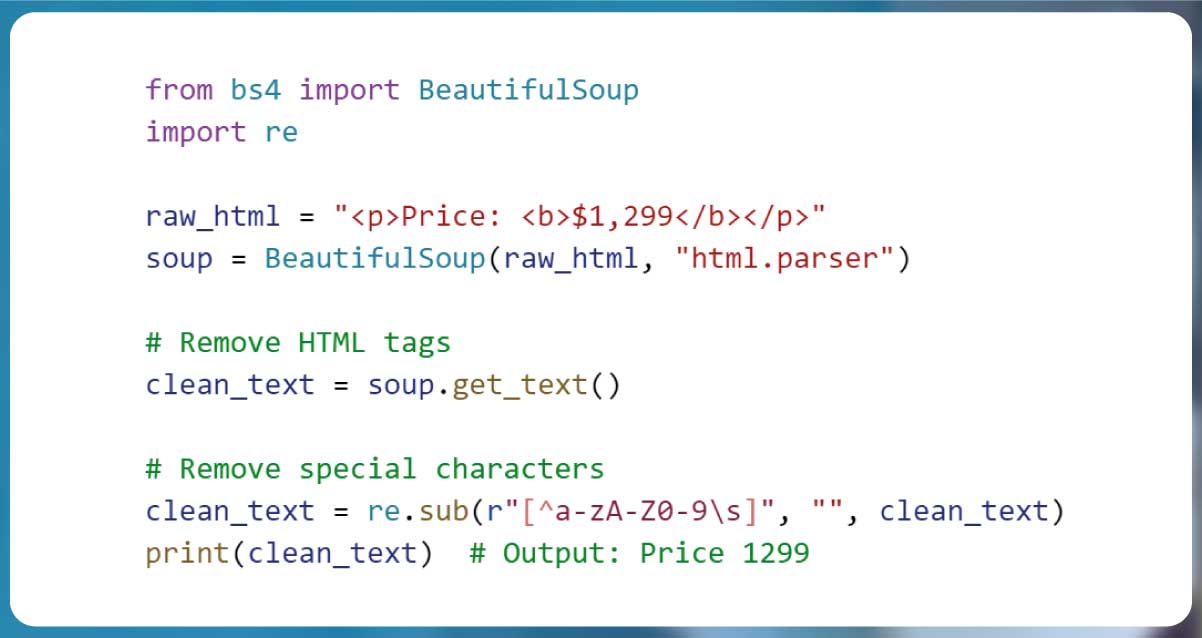

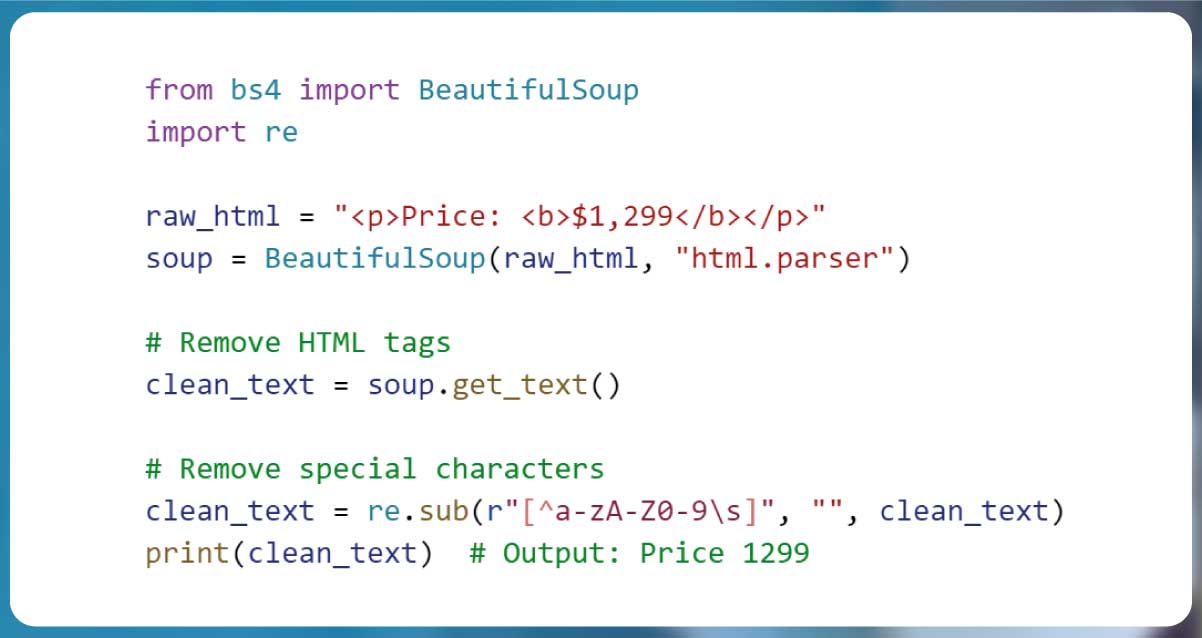

1. Removing HTML Tags, Special Characters, and Unnecessary Spaces

Web pages contain HTML tags, JavaScript code, and unnecessary symbols that must be removed for clean text extraction. BeautifulSoup helps eliminate HTML tags, while Pandas and Regex handle special characters and whitespace issues.

Example: Cleaning HTML and Special Characters

| Issue | Impact | Solution |
| --- | --- | --- |
| HTML Tags | Clutters text fields. | Use BeautifulSoup .get_text() |
| Special Characters | Prevents clean data storage. | Use regex re.sub() |
| Extra Spaces | Affects search and sorting. | Use .strip() or .replace() |
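The three fixes in the table can be chained in one helper. This is a sketch under stated assumptions: the function name `clean_text` is hypothetical, and the set of characters kept (letters, digits, whitespace, period, comma, hyphen) is an arbitrary choice that should be adapted to the data.

```python
import re

from bs4 import BeautifulSoup


def clean_text(raw_html: str) -> str:
    """Strip tags, special characters, and extra whitespace from a snippet."""
    soup = BeautifulSoup(raw_html, "html.parser")
    # Remove embedded scripts and styles so their code does not leak into the text
    for tag in soup(["script", "style"]):
        tag.decompose()
    text = soup.get_text(separator=" ")
    # Assumption: keep only word characters, whitespace, and . , -
    text = re.sub(r"[^\w\s.,-]", "", text)
    # Collapse runs of whitespace into single spaces, then trim the ends
    return re.sub(r"\s+", " ", text).strip()


cleaned = clean_text("<p>  Best   <b>Laptop</b> ★★★ deal!!  </p>")
print(cleaned)  # Best Laptop deal
```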

2. Handling Missing Values (Filling, Removing, or Interpolating Data)

Incomplete data is a common issue in Scraped Data Transformation. Depending on the dataset, missing values can be:

- Removed if they are not essential.

- Filled using default or estimated values.

- Interpolated using trends in the dataset.

Example: Handling Missing Values with Pandas


| Method | Use Case |
| --- | --- |
| .dropna() | Remove missing values. |
| .fillna(value) | Replace missing values with a default. |
| .interpolate() | Estimate missing values based on trends. |
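The three options can be compared side by side on a small time series. The daily observations below are hypothetical, and treating a missing stock count as 0 is an assumption made only for the sketch.

```python
import pandas as pd

# Hypothetical daily observations for one product; None marks a failed scrape
df = pd.DataFrame(
    {
        "date": pd.date_range("2024-03-01", periods=4, freq="D"),
        "price": [50000.0, None, 48000.0, 47000.0],
        "stock": [10.0, None, 8.0, None],
    }
)

# Option 1: remove rows that have no price
dropped = df.dropna(subset=["price"])

# Option 2: fill missing stock counts with a default (assumed to be 0 here)
filled = df.fillna({"stock": 0})

# Option 3: estimate the missing price linearly from its neighbors
interpolated = df.assign(price=df["price"].interpolate())

print(interpolated)
```

Interpolation is only meaningful when the rows have a natural order, such as the dates here; it should not be applied across unrelated products.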

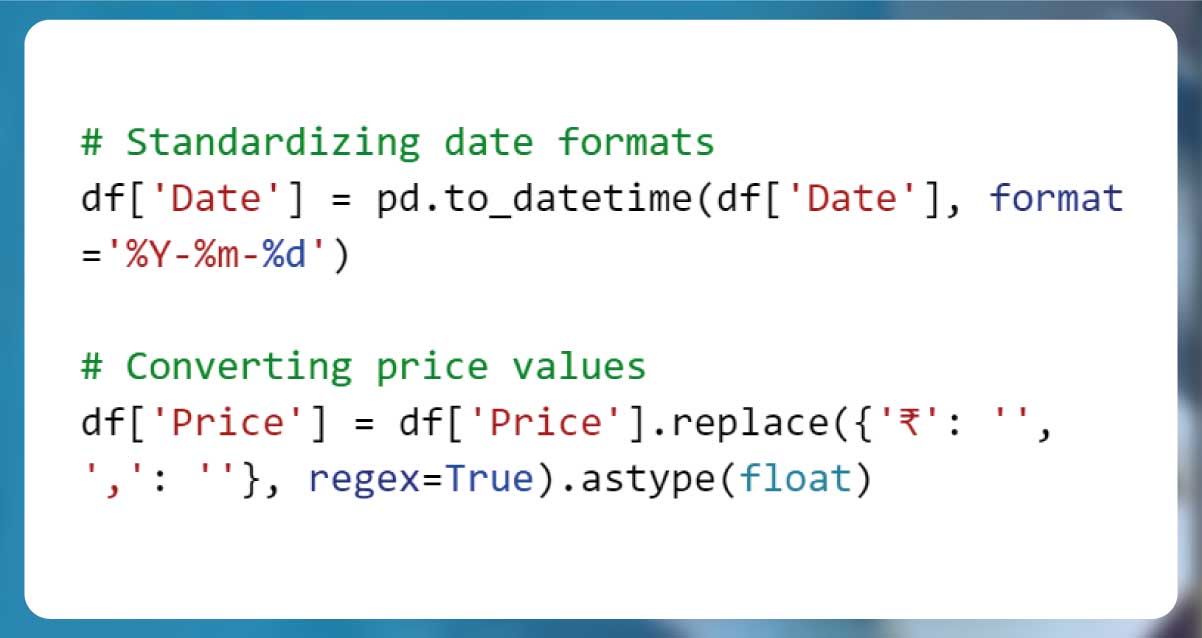

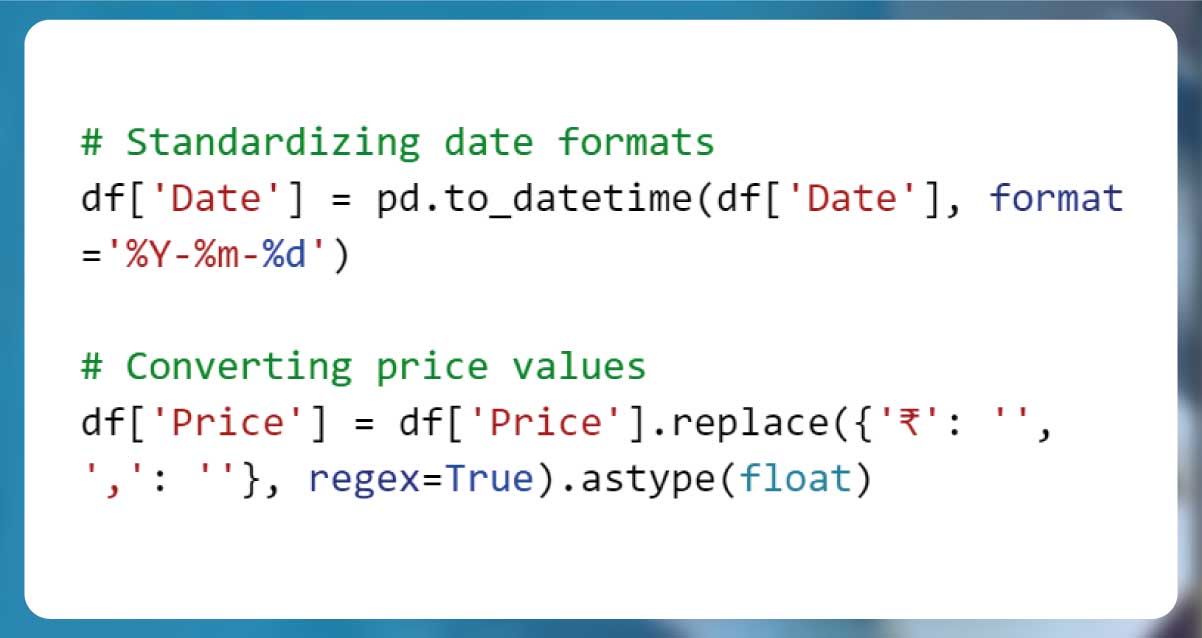

3. Standardizing Date, Time, and Numerical Formats

Inconsistent date formats and currency values can affect analysis and Data Mapping with Pandas. Converting them into a uniform structure ensures consistency.

Example: Converting Dates and Prices

| Issue | Solution |
| --- | --- |
| Different date formats (MM/DD/YYYY vs. DD-MM-YYYY) | Use pd.to_datetime() for conversion. |
| Currency symbols and commas in numbers | Use .replace() and .astype(float). |
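A short sketch of both conversions, using hypothetical column names and values. Passing an explicit `format` to `pd.to_datetime` avoids ambiguity between month-first and day-first dates; the price cleanup assumes the only non-numeric characters are the ₹ symbol and thousands commas.

```python
import pandas as pd

# Hypothetical columns: two sources that write the same dates differently
df = pd.DataFrame(
    {
        "scraped_on": ["03/15/2024", "03/16/2024"],  # MM/DD/YYYY
        "listed_on": ["15-03-2024", "16-03-2024"],   # DD-MM-YYYY
        "price": ["₹1,299", "₹2,499.50"],
    }
)

# State each source format explicitly instead of letting pandas guess
df["scraped_on"] = pd.to_datetime(df["scraped_on"], format="%m/%d/%Y")
df["listed_on"] = pd.to_datetime(df["listed_on"], format="%d-%m-%Y")

# Strip the currency symbol and thousands separators, then convert to float
df["price"] = (
    df["price"]
    .str.replace("₹", "", regex=False)
    .str.replace(",", "", regex=False)
    .astype(float)
)
print(df)
```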

By applying these Data Cleaning in Python techniques, businesses can streamline the ETL Process for Web Scraping, ensuring that data is accurate, structured, and ready for insights.

Mapping and Structuring Data

Once data is cleaned, the next step in Scraped Data Transformation is mapping and structuring it into an organized format for analysis. Using dictionaries, Pandas DataFrames, and relational formats, businesses can ensure efficient Data Mapping with Pandas as part of the ETL Process for Web Scraping.

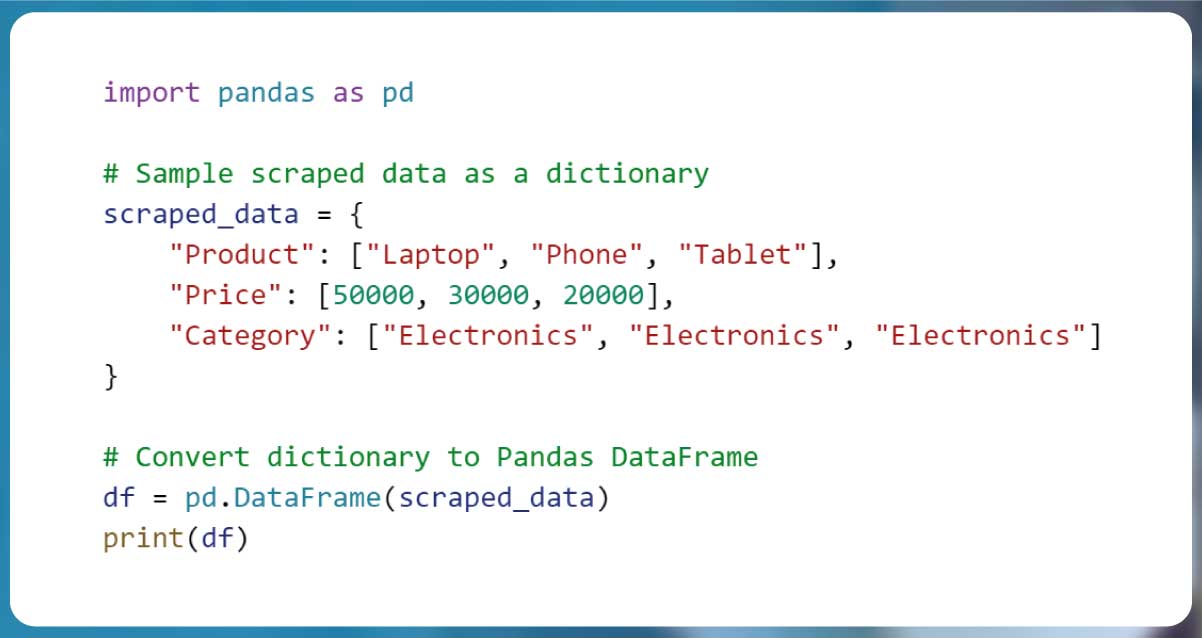

1. Using Dictionaries and DataFrames for Better Organization

Dictionaries in Python are excellent for storing and organizing scraped data, while Pandas DataFrames offer tabular structures for efficient processing.

Example: Converting Raw Data into a Dictionary and DataFrame

| Structure | Why It’s Useful |
| --- | --- |
| Dictionaries | Store key-value pairs for easy mapping. |
| DataFrames | Structure data into rows and columns for analysis. |
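A minimal sketch of the conversion: each scraped item is held as a dictionary, and a list of such dictionaries becomes a DataFrame. The field names and values are hypothetical.

```python
import pandas as pd

# Each scraped item is one dictionary of field name -> value (hypothetical data)
records = [
    {"product": "Laptop", "price": 50000, "category": "Elec"},
    {"product": "Phone", "price": 30000, "category": "Elec"},
]

# A list of dictionaries maps directly to rows; the keys become column names
df = pd.DataFrame(records)
print(df)
```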

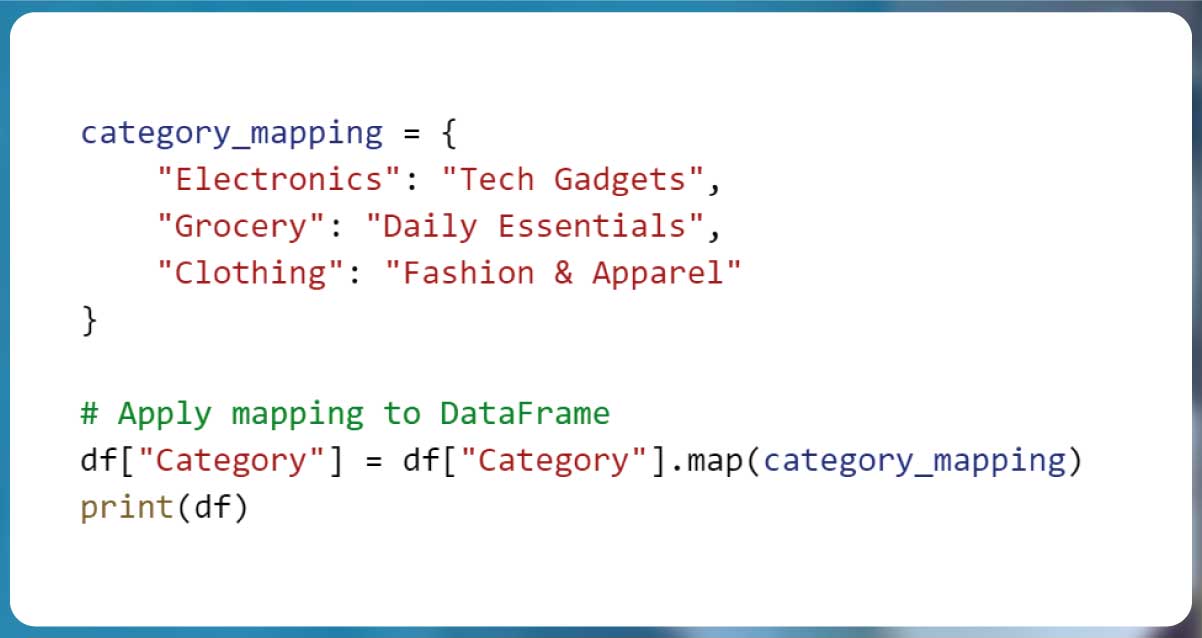

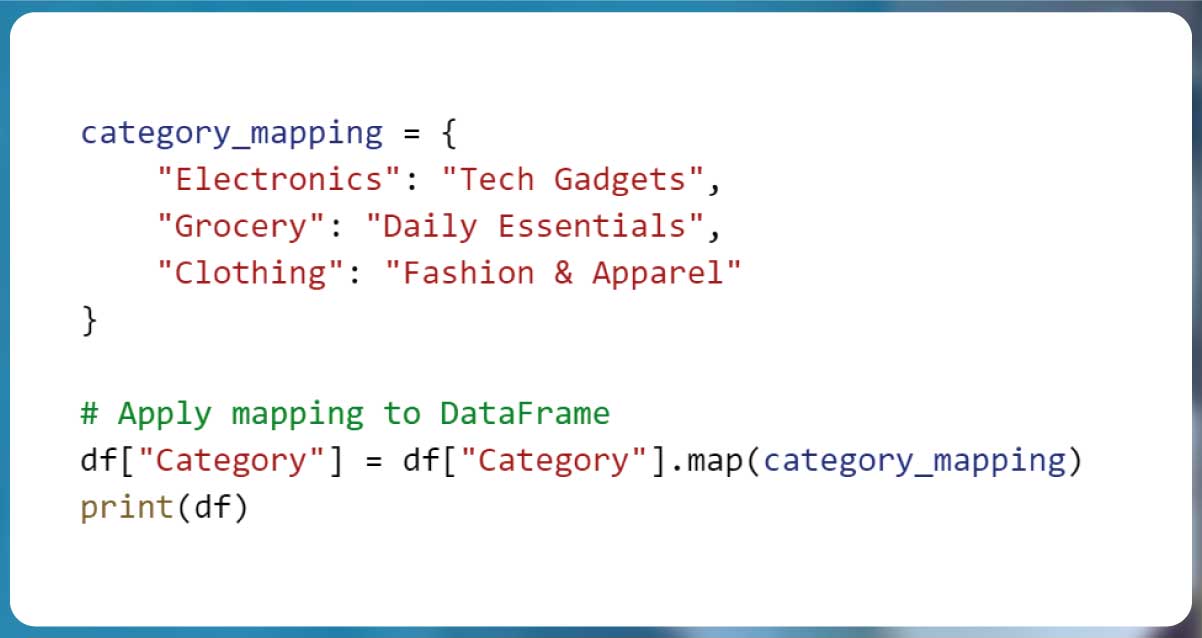

2. Mapping Categories and Labels to Meaningful Names

Often, scraped data contains vague or coded categories that need to be mapped to meaningful labels for clarity.

Example: Mapping Product Categories to User-Friendly Labels

| Issue | Solution |
| --- | --- |
| Coded or vague labels (e.g., "Elec") | Map to full names (e.g., "Electronics"). |
| Different spellings across sources | Use .replace() or .map() for consistency. |
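One way to sketch this mapping is to normalize the spelling first and then look each code up in a dictionary. The codes, the full names, and the "Unknown" fallback below are assumptions for illustration.

```python
import pandas as pd

# Hypothetical codes as they might arrive from different sources
df = pd.DataFrame({"category": ["Elec", "elec.", "Furn", "Toys"]})

# Hypothetical lookup table from normalized code to display name
category_names = {"elec": "Electronics", "furn": "Furniture"}

# Normalize spelling variants before the lookup: lowercase, no trailing period
normalized = df["category"].str.lower().str.rstrip(".")

# .map() returns NaN for codes missing from the lookup; label those explicitly
df["category_name"] = normalized.map(category_names).fillna("Unknown")
print(df)
```

Keeping unmapped codes visible as "Unknown" makes gaps in the lookup table easy to spot instead of silently dropping rows.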

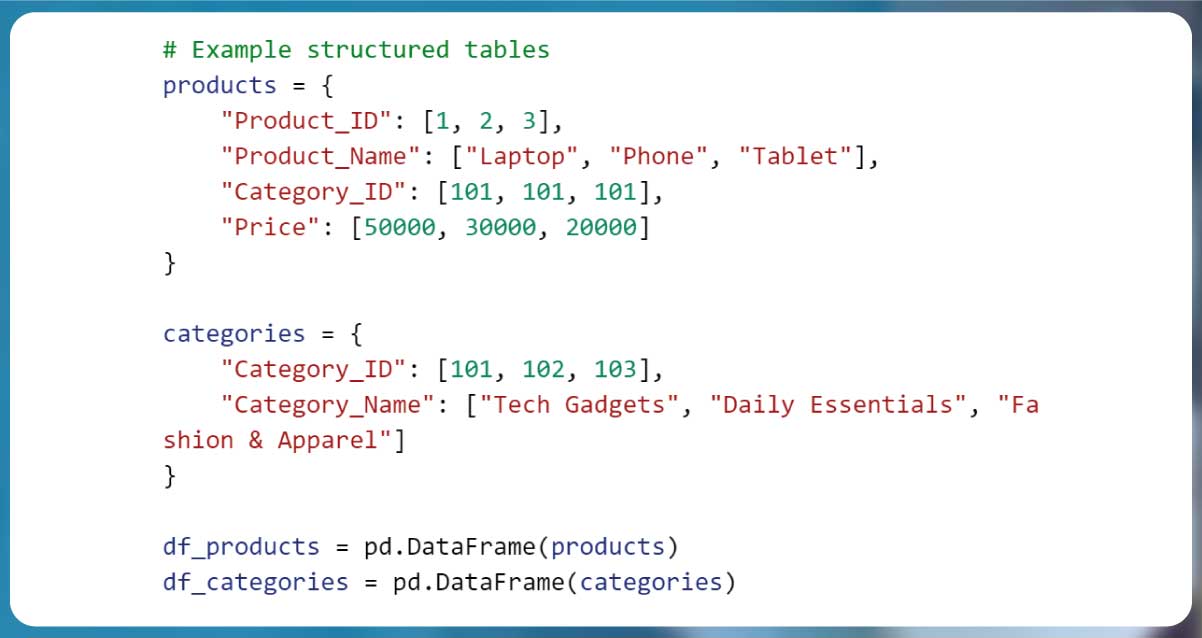

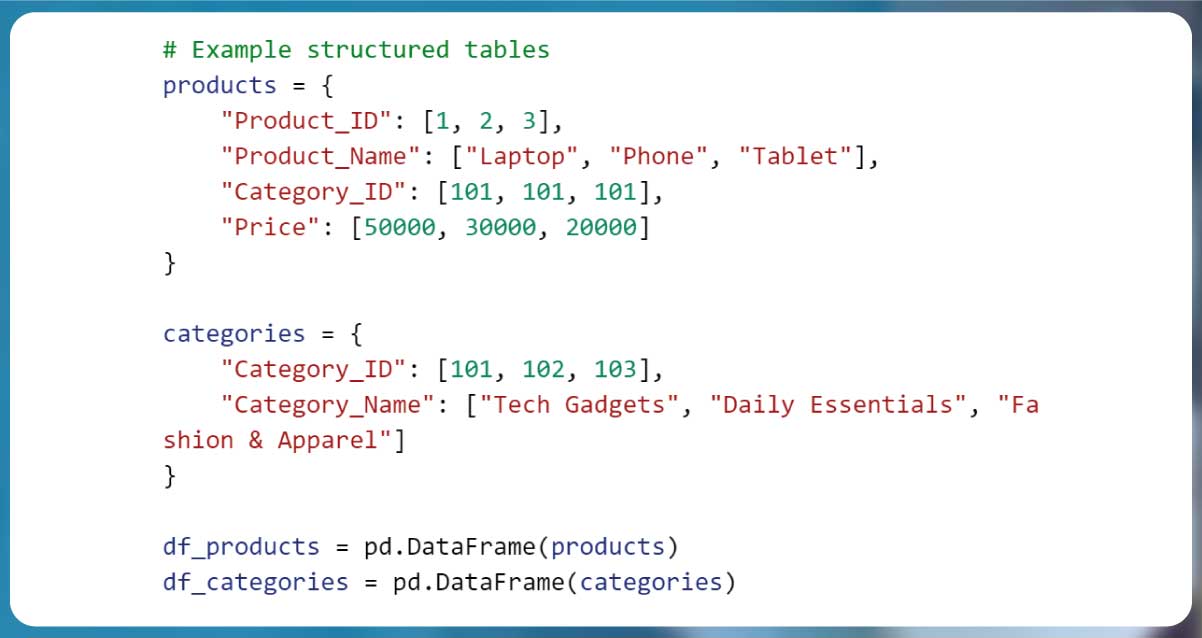

3. Converting Unstructured Data into a Relational Format

Relational databases require structured tables with relationships between entities. Scraped data often needs to be normalized before being stored.

Example: Splitting Data into Multiple Tables for a Relational Format

Instead of storing all data in one table, separate it into related tables for efficient queries.

| Table | Fields |
| --- | --- |
| Products | Product ID, Name, Category ID, Price |
| Categories | Category ID, Category Name |
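The split into Products and Categories can be sketched with Pandas before anything is written to a database. The flat input rows are hypothetical, and the sequential IDs are generated here only for illustration; a real pipeline would usually let the database assign keys.

```python
import pandas as pd

# Hypothetical flat scrape output: the category name repeats on every row
flat = pd.DataFrame(
    {
        "name": ["Laptop", "Phone", "Sofa"],
        "category_name": ["Electronics", "Electronics", "Furniture"],
        "price": [50000, 30000, 20000],
    }
)

# Categories table: one row per distinct category, with a generated ID
categories = flat[["category_name"]].drop_duplicates().reset_index(drop=True)
categories.insert(0, "category_id", range(1, len(categories) + 1))

# Products table: swap the repeated name for a reference to the category ID
products = flat.merge(categories, on="category_name")[["name", "category_id", "price"]]
products.insert(0, "product_id", range(1, len(products) + 1))

print(categories)
print(products)
```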

By mapping and structuring data properly, businesses can improve Big Data Analytics with Python, making it easier to visualize trends and extract insights.

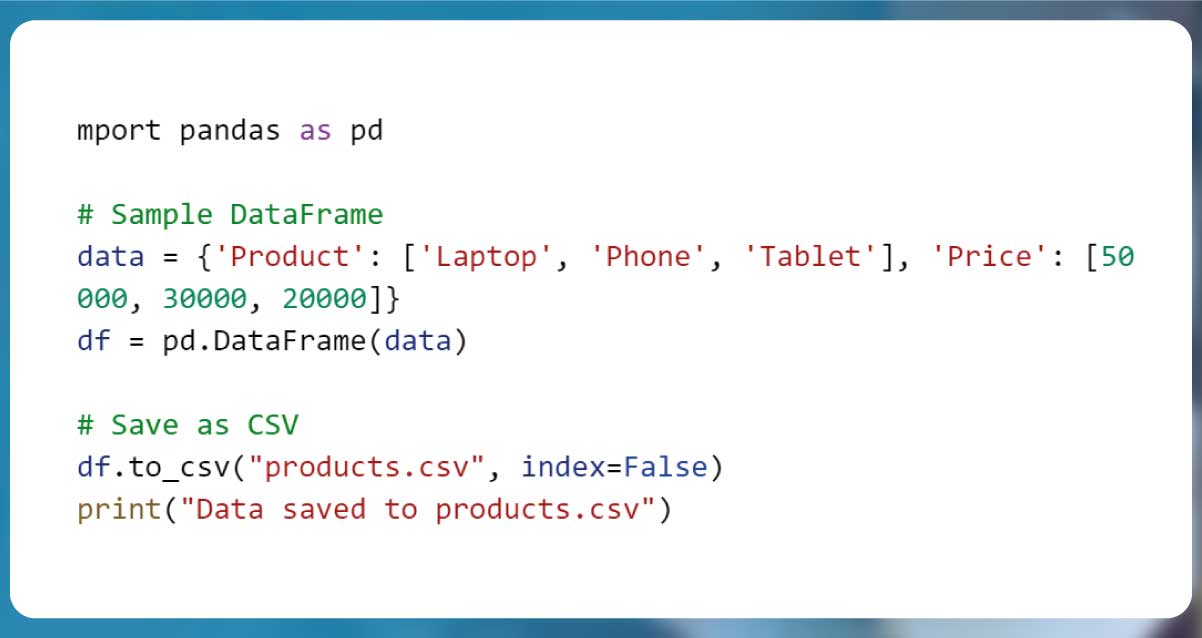

Exporting and Storing Cleaned Data

Once Python Data Processing is complete, the next step is storing structured data efficiently for future use. This involves exporting cleaned data into formats like CSV, JSON, or databases and automating data storage with SQL and NoSQL systems. Proper data storage ensures smooth Big Data Analytics with Python, making insights easily accessible.

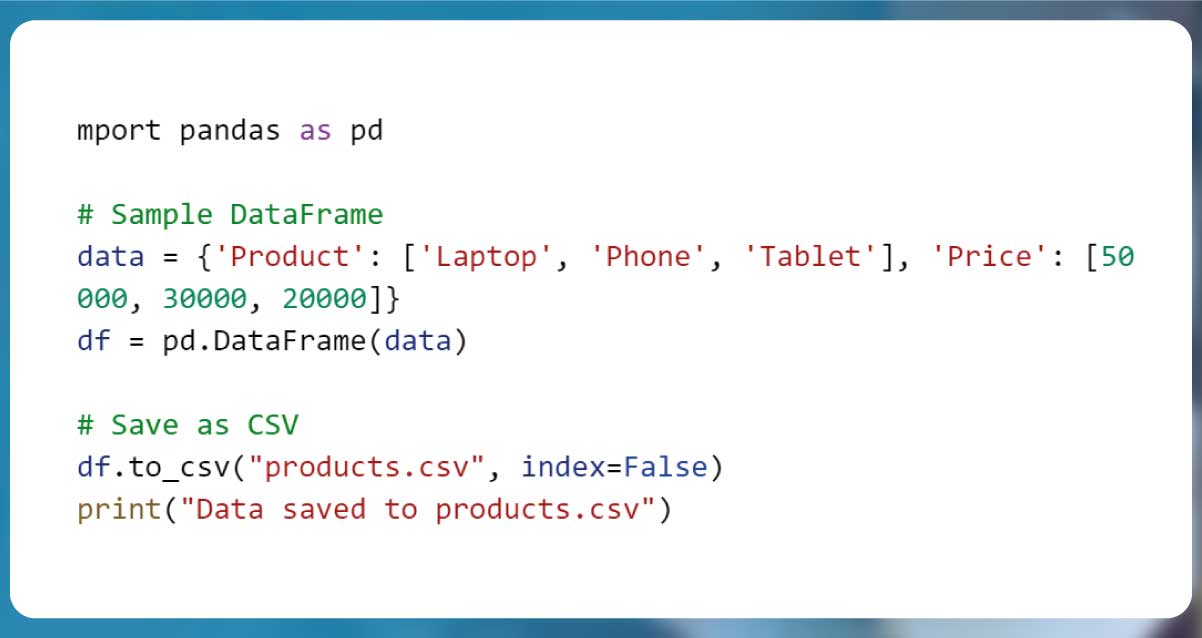

1. Saving Structured Data in CSV, JSON, or Databases

Depending on the use case, different formats are used for Data Structuring with Python:

| Format | Use Case | Pros |
| --- | --- | --- |
| CSV | Best for tabular data & spreadsheets. | Easy to read & lightweight. |
| JSON | Works well for APIs & hierarchical data. | Flexible & human-readable. |
| SQL Databases | Suitable for structured, relational data. | Optimized for queries & joins. |
| NoSQL (MongoDB, Firebase) | Ideal for unstructured or dynamic data. | Scalable & schema-free. |

Example: Exporting Data to CSV
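A minimal sketch of the export, followed by reading the file back to confirm the round trip. The file name and the temporary-directory location are hypothetical choices; any writable path works.

```python
import os
import tempfile

import pandas as pd

# Hypothetical cleaned data
df = pd.DataFrame({"product": ["Laptop", "Phone"], "price": [50000, 30000]})

# Hypothetical output location in the system temporary directory
csv_path = os.path.join(tempfile.gettempdir(), "cleaned_products.csv")

# index=False keeps the DataFrame's row index out of the file
df.to_csv(csv_path, index=False, encoding="utf-8")

# Read the file back to check what was written
restored = pd.read_csv(csv_path)
print(restored)
```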

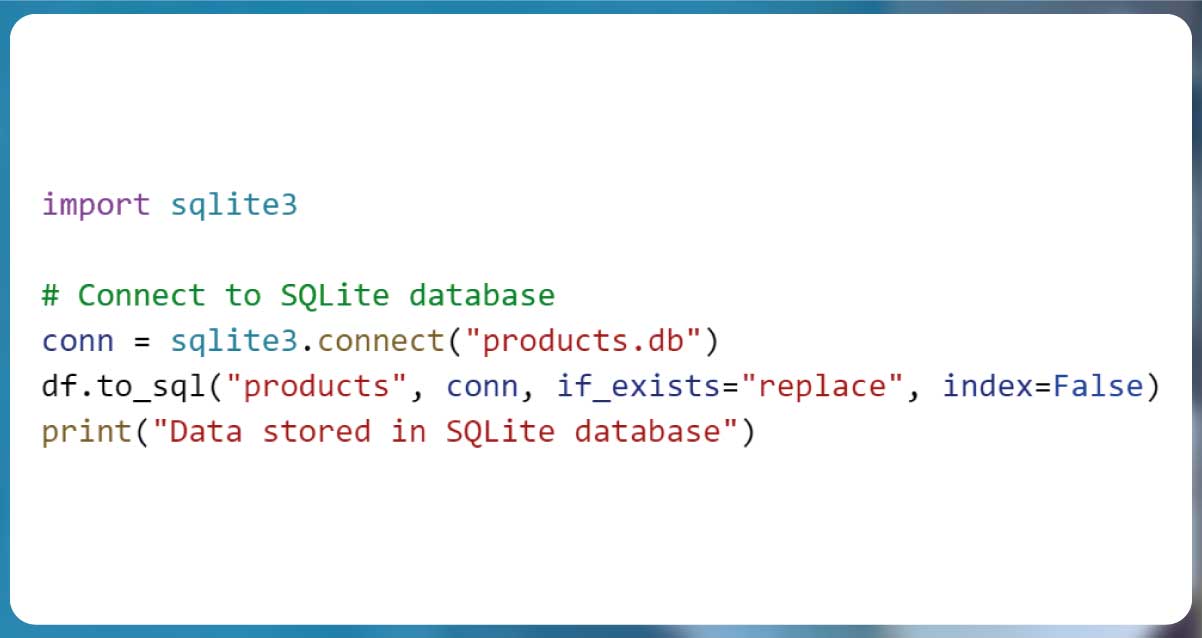

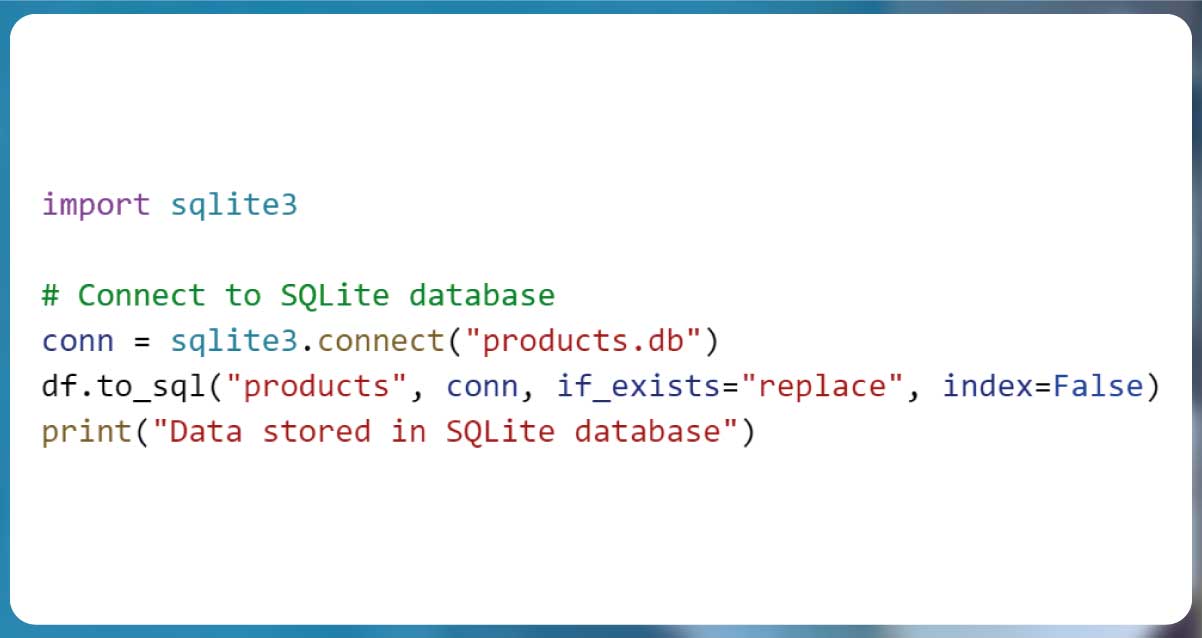

2. Automating Data Storage with SQL and NoSQL

For Visualizing Scraped Data and long-term storage, databases are more efficient than flat files.

Storing Data in SQL (MySQL / PostgreSQL)
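The sketch below uses SQLite from Python's standard library as a stand-in, because it runs without a database server; it is not MySQL or PostgreSQL. With those systems the usual approach is to pass a SQLAlchemy engine to `DataFrame.to_sql` instead of the `sqlite3` connection. The table name and data are hypothetical.

```python
import sqlite3

import pandas as pd

# Hypothetical cleaned data
df = pd.DataFrame({"product": ["Laptop", "Phone"], "price": [50000, 30000]})

# In-memory SQLite database, used here only so the sketch needs no server
conn = sqlite3.connect(":memory:")

# Write the DataFrame as a table, replacing any earlier version of it
df.to_sql("products", conn, if_exists="replace", index=False)

# Query the stored rows back with plain SQL
rows = conn.execute(
    "SELECT product, price FROM products ORDER BY price"
).fetchall()
conn.close()
print(rows)  # [('Phone', 30000), ('Laptop', 50000)]
```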

Storing Data in NoSQL (MongoDB)


3. Geospatial Data Mapping and Big Data Storage

For Geospatial Data Mapping, storing location-based data is crucial. PostGIS (PostgreSQL extension) and MongoDB’s geospatial indexing are useful for this.

| Database | Geospatial Feature |
| --- | --- |
| PostGIS | Stores & queries latitude/longitude data. |
| MongoDB | Supports 2d and 2dsphere indexes for mapping. |

By properly exporting and storing cleaned data, businesses can ensure scalability, efficiency, and easy data retrieval for analytics and reporting.

Automating the Transformation Process

Automation is essential in Scraped Data Transformation to handle large-scale datasets efficiently. By writing Python scripts, leveraging APIs for real-time updates, and integrating cloud storage solutions, businesses can streamline the ETL Process for Web Scraping and ensure continuous Data Mapping with Pandas.

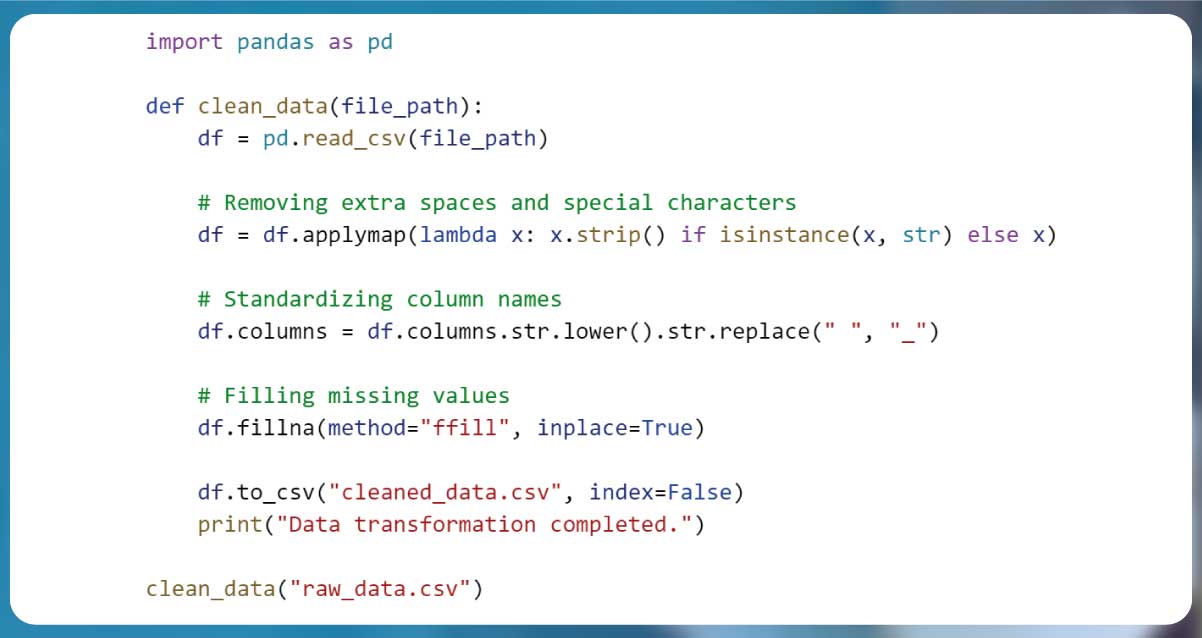

1. Writing Python Scripts for Recurring Data Transformation Tasks

Manually cleaning and structuring scraped data is inefficient for recurring tasks. Python scripts automate these processes, ensuring consistent and accurate transformation.

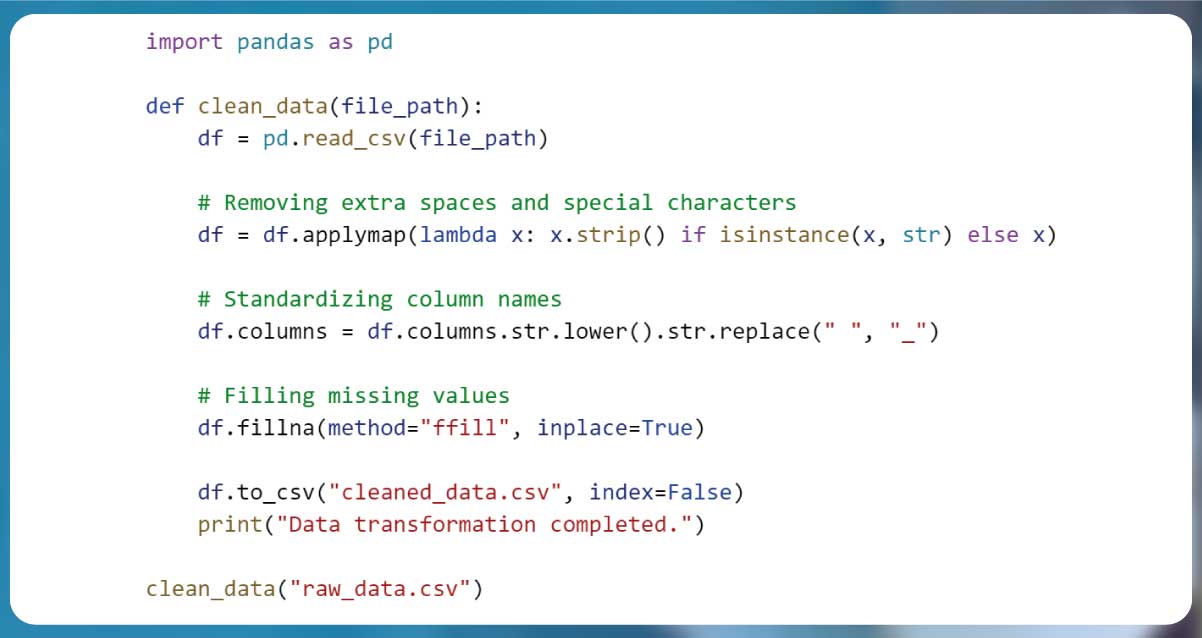

Example: Automating Data Cleaning with Pandas

| Task | Automated Process |
| --- | --- |
| Removing spaces & symbols | DataFrame.map() (named .applymap() before pandas 2.1) |
| Standardizing column names | .str.lower() & .str.replace() |
| Handling missing values | .ffill() (replaces the deprecated .fillna(method="ffill")) |
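These tasks can be wrapped in one reusable function that runs after every scrape. This is a sketch, not a complete pipeline: the function name `clean_scraped_frame`, the `text_columns` parameter, and the sample data are hypothetical, and forward-filling is assumed to be appropriate because the category appears only on the first row of each group.

```python
import pandas as pd


def clean_scraped_frame(df: pd.DataFrame, text_columns: list[str]) -> pd.DataFrame:
    """Apply the same cleaning steps to every freshly scraped batch."""
    out = df.copy()
    # Standardize column names: trimmed, lowercase, underscores for spaces
    out.columns = out.columns.str.strip().str.lower().str.replace(" ", "_")
    # Trim stray whitespace in the named text columns (standardized names)
    for column in text_columns:
        out[column] = out[column].str.strip()
    # Remove exact duplicates, then forward-fill remaining gaps
    out = out.drop_duplicates()
    out = out.ffill()
    return out.reset_index(drop=True)


# Hypothetical batch: messy headers, padded text, a duplicate, a missing category
batch = pd.DataFrame(
    {
        " Product Name ": [" Laptop ", "Laptop", "Phone ", "Sofa"],
        "Category": ["Electronics", "Electronics", None, "Furniture"],
    }
)
cleaned = clean_scraped_frame(batch, text_columns=["product_name", "category"])
print(cleaned)
```

Scheduling the script (for example with cron or a workflow tool) is what turns it into a recurring task; that part is outside this sketch.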

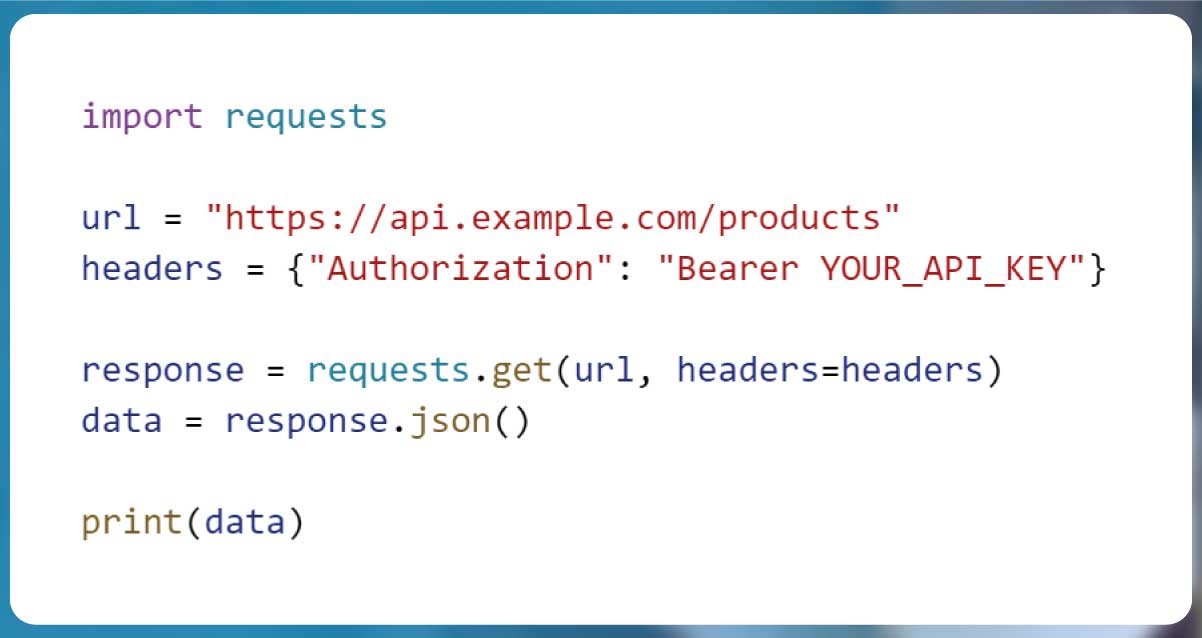

2. Using APIs for Real-Time Data Updates

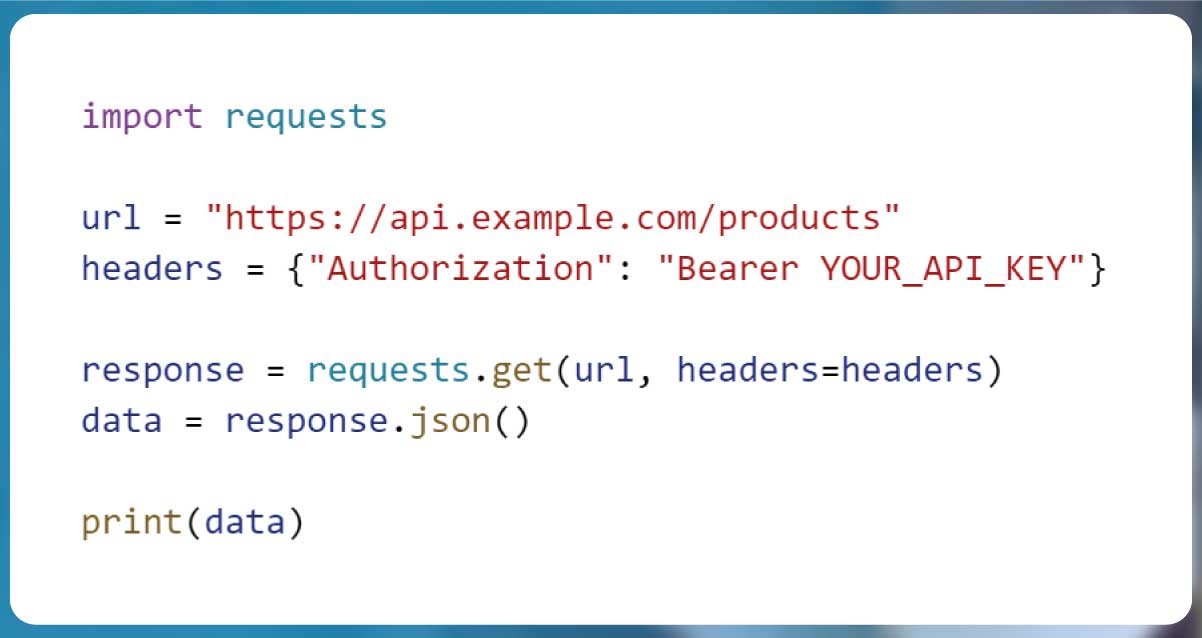

APIs help fetch real-time data instead of scraping static pages repeatedly. Web Scraping with Python can be combined with APIs for dynamic updates.

Example: Fetching Data from an API

| API Integration Benefits | Why It’s Useful |
| --- | --- |
| Faster than scraping | Direct data retrieval from sources. |
| Live data updates | Always fetches the latest records. |
| Lower legal risk | Uses an access channel the provider offers, subject to the API's terms of use. |
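A hedged sketch of the pattern using only the standard library for the HTTP call. The function names, the example URL in the usage note, and the assumption that the API wraps its records in a top-level `"items"` list are all hypothetical; a real API's documentation defines the actual shape. The network call is defined but not executed here, so the conversion step is shown on a sample payload.

```python
import json
from urllib.request import urlopen

import pandas as pd


def fetch_json(url: str, timeout: float = 10.0) -> dict:
    """Download and decode a JSON document from an API endpoint."""
    with urlopen(url, timeout=timeout) as response:
        return json.load(response)


def records_to_frame(payload: dict) -> pd.DataFrame:
    """Turn an API payload into a DataFrame."""
    # Assumption: records sit in a top-level "items" list
    return pd.DataFrame(payload.get("items", []))


# Hypothetical payload in the assumed shape, used instead of a live request
sample_payload = {
    "items": [
        {"product": "Laptop", "price": 50000},
        {"product": "Phone", "price": 30000},
    ]
}
df = records_to_frame(sample_payload)
print(df)
```

With a real endpoint the two steps chain as `records_to_frame(fetch_json("https://api.example.com/products"))`, where the URL is a placeholder.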

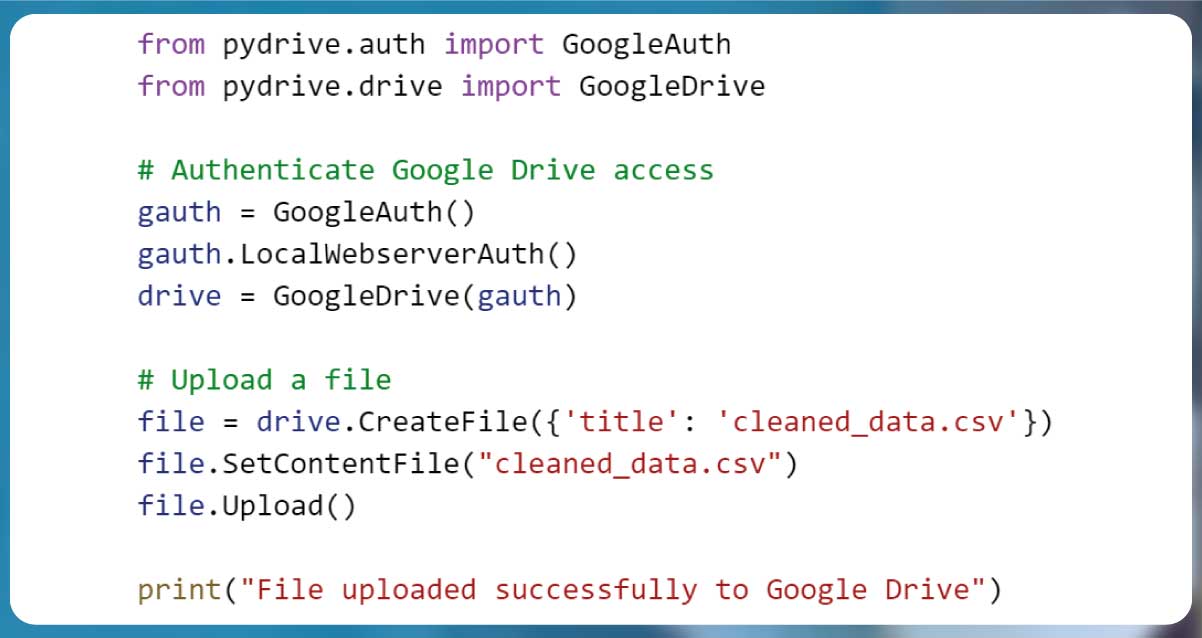

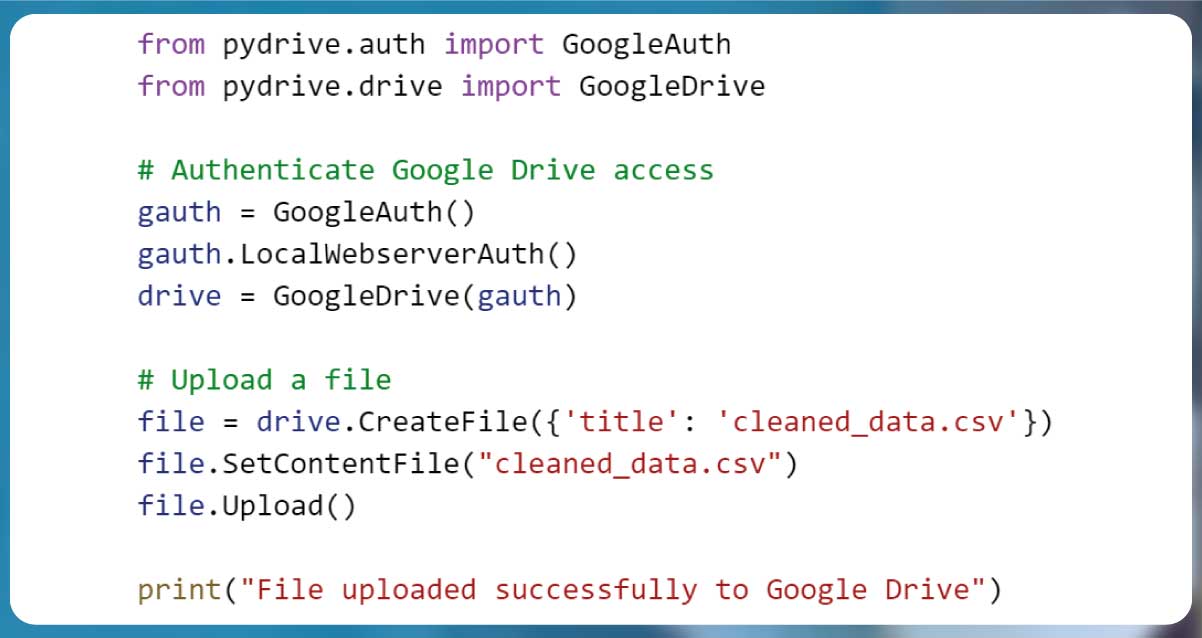

3. Implementing Cloud Storage Solutions for Data Management

For scalability, storing transformed data in cloud platforms like AWS S3, Google Drive, or Azure Blob Storage keeps it accessible and secure.

Example: Uploading Data to Google Drive with Python

| Cloud Storage Option | Use Case |
| --- | --- |
| AWS S3 | Large-scale enterprise storage |
| Google Drive | Personal & small business storage |
| Azure Blob Storage | Integrated with Microsoft ecosystem |

By automating the transformation process, businesses can save time, reduce errors, and ensure data is always up to date.

Conclusion

Transforming and mapping scraped data is essential for making raw information structured, usable, and insightful. Throughout this guide, we explored key techniques, including Python Data Processing, Data Structuring with Python, and Geospatial Data Mapping. Leveraging libraries like Pandas, NumPy, and BeautifulSoup, we demonstrated how to clean, map, and store data efficiently for Big Data Analytics with Python.

Actowiz Solutions specializes in web scraping, data transformation, and automation services to help businesses extract and analyze data seamlessly. With expertise in ETL processes, Python-based data pipelines, and real-time data analytics, Actowiz ensures that organizations can make data-driven decisions with confidence. Contact Actowiz Solutions now! You can also reach us for all your mobile app scraping, data collection, web scraping, and instant data scraper service requirements!
