In 2023, GPU availability was the hardest constraint for AI teams. By 2026, compute is abundant — but high-quality, legally sourced training data has emerged as the tightest bottleneck for frontier and vertical AI labs alike.

The shift has three drivers:

1. Open web data is drying up. Publishers are blocking AI crawlers (OpenAI's GPTBot, Google-Extended, Anthropic's ClaudeBot), and high-quality sources are increasingly behind paywalls, logins, or licensing agreements.

2. Legal scrutiny has intensified. Post-New York Times v. OpenAI and related cases, AI labs face billions in litigation exposure for data sourcing practices that were industry-standard three years ago.

3. Model performance now depends more on data quality than on raw quantity. The era of "throw another trillion tokens at it" is over. Curated, high-signal datasets can outperform raw bulk scrapes by 3-10x on downstream benchmarks.

For AI startups building frontier models, vertical models, RAG systems, or domain-specific fine-tunes, the question is no longer "how do we get lots of data?" — it's "how do we get the right data, legally, at the right cost, with documented provenance?"

This guide breaks down exactly how serious AI teams are sourcing LLM training data from the web in 2026 — and how specialized providers like Actowiz Solutions accelerate the process.

Before a single URL is fetched, serious AI teams establish:

Crawling permissions: Does the source allow programmatic access? What do robots.txt, Terms of Service, and crawl-delay directives specify?

Content licensing: Is the content under a Creative Commons license (and which variant), in the public domain, or under proprietary copyright?

Personal data handling: Are there PII, PHI, or GDPR/CCPA-relevant elements? If yes, what's the removal plan?

Attribution requirements: Does the license require author credit, source URLs, or use restrictions?

Commercial vs non-commercial use: Many sources allow research use but restrict commercial training.

Regional law exposure: EU AI Act, CCPA, PIPEDA, and upcoming US federal AI legislation

This is why leading AI labs are hiring dedicated data counsel and why data provenance documentation has become a board-level discussion.
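The crawling-permissions check in the list above can be sketched with Python's standard `urllib.robotparser`. This is a minimal illustration rather than a compliance tool: the robots.txt body, the `ExampleBot` agent name, and the `check_access` helper are all hypothetical, and Terms of Service and licensing questions still need human legal review.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt body. In a real crawl this would be fetched from
# the target host and stored alongside the crawl record.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Crawl-delay: 5
Disallow: /private/
"""


def check_access(robots_txt: str, user_agent: str, url: str) -> dict:
    """Report whether user_agent may fetch url under the given robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        "user_agent": user_agent,
        "url": url,
        "allowed": parser.can_fetch(user_agent, url),
        # None when the matching group sets no Crawl-delay.
        "crawl_delay": parser.crawl_delay(user_agent),
    }


if __name__ == "__main__":
    for agent in ("GPTBot", "ExampleBot"):
        print(check_access(ROBOTS_TXT, agent, "https://example.com/articles/1"))
```

Checking under the agent identity the crawler actually announces matters here: the same URL is blocked for `GPTBot` but allowed for a generic agent in this sample file.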

Web-scale data collection for AI training requires infrastructure at a scale most startups can't build in-house:

Distributed crawling: across thousands of concurrent proxies and user agents

JavaScript rendering: for modern single-page apps (SPAs), which require headless browser farms

PDF, document, and multimedia extraction: PDFs, DOCX files, images, videos, and audio all need specialized parsers

Incremental crawling: re-crawling efficiently only when content changes

Duplicate detection: at URL, content-hash, and semantic levels

Language detection: critical for multilingual models

Content classification: filtering for quality, topic, toxicity, and relevance
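Two of the items above, incremental crawling and URL-level and content-hash duplicate detection, can share one small index. The sketch below is an in-memory illustration under simplifying assumptions: the `CrawlIndex` class, the list of tracking parameters, and the normalization rules are hypothetical, and a production system would persist this state and usually combine it with HTTP conditional requests.

```python
import hashlib
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed set of query parameters that do not change page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "fbclid", "gclid"}


def normalize_url(url: str) -> str:
    """Lowercase scheme and host, drop tracking params, fragment, trailing slash."""
    parts = urlsplit(url)
    query = sorted((k, v) for k, v in parse_qsl(parts.query) if k not in TRACKING_PARAMS)
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, urlencode(query), ""))


def content_hash(text: str) -> str:
    """SHA-256 of the text after collapsing whitespace and lowercasing."""
    normalized = " ".join(text.split()).lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


class CrawlIndex:
    """Tracks the last content hash per URL and every hash seen so far."""

    def __init__(self) -> None:
        self._hash_by_url: dict[str, str] = {}
        self._seen_hashes: set[str] = set()

    def classify(self, url: str, text: str) -> str:
        """Return 'unchanged', 'duplicate', 'new', or 'changed' for a fetched page."""
        key = normalize_url(url)
        digest = content_hash(text)
        previous = self._hash_by_url.get(key)
        if previous == digest:
            return "unchanged"  # same URL, same content: skip reprocessing
        if digest in self._seen_hashes:
            status = "duplicate"  # same content already stored under another URL
        elif previous is None:
            status = "new"
        else:
            status = "changed"
        self._hash_by_url[key] = digest
        self._seen_hashes.add(digest)
        return status
```

Only pages classified as `new` or `changed` need to go through extraction and filtering again, which is where most of the savings from incremental crawling come from.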

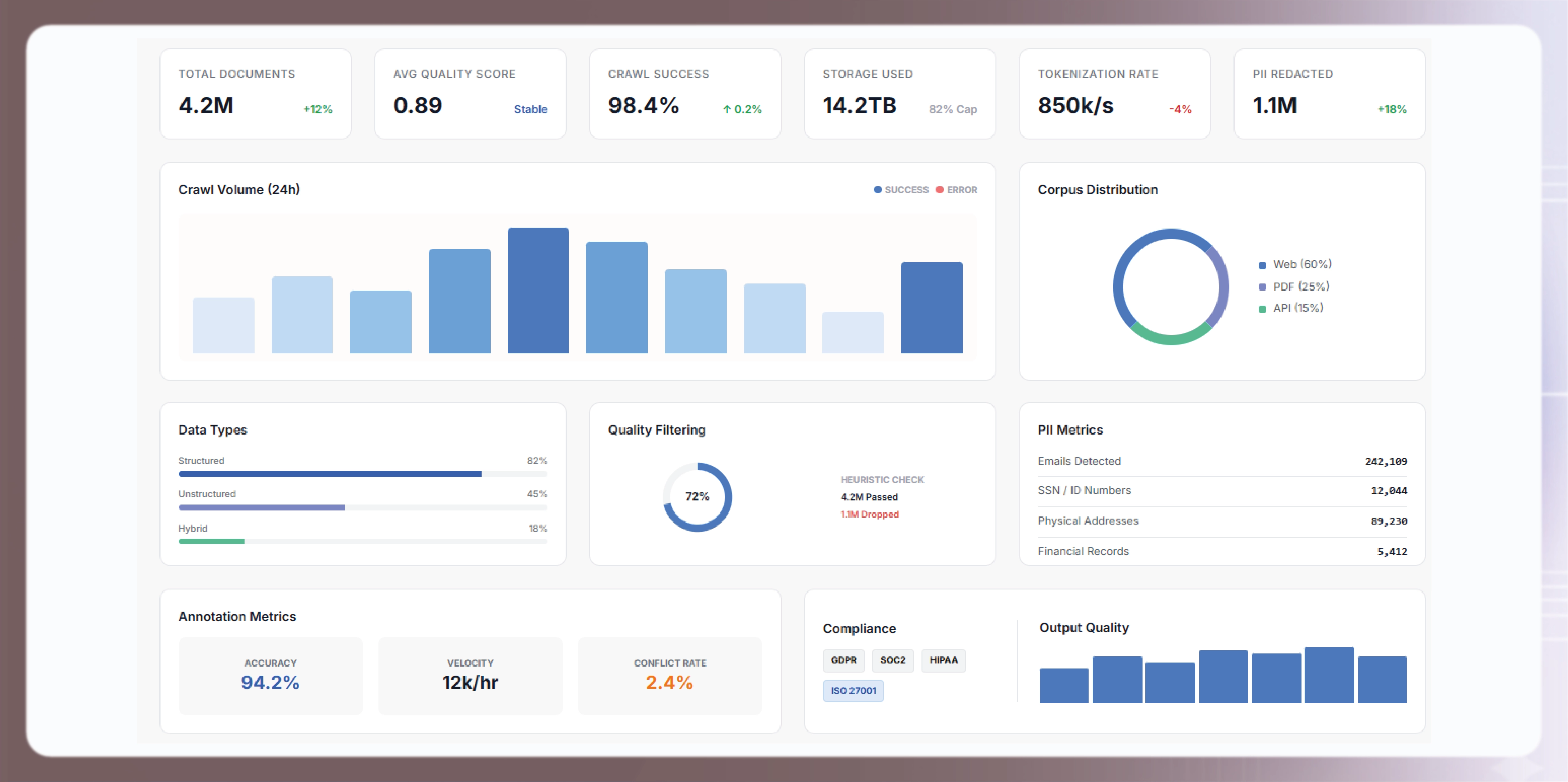

This is where most teams fail. Raw web data is 90%+ noise:

Boilerplate extraction: stripping navigation, ads, footers, cookie banners, and templated content from extracted text

Quality scoring: using small classifier models to score content for educational value, factual density, and grammatical quality

Toxicity filtering: removing hate speech, violence, CSAM, and other harmful content categories

PII scrubbing: automated redaction of names, addresses, phone numbers, SSNs, credit cards, health information

Deduplication at scale: near-duplicate and semantic deduplication across billions of documents using MinHash, SimHash, or embedding-based methods

Domain balancing: ensuring training data isn't dominated by a single source or content type
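To make the deduplication step above concrete, here is a minimal pure-Python MinHash sketch. It is an illustration, not a web-scale implementation: the function names, the 3-word shingle size, and the 64-hash signature length are assumptions, and real pipelines typically add locality-sensitive hashing so that documents are not compared pairwise.

```python
import hashlib


def shingles(text: str, k: int = 3) -> set[str]:
    """Split text into overlapping k-word shingles (lowercased)."""
    words = text.lower().split()
    if len(words) < k:
        return {" ".join(words)}
    return {" ".join(words[i:i + k]) for i in range(len(words) - k + 1)}


def _seeded_hash(seed: int, shingle: str) -> int:
    """One member of a family of hash functions, selected by seed."""
    data = f"{seed}:{shingle}".encode("utf-8")
    return int.from_bytes(hashlib.blake2b(data, digest_size=8).digest(), "big")


def minhash_signature(text: str, num_hashes: int = 64, k: int = 3) -> list[int]:
    """For each hash function, keep the minimum hash over all shingles."""
    shingle_set = shingles(text, k)
    return [min(_seeded_hash(seed, s) for s in shingle_set) for seed in range(num_hashes)]


def estimated_jaccard(sig_a: list[int], sig_b: list[int]) -> float:
    """Fraction of matching slots, an estimate of shingle-set Jaccard similarity."""
    matches = sum(a == b for a, b in zip(sig_a, sig_b))
    return matches / len(sig_a)


if __name__ == "__main__":
    original = "the quick brown fox jumps over the lazy dog near the river bank"
    near_dup = "the quick brown fox jumps over the lazy dog near the river"
    unrelated = "quarterly earnings rose as cloud revenue beat analyst expectations again"
    sig = minhash_signature(original)
    print(estimated_jaccard(sig, minhash_signature(near_dup)))
    print(estimated_jaccard(sig, minhash_signature(unrelated)))
```

Documents whose estimated similarity exceeds a chosen threshold are treated as near-duplicates. The threshold itself is a tuning decision that depends on the corpus.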

Modern AI labs maintain provenance logs that let them trace every token of training data back to its source document:

Source URL and crawl timestamp

robots.txt state at time of crawl

Content license classification

Extraction pipeline version

Quality scores and filter decisions

PII redaction audit log

This isn't optional. Investor due diligence, enterprise customer audits, and litigation defense all require provenance.
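One way to picture such a log is a per-document record carrying the fields listed above, written out as a JSON line. The sketch below is hypothetical throughout: the `ProvenanceRecord` schema, the field names, and the three regex patterns are illustrative assumptions, and regex-only PII detection is far too narrow for production use.

```python
import hashlib
import json
import re
from dataclasses import asdict, dataclass

# Illustrative patterns only; real PII detection needs much broader coverage.
PII_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "us_phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact_pii(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches with placeholders and count redactions per type."""
    counts: dict[str, int] = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label.upper()}]", text)
        if n:
            counts[label] = n
    return text, counts


@dataclass
class ProvenanceRecord:
    source_url: str
    crawl_timestamp: str      # ISO 8601, UTC
    robots_txt_sha256: str    # hash of the robots.txt body seen at crawl time
    license_class: str
    pipeline_version: str
    quality_scores: dict
    filter_decisions: dict
    pii_redactions: dict      # redaction counts per PII type (the audit log)
    content_sha256: str       # hash of the text after redaction


def build_record(url, raw_text, robots_txt, crawl_timestamp, license_class,
                 pipeline_version, quality_scores, filter_decisions):
    """Redact PII, then return the cleaned text with its provenance record."""
    clean_text, redactions = redact_pii(raw_text)
    record = ProvenanceRecord(
        source_url=url,
        crawl_timestamp=crawl_timestamp,
        robots_txt_sha256=hashlib.sha256(robots_txt.encode("utf-8")).hexdigest(),
        license_class=license_class,
        pipeline_version=pipeline_version,
        quality_scores=quality_scores,
        filter_decisions=filter_decisions,
        pii_redactions=redactions,
        content_sha256=hashlib.sha256(clean_text.encode("utf-8")).hexdigest(),
    )
    return clean_text, record


def to_json_line(record: ProvenanceRecord) -> str:
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Storing a hash of the robots.txt body, rather than only a yes/no flag, lets an auditor later confirm exactly which rules were in force when the page was fetched.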

Foundation model builders need trillions of tokens across web, books, scientific papers, code, and multilingual content. Even with the shift toward smaller, curated datasets, the bar for pre-training corpus size remains multi-trillion tokens.

Vertical AI companies need deep corpora in narrow domains:

Legal AI: case law, contracts, statutes, regulatory filings

Medical AI: clinical guidelines, openly accessible medical literature (not paywalled journals), drug information

Financial AI: SEC filings, analyst reports, financial news, earnings transcripts

Code AI: GitHub repos (with license filtering), documentation, Stack Overflow archives

Scientific AI: arXiv, PubMed, patent databases, technical documentation

High-quality instruction-response pairs sourced from Q&A sites, forums, and synthetic generation pipelines. Quality here directly determines model helpfulness.

Pairwise comparisons, ratings, and preference signals from human annotators. This is increasingly hybrid — mixing human feedback with AI-generated preferences.

Image-text pairs for vision-language models

Video-caption datasets for video understanding

Audio transcripts for speech models

Document-image pairs for OCR and document AI

Enterprise customers building RAG systems need constantly refreshed knowledge corpora — news, financial data, product catalogs, technical documentation — structured for retrieval rather than generation.

Holdout datasets for model evaluation, red-teaming corpora, and capability benchmarks — often the most expensive to create because human annotation is mandatory.

Build or partner on infrastructure that respects robots.txt, crawl-delays, and publisher opt-outs. Source publicly available content from domains that explicitly allow crawling. This is the most scalable path but requires continuous legal review.

Direct agreements with large publishers, data providers, and content platforms. OpenAI's deals with Reddit, News Corp, and others set the template. Costs range from $1M to $250M+ for major publisher deals, which puts them out of reach for most startups.

Start with Common Crawl, The Pile, RedPajama, Dolma, FineWeb, and similar open datasets. Supplement with domain-specific custom crawls for the vertical your model serves.

Use frontier LLMs (GPT, Claude, Gemini) to generate training data for smaller models — with careful evaluation to avoid model collapse and hallucination amplification.

Partner with specialized annotation providers (Scale AI, Surge, Prolific, Actowiz) for instruction-tuning data, RLHF pairs, and evaluation sets. Costs range from $0.10 to $20+ per annotation depending on complexity and required expertise.

Work with providers like Actowiz Solutions that handle the full pipeline — legal framework, crawling infrastructure, quality curation, PII scrubbing, provenance documentation — as managed services.

Understanding what's reasonable to pay for training data is critical:

Bulk web corpus (raw): $0.0001 – $0.001 per 1K tokens

Cleaned, deduplicated, quality-filtered corpus: $0.001 – $0.01 per 1K tokens

Domain-specific curated corpus: $0.01 – $0.10 per 1K tokens

Instruction-response pairs (human-generated): $0.50 – $5.00 per pair

RLHF preference pairs: $2 – $15 per pair

Expert annotations (medical, legal, code): $5 – $50+ per annotation

A medium-size vertical AI company building a 70B-parameter fine-tuned model typically budgets $500K – $5M annually for training data — often split 60/40 between crawling infrastructure and human annotation.
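The price ranges above translate into a budget estimate with simple arithmetic. The sketch below copies the rates from the list. The tier keys, the helper names, and the example volumes (10 billion curated tokens and 50,000 preference pairs) are illustrative assumptions, not figures from any real project.

```python
# Price ranges (low, high) in USD, copied from the list above.
PER_1K_TOKENS = {
    "raw": (0.0001, 0.001),
    "cleaned": (0.001, 0.01),
    "curated": (0.01, 0.10),
}
PER_ITEM = {
    "instruction_pair": (0.50, 5.00),
    "rlhf_pair": (2.00, 15.00),
    "expert_annotation": (5.00, 50.00),  # the source lists the upper end as $50+
}


def token_cost(tier: str, tokens: int) -> tuple[float, float]:
    """Low and high cost estimate for a corpus priced per 1K tokens."""
    low, high = PER_1K_TOKENS[tier]
    units = tokens / 1000
    return round(units * low, 2), round(units * high, 2)


def item_cost(kind: str, count: int) -> tuple[float, float]:
    """Low and high cost estimate for annotation priced per item."""
    low, high = PER_ITEM[kind]
    return round(count * low, 2), round(count * high, 2)


def total(*ranges: tuple[float, float]) -> tuple[float, float]:
    """Sum the low ends and the high ends separately."""
    return (round(sum(r[0] for r in ranges), 2),
            round(sum(r[1] for r in ranges), 2))


if __name__ == "__main__":
    corpus = token_cost("curated", 10_000_000_000)
    prefs = item_cost("rlhf_pair", 50_000)
    low, high = total(corpus, prefs)
    print(f"Estimated data budget: ${low:,.0f} to ${high:,.0f}")
```

With these example volumes the curated corpus comes to $100,000 to $1,000,000 and the preference pairs to $100,000 to $750,000, for a combined range of $200,000 to $1,750,000. The width of that range shows how much the final figure depends on where a quote lands within each tier.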

Actowiz Solutions has built end-to-end AI training data extraction infrastructure for AI labs, vertical AI startups, and enterprise ML teams — handling the legal complexity, technical scale, and quality curation in one managed service.

What we deliver:

Multi-terabyte web crawling: distributed infrastructure that crawls millions of domains with full compliance controls

Specialty corpus construction: domain-specific crawls for legal, medical, financial, technical, scientific, and e-commerce data

Multilingual data: coverage across 40+ languages for multilingual model training

PDF, document, and media extraction: specialized pipelines for non-HTML content types

Quality filtering: classifier-driven quality scoring, toxicity filtering, and deduplication at web scale

PII scrubbing: automated redaction pipelines compliant with GDPR, CCPA, and HIPAA standards

Provenance documentation: full source URL, crawl timestamp, license classification, and filter logs per document

Human annotation at scale: our annotation teams deliver instruction-tuning pairs, RLHF preferences, and expert-level evaluations

Compliance frameworks: our legal team maintains guidance on source licensing, regional regulations, and industry best practices

Custom data schemas: output formatted for any downstream pipeline (HuggingFace datasets, Mosaic streaming, custom formats)

Our AI training data pipelines process petabyte-scale web data monthly for AI customers ranging from stealth-mode startups to publicly traded enterprise AI companies.

The legal landscape is evolving rapidly. In the US, scraping publicly available data has broad legal support (hiQ Labs v. LinkedIn), but copyright and Terms of Service questions remain contested in active litigation. Every AI team should work with legal counsel to establish a defensible sourcing posture. Actowiz provides the technical infrastructure and documentation to support whatever compliance posture you adopt.

Yes. By default, we honor robots.txt, crawl-delay directives, and AI-specific opt-out signals (GPTBot, Google-Extended, ClaudeBot equivalents) for the relevant agent identities. Custom configurations are available based on client legal guidance.

Yes. We operate specialized pipelines for legal (case law, regulations, contracts), healthcare (literature, guidelines, machine-readable file (MRF) data), financial (SEC filings, analyst reports), and code (GitHub with license filtering) domains.

We actively crawl and curate data in 40+ languages, including low-resource languages where public data is scarce. Custom language requirements can be scoped.

We implement URL-level, content-hash, MinHash, and embedding-based semantic deduplication. For customers building pre-training corpora, we deliver deduplication reports with cross-source overlap statistics.

Yes — we provide dedicated annotation teams for RLHF preference data, instruction-response pairs, and domain-expert evaluations. Pricing and throughput depend on complexity.

Every document we deliver includes source URL, crawl timestamp, robots.txt state at time of crawl, license classification, and complete processing audit trail. This documentation is designed to withstand due diligence scrutiny.

Pilot projects start at $15,000 for targeted domain corpora. Enterprise pre-training data partnerships are custom-scoped and typically range from $500K to $5M+ annually.
