Introduction

The quality of an AI model depends heavily on the quality of its training data. For US machine learning teams building LLMs, recommendation systems, computer vision models, and other AI applications, acquiring high-quality, diverse, and representative training data at scale is often the most important, and the most challenging, part of the development process.

In 2026, the demand for AI training data has exploded. LLMs require billions to trillions of tokens of diverse text data. Multimodal models need paired text-image datasets. Specialized AI applications in healthcare, finance, and eCommerce require domain-specific data that is not available in standard datasets.

This guide covers the practical strategies, tools, and considerations for collecting AI training data at scale — from web scraping and data partnerships to synthetic data generation and quality assurance.

The AI Training Data Landscape in 2026

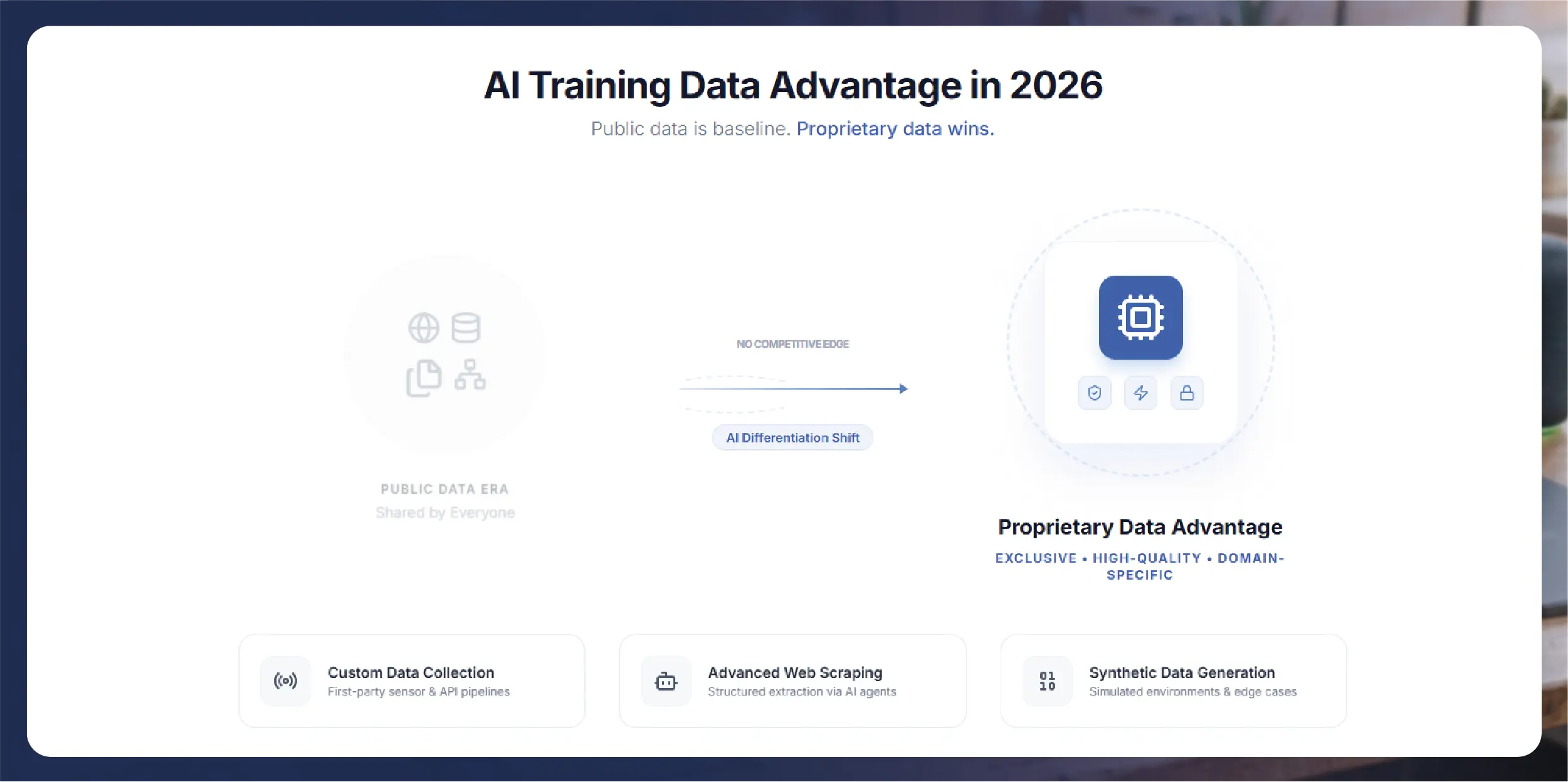

The training data market has evolved significantly. Public datasets like Common Crawl, Wikipedia, and open-source repositories remain important foundations, but they are no longer sufficient for competitive AI development. Every major AI lab and enterprise team is training on similar public data, which means public data alone does not create differentiation.

The competitive advantage in 2026 comes from proprietary, high-quality, domain-specific data that your competitors do not have. This is driving a surge in demand for custom data collection services, specialized web scraping, and synthetic data generation.

Data Collection Methods

Web Scraping for AI Training Data

Web scraping is the most scalable method for collecting large volumes of text, product information, reviews, and other structured data from the public web. For AI training purposes, web scraping can provide product descriptions and specifications for eCommerce AI models, restaurant menus and pricing for food-tech applications, real estate listings for property valuation models, news articles and blog posts for general language models, reviews and social media posts for sentiment analysis training, and technical documentation for specialized knowledge models.

The key differences between scraping for AI training versus scraping for business intelligence are volume (AI training requires orders of magnitude more data), diversity (training data needs to represent the full range of language patterns, formats, and topics), and quality requirements (training data must be cleaned of noise, duplicates, and low-quality content that could degrade model performance).

Licensed and Partnered Data

For domain-specific AI applications, licensing data from industry-specific sources provides higher quality and more targeted coverage than general web scraping. Examples include medical literature databases for healthcare AI, financial data feeds for fintech models, and patent databases for innovation and research AI.

Synthetic Data Generation

Synthetic data — artificially generated data that mimics the statistical properties of real data — is increasingly used to supplement real-world datasets. Synthetic data is particularly valuable for augmenting underrepresented categories to improve model performance on rare cases, generating privacy-safe alternatives to sensitive personal data, and creating edge-case scenarios that are rare in natural data but important for model robustness.

Human-Labeled Data

Many AI applications require labeled or annotated data. Image classification, named entity recognition, sentiment analysis, and other supervised learning tasks need human-labeled training examples. Labeling can be done through in-house annotation teams, crowdsourcing platforms, or specialized labeling services.

Quality Assurance for Training Data

The adage "garbage in, garbage out" applies with particular force to AI training. Quality issues in training data propagate directly into model behavior.

Deduplication is critical because duplicate data points in training sets can cause models to overfit to repeated patterns. Implement hash-based deduplication at the document level and near-duplicate detection at the content level.
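The two levels of deduplication described above can be sketched as follows. This is a minimal illustration, not a production implementation: the function names, the 3-word shingle size, and the 0.8 similarity threshold are all assumptions chosen for the example, and the pairwise near-duplicate comparison is quadratic, so a large corpus would typically swap in an approximate method such as MinHash.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants hash identically."""
    return re.sub(r"\s+", " ", text.strip().lower())


def exact_dedup(docs: list[str]) -> list[str]:
    """Document-level dedup: keep the first document seen for each SHA-256 digest."""
    seen: set[str] = set()
    unique = []
    for doc in docs:
        digest = hashlib.sha256(normalize(doc).encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(doc)
    return unique


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of k-word shingles (overlapping word windows) of a document."""
    words = normalize(text).split()
    if len(words) < k:
        return {tuple(words)}
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: size of the intersection over size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def near_dedup(docs: list[str], threshold: float = 0.8) -> list[str]:
    """Content-level dedup: drop a document if its shingle set is at least
    `threshold` similar to a document already kept. The threshold is an
    illustrative assumption and should be tuned on real data."""
    kept: list[str] = []
    kept_shingles: list[set] = []
    for doc in docs:
        current = shingles(doc)
        if all(jaccard(current, other) < threshold for other in kept_shingles):
            kept.append(doc)
            kept_shingles.append(current)
    return kept
```

Running `exact_dedup` first and `near_dedup` second keeps the expensive similarity comparison to documents that already survived the cheap hash check.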

Content filtering removes low-quality, toxic, biased, or irrelevant content from training datasets. Automated classifiers can flag potentially problematic content, but human review is essential for calibrating filter thresholds.
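As a rough illustration of automated flagging, the sketch below applies a few rule-based heuristics and returns the reasons a document was flagged, so that human reviewers can see which rule fired when calibrating thresholds. Everything here is an assumption made for the example: the `FilterConfig` fields, the default thresholds, and the blocklist approach. A real pipeline would likely combine such rules with trained toxicity or quality classifiers.

```python
from dataclasses import dataclass


@dataclass
class FilterConfig:
    """Illustrative thresholds; these values are assumptions, not recommendations."""
    min_words: int = 5
    min_alpha_ratio: float = 0.6
    max_repeat_ratio: float = 0.3
    blocklist: frozenset = frozenset()


def filter_reasons(text: str, cfg: FilterConfig) -> list[str]:
    """Return the list of rules a document violates (empty list means keep)."""
    reasons = []
    words = text.split()

    # Very short documents carry little training signal.
    if len(words) < cfg.min_words:
        reasons.append("too_short")

    # A low share of alphabetic characters suggests markup, prices, or symbol spam.
    non_space = [c for c in text if not c.isspace()]
    if non_space:
        alpha_ratio = sum(c.isalpha() for c in non_space) / len(non_space)
        if alpha_ratio < cfg.min_alpha_ratio:
            reasons.append("low_alpha_ratio")

    # One word dominating the text suggests keyword stuffing or boilerplate.
    if len(words) >= cfg.min_words:
        most_common = max(words.count(w) for w in set(words))
        if most_common / len(words) > cfg.max_repeat_ratio:
            reasons.append("repetitive")

    # Exact-match blocklist on lowercased words with edge punctuation stripped.
    lowered = {w.strip(".,!?;:").lower() for w in words}
    if lowered & cfg.blocklist:
        reasons.append("blocklisted_term")

    return reasons
```

Returning reasons rather than a single keep-or-drop flag makes it easier to sample flagged documents per rule and adjust one threshold at a time.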

Language and encoding validation ensures all text data is properly encoded, in the expected language, and free of encoding artifacts that could introduce noise.
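A minimal sketch of these checks, using only the Python standard library, might look like the following. The marker list and helper names are assumptions for illustration. The script-ratio function is only a crude proxy for language: it separates Latin-script text from, say, Cyrillic text, but it cannot tell English from French, so a real pipeline would normally add a dedicated language-identification model.

```python
import unicodedata
from typing import Optional

# Common signs of text decoded with the wrong codec (illustrative, not exhaustive).
MOJIBAKE_MARKERS = (
    "\ufffd",              # Unicode replacement character
    "\u00c3\u00a9",        # UTF-8 "e-acute" misread as Latin-1
    "\u00e2\u20ac\u2122",  # UTF-8 right single quote misread as Windows-1252
)


def decode_strict(raw: bytes) -> Optional[str]:
    """Decode bytes as UTF-8, returning None instead of guessing on failure."""
    try:
        return raw.decode("utf-8")
    except UnicodeDecodeError:
        return None


def has_encoding_artifacts(text: str) -> bool:
    """Flag known mojibake sequences and stray control characters."""
    if any(marker in text for marker in MOJIBAKE_MARKERS):
        return True
    return any(
        unicodedata.category(ch) == "Cc" and ch not in "\n\r\t"
        for ch in text
    )


def latin_script_ratio(text: str) -> float:
    """Share of alphabetic characters whose Unicode name marks them as Latin."""
    letters = [ch for ch in text if ch.isalpha()]
    if not letters:
        return 0.0
    latin = sum("LATIN" in unicodedata.name(ch, "") for ch in letters)
    return latin / len(letters)
```

Documents that fail `decode_strict` or trip `has_encoding_artifacts` are usually better re-collected or repaired than passed through, since the noise would otherwise end up in the training set.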

Freshness management tracks the age of data in your training set. For models that need to reflect current information, stale data should be periodically refreshed with new collections.
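One simple way to act on this is to store a collection timestamp with every record and periodically split the dataset into fresh and stale partitions. The sketch below assumes each record is a dict with a timezone-aware `collected_at` field; that field name and the record layout are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def split_by_age(
    records: list[dict],
    max_age_days: int,
    now: Optional[datetime] = None,
) -> tuple[list[dict], list[dict]]:
    """Split records into (fresh, stale) by their `collected_at` timestamp.

    `now` can be passed explicitly to make the split reproducible.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    fresh = [r for r in records if r["collected_at"] >= cutoff]
    stale = [r for r in records if r["collected_at"] < cutoff]
    return fresh, stale
```

The stale partition then becomes the work queue for the next collection run, rather than being silently dropped.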

Bias auditing examines training data for systematic biases in representation, language, and content that could propagate into model outputs. This is particularly important for models deployed in sensitive applications like hiring, lending, and healthcare.
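A representation check is one small, automatable piece of a bias audit. The sketch below compares the observed share of each group in a dataset against a reference distribution and reports groups that deviate by more than a tolerance. The function name, the 5-point tolerance, and the idea that a trustworthy reference distribution is available are all assumptions. It covers representation only and says nothing about biased language or content.

```python
from collections import Counter


def representation_gaps(
    labels: list[str],
    reference: dict[str, float],
    tolerance: float = 0.05,
) -> dict[str, float]:
    """Return observed-minus-expected share for each group outside tolerance.

    Positive values mean the group is over-represented relative to the
    reference; negative values mean it is under-represented.
    """
    counts = Counter(labels)
    total = sum(counts.values())
    gaps = {}
    for group, expected in reference.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if abs(observed - expected) > tolerance:
            gaps[group] = round(observed - expected, 4)
    return gaps
```

For example, a dataset with 80 records from one region and 20 from another, audited against an even 50/50 reference, would report a gap of 0.3 for the first group and -0.3 for the second.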

Legal and Compliance Considerations

AI training data collection in the United States involves navigating an evolving legal landscape. Web scraping of publicly available data is generally permissible, but ML teams should be aware of terms of service restrictions on specific websites, copyright considerations for creative content, privacy regulations when data includes personally identifiable information, and emerging state-level AI and data collection regulations.

Several US states — including Colorado, Connecticut, Maryland, and Minnesota — are expected to be active enforcers of data collection regulations in 2026. ML teams should work with legal counsel to ensure their data collection practices comply with applicable laws.

Best practices for compliant data collection include scraping only publicly available data, implementing PII detection and filtering in your data pipeline, respecting robots.txt and rate limiting to avoid server impact, maintaining documentation of data sources and collection methods, and implementing data retention and deletion policies.
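Two of these practices, respecting robots.txt and filtering PII, can be sketched with the Python standard library. The robots.txt handling uses `urllib.robotparser`; the regular expressions are deliberately simple assumptions that catch common email and US phone formats only, and would miss many other kinds of PII (names, addresses, ID numbers). Treat this as a starting point, not a compliance guarantee.

```python
import re
from urllib.robotparser import RobotFileParser

# Simplified patterns for illustration; real PII detection needs broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_PHONE_RE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def build_robots(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def redact_pii(text: str) -> str:
    """Replace matched emails and phone numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return US_PHONE_RE.sub("[PHONE]", text)


# Example with a hypothetical robots.txt and a hypothetical crawler name.
ROBOTS_TXT = "User-agent: *\nDisallow: /private/\nCrawl-delay: 2\n"
robots = build_robots(ROBOTS_TXT)
allowed = robots.can_fetch("example-bot", "https://example.com/products/1")
blocked = robots.can_fetch("example-bot", "https://example.com/private/x")
delay_seconds = robots.crawl_delay("example-bot")  # seconds between requests, if declared
```

A scraper would check `can_fetch` before each request and sleep for at least `delay_seconds` between requests to the same host, falling back to its own conservative default when the site declares no crawl delay.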

Scaling Your Data Pipeline

For US ML teams building production AI systems, the data collection pipeline must scale from prototyping volumes (thousands of records) to production volumes (millions or billions of records). Key infrastructure considerations include distributed scraping architecture that can handle millions of pages daily, data storage that accommodates the volume and format requirements of AI training, processing pipelines for cleaning, deduplicating, and formatting data, and version control for training datasets to ensure reproducibility.
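For the dataset version control mentioned above, one lightweight approach is to derive a version identifier from the data itself and store it in a manifest next to the training run. The sketch below is an assumption about how that could look: the manifest fields and function names are hypothetical, and it holds all records in memory, so very large datasets would need a streaming or per-shard variant.

```python
import hashlib
import json


def dataset_version(records: list[dict]) -> str:
    """Compute an order-independent fingerprint of a list of JSON-like records.

    Each record is serialized with sorted keys and hashed; the sorted record
    hashes are then hashed again, so reordering records does not change the
    version but any change to content does.
    """
    digests = sorted(
        hashlib.sha256(
            json.dumps(r, sort_keys=True, ensure_ascii=False).encode("utf-8")
        ).hexdigest()
        for r in records
    )
    return hashlib.sha256("\n".join(digests).encode("ascii")).hexdigest()[:16]


def build_manifest(name: str, records: list[dict], sources: list[str]) -> dict:
    """Bundle the version with basic provenance for reproducibility."""
    return {
        "name": name,
        "version": dataset_version(records),
        "num_records": len(records),
        "sources": sorted(sources),
    }
```

Logging the manifest with each training run makes it possible to tell later exactly which snapshot of the data a given model was trained on.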

How Actowiz Supports AI Training Data Collection

Actowiz Solutions provides enterprise-grade web scraping infrastructure optimized for AI training data collection. Our capabilities include large-scale text extraction from 1,000+ web platforms, multi-language data collection supporting 50+ languages, structured data delivery in JSON, CSV, and custom formats, PII filtering and content quality assurance, and dedicated data engineering support for custom collection requirements.

Whether you need product data for eCommerce AI, review data for sentiment models, or domain-specific content for specialized applications, our infrastructure delivers clean, structured, high-quality training data at the scale your ML team needs.

See the quality and structure of Actowiz training data. Request a free sample dataset in your target domain.

Conclusion

Actowiz Solutions helps US AI teams collect high-quality training data at scale through enterprise-grade web scraping, data cleaning, and structured delivery.

You can also reach us for all your mobile app scraping, data collection, web scraping, and instant data scraper service requirements!