The web scraping industry is undergoing its most significant technological shift in a decade. Traditional scraping — the practice of writing code that targets specific HTML elements using CSS selectors or XPath — has been the standard approach since the early 2000s. It works, but it is fundamentally fragile. Every time a website changes its layout, selectors break, scrapers fail, and engineering teams scramble to fix them.

In 2026, AI-powered web scraping is replacing this brittle paradigm with something far more resilient. Vision-LLM agents, built on large language models that can interpret images, can "see" web pages the way humans do: they identify prices, product titles, and other data points visually rather than by targeting elements in the page's code. The implications for US businesses that depend on web data are profound.

Traditional web scraping relies on identifying specific HTML elements on a web page and extracting their content. A scraper targeting an Amazon product price might look for a specific CSS class like .a-price-whole or an XPath like //span[@class='a-price-whole'].
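To make the selector approach concrete, here is a minimal sketch that uses only Python's standard-library `html.parser`. It assumes the price sits in a `<span>` whose class list contains `a-price-whole`, as in the example above. The two HTML snippets are invented for illustration, not copied from Amazon. The second call shows the fragility problem: once the class is renamed, the same code silently returns `None`.

```python
from html.parser import HTMLParser
from typing import Optional


class ClassTextExtractor(HTMLParser):
    """Collect the text of the first <span> whose class list contains target_class."""

    def __init__(self, target_class: str) -> None:
        super().__init__()
        self.target_class = target_class
        self._depth = 0  # > 0 while inside the matched span (counts nested spans)
        self._parts: list[str] = []
        self.result: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if self.result is not None:
            return  # already found a match; ignore the rest of the page
        if self._depth:
            if tag == "span":
                self._depth += 1
            return
        classes = (dict(attrs).get("class") or "").split()
        if tag == "span" and self.target_class in classes:
            self._depth = 1

    def handle_endtag(self, tag):
        if self._depth and tag == "span":
            self._depth -= 1
            if self._depth == 0:
                self.result = "".join(self._parts).strip()

    def handle_data(self, data):
        if self._depth:
            self._parts.append(data)


def extract_by_class(html: str, target_class: str) -> Optional[str]:
    """Return the matched span's text, or None if the selector no longer matches."""
    parser = ClassTextExtractor(target_class)
    parser.feed(html)
    parser.close()
    return parser.result


old_page = '<div><span class="a-price-whole">19</span><span class="a-price-fraction">99</span></div>'
new_page = '<div><span class="price-main">19</span></div>'  # hypothetical redesign
print(extract_by_class(old_page, "a-price-whole"))  # 19
print(extract_by_class(new_page, "a-price-whole"))  # None
```

Nothing in the code is wrong after the redesign; the selector simply stops matching, which is why these failures are easy to miss without monitoring.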

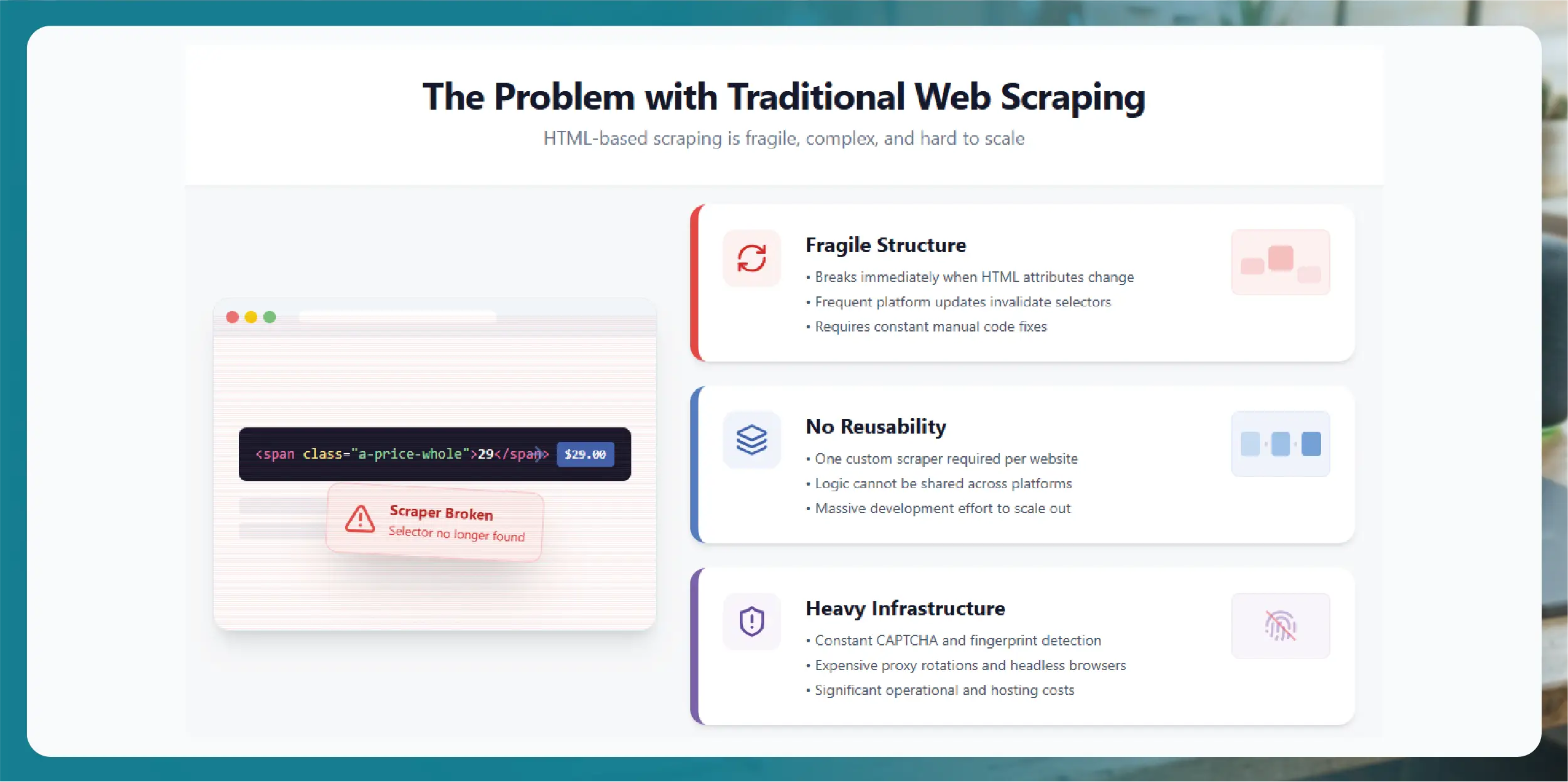

This approach has three fundamental weaknesses.

Fragility: When Amazon (or any website) changes its HTML structure, class names, or page layout, traditional scrapers break. Major eCommerce platforms update their frontend code frequently — sometimes weekly. Each change requires manual scraper maintenance by engineering teams.

Platform-specific code: Every website requires a custom scraper. The code that scrapes Amazon product pages cannot scrape Walmart pages. Scaling to cover hundreds of platforms means building and maintaining hundreds of separate scraping scripts.

Anti-bot evasion complexity: Modern websites deploy sophisticated anti-bot measures including CAPTCHAs, browser fingerprinting, and behavioral analysis. Traditional scrapers require extensive infrastructure for proxy rotation, headless browser management, and CAPTCHA solving.

AI-powered scraping fundamentally changes the extraction approach. Instead of targeting HTML elements, AI systems extract data through visual understanding, natural language processing, and adaptive learning.

Vision-LLM scrapers render a web page in a headless browser and process the visual output — the same view a human user sees. The AI model identifies data elements by their visual appearance and context rather than their underlying code structure.

A price displayed in large, bold text near a product title is recognized as a price regardless of what CSS class it uses. A product rating shown as stars is identified as a rating whether it is rendered as SVG images, Unicode characters, or CSS shapes.

This visual approach is inherently resilient to HTML changes. When Amazon redesigns its product page layout, the price still looks like a price, so the AI can usually still extract it without any code changes.

AI scraping systems can extract data by describing what you want in natural language rather than writing code. Instead of specifying a CSS selector, you tell the AI: "Extract the product name, price, rating, number of reviews, and stock status from this product page."

The AI model interprets this instruction and identifies the corresponding data elements on the page — adapting automatically to different page layouts and structures.
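Vendors expose natural-language extraction in different ways, so the sketch below assumes a deliberately generic, hypothetical interface: a "model" is any callable that takes an instruction and returns text (a real system would also pass the rendered screenshot or page content). It turns the field list from the example instruction above into a prompt, asks for JSON, and keeps only the requested keys. `fake_model` is a stand-in with a canned reply, not a real API call.

```python
import json
from typing import Callable

# Hypothetical interface: prompt in, text out. Not any specific vendor's API.
Model = Callable[[str], str]


def build_instruction(fields: list[str]) -> str:
    """Turn a plain-language field list into an extraction instruction."""
    field_list = ", ".join(fields)
    return (
        f"Extract the following fields from this product page: {field_list}. "
        "Reply with a single JSON object whose keys are exactly these field names. "
        "Use null for any field that is not visible on the page."
    )


def extract(model: Model, fields: list[str]) -> dict:
    """Ask the model for the fields and normalize its JSON reply."""
    data = json.loads(model(build_instruction(fields)))
    if not isinstance(data, dict):
        raise ValueError("model reply is not a JSON object")
    # Missing keys become None; unexpected extra keys are dropped.
    return {name: data.get(name) for name in fields}


def fake_model(prompt: str) -> str:
    # Stand-in for a real vision-LLM call; always returns the same reply.
    return '{"product name": "Desk Lamp", "price": "$24.99", "rating": 4.5}'


fields = ["product name", "price", "rating", "number of reviews", "stock status"]
print(extract(fake_model, fields))
```

Supporting a different page type means changing the `fields` list rather than writing new parsing code, which is the property the text describes.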

AI-powered scrapers generalize from what they have learned. When they encounter a new page layout or an unfamiliar data presentation format, they draw on patterns learned from training on millions of web pages instead of requiring new code. Systems that feed validated corrections back into the model can also become more accurate and more resilient over time with little manual intervention.

Traditional approach: Build and maintain separate scrapers for each of the 100 platforms you need to monitor. Each scraper requires ongoing maintenance.

AI approach: A single AI extraction pipeline can process pages from many different platforms. Adding a new platform to your monitoring requires describing the data you want to extract, not building a new scraper from scratch.

Traditional scrapers require ongoing maintenance every time a target website updates its frontend code. For companies monitoring dozens of platforms, this maintenance burden is substantial — often requiring 2-3 full-time engineers just to keep scrapers running. AI-powered scrapers reduce maintenance dramatically because they are not dependent on specific HTML structures. Website redesigns that would break traditional scrapers are handled automatically by the AI's visual understanding.

Some data is extremely difficult to extract with traditional selectors: dynamic prices that load via JavaScript after the initial page render, data embedded in images or infographics, interactive elements that reveal data only after a user action, and A/B-tested pages whose HTML structure varies between visitors. These scenarios are challenging for traditional scrapers but are handled more naturally by AI systems that process the rendered page visually.

Traditional scrapers excel at extracting structured data from predictable formats. AI-powered scrapers can also extract insights from unstructured content like product reviews, social media posts, and forum discussions. This enables sentiment analysis, trend detection, and competitive intelligence that go beyond simple data extraction.

AI-powered scraping is not a silver bullet. There are important practical considerations.

Cost: AI inference is more computationally expensive than traditional HTML parsing. For extremely high-volume scraping (billions of pages), the cost differential is significant. A hybrid approach — using AI for complex or frequently changing pages and traditional methods for stable, high-volume sources — often provides the best cost-performance balance.
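The cost trade-off behind the hybrid recommendation can be written as a simple blended-cost formula. The symbols are introduced here for illustration and are not figures from any vendor: $N$ is the number of pages scraped, $f$ the fraction routed to AI extraction, and $c_{\text{AI}}$ and $c_{\text{sel}}$ the per-page costs of AI and selector-based extraction. An all-AI pipeline costs $C_{\text{AI-only}} = N\,c_{\text{AI}}$.

$$
C_{\text{hybrid}} = N\left[f\,c_{\text{AI}} + (1 - f)\,c_{\text{sel}}\right]
$$

$$
C_{\text{AI-only}} - C_{\text{hybrid}} = N\,(1 - f)\,(c_{\text{AI}} - c_{\text{sel}})
$$

Because $c_{\text{AI}} > c_{\text{sel}}$, every page moved from the AI path to a stable selector path saves $c_{\text{AI}} - c_{\text{sel}}$, and the saving scales with $N$. That is why routing only complex or frequently changing pages to AI matters most at very high volumes.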

Speed: AI-based extraction is slower per page than traditional parsing. For applications requiring sub-second extraction latency, traditional methods may still be necessary for the extraction step (though AI can handle the page rendering and anti-bot evasion).

Accuracy validation: While AI extraction is remarkably accurate, it is not infallible. Production systems should include validation layers that check extracted data against expected formats, ranges, and historical baselines.
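A validation layer of this kind can start as plain rule checks. The sketch below checks a single extracted price for format, plausible range, and deviation from a historical baseline (the median of recent values). The thresholds (0.01 to 100,000 and a 50% jump) are arbitrary assumptions for illustration and would need tuning per product category.

```python
import re
from statistics import median

# Accepts "24.99", "$24.99", "1999.00", "$1,999.00"; rejects "N/A", "24.9".
PRICE_PATTERN = re.compile(r"^\$?(\d{1,3}(,\d{3})+|\d+)(\.\d{2})?$")


def validate_price(raw: str, history: list[float],
                   lo: float = 0.01, hi: float = 100_000.0,
                   max_jump: float = 0.5) -> list[str]:
    """Return the problems found with an extracted price; [] means it passed."""
    text = raw.strip()
    # 1. Format check: does the string look like a price at all?
    if not PRICE_PATTERN.match(text):
        return [f"format: {raw!r} does not look like a price"]
    value = float(text.replace("$", "").replace(",", ""))
    problems: list[str] = []
    # 2. Range check: reject values outside a plausible window.
    if not lo <= value <= hi:
        problems.append(f"range: {value} is outside [{lo}, {hi}]")
    # 3. Baseline check: flag large jumps relative to recent history.
    if history:
        baseline = median(history)
        if baseline > 0 and abs(value - baseline) / baseline > max_jump:
            problems.append(
                f"baseline: {value} deviates more than {max_jump:.0%} from median {baseline}"
            )
    return problems


print(validate_price("$24.99", [24.99, 25.49, 23.99]))
print(validate_price("$249.90", [24.99, 25.49, 23.99]))
```

The second call is the classic misplaced-decimal error: the value is well-formed and in range, so only the baseline check catches it.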

The most effective scraping systems in 2026 combine AI and traditional methods. AI handles page rendering, anti-bot evasion, and intelligent content identification. For well-understood, stable page structures, traditional parsers handle high-speed extraction. AI validates extraction quality and adapts to changes automatically.
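One way to sketch this hybrid pattern is as a fallback chain: try the cheap selector parser first, and call the AI extractor only when the selectors fail or their output does not pass validation. The three callables are hypothetical placeholders for whatever parser, model client, and validation rules a real system uses. In the architecture described above the validation step may itself be AI-driven, whereas here it is simply any function that returns `True` or `False`.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical interfaces: the selector parser returns None when its selectors
# no longer match; the AI extractor is slower and costlier but does not depend
# on the page's HTML structure.
SelectorParser = Callable[[str], Optional[str]]
AIExtractor = Callable[[str], Optional[str]]
Validator = Callable[[str], bool]


@dataclass
class Result:
    value: Optional[str]
    method: str  # "selector", "ai", or "failed"


def hybrid_extract(html: str, parse: SelectorParser, ai: AIExtractor,
                   is_valid: Validator) -> Result:
    """Fast path first; fall back to AI on a miss or an invalid value."""
    value = parse(html)
    if value is not None and is_valid(value):
        return Result(value, "selector")
    value = ai(html)
    if value is not None and is_valid(value):
        return Result(value, "ai")
    return Result(None, "failed")


looks_like_price = lambda v: v.startswith("$")
print(hybrid_extract("<html>", lambda h: "$19.99", lambda h: "$0.00", looks_like_price))
print(hybrid_extract("<html>", lambda h: None, lambda h: "$19.99", looks_like_price))
```

Recording `method` on each result makes it easy to see how often the AI path is used, which maps directly onto the fraction of pages paying the higher per-page cost.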

This hybrid approach delivers the resilience and adaptability of AI with the speed and cost efficiency of traditional methods.

Actowiz Solutions has integrated AI-powered scraping into our data extraction infrastructure. Our system uses AI visual understanding for automatic adaptation to website changes across 1,000+ platforms. Natural language extraction enables rapid deployment of new data sources without custom scraper development. Hybrid processing combines AI intelligence with traditional speed for optimal cost-performance. Continuous quality validation uses AI to verify extraction accuracy in real time.

The result is 99% data accuracy, dramatically reduced maintenance overhead, and the ability to scale to new platforms faster than any traditional approach.
