Real estate platforms like Inmuebles24 dominate the Spanish and Mexican property markets. Analysts, investors, rental operators, real estate agencies, and valuation firms all rely on the listing data these platforms publish.

Inmuebles24 offers rich data: listing titles, prices, locations, feature summaries, descriptions, specifications, and amenities.

This tutorial shows how to build a production-grade Inmuebles24 Data Extraction Engine using Selenium with undetected-chromedriver, Requests, BeautifulSoup with lxml, and pandas.

This is the exact scraping workflow Actowiz Solutions uses to power property dashboards for Spain + Mexico.

Let’s begin.

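```bash
pip install selenium
pip install beautifulsoup4
pip install pandas
pip install requests
pip install lxml
pip install undetected-chromedriver
# Used later in this tutorial for currency conversion and charts
pip install currencyconverter plotly
```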

Inmuebles24 loads its listing content dynamically with JavaScript, so a real browser driven by Selenium is required.

Mexico

https://www.inmuebles24.com/casas-en-venta-en-ciudad-de-mexico.html

Spain

https://www.inmuebles24.com/casas-en-venta-en-madrid.html

You can modify the property type, operation, and city segments of these URLs to scrape other searches.
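
Both listing URLs follow the same slug pattern, so variations can be generated instead of typed by hand. The sketch below assumes the pattern `<property-type>-en-<operation>-en-<city>.html` holds for other combinations, which the site does not guarantee. `build_search_url` and its parameter names are hypothetical helpers, not part of any library.

```python
BASE_URL = "https://www.inmuebles24.com"

def build_search_url(property_type: str, operation: str, city_slug: str) -> str:
    """Assemble a search URL from the slug pattern seen in the two example URLs.

    Assumption: other property types, operations, and cities use the same
    pattern. Check each generated URL in a browser before scraping it.
    """
    return f"{BASE_URL}/{property_type}-en-{operation}-en-{city_slug}.html"

mexico_url = build_search_url("casas", "venta", "ciudad-de-mexico")
madrid_url = build_search_url("casas", "venta", "madrid")
```

The two calls reproduce the Mexico and Spain URLs used in this tutorial.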

```python
import undetected_chromedriver as uc
from selenium.webdriver.common.by import By
from selenium.webdriver.common.keys import Keys
from time import sleep

browser = uc.Chrome()
browser.get("https://www.inmuebles24.com/casas-en-venta-en-ciudad-de-mexico.html")
sleep(5)  # wait for the initial page load

# Press END repeatedly to trigger lazy loading of more listings
for _ in range(12):
    browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
    sleep(2)
```
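
A fixed number of scrolls can stop too early on long result pages or waste time on short ones. One alternative, sketched here, is to keep scrolling until the page height stops growing. `scroll_until_stable` is a hypothetical helper; it only assumes the driver exposes Selenium's `execute_script` method, and the `pause` and `max_rounds` defaults are arbitrary starting points.

```python
from time import sleep

def scroll_until_stable(driver, pause=2.0, max_rounds=30, wait=sleep):
    """Scroll to the bottom until the page height stops growing.

    `driver` is any object with Selenium's execute_script method.
    Returns the number of scrolls performed.
    """
    last_height = driver.execute_script("return document.body.scrollHeight")
    for round_no in range(1, max_rounds + 1):
        driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
        wait(pause)  # give lazy-loaded cards time to render
        new_height = driver.execute_script("return document.body.scrollHeight")
        if new_height == last_height:
            return round_no  # nothing new loaded, stop early
        last_height = new_height
    return max_rounds
```

It would be called as `scroll_until_stable(browser)` in place of the fixed loop. The `max_rounds` cap keeps an endlessly growing page from looping forever.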

Card CSS class often contains "posting-card". Let's locate all cards.

```python
cards = browser.find_elements(By.XPATH, '//div[contains(@class,"posting-card")]')
mx_records = []
```

Each card contains a title, a price, a location, a feature summary, and a link to the listing.

Let's extract these.

```python
for card in cards:
    # Each field falls back to "" when its element is missing from the card
    try:
        title = card.find_element(By.CLASS_NAME, "posting-card-title").text
    except Exception:
        title = ""

    try:
        price = card.find_element(By.CLASS_NAME, "first-price").text
    except Exception:
        price = ""

    try:
        location = card.find_element(By.CLASS_NAME, "posting-location").text
    except Exception:
        location = ""

    try:
        details = card.find_element(By.CLASS_NAME, "posting-features").text
    except Exception:
        details = ""

    try:
        url = card.find_element(By.TAG_NAME, "a").get_attribute("href")
    except Exception:
        url = ""

    mx_records.append({
        "country": "Mexico",
        "title": title,
        "price_raw": price,
        "location": location,
        "details_raw": details,
        "url": url,
    })
```
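
Because the XPath matches any `div` whose class merely contains "posting-card", nested elements with longer class names may be picked up as separate cards, so the same listing can appear more than once. A minimal sketch of a clean-up step, assuming the listing URL is a usable unique key; `dedupe_by_url` is a hypothetical helper.

```python
def dedupe_by_url(records):
    """Keep the first record for each non-empty URL, preserving order."""
    seen = set()
    unique = []
    for rec in records:
        url = rec.get("url", "")
        if not url or url in seen:
            continue  # skip rows without a link and repeated listings
        seen.add(url)
        unique.append(rec)
    return unique
```

Calling `mx_records = dedupe_by_url(mx_records)` before the detail step also avoids fetching the same page twice. Dropping rows that have no URL is a design choice here, since they cannot be enriched with detail data anyway.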

Detail pages contain the full description, a list of specifications, and the amenities.

Let’s scrape deep details.

```python
import requests
from bs4 import BeautifulSoup

def scrape_inmuebles_detail(url):
    """Fetch a listing detail page and return description, specs, and amenities."""
    try:
        html = requests.get(url, timeout=10).text
        soup = BeautifulSoup(html, "lxml")

        description = soup.find("p", {"class": "posting-description-text"})
        description = description.text.strip() if description else ""

        # Each feature item holds a label <span> followed by its value
        specs = {}
        for sb in soup.find_all("li", {"class": "icon-feature"}):
            label = sb.find("span")
            if label is None:
                continue  # skip items without a label instead of failing the page
            key = label.text.strip()
            specs[key] = sb.text.replace(key, "").strip()

        amenities = [li.text.strip() for li in soup.select(".amenities-list li")]

        return {
            "description": description,
            "specifications": specs,
            "amenities": amenities,
        }
    except Exception:
        # Network errors or an empty URL yield no detail fields
        return {}

for row in mx_records:
    row.update(scrape_inmuebles_detail(row["url"]))
```
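
Since `scrape_inmuebles_detail` returns an empty dict on any failure, a single timeout silently leaves a row without detail fields. The sketch below retries an empty result with a doubling delay. `fetch_with_retry` is a hypothetical wrapper, and the retry count and delays are arbitrary defaults rather than values tuned for this site.

```python
import time

def fetch_with_retry(fetch, url, retries=3, base_delay=2.0, wait=time.sleep):
    """Call fetch(url) until it returns a non-empty result.

    Waits base_delay, then twice that, and so on between attempts.
    Returns {} if every attempt comes back empty.
    """
    for attempt in range(retries):
        result = fetch(url)
        if result:
            return result
        if attempt < retries - 1:
            wait(base_delay * 2 ** attempt)  # 2s, 4s, ... with the defaults
    return {}
```

In the loop this would read `row.update(fetch_with_retry(scrape_inmuebles_detail, row["url"]))`. Adding a short `sleep` between rows as well spaces out requests and lowers the chance of being blocked.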

Open the Spain URL:

```python
browser.get("https://www.inmuebles24.com/casas-en-venta-en-madrid.html")
sleep(5)
```

Scroll and extract the same way.

```python
for _ in range(10):
    browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
    sleep(2)

cards = browser.find_elements(By.XPATH, '//div[contains(@class,"posting-card")]')
spain_records = []

for card in cards:
    # Each field falls back to "" when its element is missing from the card
    try:
        title = card.find_element(By.CLASS_NAME, "posting-card-title").text
    except Exception:
        title = ""

    try:
        price = card.find_element(By.CLASS_NAME, "first-price").text
    except Exception:
        price = ""

    try:
        location = card.find_element(By.CLASS_NAME, "posting-location").text
    except Exception:
        location = ""

    try:
        details = card.find_element(By.CLASS_NAME, "posting-features").text
    except Exception:
        details = ""

    try:
        url = card.find_element(By.TAG_NAME, "a").get_attribute("href")
    except Exception:
        url = ""

    spain_records.append({
        "country": "Spain",
        "title": title,
        "price_raw": price,
        "location": location,
        "details_raw": details,
        "url": url,
    })

for row in spain_records:
    row.update(scrape_inmuebles_detail(row["url"]))
```
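
The Mexico and Spain loops differ only in the country label, so the card logic can live in one function. This is a sketch of that refactor, not the original workflow. `extract_card` and `CARD_FIELDS` are hypothetical names, and the locator strings are the plain values behind Selenium's `By.CLASS_NAME` and `By.TAG_NAME` constants, spelled out so the block has no imports.

```python
# Selenium's By.CLASS_NAME and By.TAG_NAME are these plain strings
BY_CLASS = "class name"
BY_TAG = "tag name"

# Output field -> CSS class used on the listing cards in this tutorial
CARD_FIELDS = {
    "title": "posting-card-title",
    "price_raw": "first-price",
    "location": "posting-location",
    "details_raw": "posting-features",
}

def extract_card(card, country):
    """Build one record from a listing card; missing fields become ""."""
    record = {"country": country}
    for field, class_name in CARD_FIELDS.items():
        try:
            record[field] = card.find_element(BY_CLASS, class_name).text
        except Exception:
            record[field] = ""
    try:
        record["url"] = card.find_element(BY_TAG, "a").get_attribute("href")
    except Exception:
        record["url"] = ""
    return record
```

With this in place, each country reduces to one line, for example `spain_records = [extract_card(c, "Spain") for c in cards]`, and a selector change only needs to be made in `CARD_FIELDS`.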

```python
import pandas as pd

df = pd.DataFrame(mx_records + spain_records)
df.head()
```

Prices arrive as raw strings that mix a currency marker (MXN, $, or €) with digits and thousands separators.

Let’s clean:

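```python
import re

def clean_price(val):
    """Return the first number in a raw price string as an int, or None."""
    if not val:
        return None
    # Accept both "," and "." as thousands separators (Spanish formatting
    # uses "."); this assumes listing prices are whole numbers
    nums = re.findall(r"\d[\d.,]*", val)
    if not nums:
        return None
    return int(re.sub(r"[.,]", "", nums[0]))
```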

Apply:

```python
df["price_num"] = df["price_raw"].apply(clean_price)
```

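```python
def detect_currency(val):
    if "€" in val:
        return "EUR"
    # Check USD first, because a bare "$" is treated as Mexican pesos
    if "USD" in val or "US$" in val:
        return "USD"
    if "MXN" in val or "$" in val:
        return "MXN"
    return None

df["currency"] = df["price_raw"].apply(detect_currency)
```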

```python
from currency_converter import CurrencyConverter

c = CurrencyConverter()

def convert_to_usd(row):
    try:
        return c.convert(row["price_num"], row["currency"], "USD")
    except Exception:
        # Missing price or unrecognized currency
        return None

df["price_usd"] = df.apply(convert_to_usd, axis=1)
```

details_raw usually includes the number of bedrooms, the number of bathrooms, and the area in m².

Extract bedrooms:

```python
def extract_beds(val):
    match = re.search(r"(\d+)\s?(rec|hab|bed)", val.lower())
    return int(match.group(1)) if match else None
```

Extract bathrooms:

```python
def extract_baths(val):
    match = re.search(r"(\d+)\s?ba", val.lower())
    return int(match.group(1)) if match else None
```

Extract area:

```python
def extract_area(val):
    match = re.search(r"(\d+)\s?m²", val.lower())
    return int(match.group(1)) if match else None
```

Apply:

```python
df["beds"] = df["details_raw"].apply(extract_beds)
df["baths"] = df["details_raw"].apply(extract_baths)
df["area_m2"] = df["details_raw"].apply(extract_area)
```
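
Feature strings vary between listings: some write "m2" instead of "m²", and Spanish listings may say "dormitorios" rather than "recámaras" or "habitaciones". The sketch below folds the three extractors into one table-driven function that tolerates those variants and empty input. `parse_details`, `PATTERNS`, and the sample strings are illustrative assumptions, not text taken from the site.

```python
import re

# One regex per output field; each captures the leading integer
PATTERNS = {
    "beds": r"(\d+)\s?(?:rec|hab|dorm|bed)",
    # Narrower than a bare "ba" so words like "balcones" are not counted
    "baths": r"(\d+)\s?(?:bañ|ban|bath)",
    "area_m2": r"(\d+)\s?m(?:²|2)",
}

def parse_details(raw):
    """Extract beds, baths, and area from a feature string; None if absent."""
    text = (raw or "").lower()
    result = {}
    for field, pattern in PATTERNS.items():
        match = re.search(pattern, text)
        result[field] = int(match.group(1)) if match else None
    return result

mx = parse_details("180 m² 3 rec. 2 baños")
es = parse_details("95 m2 2 hab. 1 baño")
empty = parse_details(None)
```

Adding a new term then means editing one pattern in `PATTERNS`. Only whole numbers are captured, so a value such as "2.5 baños" would need a different pattern.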

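Final fields: country, title, price_raw, location, details_raw, url, description, specifications, amenities, price_num, currency, price_usd, beds, baths, and area_m2.

```python
df.to_csv("inmuebles24_property_data.csv", index=False)
```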
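
The `specifications` column holds dicts and `amenities` holds lists, which a CSV stores as Python-style text that is awkward to parse back. One option, sketched here, is to encode nested values as JSON before building the DataFrame. `flatten_for_csv` is a hypothetical helper and the sample record is made up for illustration.

```python
import json

def flatten_for_csv(record):
    """Return a copy of record with dict and list values encoded as JSON text."""
    flat = {}
    for key, value in record.items():
        if isinstance(value, (dict, list)):
            # ensure_ascii=False keeps accented Spanish text readable
            flat[key] = json.dumps(value, ensure_ascii=False)
        else:
            flat[key] = value
    return flat

example = flatten_for_csv({
    "title": "Casa en venta",
    "specifications": {"Recámaras": "3"},
    "amenities": ["Jardín", "Alberca"],
})
```

Applied to the scraped rows, this would look like `pd.DataFrame([flatten_for_csv(r) for r in mx_records + spain_records])`, and a reader of the CSV can restore the nested values with `json.loads`.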

Price distribution:

```python
import plotly.express as px

fig = px.box(df, x="country", y="price_usd", title="Price Distribution: Spain vs Mexico")
fig.show()
```

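Listings are lazy-loaded, so a full page requires multiple scroll loops.

Regex patterns must support Spanish real estate terms.

Prices mix currency formats: MXN $, EUR €, and USD $.

Some units load via JavaScript only.

A delay between requests is required to avoid blocking.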


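In this tutorial, you learned how to scrape Inmuebles24 listing cards with Selenium, fetch detail pages with Requests and BeautifulSoup, clean prices, detect and convert currencies, extract bedrooms, bathrooms, and area, export the dataset to CSV, and visualize price distributions.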

This lays the foundation for a complete Spain + Mexico real estate intelligence platform.

You can also reach us for all your mobile app scraping, data collection, web scraping, and instant data scraper service requirements!

Our web scraping expertise is relied on by 4,000+ global enterprises including Zomato, Tata Consumer, Subway, and Expedia — helping them turn web data into growth.

Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

Extract real-time travel mode data via APIs to power smarter AI travel apps with live route updates, transit insights, and seamless trip planning.

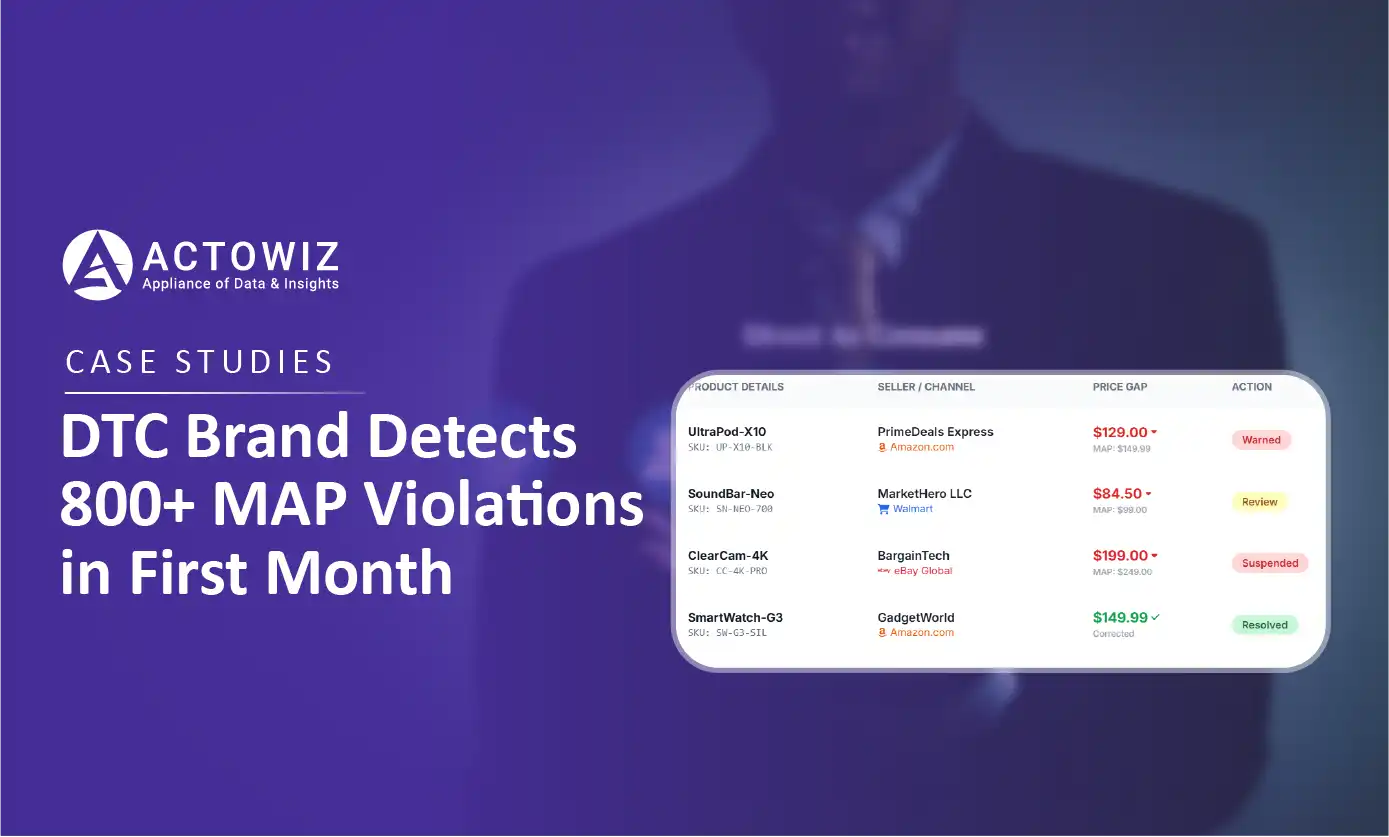

How a $50M+ consumer electronics brand used Actowiz MAP monitoring to detect 800+ violations in 30 days, achieving 92% resolution rate and improving retailer satisfaction by 40%.

Track UK Grocery Products Daily Using Automated Data Scraping across Morrisons, Asda, Tesco, Sainsbury’s, Iceland, Co-op, Waitrose, and Ocado for insights.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.