Luxury eCommerce websites are different from ordinary retail stores. They use:

But brands, pricing analysts, resale platforms, and competitive intelligence teams often need:

This tutorial teaches you how to scrape Dior, Louis Vuitton, Gucci, and Sephora safely and cleanly using Python, Selenium, and BeautifulSoup.

Important: Luxury sites are sensitive to automated traffic. Scrape ethically: add delays between requests, rotate IPs, and avoid high-frequency crawling.
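As a rough illustration of the delay and politeness advice, the sketch below checks a URL against robots.txt rules and picks a randomized pause between page loads. The rules in `SAMPLE_ROBOTS`, the `example.com` URLs, the user-agent name, and the helper names are assumptions made for this example; they are not taken from any of the sites covered here.

```python
import random
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the sketch runs offline.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /checkout
Disallow: /account
"""


def build_parser(robots_text):
    """Build a robots.txt parser from raw text."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


def is_allowed(parser, url, user_agent="price-research-bot"):
    """Return True if the parsed rules permit this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


def next_delay(base=2.0, jitter=1.5):
    """Pick a pause in seconds between base and base + jitter."""
    return random.uniform(base, base + jitter)


parser = build_parser(SAMPLE_ROBOTS)
print(is_allowed(parser, "https://example.com/en/makeup"))
print(is_allowed(parser, "https://example.com/checkout/cart"))
print(round(next_delay(), 2))
```

Against a live site, `RobotFileParser.set_url()` followed by `read()` would fetch the real rules, and `sleep(next_delay())` could stand in for the fixed pauses used in this tutorial.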

Let's begin.

    pip install selenium
    pip install beautifulsoup4
    pip install requests
    pip install pandas
    pip install pillow
    pip install lxml

You'll use:

Luxury websites typically:

That's why Selenium is required.

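    from time import sleep

    import pandas as pd
    from selenium import webdriver
    from selenium.webdriver.common.by import By
    from selenium.webdriver.common.keys import Keys  # needed for Keys.END when scrolling

    browser = webdriver.Chrome()
    browser.get("https://www.dior.com/en_gb/beauty/makeup")
    sleep(4)  # give the JavaScript-rendered grid time to load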

Scroll for products:

    for _ in range(6):
        # Jump to the bottom of the page to trigger lazy loading, then wait
        browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
        sleep(2)
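A fixed number of scrolls can stop too early on long listings or waste time on short ones. One possible alternative is to keep scrolling until the page height stops growing. The helper below is a sketch of that idea; `scroll_until_stable` and its parameters are names invented for this example, and it takes plain callables so it can be tried without a browser.

```python
import time


def scroll_until_stable(get_height, scroll_down, pause=2.0, max_rounds=30,
                        sleep=time.sleep):
    """Scroll until the page height stops changing or max_rounds is reached.

    get_height: callable returning the current page height.
    scroll_down: callable that scrolls to the bottom of the page.
    Returns the number of scroll rounds performed.
    """
    rounds = 0
    last_height = get_height()
    while rounds < max_rounds:
        scroll_down()
        sleep(pause)  # wait for lazy-loaded products to render
        rounds += 1
        new_height = get_height()
        if new_height == last_height:
            break  # nothing new appeared, assume the grid is complete
        last_height = new_height
    return rounds


# Offline demo with made-up heights: the page grows twice, then stops.
fake_heights = iter([1000, 2000, 3000, 3000])
demo_rounds = scroll_until_stable(
    get_height=lambda: next(fake_heights),
    scroll_down=lambda: None,
    sleep=lambda seconds: None,  # skip real waiting in the demo
)
print(demo_rounds)

# With the Selenium `browser` from this tutorial, the call might look like:
# scroll_until_stable(
#     get_height=lambda: browser.execute_script("return document.body.scrollHeight"),
#     scroll_down=lambda: browser.execute_script(
#         "window.scrollTo(0, document.body.scrollHeight);"),
# )
```

The `max_rounds` cap keeps the loop bounded on pages that load content endlessly.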

Locate product cards:

items = browser.find_elements(By.XPATH, '//article[contains(@class,"product-tile")]')

Extract details:

    dior_records = []

    for item in items:
        # A field missing from a card is stored as an empty string
        try:
            name = item.find_element(By.CLASS_NAME, "product-tile__title").text
        except Exception:
            name = ""

        try:
            price = item.find_element(By.CLASS_NAME, "price__amount").text
        except Exception:
            price = ""

        try:
            url = item.find_element(By.TAG_NAME, "a").get_attribute("href")
        except Exception:
            url = ""

        dior_records.append({"brand": "Dior", "name": name, "price": price, "url": url})

Louis Vuitton uses dynamic grid loading and region locks.

Open:

    browser.get("https://www.louisvuitton.com/eng-ae/women/all-handbags")
    sleep(5)

Scroll:

    for _ in range(10):
        browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
        sleep(2)

Extract:

    lv_items = browser.find_elements(By.XPATH, '//li[@class="product-item"]')

    lv_records = []

    for item in lv_items:
        try:
            title = item.find_element(By.CLASS_NAME, "product-item__title").text
        except Exception:
            title = ""

        try:
            price = item.find_element(By.CLASS_NAME, "product-item__price").text
        except Exception:
            price = ""

        try:
            url = item.find_element(By.TAG_NAME, "a").get_attribute("href")
        except Exception:
            url = ""

        lv_records.append({"brand": "Louis Vuitton", "name": title, "price": price, "url": url})

Open Gucci (the URL below points to the US storefront):

    browser.get("https://www.gucci.com/us/en/ca/women/handbags-c-women-handbags")
    sleep(5)

Scroll:

    for _ in range(8):
        browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
        sleep(2)

Extract:

    gucci_records = []

    products = browser.find_elements(By.XPATH, '//div[contains(@class,"product-tiles-grid-item")]')

    for p in products:
        try:
            title = p.find_element(By.CLASS_NAME, "product-tiles-grid-item__name").text
        except Exception:
            title = ""

        try:
            price = p.find_element(By.CLASS_NAME, "product-tiles-grid-item__price").text
        except Exception:
            price = ""

        try:
            url = p.find_element(By.TAG_NAME, "a").get_attribute("href")
        except Exception:
            url = ""

        gucci_records.append({"brand": "Gucci", "name": title, "price": price, "url": url})

Open Sephora UAE:

    browser.get("https://www.sephora.ae/en/categories/makeup")
    sleep(5)

Extract:

    sephora_records = []

    items = browser.find_elements(By.XPATH, '//div[contains(@class,"product-grid-item")]')

    for item in items:
        try:
            title = item.find_element(By.CLASS_NAME, "product-item-name").text
        except Exception:
            title = ""

        try:
            price = item.find_element(By.CLASS_NAME, "price").text
        except Exception:
            price = ""

        try:
            url = item.find_element(By.TAG_NAME, "a").get_attribute("href")
        except Exception:
            url = ""

        sephora_records.append({"brand": "Sephora", "name": title, "price": price, "url": url})

Combine the four record lists into one DataFrame:

    df = pd.DataFrame(dior_records + lv_records + gucci_records + sephora_records)
    df
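The four extraction loops above repeat the same try/except pattern with different class names. One way to cut that repetition is a small helper that takes the class names as arguments. The sketch below is an illustration, not part of the original scripts: `extract_card` is a made-up name, and the locator strings `"class name"` and `"tag name"` are the values behind Selenium's `By.CLASS_NAME` and `By.TAG_NAME`, written out so the snippet has no import-time dependency.

```python
def extract_card(card, brand, title_class, price_class):
    """Read name, price, and URL from one product card.

    `card` is expected to behave like a Selenium WebElement. Any field that
    cannot be read is returned as an empty string.
    """
    def safe(getter):
        try:
            return getter() or ""
        except Exception:
            return ""

    return {
        "brand": brand,
        "name": safe(lambda: card.find_element("class name", title_class).text),
        "price": safe(lambda: card.find_element("class name", price_class).text),
        "url": safe(lambda: card.find_element("tag name", "a").get_attribute("href")),
    }


# Possible usage with the element lists collected earlier in this tutorial:
# dior_records = [
#     extract_card(item, "Dior", "product-tile__title", "price__amount")
#     for item in items
# ]
```

Each site then needs only its brand label and two class names, which keeps later selector changes in one place.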

Now you have a consolidated luxury brand dataset across 4 platforms.

Luxury products include:

Add regex:

    import re

    def extract_size(t):
        # Match sizes such as "30ml", "30 ml", "3.5 g", or "1.7oz"
        match = re.search(r"(\d+(?:\.\d+)?\s?(?:ml|g|oz))\b", t.lower())
        return match.group(1) if match else None

    df["size"] = df["name"].apply(extract_size)

Extract color variant:

    def extract_color(t):
        colors = ["pink", "red", "brown", "black", "white", "gold", "blue", "beige"]
        name = t.lower()
        for c in colors:
            # Match whole words only, so "red" is not found inside "powdered"
            if re.search(rf"\b{c}\b", name):
                return c
        return None

    df["color"] = df["name"].apply(extract_color)

Luxury products have complex categories.

Define a simple rule-based classifier:

    def classify_category(name):
        n = name.lower()
        # Rules are checked in order; the first matching keyword wins
        if "bag" in n or "tote" in n or "wallet" in n:
            return "Luxury Handbag"
        if "lipstick" in n or "foundation" in n:
            return "Luxury Makeup"
        if "perfume" in n or "eau" in n:
            return "Luxury Fragrance"
        return "Other"

    df["category"] = df["name"].apply(classify_category)

Export the dataset:

    df.to_csv("luxury_brand_data.csv", index=False)

Output sample:

    {
      "brand": "Gucci",
      "name": "GG Marmont mini bag black",
      "price": "$2350",
      "url": "https://www.gucci.com/...",
      "size": null,
      "color": "black",
      "category": "Luxury Handbag"
    }

Luxury brands aggressively block scrapers.
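When a site starts refusing requests, backing off is usually gentler than retrying immediately. The sketch below wraps any page-loading step in a retry loop with exponentially growing, slightly randomized pauses. The name `with_backoff` and its defaults are assumptions chosen for this illustration.

```python
import random
import time


def with_backoff(action, attempts=4, base=2.0, sleep=time.sleep):
    """Call `action` until it succeeds, pausing longer after each failure.

    The pause before retry number k (counting from 0) is base * 2**k seconds
    plus up to one second of random jitter. The last failure is re-raised.
    """
    for attempt in range(attempts):
        try:
            return action()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of retries, surface the original error
            sleep(base * 2 ** attempt + random.uniform(0, 1))


# Possible usage with the Selenium `browser` from this tutorial:
# with_backoff(lambda: browser.get("https://www.dior.com/en_gb/beauty/makeup"))
```

With the defaults, a step that keeps failing is retried after roughly 2, 4, and 8 seconds before the error is raised.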

Prices are region-specific.
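Because the scraped prices are raw strings in different currencies, comparing them across regions needs a normalization step first. The sketch below splits a price string into a currency code and a numeric amount. It assumes commas are thousands separators and dots are decimal points, and it maps "$" to USD even though other dollar currencies use the same symbol. `parse_price` and `SYMBOL_TO_CODE` are names made up for this example.

```python
import re
from decimal import Decimal

# Assumed symbol mapping; "$" is treated as USD, which is not always correct.
SYMBOL_TO_CODE = {"$": "USD", "£": "GBP", "€": "EUR"}


def parse_price(raw):
    """Split a price string into (currency_code, amount).

    Either part is None when it cannot be determined.
    """
    text = raw.strip()
    if not text:
        return None, None

    # Prefer an explicit three-letter code such as "AED"
    code_match = re.search(r"\b[A-Z]{3}\b", text)
    if code_match:
        currency = code_match.group(0)
    else:
        currency = next(
            (code for symbol, code in SYMBOL_TO_CODE.items() if symbol in text),
            None,
        )

    # Digits with optional comma separators and an optional decimal part
    number_match = re.search(r"\d[\d,]*(?:\.\d+)?", text)
    amount = Decimal(number_match.group(0).replace(",", "")) if number_match else None
    return currency, amount


for sample in ["$2350", "AED 9,500", "£1,290.00"]:
    print(sample, "->", parse_price(sample))
```

Sites that format prices with dots as thousands separators would need a different number pattern, so it is worth checking a few rows per region before trusting the parsed amounts.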

HTML structure differs by region.
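One way to cope with markup that changes between regional storefronts is to try several candidate class names in order and keep the first that yields text. The sketch below shows the idea. `first_text` is a made-up helper, `"class name"` is the string behind Selenium's `By.CLASS_NAME`, and the second entry in `TITLE_CLASSES` is an invented placeholder rather than a class observed on any site.

```python
def first_text(card, class_names):
    """Return the text of the first candidate class found on the card.

    `card` is expected to behave like a Selenium WebElement. Returns an
    empty string when none of the candidates match.
    """
    for name in class_names:
        try:
            text = card.find_element("class name", name).text
        except Exception:
            continue  # this layout does not use the class, try the next one
        if text:
            return text
    return ""


# First entry comes from this tutorial; the second is a hypothetical variant.
TITLE_CLASSES = ["product-item__title", "product-card__name"]

# Possible usage inside an extraction loop:
# title = first_text(item, TITLE_CLASSES)
```

Keeping the candidate lists in one place makes it easier to add a new regional variant once it is observed.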

Large data transfers.

Some products hide shades behind JS pop-ups, which must be opened manually or auto-clicked.

Use DIY for:

Use Actowiz Solutions for:

Actowiz crawlers handle:

Across USA, UAE, UK, Europe, Singapore and more.

Luxury eCommerce scraping requires:

With this tutorial, you can extract pricing, product metadata, URLs, colors, and sizes across Dior, LV, Gucci, and Sephora.

But if your goal is production-scale luxury analytics, Actowiz Solutions provides a complete Luxury Brand Intelligence Suite trusted by global retail teams.

You can also reach us for all your mobile app scraping, data collection, web scraping, and instant data scraper service requirements!

Our web scraping expertise is relied on by 4,000+ global enterprises including Zomato, Tata Consumer, Subway, and Expedia — helping them turn web data into growth.

Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

Extract real-time travel mode data via APIs to power smarter AI travel apps with live route updates, transit insights, and seamless trip planning.

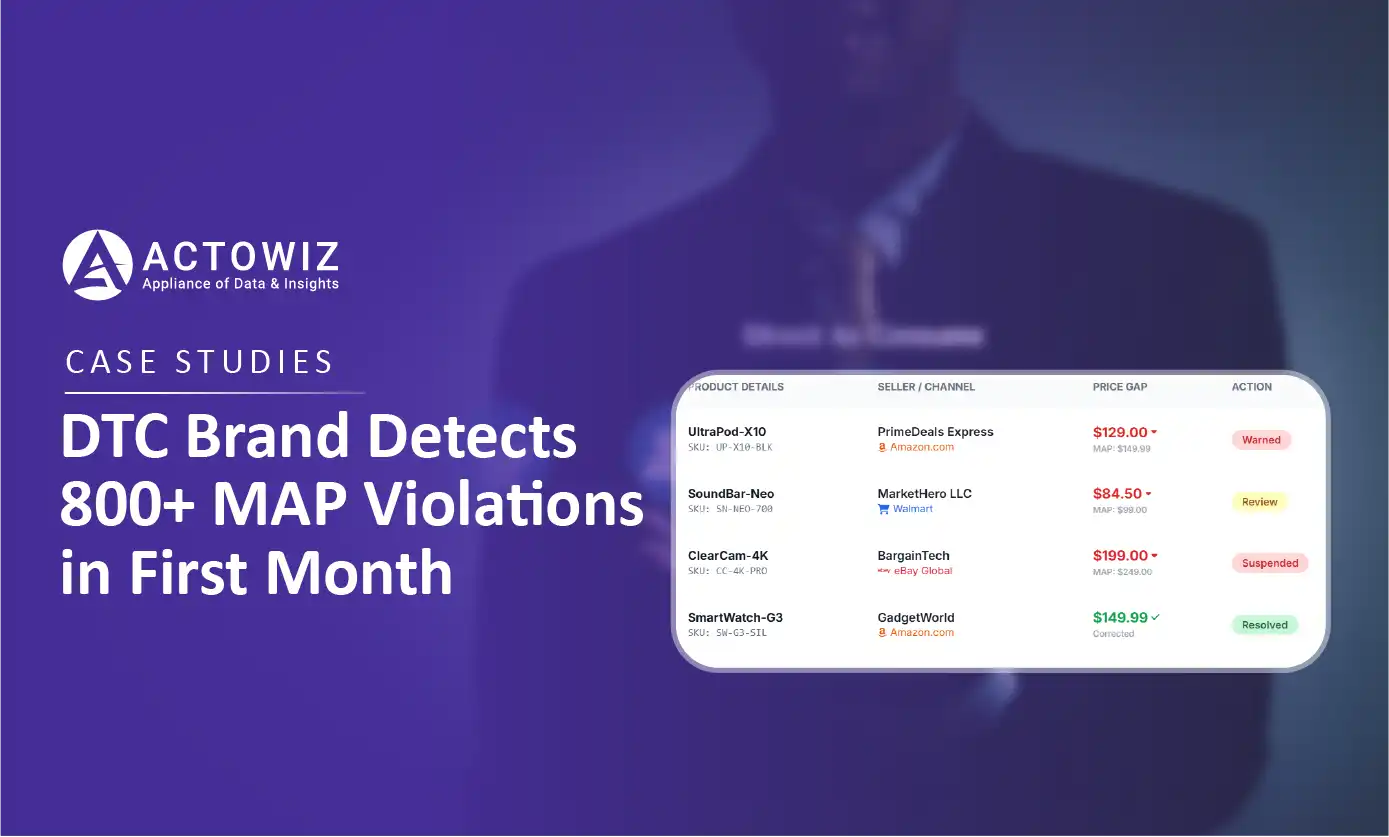

How a $50M+ consumer electronics brand used Actowiz MAP monitoring to detect 800+ violations in 30 days, achieving 92% resolution rate and improving retailer satisfaction by 40%.

Track UK Grocery Products Daily Using Automated Data Scraping across Morrisons, Asda, Tesco, Sainsbury’s, Iceland, Co-op, Waitrose, and Ocado for insights.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.