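India’s real estate ecosystem has become increasingly dependent on RERA (Real Estate Regulatory Authority) data. Every state maintains a separate RERA portal with its own project registrations, promoter details, documents, and progress reports.

Brands, investors, lending institutions, property portals, valuation firms, and consultants all depend on this data.

But PAN India RERA data scraping is extremely challenging: every state portal is built differently, documents come in different formats (including scanned files), some state servers are outdated, and frequent scraping may get blocked.

This tutorial shows how to build a RERA Data Aggregation Engine using Selenium, BeautifulSoup, Requests, pandas, pypdf, tabula-py, and fuzzywuzzy.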

This is the same technical framework Actowiz Solutions deploys for clients across real estate intelligence.

```bash
pip install selenium
pip install beautifulsoup4
pip install pandas
pip install requests
pip install pypdf
pip install tabula-py
pip install fuzzywuzzy
pip install python-Levenshtein
```

Each state has its own portal:

| State | Portal |
|---|---|
| Maharashtra | https://maharera.mahaonline.gov.in |
| Gujarat | https://gujrera.gujarat.gov.in |
| Karnataka | https://rera.karnataka.gov.in |
| Delhi | https://rera.delhi.gov.in |
| Tamil Nadu | https://www.tnrera.in |
| Rajasthan | https://rera.rajasthan.gov.in |
| Telangana | https://rera.telangana.gov.in |
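Because each portal has its own URL and page layout, it can help to keep per-state settings in one place rather than scattering them through the scraper. The sketch below is one possible layout, not the framework's actual code: the `StatePortal` dataclass, the two-letter keys, the `needs_browser` flag, and the `get_portal` helper are illustrative assumptions. Only the URLs come from the portal table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StatePortal:
    """Per-state scraper settings (hypothetical structure)."""
    state: str
    base_url: str
    # Assumption: default to a browser-driven scrape until a portal
    # is known to serve plain static HTML.
    needs_browser: bool = True


# URLs are taken from the portal table; the keys are an illustrative choice.
PORTALS = {
    "MH": StatePortal("Maharashtra", "https://maharera.mahaonline.gov.in"),
    "GJ": StatePortal("Gujarat", "https://gujrera.gujarat.gov.in"),
    "KA": StatePortal("Karnataka", "https://rera.karnataka.gov.in"),
    "DL": StatePortal("Delhi", "https://rera.delhi.gov.in"),
    "TN": StatePortal("Tamil Nadu", "https://www.tnrera.in"),
    "RJ": StatePortal("Rajasthan", "https://rera.rajasthan.gov.in"),
    "TS": StatePortal("Telangana", "https://rera.telangana.gov.in"),
}


def get_portal(code: str) -> StatePortal:
    """Look up a portal by state code, with a clear error for unsupported states."""
    try:
        return PORTALS[code.upper()]
    except KeyError:
        raise ValueError(f"No portal configured for state code {code!r}") from None


print(get_portal("mh").base_url)
```

A registry like this lets one scraping loop iterate over `PORTALS.values()` while state-specific selectors live next to the URL they belong to.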

```python
from selenium import webdriver
from selenium.webdriver.common.by import By
from time import sleep

browser = webdriver.Chrome()
browser.get("https://maharera.mahaonline.gov.in/SearchList/Search")
sleep(3)
```

Example: Select “Mumbai Suburban”

```python
district_dropdown = browser.find_element(By.ID, "DistrictID")
district_dropdown.click()

option = browser.find_element(By.XPATH, '//option[contains(text(),"Mumbai Suburban")]')
option.click()

search_btn = browser.find_element(By.ID, "btnSearch")
search_btn.click()
sleep(4)  # give the results table time to load

# Read every row of the results table
rows = browser.find_elements(By.XPATH, '//table[@id="tblProjects"]/tbody/tr')

rera_records = []

for row in rows:
    cols = row.find_elements(By.TAG_NAME, "td")
    if len(cols) > 5:
        # A row without a link in the name cell gets an empty details_url
        # instead of raising NoSuchElementException
        links = cols[1].find_elements(By.TAG_NAME, "a")
        rera_records.append({
            "rera_id": cols[0].text,
            "project_name": cols[1].text,
            "promoter": cols[2].text,
            "district": cols[3].text,
            "type": cols[4].text,
            "status": cols[5].text,
            "details_url": links[0].get_attribute("href") if links else "",
        })
```

Each RERA portal shows deeper details inside the project page:

```python
import requests
from bs4 import BeautifulSoup

def scrape_project_details(url):
    """Return the label/value pairs from a project page, or {} on failure."""
    try:
        r = requests.get(url, timeout=30)
        r.raise_for_status()
    except requests.RequestException:
        return {}

    # html.parser ships with Python; "lxml" would need a separate install
    soup = BeautifulSoup(r.text, "html.parser")
    table = soup.find("table", {"class": "table"})
    if table is None:
        return {}

    data = {}
    for tr in table.find_all("tr"):
        tds = tr.find_all("td")
        if len(tds) == 2:
            data[tds[0].text.strip()] = tds[1].text.strip()
    return data

for entry in rera_records:
    entry["details"] = scrape_project_details(entry["details_url"])
```

Most RERA portals provide PDF documents.
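A link that ends in `.pdf` does not always return a real PDF; an error page or a login redirect can come back instead (an assumption about portal behaviour, not something verified here). A cheap guard is to check the file header before handing the bytes to a PDF parser, since PDF files carry the marker `%PDF-` at or near the start. The helper name `looks_like_pdf` is hypothetical.

```python
def looks_like_pdf(content: bytes) -> bool:
    """Return True if the payload carries a PDF header.

    The marker is normally at byte 0; some files have a few stray bytes
    before it, so the first 1024 bytes are searched.
    """
    return b"%PDF-" in content[:1024]


# Usage with in-memory samples (no network access needed)
pdf_bytes = b"%PDF-1.7\n1 0 obj\n<< >>\nendobj\n"
html_bytes = b"<html><body>Service unavailable</body></html>"

print(looks_like_pdf(pdf_bytes))   # True
print(looks_like_pdf(html_bytes))  # False
```

A check like this could be applied to the downloaded bytes before they are parsed, so that non-PDF responses are skipped or logged rather than raising inside the PDF reader.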

To extract text:

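```python
import requests
from pypdf import PdfReader

def extract_pdf_text(pdf_url):
    """Download a PDF and return the text of all its pages."""
    response = requests.get(pdf_url, timeout=60)
    response.raise_for_status()

    with open("temp.pdf", "wb") as f:
        f.write(response.content)

    reader = PdfReader("temp.pdf")
    text = ""
    for page in reader.pages:
        # Scanned (image-only) pages can yield no text at all
        text += (page.extract_text() or "") + "\n"
    return text
```

Extract all PDFs linked on the project page: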

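```python
from urllib.parse import urljoin

def get_pdfs(soup, base_url=""):
    """Collect PDF links from a parsed page; relative links are resolved against base_url."""
    pdf_links = []
    for a in soup.find_all("a", href=True):
        if ".pdf" in a["href"].lower():
            pdf_links.append(urljoin(base_url, a["href"]))
    return pdf_links
```

Every state writes names differently.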

Use fuzzy matching:

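```python
import pandas as pd
from fuzzywuzzy import fuzz  # for comparing cleaned names, e.g. fuzz.ratio(a, b)

def normalize_name(name):
    name = name.lower().strip()
    unwanted = ["pvt ltd", "private limited", "llp", "developers", "group", "builders"]
    for w in unwanted:
        name = name.replace(w, "")
    # Collapse the gaps left behind by removed words
    return " ".join(name.split())

df = pd.DataFrame(rera_records)

# Column names follow the schema table
df["project_clean"] = df["project_name"].apply(normalize_name)
df["promoter_clean"] = df["promoter"].apply(normalize_name)
```

Once you have scraped Maharashtra, Karnataka, Gujarat, etc., combine the state-level DataFrames: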

```python
final_df = pd.concat([mh_df, ka_df, gj_df, dl_df])
final_df.reset_index(drop=True, inplace=True)
```

Your final aggregated dataset should contain:

| Field | Description |
|---|---|
| rera_id | Unique project registration number |
| project_name | Raw name |
| project_clean | Normalized name |
| promoter | Builder / developer name |
| promoter_clean | Normalized |
| state | Maharashtra, Gujarat, Delhi… |
| city/district | Location |
| start_date | As per RERA |
| end_date | Completion date |
| status | Ongoing / Completed |
| project_type | Residential / Commercial |
| document_links | List of PDFs |
| pdf_text | Extracted content |
| qpr_updates | Quarterly progress reports |
| litigation | Complaints if any |
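Before the dataset is exported, it can be worth checking that every record carries the core fields from the schema table. The sketch below works on plain dictionaries so it runs without pandas. Treating these five fields as mandatory is an assumption made for the example, `missing_fields` is a hypothetical helper, and the sample records are invented.

```python
# Field names come from the schema table; which ones are mandatory is an assumption.
REQUIRED_FIELDS = ("rera_id", "project_name", "promoter", "state", "status")


def missing_fields(record: dict) -> list:
    """List required fields that are absent or blank in a record."""
    return [
        field
        for field in REQUIRED_FIELDS
        if not str(record.get(field) or "").strip()
    ]


# Invented records for illustration only
records = [
    {"rera_id": "X-001", "project_name": "Sample Heights",
     "promoter": "Sample Developers", "state": "Maharashtra", "status": "Ongoing"},
    {"rera_id": "X-002", "project_name": "",
     "promoter": "Another Builder", "state": "Gujarat"},
]

for rec in records:
    problems = missing_fields(rec)
    if problems:
        print(f"{rec['rera_id']}: missing {problems}")
```

Records that fail the check can be routed to a review file instead of being silently merged into the final dataset.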

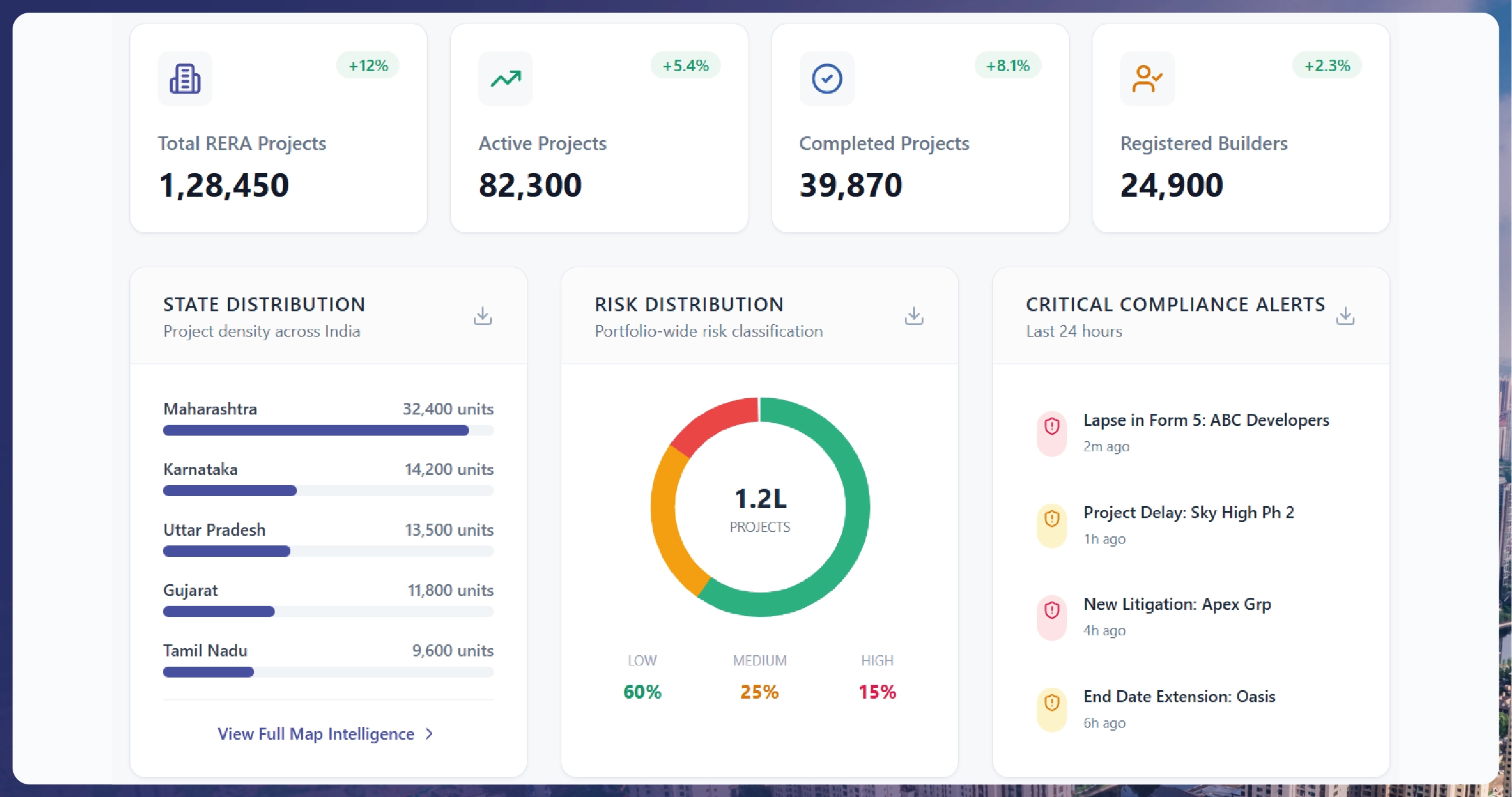
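The `project_clean` and `promoter_clean` fields exist so that near-identical names can be compared across states. As a rough illustration of that comparison, the sketch below scores name pairs with the standard library's `difflib.SequenceMatcher`, used here as a stand-in for fuzzywuzzy's `fuzz.ratio` (both give a 0 to 100 similarity score, but they are not guaranteed to agree on every pair). The sample names, the helper names, and the threshold of 85 are made up for the example.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similarity(a: str, b: str) -> int:
    """Similarity of two strings on a 0-100 scale."""
    return round(SequenceMatcher(None, a, b).ratio() * 100)


def find_near_duplicates(names, threshold=85):
    """Return (name_a, name_b, score) for every pair scoring at or above threshold."""
    matches = []
    for a, b in combinations(names, 2):
        score = similarity(a, b)
        if score >= threshold:
            matches.append((a, b, score))
    return matches


# Invented, already-normalized promoter names
promoters = ["godrej properties", "godrej property", "prestige estates"]

for a, b, score in find_near_duplicates(promoters):
    print(f"{a!r} ~ {b!r}: {score}")
```

Comparing every pair is quadratic in the number of names, so on a full multi-state dataset it may be more practical to compare only within a state or within groups that share a first word.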

```python
final_df.to_csv("PAN_India_RERA_Data.csv", index=False)
```

The exported file can then feed dashboards.

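Expect a few obstacles when running this at scale. Verification challenges such as CAPTCHAs require AI-based solving or manual intervention. Every state portal is different. Documents come in different formats, and some are scanned. Some states have outdated servers. Frequent scraping may get blocked.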
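Two of the issues above, slow or outdated servers and blocking under frequent requests, are commonly softened with retries and pauses between attempts. The sketch below shows a generic retry wrapper with exponential backoff using only the standard library. `with_retries`, its parameters, and the delay values are illustrative assumptions, and a wrapper like this does not by itself guarantee that a portal will not block the scraper.

```python
import time


def with_retries(func, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call func(); on an exception, wait and retry with growing delays.

    The wait before retry n (1-based) is base_delay * 2 ** (n - 1).
    The last exception is re-raised once all attempts are used up.
    """
    for attempt in range(1, attempts + 1):
        try:
            return func()
        except Exception:
            if attempt == attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))


# Usage: a stand-in for a flaky request that fails twice, then succeeds.
calls = {"count": 0}


def flaky_fetch():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("simulated timeout")
    return "<html>ok</html>"


# Passing delays.append as `sleep` records the waits instead of actually pausing.
delays = []
result = with_retries(flaky_fetch, attempts=4, base_delay=0.5, sleep=delays.append)
print(result, delays)
```

In a scraper, `func` could be a small lambda wrapping `requests.get(url, timeout=30)`, with the default `time.sleep` left in place so the pauses are real.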

DIY works for:

But use Actowiz Solutions for:

We support all 28 states & UTs with RERA coverage.

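This tutorial taught you how to scrape state RERA listings with Selenium, pull project details and linked PDFs, normalize project and promoter names, merge state-level datasets, and export the combined data to CSV.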

RERA data is one of the richest sources of real estate truth — and with the right pipelines, it becomes a powerful engine for analytics, compliance, and investment intelligence.


Our web scraping expertise is relied on by 4,000+ global enterprises including Zomato, Tata Consumer, Subway, and Expedia — helping them turn web data into growth.

Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

Extract real-time travel mode data via APIs to power smarter AI travel apps with live route updates, transit insights, and seamless trip planning.

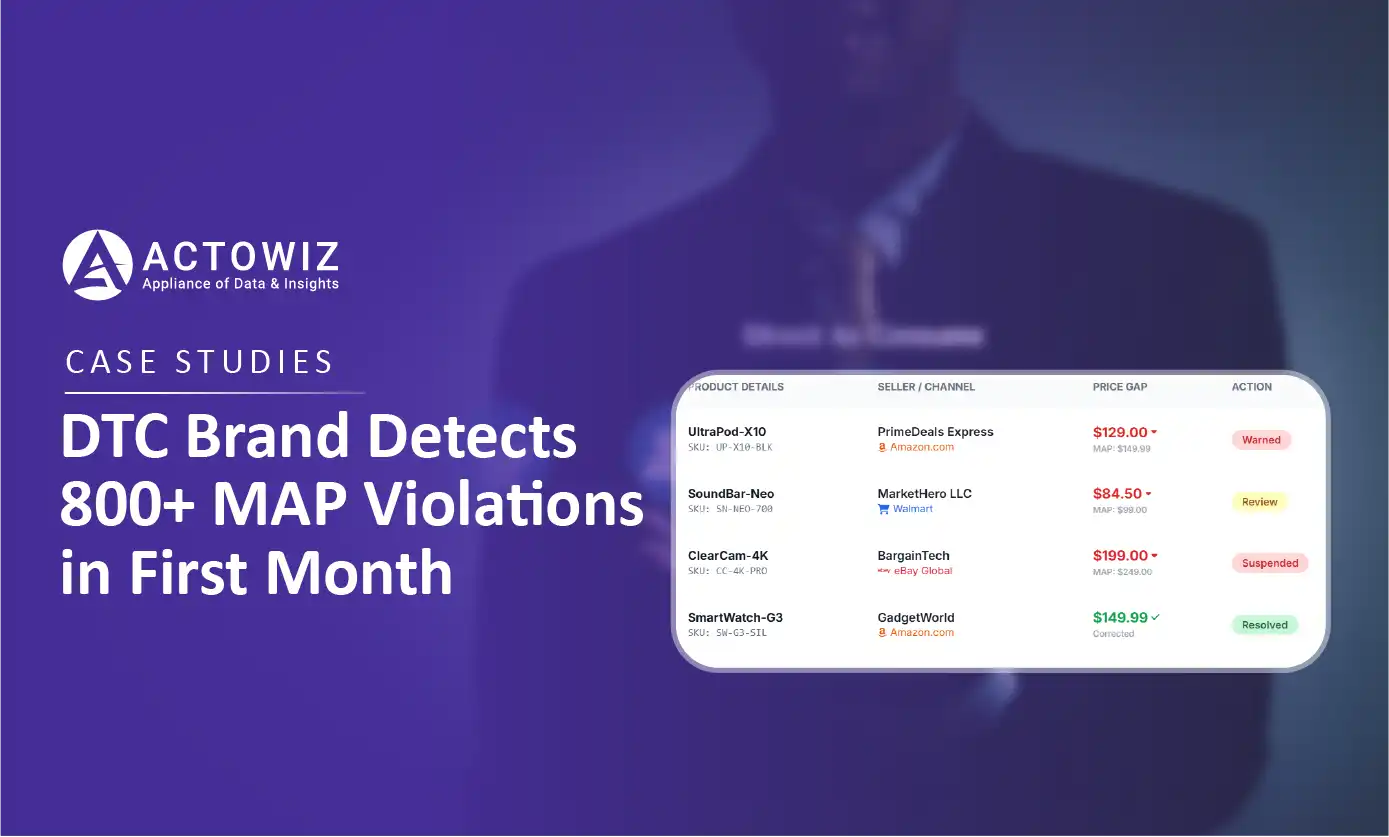

How a $50M+ consumer electronics brand used Actowiz MAP monitoring to detect 800+ violations in 30 days, achieving 92% resolution rate and improving retailer satisfaction by 40%.

Track UK Grocery Products Daily Using Automated Data Scraping across Morrisons, Asda, Tesco, Sainsbury’s, Iceland, Co-op, Waitrose, and Ocado for insights.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.