Discover how a quantitative hedge fund generated 240 bps of alpha using real-time retail inventory data. Learn how alternative data and analytics drive smarter investment strategies and market outperformance.

Financial Services / Alternative Data

Mid-sized quantitative hedge fund, $2.4B AUM (name withheld under NDA)

18 months (ongoing)

240 basis points of annualized alpha attributed to data-driven signal

A US-based quantitative hedge fund specializing in consumer discretionary and retail equities partnered with Actowiz Solutions to build a real-time retail inventory data pipeline across 17 major US retailers and e-commerce platforms. Within six months of deployment, the fund was using scraped inventory velocity, in-stock rates, and pricing signals as a core input to its retail equity strategy. Over the following 12 months, the signal contributed an estimated 240 basis points of annualized alpha, with a Sharpe ratio improvement of 0.37 on the retail sleeve of the portfolio.

This case study documents how the engagement was structured, what technical challenges were solved, and how data is operationalized inside a quantitative investment process.

The client is a quantitative hedge fund managing $2.4 billion across multi-strategy portfolios. Its retail and consumer discretionary sleeve represents approximately 18% of AUM. Historically, this sleeve was driven by fundamental research combined with traditional alt-data inputs: credit card panel data, foot traffic metrics, and satellite imagery.

By early 2024, the investment team had identified a critical gap: their credit card and foot traffic data lagged real-world retailer performance by 2-4 weeks, and increasingly failed to capture the online channel that now represents 30-50% of most retailers’ revenue.

They needed a dataset that:

Hedge fund data requirements differ from typical enterprise scraping in three critical ways:

For backtesting and signal validation, the fund needs to know exactly what data was visible on each historical date — no retroactive corrections, no “refreshed” fields that silently change. This requires immutable historical archives with timestamped snapshots.

Using scraped data for securities trading requires careful handling: documented data sources, compliance with publisher terms, and avoidance of any data that could be construed as material non-public information (MNPI).

A scraping pipeline that fails during earnings season is worse than no data at all: it leaves the fund with a blind spot it cannot explain to investors. The fund needed enterprise SLAs, redundancy, and monitoring.

Before engaging Actowiz, the fund had tested two DIY approaches (in-house engineering and a generic scraping vendor). Both had failed:

Actowiz designed a custom retail inventory data platform with four core components:

Continuous monitoring of in-stock rate, product availability, and stock signals across 17 retailers:

The scraper tracks:

One of the most complex parts of the engagement: mapping 2.8 million SKUs to their parent brands, and brands to publicly traded tickers (including Procter & Gamble’s 65+ brand portfolio, Unilever’s complex brand tree, PVH’s multi-brand holdings, etc.).

This mapping was built through a combination of:

Every scraped data point is written to an append-only data warehouse with full timestamp integrity. No retroactive updates. No “latest” fields. Historical state is queryable exactly as it was seen at any prior moment.

This enables the fund’s research team to backtest signals with confidence that results aren’t contaminated by lookahead bias — a critical differentiator.
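The point-in-time behavior described above can be illustrated with a minimal sketch. It assumes an in-memory list standing in for the warehouse; the names `Observation`, `AppendOnlyArchive`, and `as_of` are hypothetical and are not taken from the actual platform.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Observation:
    """One scraped snapshot of a SKU (hypothetical schema)."""
    sku: str
    in_stock: bool
    price: float
    observed_at: datetime  # when the scraper saw this state


class AppendOnlyArchive:
    """In-memory stand-in for an append-only warehouse table."""

    def __init__(self) -> None:
        self._rows: list = []

    def append(self, obs: Observation) -> None:
        # Rows are only ever added; nothing is updated or deleted.
        self._rows.append(obs)

    def as_of(self, sku: str, when: datetime) -> Optional[Observation]:
        """Return the latest observation of `sku` that was visible at `when`."""
        visible = [r for r in self._rows if r.sku == sku and r.observed_at <= when]
        return max(visible, key=lambda r: r.observed_at, default=None)


archive = AppendOnlyArchive()
t1 = datetime(2024, 3, 1, tzinfo=timezone.utc)
t2 = datetime(2024, 3, 8, tzinfo=timezone.utc)
archive.append(Observation("SKU-1", True, 19.99, t1))
archive.append(Observation("SKU-1", False, 19.99, t2))

# A backtest dated 2024-03-05 sees only the first snapshot, never the later one.
snapshot = archive.as_of("SKU-1", datetime(2024, 3, 5, tzinfo=timezone.utc))
```

Because a query filters on `observed_at` instead of reading a mutable "latest" field, a backtest run today returns the same answer it would have returned on the historical date.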

99.95% uptime SLA with redundant scraping infrastructure across 3 geographic regions

Burst capacity automatically scaling 5x during peak retail events

Anomaly detection with real-time alerts on data quality deviations

Provenance documentation — every data point traceable to source URL, scrape timestamp, extraction version, and validation checks
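As a rough illustration of the provenance item above, the sketch below attaches the four listed fields (source URL, scrape timestamp, extraction version, validation checks) to each data point. The class and function names are hypothetical, and the payload hash is an added assumption for tamper-evidence, not something the case study describes.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProvenanceRecord:
    """Audit metadata stored alongside one scraped data point (hypothetical)."""
    source_url: str
    scraped_at: datetime
    extractor_version: str
    validation_checks: tuple  # names of the checks that passed
    payload_sha256: str       # assumption: hash of the raw page for tamper-evidence


def make_provenance(source_url, raw_payload, extractor_version, checks):
    """Build a provenance record for a raw payload given as bytes."""
    return ProvenanceRecord(
        source_url=source_url,
        scraped_at=datetime.now(timezone.utc),
        extractor_version=extractor_version,
        validation_checks=tuple(checks),
        payload_sha256=hashlib.sha256(raw_payload).hexdigest(),
    )


record = make_provenance(
    "https://example.com/product/123",  # placeholder URL
    b"<html>placeholder page</html>",
    "1.4.2",                            # placeholder extractor version
    ["schema", "price_range"],
)
```

Freezing the dataclass mirrors the append-only principle: once written, a provenance record cannot be altered in place.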

Month 1-2: Infrastructure design, retailer prioritization, and initial scraper development

Month 3: Production pipeline live for first 8 retailers; historical backfill initiated

Month 4-5: Expansion to remaining 9 retailers; SKU-to-brand mapping completed

Month 6: Full production with 24-month historical backfill; fund begins live signal integration

Month 6-18: Continuous refinement, new data sources added quarterly, signal tuning alongside fund research team

The fund ran rigorous backtests and forward tests on the signal:

Consider a hypothetical earnings forecast for a major home improvement retailer:

Week 1 (3 weeks before earnings): Inventory data shows a 9% QoQ decline in in-stock rates on seasonal outdoor goods across 2,400 tracked SKUs, while competitors show flat trends.

Week 2: The fund’s signal model integrates this with foot traffic data, credit card panels, and commodity input trends. It identifies a potential sell-through acceleration not yet priced in.

Week 3: The fund builds a modest long position ahead of earnings.

Week 4 (earnings day): Company reports Same Store Sales beat of +3.8% vs. +1.2% consensus. Stock moves 6% on the print.

The inventory signal didn’t guarantee the outcome — but consistently improved probabilistic accuracy across dozens of such decisions per quarter, compounding into the alpha attribution.
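The Week 1 observation in the example above comes down to simple arithmetic: an in-stock rate per period, its quarter-over-quarter change, and that change measured against peers. The sketch below is one plausible way to compute it. The function names and the rate levels (0.90 and 0.819) are hypothetical, chosen only to reproduce a 9% decline.

```python
def in_stock_rate(flags):
    """Share of tracked SKUs that are in stock; `flags` is a list of booleans."""
    flags = list(flags)
    if not flags:
        raise ValueError("no SKUs observed")
    return sum(flags) / len(flags)


def relative_change(previous, current):
    """Quarter-over-quarter change as a fraction of the previous value."""
    return (current - previous) / previous


def peer_adjusted_change(previous, current, peer_changes):
    """Target retailer's change minus the average change across peers."""
    peer_average = sum(peer_changes) / len(peer_changes)
    return relative_change(previous, current) - peer_average


# Three of four tracked SKUs in stock.
sample_rate = in_stock_rate([True, True, False, True])

# Hypothetical levels: in-stock rate falls from 0.90 to 0.819, roughly a 9% decline.
change = relative_change(0.90, 0.819)

# Peers are flat on average, so the peer-adjusted change is about the same.
adjusted = peer_adjusted_change(0.90, 0.819, [0.0, 0.01, -0.01])
```

Subtracting the peer average is what separates a retailer-specific move from a category-wide one. A decline shared by every competitor would net out to roughly zero.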

The fund’s previous attempts (DIY and generic vendor) underdelivered not because the data was unavailable but because retail-scale scraping is a specialized engineering discipline. Time to first alpha was compressed from 18+ months of DIY attempts to 6 months with a specialized partner.

Any hedge fund building on scraped data needs append-only, immutable historical archives. Vendors that “refresh” historical data silently destroy backtest validity.

SKU-to-brand-to-ticker mapping was 40% of the engagement effort but 80% of the value. Raw inventory data without clean linkage to tradeable tickers is an academic curiosity, not an investable signal.
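To make the linkage concrete, here is a minimal sketch of a two-step lookup that rolls SKU-level observations up to tickers. The SKU identifiers, table names, and function names are hypothetical. The tiny lookup tables stand in for curated, versioned mappings and say nothing about how the real mapping was built.

```python
from collections import defaultdict
from typing import Optional

# Hypothetical lookup tables; a real mapping would be curated and versioned.
SKU_TO_BRAND = {
    "SKU-001": "Tide",
    "SKU-002": "Dove",
    "SKU-003": "Calvin Klein",
    "SKU-004": "Acme House Brand",  # private label with no listed parent
}
BRAND_TO_TICKER = {"Tide": "PG", "Dove": "UL", "Calvin Klein": "PVH"}


def resolve_ticker(sku: str) -> Optional[str]:
    """Map a SKU to its parent company's ticker, or None if unmapped."""
    brand = SKU_TO_BRAND.get(sku)
    if brand is None:
        return None
    return BRAND_TO_TICKER.get(brand)


def in_stock_rate_by_ticker(observations):
    """Aggregate (sku, in_stock) pairs into an in-stock rate per ticker."""
    counts = defaultdict(lambda: [0, 0])  # ticker -> [in-stock count, total]
    for sku, in_stock in observations:
        ticker = resolve_ticker(sku)
        if ticker is None:
            continue  # unmapped SKUs cannot contribute to a tradeable signal
        counts[ticker][0] += int(in_stock)
        counts[ticker][1] += 1
    return {ticker: hits / total for ticker, (hits, total) in counts.items()}


rates = in_stock_rate_by_ticker([
    ("SKU-001", True),
    ("SKU-001", False),
    ("SKU-002", True),
    ("SKU-004", True),   # private label: dropped
    ("SKU-999", False),  # unknown SKU: dropped
])
```

In this sketch, SKUs that fail either lookup are dropped silently. In practice the unmapped share would likely need to be tracked, since a large unmapped fraction weakens the ticker-level signal.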

Retail earnings, Black Friday, Prime Day, Back-to-School — these peak events generate the signal. Infrastructure must scale for them even if baseline load is 10% of peak.

Actowiz Solutions powers enterprise-grade web data extraction for financial services firms, Fortune 500 brands, and market intelligence platforms. Our alternative data infrastructure serves quantitative hedge funds, private equity firms, and credit analytics platforms across the US, UK, UAE, and India.

Our financial services specializations:

- Retail inventory and pricing alt-data
- E-commerce velocity signals
- Consumer discretionary brand intelligence
- Real estate listing velocity
- Travel and hospitality demand signals
- Automotive inventory and pricing

“The inventory signal gave us a four-week lead on retail earnings surprises. That lead time is worth everything in this market.”

— Head of Alternative Data, Portfolio Management Team

Our web scraping expertise is relied on by 4,000+ global enterprises including Zomato, Tata Consumer, Subway, and Expedia — helping them turn web data into growth.

Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

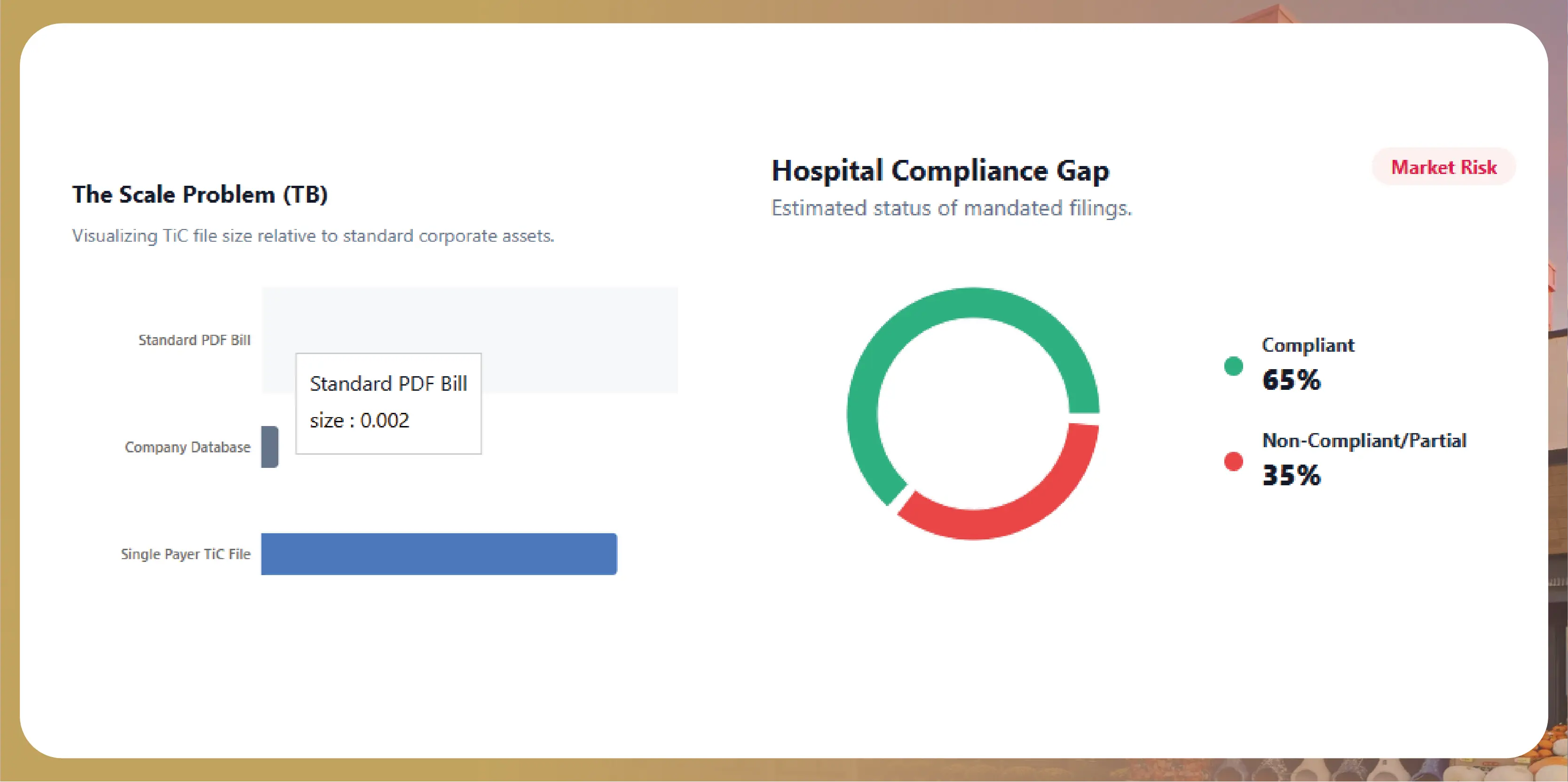

How healthcare payers, startups, and analysts scrape CMS-mandated hospital price transparency files at scale. Complete 2026 guide to MRF extraction and use cases.

Discover how a Dubai cloud kitchen group saved $2.1M annually and scaled to 80+ virtual brands using Talabat and Careem food intelligence. Learn how data-driven insights optimize menus, pricing, and growth.

Track UK Grocery Products Daily Using Automated Data Scraping across Morrisons, Asda, Tesco, Sainsbury’s, Iceland, Co-op, Waitrose, and Ocado for insights.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.