If you work in real estate analytics, you already know how important price monitoring has become.

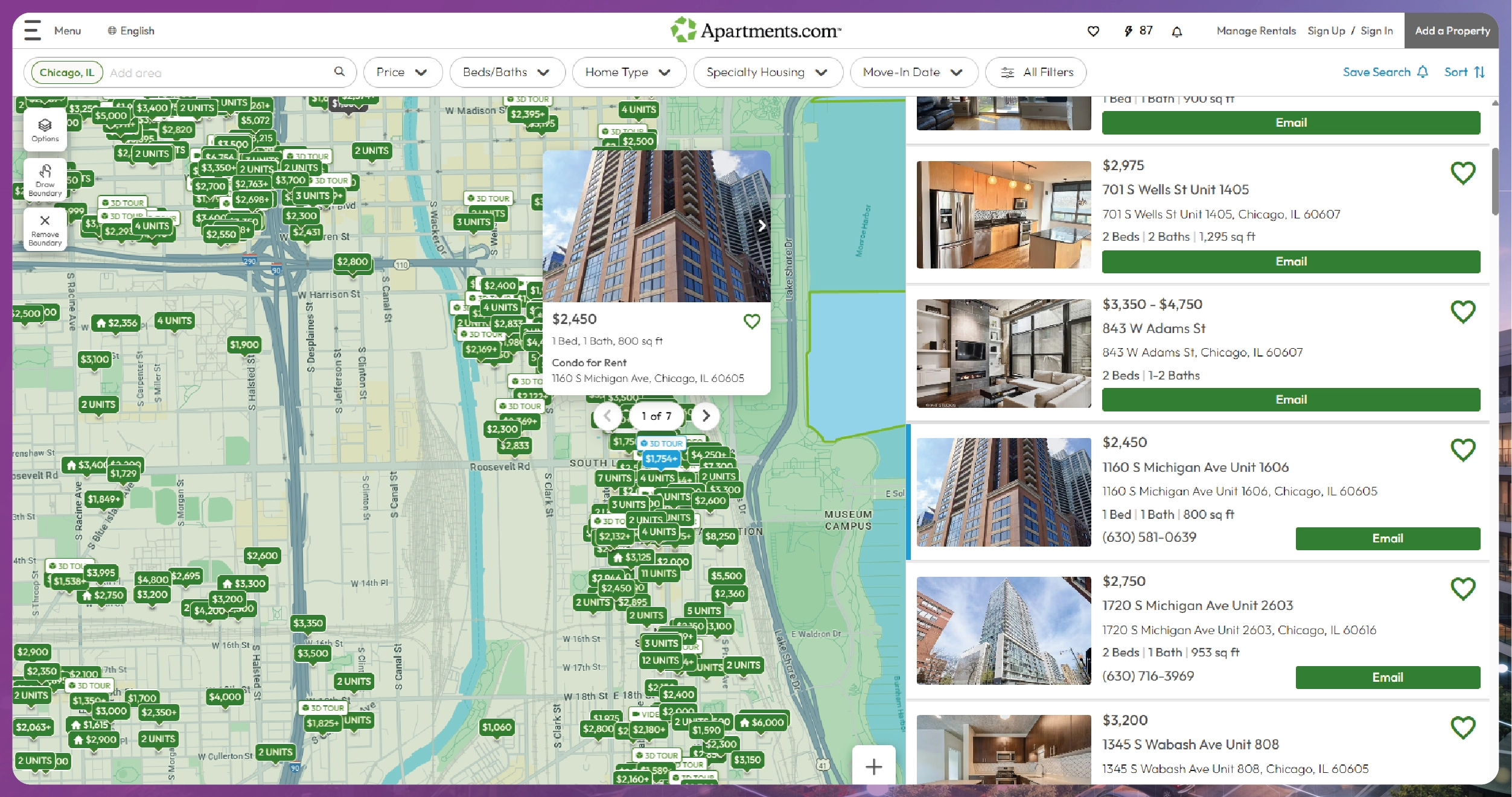

Platforms like Redfin (USA) and Apartments.com provide massive datasets of property listings, including prices, addresses, bed and bath counts, and square footage.

This tutorial explains how to scrape real estate listings from Redfin and Apartments.com, clean the data, normalize attributes, and build a real estate price monitoring system using Python.

This is the same technical framework Actowiz Solutions deploys for large property intelligence clients across the USA.

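Real estate websites use a mix of JavaScript-rendered pages, listing cards that load as you scroll, and bot protection. That is why this tutorial uses Selenium for the search pages and requests with BeautifulSoup for individual listing pages.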

Install the libraries used in this tutorial:

```bash
pip install selenium
pip install pandas
pip install requests
pip install beautifulsoup4
pip install lxml
pip install undetected-chromedriver
```

We’ll use undetected_chromedriver because Redfin sometimes blocks default Selenium.

Each Redfin listing card shows the price, address, number of beds and baths, square footage, and a link to the listing.

Let’s start scraping.

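```python
import undetected_chromedriver as uc
from selenium.common.exceptions import NoSuchElementException
from selenium.webdriver.common.by import By
from selenium.webdriver.common.keys import Keys
from time import sleep

# undetected_chromedriver starts a Chrome session that Redfin is less likely to block
browser = uc.Chrome()
browser.get("https://www.redfin.com/city/30749/CA/San-Francisco")
sleep(5)  # let the first batch of results render

# Press END on the page body so lazily loaded listing cards render
for _ in range(10):
    browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
    sleep(2)

cards = browser.find_elements(By.XPATH, '//div[contains(@class,"HomeCard")]')

redfin_records = []
for card in cards:
    # Every field is optional: a missing element yields "" instead of an error
    try:
        price = card.find_element(By.CLASS_NAME, "homecardV2Price").text
    except NoSuchElementException:
        price = ""

    try:
        address = card.find_element(By.CLASS_NAME, "homeAddressV2").text
    except NoSuchElementException:
        address = ""

    try:
        beds = card.find_element(By.XPATH, './/div[contains(text(),"Beds")]').text
    except NoSuchElementException:
        beds = ""

    try:
        baths = card.find_element(By.XPATH, './/div[contains(text(),"Baths")]').text
    except NoSuchElementException:
        baths = ""

    try:
        sqft = card.find_element(By.XPATH, './/div[contains(text(),"Sq Ft")]').text
    except NoSuchElementException:
        sqft = ""

    try:
        url = card.find_element(By.TAG_NAME, "a").get_attribute("href")
    except NoSuchElementException:
        url = ""

    redfin_records.append({
        "platform": "Redfin",
        "price": price,
        "address": address,
        "beds": beds,
        "baths": baths,
        "sqft": sqft,
        "url": url,
    })
```

Each Apartments.com listing card includes the property name, address, pricing, bed count, and a link to the property page.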

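```python
from selenium.common.exceptions import NoSuchElementException
from selenium.webdriver.common.keys import Keys

browser.get("https://www.apartments.com/san-francisco-ca/")
sleep(5)

# Scroll so more listing placards load
for _ in range(12):
    browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
    sleep(2)

apt_records = []
listings = browser.find_elements(By.XPATH, '//article[contains(@class,"placard")]')
for item in listings:
    try:
        name = item.find_element(By.CLASS_NAME, "property-title").text
    except NoSuchElementException:
        name = ""

    try:
        address = item.find_element(By.CLASS_NAME, "property-address").text
    except NoSuchElementException:
        address = ""

    try:
        price = item.find_element(By.CLASS_NAME, "property-pricing").text
    except NoSuchElementException:
        price = ""

    try:
        beds = item.find_element(By.CLASS_NAME, "property-beds").text
    except NoSuchElementException:
        beds = ""

    try:
        url = item.find_element(By.TAG_NAME, "a").get_attribute("href")
    except NoSuchElementException:
        url = ""

    apt_records.append({
        "platform": "Apartments.com",
        "name": name,
        "address": address,
        "price": price,
        "beds": beds,
        "url": url,
    })

# Both sites are scraped, so close the browser
browser.quit()
```

Combine both sources into one DataFrame:

```python
import pandas as pd

df = pd.DataFrame(redfin_records + apt_records)
df.head()
```

Prices arrive as display strings with dollar signs, thousands separators, and sometimes K or M suffixes, so we need a clean numeric price.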

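```python
import re

def clean_price(p):
    """Turn a display price such as "$1.2M", "$850K" or "$3,450+" into a number."""
    if not isinstance(p, str) or not p.strip():
        return None
    p = p.replace(",", "")
    # Take the first number, plus a K or M suffix if one follows it (spaces allowed)
    match = re.search(r"(\d+(?:\.\d+)?)\s*([KM])?\b", p)
    if not match:
        return None
    multiplier = {"K": 1_000, "M": 1_000_000}.get(match.group(2), 1)
    return float(match.group(1)) * multiplier
```

Apply it, then extract the numeric part of the beds, baths, and square-footage text:

```python
df["price_clean"] = df["price"].apply(clean_price)

def extract_number(val):
    """Return the first number in text like "3 Beds", "2.5 Baths" or "1,200 Sq Ft"."""
    # Apartments.com rows have no baths/sqft, so those cells hold NaN instead of text
    if not isinstance(val, str):
        return None
    match = re.search(r"\d+(?:\.\d+)?", val.replace(",", ""))
    return float(match.group()) if match else None

df["beds_num"] = df["beds"].apply(extract_number)
df["baths_num"] = df["baths"].apply(extract_number)
df["sqft_num"] = df["sqft"].apply(extract_number)
```

Next, open each Apartments.com listing URL and parse the unit details: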

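```python
import requests
from bs4 import BeautifulSoup

# Many sites reject the default requests User-Agent, so send a browser-like one
HEADERS = {"User-Agent": "Mozilla/5.0"}

def scrape_unit_details(url):
    """Fetch one Apartments.com listing page and return its amenities and availability."""
    try:
        response = requests.get(url, headers=HEADERS, timeout=10)
        response.raise_for_status()
    except requests.RequestException:
        return {}  # network error or HTTP error status: skip this listing

    soup = BeautifulSoup(response.text, "lxml")
    amenities = [li.text.strip() for li in soup.select(".amenities .specList li")]

    availability = soup.select_one(".availabilityInfo")
    availability = availability.text.strip() if availability else None

    return {"amenities": amenities, "availability": availability}
```

Attach the details to each Apartments.com record. Because df was built before these fields existed, merge them back in by URL:

```python
for row in apt_records:
    if row["url"]:
        row.update(scrape_unit_details(row["url"]))
        sleep(2)  # pause between requests to avoid hammering the site

# df was created before the details were fetched, so merge the new fields in by URL
details = pd.DataFrame(
    [row for row in apt_records if row["url"]],
    columns=["url", "amenities", "availability"],
)
df = df.merge(details.drop_duplicates("url"), on="url", how="left")
```

The final dataset has these fields: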

| Column | Meaning |
|---|---|
| platform | Redfin or Apartments.com |
| address | Property location |
| price_clean | Numeric price (USD) |
| price_type | Rent or sale |
| beds_num | Number of bedrooms |
| baths_num | Number of bathrooms |
| sqft_num | Living area (sq ft) |
| availability | Unit availability (Apartments.com) |
| amenities | List of amenities (Apartments.com) |
| url | Source link |
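The table lists a price_type column, but none of the scraping or cleaning steps fill it. Below is a minimal sketch for deriving it, assuming the Redfin city search used here returns for-sale homes and Apartments.com returns rentals. The helper infer_price_type and its "/mo" rental check are hypothetical choices, not something either site documents:

```python
# Hypothetical mapping. Assumption: Redfin results here are for-sale homes and
# Apartments.com results are rentals; adjust it if you scrape Redfin rentals.
PRICE_TYPE_BY_PLATFORM = {"Redfin": "sale", "Apartments.com": "rent"}


def infer_price_type(platform, price_text=""):
    """Return "rent" or "sale" for a listing, or None if it cannot be inferred."""
    # Assumption: a "/mo" marker in the raw price text signals a monthly rent
    if isinstance(price_text, str) and "/mo" in price_text.lower():
        return "rent"
    # Otherwise fall back to the platform-level default (None for unknown platforms)
    return PRICE_TYPE_BY_PLATFORM.get(platform)
```

Assuming the combined df from earlier, you could add the column with `df["price_type"] = [infer_price_type(p, t) for p, t in zip(df["platform"], df["price"])]`.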

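```python
df.to_csv("real_estate_price_monitoring.csv", index=False)
```

Use a cron job or another scheduler to run the script daily. This crontab entry runs it every day at 6:00 AM (in practice, give absolute paths to both python and the script, since cron runs with a minimal environment):

```text
0 6 * * * python scrape_real_estate.py
```

Each run gives you a fresh snapshot of listing prices, just like Actowiz Solutions’ pipeline.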
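Note that to_csv writes to the same file on every run, so the previous day's prices are overwritten. One way to turn the daily run into actual monitoring is to save a dated snapshot and compare it with an earlier one by listing URL. This is a minimal standard-library sketch. The function names, the snapshots/ folder layout, and the use of url as the listing key are all assumptions:

```python
import csv
import math
from datetime import date
from pathlib import Path


def save_snapshot(rows, directory="snapshots", day=None):
    """Write each row's url and price_clean to <directory>/<YYYY-MM-DD>.csv."""
    day = day or date.today()
    path = Path(directory) / f"{day.isoformat()}.csv"
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("w", newline="") as f:
        # extrasaction="ignore" drops every other column (address, beds, ...)
        writer = csv.DictWriter(f, fieldnames=["url", "price_clean"], extrasaction="ignore")
        writer.writeheader()
        writer.writerows(rows)
    return path


def load_snapshot(path):
    """Read a snapshot into {url: price}, skipping rows with no usable price."""
    prices = {}
    with open(path, newline="") as f:
        for row in csv.DictReader(f):
            try:
                price = float(row["price_clean"])
            except ValueError:
                continue  # empty price cell
            if row["url"] and math.isfinite(price):  # skips NaN written by pandas
                prices[row["url"]] = price
    return prices


def price_changes(old, new):
    """List listings whose price differs between two {url: price} snapshots."""
    changes = []
    for url, new_price in new.items():
        old_price = old.get(url)
        if old_price is not None and old_price != new_price:
            pct = (new_price - old_price) / old_price * 100 if old_price else None
            changes.append({"url": url, "old": old_price, "new": new_price, "pct_change": pct})
    return changes
```

After each run you could call `save_snapshot(df.to_dict("records"))`, then compare two days with `price_changes(load_snapshot(older_path), load_snapshot(newer_path))`. Keying on the URL is a design choice: it is the one field both platforms always link to, while addresses can be formatted differently from day to day.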

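A few challenges to plan for:

Some listing data loads through background API requests, so scraping it sometimes requires intercepting those requests.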

Listing pages are heavy, so scroll carefully and give new cards time to load.
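The scrapers above press END a fixed 10 or 12 times, which can stop before all cards have loaded or keep scrolling long after they have. A common alternative is to scroll until the number of loaded cards stops growing. This is a minimal sketch; scroll_until_stable is a hypothetical helper that takes two callables so it stays independent of Selenium:

```python
import time


def scroll_until_stable(scroll, count_cards, max_rounds=30, patience=2, pause=2.0):
    """Scroll until count_cards() stops growing for `patience` rounds in a row.

    scroll: callable that scrolls the page once (e.g. sends Keys.END to <body>).
    count_cards: callable returning how many listing cards are currently loaded.
    max_rounds caps the total number of scrolls. Returns the final card count.
    """
    last_count = count_cards()
    unchanged = 0
    for _ in range(max_rounds):
        scroll()
        time.sleep(pause)  # give lazily loaded cards time to render
        current = count_cards()
        if current > last_count:
            last_count, unchanged = current, 0  # new cards appeared: reset the counter
        else:
            unchanged += 1
            if unchanged >= patience:
                break  # no growth for `patience` consecutive scrolls
    return last_count
```

With the Redfin page open, you could use it in place of the fixed loop: `scroll_until_stable(lambda: browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END), lambda: len(browser.find_elements(By.XPATH, '//div[contains(@class,"HomeCard")]')))`. Requiring a couple of unchanged rounds (patience) tolerates one slow network response before giving up.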

Frequent scraping can get you blocked, so use rotating proxies.
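One simple way to rotate proxies with requests is to cycle through a pool and pass a different proxies mapping on each call. This is a minimal sketch: ProxyRotator is a hypothetical helper (not part of requests), and the proxy URLs are placeholders for your provider's endpoints:

```python
import itertools


class ProxyRotator:
    """Cycle through a pool of proxy URLs, handing out one per request."""

    def __init__(self, proxy_urls):
        if not proxy_urls:
            raise ValueError("need at least one proxy URL")
        self._pool = itertools.cycle(proxy_urls)

    def next_proxies(self):
        """Return the next proxy as the {"http": ..., "https": ...} mapping requests accepts."""
        proxy = next(self._pool)
        return {"http": proxy, "https": proxy}


# Placeholder addresses: replace them with real proxy endpoints
rotator = ProxyRotator([
    "http://user:pass@proxy1.example.com:8000",
    "http://user:pass@proxy2.example.com:8000",
])
```

Each call then goes out through the next proxy in the pool, for example `requests.get(url, proxies=rotator.next_proxies(), timeout=10)`. Round-robin is the simplest policy; dropping proxies that keep failing is a natural next step.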

Amenities vary by listing.
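Because amenity lists differ from listing to listing, comparing properties is easier with a fixed set of yes/no flags. This is a minimal sketch; the flag names and the keyword map are illustrative assumptions, not categories taken from either site:

```python
# Illustrative keyword map: canonical flag -> lowercase substrings that indicate it
AMENITY_KEYWORDS = {
    "has_parking": ("parking", "garage"),
    "has_laundry": ("washer", "laundry"),
    "has_gym": ("fitness", "gym"),
    "pets_allowed": ("pet friendly", "dogs allowed", "cats allowed"),
}


def amenity_flags(amenities, keywords=AMENITY_KEYWORDS):
    """Map a free-form amenity list to a dict of boolean flags."""
    # Rows without scraped details (e.g. Redfin) may hold NaN instead of a list
    text = " | ".join(a.lower() for a in amenities) if isinstance(amenities, list) else ""
    return {flag: any(term in text for term in terms) for flag, terms in keywords.items()}
```

Assuming df has the merged amenities column, `pd.DataFrame(df["amenities"].apply(amenity_flags).tolist(), index=df.index)` gives one boolean column per flag that you can join onto df.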

Some cities use multi-level pagination.
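For results split across numbered pages, one approach is to walk page numbers until a page returns nothing new. This is a minimal sketch: fetch_page is any function you supply (for example, one that loads a page in Selenium and returns its listing URLs), and the "/2/" page URL pattern in page_url is an assumption to verify against the live site:

```python
def crawl_pages(fetch_page, max_pages=50):
    """Call fetch_page(1), fetch_page(2), ... until a page yields no new items.

    Items must be hashable (listing URLs work well). max_pages guards against
    endless loops. Returns all new items in the order they were found.
    """
    items, seen = [], set()
    for page in range(1, max_pages + 1):
        new_items = [i for i in fetch_page(page) if i not in seen]
        if not new_items:
            break  # empty page, or the site repeated an earlier page
        seen.update(new_items)
        items.extend(new_items)
    return items


def page_url(base_url, page):
    """Hypothetical URL builder: page 1 is the base URL, later pages append "<n>/"."""
    # Assumption: later result pages look like .../san-francisco-ca/2/
    return base_url if page == 1 else f"{base_url.rstrip('/')}/{page}/"
```

Stopping on "no new items" rather than "empty page" matters because some sites serve the last page again when you request a page number past the end.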


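With this tutorial, you now know how to scrape listings from Redfin and Apartments.com, clean prices and property attributes, enrich Apartments.com listings with unit details, export the results to CSV, and schedule daily runs.

This becomes your foundation for real estate price monitoring.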

Actowiz Solutions can deploy a complete, production-grade real estate data intelligence stack for your team.

You can also reach us for all your mobile app scraping, data collection, web scraping, and instant data scraper service requirements!
