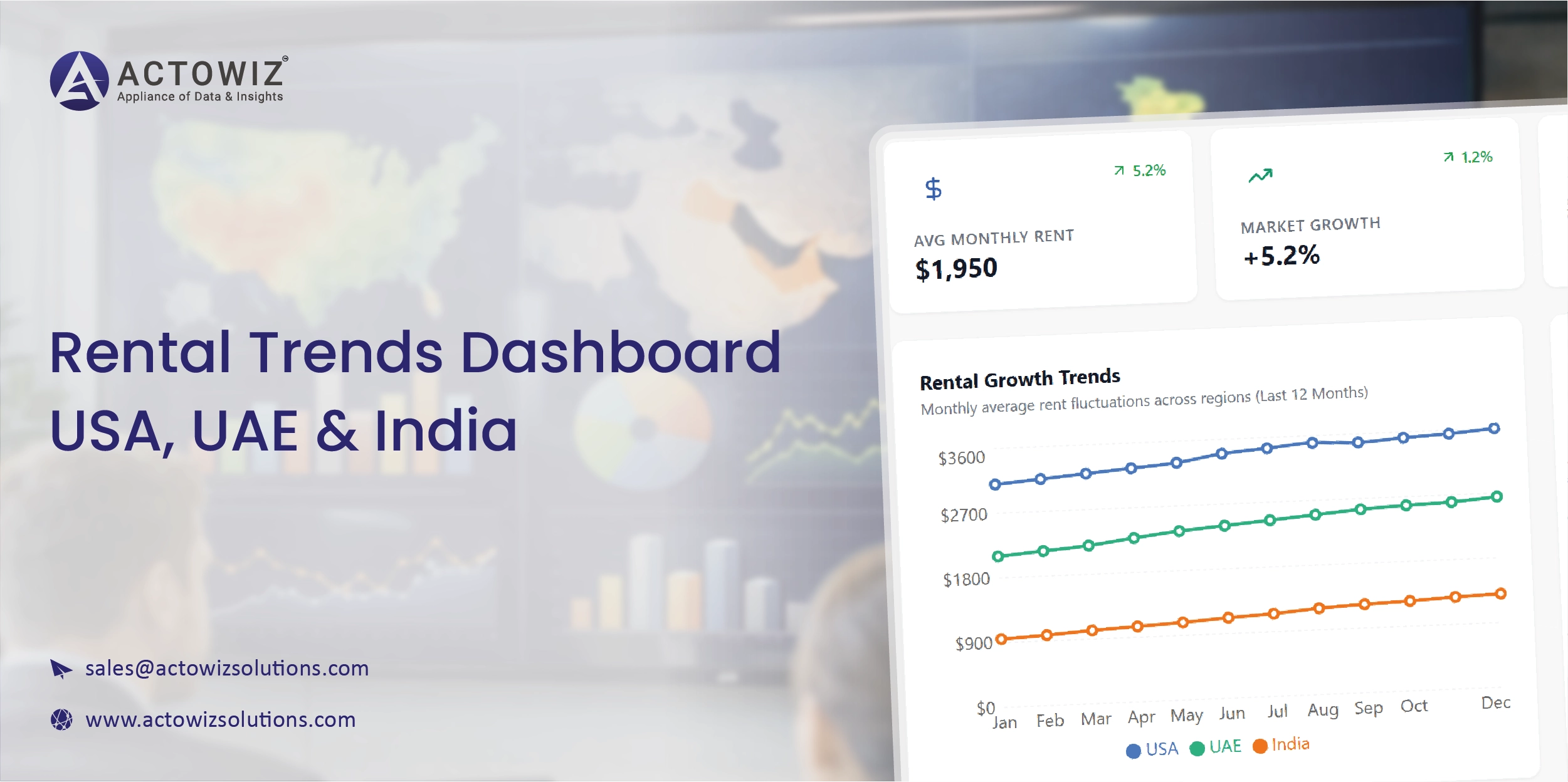

Rent prices are changing faster than ever.

Platforms like Zillow (USA), Apartments.com (USA), Bayut (UAE), Property Finder (UAE), 99acres (India), Magicbricks (India) update rental listings daily and influence:

This tutorial shows how to build a complete Rental Trends Dashboard using:

You’ll learn how to scrape rental listings from USA, UAE, and India, clean the data, extract key indicators, and build a functional dashboard dataset.

This is the same workflow Actowiz Solutions uses in large-scale rental intelligence projects.

pip install selenium
pip install beautifulsoup4
pip install requests
pip install pandas
pip install matplotlib
pip install plotly
pip install undetected-chromedriver
pip install currencyconverter

We will scrape rental listings from Zillow (USA), Bayut (UAE), and 99acres (India), and then merge them into a unified rental intelligence table.
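To make the shape of that unified table concrete, here is a minimal sketch of a single record type. The field names mirror the dictionary keys used by the three scrapers in this tutorial; the class name `RentalRecord` and the sample price are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class RentalRecord:
    """One row of the unified table (hypothetical helper, not part of the tutorial code)."""
    country: str
    platform: str
    price_raw: str
    url: str
    address: Optional[str] = None   # set by the Zillow scraper
    beds_raw: Optional[str] = None  # set by the Zillow scraper
    location: Optional[str] = None  # set by the Bayut scraper
    title: Optional[str] = None     # set by the Bayut scraper
    details: Optional[str] = None   # set by the 99acres scraper


# Illustrative values only; the price string is made up.
record = RentalRecord(
    country="UAE",
    platform="Bayut",
    price_raw="AED 85,000",
    url="https://www.bayut.com/to-rent/apartments/dubai/",
)
row = asdict(record)  # plain dict, ready to append to a list of rows
```

Fields that a platform does not provide stay `None`, which is the same effect as the missing keys that pandas fills with NaN when the three lists are merged.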

Zillow rental pages show:

Example URL:

https://www.zillow.com/homes/for_rent/San-Francisco,-CA_rb/

import undetected_chromedriver as uc
from selenium.webdriver.common.by import By
from selenium.webdriver.common.keys import Keys
from time import sleep

browser = uc.Chrome()
browser.get("https://www.zillow.com/homes/for_rent/San-Francisco,-CA_rb/")
sleep(5)

# Scroll to the bottom repeatedly so more listing cards load
for _ in range(12):
    browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
    sleep(2)

cards = browser.find_elements(By.XPATH, '//article')
usa_rentals = []

# Class names such as "list-card-addr" depend on Zillow's current markup and may need updating
for card in cards:
    # A missing element leaves the field empty instead of stopping the loop
    try:
        address = card.find_element(By.CLASS_NAME, "list-card-addr").text
    except Exception:
        address = ""
    try:
        price = card.find_element(By.CLASS_NAME, "list-card-price").text
    except Exception:
        price = ""
    try:
        beds = card.find_element(By.CLASS_NAME, "list-card-details").text
    except Exception:
        beds = ""
    try:
        url = card.find_element(By.TAG_NAME, "a").get_attribute("href")
    except Exception:
        url = ""
    usa_rentals.append({
        "country": "USA",
        "platform": "Zillow",
        "address": address,
        "price_raw": price,
        "beds_raw": beds,
        "url": url
    })

Example URL:

https://www.bayut.com/to-rent/apartments/dubai/
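Both the Zillow loop above and the Bayut loop that follows scroll a fixed number of times (12 and 10). One possible variation, sketched here under the assumption that listings keep loading as the page scrolls, is to stop as soon as the card count stops growing. The helper name `scroll_until_stable` and its parameters are hypothetical; it takes plain callables so it does not depend on Selenium itself.

```python
import time
from typing import Callable


def scroll_until_stable(
    scroll: Callable[[], None],
    count_items: Callable[[], int],
    max_rounds: int = 12,
    pause: float = 2.0,
    patience: int = 2,
) -> int:
    """Scroll until the item count has not grown for `patience` rounds in a row.

    Stops after `max_rounds` scrolls at the latest and returns the last count seen.
    """
    last = count_items()
    stalled = 0
    for _ in range(max_rounds):
        scroll()
        time.sleep(pause)  # give the page time to load more cards
        current = count_items()
        if current > last:
            last = current
            stalled = 0
        else:
            stalled += 1
            if stalled >= patience:
                break
    return last


# With the tutorial's Selenium objects, a call could look like this:
# scroll_until_stable(
#     scroll=lambda: browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END),
#     count_items=lambda: len(browser.find_elements(By.XPATH, "//article")),
# )
```

The `patience` setting guards against a single slow round being mistaken for the end of the results; whether two rounds is enough depends on the site and connection.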

browser.get("https://www.bayut.com/to-rent/apartments/dubai/")
sleep(5)

# Scroll to the bottom repeatedly so more listing cards load
for _ in range(10):
    browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
    sleep(2)

uae_rentals = []
# Hashed class names such as "ee734f1a" look auto-generated and may change between site releases
items = browser.find_elements(By.XPATH, '//article[contains(@class,"ee734f1a")]')

for item in items:
    # A missing element leaves the field empty instead of stopping the loop
    try:
        title = item.find_element(By.CLASS_NAME, "_7afabd84").text
    except Exception:
        title = ""
    try:
        price = item.find_element(By.CLASS_NAME, "_105b8a67").text
    except Exception:
        price = ""
    try:
        location = item.find_element(By.CLASS_NAME, "_162e6467").text
    except Exception:
        location = ""
    try:
        url = item.find_element(By.TAG_NAME, "a").get_attribute("href")
    except Exception:
        url = ""
    uae_rentals.append({
        "country": "UAE",
        "platform": "Bayut",
        "location": location,
        "price_raw": price,
        "title": title,
        "url": url
    })

Example link:

https://www.99acres.com/search/rent/residential-apartments/bangalore

browser.get("https://www.99acres.com/search/rent/residential-apartments/bangalore")
sleep(5)

india_rentals = []
cards = browser.find_elements(By.XPATH, '//div[contains(@class,"boxWrap")]')

for c in cards:
    # A missing element leaves the field empty instead of stopping the loop
    try:
        price = c.find_element(By.CLASS_NAME, "srpRentPrice").text
    except Exception:
        price = ""
    try:
        details = c.find_element(By.CLASS_NAME, "srpDataWrap").text
    except Exception:
        details = ""
    try:
        url = c.find_element(By.TAG_NAME, "a").get_attribute("href")
    except Exception:
        url = ""
    india_rentals.append({
        "country": "India",
        "platform": "99acres",
        "details": details,
        "price_raw": price,
        "url": url
    })

# All three sites are scraped, so the browser can be closed
browser.quit()

import pandas as pd

# Keys that a platform does not provide become NaN in the merged frame
df = pd.DataFrame(usa_rentals + uae_rentals + india_rentals)
df.head()

Remove unwanted characters:

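import re

def clean_price(val):
    # Return the first whole number in a raw price string, or None if there is none
    if not val:
        return None
    val = val.replace(",", "").replace("AED", "").replace("₹", "").replace("$", "")
    nums = re.findall(r"\d+", val)
    return int(nums[0]) if nums else None

df["price_num"] = df["price_raw"].apply(clean_price)

from currency_converter import CurrencyConverter

c = CurrencyConverter()

# The dirham is pegged to the US dollar at 3.6725 AED per USD. A fixed rate is used
# here because the ECB reference rates that CurrencyConverter loads by default
# do not include AED.
AED_PER_USD = 3.6725

def convert_to_usd(row):
    price = row["price_num"]
    # Rows without a parsed price stay empty
    if pd.isna(price):
        return None
    if row["country"] == "USA":
        return price
    if row["country"] == "UAE":
        return price / AED_PER_USD
    if row["country"] == "India":
        return c.convert(price, "INR", "USD")

df["price_usd"] = df.apply(convert_to_usd, axis=1)

USA (Zillow) beds come in text like "2 bds | 1 ba | 850 sqft".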

UAE (Bayut) includes "2 Beds • 3 Baths".

India (99acres) includes "2 BHK".
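All three formats share a "number followed by a unit" shape, which is what makes a single regular expression per field workable. The sketch below runs on the three sample strings quoted above. The helper name `parse_details` is hypothetical, and the bath and area patterns are assumptions extrapolated from those samples rather than verified against live listings.

```python
import re
from typing import Dict, Optional

# One pattern per indicator; each captures the number that precedes the unit.
PATTERNS = {
    "beds": re.compile(r"(\d+)\s*(?:beds?|bds?|bhk)\b"),
    "baths": re.compile(r"(\d+)\s*(?:baths?|ba)\b"),
    "sqft": re.compile(r"(\d[\d,]*)\s*sq\.?\s*ft"),
}


def parse_details(text: str) -> Dict[str, Optional[int]]:
    """Extract beds, baths and area from a listing detail string; missing fields are None."""
    lowered = text.lower()
    result: Dict[str, Optional[int]] = {}
    for name, pattern in PATTERNS.items():
        match = pattern.search(lowered)
        result[name] = int(match.group(1).replace(",", "")) if match else None
    return result


samples = ["2 bds | 1 ba | 850 sqft", "2 Beds • 3 Baths", "2 BHK"]
parsed = [parse_details(s) for s in samples]
```

Listings described with words such as "Studio" carry no number and would come back as `None` for beds, so they need separate handling if they matter for the dashboard.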

Let's extract:

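def extract_beds(val):
    # Match a number followed by a bedroom unit, e.g. "2 bds", "2 Beds", "2 BHK"
    match = re.search(r"(\d+)\s?(bd|bed|beds|bhk)", val.lower())
    return int(match.group(1)) if match else None

# Combine the three text columns, separated by spaces so adjacent values do not run together
beds_text = df["beds_raw"].fillna("") + " " + df["title"].fillna("") + " " + df["details"].fillna("")
df["beds"] = beds_text.apply(extract_beds)

median_rent = df.groupby(["country"])["price_usd"].median()
print(median_rent)

Using Plotly: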

import plotly.express as px

fig = px.box(df, x="country", y="price_usd", title="Rental Price Distribution (USA vs UAE vs India)")
fig.show()

Actowiz Solutions often builds:

Example:

df["rent_index"] = df["price_usd"] / df["beds"]

df.to_csv("rental_trends_dashboard.csv", index=False)

Use Actowiz if you need:

We support:

With scalable extraction across:

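In this tutorial, you learned how to scrape rental listings from Zillow, Bayut, and 99acres, merge them into a single table, clean the prices and convert them to USD, extract bedroom counts, compare median rents by country, and export the result to CSV.

Congratulations: you now have a complete Rental Trends Dashboard engine.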
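One caveat when reading the `price_usd` and `rent_index` columns: the three sites may not quote rent for the same period. The sketch below assumes that Bayut quotes yearly rent while Zillow and 99acres quote monthly rent. That mapping is an assumption to verify against the listing text, and the helper names `monthly_rent` and `rent_per_bedroom` are hypothetical.

```python
from typing import Optional

# Assumed number of quoted periods per year for each platform (verify before use).
PERIODS_PER_YEAR = {"Zillow": 12, "Bayut": 1, "99acres": 12}


def monthly_rent(price: Optional[float], platform: str) -> Optional[float]:
    """Scale a quoted rent to a monthly figure using the assumed period for the platform."""
    if price is None:
        return None
    return price * PERIODS_PER_YEAR[platform] / 12


def rent_per_bedroom(monthly: Optional[float], beds: Optional[int]) -> Optional[float]:
    """Monthly rent divided by bedrooms; None when either value is missing or beds is zero."""
    if monthly is None or not beds:
        return None
    return monthly / beds


bayut_monthly = monthly_rent(84000, "Bayut")    # yearly quote scaled to one month
zillow_monthly = monthly_rent(2500, "Zillow")   # already monthly, unchanged
```

Applying `monthly_rent` before the currency conversion keeps the country medians comparable, and returning `None` for missing or zero bedrooms avoids infinite or misleading index values.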

You can also reach us for all your mobile app scraping, data collection, web scraping , and instant data scraper service requirements!

Our web scraping expertise is relied on by 4,000+ global enterprises including Zomato, Tata Consumer, Subway, and Expedia — helping them turn web data into growth.

Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

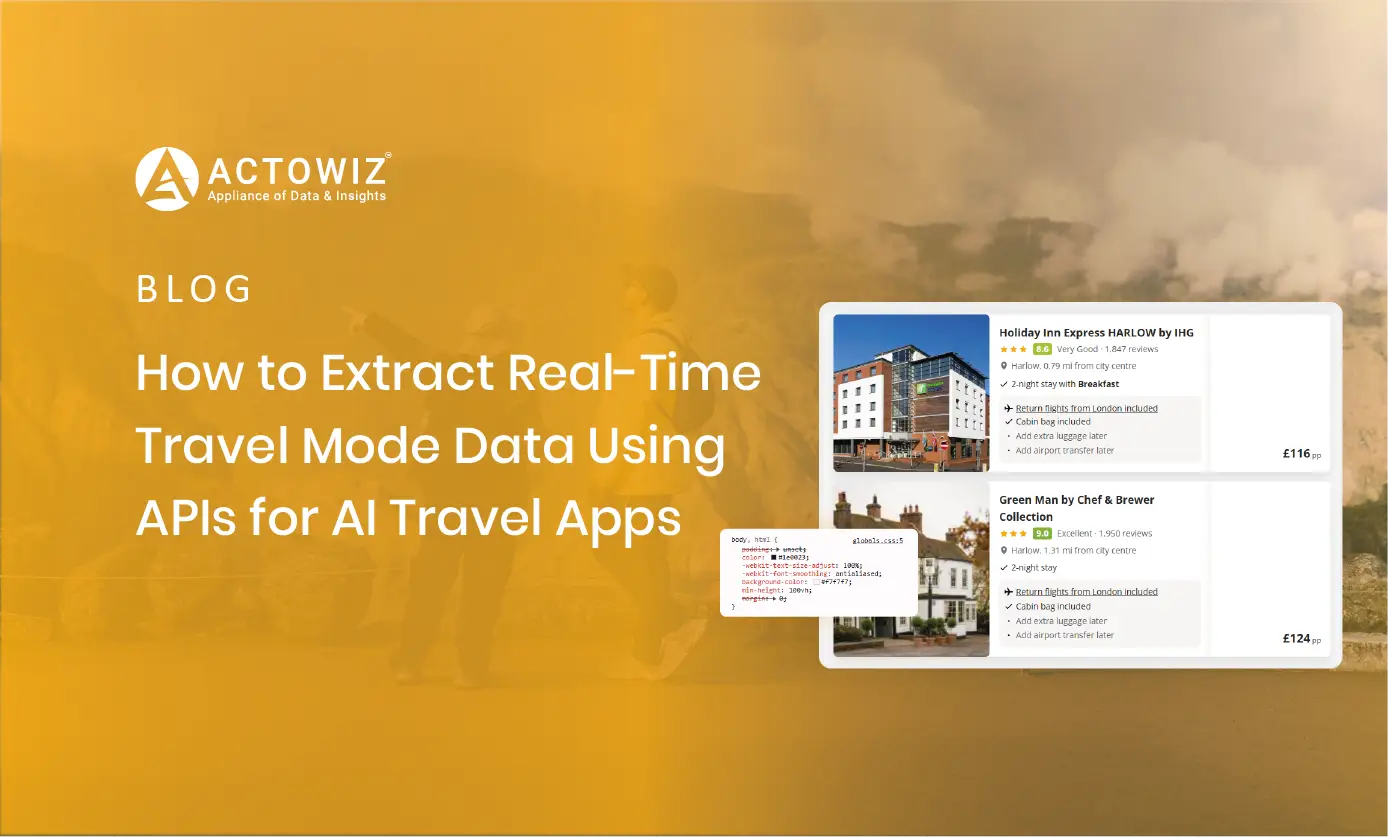

Extract real-time travel mode data via APIs to power smarter AI travel apps with live route updates, transit insights, and seamless trip planning.

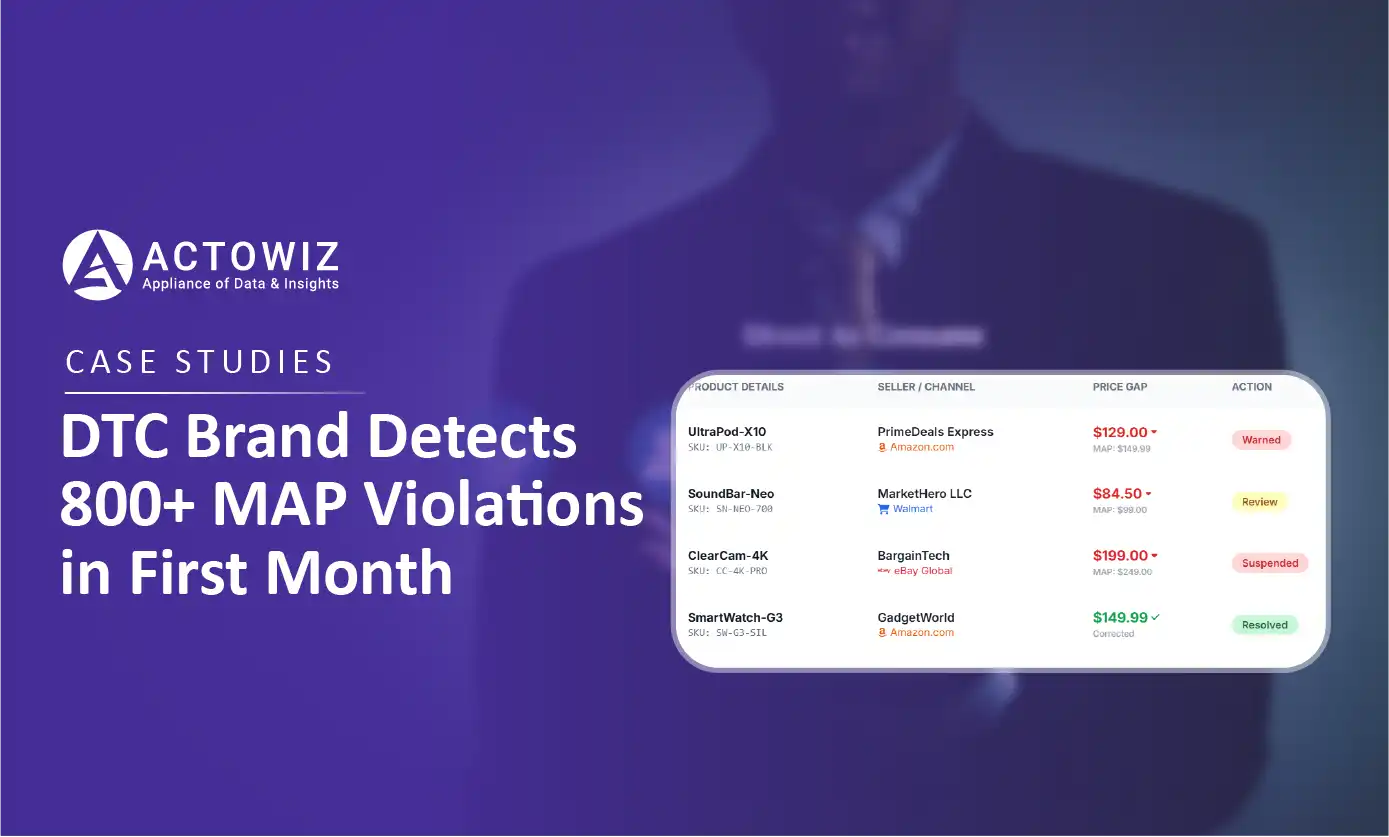

How a $50M+ consumer electronics brand used Actowiz MAP monitoring to detect 800+ violations in 30 days, achieving 92% resolution rate and improving retailer satisfaction by 40%.

Track UK Grocery Products Daily Using Automated Data Scraping across Morrisons, Asda, Tesco, Sainsbury’s, Iceland, Co-op, Waitrose, and Ocado for insights.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.