Hospitality and retail brands depend heavily on customer feedback across platforms like:

For large brands such as:

… Reviews determine:

This tutorial shows how Actowiz Solutions builds end-to-end Review & Rating Intelligence Pipelines using:

```bash
pip install selenium
pip install undetected-chromedriver
pip install requests
pip install beautifulsoup4
pip install pandas
pip install textblob
pip install nltk
# The PyPI package is named scikit-learn; it is imported as sklearn
pip install scikit-learn
pip install lxml
```

We’ll scrape from:

You can extend this to Zomato/Swiggy easily.
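One way to keep such extensions manageable is to give every platform the same record shape. The sketch below is an assumption rather than part of the original pipeline: `Review` is a hypothetical helper whose field names mirror the dictionary keys used by the scrapers in this tutorial, with empty-string defaults for fields a given platform does not expose.

```python
from dataclasses import dataclass, asdict


@dataclass
class Review:
    """Hypothetical shared record for a review from any platform."""
    platform: str
    review_text: str
    rating: str = ""
    date: str = ""
    store: str = ""
    author: str = ""
    address: str = ""


# A new platform only has to fill the fields it can actually scrape.
row = asdict(Review(platform="Zomato", review_text="Great coffee", rating="4.0"))
```

Because `asdict` returns a plain dictionary, rows built this way can be appended to the same lists that feed the combined DataFrame.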

We will collect reviews from multiple locations.

Google Maps query: https://www.google.com/maps/search/Starbucks/

Yelp query: https://www.yelp.com/search?find_desc=Starbucks

TripAdvisor query: https://www.tripadvisor.com/Search?q=Starbucks
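If you want to run the same pipeline for other brands, the three query URLs above can be generated instead of hard-coded. This is a minimal sketch: `build_search_urls` is a hypothetical helper, and it assumes the URL patterns shown above stay valid for other search terms.

```python
from urllib.parse import quote, urlencode


def build_search_urls(brand):
    """Return the search URL on each platform for a brand name."""
    return {
        # Google Maps takes the term as a path segment, so percent-encode it
        "google_maps": f"https://www.google.com/maps/search/{quote(brand)}/",
        # Yelp and TripAdvisor take the term as a query-string parameter
        "yelp": "https://www.yelp.com/search?" + urlencode({"find_desc": brand}),
        "tripadvisor": "https://www.tripadvisor.com/Search?" + urlencode({"q": brand}),
    }


urls = build_search_urls("Starbucks")
```

Encoding the brand name matters as soon as it contains spaces or punctuation, which a plain string concatenation would pass through unescaped.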

```python
import undetected_chromedriver as uc
from selenium.webdriver.common.by import By
from selenium.webdriver.common.keys import Keys
from time import sleep

# Note: Google Maps class names are obfuscated and may change without notice;
# check them against the live page before running.
browser = uc.Chrome()
browser.get("https://www.google.com/maps/search/starbucks/")
sleep(5)

# Scroll the results panel so that more locations load
scrollable = browser.find_element(By.CLASS_NAME, "m6QErb")
for _ in range(60):
    browser.execute_script("arguments[0].scrollTop = arguments[0].scrollHeight", scrollable)
    sleep(1.5)

# Extract one record per location card
cards = browser.find_elements(By.XPATH, '//div[contains(@class, "Nv2PK")]')
gm_locations = []
for c in cards:
    try:
        name = c.find_element(By.CLASS_NAME, "qBF1Pd").text
    except Exception:
        name = ""
    try:
        rating = c.find_element(By.CLASS_NAME, "MW4etd").text
    except Exception:
        rating = ""
    try:
        reviews = c.find_element(By.CLASS_NAME, "UY7F9").text
    except Exception:
        reviews = ""
    try:
        address = c.find_element(By.CLASS_NAME, "rllt__details").text
    except Exception:
        address = ""
    try:
        url = c.find_element(By.TAG_NAME, "a").get_attribute("href")
    except Exception:
        url = ""
    gm_locations.append({
        "store_name": name,
        "rating_summary": rating,
        "review_count": reviews,
        "address": address,
        "url": url
    })

# Visit each location and collect its reviews
gm_reviews = []
for loc in gm_locations[:20]:  # limit for tutorial
    if not loc["url"]:
        continue  # no link was found on the card
    browser.get(loc["url"])
    sleep(4)

    # Open the reviews tab; skip the location if the button is missing
    try:
        btn = browser.find_element(By.XPATH, '//button[contains(@aria-label,"reviews")]')
        btn.click()
        sleep(3)
    except Exception:
        continue

    # Scroll the reviews panel to load more reviews
    panel = browser.find_element(By.CLASS_NAME, "m6QErb")
    for _ in range(40):
        browser.execute_script("arguments[0].scrollTop = arguments[0].scrollHeight", panel)
        sleep(1)

    cards = browser.find_elements(By.XPATH, '//div[@data-review-id]')
    for r in cards:
        try:
            author = r.find_element(By.CLASS_NAME, "d4r55").text
        except Exception:
            author = ""
        try:
            rating = r.find_element(By.CLASS_NAME, "fzvQZe").get_attribute("aria-label")
        except Exception:
            rating = ""
        try:
            text = r.find_element(By.CLASS_NAME, "wiI7pd").text
        except Exception:
            text = ""
        try:
            date = r.find_element(By.CLASS_NAME, "rsqaWe").text
        except Exception:
            date = ""
        gm_reviews.append({
            "platform": "Google Maps",
            "store": loc["store_name"],
            "rating": rating,
            "review_text": text,
            "date": date,
            "author": author,
            "address": loc["address"]
        })
```

```python
# Yelp: load the search results and extract one record per location
browser.get("https://www.yelp.com/search?find_desc=Starbucks")
sleep(4)

cards = browser.find_elements(By.XPATH, '//div[contains(@class,"container")]')
yelp_locations = []
for c in cards:
    try:
        name = c.find_element(By.CLASS_NAME, "css-1egxyvc").text
    except Exception:
        continue  # not a business card
    try:
        rating = c.find_element(By.CSS_SELECTOR, '[aria-label$="star rating"]').get_attribute("aria-label")
    except Exception:
        rating = ""
    try:
        reviews = c.find_element(By.CLASS_NAME, "reviewCount").text
    except Exception:
        reviews = ""
    try:
        url = c.find_element(By.TAG_NAME, "a").get_attribute("href")
    except Exception:
        url = ""
    yelp_locations.append({
        "store_name": name,
        "rating_summary": rating,
        "review_count": reviews,
        "url": url
    })

yelp_reviews = []
for loc in yelp_locations[:20]:
    if not loc["url"]:
        continue  # no link was found on the card
    browser.get(loc["url"])
    sleep(4)

    # Scroll to the bottom repeatedly so that more reviews load
    for _ in range(20):
        browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
        sleep(1)

    # Extract reviews
    blocks = browser.find_elements(By.XPATH, '//li[contains(@class,"review")]')
    for b in blocks:
        try:
            text = b.find_element(By.CLASS_NAME, "comment").text
        except Exception:
            text = ""
        try:
            rating = b.find_element(By.XPATH, './/div[contains(@aria-label,"star rating")]').get_attribute("aria-label")
        except Exception:
            rating = ""
        try:
            date = b.find_element(By.CLASS_NAME, "css-chan6m").text
        except Exception:
            date = ""
        yelp_reviews.append({
            "platform": "Yelp",
            "store": loc["store_name"],
            "rating": rating,
            "review_text": text,
            "date": date
        })
```

TripAdvisor is key for hospitality brands like:

```python
# TripAdvisor: collect the links to individual listing pages
browser.get("https://www.tripadvisor.com/Search?q=Starbucks")
sleep(4)

ta_links = browser.find_elements(By.XPATH, '//a[contains(@class,"review_count")]')
hrefs = (a.get_attribute("href") for a in ta_links)
trip_urls = [h for h in hrefs if h]  # drop links without an href

ta_reviews = []
for url in trip_urls[:10]:
    browser.get(url)
    sleep(4)

    blocks = browser.find_elements(By.XPATH, '//div[contains(@data-test-target,"review")]')
    for b in blocks:
        try:
            text = b.find_element(By.CLASS_NAME, "QewHA").text
        except Exception:
            text = ""
        try:
            rating = b.find_element(By.CSS_SELECTOR, "svg[aria-label]").get_attribute("aria-label")
        except Exception:
            rating = ""
        try:
            date = b.find_element(By.CLASS_NAME, "euPKI").text
        except Exception:
            date = ""
        ta_reviews.append({
            "platform": "TripAdvisor",
            "rating": rating,
            "review_text": text,
            "date": date
        })
```

```python
import pandas as pd

# Combine the reviews from all three platforms into one DataFrame
df = pd.DataFrame(gm_reviews + yelp_reviews + ta_reviews)
print(df.head())
```

Different platforms have different rating formats:

Let's convert everything to a 5-point scale.
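To illustrate what such a conversion could involve when a label states its own scale, here is a small sketch using only the standard library. `to_five_point` is a hypothetical helper, and the sample strings in the docstring (including the 10-point one) are assumptions about label formats, not values taken from the scraped data.

```python
import re


def to_five_point(raw, default_max=5.0):
    """Extract a rating from a label and rescale it to a 0-5 range.

    Handles labels such as "4.5 of 5 bubbles", "Rated 8/10" or "4 star rating".
    Returns None when no number is found.
    """
    if not isinstance(raw, str):
        return None
    # "X of Y", "X out of Y" or "X/Y": the label states its own maximum
    m = re.search(r"(\d+(?:\.\d+)?)\s*(?:/|of|out of)\s*(\d+(?:\.\d+)?)", raw)
    if m:
        value, scale_max = float(m.group(1)), float(m.group(2))
    else:
        # Only one number: assume it is already on the default scale
        m = re.search(r"\d+(?:\.\d+)?", raw)
        if not m:
            return None
        value, scale_max = float(m.group(0)), default_max
    if scale_max <= 0:
        return None
    return round(value / scale_max * 5, 2)
```

When every source already reports ratings out of 5, pulling out the first number is enough; the rescaling step only matters once a platform with a different maximum is added.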

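```python
import re

def clean_rating(val):
    # Take the first number in the label, e.g. "4.0" from "4.0 of 5 bubbles"
    nums = re.findall(r"\d+\.?\d*", str(val))
    return float(nums[0]) if nums else None

df["rating_num"] = df["rating"].apply(clean_rating)
```

```python
from textblob import TextBlob

# Polarity score for each review text
df["sentiment"] = df["review_text"].apply(lambda x: TextBlob(str(x)).sentiment.polarity)
```

Sentiment scale: TextBlob polarity ranges from -1.0 (most negative) through 0 (neutral) to +1.0 (most positive).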
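For reporting, it can help to turn the continuous polarity score into a coarse label. The function below is a sketch: `label_sentiment` and its 0.1 threshold are assumptions chosen for illustration, not values from the original pipeline.

```python
def label_sentiment(polarity, threshold=0.1):
    """Bucket a polarity score in [-1.0, 1.0] into a coarse label."""
    if polarity > threshold:
        return "positive"
    if polarity < -threshold:
        return "negative"
    return "neutral"


# With the DataFrame from this tutorial, this could be applied as:
# df["sentiment_label"] = df["sentiment"].apply(label_sentiment)
```

A small dead zone around zero keeps short or mixed reviews from being forced into the positive or negative bucket.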

Define complaint keywords:

```python
keywords = {
    "service": ["slow", "rude", "bad service"],
    "cleanliness": ["dirty", "unclean", "messy"],
    "price": ["expensive", "overpriced"],
    "taste": ["bad taste", "not good", "cold food"],
    "waiting": ["long wait", "delay"]
}
```

Extract occurrences:

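```python
# Flag each review with the complaint categories it mentions
for cat, words in keywords.items():
    df[f"kw_{cat}"] = df["review_text"].apply(
        lambda x: any(w.lower() in x.lower() for w in words)
    )

# Average rating and average sentiment per platform
print(df.groupby("platform")["rating_num"].mean())
print(df.groupby("platform")["sentiment"].mean())

# Number of reviews mentioning each complaint category
complaints = df[
    ["kw_service", "kw_cleanliness", "kw_price", "kw_taste", "kw_waiting"]
].sum()

# Average sentiment per store
store_scores = df.groupby("store")["sentiment"].mean()

# Export the enriched dataset
df.to_csv("hospitality_review_intelligence.csv", index=False)
```

Actowiz deploys systems capable of: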

Scaling strategies:

| Platform | Challenges | Solutions |
|---|---|---|
| Google Maps | dynamic content + anti-bot | undetected-chromedriver, slow scroll, proxies |
| Yelp | rate-limiting | user-agent rotation |
| TripAdvisor | varied HTML structure | adaptive parsers |
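Two of the mitigations in the table, user-agent rotation and slowing down after rate-limit responses, can be sketched with the standard library alone. Everything named here is an assumption for illustration: the `USER_AGENTS` pool is a placeholder you would replace with current browser strings, and `next_headers` and `backoff_delay` are hypothetical helpers.

```python
import itertools
import random

# Placeholder pool (assumption); replace with current, real browser strings
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.0 Safari/605.1.15",
    "Mozilla/5.0 (X11; Linux x86_64; rv:121.0) Gecko/20100101 Firefox/121.0",
]
_ua_cycle = itertools.cycle(USER_AGENTS)


def next_headers():
    """Return request headers carrying the next user agent in the pool."""
    return {"User-Agent": next(_ua_cycle)}


def backoff_delay(attempt, base=1.5, cap=60.0, jitter=0.5):
    """Seconds to wait before retry number `attempt` (0-based).

    The delay doubles with each attempt, is capped at `cap`, and gets a
    small random jitter so that parallel workers do not retry in lockstep.
    """
    delay = min(cap, base * (2 ** attempt))
    return delay + random.uniform(0, jitter)
```

With `requests`, the headers would be passed as `requests.get(url, headers=next_headers())`, and a retry loop would call `sleep(backoff_delay(attempt))` after each rate-limited response.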

Actowiz uses:

In this full tutorial, you learned how to:

This forms the backbone of a Review & Reputation Intelligence Platform used by global enterprise clients of Actowiz Solutions.


Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

Extract real-time travel mode data via APIs to power smarter AI travel apps with live route updates, transit insights, and seamless trip planning.

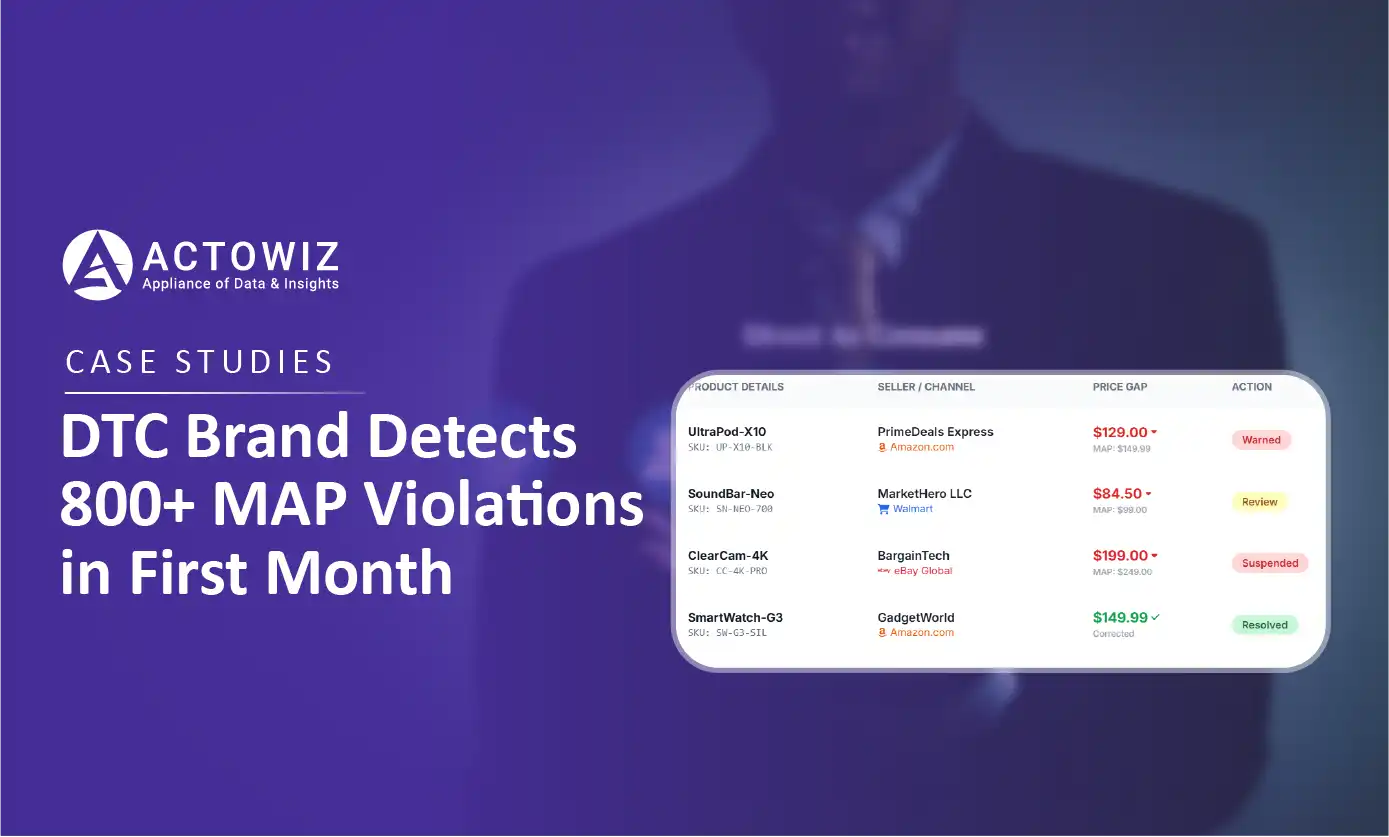

How a $50M+ consumer electronics brand used Actowiz MAP monitoring to detect 800+ violations in 30 days, achieving 92% resolution rate and improving retailer satisfaction by 40%.

Track UK Grocery Products Daily Using Automated Data Scraping across Morrisons, Asda, Tesco, Sainsbury’s, Iceland, Co-op, Waitrose, and Ocado for insights.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.