This guide shows how to scrape LinkedIn job posting data using Python and Selenium. It covers the basics of web scraping: navigating web pages, extracting data, and saving the results in a usable format.

We'll start with an introduction to the necessary Python libraries: Pandas, Selenium, and BeautifulSoup. Then we'll set up the environment: launch the Chrome browser, define the search query parameters, and log in to LinkedIn.

After setting up the environment, we'll go through the process of extracting job posting data from LinkedIn. This involves navigating to the job search page, scrolling the page to load all job posts, and extracting the relevant data from each job post.

Finally, we'll save the data in a Pandas DataFrame and export it to CSV for further processing. Throughout the post, we stress the importance of ethical and responsible web data extraction, and we encourage readers to review the target website's terms of service carefully.

Web scraping uses automated scripts to gather data from websites. With Python libraries such as BeautifulSoup, Selenium, and Pandas, you can write scripts that load web pages, extract data, and save it in a usable format for further study.

First, we set up the environment with the required Python libraries: Selenium to drive the browser, BeautifulSoup to parse HTML content, and Pandas for data manipulation.

Then, we define the search query parameters, such as the job title and location we want to explore.

Now, let's set the path to the ChromeDriver executable, which Selenium WebDriver uses to control Chrome. You can download ChromeDriver from its official website and place it in your project directory.

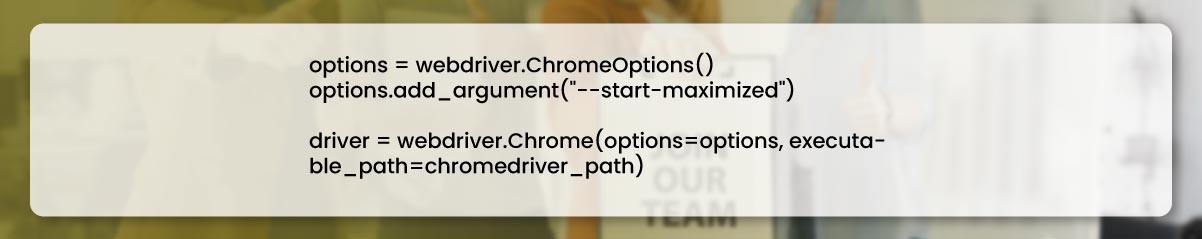

Then, we create a new instance of the Chrome driver and maximize the window.

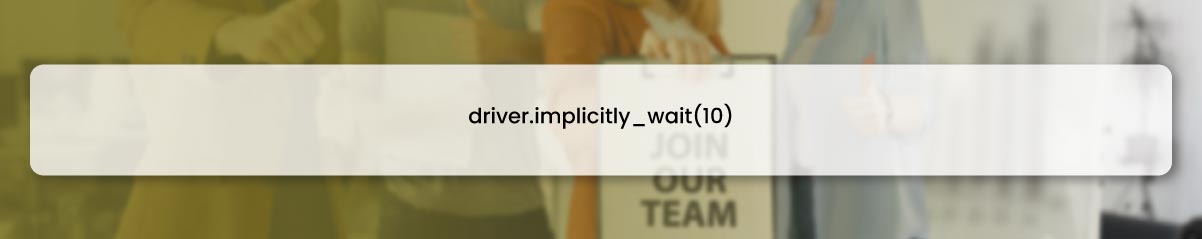

To allow enough time for pages to load before we interact with them, we set an implicit wait of 10 seconds.

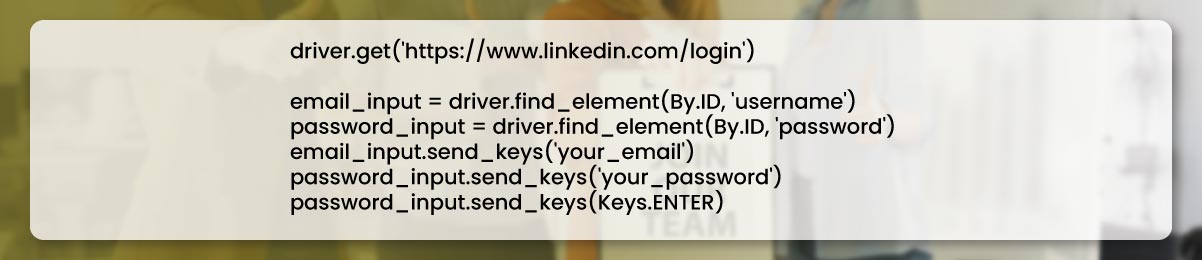
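The three setup steps above can be sketched roughly as follows, assuming Selenium 4 and a ChromeDriver binary that matches the installed Chrome. `create_driver` is a hypothetical helper name and `./chromedriver` is an assumed path; neither comes from the original screenshots.

```python
def create_driver(chromedriver_path: str):
    """Start Chrome, maximize the window, and set a 10-second implicit wait."""
    # Imported inside the function so the helper can be defined even on a
    # machine where Selenium is not installed yet.
    from selenium import webdriver
    from selenium.webdriver.chrome.service import Service

    service = Service(executable_path=chromedriver_path)
    driver = webdriver.Chrome(service=service)
    driver.maximize_window()
    driver.implicitly_wait(10)  # seconds to keep retrying element lookups
    return driver


# Usage (needs Chrome and a matching ChromeDriver on disk; path is assumed):
# driver = create_driver("./chromedriver")
```

The implicit wait applies to every later element lookup on this driver, so it only needs to be set once.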

Now, we go to the LinkedIn login page, fill in the email address and password, and click the login button.

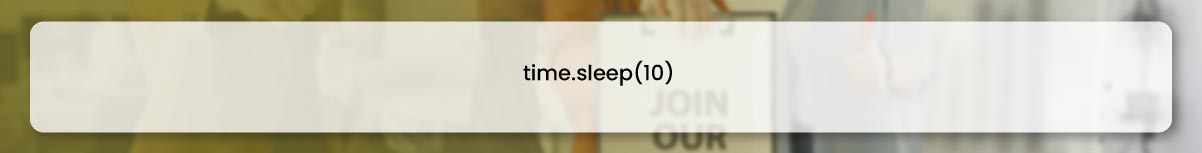

After logging in to the LinkedIn account, we must wait until the page loads.

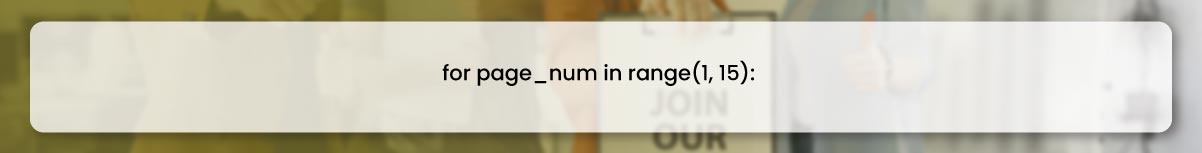

Here comes the main step: we scrape job posting data by looping over the job search result pages. The loop can cover the first fifteen pages; in this example, we scrape only a couple of pages.

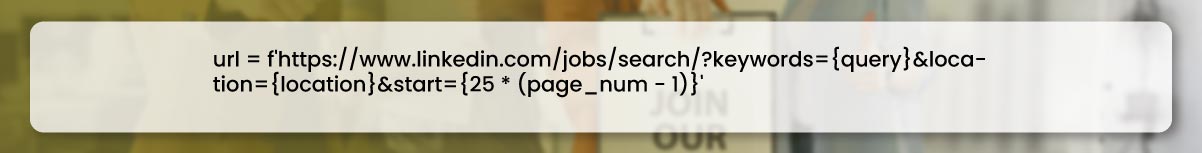

After that, we build the job search page URL from the search query parameters and the page number.

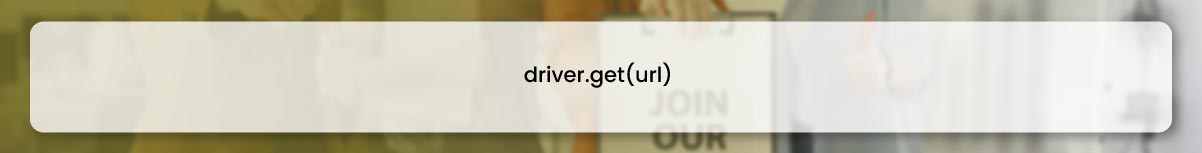
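As a rough sketch, the page loop and URL construction might look like the following. The `keywords`, `location`, and `start` query parameter names, the 25-results-per-page offset, and the example search values are assumptions about LinkedIn's job search URL rather than details taken from this article, and `build_search_url` is a hypothetical helper.

```python
from urllib.parse import urlencode

# Assumed base URL and page size; check both against the live site.
BASE_URL = "https://www.linkedin.com/jobs/search/"
RESULTS_PER_PAGE = 25


def build_search_url(job_title: str, location: str, page: int) -> str:
    """Return the search URL for a zero-based results page."""
    params = {
        "keywords": job_title,
        "location": location,
        "start": page * RESULTS_PER_PAGE,  # offset of the first result
    }
    return f"{BASE_URL}?{urlencode(params)}"


# Scrape only a couple of pages, as in this example.
urls = [build_search_url("Data Scientist", "United States", page) for page in range(2)]
for url in urls:
    print(url)
```

Using `urlencode` keeps spaces and special characters in the job title or location properly escaped.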

Now, we navigate to the LinkedIn job search page.

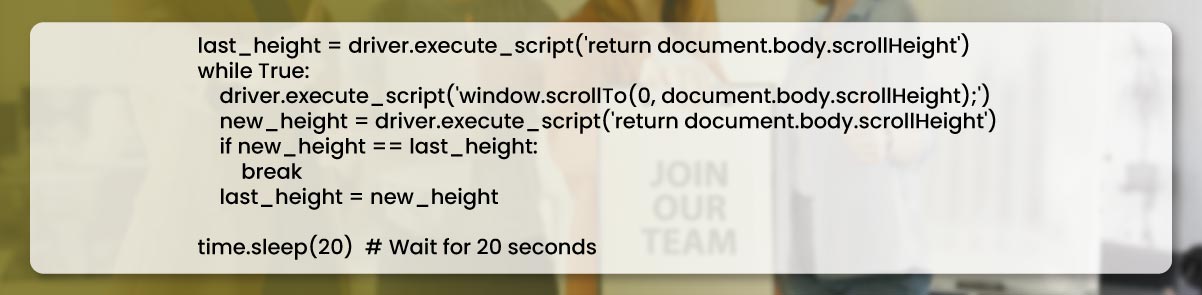

We should scroll the page to the bottom to load all the job posts.

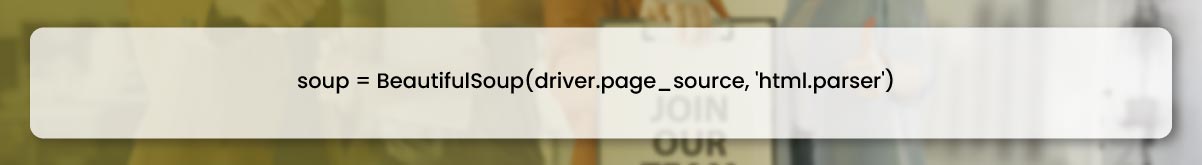
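One common way to do this, shown here as a sketch rather than the exact code from the screenshot, is to keep scrolling until the page height stops growing. `scroll_to_bottom` is a hypothetical helper; it only relies on the driver's `execute_script` method, and the pause and round limits are arbitrary assumptions.

```python
import time


def scroll_to_bottom(driver, pause: float = 2.0, max_rounds: int = 20) -> int:
    """Scroll until the page height stops growing; return the number of scrolls."""
    get_height = "return document.body.scrollHeight"
    last_height = driver.execute_script(get_height)
    for rounds in range(1, max_rounds + 1):
        driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
        time.sleep(pause)  # give lazily loaded posts time to appear
        new_height = driver.execute_script(get_height)
        if new_height == last_height:
            return rounds  # nothing new was loaded by the last scroll
        last_height = new_height
    return max_rounds
```

If the results sit inside their own scrollable panel rather than the main window, the script would need to target that element instead.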

Then we parse the page's HTML content with the BeautifulSoup library.

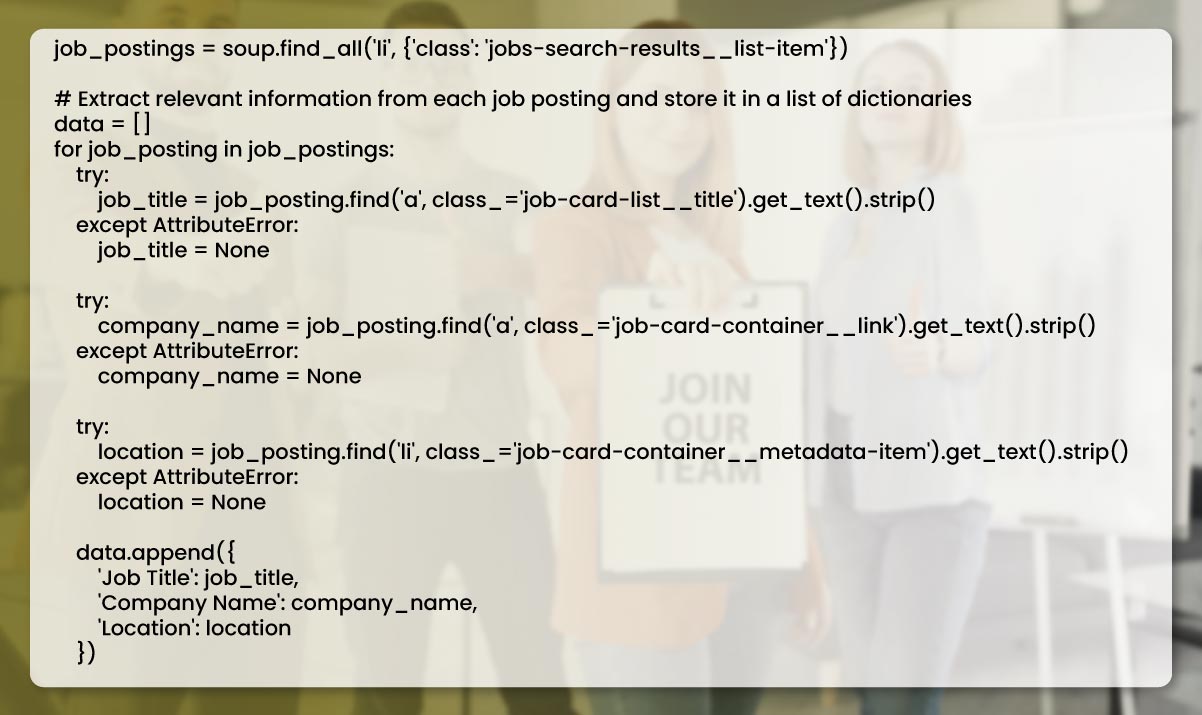

Then, we can locate the job postings on the page with the help of a CSS selector.
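Parsing the page and selecting job posts might look like the sketch below. The HTML snippet stands in for `driver.page_source`, and its markup and class names (`job-card`, `job-title`, `company`, `location`) are invented for illustration; LinkedIn's real class names differ and change over time.

```python
from bs4 import BeautifulSoup

# Stand-in for driver.page_source; the markup and class names are made up.
html = """
<ul>
  <li class="job-card">
    <h3 class="job-title">Data Analyst</h3>
    <span class="company">Acme Corp</span>
    <span class="location">Austin, TX</span>
  </li>
  <li class="job-card">
    <h3 class="job-title">ML Engineer</h3>
    <span class="company">Globex</span>
    <span class="location">Remote</span>
  </li>
</ul>
"""

soup = BeautifulSoup(html, "html.parser")

jobs = []
for card in soup.select("li.job-card"):  # CSS selector matching one job post
    jobs.append(
        {
            "title": card.select_one(".job-title").get_text(strip=True),
            "company": card.select_one(".company").get_text(strip=True),
            "location": card.select_one(".location").get_text(strip=True),
        }
    )

print(jobs)
```

Each job post becomes one dictionary, which makes the later conversion to a DataFrame straightforward.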

We can then convert the list of dictionaries to a Pandas DataFrame.

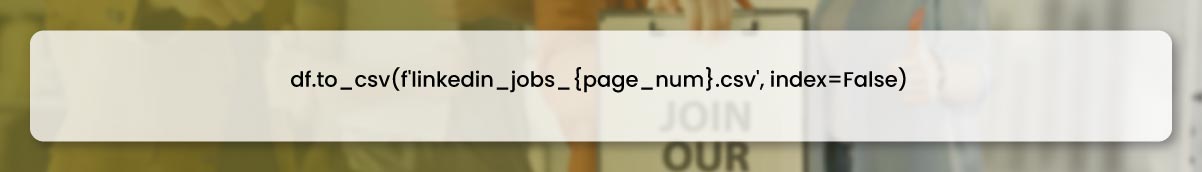

Finally, we can save the data in a CSV file.
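A minimal sketch of the conversion and export, using made-up records in the shape a scraping loop might produce. The column names and the `linkedin_jobs.csv` file name mentioned in the comment are assumptions.

```python
import pandas as pd

# Illustrative records; real ones would come from the scraping loop.
jobs = [
    {"title": "Data Analyst", "company": "Acme Corp", "location": "Austin, TX"},
    {"title": "ML Engineer", "company": "Globex", "location": "Remote"},
]

df = pd.DataFrame(jobs)  # one row per dict, one column per key

# With no path, to_csv returns the CSV text; pass a file name to write to disk,
# e.g. df.to_csv("linkedin_jobs.csv", index=False).
csv_text = df.to_csv(index=False)
print(csv_text)
```

Passing `index=False` keeps the DataFrame's row numbers out of the file.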

To run exploratory data analysis (EDA) on the job posting data collected by the scraping code, we can use the Pandas library to load the data from CSV files and then filter, clean, and process it for further analysis.

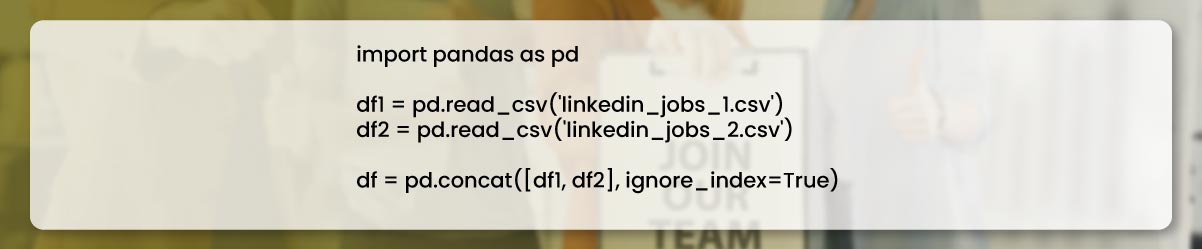

First, we import the Pandas library and load the data from the CSV files into Pandas DataFrames.

Here, we consider two CSV files of LinkedIn job posting data, linked_jobs_a.csv and linked_jobs_b.csv, that we created by scraping. We load each file into its own DataFrame and then use the concat() function to combine them into a single Pandas DataFrame.

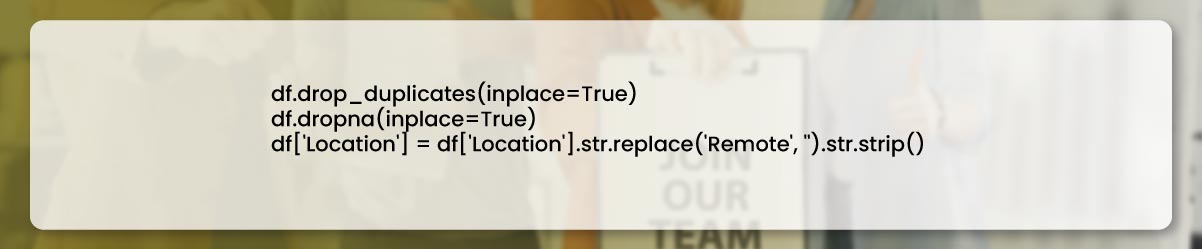
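The load-and-combine step can be sketched as below. To keep the example self-contained, two in-memory buffers stand in for linked_jobs_a.csv and linked_jobs_b.csv, and their contents and column names are invented.

```python
import io

import pandas as pd

# In-memory stand-ins for linked_jobs_a.csv and linked_jobs_b.csv.
file_a = io.StringIO("title,company,location\nData Analyst,Acme Corp,Austin\n")
file_b = io.StringIO(
    "title,company,location\n"
    "ML Engineer,Globex,Remote\n"
    "Data Analyst,Acme Corp,Austin\n"
)

df_a = pd.read_csv(file_a)  # with real files: pd.read_csv("linked_jobs_a.csv")
df_b = pd.read_csv(file_b)

# Stack the rows of both frames and renumber the index from 0.
jobs = pd.concat([df_a, df_b], ignore_index=True)
print(jobs)
```

Without `ignore_index=True`, the combined frame would keep each file's original row numbers, which produces repeated index values.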

We can drop duplicate rows and missing values and use string formatting to strip unwanted characters. Pandas provides a range of methods and functions for this kind of preprocessing. Let's take an example.

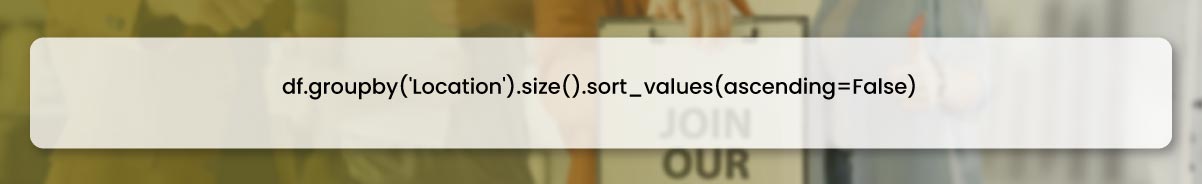

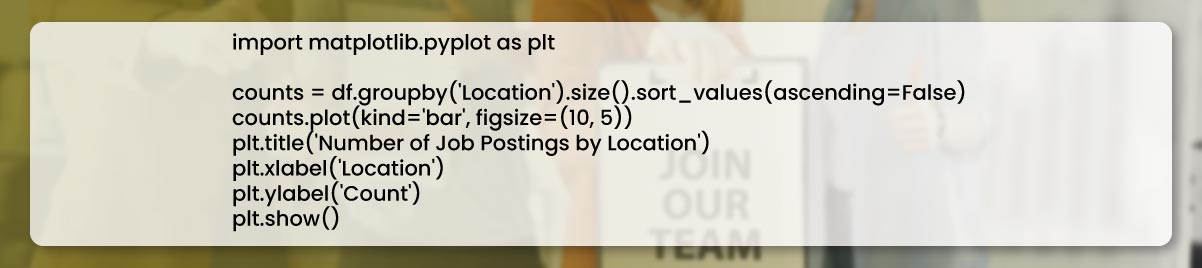
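A cleaning pass along these lines might look like the following sketch. The sample rows are invented, and the choice to trim only whitespace and newlines is an assumption about which characters count as unwanted.

```python
import pandas as pd

jobs = pd.DataFrame(
    {
        "title": ["  Data Analyst\n", "ML Engineer", "ML Engineer", None],
        "location": ["Austin", "Remote", "Remote", "Boston"],
    }
)

cleaned = (
    jobs.dropna()  # drop rows with any missing value
    .assign(title=lambda d: d["title"].str.strip())  # trim whitespace and newlines
    .drop_duplicates()  # drop repeated rows
    .reset_index(drop=True)
)
print(cleaned)
```

Stripping before `drop_duplicates` matters: rows that differ only by stray whitespace would otherwise survive as separate entries.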

After cleaning and preprocessing the data, we can run analyses and visualizations on it, for example counting the number of job postings for each location.

We can also generate a bar chart to show the count of job postings for each location.

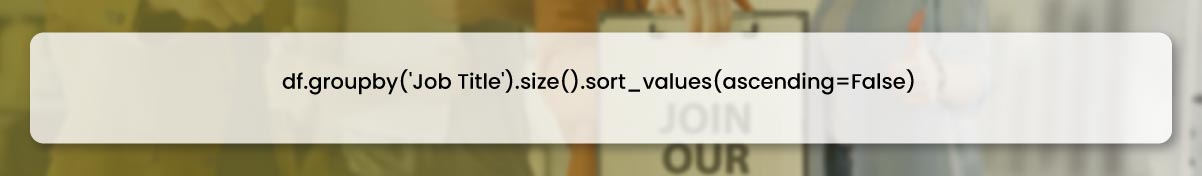

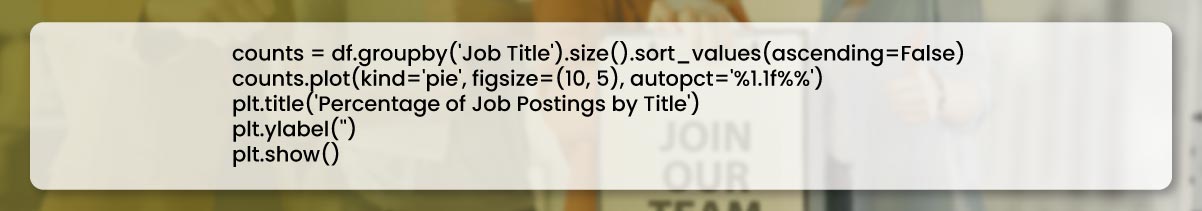

Alongside the location analysis, we can analyze job titles and the companies offering the jobs. We can tabulate the number of job postings for each job title and save the result to a file.

We can also generate a pie chart to show the percentage share of job listings for each job title.
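The numbers behind both charts can be computed with `value_counts`, as in this sketch. The sample data and the `title` and `location` column names are assumptions.

```python
import pandas as pd

jobs = pd.DataFrame(
    {
        "title": ["Data Analyst", "Data Analyst", "ML Engineer", "Data Analyst"],
        "location": ["Austin", "Remote", "Remote", "Remote"],
    }
)

# Number of postings per location (the data behind a bar chart).
location_counts = jobs["location"].value_counts()

# Percentage share of postings per job title (the data behind a pie chart).
title_share = jobs["title"].value_counts(normalize=True) * 100

print(location_counts)
print(title_share)
```

With Matplotlib installed, `location_counts.plot(kind="bar")` and `title_share.plot(kind="pie")` draw the corresponding charts.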

EDA is an essential stage in data analytics. It helps us understand the relationships and patterns in the data and turn them into signals for decision-making. We can use the Pandas library to analyze, clean, and preprocess the scraped data and get valuable insights after running the web scraping code.

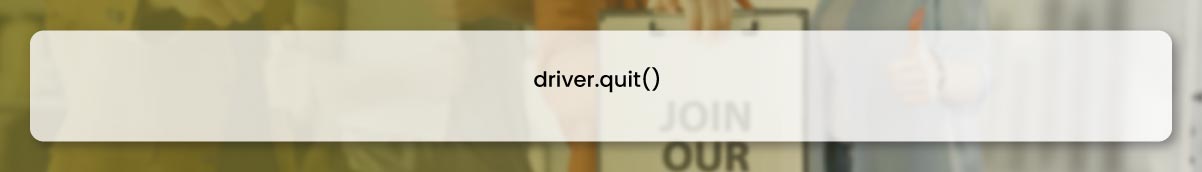

This tutorial walked through scraping LinkedIn job posting data with Python, Selenium, and other libraries, along with environment setup and data analysis using Pandas DataFrames. Contact Actowiz Solutions for web scraping services and get valuable LinkedIn Job Posting Data.
