Customer sentiment is one of the strongest indicators of:

When businesses operate across:

...they need automated review extraction + sentiment modeling to understand what customers really feel.

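This tutorial shows how to build a full Sentiment Intelligence Pipeline using Selenium with undetected-chromedriver for scraping, pandas for cleaning, and TextBlob for sentiment scoring.

Platforms covered: Google Maps, Yelp, Zomato, and Swiggy.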

This is the same approach Actowiz Solutions uses in enterprise-grade sentiment dashboards.

Install the required packages:

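    pip install selenium
    pip install undetected-chromedriver
    pip install beautifulsoup4
    pip install pandas
    pip install textblob
    pip install nltk
    pip install lxml

These provide browser automation (selenium, undetected-chromedriver), HTML parsing (beautifulsoup4, lxml), tabular data handling (pandas), and text and sentiment analysis (textblob, nltk).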

Import everything:

    import undetected_chromedriver as uc
    from selenium.webdriver.common.by import By
    from selenium.webdriver.common.keys import Keys
    from bs4 import BeautifulSoup
    from time import sleep
    import pandas as pd
    from textblob import TextBlob
    import re

For each review, the scraper collects fields such as the platform, rating, review text, and date; a sentiment score is added later in the pipeline.
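One way to keep every platform's scraper emitting the same shape is to pin the fields down as a small record type. The sketch below assumes a hypothetical `ReviewRecord` dataclass; the field names follow the sample output shown next, and the optional defaults are an assumption rather than part of the original pipeline.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class ReviewRecord:
    """Hypothetical record type; one instance per scraped review."""
    platform: str
    review_text: str
    rating: Optional[float] = None     # numeric rating, if one was parsed
    date: str = ""                     # raw date text as shown on the site
    store_name: str = ""
    sentiment: Optional[float] = None  # filled in later by the sentiment step


record = ReviewRecord(
    platform="Google",
    review_text="Loved the place! Quick service.",
    rating=4,
    date="2 weeks ago",
    store_name="Starbucks, Times Square",
)
row = asdict(record)  # plain dict, ready to append to a list for pandas
print(row)
```

A list of such dicts can be passed straight to `pd.DataFrame`, so platforms that lack a field simply carry the default.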

Sample output:

    {
      "platform": "Google",
      "store_name": "Starbucks, Times Square",
      "rating": 4,
      "review_text": "Loved the place! Quick service.",
      "date": "2 weeks ago",
      "sentiment": 0.75
    }

Google Maps loads review text inside a scrollable container.
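Because a container like this loads more reviews as it scrolls, a fixed number of scroll steps can stop too early or keep going after everything has loaded. Below is a minimal sketch of a scroll-until-stable loop. `scroll_until_stable` and its callable parameters are hypothetical names, and the stand-in panel only simulates lazy loading so the logic can run without a browser.

```python
from time import sleep


def scroll_until_stable(scroll_once, count_items, max_rounds=40, patience=3, pause=0.0):
    """Scroll until the item count stops growing for `patience` rounds.

    scroll_once: callable that scrolls the container one step.
    count_items: callable that returns how many items are currently loaded.
    Returns the final item count.
    """
    last_count = count_items()
    stalled = 0
    for _ in range(max_rounds):
        scroll_once()
        sleep(pause)  # give the page time to load; 0.0 here for the simulation
        current = count_items()
        if current > last_count:
            last_count = current
            stalled = 0
        else:
            stalled += 1
            if stalled >= patience:
                break
    return last_count


class FakePanel:
    """Stand-in for a review panel: loads 10 items per scroll, up to 35."""

    def __init__(self):
        self.loaded = 10
        self.scrolls = 0

    def scroll(self):
        self.scrolls += 1
        self.loaded = min(self.loaded + 10, 35)


fake_panel = FakePanel()
total = scroll_until_stable(fake_panel.scroll, lambda: fake_panel.loaded)
print(total, fake_panel.scrolls)
```

With Selenium, `scroll_once` could wrap the `execute_script` call used in this tutorial and `count_items` could return the number of elements matching `//div[@data-review-id]`, with `pause` set to a second or so.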

    # Launch an undetected Chrome session and open the place page
    browser = uc.Chrome()
    browser.get("https://www.google.com/maps/place/Starbucks+Times+Square")
    sleep(4)

    # Open the reviews panel
    review_btn = browser.find_element(By.XPATH, '//button[contains(@aria-label,"reviews")]')
    review_btn.click()
    sleep(3)

    # Scroll the review container so more reviews load
    panel = browser.find_element(By.CLASS_NAME, "m6QErb")
    for _ in range(40):
        browser.execute_script("arguments[0].scrollTop = arguments[0].scrollHeight", panel)
        sleep(1)

    # Extract fields from each review card (class names are site-specific and may change)
    gm_reviews = []
    cards = browser.find_elements(By.XPATH, '//div[@data-review-id]')
    for c in cards:
        try:
            author = c.find_element(By.CLASS_NAME, "d4r55").text
        except Exception:
            author = ""
        try:
            rating = c.find_element(By.CLASS_NAME, "fzvQZe").get_attribute("aria-label")
        except Exception:
            rating = ""
        try:
            text = c.find_element(By.CLASS_NAME, "wiI7pd").text
        except Exception:
            text = ""
        try:
            date = c.find_element(By.CLASS_NAME, "rsqaWe").text
        except Exception:
            date = ""
        gm_reviews.append({
            "platform": "Google",
            "author": author,
            "rating": rating,
            "review_text": text,
            "date": date
        })

    # Yelp: open the business page
    browser.get("https://www.yelp.com/biz/starbucks-new-york")

    sleep(4)

    # Press END repeatedly to trigger lazy loading
    for _ in range(20):
        browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
        sleep(1)

    yelp_reviews = []
    blocks = browser.find_elements(By.XPATH, '//li[contains(@class,"review")]')
    for b in blocks:
        try:
            text = b.find_element(By.CLASS_NAME, "comment").text
        except Exception:
            text = ""
        try:
            rating = b.find_element(By.XPATH, './/div[contains(@aria-label,"star rating")]').get_attribute("aria-label")
        except Exception:
            rating = ""
        try:
            date = b.find_element(By.CLASS_NAME, "css-chan6m").text
        except Exception:
            date = ""
        yelp_reviews.append({
            "platform": "Yelp",
            "rating": rating,
            "review_text": text,
            "date": date
        })

Zomato uses dynamic containers and pagination.
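Where reviews are paginated, scrolling alone may not reach the later pages, and re-rendered containers can yield the same review twice. The following is a hedged sketch of a pagination loop with de-duplication. `collect_paginated`, `read_page`, and `go_next` are hypothetical names, and the fake pager stands in for a real browser session.

```python
def collect_paginated(read_page, go_next, max_pages=50):
    """Collect unique review texts across pages.

    read_page: callable returning a list of review texts on the current page.
    go_next: callable that advances to the next page and returns True,
             or returns False when there is no next page.
    """
    seen = set()
    reviews = []
    for _ in range(max_pages):
        for text in read_page():
            key = text.strip().lower()  # normalize so near-identical copies collapse
            if key and key not in seen:
                seen.add(key)
                reviews.append(text.strip())
        if not go_next():
            break
    return reviews


class FakePager:
    """Stand-in for a paginated review list."""

    def __init__(self, pages):
        self.pages = pages
        self.index = 0

    def read(self):
        return self.pages[self.index]

    def next(self):
        if self.index + 1 < len(self.pages):
            self.index += 1
            return True
        return False


pager = FakePager([
    ["Great coffee", "Slow service"],
    ["slow service ", "Too expensive"],
    [""],
])
all_reviews = collect_paginated(pager.read, pager.next)
print(all_reviews)
```

In a Selenium session, `go_next` would click whatever "next page" control the site currently renders; its locator is not given in this tutorial and would have to be found by inspecting the page.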

    browser.get("https://www.zomato.com/mumbai/starbucks-fort/reviews")
    sleep(4)

    # Press END repeatedly to trigger lazy loading
    for _ in range(20):
        browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
        sleep(1.2)

    zo_reviews = []
    blocks = browser.find_elements(By.XPATH, '//div[contains(@class,"rev-text")]')
    for b in blocks:
        try:
            text = b.text
        except Exception:
            text = ""
        # Rating (inside preceding sibling div)
        try:
            rating = b.find_element(By.XPATH, '../../div/div[contains(@class,"rated")]').text
        except Exception:
            rating = ""
        zo_reviews.append({
            "platform": "Zomato",
            "rating": rating,
            "review_text": text
        })

Swiggy reviews appear under store pages.

    browser.get("https://www.swiggy.com/restaurants/starbucks-bandra-west-mumbai")
    sleep(4)

    # Press END repeatedly to trigger lazy loading
    for _ in range(20):
        browser.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
        sleep(1)

    sw_reviews = []
    blocks = browser.find_elements(By.XPATH, '//div[contains(@class,"_2xsFi")]')
    for b in blocks:
        try:
            text = b.find_element(By.CLASS_NAME, "_2xsFi").text
        except Exception:
            # The block itself matched "_2xsFi", so fall back to its own text
            text = b.text
        try:
            rating = b.find_element(By.CLASS_NAME, "_3LWZl").text
        except Exception:
            rating = ""
        sw_reviews.append({
            "platform": "Swiggy",
            "rating": rating,
            "review_text": text
        })

    # Combine all platforms into one DataFrame
    df = pd.DataFrame(gm_reviews + yelp_reviews + zo_reviews + sw_reviews)

    def extract_rating(val):
        # Pull the first number out of strings such as "4 stars" or "5 star rating"
        nums = re.findall(r"\d+\.?\d*", str(val))
        return float(nums[0]) if nums else None

    df["rating_num"] = df["rating"].apply(extract_rating)

We use TextBlob for polarity scores:

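    def get_sentiment(text):
        # TextBlob polarity is a float between -1.0 and 1.0
        return TextBlob(text).sentiment.polarity

    df["sentiment"] = df["review_text"].apply(get_sentiment)

Values: polarity ranges from -1.0 (most negative) to 1.0 (most positive), with 0.0 as neutral.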
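For dashboards, a raw polarity score is often easier to read once it is bucketed into a label. The sketch below is one way to do that; the function name `label_sentiment` and the 0.1 threshold are assumptions chosen for illustration, not values defined by TextBlob.

```python
def label_sentiment(polarity, threshold=0.1):
    """Map a polarity score in [-1.0, 1.0] to a coarse label."""
    if polarity > threshold:
        return "positive"
    if polarity < -threshold:
        return "negative"
    return "neutral"  # scores within +/- threshold are treated as neutral


scores = [0.75, -0.4, 0.05]
labels = [label_sentiment(s) for s in scores]
print(labels)
```

Applied to the DataFrame, this could look like `df["sentiment_label"] = df["sentiment"].apply(label_sentiment)`. The threshold is worth tuning against a sample of hand-labeled reviews.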

    # Average sentiment per platform
    df.groupby("platform")["sentiment"].mean()

Define keyword groups:

    complaints = {
        "service": ["slow", "rude", "unprofessional"],
        "delivery": ["late", "cold", "leaking", "delay"],
        "taste": ["bland", "bad taste", "not good"],
        "cleanliness": ["dirty", "messy", "unclean"],
        "pricing": ["expensive", "overpriced"]
    }

Tag each review:

    # Add one boolean column per complaint category
    for tag, words in complaints.items():
        df[f"kw_{tag}"] = df["review_text"].apply(
            lambda x: any(w.lower() in x.lower() for w in words)
        )

    # Export the enriched dataset
    df.to_csv("sentiment_analysis_reviews.csv", index=False)

DIY scripts work if:

But Actowiz Solutions is better for:

We deliver:

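In this tutorial, you learned how to scrape reviews from Google Maps, Yelp, Zomato, and Swiggy, normalize ratings, score sentiment with TextBlob, tag complaint keywords, and export the results to CSV.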

This forms the backbone of Actowiz Solutions’ Sentiment Intelligence for F&B, retail, delivery, and hospitality brands.

You can also reach us for all your mobile app scraping, data collection, web scraping, and instant data scraper service requirements!

Our web scraping expertise is relied on by 4,000+ global enterprises including Zomato, Tata Consumer, Subway, and Expedia — helping them turn web data into growth.

Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

Extract real-time travel mode data via APIs to power smarter AI travel apps with live route updates, transit insights, and seamless trip planning.

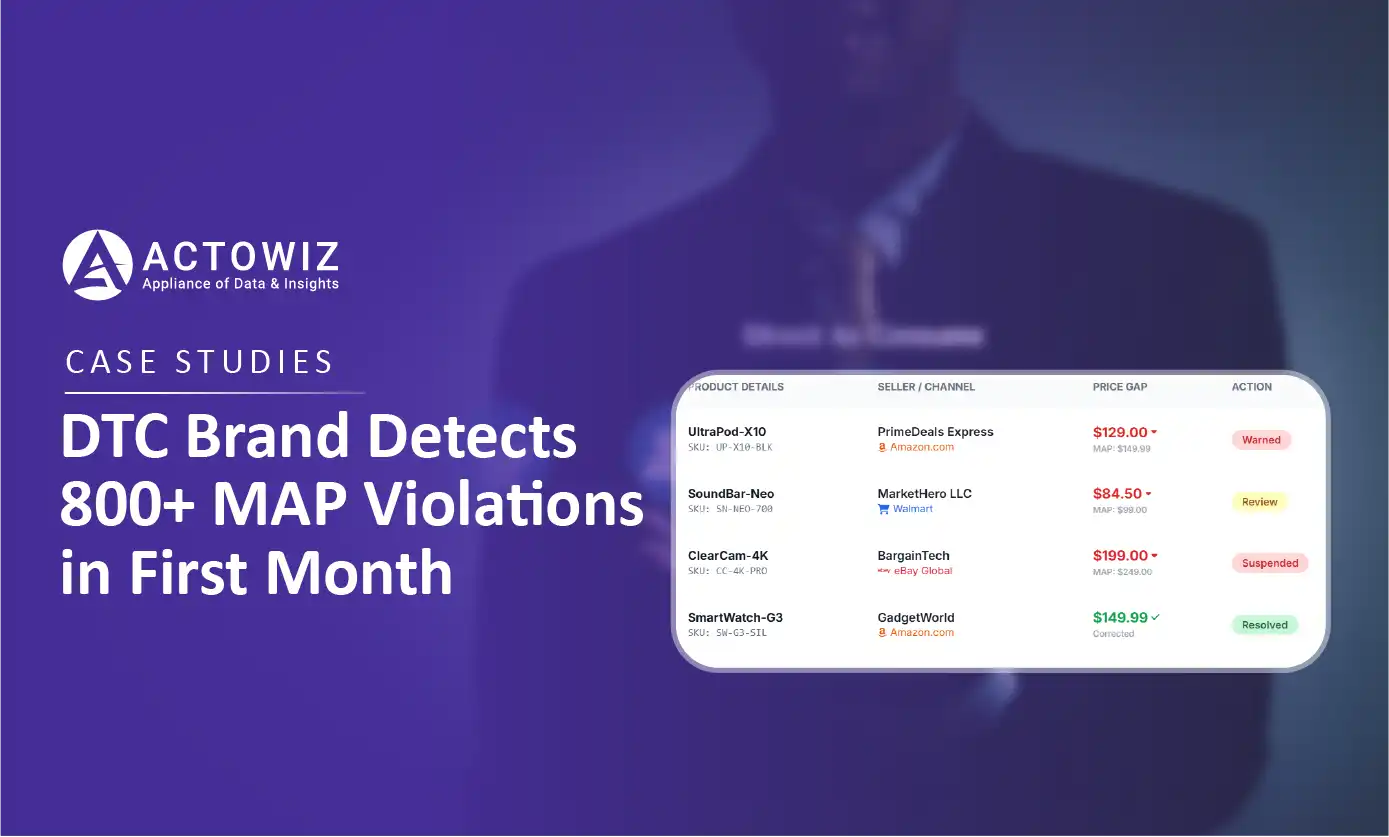

How a $50M+ consumer electronics brand used Actowiz MAP monitoring to detect 800+ violations in 30 days, achieving 92% resolution rate and improving retailer satisfaction by 40%.

Track UK Grocery Products Daily Using Automated Data Scraping across Morrisons, Asda, Tesco, Sainsbury’s, Iceland, Co-op, Waitrose, and Ocado for insights.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.