A leading U.S. e-commerce company needed complete product and pricing data from outlets spread across the country. This case study shows how Actowiz Solutions used location-based data scraping to build that catalog.

Our client is a leading e-commerce company in the U.S.

Before considering data scraping services, the client collected the data it needed for analysis manually.

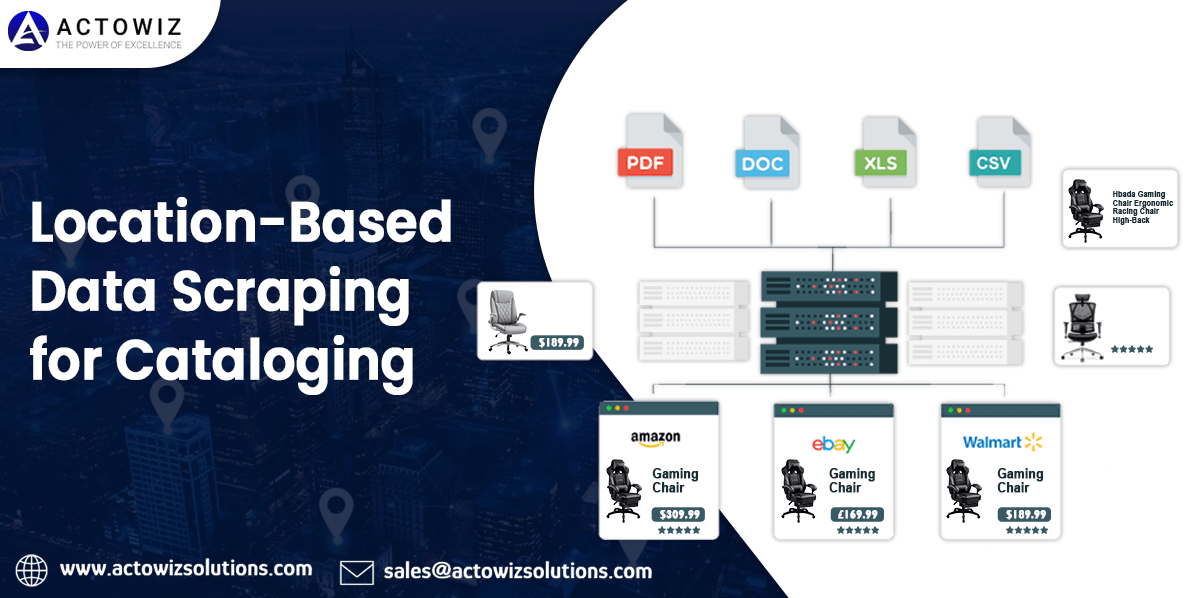

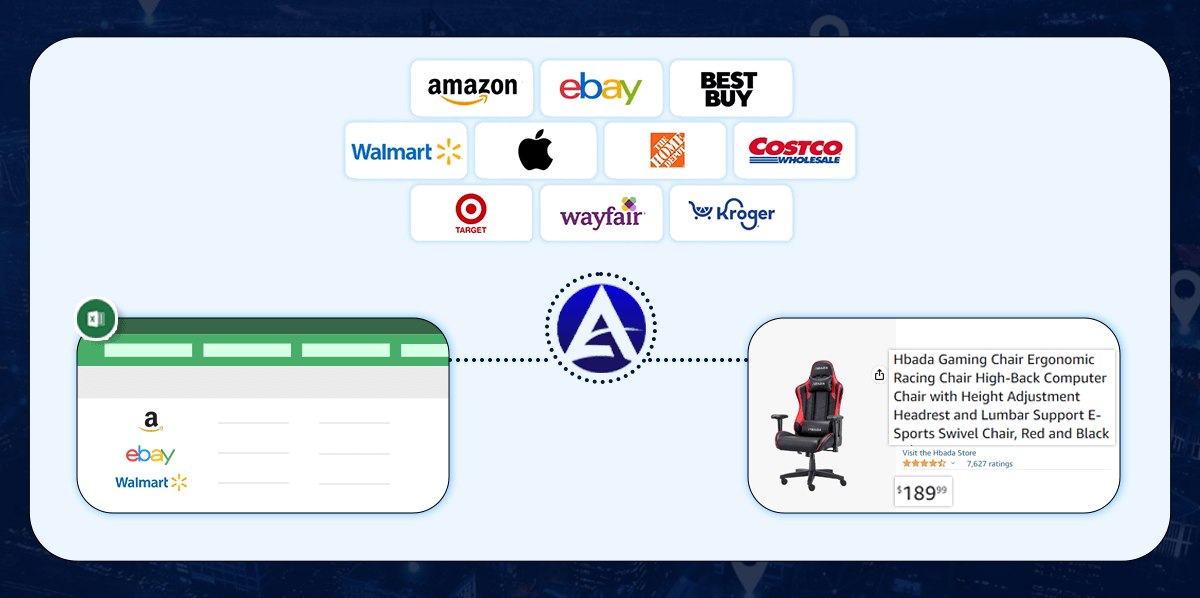

The requirements were to scrape product data from e-commerce platforms and retail store outlets, filtered by the zip codes of the store locations. Alongside this location-based scraping, a parallel collection process had to run on competitors’ e-commerce websites to gather pricing and product data.

The data was used to analyze product strategy and to benchmark pricing across complete product catalogs. The client wanted to use Actowiz Solutions’ data scraping services to automate data collection by location and zip code.

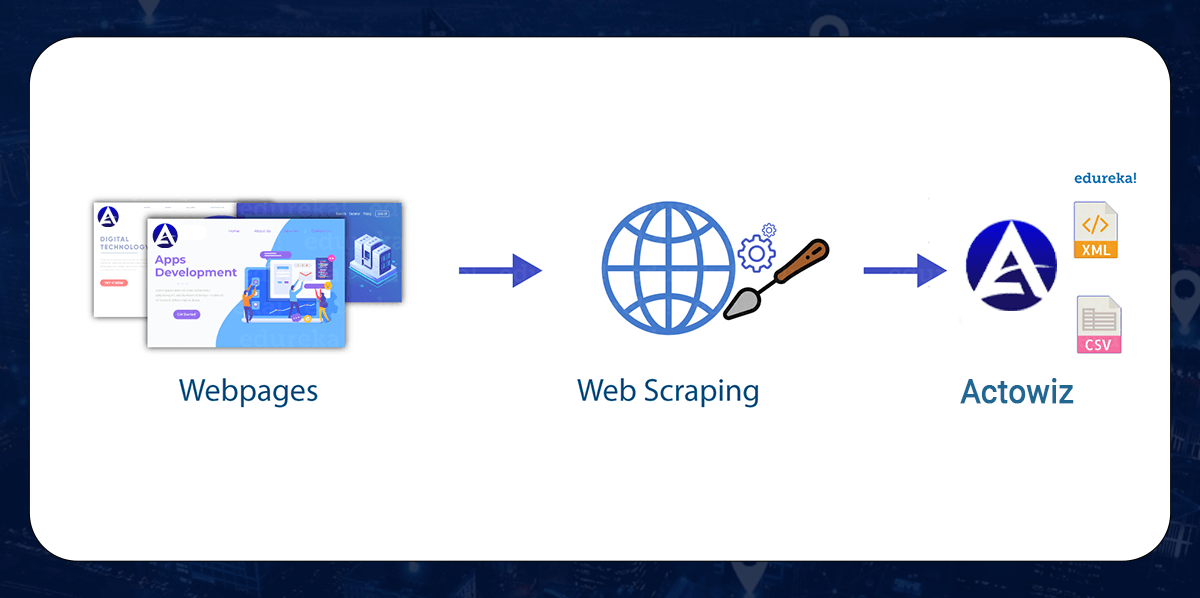

Site-specific crawling was used, focused on the client’s own site. The solution scraped predefined data points from that site: unique product identifiers, product names, categories, URLs, store location, crawl timestamp, pricing, and inventory stock availability.

Because the client was interested in price benchmarking, scrapers were also built for competitors’ websites. These crawlers gathered fields such as unique product identifier, product name, URL, categories, store, location, pricing, crawl timestamp, and inventory stock availability.
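To make the field list concrete, here is a minimal sketch of how one scraped record could be modeled. The class name, field names, and the `make_record` helper are hypothetical and chosen for illustration; the case study does not describe the actual schema.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProductRecord:
    """One scraped listing. All field names are illustrative assumptions."""
    product_id: str   # unique product identifier
    name: str
    category: str
    url: str
    store: str        # store or seller name
    zip_code: str     # kept as text so leading zeros survive
    price: float
    in_stock: bool
    crawled_at: str   # crawl timestamp, ISO 8601 in UTC


def make_record(product_id, name, category, url, store, zip_code,
                price, in_stock, now=None):
    """Build a record, stamping it with the crawl time (UTC by default)."""
    now = now or datetime.now(timezone.utc)
    return ProductRecord(
        product_id=product_id,
        name=name,
        category=category,
        url=url,
        store=store,
        zip_code=zip_code,
        price=float(price),
        in_stock=bool(in_stock),
        crawled_at=now.isoformat(),
    )
```

Using the same record shape for the client's site and for competitor sites keeps the two crawls directly comparable. `asdict(record)` turns a record into a plain dictionary ready for serialization.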

The data from the two crawls was grouped by location zip code and used by the client for further analysis. The datasets were delivered to the client in JSON format through Actowiz Solutions’ REST API.
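Grouping by zip code and serializing to JSON can be sketched as follows. This is an illustration under assumptions, not the delivered pipeline: records are taken to be plain dictionaries with a hypothetical `zip_code` key, and the function names are invented for this example.

```python
import json
from collections import defaultdict


def group_by_zip(records):
    """Group record dictionaries by their 'zip_code' value (assumed key)."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record["zip_code"]].append(record)
    return dict(grouped)


def to_json(grouped):
    """Serialize the grouped records as a JSON object keyed by zip code."""
    return json.dumps(grouped, indent=2, sort_keys=True)


sample = [
    {"product_id": "A1", "zip_code": "10001", "price": 19.99},
    {"product_id": "B2", "zip_code": "94105", "price": 5.49},
    {"product_id": "C3", "zip_code": "10001", "price": 7.25},
]
grouped = group_by_zip(sample)
payload = to_json(grouped)
```

Zip codes are kept as strings because JSON object keys must be strings, and because numeric storage would drop the leading zeros of codes such as `02134`.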

When a given day’s crawl is complete, the data is merged into a single file per website and pushed to the client’s FTP folder. From there the client imports it into internal systems that automatically track price differences across sellers. The client has also built a sentiment analysis system that uses the collected reviews to gauge how users perceive its products. They find this helpful for understanding what works well and what needs to change.

With site crawling services from Actowiz Solutions, our client has assured us that no manual steps remain in its data acquisition process. This saves the staffing costs, person-hours, and server costs that a dedicated in-house team and in-house crawler maintenance would have required. Now all they have to do is use the data we provide to their specifications.
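The client's internal price tracking is not described in detail. As a rough illustration of the idea, the sketch below compares competitor prices with the client's own price for the same product in the same zip code. It assumes product identifiers already match across sellers, which real catalogs rarely guarantee, and the `price_gaps` function and the dictionary keys are hypothetical.

```python
def price_gaps(client_records, competitor_records):
    """Return competitor-minus-client price gaps per product and zip code.

    Assumes 'product_id' values are comparable across sellers.
    """
    client_prices = {
        (r["product_id"], r["zip_code"]): r["price"] for r in client_records
    }
    gaps = []
    for r in competitor_records:
        key = (r["product_id"], r["zip_code"])
        if key not in client_prices:
            continue  # no comparable client listing in this zip code
        gaps.append({
            "product_id": r["product_id"],
            "zip_code": r["zip_code"],
            "seller": r["store"],
            "gap": round(r["price"] - client_prices[key], 2),
        })
    return gaps
```

A positive `gap` means the competitor is priced above the client in that zip code, and a negative one means the competitor undercuts the client there.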

Our web scraping expertise is relied on by 4,000+ global enterprises including Zomato, Tata Consumer, Subway, and Expedia — helping them turn web data into growth.

Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

Australian Top Supermarkets Dataset to analyze pricing, store locations, and consumer trends for smarter retail insights and growth strategies.

Discover how agencies use brand data intelligence for cross-border lead generation. Learn how data-driven insights improve client pitches, targeting, and conversion rates globally.

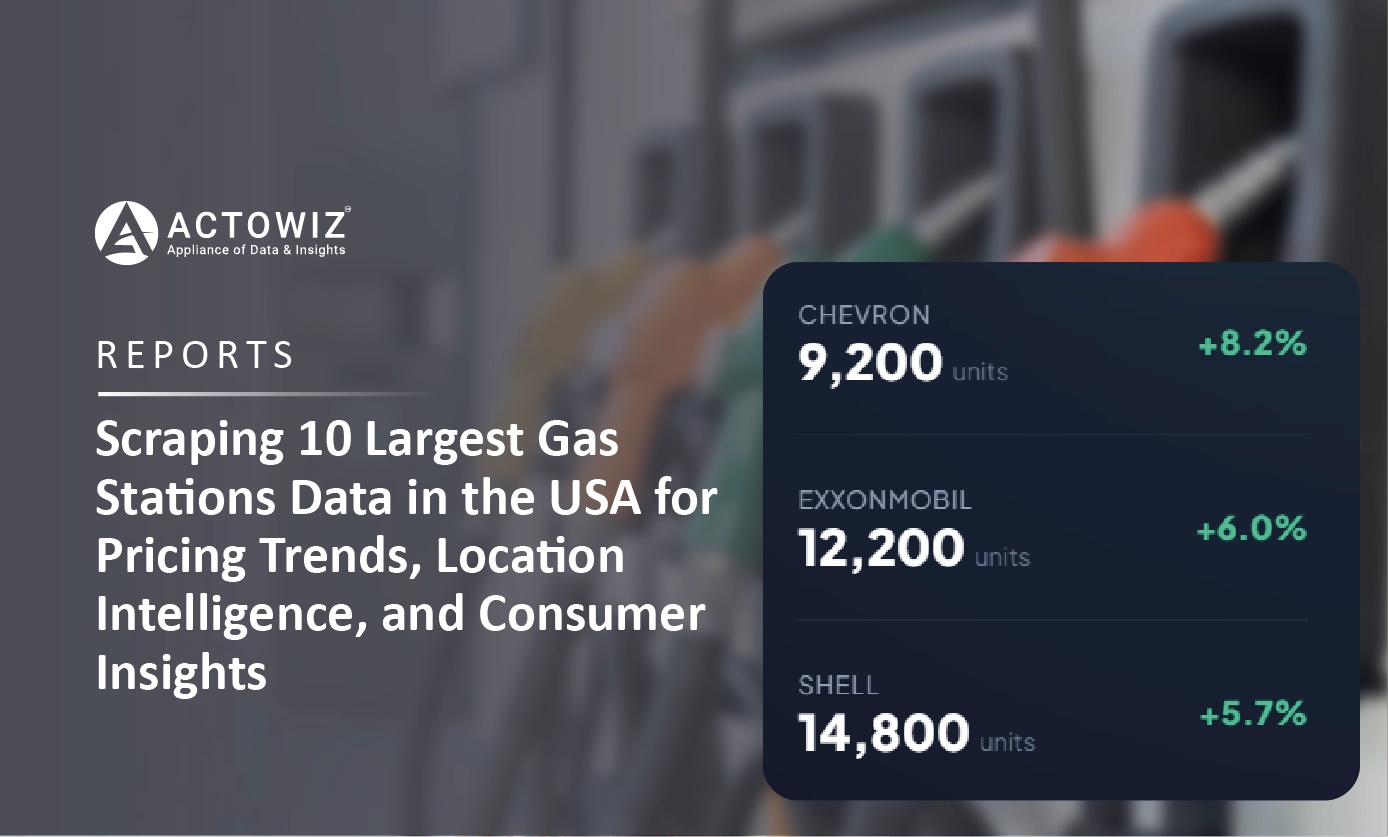

Scraping 10 Largest Gas Stations Data in the USA for pricing insights, location intelligence, and smarter fuel market analytics.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.