Rightmove’s market capitalisation exceeds £4.5 billion. The platform collects over £900 million annually from UK estate agents who have no real choice but to pay up. Zoopla, OnTheMarket, and PrimeLocation have spent years trying to disrupt this dominance with mixed success.

For everyone else — UK PropTech startups, mortgage brokers, buy-to-let investors, build-to-rent operators, property fund managers — the challenge is simpler but no less urgent: how do you access UK property market data without being held hostage by a portal that charges you more every renewal cycle?

The answer, increasingly, is comprehensive UK property data extraction across Rightmove, Zoopla, OnTheMarket, and the long tail of estate agent websites. With the right data infrastructure, you can build mortgage comparison tools, investor dashboards, AVMs (automated valuation models), market intelligence reports, and consumer-facing property search products — without being dependent on any single portal’s commercial whims.

This guide breaks down exactly how UK property data extraction works in 2026 — what data is available, the technical challenges specific to the UK market, and how leading UK PropTech players operationalise scraped data into real commercial products.

UK property markets have been volatile since 2020 — pandemic-driven demand, mini-budget shocks, mortgage rate spikes, BTL policy changes. Investors and analysts need real-time data to navigate markets that were previously assumed to be slow-moving.

Build-to-rent (BTR) has exploded as an institutional asset class. Rental data — once messy and unstandardised — now drives billions in investment decisions. Institutional investors need granular, historical rental intelligence that no single portal provides adequately.

Digital mortgage brokers (Habito, Trussle, Molo) and consumer-facing platforms rely on property data for affordability calculations, loan-to-value optimisation, and product matching. This drives constant demand for clean property datasets.

UK estate agency is consolidating, with major chains acquiring independents and PE-backed platforms rolling up regional players. M&A due diligence requires operational data that platforms won’t voluntarily disclose.

Emerging property models (fractional ownership, rent-to-own, instant-buy programmes) need aggregated market data as foundational infrastructure. The old monopoly-portal model doesn’t serve these new business models.

Combining property listing data with local authority planning applications, Land Registry transactions, and HMRC Stamp Duty data creates intelligence that the portals themselves don’t offer — a differentiator for serious analytics players.

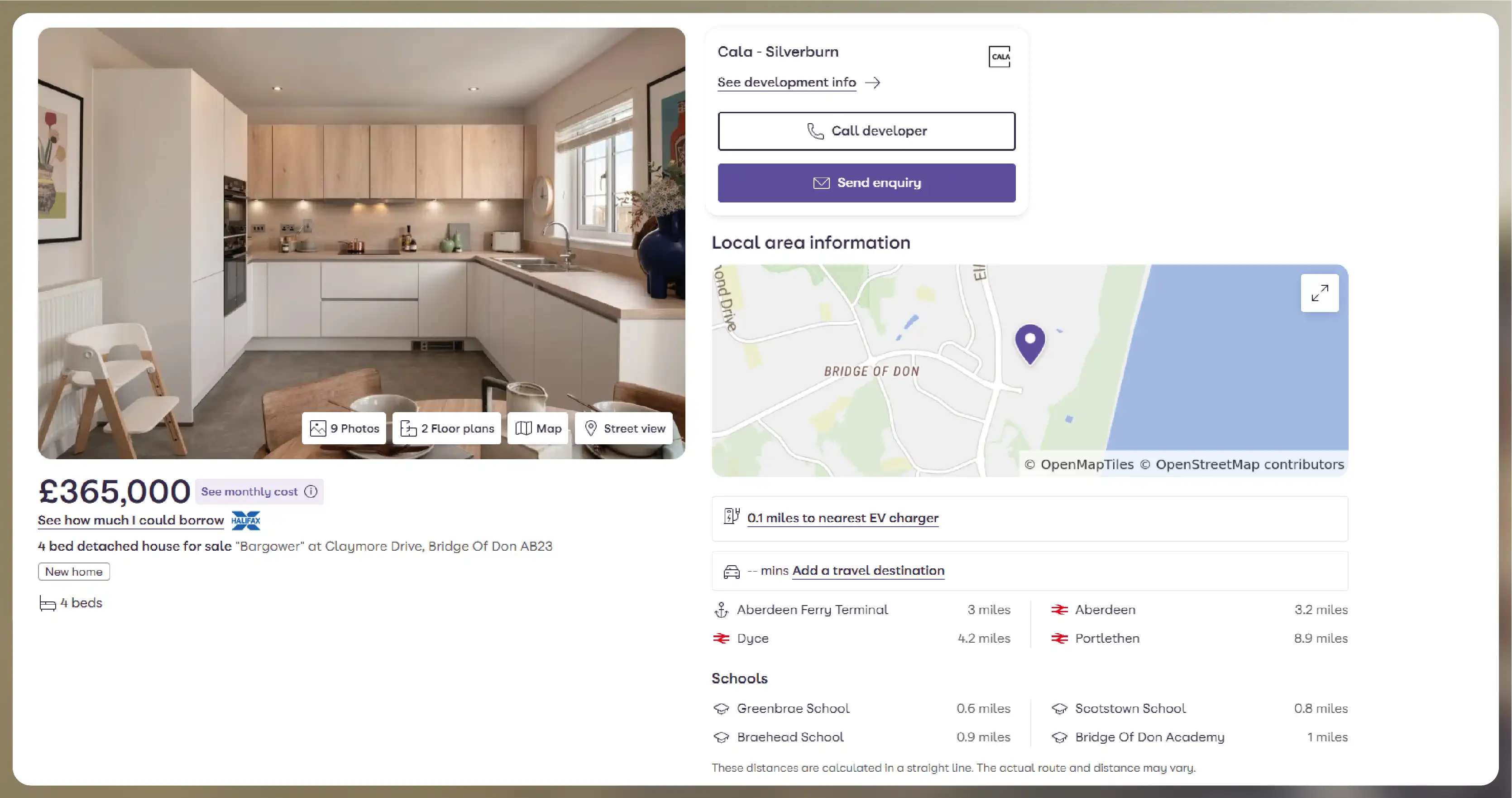

A comprehensive UK property data schema:

Listing ID (portal-specific, plus a unified ID across portals)

UPRN (Unique Property Reference Number — gold standard for canonical property ID)

Address (full + postcode), latitude/longitude

Property type (detached, semi-detached, terraced, flat, bungalow, etc.)

Tenure (freehold, leasehold, share of freehold) + lease length if leasehold

Bedrooms, bathrooms, reception rooms, floor area (sq ft / sq m)

Asking price, price history, offer/sale status

Council tax band, EPC rating, build year

Agent name, agent branch, agent ID

Listing date, first-seen date, last-updated date

Service charge and ground rent (for leasehold)

Chain status (critical for mortgage timing)

Parking, garage, garden, outdoor space

New-build flag, shared ownership flag, Help-to-Buy flag

Floor plan and image URLs

For rentals additionally:

Monthly rent, furnished status, available-from date

Deposit requirements, minimum tenancy length

Utility inclusions, council tax inclusions

Pet policy, smoking policy

Student / professional / family targeting
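As a rough illustration, part of the schema above could be expressed as a typed record. This is a minimal sketch, not our production model: the class name `Listing`, the field names, and the `price_per_sqm` helper are all assumptions made for the example, and only a subset of the fields is shown.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Listing:
    # Identity (field names are illustrative, not a published schema)
    listing_id: str                      # portal-specific ID
    portal: str                          # e.g. "rightmove", "zoopla"
    uprn: Optional[str] = None           # set once address matching succeeds
    # Location
    address: str = ""
    postcode: str = ""
    latitude: Optional[float] = None
    longitude: Optional[float] = None
    # Physical attributes
    property_type: str = ""
    tenure: Optional[str] = None
    lease_years_remaining: Optional[int] = None
    bedrooms: Optional[int] = None
    bathrooms: Optional[int] = None
    floor_area_sqm: Optional[float] = None
    # Pricing and status
    asking_price: Optional[int] = None   # pounds, sale listings
    monthly_rent: Optional[int] = None   # pounds, rental listings
    status: str = "for_sale"
    price_history: list = field(default_factory=list)  # (date, price) tuples
    # Dates
    first_seen: Optional[date] = None
    last_updated: Optional[date] = None

    def price_per_sqm(self) -> Optional[float]:
        """Asking price per square metre, or None if either value is missing."""
        if self.asking_price is None or not self.floor_area_sqm:
            return None
        return self.asking_price / self.floor_area_sqm
```

Making most fields optional reflects how uneven listing data is in practice: floor area, tenure details, and UPRN are often missing at first scrape and filled in later.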

VC-backed UK PropTech startups use comprehensive scraped data to build consumer-facing search engines, investor dashboards, and analytics products. The scraped data is their core value proposition — and their moat against well-funded competitors relying on expensive portal APIs.

Platforms like Habito and Trussle use property data to power affordability calculations, automate loan-to-value assessments, and match customers to mortgage products. Real-time property data accelerates decision times from days to minutes.

BTL-focused platforms (GetGround, Provestor, and others) use scraped rental and sale data to identify high-yield opportunities, calculate net yields post-costs, and rank investment opportunities by expected return.
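The yield arithmetic behind this kind of ranking is simple. The sketch below assumes a hypothetical record layout (`monthly_rent`, `price`, `annual_costs`) and a basic cost model; it does not describe how any named platform calculates returns.

```python
def gross_yield(monthly_rent: float, price: float) -> float:
    """Annual rent as a fraction of purchase price, before any costs."""
    return monthly_rent * 12 / price


def net_yield(monthly_rent: float, price: float,
              annual_costs: float, void_weeks: float = 0.0) -> float:
    """Yield after running costs and expected empty weeks per year."""
    rent_collected = monthly_rent * 12 * (1 - void_weeks / 52)
    return (rent_collected - annual_costs) / price


def rank_by_net_yield(candidates: list) -> list:
    """Sort candidate dicts from highest to lowest net yield."""
    return sorted(
        candidates,
        key=lambda c: net_yield(c["monthly_rent"], c["price"], c["annual_costs"]),
        reverse=True,
    )
```

For example, £1,000 a month on a £200,000 purchase gives a 6% gross yield; with £2,000 of annual costs the net figure is 5%.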

Institutional BTR operators (Grainger, Legal & General, Greystar UK) use scraped rental data to set and update rental pricing across their portfolios, benchmark against competing schemes, and report to LPs.

Progressive UK estate agent chains use competitor scraping to monitor stock turnover, pricing accuracy, and marketing effectiveness of rival firms — informing recruitment, territory expansion, and pricing strategies.

Auction-based platforms and iBuyers (where still operating) use scraped comparable-sales data to price acquisitions and sales accurately.

Some UK lenders have integrated scraped property data into AVM models, enabling instant valuations for fast mortgage decisions — reducing desktop valuation costs and speeding application processing.

Some UK councils and housing associations use scraped data for market intelligence on temporary accommodation costs, affordable housing markets, and rental market analysis.

UK-listed property REITs and institutional funds use scraped data as a supplementary signal alongside traditional sources, tracking regional market trends, price momentum, and competitive positioning.

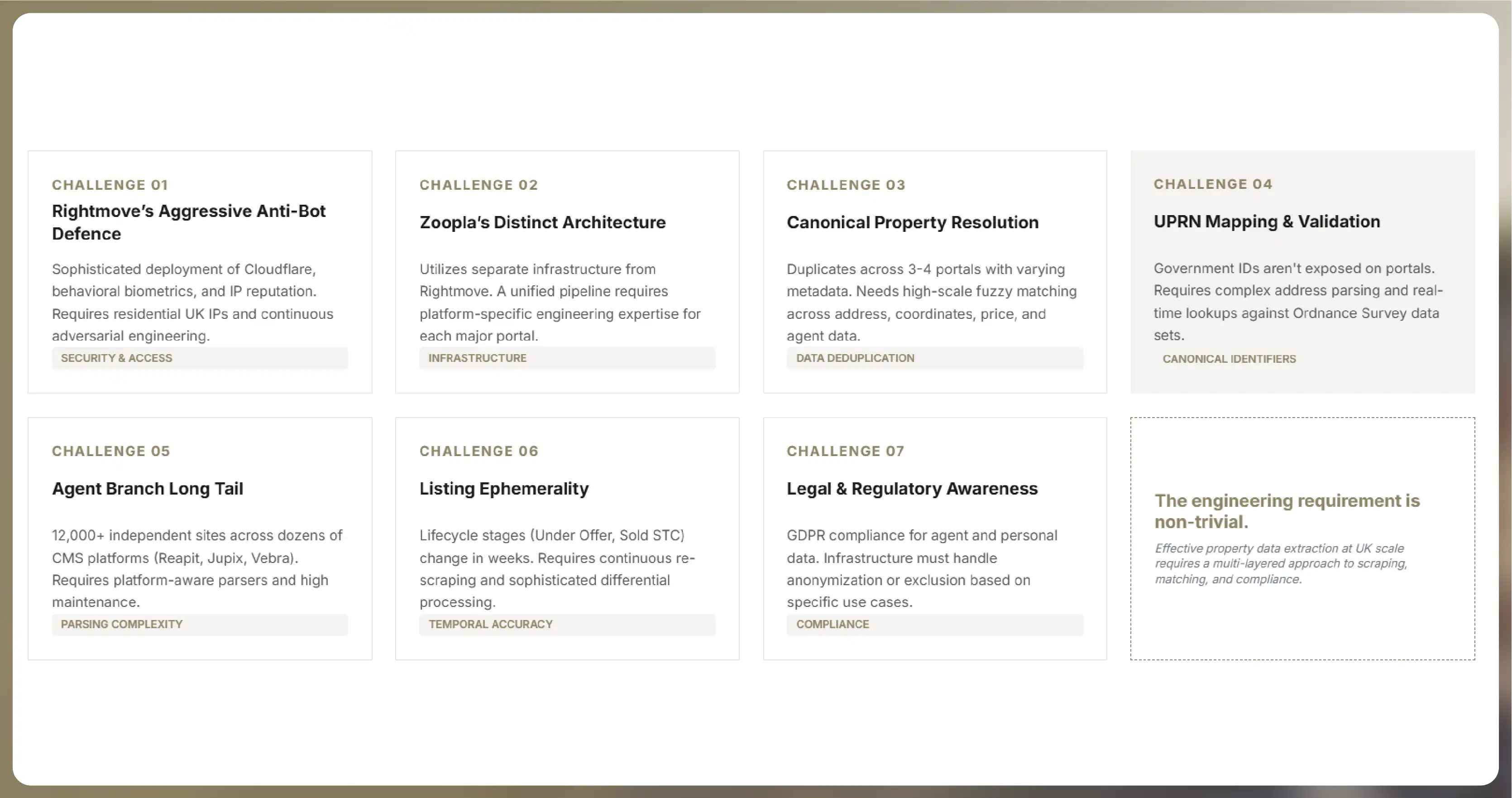

Rightmove deploys sophisticated bot detection — Cloudflare, behavioural biometrics, IP reputation, session anomaly detection. Naive scrapers face instant blocks. Effective scraping requires residential UK IPs, realistic browsing patterns, and continuous adversarial engineering.

Zoopla uses different anti-bot infrastructure than Rightmove, requiring a separate engineering approach. Single-pipeline scraping across both portals needs specialised expertise on each.

The same property is often listed on 3-4 portals with slightly different addresses, descriptions, and photos. Building a unified view requires fuzzy matching on address + coordinates + price + agent — non-trivial at UK scale.
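One way to approach this matching, sketched with the Python standard library only. The thresholds (50 m, 0.8 similarity, 5% price gap) and the record keys (`address`, `lat`, `lon`, `price`) are assumptions for illustration, and agent comparison is left out for brevity.

```python
import math
import re
from difflib import SequenceMatcher


def normalise_address(addr: str) -> str:
    """Lower-case, drop punctuation, collapse whitespace."""
    addr = re.sub(r"[^a-z0-9 ]", " ", addr.lower())
    return " ".join(addr.split())


def house_numbers(addr: str) -> set:
    """Standalone numeric tokens such as '12' or '12a' (postcode parts are skipped)."""
    return set(re.findall(r"\b\d+[a-z]?\b", normalise_address(addr)))


def distance_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle (haversine) distance in metres."""
    r = 6371000.0
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def same_property(a: dict, b: dict, max_dist_m: float = 50.0,
                  min_addr_sim: float = 0.8, max_price_gap: float = 0.05) -> bool:
    """True only if coordinates, house number, address text and price all agree."""
    if distance_m(a["lat"], a["lon"], b["lat"], b["lon"]) > max_dist_m:
        return False
    # Neighbouring houses have near-identical address strings, so the
    # house/flat numbers must match exactly before fuzzy text is trusted.
    if house_numbers(a["address"]) != house_numbers(b["address"]):
        return False
    sim = SequenceMatcher(None, normalise_address(a["address"]),
                          normalise_address(b["address"])).ratio()
    if sim < min_addr_sim:
        return False
    gap = abs(a["price"] - b["price"]) / max(a["price"], b["price"])
    return gap <= max_price_gap
```

The exact house-number check is deliberately conservative: if one portal omits a flat number, the pair is treated as different properties rather than merged by mistake.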

UPRN is the government-blessed canonical property ID, but it’s not exposed on portal listings. Mapping listings to UPRN requires address parsing and lookup against Ordnance Survey data — a specialised capability.
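The parsing half of that mapping can be sketched as follows. The `ADDRESS_INDEX` dictionary is a hypothetical stand-in for a licensed address dataset, the UPRN values in it are made up, and the postcode pattern is a simplified approximation rather than a full validator.

```python
import re
from typing import Optional

# Simplified UK postcode pattern: outward code, optional space, inward code.
POSTCODE_RE = re.compile(r"\b([A-Z]{1,2}\d[A-Z\d]?)\s*(\d[A-Z]{2})\b", re.IGNORECASE)

# Hypothetical lookup table keyed by (postcode, house number); UPRNs are invented.
ADDRESS_INDEX = {
    ("LS1 4AB", "12"): "100000000001",
    ("LS1 4AB", "14"): "100000000002",
}


def extract_postcode(text: str) -> Optional[str]:
    """Return the first postcode found, normalised to 'OUT INW' upper case."""
    m = POSTCODE_RE.search(text)
    if not m:
        return None
    return f"{m.group(1).upper()} {m.group(2).upper()}"


def extract_house_number(text: str) -> Optional[str]:
    """Return a leading house number such as '12' or '12A', else None."""
    m = re.match(r"\s*(\d+[A-Za-z]?)\b", text)
    return m.group(1).upper() if m else None


def lookup_uprn(address: str) -> Optional[str]:
    """Map a free-text address to a UPRN, or None when it cannot be resolved."""
    postcode = extract_postcode(address)
    number = extract_house_number(address)
    if postcode is None or number is None:
        return None
    return ADDRESS_INDEX.get((postcode, number))
```

Addresses that do not start with a house number (flats, named houses) return `None` here. In a real pipeline those would fall through to a fuzzier matching stage instead of being guessed.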

12,000+ independent estate agent websites use dozens of different CMS platforms (Reapit, Agent OS, Jupix, Vebra, etc.). Each has its own HTML structure. Comprehensive coverage requires platform-aware parsers and continuous maintenance.
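A platform-aware parser layer is often organised as a registry that dispatches on a detected platform. The sketch below uses two invented platforms (`platform_a`, `platform_b`) and invented markup; it does not reflect the real HTML of any of the products named above.

```python
import re
from typing import Callable, Dict, Optional

Parser = Callable[[str], dict]
PARSERS: Dict[str, Parser] = {}


def register(platform: str):
    """Decorator that adds a parser function to the registry."""
    def wrap(fn: Parser) -> Parser:
        PARSERS[platform] = fn
        return fn
    return wrap


def parse_price(text: str) -> Optional[int]:
    """Turn a string like '£325,000' into 325000."""
    m = re.search(r"£\s*([\d,]+)", text)
    return int(m.group(1).replace(",", "")) if m else None


@register("platform_a")
def parse_platform_a(html: str) -> dict:
    # Hypothetical markup: price rendered as text inside a span.
    m = re.search(r'<span class="price">(.*?)</span>', html, re.S)
    return {"price": parse_price(m.group(1)) if m else None}


@register("platform_b")
def parse_platform_b(html: str) -> dict:
    # Hypothetical markup: price exposed as a data attribute.
    m = re.search(r'data-price="(\d+)"', html)
    return {"price": int(m.group(1)) if m else None}


def detect_platform(html: str) -> Optional[str]:
    """Read the platform name from a generator meta tag (assumed to exist)."""
    m = re.search(r'<meta name="generator" content="([^"]+)"', html)
    return m.group(1).lower() if m else None


def parse_listing(html: str) -> dict:
    """Detect the platform and delegate to its registered parser."""
    platform = detect_platform(html)
    parser = PARSERS.get(platform)
    if parser is None:
        raise ValueError(f"no parser registered for platform {platform!r}")
    return {"platform": platform, **parser(html)}
```

Raising on an unknown platform, rather than returning an empty record, makes gaps in coverage visible so a new parser can be added.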

Properties move from “For Sale” → “Under Offer” → “Sold STC” → “Sold” over weeks to months. Capturing the full lifecycle requires continuous re-scraping and differential processing that detects what changed between crawls.
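Differential processing can be as simple as comparing two crawl snapshots keyed by listing ID. In this sketch the snapshot layout (`status`, `price`) and the event names are assumptions chosen for illustration.

```python
from typing import Dict, List


def diff_snapshots(previous: Dict[str, dict], current: Dict[str, dict]) -> List[dict]:
    """Compare two crawls and emit one event per detected change."""
    events: List[dict] = []
    for listing_id, now in current.items():
        before = previous.get(listing_id)
        if before is None:
            # Listing was not present in the earlier crawl.
            events.append({"id": listing_id, "event": "new", "status": now["status"]})
            continue
        if before["status"] != now["status"]:
            events.append({"id": listing_id, "event": "status_change",
                           "from": before["status"], "to": now["status"]})
        if before["price"] != now["price"]:
            events.append({"id": listing_id, "event": "price_change",
                           "from": before["price"], "to": now["price"]})
    # Listings that disappeared between crawls (sold, withdrawn, or delisted).
    for listing_id in sorted(previous.keys() - current.keys()):
        events.append({"id": listing_id, "event": "removed"})
    return events
```

Storing these events rather than full snapshots keeps the history compact while still allowing the path from “For Sale” to “Sold” to be replayed for any listing.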

UK data protection law (UK GDPR in particular) requires careful handling of any personal data in listings, such as agent names and contact details. Scraping infrastructure must anonymise, aggregate, or exclude personal data depending on the use case.
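A minimal sketch of the "exclude" option: drop fields known to hold personal data and redact emails and phone numbers from free text. The field names in `PERSONAL_FIELDS` are hypothetical, and the patterns are simplified approximations. This is not legal advice and is not a complete compliance measure.

```python
import re

# Hypothetical field names that carry personal data in a scraped record.
PERSONAL_FIELDS = {"agent_contact_name", "agent_email", "agent_phone"}

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# Approximate UK phone pattern: +44 or a leading 0, then 9-10 more digits
# optionally separated by spaces or hyphens.
PHONE_RE = re.compile(r"(?<!\d)(?:\+44\s?|0)\d(?:[\s-]?\d){8,9}(?!\d)")


def redact_text(text: str) -> str:
    """Replace email addresses and UK-style phone numbers with placeholders."""
    text = EMAIL_RE.sub("[redacted email]", text)
    return PHONE_RE.sub("[redacted phone]", text)


def strip_personal_data(record: dict) -> dict:
    """Return a copy without personal fields and with a redacted description."""
    cleaned = {k: v for k, v in record.items() if k not in PERSONAL_FIELDS}
    if isinstance(cleaned.get("description"), str):
        cleaned["description"] = redact_text(cleaned["description"])
    return cleaned
```

Names that appear inside free-text descriptions are not caught by patterns like these and would need a separate review or entity-detection step.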

Actowiz Solutions operates one of the most comprehensive UK property data scraping platforms — serving PropTech startups, mortgage brokers, institutional investors, BTR operators, estate agent chains, and property funds.

What we deliver:

Our UK property data pipeline covers 1.8M+ active UK property listings with 99%+ data quality and daily refresh.

Scraping publicly visible property listings generally aligns with accepted web scraping practices. UK courts have generally followed US precedent on public data scraping. Each portal’s Terms of Service should be reviewed with legal counsel for your specific use case, and personal data within listings must be handled in line with UK GDPR.

Yes — every property in our dataset is mapped to its UPRN where possible, providing the government-blessed canonical identifier.

We cover all major estate agent chains (Connells, Spicerhaart, Countrywide, Foxtons, Hamptons, Savills, Knight Frank, Strutt & Parker, and many more) plus 12,000+ independent agent websites.

Yes — regulatory data enrichment (Land Registry transactions, EPC ratings, planning applications) is available as an add-on service.

Our pipeline is designed for GDPR compliance, with personal data (agent contacts, named individuals) handled separately from aggregate property data. Specific compliance configurations depend on client use case.

Commercial property scraping (RightBiz, EG, CoStar, Zoopla Commercial) is available as a separate service category.

UK property data engagements start at £3,500/month for focused regional or property-type coverage. Full-market enterprise plans with regulatory data integration are custom-quoted.
