When you manage 200 SKUs, a pricing analyst with a spreadsheet can keep up. At 2,000 SKUs, you need a dedicated team. At 10,000 SKUs and above, manual monitoring is not just impractical: the volume of price changes outruns any team's ability to track them with meaningful accuracy.

Yet this is exactly the scale at which pricing intelligence matters most. Large catalogs contain thousands of micro-opportunities: a competitor raising prices on a niche product, a stockout in a high-margin category, a new entrant undercutting on your fastest-moving items. Each of these events represents revenue either captured or lost, depending entirely on whether you see it in time to respond.

This guide walks through how enterprise e-commerce brands build and operate automated price monitoring systems that track 10,000 or more SKUs across multiple competitors and marketplaces without adding headcount.

Before diving into solutions, it is worth understanding exactly why scale creates such a challenge.

Consider a mid-size e-commerce brand selling 10,000 products. Each product competes against an average of 5 sellers on each of 3 channels (Amazon, Walmart, and competitors' DTC sites). That creates 10,000 × 5 × 3 = 150,000 competitor price points to track.

If each competitor changes prices an average of once per day, you need at least one check per price point per day, which is 150,000 data points collected daily. Over a 30-day month, you are managing 4.5 million data points. Over a year, roughly 54 million.
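The arithmetic behind these figures can be checked directly. The sketch below uses the numbers from the example and assumes one observation per price point per day, a 30-day month, and a 12-month year; the variable names are illustrative.

```python
# Inputs taken from the example above.
skus = 10_000
sellers_per_sku = 5
channels = 3

# One competitor price point per (SKU, seller, channel) combination.
price_points = skus * sellers_per_sku * channels

# Assumption: each price point is observed once per day.
daily = price_points
monthly = daily * 30      # 30-day month
yearly = monthly * 12     # twelve 30-day months

print(f"{price_points:,} price points")
print(f"{monthly:,} data points per month")
print(f"{yearly:,} data points per year")
```

Checking more than once a day, which fast-moving marketplaces may require, multiplies every figure accordingly.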

No spreadsheet handles this. No team of analysts can process it manually. And yet, every one of those data points potentially represents a pricing decision that affects your revenue.

This is why automated price monitoring exists. It converts an impossible manual task into a scalable data pipeline.

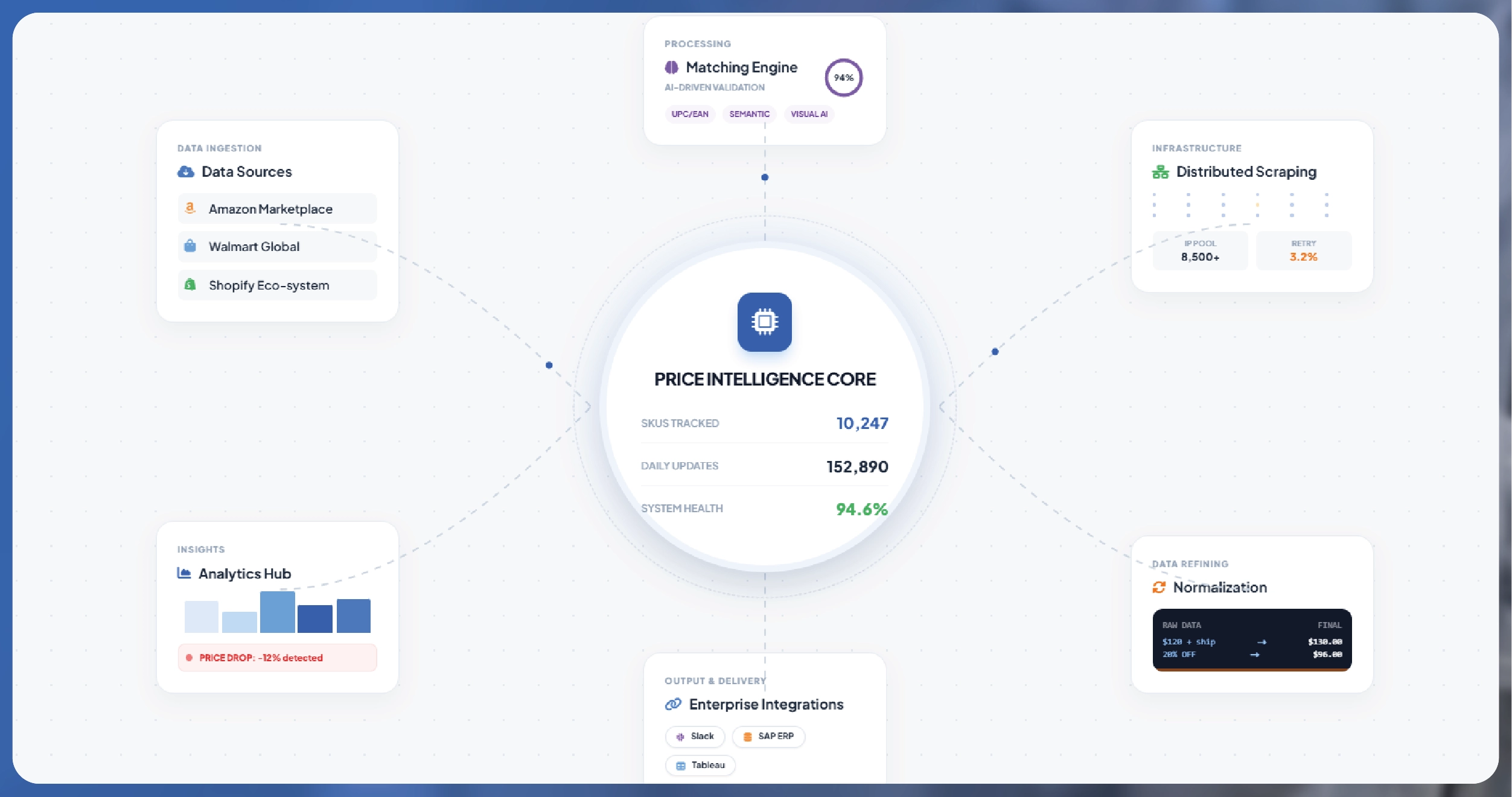

A well-designed price monitoring system for 10,000+ SKUs has five core components. Understanding each one helps you evaluate solutions and avoid common implementation failures.

Product matching is the first and often the most challenging component. Your product catalog needs to be matched to competitor listings across every marketplace. A "Nike Air Max 90 Men's Size 10 White" on your site needs to be correctly mapped to the equivalent listing on Amazon, the competitor's DTC site, and every other channel.

At small scale, this matching is manual. At 10,000+ SKUs, it requires automated matching using UPC/EAN codes, product titles, attribute comparison, and image matching algorithms. A good matching engine achieves 92 to 97 percent accuracy automatically, with the remaining edge cases flagged for human review.
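A minimal sketch of that two-stage idea follows: match on UPC first, then fall back to title similarity, and send borderline scores to human review. The `Listing` type, the thresholds, and the use of `difflib` for similarity are all assumptions for illustration; a production matcher would also compare attributes and images.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import Optional


@dataclass
class Listing:
    listing_id: str
    title: str
    upc: Optional[str] = None


def title_similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity score between two lowercased titles."""
    return SequenceMatcher(None, a.lower().strip(), b.lower().strip()).ratio()


def match_listing(product: Listing, candidates: list,
                  auto_threshold: float = 0.85,
                  review_threshold: float = 0.60):
    """Return (candidate, status); status is 'matched', 'review', or 'unmatched'."""
    # Stage 1: an exact identifier match wins outright.
    if product.upc:
        for candidate in candidates:
            if candidate.upc == product.upc:
                return candidate, "matched"
    if not candidates:
        return None, "unmatched"
    # Stage 2: fall back to the most similar title.
    best = max(candidates, key=lambda c: title_similarity(product.title, c.title))
    score = title_similarity(product.title, best.title)
    if score >= auto_threshold:
        return best, "matched"
    if score >= review_threshold:
        return best, "review"   # flag the edge case for a human
    return None, "unmatched"
```

The thresholds here are placeholders; in practice they would be tuned against a hand-labeled sample of matches.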

Scraping 150,000 URLs daily requires serious infrastructure. A single server sending 150,000 requests would be blocked within hours. Enterprise scraping systems distribute requests across thousands of IP addresses using rotating residential proxies, vary request patterns to mimic human browsing behavior, and implement intelligent retry logic for failed requests.
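The retry part of this can be sketched in a few lines. The example below assumes a caller-supplied `fetch(url, proxy)` function and a non-empty list of proxy identifiers, both hypothetical, and shows one common approach: rotate to the next proxy on each attempt and back off exponentially with random jitter.

```python
import random
import time
from itertools import cycle
from typing import Callable, Sequence


def fetch_with_retry(url: str,
                     fetch: Callable[[str, str], str],
                     proxies: Sequence[str],
                     max_attempts: int = 4,
                     base_delay: float = 1.0,
                     sleep: Callable[[float], None] = time.sleep) -> str:
    """Call fetch(url, proxy) up to max_attempts times.

    Each attempt uses the next proxy in the pool. After a failure the
    wait grows exponentially (base_delay * 2**attempt) plus random jitter.
    """
    if not proxies:
        raise ValueError("proxies must not be empty")
    proxy_pool = cycle(proxies)
    last_error = None
    for attempt in range(max_attempts):
        proxy = next(proxy_pool)
        try:
            return fetch(url, proxy)
        except Exception as exc:  # a real system would catch narrower errors
            last_error = exc
            if attempt < max_attempts - 1:
                delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
                sleep(delay)
    raise RuntimeError(f"giving up on {url} after {max_attempts} attempts") from last_error
```

Passing `sleep` as a parameter keeps the function easy to test without real waiting.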

The scraping layer also handles the technical complexity of different website structures. Amazon product pages have a different DOM structure than Walmart, which differs from Shopify-based DTC sites. The scraping system needs parsers for each source that extract the correct price, availability, and shipping data regardless of layout changes.
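One way to organize per-source parsers is a simple registry keyed by source name. The sketch below uses two made-up sources, `site_a` and `site_b`, with invented markup; it does not reflect the real page structure of any marketplace. Returning `None` when the pattern no longer matches gives the pipeline a signal that a layout has changed.

```python
import re
from typing import Callable, Dict, Optional


def parse_site_a(html: str) -> Optional[float]:
    """Hypothetical layout: <span class="price">$1,299.00</span>."""
    m = re.search(r'<span class="price">\$([\d,]+\.\d{2})</span>', html)
    return float(m.group(1).replace(",", "")) if m else None


def parse_site_b(html: str) -> Optional[float]:
    """Hypothetical layout: an element carrying data-price="49.99"."""
    m = re.search(r'data-price="(\d+(?:\.\d+)?)"', html)
    return float(m.group(1)) if m else None


# Registry mapping each source to its parser.
PARSERS: Dict[str, Callable[[str], Optional[float]]] = {
    "site_a": parse_site_a,
    "site_b": parse_site_b,
}


def extract_price(source: str, html: str) -> Optional[float]:
    """Dispatch to the parser for `source`; None means the layout was not recognized."""
    parser = PARSERS.get(source)
    if parser is None:
        raise KeyError(f"no parser registered for {source!r}")
    return parser(html)
```

Tracking the share of `None` results per source is a cheap way to notice a broken parser before it affects pricing decisions.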

Raw scraped data is inconsistent. Prices may include or exclude tax, shipping costs vary, currencies differ for international monitoring, and promotional prices are presented in multiple formats (percentage off, dollar amount off, strikethrough pricing, coupon clipping).

The normalization pipeline converts all of this into a consistent schema: base price, effective price (after all discounts), shipping cost, total landed cost, currency, and availability status. This normalized data is what makes apples-to-apples comparison possible across tens of thousands of products.
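As a rough illustration, the schema can be modeled as a small record type with the landed cost derived from its parts. The raw field names (`list_price`, `percent_off`, `amount_off`, `shipping`) and the order in which discounts are applied are assumptions for this sketch, not a description of any particular provider's pipeline.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class NormalizedPrice:
    sku: str
    source: str
    currency: str
    base_price: Decimal
    effective_price: Decimal   # after all discounts
    shipping_cost: Decimal
    in_stock: bool

    @property
    def total_landed_cost(self) -> Decimal:
        return self.effective_price + self.shipping_cost


def normalize(raw: dict) -> NormalizedPrice:
    """Map one raw scraped record (hypothetical field names) onto the schema."""
    base = Decimal(str(raw["list_price"]))
    percent_off = Decimal(str(raw.get("percent_off", 0)))
    amount_off = Decimal(str(raw.get("amount_off", 0)))
    # Assumption: the percentage discount applies first, then the fixed amount.
    effective = base * (Decimal(100) - percent_off) / Decimal(100) - amount_off
    return NormalizedPrice(
        sku=raw["sku"],
        source=raw["source"],
        currency=raw.get("currency", "USD"),
        base_price=base,
        effective_price=effective.quantize(Decimal("0.01")),
        shipping_cost=Decimal(str(raw.get("shipping", 0))),
        in_stock=bool(raw.get("in_stock", True)),
    )
```

`Decimal` is used instead of `float` so that prices compare exactly after rounding to cents.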

Data without visibility is useless. The dashboard layer presents pricing intelligence in formats that enable rapid decision-making. At a minimum, this includes competitor price history charts for every SKU, configurable alerts for significant price movements, daily summary reports showing the biggest competitive shifts, margin analysis showing where you are overpriced or underpriced, and category-level trend reporting.

The best dashboards also include anomaly detection — automatically flagging unusual patterns like a competitor suddenly dropping prices across an entire category, which might indicate a clearance event or strategic repositioning.
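A simple version of the category-wide check can be written as a rule rather than a statistical model. The sketch below flags a category when at least half of a competitor's tracked SKUs in it dropped by 5% or more; the function name and all three thresholds are illustrative assumptions.

```python
from collections import defaultdict


def category_drop_alerts(changes, drop_threshold=0.05, share_threshold=0.5, min_skus=3):
    """Flag categories with a broad price drop for one competitor.

    changes: iterable of (category, old_price, new_price) tuples.
    Returns the sorted list of categories where at least `share_threshold`
    of the SKUs fell by `drop_threshold` or more.
    """
    totals = defaultdict(int)
    drops = defaultdict(int)
    for category, old, new in changes:
        if old <= 0:
            continue  # skip records with no usable previous price
        totals[category] += 1
        if (old - new) / old >= drop_threshold:
            drops[category] += 1
    return sorted(
        category for category, count in totals.items()
        if count >= min_skus and drops[category] / count >= share_threshold
    )
```

The `min_skus` guard avoids raising a category alert on the strength of one or two products.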

Price intelligence creates value when it connects to action. The integration layer pushes data to the systems where pricing decisions happen: your repricing tool, your ERP system, your merchandising platform, or your business intelligence stack. Common integrations include API connections to Amazon Seller Central and Walmart Seller Center for automated repricing; Shopify, BigCommerce, or Magento for DTC price updates; Tableau, Looker, or Power BI for executive reporting; and Slack or email for real-time alerts.

Every e-commerce brand with a technical team asks the same question: should we build this in-house or buy from a provider?

| Factor | Build In-House | Professional Provider |
|---|---|---|
| Setup Time | 3-6 months minimum | 2-4 weeks |
| Annual Cost (10K SKUs) | $150K-300K (engineering salaries + infrastructure) | $24K-60K |
| Proxy Infrastructure | Build and maintain yourself | Included |
| Anti-Bot Handling | Constant cat-and-mouse game | Provider's core competency |
| Product Matching | Build ML model from scratch | Pre-built, 95%+ accuracy |
| Maintenance Burden | Ongoing (site changes break scrapers) | Provider handles |
| Scalability | Requires re-architecture at scale | Built for scale from day one |
| Data Quality SLA | No guarantee | Contractual guarantees |

The honest assessment: building in-house makes sense only if price monitoring is a core competitive differentiator in your business model and you have a dedicated engineering team with web scraping experience. For the vast majority of e-commerce brands, partnering with a specialized provider delivers better data quality at a fraction of the cost.

Week 1: Onboarding and catalog mapping

Share your product catalog, identify priority competitors, and define which marketplaces to monitor. The provider maps your products to competitor listings.

Week 2: Initial data collection and validation

First scraping runs execute. Data is validated for accuracy, product matches are verified, and any edge cases are resolved.

Week 3: Dashboard setup and alert configuration

Your team gets access to the analytics dashboard. Configure alerts based on your specific thresholds — for example, notify when any competitor drops price by more than 5% on a top-100 SKU.

Week 4: Integration and automation

Connect the data pipeline to your repricing tools, BI systems, and communication channels. Run initial automated pricing rules on a pilot set of SKUs.

Month 2-3: Optimization

With real data flowing, refine your pricing rules based on actual market response. Expand monitoring to additional competitors and marketplaces. Begin advanced analytics like price elasticity modeling and promotional impact analysis.
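The Week 3 alert example (notify when a competitor drops price by more than 5% on a top-100 SKU) reduces to a small predicate. This is a sketch with hypothetical names; how the top-100 set is chosen, for instance by revenue, is left to the caller.

```python
def should_alert(sku, old_price, new_price, top_skus, threshold=0.05):
    """Return True when a priority SKU's competitor price fell by more than `threshold`."""
    if sku not in top_skus or old_price <= 0:
        return False
    drop = (old_price - new_price) / old_price
    return drop > threshold


# Example: a 6% drop on a priority SKU triggers an alert.
top_100 = {"SKU-001", "SKU-002"}
print(should_alert("SKU-001", 100.0, 94.0, top_100))
```

Keeping the rule as a pure function makes it easy to replay against historical data when tuning the threshold during the optimization phase.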

Track these metrics to evaluate your price monitoring ROI.

| KPI | What It Measures | Target |
|---|---|---|
| Price Competitiveness Index | % of SKUs priced within 3% of market | 85%+ |
| Buy Box Win Rate | % of time you hold the Buy Box | +15–25 pp |
| Margin Per Unit | Average profit after pricing optimization | +3–8 pp |
| Response Time | Time from competitor change to your response | <60 minutes |
| Revenue Per SKU | Average monthly revenue per product | +12–20% |
| Data Coverage Rate | % of competitor prices successfully tracked | 95%+ |
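Two of these KPIs are straightforward to compute from the monitoring data itself. The sketch below assumes "market" means a single reference price per SKU (for example, the median competitor price), which is an interpretation rather than something the table specifies; the function names are illustrative.

```python
def price_competitiveness_index(rows, tolerance=0.03):
    """Share of SKUs priced within `tolerance` of the market price.

    rows: iterable of (your_price, market_price) pairs.
    """
    usable = [(yours, market) for yours, market in rows if market > 0]
    if not usable:
        return 0.0
    within = sum(1 for yours, market in usable
                 if abs(yours - market) / market <= tolerance)
    return within / len(usable)


def data_coverage_rate(tracked, expected):
    """Share of expected competitor price points that were actually collected."""
    return tracked / expected if expected else 0.0
```

With the 150,000 price points from the earlier example, a 95% coverage target means at least 142,500 successful observations per collection cycle.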

Products without standard identifiers require attribute-based matching — comparing titles, descriptions, images, and specifications to find equivalent competitor listings. This is more complex but achievable with modern matching algorithms. Expect slightly lower accuracy (88-93% vs 95-97% with UPC matching) with the remainder handled through manual review.

Enterprise price monitoring systems also support multi-country, multi-currency scraping. Prices are converted to a base currency for comparison, with exchange rates updated daily. This is particularly valuable for brands selling on Amazon EU marketplaces, where sellers from different countries compete on the same listings.
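The conversion step itself is small. The sketch below uses a hard-coded table of made-up rates to USD purely for illustration; in practice the rates would be refreshed daily from an exchange-rate source.

```python
from decimal import Decimal

# Hypothetical rates to the base currency (USD); not real market data.
RATES_TO_USD = {
    "USD": Decimal("1"),
    "EUR": Decimal("1.10"),
    "GBP": Decimal("1.25"),
}


def to_base(amount: Decimal, currency: str, rates=RATES_TO_USD) -> Decimal:
    """Convert `amount` in `currency` to the base currency, rounded to cents."""
    try:
        rate = rates[currency]
    except KeyError:
        raise ValueError(f"no exchange rate for {currency!r}") from None
    return (amount * rate).quantize(Decimal("0.01"))
```

Failing loudly on an unknown currency is deliberate: a silently unconverted price would corrupt every comparison built on it.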

MAP (Minimum Advertised Price) monitoring is a specific application of price scraping. The system tracks authorized and unauthorized resellers, flags any listing below your MAP threshold, and generates violation reports with screenshots and timestamps that your legal team can use for enforcement.
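The core of a MAP check is a comparison of each advertised price against the floor for that SKU. The sketch below assumes listings arrive as dictionaries with `sku`, `seller`, `advertised_price`, and `url` keys, which are hypothetical field names; screenshot capture is out of scope here.

```python
from datetime import datetime, timezone


def find_map_violations(listings, map_prices, authorized_sellers):
    """Return one record per listing advertised below its MAP threshold.

    listings: iterable of dicts with sku, seller, advertised_price, url.
    map_prices: dict mapping sku -> minimum advertised price.
    authorized_sellers: set of seller identifiers.
    """
    checked_at = datetime.now(timezone.utc).isoformat()
    violations = []
    for item in listings:
        floor = map_prices.get(item["sku"])
        if floor is None or item["advertised_price"] >= floor:
            continue  # no MAP defined, or price is compliant
        violations.append({
            **item,
            "map_price": floor,
            "authorized": item["seller"] in authorized_sellers,
            "checked_at": checked_at,  # UTC timestamp for the report
        })
    return violations
```

Recording whether the seller is authorized alongside each violation lets the report separate contract enforcement from unauthorized-reseller cases.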
