Web scraping is often criticized because it can be used maliciously. However, it can also serve legitimate, positive purposes. This blog post debunks common myths surrounding web scraping and shows how the technique can be used for good. Let’s go through the biggest myths people have about web scraping.

There is a common misconception that web scraping is an illegal activity. However, it is important to understand that web scraping is perfectly legal under certain conditions. Here are the facts:

Data Privacy and Terms of Service: It is crucial to comply with data privacy laws and with the Terms of Service (ToS) of the website being scraped. Adhering to the rules and stipulations set by the website reduces the legal risk of your web scraping activities. Collecting personally identifiable information (PII) or password-protected data is generally prohibited.

Public and Anonymized Data: Targeting publicly available web data that is anonymized, and working with data collection networks that comply with regulations such as CCPA (California Consumer Privacy Act) and GDPR (General Data Protection Regulation), is a reliable approach. This helps keep your web scraping activities aligned with privacy standards and legal requirements.

Legal Perspective: In the United States, no federal law explicitly prohibits web scraping, provided the information collected is publicly available and the scraping does not harm the target site. In the European Union and the United Kingdom, scraping is usually assessed under intellectual property rules, such as copyright and database rights, together with data protection law where personal data is involved. Collecting publicly accessible, non-personal data is therefore the lower-risk case, but public availability alone does not guarantee compliance.

It is important to note that while web scraping itself may be legal, both the way it is conducted and the way the collected data is used must be responsible and ethical. Understanding and abiding by relevant laws and regulations is crucial to ensure the legality of your web scraping activities.
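One practical way to act on the "do no harm" point is to read a site's robots.txt before collecting anything. The sketch below is only an illustration: robots.txt is a courtesy signal rather than a legal test, it does not replace reading the Terms of Service, and the file content, URLs, and the `example-bot` user agent are all hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content. A real run would download it from the target site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /account/
Crawl-delay: 5
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a parser that can answer access questions."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def may_fetch(parser: RobotFileParser, url: str, user_agent: str = "example-bot") -> bool:
    """Return True if the rules allow this user agent to request the URL."""
    return parser.can_fetch(user_agent, url)


parser = build_parser(ROBOTS_TXT)
print(may_fetch(parser, "https://example.com/products/1"))        # public page
print(may_fetch(parser, "https://example.com/account/settings"))  # disallowed path
print(parser.crawl_delay("example-bot"))                          # seconds to wait between requests
```

Waiting at least the advertised crawl delay between requests is one simple way to avoid putting load on the target site.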

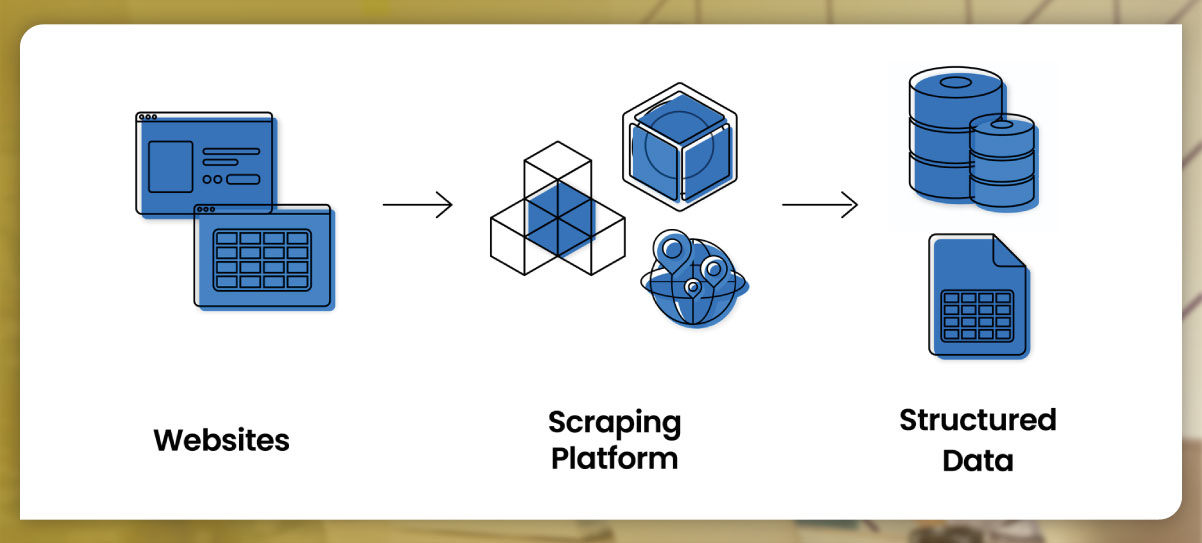

One prevailing myth is that web scraping is exclusively for developers. However, this assumption overlooks the tools and solutions now available that enable professionals without technical backgrounds to harness the power of web scraping. Let's explore the reality:

Technical Skills and Developer Dependency: It is true that specific scraping techniques traditionally require the technical skills that developers possess. These methods involve writing custom code to extract data from websites. However, it is essential to recognize that this is not the only approach to web scraping.

Zero-Code Solutions: Recently, the emergence of zero-code tools has revolutionized web scraping. These solutions automate the scraping process by providing pre-built data scrapers, eliminating the need for coding knowledge. Non-technical professionals can leverage these tools to extract data without dependency on developers.

Web Scraping Templates: Zero-code tools often come equipped with web scraping templates specifically designed for popular websites such as Amazon or Booking.com. These templates are ready-made frameworks that streamline data extraction, making it accessible to business-minded individuals.

Professionals without technical backgrounds can now tap into the benefits of web scraping by using zero-code tools and web scraping templates. These tools let them take control of their data intake and derive valuable insights without extensive coding knowledge or developer expertise.
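For contrast with the zero-code route, here is a rough idea of what the custom-code approach involves. This is a minimal sketch using only Python's standard library; the HTML snippet, the `product`, `name`, and `price` class names, and the `ProductParser` helper are invented for illustration and do not describe any real site or template.

```python
from html.parser import HTMLParser

# Hypothetical page fragment standing in for a downloaded product listing.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Kettle</span><span class="price">24.99</span></li>
  <li class="product"><span class="name">Toaster</span><span class="price">31.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collect name and price text from each <li class="product"> element."""

    def __init__(self):
        super().__init__()
        self.products = []
        self._field = None  # which field the parser is currently inside, if any

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "li" and css_class == "product":
            self.products.append({})
        elif tag == "span" and css_class in ("name", "price") and self.products:
            self._field = css_class

    def handle_data(self, data):
        if self._field:
            self.products[-1][self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None


def extract_products(html: str) -> list:
    parser = ProductParser()
    parser.feed(html)
    return parser.products


print(extract_products(SAMPLE_HTML))
```

Every target site needs its own version of this logic, and the logic breaks whenever the page layout changes, which is the maintenance burden that templates and zero-code tools aim to remove.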

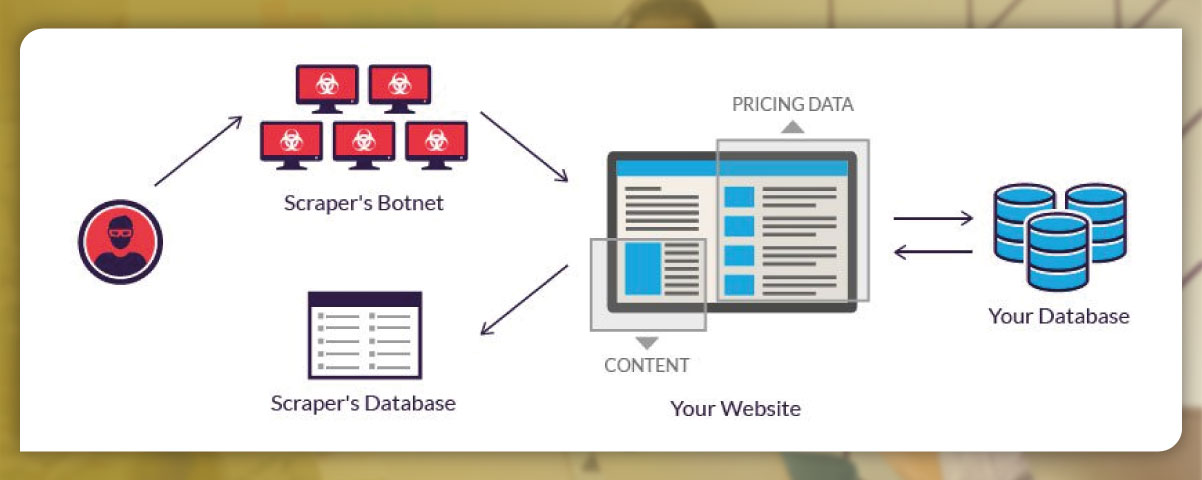

One common misconception is that web scraping is synonymous with hacking. However, this is far from the truth. Let's clarify the distinction:

Hacking: Hacking involves illegal activities to exploit private networks or computer systems. Hackers aim to gain unauthorized access, steal sensitive information, or manipulate systems for personal gain. Hacking is associated with malicious intent and is universally condemned.

Web Scraping: In contrast, web scraping is a practice that revolves around accessing publicly available information from target websites. It is a legitimate method businesses use to gather data for various purposes, such as market research, competitive analysis, or price comparison. Web scraping is carried out within the bounds of legality and ethics, respecting the terms of service and applicable laws.

The primary goal of web scraping is to collect data that is openly accessible to the public, contributing to fairer market competition and enabling businesses to enhance their services. It does not involve malicious activities or intrusions into private networks or systems.

It is essential to differentiate between hacking and web scraping to avoid misconceptions, and to recognize that web scraping is legitimate and beneficial when conducted responsibly and ethically.

It is a common misconception that web scraping is a straightforward and effortless task. However, the reality is quite different. Let's explore why scraping is not as easy as it may seem:

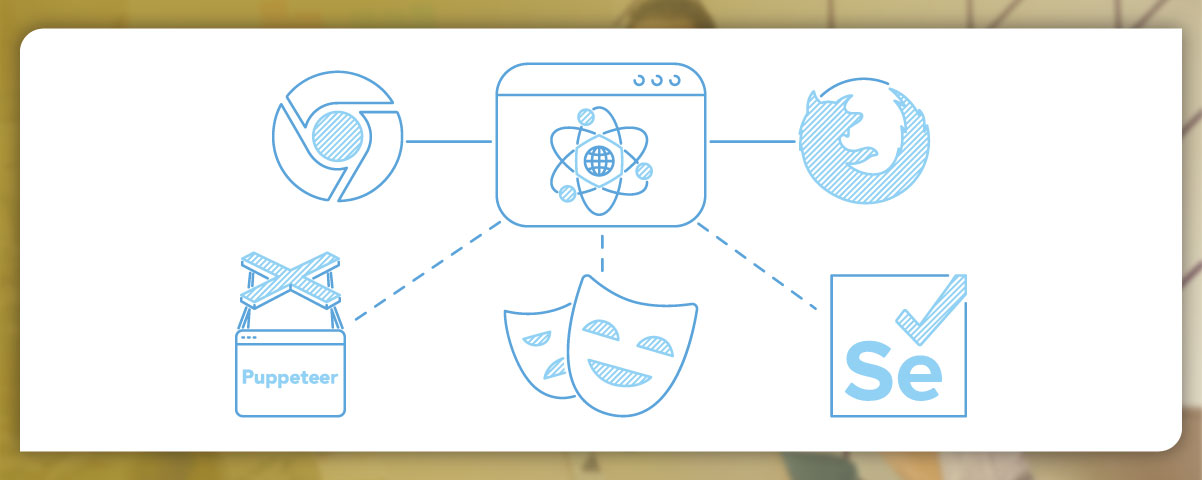

Technical Expertise: Web scraping requires technical knowledge and skills. Whether the stack is built on languages such as Java or PHP or on browser automation tools such as Selenium or PhantomJS, you need a team with the programming expertise to develop and maintain scraping scripts. This technical aspect adds complexity to the process.

Complex Architectures and Blocking Mechanisms: Target websites often have intricate structures and employ various mechanisms to deter or block scraping activities. These measures can include CAPTCHAs, IP blocking, or anti-scraping technologies. Overcoming these hurdles demands in-depth technical understanding and continuous monitoring to adapt scraping techniques accordingly.

Resource-Intensive: Scraping large volumes of data or dealing with complex websites can be resource-intensive. It may require substantial computing power, network bandwidth, and storage capacity to handle the scraping process effectively.

Data Cleaning and Structuring: Raw scraped data often requires cleaning, synthesis, and structuring to make it usable and suitable for analysis. This step involves handling missing or inconsistent data, removing duplicates, and organizing the data in a structured format for further analysis.

Web scraping is far from being an easy task. It demands technical expertise, continuous monitoring and adaptation, and proper data processing. It requires a dedicated team with the necessary skills and resources to navigate the challenges and complexities that arise during the scraping process.
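To make the data cleaning step concrete, the following sketch removes duplicates and drops records with missing or unparseable values. It assumes a hypothetical record shape (a `name` and a `price`, both scraped as text); real pipelines will have their own fields and rules.

```python
def clean_records(records):
    """Return de-duplicated records with a normalised name and a numeric price.

    Assumes each record is a dict whose "name" and "price" values are strings or None.
    """
    seen = set()
    cleaned = []
    for record in records:
        name = (record.get("name") or "").strip()
        price_text = (record.get("price") or "").strip().replace("$", "").replace(",", "")
        if not name or not price_text:
            continue  # drop rows that are missing a required field
        try:
            price = float(price_text)
        except ValueError:
            continue  # drop rows whose price is not a number, such as "n/a"
        key = name.lower()
        if key in seen:
            continue  # drop duplicates, ignoring case and surrounding whitespace
        seen.add(key)
        cleaned.append({"name": name, "price": price})
    return cleaned


raw = [
    {"name": " Kettle ", "price": "$24.99"},
    {"name": "kettle", "price": "24.99"},     # duplicate of the first row
    {"name": "Toaster", "price": None},       # missing price
    {"name": "Blender", "price": "n/a"},      # unparseable price
    {"name": "Mixer", "price": "1,049.00"},
]
print(clean_records(raw))
```

Silently dropping bad rows keeps the example short; in practice it is usually worth logging them so that a change in the target site's layout is noticed quickly.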

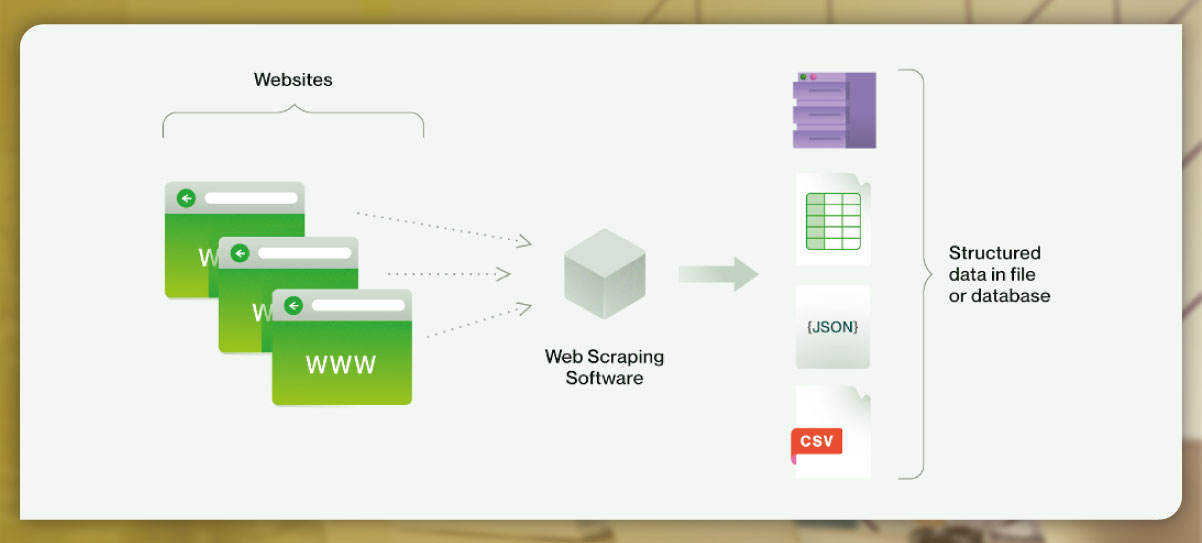

It is a common misconception to assume that once data is collected through web scraping, it is immediately ready for use. However, several considerations and steps are involved in preparing the collected data before it can be effectively utilized. Let's explore these aspects:

Data Format Compatibility: The format in which the target information is captured may not align with the format required by your systems or applications. For instance, if the scraped data is in JSON format, but your systems can only process CSV files, a conversion or transformation process is necessary to make the data compatible.

Structuring and Synthesizing: The collected data may require additional structuring and synthesis to make it coherent and meaningful. This could involve organizing the data into relevant categories, merging data from different sources, or creating relationships between elements.

Data Cleaning: Raw scraped data often contains inconsistencies, errors, or duplicated entries. Data cleaning involves identifying and rectifying such issues, ensuring data integrity and reliability. Removing corrupted or duplicated files is a common task during this phase.

Formatting and Structuring for Analysis: To perform meaningful analysis, the data needs to be formatted and structured in a way that allows for effective processing and interpretation. This includes organizing data into tables or databases, applying appropriate data types, and ensuring consistency across the dataset.

Only after these steps have been carried out and the data has been properly formatted, cleaned, and structured can it be considered ready for analysis and utilization. It is essential to recognize that data preparation is a vital part of the web scraping process, requiring attention and effort to ensure the collected data's accuracy, reliability, and usability.
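The JSON-to-CSV case mentioned above can be sketched with the standard library alone. The field names and sample payload are hypothetical, and the sketch assumes the scraped JSON is a flat list of objects; nested data would need flattening first.

```python
import csv
import io
import json


def json_to_csv(json_text: str, fieldnames: list) -> str:
    """Convert a JSON array of flat objects into CSV text with a fixed column order."""
    rows = json.loads(json_text)
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer,
        fieldnames=fieldnames,
        restval="",             # missing fields become empty cells
        extrasaction="ignore",  # fields not listed in fieldnames are skipped
        lineterminator="\n",
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


scraped = '[{"name": "Kettle", "price": 24.99, "url": "https://example.com/kettle"}, {"name": "Toaster"}]'
print(json_to_csv(scraped, ["name", "price"]))
```

Fixing the column list up front means the output stays stable even when individual records carry extra or missing fields.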

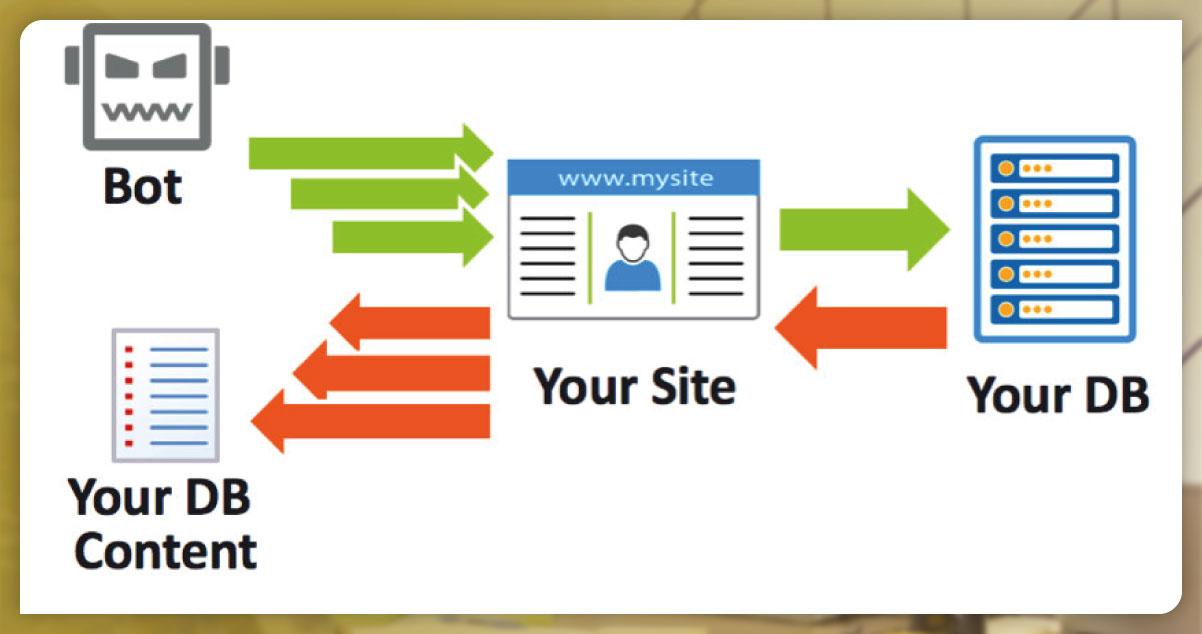

One prevalent myth is the belief that data scraping is a fully automated process carried out by bots with minimal human involvement. However, the reality is quite different. Let's explore the truth:

Manual Oversight: Web scraping often depends on hands-on work by technical teams. They oversee the scraping process, develop and maintain the necessary scripts, and troubleshoot any issues. Human intervention is essential to ensure the accuracy and efficiency of the scraping operation.

Technical Expertise: Data scraping involves technical skills and knowledge to develop scripts, handle complex website structures, and navigate anti-scraping mechanisms. Technical teams with expertise in scripting languages and web technologies are needed to carry out the scraping effectively.

Automation Tools: While manual oversight is typically involved, automation tools can streamline the scraping process. Web Scraper IDE tools provide a user-friendly interface to create scraping workflows and automate certain aspects of data collection. These tools can simplify the process for users with limited technical skills.

Pre-collected Datasets: Alternatively, pre-collected datasets can be purchased for specific needs, eliminating the need for involvement in the complexities of the data scraping process. These datasets are already collected and prepared, allowing users to access the required information without directly engaging in the scraping operation.

Data scraping is not a completely automated process conducted solely by bots. It requires manual oversight, technical expertise, and automation tools or pre-collected datasets. Human involvement is crucial to ensure the accuracy, customization, and successful execution of the scraping process.

It is a misconception to assume that scaling up data scraping operations is easy. Let's examine why this myth is not valid:

Infrastructure and Resources: To scale up in-house data scraping operations, you must invest in additional servers, hardware, and software resources. This requires financial investment and technical expertise to establish and maintain the infrastructure. Scaling operations in-house also entails hiring and training new team members to manage the increased workload.

Cost Considerations: Scaling up data scraping operations can be expensive. Adding new servers and maintaining them, along with other infrastructure costs, can significantly impact a company's budget, and the costs grow with the size of the operation. Upkeep expenses alone can reach an average of $1,500 per month for a single server, which can add up substantially for larger businesses.

Technical Challenges: Scaling up data scraping operations involves building new scrapers and ensuring they can handle the increased volume of data. This requires expertise in scripting languages and web technologies to develop and maintain efficient scraping scripts for each target site. Overcoming technical challenges and ensuring the scalability and reliability of the operation can be complex.
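To see how the server upkeep figure adds up, here is a back-of-the-envelope calculation. It uses the $1,500 per month per server quoted above and assumes, purely for illustration, that cost scales linearly with server count; staffing, software licences, and bandwidth are left out.

```python
# Monthly upkeep per server as quoted in this article. Treat it as a rough average.
MONTHLY_UPKEEP_PER_SERVER_USD = 1_500


def annual_upkeep_usd(server_count: int,
                      monthly_per_server: int = MONTHLY_UPKEEP_PER_SERVER_USD) -> int:
    """Estimate yearly server upkeep under a simple linear cost model."""
    if server_count < 0:
        raise ValueError("server_count must not be negative")
    return server_count * monthly_per_server * 12


for servers in (1, 10, 50):
    print(servers, "server(s):", annual_upkeep_usd(servers), "USD per year")
```

Under these assumptions one server costs 18,000 USD a year and ten servers cost 180,000 USD a year, before any of the excluded costs are counted.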

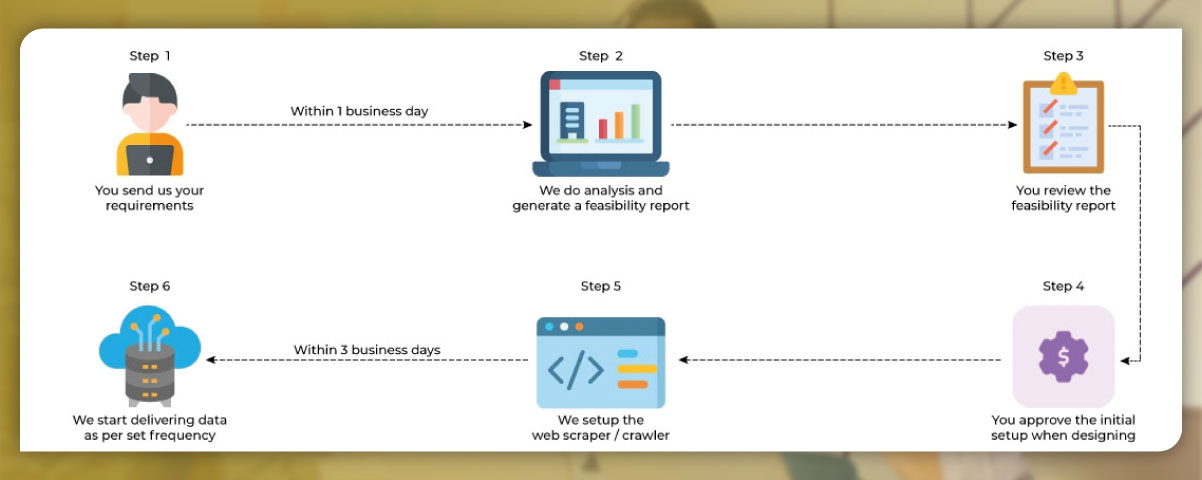

However, by relying on Data as a Service (DaaS) providers, scaling up data scraping operations becomes easier. DaaS providers offer third-party infrastructure and teams dedicated to data collection. They have the resources, technology, and expertise to handle the scaling requirements effectively. DaaS providers often have extensive coverage of constantly changing web domains, providing live maps of the data landscape.

Scaling up data scraping operations is not effortless when managing operations in-house. It involves significant investments in infrastructure, resources, and expertise. Alternatively, relying on DaaS providers can simplify the scaling process by leveraging their existing infrastructure and specialized teams.

Another myth is that web scraping always delivers large amounts of usable data. This is not entirely accurate. While web scraping has the potential to gather large amounts of data, it does not guarantee that all of it will be usable or accurate. Here's why:

Data Quality: Manual data collection methods can sometimes result in inaccurate or illegible data. It is crucial to use tools and systems that incorporate quality validation measures to ensure the accuracy and reliability of the collected data. Validating data through real peer devices and routing traffic appropriately can help establish credibility with target sites and retrieve more accurate datasets for specific geographic regions.

Sample Validation: Using a data collection network that employs quality validation techniques allows for initially retrieving a small data sample. This sample can be validated to ensure its accuracy and suitability before running the complete data collection job. This approach helps save time and resources by focusing on collecting high-quality data rather than a vast quantity of potentially flawed information.

Web scraping can provide valuable data, but the usability and reliability of that data depend on factors such as data quality measures, validation techniques, and the accuracy of the scraping process. Taking steps to ensure data quality and validation can enhance the usefulness of the collected data.
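The sample validation idea can be sketched as a simple gate: check a small sample first and only start the full job if enough of it passes. The validity rule, the 90% threshold, and the record fields below are assumptions chosen for illustration, not a description of any particular data collection network.

```python
def is_valid(record: dict) -> bool:
    """A record counts as valid if it has a non-empty name and a positive numeric price."""
    price = record.get("price")
    return bool(record.get("name")) and isinstance(price, (int, float)) and price > 0


def sample_passes(sample: list, min_valid_ratio: float = 0.9) -> bool:
    """Return True if the share of valid records in the sample meets the threshold."""
    if not sample:
        return False  # an empty sample gives no evidence, so do not proceed
    valid_count = sum(1 for record in sample if is_valid(record))
    return valid_count / len(sample) >= min_valid_ratio


good_sample = [{"name": "Kettle", "price": 24.99}, {"name": "Toaster", "price": 31.5}]
bad_sample = [{"name": "Kettle", "price": 24.99}, {"name": "", "price": None}]

print(sample_passes(good_sample))  # every record is valid
print(sample_passes(bad_sample))   # only half the records are valid
```

A failed sample is a cue to fix the scraper or its configuration before spending time and resources on the full collection run.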

Now that you have a clearer understanding of data scraping, you can approach your future data collection jobs more confidently. You can make informed decisions and leverage web scraping effectively by dispelling misconceptions. Remember these key points:

Legal Practice: Data scraping is generally legal as long as it adheres to the terms of service of target websites and does not involve collecting password-protected or personally identifiable information (PII). Familiarize yourself with the legal boundaries and ensure compliance.

Accessibility: Web scraping is not limited to developers. With zero-code tools and pre-built data scrapers, professionals without technical backgrounds can also harness the power of data scraping. Explore user-friendly solutions that simplify the process.

Distinction from Hacking: Differentiate web scraping from hacking. While hacking involves illegal activities, web scraping is accessing publicly available information for legitimate purposes, such as enhancing business competitiveness and improving consumer services.

Technical Challenges: Recognize that data scraping requires technical skills and resources. Overcoming challenges, such as complex site architectures and changing blocking mechanisms, may necessitate the expertise of a technical team. Be prepared to invest time and effort into addressing technical aspects.

Data Preparation: Understand that collected data may not be ready for immediate use. Properly preparing the data enhances its usability and effectiveness. Consider data formats, cleaning, structuring, and synthesis to ensure compatibility with your systems.

Automation and Scalability: While manual data scraping requires technical oversight, automation tools and data service providers can simplify the process and support scalability. Explore options that fit your needs, whether leveraging automation or relying on third-party infrastructure.

By embracing these facts and dispelling misconceptions, you can approach data collection jobs with a more informed and strategic mindset. Use web scraping to gather valuable insights, enhance decision-making, and drive business success.

For more information, contact Actowiz Solutions now! You can also call us for all your mobile app scraping or web scraping service requirements.
