Combining machine learning with web scraping is an exciting advance in web automation. This article explores three projects that apply AI to web scraping: Auto Product Detail Extraction, Product Mapping, and Browser Fingerprint Generator.

Many startups and businesses today claim to be empowered by AI and work on AI-based projects. However, some of these claims might be driven by internet hype rather than substantial advancements in artificial intelligence. This trend often involves applying "machine learning" labels to products or services, which can create an illusion of superior efficiency, intelligence, and seamlessness.

On closer examination, these AI enthusiasts may struggle to distinguish artificial intelligence from its subfields, such as machine learning and deep learning. They may not understand the nuances within these domains, or even recognize references to figures like Čapek and Asimov, whose work shaped how we think about robots and intelligent machines.

While AI has made remarkable progress in recent years, it is crucial to assess the claims made by businesses and startups critically and to consider the actual capabilities and underlying technologies involved. Genuine AI advances need to be distinguished from cases where the AI label is used merely for marketing. Looking past the buzzwords and flashy taglines makes it easier to tell whether AI is being applied effectively or is just a superficial addition meant to boost marketing appeal.

Although building web robots may seem straightforward, web scraping presents significant technical complexities. Some assume that teaching these robots a few tricks is easy, but the reality is quite different, and combining AI with Robotic Process Automation (RPA) introduces its own set of challenges. At Actowiz Solutions, we take pride in being among the few in the market constantly working to combine these fields, but we recognize how formidable this endeavor is (no pressure intended for the pioneers in the AI Lab).

Web scraping involves:

Navigating dynamic websites.

Handling diverse structures and formats.

Circumventing anti-scraping measures.

Extracting data accurately.

Integrating RPA, which focuses on automating repetitive tasks, with AI, which enables intelligent decision-making and data analysis, requires meticulous planning and implementation. It entails developing advanced algorithms, machine learning models, and techniques for efficient data extraction, processing, and interpretation.

At Actowiz Solutions, we are dedicated to pushing the boundaries of web scraping by harnessing the power of both RPA and AI. Our team actively tackles the technical challenges associated with this integration. We strive to provide our clients with cutting-edge web scraping solutions that leverage automation and intelligent data handling.

While the path may be arduous, we are committed to advancing the combination of RPA and AI in web scraping, delivering innovative capabilities to our customers.

There are numerous ways to improve a machine's ability to scrape the web effectively. We are here to share our knowledge and showcase three web scraping projects that our AI team has been developing: Browser Fingerprint Generator, Product Mapping, and Auto Product Detail Extraction. Let's take a brief look at how to automate an automation process.

1. Product Mapping - Empowering Businesses with Comprehensive Insights

When we think of competitor analysis in the retail industry, the image that comes to mind is probably not a team manually comparing similar products across online catalogs and logging the details into documents. Surprisingly, this is still the reality for many businesses, because humans often perform this task more accurately than the available tools. Our AI Lab at Actowiz Solutions is determined to change that.

Our AI Lab team, led by Kačka and Matěj, is developing a model that determines whether two similar products, such as a laptop on Amazon and a laptop on eBay, are in fact the same item despite minor differences in their listings. To accomplish this, we have built datasets of manually verified pairs of equivalent products from various categories, such as electronics and household supplies. These datasets are used to train the model on the concept of comparison so that it can decide whether products in any category are identical.

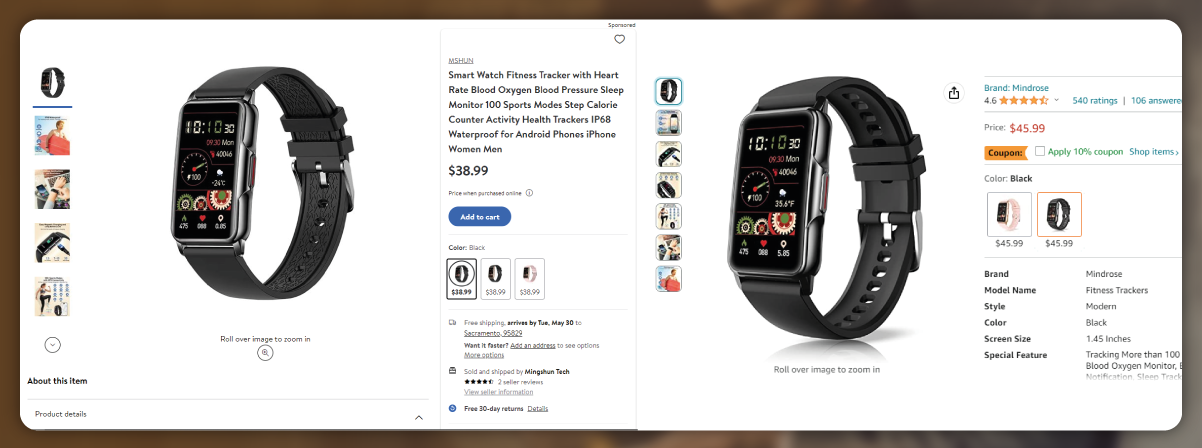

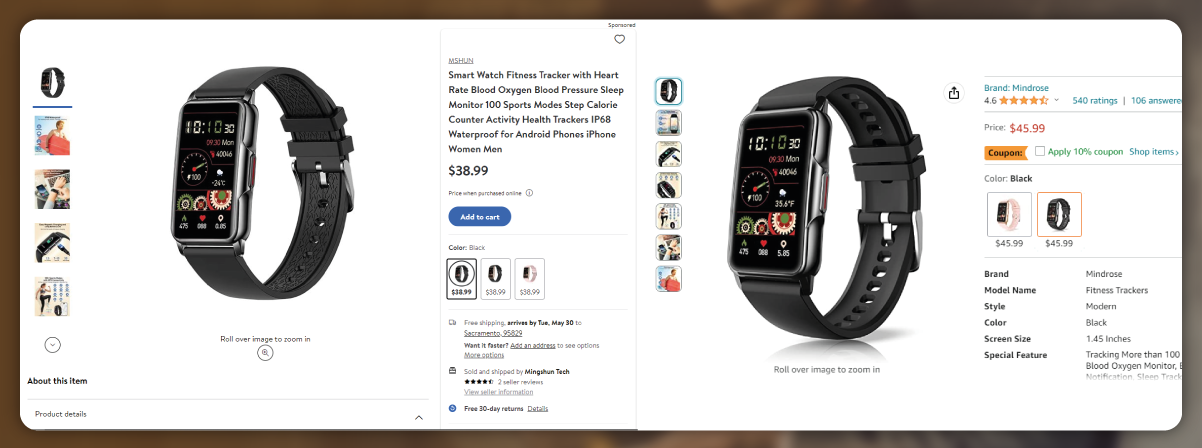

Online catalogs vary in how they represent their products, making it difficult for machines to distinguish between them. Attributes such as names, descriptions, specifications, and visual elements like image size or rotation can influence AI decision-making. Our AI team's task is to train the algorithm to handle these cases effectively, including scenarios with reworded names, missing attributes, or subtle image changes. However, we encounter several challenges in this process.
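To make the attribute problem concrete, here is a minimal Python sketch of how a product pair might be turned into numeric features before classification. It is an illustration under assumptions, not the AI Lab's actual feature set: the record fields (`name`, `description`, `price`) and the functions `tokens`, `jaccard`, and `pair_features` are hypothetical, image features are left out, and only the standard library (`difflib.SequenceMatcher`) is used. Missing attributes get an explicit flag rather than being silently treated as mismatches.

```python
from difflib import SequenceMatcher


def tokens(text: str) -> set:
    """Lowercase alphanumeric tokens; every other character acts as a separator."""
    cleaned = "".join(ch.lower() if ch.isalnum() else " " for ch in text)
    return set(cleaned.split())


def jaccard(x: set, y: set) -> float:
    """Share of tokens the two sets have in common (0.0 when both are empty)."""
    union = x | y
    return len(x & y) / len(union) if union else 0.0


def pair_features(a: dict, b: dict) -> dict:
    """Turn two product records into numeric similarity features.

    Field names ("name", "description", "price") are hypothetical. A missing
    attribute yields a neutral 0.0 plus an explicit *_missing flag, so a
    downstream classifier can learn how far to trust each feature.
    """
    features = {}

    # Character-level similarity tolerates typos and small rewordings.
    name_a, name_b = a.get("name") or "", b.get("name") or ""
    features["name_ratio"] = SequenceMatcher(None, name_a.lower(), name_b.lower()).ratio()
    # Token overlap tolerates reordered words ("Lenovo ThinkPad" vs "ThinkPad Lenovo").
    features["name_jaccard"] = jaccard(tokens(name_a), tokens(name_b))

    desc_a, desc_b = a.get("description"), b.get("description")
    has_desc = bool(desc_a) and bool(desc_b)
    features["description_missing"] = 0.0 if has_desc else 1.0
    features["description_jaccard"] = jaccard(tokens(desc_a), tokens(desc_b)) if has_desc else 0.0

    # Price ratio is 1.0 for identical prices and shrinks as they diverge;
    # missing or non-positive prices are flagged instead.
    price_a, price_b = a.get("price"), b.get("price")
    has_price = price_a is not None and price_b is not None and min(price_a, price_b) > 0
    features["price_missing"] = 0.0 if has_price else 1.0
    features["price_ratio"] = min(price_a, price_b) / max(price_a, price_b) if has_price else 0.0

    return features
```

For example, comparing `{"name": "Lenovo ThinkPad X1 Carbon 14", "price": 1299.0}` with `{"name": "ThinkPad X1 Carbon Lenovo 14 inch", "price": 1249.99}` gives a `name_jaccard` of 5/6 despite the reordered words, and `description_missing` is 1.0 because neither record has a description.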

Developing the Product Mapping project requires significant effort and expertise. Our team is dedicated to overcoming these challenges and ensuring the AI algorithm can accurately analyze and compare products from catalogs. By automating the competitor analysis process, we aim to provide businesses with efficient and accurate insights to drive their decision-making and competitive strategies.

In summary, our AI Lab is actively working on the Product Mapping project, striving to enhance competitor analysis in the retail industry. Despite the complexities involved, we are committed to training the algorithm to tackle the nuances of product comparison across various online catalogs.

In product mapping, an AI model must consider various product attributes and learn to compare them accurately. We have developed multiple models using standard machine learning methods such as random forests, logistic regression, SVM classifiers, linear regression, decision trees, and neural networks. The initial results were promising: even without prior training on the specific dataset, the model could identify some matching pairs. It produced a set of matching and non-matching pairs, and most of the predicted matches were correct. These results are measured by precision and recall, and the balance between the two can be tuned to specific needs, prioritizing either higher certainty or more results.
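The trade-off between higher certainty and more results is usually expressed as precision (the share of predicted matches that are correct) versus recall (the share of true matches that are found). The sketch below assumes a model that outputs a match score per pair and shows how moving the decision threshold shifts that balance. The scores and labels are made-up illustration data, and `precision_recall` and `pick_threshold` are hypothetical helper names.

```python
def precision_recall(scores, labels, threshold):
    """Precision and recall when every pair scoring >= threshold is called a match.

    labels: 1 for a true match, 0 otherwise. If nothing is predicted (or there are
    no true matches), the corresponding value is defined as 1.0 by convention here.
    """
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and not y)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y)
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return precision, recall


def pick_threshold(scores, labels, min_precision):
    """Lowest threshold meeting the precision target, returned as
    (threshold, precision, recall), or None if no threshold qualifies.

    Recall can only grow as the threshold drops, so the lowest qualifying
    threshold keeps as many true matches as the precision target allows.
    """
    best = None
    for t in sorted(set(scores), reverse=True):
        p, r = precision_recall(scores, labels, t)
        if p >= min_precision:
            best = (t, p, r)
    return best


# Made-up model scores for eight candidate pairs, with human labels.
scores = [0.95, 0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2]
labels = [1, 1, 1, 0, 1, 0, 0, 0]

print(pick_threshold(scores, labels, min_precision=0.95))  # (0.8, 1.0, 0.75): high certainty
print(pick_threshold(scores, labels, min_precision=0.8))   # (0.6, 0.8, 1.0): more results
```

With this sample data, demanding 95% precision selects a threshold of 0.8 and misses one true match, while accepting 80% precision lowers the threshold to 0.6 and finds every match at the cost of one false positive.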

Regarding language, we first tested the model on Czech products, which proved beneficial because of the complexity of Czech morphology, conjugation, and declension. As a result, we expect even better results for English. Additionally, the most critical component of the model, the classifier, is language-blind, so it can be applied to other languages and domains as well.
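One way to read the "language-blind" claim is that language-specific work happens in preprocessing, while the classifier only ever sees numeric similarity scores. The minimal sketch below assumes a simple normalization step for Czech text: lowercasing and stripping diacritics via Unicode NFKD decomposition from Python's `unicodedata` module. The function names are hypothetical, and real handling of Czech declension and conjugation would need a stemmer or lemmatizer on top of this.

```python
import unicodedata


def normalize(text: str) -> str:
    """Lowercase, strip diacritics, and collapse whitespace.

    NFKD splits a letter such as 'č' into 'c' plus a combining caron; dropping
    the combining marks leaves plain base letters for Czech text.
    """
    decomposed = unicodedata.normalize("NFKD", text)
    stripped = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return " ".join(stripped.lower().split())


def token_overlap(a: str, b: str) -> float:
    """Language-agnostic feature: Jaccard overlap of normalized tokens."""
    ta, tb = set(normalize(a).split()), set(normalize(b).split())
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


# The classifier only receives numbers like these, whatever the source language.
print(normalize("Černý notebook, 16 GB paměti"))  # cerny notebook, 16 gb pameti
print(token_overlap("Černý notebook Lenovo", "cerny NOTEBOOK lenovo"))  # 1.0
```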

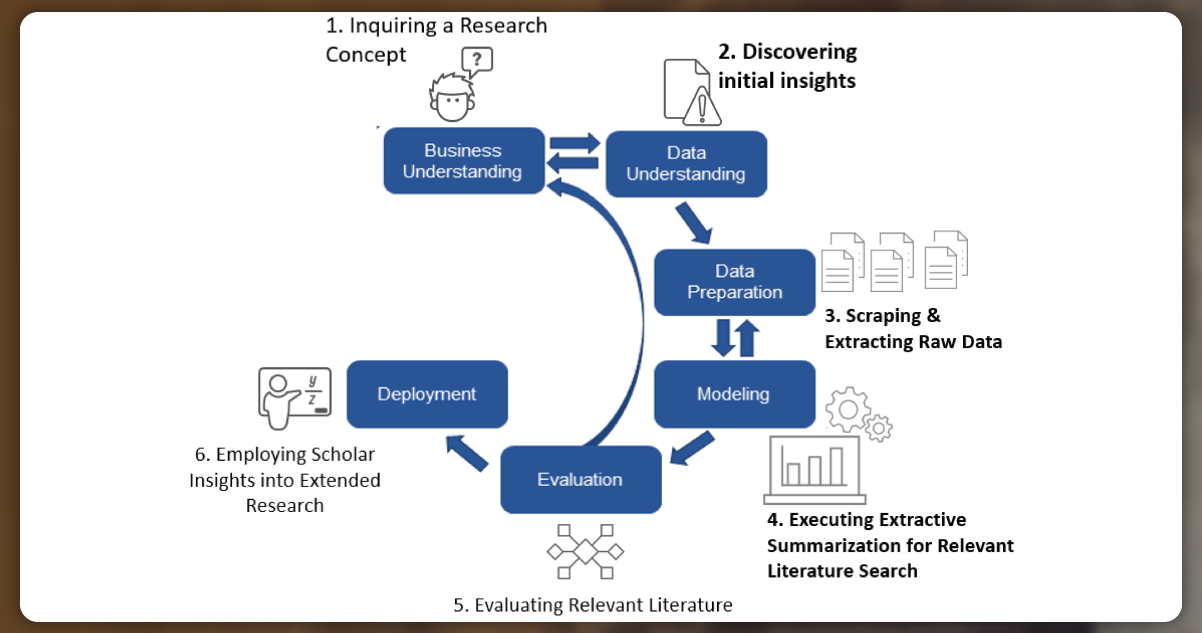

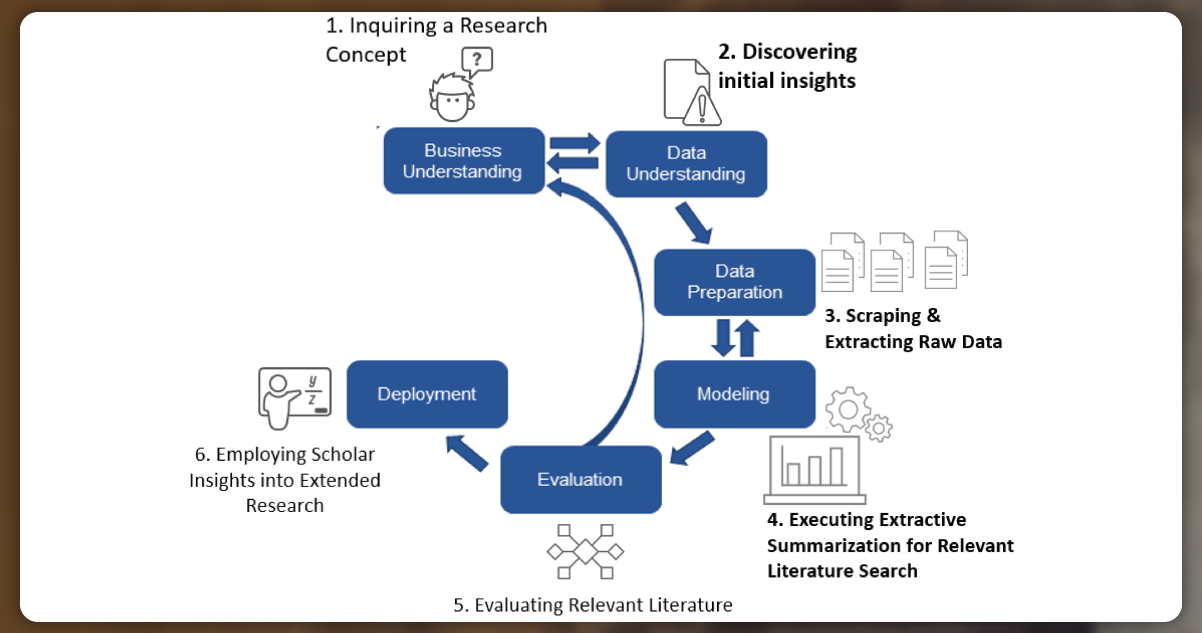

Our ultimate goal is to build a generic AI model that can be adapted to different use cases. Currently, applying the model to a new use case involves five stages:

Checking extracted data and adjusting preprocessing

Annotating a data sample

Fine-tuning a pre-trained model

Estimating performance

Running for data production
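Stages 3 to 5 can be sketched in plain Python, with one loud simplification: "fine-tuning" is reduced here to choosing a decision threshold on an annotated tuning split, whereas the real stage fine-tunes a pre-trained model. Stages 1 and 2 (checking data and annotating a sample) are assumed to have already produced the `(score, is_match)` pairs. What the sketch does show is the ordering that matters: performance is estimated on a held-out split that tuning never saw, and the production run only happens if that estimate meets a target. All function names, the input format, and the 0.9 precision target are assumptions for illustration.

```python
def precision_at(pairs, threshold):
    """Precision of the rule 'score >= threshold means match' on (score, is_match) pairs."""
    predicted = [is_match for score, is_match in pairs if score >= threshold]
    return sum(predicted) / len(predicted) if predicted else 0.0


def tune_threshold(tune, min_precision):
    """Stage 3 stand-in: lowest threshold that meets the target on the tuning split."""
    for t in sorted({score for score, _ in tune}):
        if precision_at(tune, t) >= min_precision:
            return t
    return None


def run_stages(tune, holdout, unlabeled, min_precision=0.9):
    """Stages 3-5 on an annotated sample (stage 2) already split into tune/holdout.

    Returns (estimated_precision, matched_ids). matched_ids stays empty when the
    held-out estimate misses the target, so a weak model never reaches production.
    """
    threshold = tune_threshold(tune, min_precision)
    if threshold is None:
        return 0.0, []
    # Stage 4: estimate on pairs the threshold was not chosen on.
    estimate = precision_at(holdout, threshold)
    if estimate < min_precision:
        return estimate, []
    # Stage 5: production run over new, unlabeled pairs.
    return estimate, [pair_id for pair_id, score in unlabeled if score >= threshold]


tune = [(0.95, True), (0.85, True), (0.6, False), (0.3, False)]
holdout = [(0.9, True), (0.88, True), (0.5, False)]
unlabeled = [("a", 0.97), ("b", 0.4), ("c", 0.86)]
print(run_stages(tune, holdout, unlabeled))  # (1.0, ['a', 'c'])
```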

Each stage offers room for improvement, including better-labeled data, improved data parsing and preprocessing, code optimizations, more advanced classifiers, and possibly rewriting parts of the Python code in C++ for faster execution.

As we gather more data and gain confidence in the system's results, we believe we can create a versatile actor that works reliably. Ideally, deployment would then only require supplying the actor with a pair of datasets, and it would go straight into production.

We aim to create a robust and adaptable AI solution for product mapping by continuously refining the model and incorporating advancements at each stage.

2. Auto Product Detail Scraping - Empowering Web Data Automation Developers

One of the biggest challenges in web scraping and web automation is the constant need for developers to adjust their scrapers whenever a website's layout changes. Identifying the exact changes and modifying the scraper can be frustrating and time-consuming. As web automation developers, we understand the pain of dealing with broken scrapers and their impact on productivity.

Imagine if a program could automatically detect changes in a website's layout, identify the new CSS selectors, and fix the scraper accordingly. Sounds like a dream, right? Well, that's precisely what our AI project, Automated Product Detail Extraction, aims to achieve.

While humans can easily recognize visual cues and understand the significance of layout changes, machines view all data as just data. Teaching a machine to differentiate between different elements on a webpage, such as names, descriptions, and prices, is not a simple task. Jan, who is leading the project, is working on training the machine to identify specific attributes like prices and distinguish them from other elements.
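As a rough illustration of the idea (a rule-based stand-in, not Jan's machine-learning model), the sketch below uses Python's standard `html.parser` to find text nodes that look like prices and reports the path of elements leading to each one as a CSS child-combinator selector. If a stored selector no longer leads to a price after a layout change, `repair_selector` proposes the newly detected path instead. The price regex, the `tag.class` path format, and all names are assumptions; a trained model would rely on far richer signals (position, font size, neighboring labels) than a regular expression.

```python
import re
from html.parser import HTMLParser

# Assumed price formats: currency symbol before the number ("$1,299.00") or a
# currency code/symbol after it ("1 299 Kč", "49,90 €").
PRICE_RE = re.compile(r"^(?:[$€£]\s?\d[\d,.]*|\d[\d,.\s]*\s?(?:€|Kč|USD|EUR))$")
VOID_TAGS = {"area", "br", "col", "embed", "hr", "img", "input", "link", "meta", "source", "wbr"}


class PriceFinder(HTMLParser):
    """Record the tag.class path leading to every price-like text node."""

    def __init__(self):
        super().__init__()
        self.stack = []       # open elements, e.g. ["div.product", "span.price"]
        self.candidates = []  # (path, text) pairs

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:  # void elements never get an end tag
            return
        classes = (dict(attrs).get("class") or "").split()
        self.stack.append(tag + "".join("." + c for c in classes))

    def handle_endtag(self, tag):
        # Pop back to the matching open element; tolerates unclosed children.
        for i in range(len(self.stack) - 1, -1, -1):
            if self.stack[i].split(".")[0] == tag:
                del self.stack[i:]
                break

    def handle_data(self, data):
        text = data.strip()
        if text and PRICE_RE.match(text):
            self.candidates.append((" > ".join(self.stack), text))


def find_price_paths(html):
    finder = PriceFinder()
    finder.feed(html)
    finder.close()
    return finder.candidates


def repair_selector(stored_path, html):
    """Keep the stored path if it still leads to a price, else propose a new one."""
    paths = [path for path, _ in find_price_paths(html)]
    if stored_path in paths:
        return stored_path
    return paths[0] if paths else None


old_page = '<div class="product"><h1>Laptop</h1><span class="price">$1,299.00</span></div>'
new_page = '<div class="product"><h1>Laptop</h1><p class="cost-box"><b>1 299 Kč</b></p><br></div>'
print(repair_selector("div.product > span.price", old_page))  # div.product > span.price
print(repair_selector("div.product > span.price", new_page))  # div.product > p.cost-box > b
```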

Once Jan's program can extract product details automatically, the implications will be profound. It could generate new scrapers or automatically update existing ones, relieving web automation developers from manually tracking down changes and updating selectors. This tool will be a lifesaver for developers and businesses that rely on seamless, uninterrupted web data scraping.

Our ultimate goal is to provide developers with a tool that significantly reduces the time spent on selector detection and manual search, allowing them to focus on more meaningful coding tasks. With Automated Product Detail Extraction, we aim to revolutionize web scraping, making it more efficient, robust, and developer-friendly.

3. Header and Fingerprint Generators - Countering Anti-Scraping Protections

The days of effortlessly building a seamless web scraper are long gone. Today, web scraping is an ongoing arms race, with one side developing sophisticated anti-scraping measures while the other devises clever workarounds to overcome them. Websites have implemented various strategies to differentiate between bot and human visitors, including HTTP request analysis, user behavior analysis, and browser fingerprinting. These measures are understandable, as websites need to protect themselves from potentially disruptive or malicious scraping activities.

One particularly effective anti-bot measure is fingerprinting-based detection. Websites combine many data points, such as device information, IP address, operating system, and browser settings obtained through cookies, into complex formulas. By analyzing user behavior and correlating it with that data, websites can determine with high accuracy whether a visitor is a human or a bot. If a visitor's profile matches known bot fingerprints, the visitor may be identified as a bot and banned or restricted. Simply rotating IP addresses or changing user agents is no longer enough to evade detection, so web scraping techniques must evolve to overcome these challenges.
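To see why rotating user agents alone fails, consider a toy version of one check a site could run: comparing attributes that should agree with each other. The rules below are illustrative assumptions, not any particular site's detection logic. They rely on Chrome reporting `navigator.vendor` as `"Google Inc."` and on Windows and macOS browsers typically reporting `navigator.platform` as `"Win32"` and `"MacIntel"`. The record keys and the function name are hypothetical.

```python
def inconsistencies(fp: dict) -> list:
    """Return the (illustrative) consistency rules a fingerprint record violates.

    fp keys are assumptions: "user_agent" (the User-Agent header) plus values a
    page script could read: "platform" (navigator.platform), "vendor"
    (navigator.vendor) and "max_touch_points" (navigator.maxTouchPoints).
    """
    ua = fp.get("user_agent", "")
    platform = fp.get("platform", "")
    issues = []
    if "Windows" in ua and not platform.startswith("Win"):
        issues.append("Windows user agent, non-Windows platform")
    if "Macintosh" in ua and platform != "MacIntel":
        issues.append("macOS user agent, non-Mac platform")
    if ("Android" in ua or "iPhone" in ua) and fp.get("max_touch_points", 0) == 0:
        issues.append("mobile user agent without touch support")
    if "Chrome/" in ua and fp.get("vendor") != "Google Inc.":
        issues.append("Chrome user agent, non-Google vendor")
    return issues


real = {
    "user_agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
                  "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",
    "platform": "Win32",
    "vendor": "Google Inc.",
    "max_touch_points": 0,
}
# Only the user agent was rotated; the scraper actually runs on Linux.
spoofed = dict(real, platform="Linux x86_64")
print(inconsistencies(real))     # []
print(inconsistencies(spoofed))  # ['Windows user agent, non-Windows platform']
```

A scraper that swaps in a Windows Chrome user agent while running on Linux leaves the platform value unchanged and trips the first rule, which is why realistic fingerprints must keep every attribute consistent with the others.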

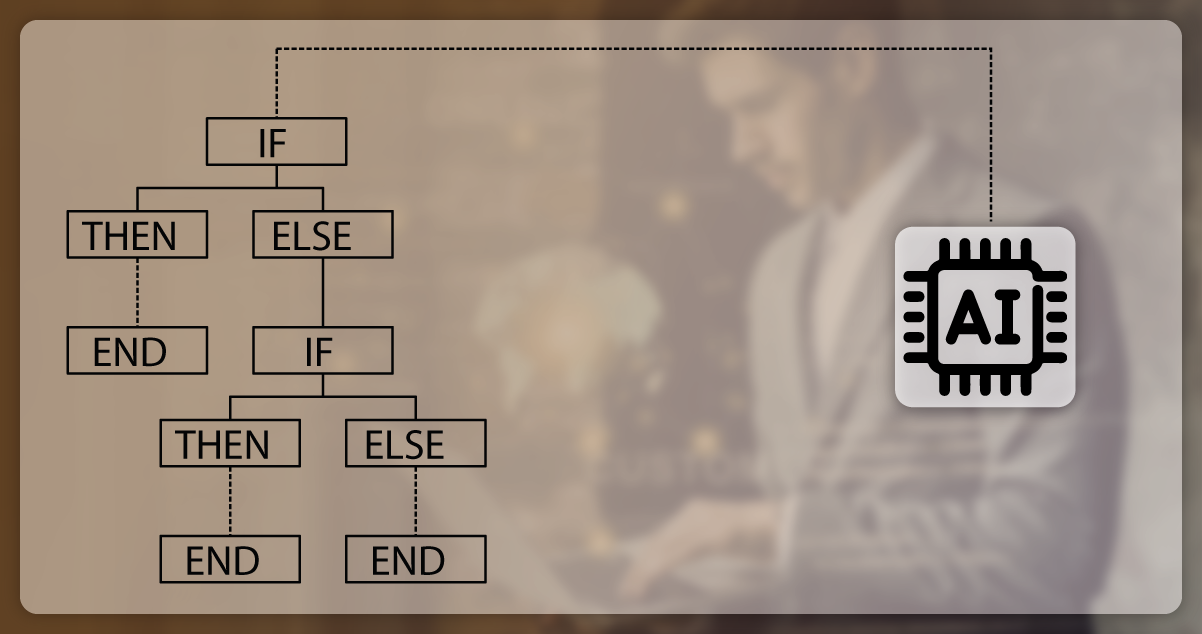

To counter fingerprinting-based detection, capable web scrapers generate realistic browser headers and fingerprints. Building a program that emulates human browser fingerprints means capturing the intricate dependencies found in real headers and fingerprints. This can be done with a dependency model such as a Bayesian network, which uses the captured dependencies to generate fingerprints that closely resemble those of genuine human users.
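A Bayesian network encodes each attribute as a node whose distribution depends on its parents, and new fingerprints are drawn by ancestral sampling: parents first, then children conditioned on them. The minimal sketch below assumes a four-node network (device, then operating system, then browser, plus screen width depending on device) with made-up probability tables. A real generator would learn the structure and tables from captured headers and fingerprints and would cover many more attributes; the table and function names are hypothetical.

```python
import random

# Made-up conditional probability tables. Each child table is keyed by the
# value of its parent node; a real generator would learn these from data.
P_DEVICE = {"desktop": 0.6, "mobile": 0.4}
P_OS_GIVEN_DEVICE = {
    "desktop": {"Windows": 0.7, "macOS": 0.2, "Linux": 0.1},
    "mobile": {"Android": 0.7, "iOS": 0.3},
}
P_BROWSER_GIVEN_OS = {
    "Windows": {"Chrome": 0.7, "Edge": 0.2, "Firefox": 0.1},
    "macOS": {"Chrome": 0.5, "Safari": 0.4, "Firefox": 0.1},
    "Linux": {"Chrome": 0.6, "Firefox": 0.4},
    "Android": {"Chrome": 0.85, "Firefox": 0.15},
    "iOS": {"Safari": 0.9, "Chrome": 0.1},
}
P_WIDTH_GIVEN_DEVICE = {
    "desktop": {1920: 0.5, 1536: 0.3, 1366: 0.2},
    "mobile": {390: 0.4, 412: 0.4, 360: 0.2},
}


def sample(table, rng):
    """Draw one value from a {value: probability} table."""
    values = list(table)
    return rng.choices(values, weights=[table[v] for v in values], k=1)[0]


def generate_fingerprint(rng):
    """Ancestral sampling: parents are drawn first and children conditioned on
    them, so combinations the tables never allow (e.g. Safari on Windows or a
    1920-px-wide phone) cannot be produced."""
    device = sample(P_DEVICE, rng)
    os_name = sample(P_OS_GIVEN_DEVICE[device], rng)
    browser = sample(P_BROWSER_GIVEN_OS[os_name], rng)
    width = sample(P_WIDTH_GIVEN_DEVICE[device], rng)
    return {"device": device, "os": os_name, "browser": browser, "screen_width": width}


rng = random.Random(42)
for _ in range(3):
    print(generate_fingerprint(rng))
```

Sampling from the network rather than picking each attribute independently is what keeps the output both random and internally consistent.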

It is essential to recognize that websites also utilize machine learning algorithms to analyze user behavior and accurately detect and block bots. To outsmart these models, one must decipher the underlying rules and mechanisms they employ. By understanding and adapting to the detection methods employed by websites, scrapers can enhance their ability to bypass anti-scraping measures and achieve successful data extraction.

In practice, our team collects browsing data to train our model to generate plausible combinations of browsers, operating systems, devices, and other attributes used in fingerprinting. This data comes from known "passing" fingerprints, is categorized, and is then fed into an AI model to support its learning process. The goal is for the model to produce fingerprints that are both random and human-like enough to get past anti-scraping measures without being flagged by websites. Monitoring the success rate of each fingerprint and establishing a feedback loop will further improve the model's performance over time.
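The feedback loop can be sketched as a multi-armed bandit: each fingerprint profile is an arm, and each request reports whether it passed. The version below uses Thompson sampling with a Beta posterior per profile, which is one standard choice rather than necessarily the method the team uses. The class name, the profile labels, and the simulated pass rates are hypothetical.

```python
import random
from collections import defaultdict


class FingerprintFeedback:
    """Favor fingerprint profiles that keep passing, while still exploring others.

    Thompson sampling: each profile's pass probability gets a
    Beta(1 + passes, 1 + blocks) posterior; every choice draws one sample per
    profile and picks the highest draw.
    """

    def __init__(self, profiles, seed=None):
        self.profiles = list(profiles)
        self.passes = defaultdict(int)
        self.blocks = defaultdict(int)
        self.rng = random.Random(seed)

    def choose(self):
        return max(
            self.profiles,
            key=lambda p: self.rng.betavariate(1 + self.passes[p], 1 + self.blocks[p]),
        )

    def record(self, profile, passed):
        """Feed back whether a request using this profile got through unblocked."""
        if passed:
            self.passes[profile] += 1
        else:
            self.blocks[profile] += 1

    def success_rate(self, profile):
        """Smoothed observed pass rate; 0.5 for a profile that has never been tried."""
        p, b = self.passes[profile], self.blocks[profile]
        return (p + 1) / (p + b + 2)


# Simulation with made-up true pass rates that the feedback loop does not know.
true_rate = {"chrome-win-desktop": 0.9, "firefox-linux-desktop": 0.3}
feedback = FingerprintFeedback(true_rate, seed=1)
site = random.Random(2)
for _ in range(500):
    profile = feedback.choose()
    feedback.record(profile, site.random() < true_rate[profile])
for profile in true_rate:
    print(profile, round(feedback.success_rate(profile), 2))
```

Because choices are drawn from each profile's posterior, poorly performing profiles are tried less and less often but never abandoned outright, so a profile that starts passing again can recover.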

Producing accurate web fingerprints is a complex task that goes beyond a simple crash course in web scraping. With the advent of anti-bot ML-based algorithms, the battle has evolved into a machine-versus-machine scenario. Nowadays, staying ahead in the data scraping business and achieving successful scraping at scale often requires leveraging such technologies and strategies.

In summary, we have covered three AI-powered web scraping projects: detecting CSS selectors to repair broken scrapers automatically, generating browser fingerprints to counter anti-scraping measures, and product mapping for competitor analysis. We hope this discussion has shed some light on the intricacies of this challenging combination and that, in the inevitable war between machines, they will spare our lives and ultimately preserve humanity. Cheers to that!

Want to know more about how to build practical AI models for web scraping? Contact Actowiz Solutions now! You can also call us for all your mobile app scraping or web scraping service requirements.
