Building an enterprise data extraction infrastructure is complex, but with the right approach it is manageable. Businesses need a clear understanding of how to construct a scalable infrastructure for data extraction.

Tailoring the process to your specific requirements in a sustainable way is essential. However, many organizations struggle to find developers with the necessary expertise, to forecast budgets accurately, or to identify solutions that fit their needs.

This blog provides valuable insights for various data extraction purposes such as lead generation, price intelligence, and market research. It emphasizes the significance of crucial elements, including a scalable architecture, high-performance configurations, crawl efficiency, proxy infrastructure, and automated data quality assurance.

To maximize the value of your data, it is crucial to ensure that your web scraping project is built on a well-crafted and scalable architecture. A robust architecture provides a solid foundation for efficient and effective data extraction.

Establishing a scalable architecture is crucial for the effectiveness of a large-scale web scraping project. At the core of this architecture is the index page, which links to all the other pages requiring data extraction. While working with index pages effectively can be complex, an enterprise data extraction tool can significantly simplify and accelerate the process, saving time and effort in implementing your web scraping project.

In many instances, an index page serves as a gateway to multiple other pages requiring scraping. In e-commerce scenarios, these pages often take the form of category "shelf" pages, which contain links to various product pages.

Similarly, blogs typically offer a feed that links to individual blog posts. To achieve scalable enterprise data extraction, however, it is essential to separate discovery spiders from extraction spiders.

Decoupling the discovery and extraction processes lets you streamline and scale your data extraction efforts. This approach enables efficient management of resources, improved performance, and easier maintenance of your web scraping infrastructure.

In enterprise e-commerce data extraction scenarios, it is beneficial to employ a two-spider approach. One spider, known as the product discovery spider, is responsible for discovering and storing the URLs of products within the target category. The other spider scrapes the desired data from the identified product pages.

This separation of processes allows for a clear distinction between crawling and scraping, enabling more efficient allocation of resources. By dedicating resources to each process individually, bottlenecks can be avoided, and the overall performance of the web scraping operation can be optimized.
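As a rough illustration of this split, here is a minimal Python sketch that uses only the standard library. The `product-link` class name, the `UrlQueue` class, and the `fetch` and `parse` callables are assumptions made for the example; a real deployment would more likely use a persistent queue or database between the two spiders.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags carrying a given CSS class."""

    def __init__(self, marker):
        super().__init__()
        self.marker = marker
        self.links = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        classes = (attrs.get("class") or "").split()
        if tag == "a" and self.marker in classes and attrs.get("href"):
            self.links.append(attrs["href"])


def discover_product_urls(shelf_html, base_url, marker="product-link"):
    """Discovery step: find product URLs on a category (shelf) page."""
    parser = LinkCollector(marker)
    parser.feed(shelf_html)
    return [urljoin(base_url, href) for href in parser.links]


class UrlQueue:
    """In-memory stand-in for the shared store between the two spiders."""

    def __init__(self):
        self._pending = deque()
        self._seen = set()

    def push(self, url):
        if url not in self._seen:  # skip URLs that were already discovered
            self._seen.add(url)
            self._pending.append(url)

    def pop(self):
        return self._pending.popleft() if self._pending else None

    def __len__(self):
        return len(self._pending)


def run_extraction(queue, fetch, parse):
    """Extraction step: drain the queue and parse each product page."""
    records = []
    while (url := queue.pop()) is not None:
        records.append(parse(url, fetch(url)))
    return records
```

Because the two steps only share the queue, each one can be scaled, scheduled, or repaired without touching the other.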

Spider design and crawling efficiency take center stage when aiming to construct a high-output enterprise data extraction infrastructure. Once you have established a scalable architecture in your data extraction project's initial planning phase, the next crucial step is to configure your hardware and spiders for optimal performance.

Speed becomes a critical factor when undertaking enterprise data extraction projects at scale. In many applications, the ability to complete a full scrape within a defined timeframe is of utmost importance. For instance, e-commerce companies use price intelligence data to adjust prices. Thus, their spiders must scrape their competitors' product catalogs within a few hours to enable timely adjustments.
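To make the time constraint concrete, the short Python sketch below estimates the request rate and the number of concurrent requests a crawl would need. The catalog size, time window, and response time are made-up numbers for illustration, and the concurrency estimate relies on Little's law (requests in flight equal throughput multiplied by average response time).

```python
import math


def required_rate(total_pages, window_hours):
    """Requests per second needed to finish the crawl inside the window."""
    return total_pages / (window_hours * 3600)


def concurrency_needed(rate_per_second, avg_response_seconds):
    """Concurrent requests needed to sustain the rate (Little's law)."""
    return math.ceil(rate_per_second * avg_response_seconds)


# Hypothetical workload: 500,000 product pages, 4-hour window, 2 s per response.
rate = required_rate(500_000, 4)
workers = concurrency_needed(rate, 2.0)
print(f"{rate:.1f} requests/s, {workers} concurrent requests")
```

A back-of-the-envelope estimate like this helps you judge early whether your hardware and proxy pool are sized for the job.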

1. Develop a deep understanding of your web scraping software

2. Fine-tune your spiders and hardware to maximize crawling speed

3. Ensure you have the crawling efficiency and hardware needed to extract at scale

4. Make sure you're not wasting your team’s efforts on needless procedures

5. Treat speed as a high priority when setting up configurations

Achieving high-speed performance in an enterprise-level web scraping infrastructure poses significant challenges. To address these challenges, your web scraping team must maximize hardware efficiency and eliminate unnecessary processes to squeeze out every ounce of speed. This involves fine-tuning hardware configurations, optimizing resource utilization, and streamlining the data extraction to minimize time wasted on redundant tasks. By prioritizing efficiency and eliminating bottlenecks, your team can ensure optimal speed and productivity in your web scraping operations.

To achieve optimal speed and efficiency in enterprise web scraping projects, teams must develop a thorough understanding of the web scraping software and the framework they are using.

Maintaining crawling efficiency and robustness is essential when scaling an enterprise data extraction project. The objective should be to extract the required data accurately and reliably while minimizing the number of requests made.

Every additional request or unnecessary data extraction can significantly impact the crawling speed. Therefore, the focus should be on extracting the precise data in the fewest requests possible.

It is also essential to acknowledge the challenges posed by websites with sloppy code and constantly evolving structures. These factors require adaptability and continuous monitoring to ensure the web scraping process remains effective and efficient. Regular updates and adjustments to the scraping techniques are necessary to handle the dynamic nature of websites and maintain the desired level of crawling efficiency.

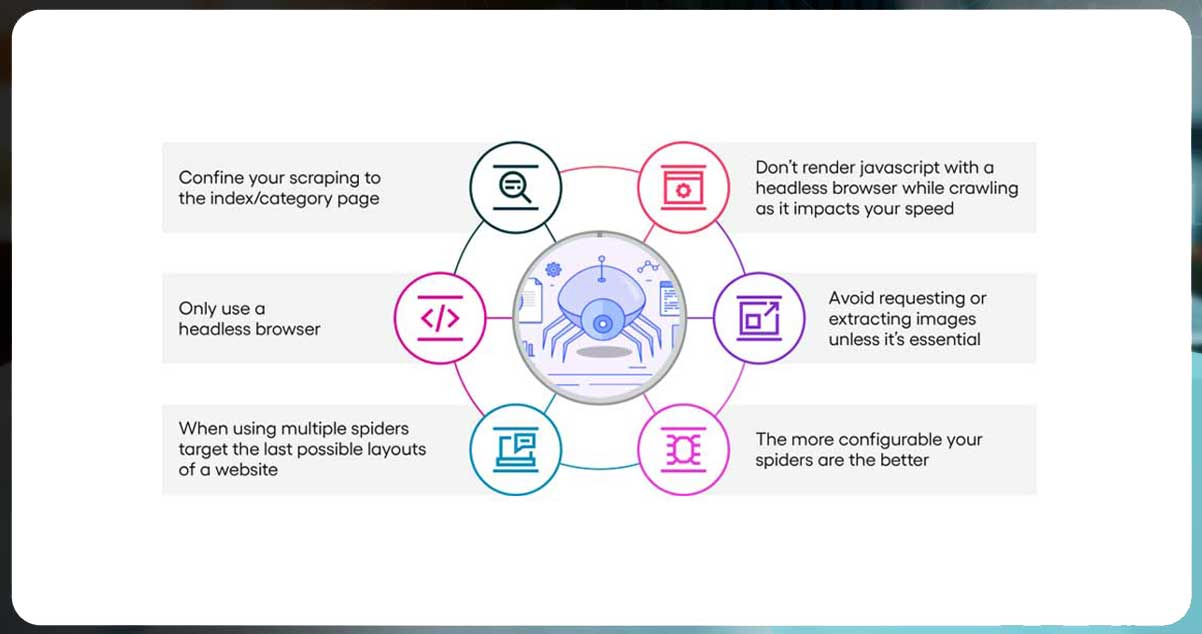

It's important to anticipate that the target website may undergo changes impacting your spider's data extraction coverage or quality every 2-3 months. To handle this, it is recommended to follow best practices and employ a single product extraction spider that can adapt to various page layouts and website rules.

Rather than creating multiple spiders for each possible layout, having a highly configurable spider is advantageous. This allows for flexibility in accommodating different page structures and ensures that the spider can adjust to website layout changes without requiring significant modifications.

By focusing on configurability and adaptability, your spider can effectively handle various page layouts and continue to extract data accurately, even as the website evolves.
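One way to picture a configurable spider is to keep the extraction rules in data rather than in code. The sketch below is a simplified assumption of how that could look: `SITE_CONFIG`, its field names, and the regular expressions are all hypothetical, and a production spider would normally use a proper HTML parser with CSS or XPath selectors instead of regexes.

```python
import re

# Hypothetical configuration: each field lists patterns to try in order,
# so supporting a new layout means adding a pattern, not writing a new spider.
SITE_CONFIG = {
    "name": [
        r'<h1 class="title">(.*?)</h1>',
        r'<h1 itemprop="name">(.*?)</h1>',
    ],
    "price": [
        r'<span class="price">\$?([\d.]+)</span>',
        r'data-price="([\d.]+)"',
    ],
}


def extract_fields(html, config):
    """Return the first match per field, or None when no pattern matches."""
    record = {}
    for field, patterns in config.items():
        record[field] = None
        for pattern in patterns:
            match = re.search(pattern, html, re.S)
            if match:
                record[field] = match.group(1).strip()
                break
    return record
```

When a layout changes, a `None` value flags the affected field, and the fix is usually a new entry in the configuration.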

To optimize crawling speed and resource utilization in web scraping projects, consider the following best practices:

Use A Headless Browser Sparingly: Deploy serverless functions with headless browsers like Splash or Puppeteer only when necessary. Rendering JavaScript with a headless browser during crawling consumes significant resources and can slow down the crawling process. It is recommended to use headless browsers as a last resort.

Minimize Image Requests And Extraction: Avoid requesting or extracting images unless they are essential for your data extraction needs. Extracting images can be resource-intensive and may impact crawling speed. Focus on extracting the required textual data and prioritize efficiency.

Confine Scraping To Index/Category Pages: Whenever possible, extract data from the index or category page rather than requesting each item page. For example, in product data scraping, if the necessary information (product names, prices, ratings, etc.) can be obtained from the shelf page, avoid making additional requests to individual product pages.

Consider Fallback Options: In cases where the engineering team cannot immediately fix broken spiders, having a fallback solution can be beneficial. Actowiz Solutions, for instance, utilizes a machine learning-based data extraction tool that automatically identifies target fields on the website and returns the desired results. This allows for continued data extraction while the spiders are being repaired.

Implementing these practices can enhance crawling efficiency, reduce resource consumption, and ensure a more reliable and streamlined web scraping process.
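The advice to stay on index or category pages can be sketched as a simple planning step: take whatever the shelf page already provides and request a product page only when a required field is missing. The field names and the `plan_requests` helper below are assumptions made for this example.

```python
# Hypothetical set of fields the project needs for every product.
REQUIRED_FIELDS = ("name", "price", "rating")


def plan_requests(shelf_items, required=REQUIRED_FIELDS):
    """Split shelf-page items into complete records and URLs needing a follow-up."""
    complete, follow_up = [], []
    for item in shelf_items:
        missing = [field for field in required if item.get(field) in (None, "")]
        if missing:
            follow_up.append(item["url"])  # only these cost an extra request
        else:
            complete.append(item)
    return complete, follow_up
```

On a shelf page where most items already carry name, price, and rating, this keeps the number of extra requests close to zero.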

To ensure reliable and scalable web scraping at an enterprise level, it is essential to establish a robust proxy management infrastructure. Proxies are crucial in enabling location-specific data targeting and maintaining high scraping efficiency.

A well-designed proxy management system is necessary to avoid common challenges associated with proxy usage and to optimize the scraping process.

To achieve effective and scalable enterprise data extraction, it is crucial to have a comprehensive proxy management strategy in place. This includes employing a large proxy pool and implementing various techniques to ensure optimal proxy usage. Critical considerations for successful proxy management include:

1. Extensive proxy list: Maintain a diverse and extensive list of proxies from reputable providers. This ensures a wide range of IP addresses, increasing the chances of successful data extraction without being detected as a bot.

2. IP rotation and request throttling: Implement IP rotation to switch between proxies for each request. This helps prevent detection and blocking by websites that impose restrictions based on IP addresses. Additionally, consider implementing request throttling to control the frequency and volume of requests, mimicking human-like behavior.

3. Session management: Manage sessions effectively by maintaining state information, such as cookies, between requests. This ensures continuity and consistency while scraping a website, enhancing reliability and reducing the risk of being detected as a bot.

4. Blacklisting prevention: Develop mechanisms to detect and avoid blacklisting by monitoring proxy health and response patterns. If a proxy becomes unreliable or gets blacklisted, remove it from the rotation and replace it with a functional one.

5. Anti-bot countermeasures: Design your spider to overcome anti-bot countermeasures without relying on heavy headless browsers like Splash or Puppeteer. While capable of rendering JavaScript, these browsers can significantly impact scraping speed and resource consumption. Explore alternative methods such as analyzing network requests, intercepting API calls, or parsing dynamic content to extract data without needing a headless browser.

By implementing a robust proxy management system and optimizing your spider's behavior to handle anti-bot measures, you can ensure efficient and scalable enterprise data extraction while minimizing the risk of being detected or blocked.
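The rotation, throttling, and blacklisting points above can be sketched in a few lines of Python. The `ProxyPool` class, its parameter names, and the failure threshold are assumptions for illustration only; a commercial proxy manager will handle far more cases, such as geotargeting and session stickiness.

```python
import time


class ProxyPool:
    """Round-robin proxy rotation with per-proxy throttling and health tracking."""

    def __init__(self, proxies, max_failures=3, min_delay=1.0, clock=time.monotonic):
        self._proxies = list(proxies)
        self._failures = {proxy: 0 for proxy in self._proxies}
        self._last_used = {}
        self._max_failures = max_failures
        self._min_delay = min_delay  # minimum seconds between uses of one proxy
        self._clock = clock
        self._index = 0

    def active(self):
        """Proxies that have not reached the consecutive-failure limit."""
        return [p for p in self._proxies if self._failures[p] < self._max_failures]

    def next_proxy(self):
        """Return (proxy, seconds_to_wait) for the next request."""
        candidates = self.active()
        if not candidates:
            raise RuntimeError("no healthy proxies left in the pool")
        proxy = candidates[self._index % len(candidates)]
        self._index += 1
        now = self._clock()
        last = self._last_used.get(proxy)
        wait = 0.0 if last is None else max(0.0, self._min_delay - (now - last))
        self._last_used[proxy] = now + wait
        return proxy, wait

    def report(self, proxy, ok):
        """Record the outcome of a request; a success resets the failure count."""
        if ok:
            self._failures[proxy] = 0
        else:
            self._failures[proxy] += 1
```

The caller is expected to sleep for the returned wait time before sending the request, and to call `report` afterwards so that blocked or failing proxies drop out of the rotation.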

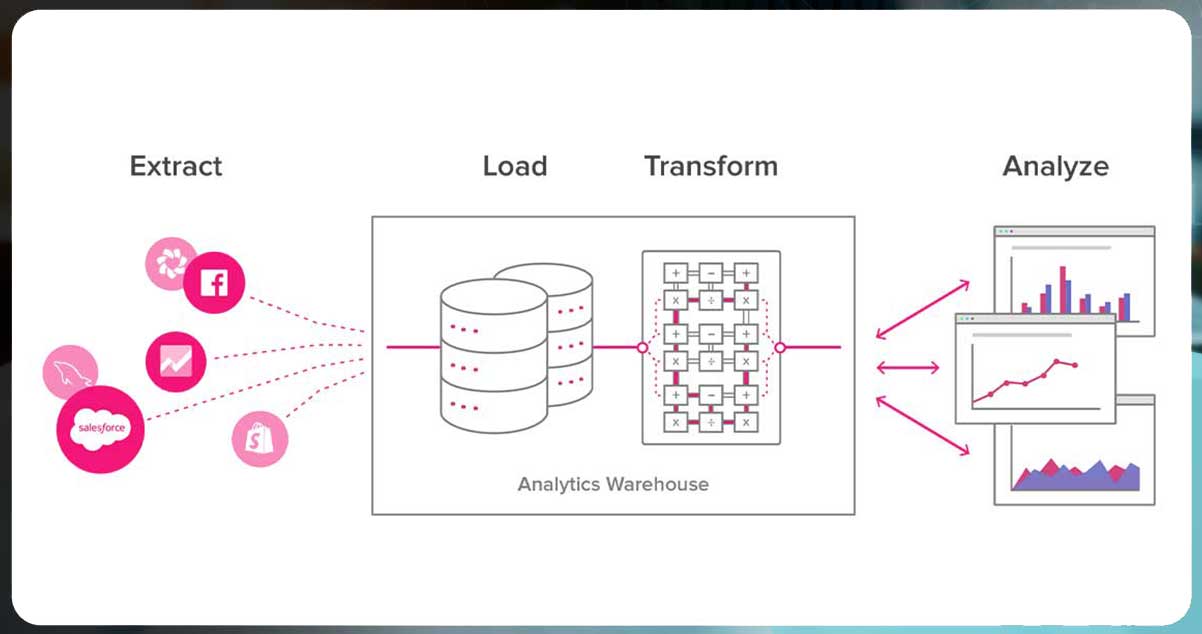

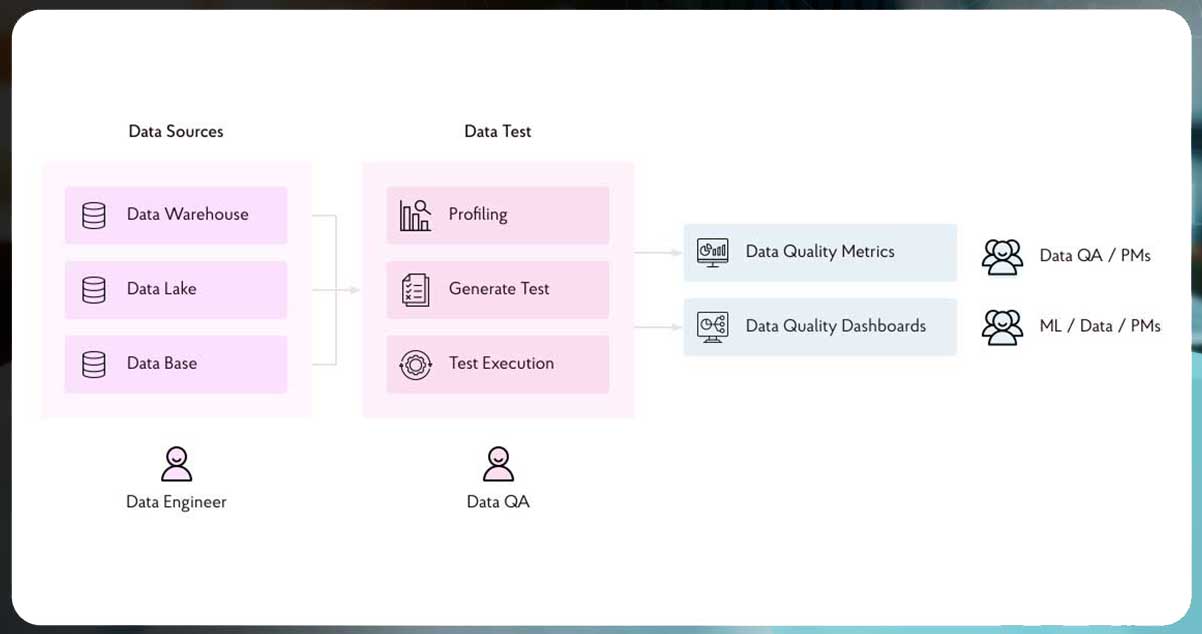

Automated data quality assurance is crucial to any enterprise data extraction project. The reliability and accuracy of the extracted data directly determine the project's value and effectiveness, yet quality assurance is often overlooked in favor of building spiders and managing proxies.

To ensure high-quality data for enterprise data extraction, it is essential to implement a robust automated data quality assurance system.

By automating the data quality assurance process, you can effectively validate and monitor the reliability and accuracy of the extracted data. This is particularly crucial when dealing with large-scale web scraping projects that involve millions of records per day, as manual validation becomes impractical.
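A minimal sketch of such automated checks might look like the following. The validation rules, field names, and the 5% error-rate threshold are assumptions chosen for the example; real projects typically add schema validation, comparisons against previous crawls, and alerting.

```python
def validate_record(record):
    """Return a list of problems found in one scraped product record."""
    problems = []
    if not str(record.get("name") or "").strip():
        problems.append("missing name")
    price = record.get("price")
    if not isinstance(price, (int, float)) or isinstance(price, bool) or price <= 0:
        problems.append("invalid price")
    if not str(record.get("url") or "").startswith(("http://", "https://")):
        problems.append("invalid url")
    return problems


def quality_report(records, max_error_rate=0.05):
    """Summarize validation results and flag batches above the error threshold."""
    failures = {}
    for index, record in enumerate(records):
        problems = validate_record(record)
        if problems:
            failures[index] = problems
    error_rate = len(failures) / len(records) if records else 0.0
    return {
        "total": len(records),
        "failed": len(failures),
        "error_rate": error_rate,
        "passed": error_rate <= max_error_rate,
        "failures": failures,
    }
```

Running a report like this after every crawl makes a sudden jump in the error rate visible, which is often the first sign that a target site has changed its layout.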

To establish a successful enterprise data extraction infrastructure, it is essential to comprehend your data requirements and design an architecture that caters to those needs. Consider crawl efficiency throughout the development process.

Once all the necessary elements, including high-quality data extraction automation, are in place, analyzing reliable and valuable data becomes seamless. This instills confidence in your organization's ability to handle such projects without concerns.

Now that you have gained valuable insights into the best practices and procedures for ensuring enterprise data quality through web scraping, it is time to build your enterprise web scraping infrastructure. Our team of expert developers is available to assist, making the process smooth and manageable.

Contact us today to discover how we can effectively support you in managing these processes and achieving your data extraction goals. You can also call us for all your mobile app scraping or web data collection service requirements.
