Introduction

In the digital age, e-commerce platforms have become our go-to destinations for shopping, offering a vast array of products at our fingertips. Whether you're a savvy shopper, a market researcher, or a business owner looking to gain a competitive edge, extracting price and item data from e-commerce websites can provide valuable insights. In this blog, we will explore how you can scrape data from popular e-commerce platforms like Amazon, Flipkart, Myntra, Ajio, and Tata Cliq, starting from a product URL that the user enters in a text box.

Why Scrape E-Commerce Data?

Here are the main reasons to scrape e-commerce data, each with the value it delivers and an example use case:

Price Comparison

- Consumer Perspective: Price is a critical factor for consumers when making purchasing decisions. E-commerce data scraping enables consumers to compare prices for the same product across multiple online retailers.

- Value: It helps consumers find the best deals, potentially saving them money on their purchases.

- Example Use Case: Imagine you're looking to buy a smartphone. By scraping data from various e-commerce sites, you can quickly compare prices, discounts, and shipping options to make an informed choice.

Market Research

- Business Perspective: For businesses, understanding the market is fundamental to success. E-commerce data scraping provides a wealth of information on product trends, customer preferences, and market dynamics.

- Value: It allows businesses to tailor their strategies, product offerings, and marketing campaigns to meet customer demands effectively.

- Example Use Case: An e-commerce retailer wants to launch a new line of clothing. By scraping data from fashion e-commerce sites, they can identify popular trends, preferred colors, and pricing strategies.

Competitive Analysis

- Business Perspective: Staying ahead of the competition is a constant challenge. E-commerce data scraping helps businesses monitor their competitors closely.

- Value: It provides insights into competitor pricing, product ranges, customer reviews, and marketing tactics.

- Example Use Case: A technology company scrapes data from rival websites to track their latest product releases, pricing changes, and customer feedback to adjust its own product strategy accordingly.

Inventory Management

- Retailer Perspective: For retailers, effective inventory management is crucial to meet customer demand without overstocking or running out of products.

- Value: Scraped data helps track product availability, sales trends, and pricing fluctuations, enabling retailers to optimize their inventory.

- Example Use Case: An online bookstore uses scraped data to monitor book availability, ensuring they restock titles that are in high demand and manage their inventory efficiently.

Content Aggregation

- Content Creator Perspective: Content creators and affiliate marketers often use scraped data to curate product listings and create informative content.

- Value: It streamlines the process of collecting product information, allowing content creators to focus on generating engaging content.

- Example Use Case: A lifestyle blogger aggregates product descriptions, images, and prices from various e-commerce sites to create a comprehensive gift guide for their readers.

Dynamic Pricing

- Business Perspective: Dynamic pricing strategies involve adjusting prices based on various factors, including demand and competitor prices.

- Value: Scraped data, especially real-time pricing information, is crucial for implementing dynamic pricing and maintaining competitiveness.

- Example Use Case: A travel booking platform scrapes data from airlines and hotels to adjust its prices in real time, offering customers the best deals.

Customer Reviews and Ratings

- Business Perspective: Customer feedback is invaluable for businesses looking to improve their products and services.

- Value: Scraped customer reviews and ratings offer insights into product quality, customer satisfaction, and areas for enhancement.

- Example Use Case: An electronics manufacturer analyzes customer reviews scraped from e-commerce platforms to identify common product issues and prioritize improvements.

Product Information Enrichment

- Business Perspective: Accurate and comprehensive product information is essential for attracting and retaining customers.

- Value: Scraped data helps enrich product databases with details like specifications, features, and user-generated content.

- Example Use Case: An online grocery store scrapes data to update product listings with nutritional information, ensuring customers have access to complete product details.

Price Tracking and Alerts

- Consumer and Business Perspective: Price tracking allows both consumers and businesses to stay informed about price changes.

- Value: By setting up price alerts, users can receive notifications when product prices drop or reach a specific threshold.

- Example Use Case: A frequent traveler sets up price alerts for flight tickets, ensuring they book at the most affordable rates.

Content Syndication

- Business Perspective: Content syndication involves sharing product listings, descriptions, and prices with other online marketplaces or affiliate websites.

- Value: It expands product reach and potential sales by reaching a broader audience.

- Example Use Case: An e-commerce retailer syndicates its product listings to affiliate websites, increasing the visibility of its products across multiple platforms.

Adaptive Marketing

- Marketing Perspective: Marketers leverage scraped data to tailor their advertising campaigns effectively.

- Value: Real-time pricing and product availability data enable marketers to deliver targeted messages to their audience.

- Example Use Case: An online fashion retailer adjusts its Facebook ad campaigns based on the availability of specific clothing items and ongoing promotions.

Demand Forecasting

- Business Perspective: Accurate demand forecasting is essential for efficient supply chain management.

- Value: Analyzing historical e-commerce data helps businesses predict future demand patterns and optimize inventory levels.

- Example Use Case: An electronics manufacturer uses scraped data to forecast demand for its products during the holiday season, ensuring they meet customer needs without excess inventory.

Scraping e-commerce data is valuable for consumers, businesses, and content creators alike. It supports data-driven decision-making, enhances customer experiences, and helps businesses adapt and thrive in the competitive online marketplace. However, it's crucial to conduct web scraping ethically, respecting the terms of service of the targeted websites and the applicable legal regulations, to ensure a responsible and sustainable data harvesting process.

Tools You'll Need for Scraping E-Commerce Data

To scrape data effectively, you'll require the following tools:

Programming Language: Choose a programming language for web scraping. Python is a popular choice due to its rich ecosystem of libraries and tools for web scraping.

Web Scraping Libraries:

- Beautiful Soup: A Python library that parses HTML and XML documents and provides a convenient way to extract data from web pages.

- Scrapy: A robust and versatile web scraping framework for Python that allows you to build and scale web scraping projects easily.

- Selenium: A web automation framework that allows you to interact with web pages, making it useful for scraping dynamic websites.

HTTP Request Library: Use a library like requests (Python) to send HTTP requests to web servers and retrieve HTML content from web pages.

User Interface (UI): Depending on your project's requirements, you may need to create a user-friendly interface for users to input URLs or configure scraping parameters.

The Scraping Process

Let's break down each step of the scraping process in detail:

Step 1: User Input

The scraping process begins by providing a user-friendly interface, typically a web page or application, where users can interact and input the URL of the product page they wish to scrape. This URL serves as the starting point for data extraction. The user input interface should be intuitive, allowing users to paste the URL easily. Additionally, it can include options for configuring scraping parameters, such as selecting specific data elements to extract or setting filters.
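Before any request is sent, the pasted URL can be validated and matched to a platform. The following is a minimal sketch using only the standard library; the `SUPPORTED_PLATFORMS` mapping and the `identify_platform` helper are illustrative names, not part of any library, and the domain list is an assumption you should adjust to the sites you actually target.

```python
from urllib.parse import urlparse

# Hypothetical mapping from domain to a platform label; adjust as needed.
SUPPORTED_PLATFORMS = {
    "amazon.in": "amazon",
    "amazon.com": "amazon",
    "flipkart.com": "flipkart",
    "myntra.com": "myntra",
    "ajio.com": "ajio",
    "tatacliq.com": "tatacliq",
}


def identify_platform(raw_url: str) -> str:
    """Validate a user-supplied URL and return the platform label it belongs to.

    Raises ValueError when the URL is malformed or the site is not supported.
    """
    url = raw_url.strip()  # users often paste URLs with stray whitespace
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https") or not parsed.hostname:
        raise ValueError(f"Not a valid http(s) URL: {raw_url!r}")

    host = parsed.hostname.lower()
    for domain, platform in SUPPORTED_PLATFORMS.items():
        # Match the bare domain or any subdomain such as "www."
        if host == domain or host.endswith("." + domain):
            return platform
    raise ValueError(f"Unsupported site: {host}")
```

In a web form, the `ValueError` message can be shown next to the text box so the user knows why a URL was rejected.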

Step 2: Fetch the Web Page

Once the user has input the URL, the web scraping script, often written in Python, utilizes the requests library to send an HTTP request to the web server hosting the specified URL. The server responds by providing the HTML content of the web page. It's crucial to handle potential errors gracefully, such as invalid URLs, network connectivity issues, or server timeouts. Proper error handling ensures the robustness of the scraping process.
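The fetch step with graceful error handling might look like the sketch below. It assumes the `requests` package is installed; the `fetch_html` name, the 10-second timeout, and the User-Agent string are illustrative choices rather than requirements.

```python
from typing import Optional

import requests


def fetch_html(url: str, timeout: float = 10.0) -> Optional[str]:
    """Return the page HTML, or None if the request fails for any reason."""
    # Assumed User-Agent; use one that identifies your own project.
    headers = {"User-Agent": "price-scraper-example/0.1"}
    try:
        response = requests.get(url, headers=headers, timeout=timeout)
        response.raise_for_status()  # turn 4xx/5xx responses into exceptions
    except requests.exceptions.RequestException as exc:
        # Covers malformed URLs, connection failures, timeouts, and HTTP errors
        print(f"Could not fetch {url!r}: {exc}")
        return None
    return response.text
```

Returning `None` instead of raising keeps the calling code simple: the caller checks the result once and can report the failure back to the user.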

Step 3: Parse the HTML

With the HTML content of the web page retrieved, the next step involves parsing this raw HTML using a parsing library like BeautifulSoup. BeautifulSoup allows the script to navigate the HTML structure, locate specific HTML elements, and extract data from them. This step is vital as it provides the means to identify and capture the desired information, such as product names, prices, and customer reviews.
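As a small illustration of parsing and navigation, the sketch below runs BeautifulSoup over a made-up HTML snippet. The markup, ids, and class names are hypothetical stand-ins for a real product page, and the `bs4` package is assumed to be installed.

```python
from bs4 import BeautifulSoup

# A tiny stand-in for a fetched product page (hypothetical markup)
html = """
<html><body>
  <h1 id="title">Example Phone (128 GB)</h1>
  <div class="pricing"><span class="price">₹12,999</span></div>
  <ul id="reviews"><li>Great battery</li><li>Good camera</li></ul>
</body></html>
"""

soup = BeautifulSoup(html, "html.parser")  # parser bundled with Python

title_tag = soup.find("h1", id="title")            # look up by tag name and id
price_tag = soup.select_one("div.pricing .price")  # look up by CSS selector
review_tags = soup.find_all("li")                  # every matching element

title = title_tag.get_text(strip=True)
price = price_tag.get_text(strip=True)
reviews = [li.get_text(strip=True) for li in review_tags]
```

`find` and `select_one` return `None` when nothing matches, so real scraping code should check for that before calling `get_text`.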

Step 4: Data Extraction

Data extraction is where the scraping script identifies the HTML elements containing the data of interest. In the context of e-commerce platforms, these elements typically include product names, prices, descriptions, images, and more. BeautifulSoup is used to target these elements by specifying the HTML tags, attributes, and their positions within the HTML structure. Once identified, the script extracts the data and stores it in a structured format, such as a Python data structure, a CSV (Comma-Separated Values) file, a JSON (JavaScript Object Notation) document, or a database.
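One way to keep extraction maintainable is to drive it from a table of CSS selectors and emit one structured record per page. This is a sketch under stated assumptions: the `SELECTORS` mapping, the `extract_product` helper, and every class name in it are hypothetical, and each real site needs its own selectors.

```python
from bs4 import BeautifulSoup

# Hypothetical selectors for an imaginary site; real sites need their own.
SELECTORS = {
    "name": "h1.product-title",
    "price": "span.price",
    "description": "div.description",
}


def extract_product(html: str, selectors: dict) -> dict:
    """Return one record with a key per selector; missing elements become None."""
    soup = BeautifulSoup(html, "html.parser")
    record = {}
    for field, css in selectors.items():
        element = soup.select_one(css)
        record[field] = element.get_text(" ", strip=True) if element else None
    # Image URLs live in an attribute rather than in the element text
    image = soup.select_one("img.product-image")
    record["image_url"] = image.get("src") if image else None
    return record


sample = (
    '<h1 class="product-title">Example Phone</h1>'
    '<span class="price">₹12,999</span>'
    '<img class="product-image" src="/img/phone.jpg">'
)
record = extract_product(sample, SELECTORS)
```

Because the sample page has no description element, `record["description"]` is `None` rather than an error, which makes missing data easy to handle in the cleaning step.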

Step 5: Handling Multiple Pages

Many e-commerce websites present product listings across multiple pages, often in a paginated format. To scrape data comprehensively, the scraping script may need to implement a mechanism to navigate through these pages systematically. This could involve identifying pagination elements, extracting links to subsequent pages, and repeating the scraping process for each page. Proper handling of pagination ensures that all relevant data is collected.
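Pagination handling can be sketched as a generator that follows "next" links until none remain. The example assumes the site marks its next-page link with `class="next"`, which varies from site to site; `find_next_page` and `iterate_pages` are illustrative names, and `fetch` is any callable that returns HTML for a URL (or `None` on failure).

```python
from typing import Callable, Iterator, Optional
from urllib.parse import urljoin

from bs4 import BeautifulSoup


def find_next_page(html: str, current_url: str) -> Optional[str]:
    """Return the absolute URL of the next page, or None on the last page."""
    soup = BeautifulSoup(html, "html.parser")
    # Assumption: the "next" link carries class="next"
    link = soup.find("a", class_="next")
    if link is None or not link.get("href"):
        return None
    return urljoin(current_url, link["href"])  # resolve relative links


def iterate_pages(
    start_url: str,
    fetch: Callable[[str], Optional[str]],
    max_pages: int = 50,
) -> Iterator[str]:
    """Yield the HTML of each listing page, following "next" links."""
    url, seen = start_url, set()
    # Stop on the last page, on a loop back to a visited URL, or at max_pages
    while url and url not in seen and len(seen) < max_pages:
        seen.add(url)
        html = fetch(url)
        if html is None:  # stop on a failed request
            break
        yield html
        url = find_next_page(html, url)
```

The `seen` set and the `max_pages` cap guard against sites whose pagination links loop back on themselves.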

Step 6: Data Cleaning

Scraped data may contain unwanted characters, HTML tags, or formatting that can affect its accuracy and usability. Data cleaning involves preprocessing the extracted data to remove such artifacts and inconsistencies. Common data cleaning tasks include stripping HTML tags, converting data types, handling missing values, and standardizing formats. Clean data is essential for accurate analysis and reporting.
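The cleaning tasks listed above can be sketched with the standard library alone. The helper names `clean_text` and `parse_price` are illustrative, and the price parser assumes that a comma is a thousands separator and a period is the decimal point, which does not hold for every locale.

```python
import html
import re
from decimal import Decimal
from typing import Optional

TAG_RE = re.compile(r"<[^>]+>")


def clean_text(raw: Optional[str]) -> Optional[str]:
    """Strip tags, decode entities, and collapse whitespace; None stays None."""
    if raw is None:
        return None
    text = TAG_RE.sub(" ", raw)               # drop leftover HTML tags
    text = html.unescape(text)                # "&amp;" -> "&", "&nbsp;" -> NBSP
    text = re.sub(r"\s+", " ", text).strip()  # \s also matches non-breaking spaces
    return text or None


def parse_price(raw: Optional[str]) -> Optional[Decimal]:
    """Convert strings such as '₹1,29,999.00' or '$1,299' to Decimal."""
    if not raw:
        return None
    # Assumes ',' separates thousands and '.' marks the decimal point
    match = re.search(r"\d[\d,]*(?:\.\d+)?", raw)
    if match is None:
        return None  # e.g. "Currently unavailable"
    return Decimal(match.group().replace(",", ""))
```

`Decimal` is used instead of `float` so that prices keep their exact value when they are compared or summed later.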

Step 7: Data Storage

The final step of the scraping process involves deciding how to store the extracted and cleaned data. The choice of storage format depends on the project's requirements. Common options include:

- CSV (Comma-Separated Values): Suitable for tabular data, such as product listings.

- JSON (JavaScript Object Notation): Ideal for structured data with nested elements.

- Database: A relational or NoSQL database can accommodate large datasets and provide querying capabilities for further analysis.

Selecting an appropriate storage format ensures that the scraped data is readily accessible for analysis, reporting, and integration into other applications.
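The three storage options can be sketched with Python's standard library, using SQLite as a stand-in for a relational database. The sample `records`, the function names, and the `products` table layout are all illustrative assumptions.

```python
import csv
import json
import sqlite3

# Made-up records standing in for cleaned scraper output
records = [
    {"name": "Example Phone", "price": 12999.0, "source": "amazon"},
    {"name": "Example Case", "price": 499.0, "source": "flipkart"},
]


def save_csv(rows, path):
    """Write rows as a CSV file with a header line."""
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=["name", "price", "source"])
        writer.writeheader()
        writer.writerows(rows)


def save_json(rows, path):
    """Write rows as a JSON array, keeping non-ASCII characters readable."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(rows, f, ensure_ascii=False, indent=2)


def save_sqlite(rows, path):
    """Append rows to a 'products' table, creating it on first use."""
    conn = sqlite3.connect(path)
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "CREATE TABLE IF NOT EXISTS products "
                "(name TEXT, price REAL, source TEXT)"
            )
            conn.executemany(
                "INSERT INTO products VALUES (:name, :price, :source)", rows
            )
    finally:
        conn.close()
```

For example, `save_csv(records, "products.csv")` produces a file that opens directly in a spreadsheet, while `save_sqlite(records, "products.db")` lets you run SQL queries over accumulated results.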

The scraping process involves a series of systematic steps, starting with user input and culminating in data extraction, cleaning, and storage. Properly executed web scraping allows individuals and businesses to access valuable information from e-commerce websites efficiently.

Ethical Considerations

When scraping e-commerce websites, it's essential to adhere to ethical guidelines and respect the terms of service of each platform. Avoid overloading their servers with requests, use rate limiting, and ensure that your scraping activities do not disrupt the normal functioning of the website.
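Rate limiting and a robots.txt check can be combined in a small helper, sketched below with the standard library. The `PoliteFetcher` class, its default 2-second delay, and the user-agent string are assumptions for illustration; the right delay depends on the site, and robots.txt is only one part of a site's terms.

```python
import time
from urllib.robotparser import RobotFileParser


class PoliteFetcher:
    """Gate requests behind robots.txt rules and a minimum delay between calls."""

    def __init__(self, robots_lines, user_agent="price-scraper-example", min_delay=2.0):
        self.user_agent = user_agent
        self.min_delay = min_delay
        self._last_request = None
        self._robots = RobotFileParser()
        # robots_lines: the lines of an already-downloaded robots.txt file
        self._robots.parse(robots_lines)

    def allowed(self, url):
        """Return True if robots.txt permits this user agent to fetch the URL."""
        return self._robots.can_fetch(self.user_agent, url)

    def wait_turn(self):
        """Sleep just long enough to keep min_delay seconds between requests."""
        now = time.monotonic()
        if self._last_request is not None:
            remaining = self.min_delay - (now - self._last_request)
            if remaining > 0:
                time.sleep(remaining)
        self._last_request = time.monotonic()
```

A scraper would call `allowed(url)` before each request, skip the URL when it returns `False`, and call `wait_turn()` immediately before sending the request.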

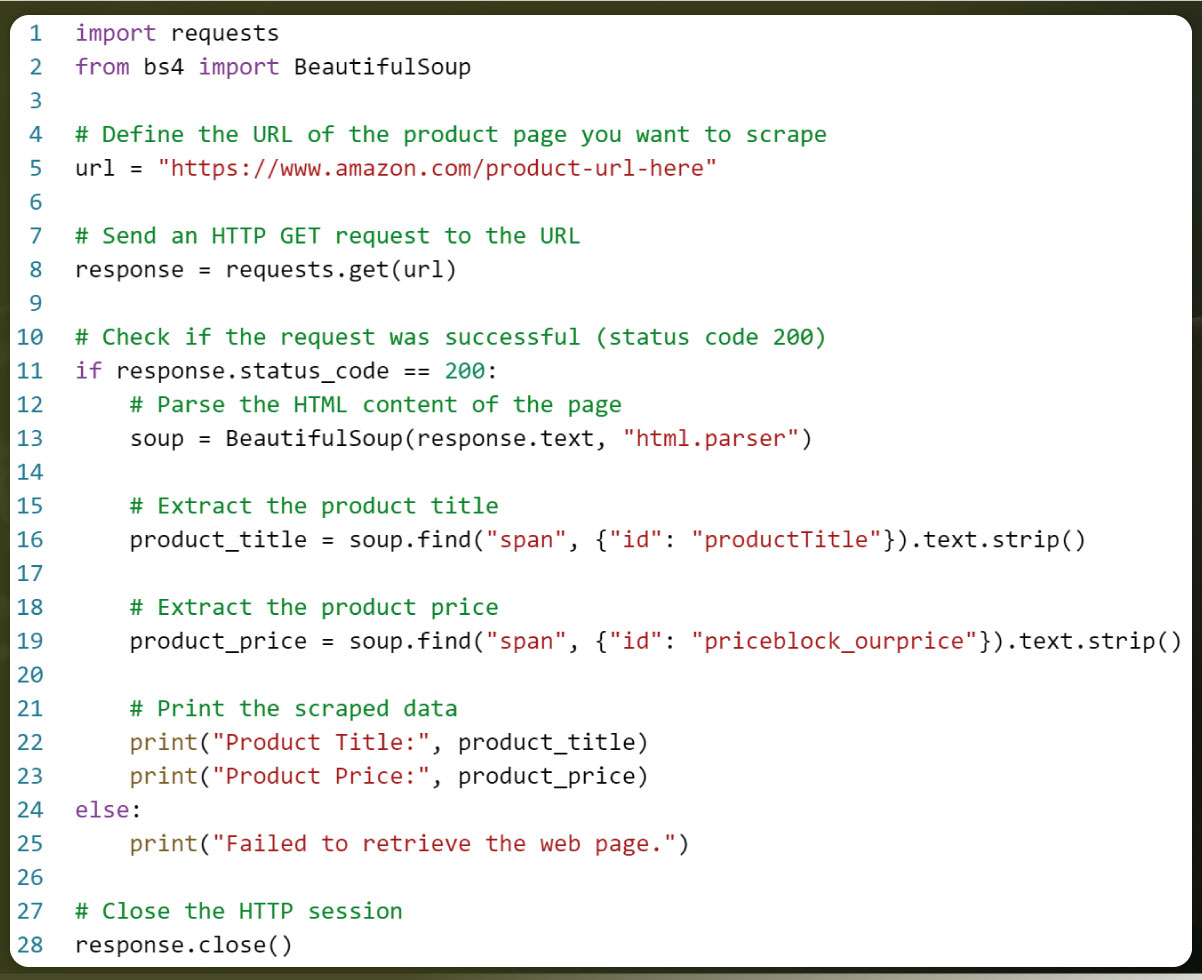

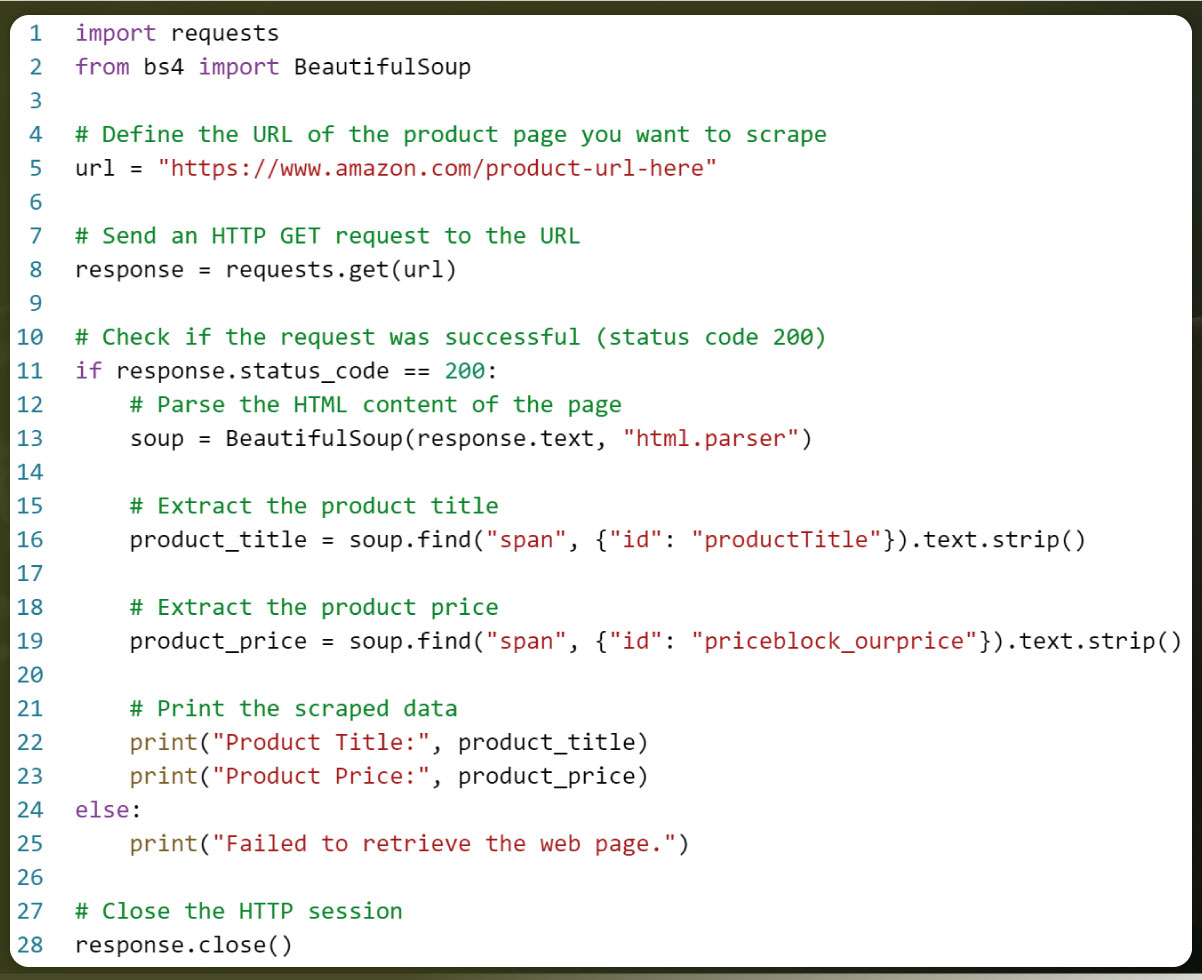

Let’s Take An Example

Here is a simplified example of scraping price and item data from a single e-commerce website. Keep in mind that scraping data from multiple websites requires specific adaptations and handling for each site's structure and policies. Additionally, web scraping should always be conducted ethically and in compliance with the terms of service of the websites you are scraping.

Here's a Python example using BeautifulSoup to scrape price and item data from Amazon. You can adapt this code for other e-commerce websites by adjusting the HTML structure and elements as needed:
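The following is a minimal sketch of such a script, assuming the `requests` and `bs4` packages are installed. The CSS selectors (`#productTitle` and `span.a-price span.a-offscreen`) are assumptions based on commonly observed Amazon product-page markup; Amazon changes its HTML and may serve different pages to automated clients, so verify the selectors in your browser's developer tools. The function names and header values are illustrative.

```python
import requests
from bs4 import BeautifulSoup

# Assumed request headers; adjust them for your own project.
HEADERS = {
    "User-Agent": "Mozilla/5.0 (compatible; price-scraper-example/0.1)",
    "Accept-Language": "en-IN,en;q=0.9",
}


def parse_amazon_product(html: str) -> dict:
    """Extract the item title and price from product-page HTML."""
    soup = BeautifulSoup(html, "html.parser")
    # Assumed selectors; they may not match the current page layout
    title = soup.select_one("#productTitle")
    price = soup.select_one("span.a-price span.a-offscreen")
    return {
        "title": title.get_text(strip=True) if title else None,
        "price": price.get_text(strip=True) if price else None,
    }


def scrape_amazon_product(url: str) -> dict:
    """Fetch a product page and return its title and price."""
    response = requests.get(url, headers=HEADERS, timeout=10)
    response.raise_for_status()  # raise on 4xx/5xx responses
    return parse_amazon_product(response.text)


# Example usage (performs a real network request):
# product_url = input("Paste an Amazon product URL: ").strip()
# print(scrape_amazon_product(product_url))
```

Keeping the parsing in its own function means the selectors can be tested against saved HTML without sending any requests.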

To scrape data from other e-commerce websites like Flipkart, Myntra, Ajio, and Tata Cliq, you would need to:

- Inspect the HTML structure of each website to identify the specific HTML elements that contain the item title and price information.

- Modify the code above to target those elements and extract the data accordingly.

- Repeat the process for each website, adapting the code as needed.

Remember to respect the terms of service of each website, implement error handling, and consider using user-agent headers and proxies to avoid being blocked or rate-limited during scraping.
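A reusable `requests` session is one place to set a User-Agent header, an optional proxy, and automatic retries with backoff. The sketch below assumes `requests` (and the `urllib3` package it depends on) is installed; `build_session`, the retry count, and the status codes chosen are illustrative, and the proxy URL is whatever your own proxy provider gives you.

```python
from typing import Optional

import requests
from requests.adapters import HTTPAdapter
from urllib3.util.retry import Retry


def build_session(user_agent: str, proxy_url: Optional[str] = None) -> requests.Session:
    """Create a session with a custom User-Agent, optional proxy, and retries."""
    session = requests.Session()
    session.headers.update({"User-Agent": user_agent})
    if proxy_url:
        # Route both plain and TLS traffic through the same proxy
        session.proxies.update({"http": proxy_url, "https": proxy_url})

    # Retry up to 3 times with increasing waits on rate-limit and server-error statuses
    retry = Retry(
        total=3,
        backoff_factor=1.0,
        status_forcelist=(429, 500, 502, 503, 504),
    )
    adapter = HTTPAdapter(max_retries=retry)
    session.mount("http://", adapter)
    session.mount("https://", adapter)
    return session
```

Calling `session.get(url, timeout=10)` on the returned object then applies the header, proxy, and retry policy to every request.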

Conclusion

Actowiz Solutions stands as your trusted partner in unlocking the vast potential of e-commerce data. Our expertise in web scraping allows you to extract valuable insights from top platforms like Amazon, Flipkart, Myntra, Ajio, and Tata Cliq. By leveraging our cutting-edge solutions, you gain a competitive edge, make data-driven decisions, and stay ahead in the dynamic world of online commerce.

Don't miss out on the opportunities that e-commerce data can offer. Actowiz Solutions is here to empower your business with actionable information. Contact us today to embark on your data journey and drive your success to new heights. Let's scrape the path to prosperity together! You can also reach us if you have requirements related to data collection, mobile app scraping, instant data scraper, and web scraping services.
