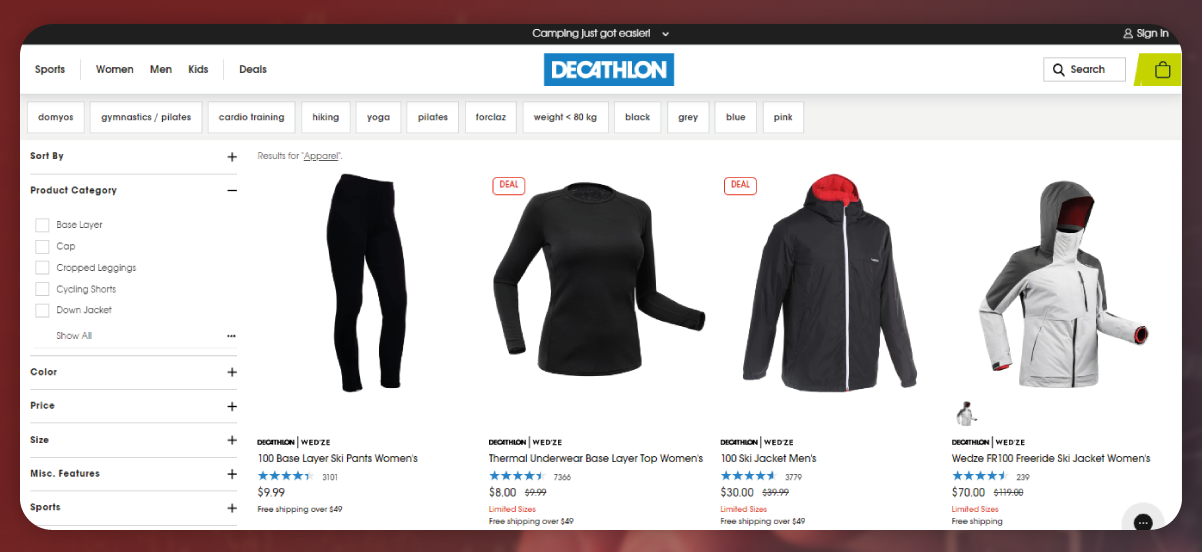

Decathlon is a well-known sporting goods retailer that offers a wide range of products, including shoes, sports apparel, and equipment. Scraping Decathlon's site can offer useful insights into product pricing, trends, and the wider market. In this blog, we will explore how to scrape apparel data from Decathlon's site by category using a combination of Python and Playwright.

Playwright is an automation library that allows you to control web browsers such as Firefox, WebKit, and Chromium using programming languages like JavaScript and Python. It is an excellent tool for web scraping as it automates tasks like filling forms, clicking buttons, and scrolling. Using Playwright, we will navigate through each category on Decathlon's website and extract product information, including their name, pricing, and description.

In this blog, you'll gain a fundamental understanding of how to use Python and Playwright to extract data from Decathlon's site by category. We'll scrape the following data attributes from individual product pages: Product URL, Brand, MRP, Product Name, Number of Reviews, Sale Price, Color, Features, Ratings, and Product Information.

Let’s go through a step-by-step guide to using Python and Playwright to extract apparel data from Decathlon by category.

To begin, we need to import the libraries that will let us interact with the Decathlon website and extract the desired information. The required libraries for scraping data from Decathlon's website by category using Playwright and Python are:

random: This library generates random numbers. In this script, it supplies the random wait time between retries when a page request fails.

asyncio: This library is essential for handling asynchronous programming in Python. Since we will be using the asynchronous API of Playwright, asyncio allows us to write asynchronous code, making it easier to work with Playwright effectively.

pandas: This library is widely used for data analysis and manipulation. It provides convenient data structures and functions for handling tabular data. In this tutorial, we use pandas to store the scraped data in a DataFrame and save it as a CSV file.

async_playwright: This function, imported from playwright.async_api, is the entry point to Playwright's asynchronous API, which is used to automate browsers. With the asynchronous API, you can perform multiple operations concurrently, which can make scraping tasks faster and more efficient.

By importing these libraries, you'll have the tools to automate browser interactions, handle asynchronous operations, and store and analyze scraped data from Decathlon's website using Playwright and Python.

The next step is to extract the URLs of the apparel products based on their respective categories. We will navigate through the Decathlon website and collect the URLs for each product in each category.

To extract the product URLs from a web page, we can define a Python function called get_product_urls. This function will utilize the Playwright library to automate browser testing and extract the resulting product URLs.

In this function, we start by initializing an empty list called product_urls. We use page.query_selector_all to find all the elements on the page that contain the product URLs, using the CSS selector a.product-link. We then iterate through each element and extract the href attribute, which contains the URL of the product page. The extracted URLs are appended to the product_urls list.

Next, we check if there is a "next" button on the page using page.query_selector. If a "next" button exists, we click on it using next_button.click(), and then recursively call the get_product_urls function to extract URLs from the next page. The URLs extracted from the subsequent pages are also appended to the product_urls list.

Finally, we return the product_urls list, which will contain all the extracted product URLs from the web page.

You can call this function from the scrape_urls function, passing the browser and page instances, to extract the product URLs for each category.
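The steps above can be sketched roughly as follows. This is a minimal sketch, not the exact code from the script: the a.product-link selector comes from the description above, while the next_selector default is a hypothetical placeholder for the pagination control, and relative links are resolved against page.url as an added assumption.

```python
from urllib.parse import urljoin


async def get_product_urls(page, next_selector="a.next"):
    """Collect product URLs from the current listing page and all following pages."""
    product_urls = []

    # Selector taken from the text above; the live site may use different markup.
    for element in await page.query_selector_all("a.product-link"):
        href = await element.get_attribute("href")
        if href:
            # Resolve relative links against the URL of the current page.
            product_urls.append(urljoin(page.url, href))

    # next_selector is a hypothetical selector for the "next" button.
    next_button = await page.query_selector(next_selector)
    if next_button:
        await next_button.click()
        await page.wait_for_load_state("load")
        # Recurse to collect the URLs from the next page.
        product_urls.extend(await get_product_urls(page, next_selector))

    return product_urls
```

Because the function only relies on the page object it receives, it can be called once the browser has navigated to a category listing. For categories with many pages, a loop may be preferable to recursion.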

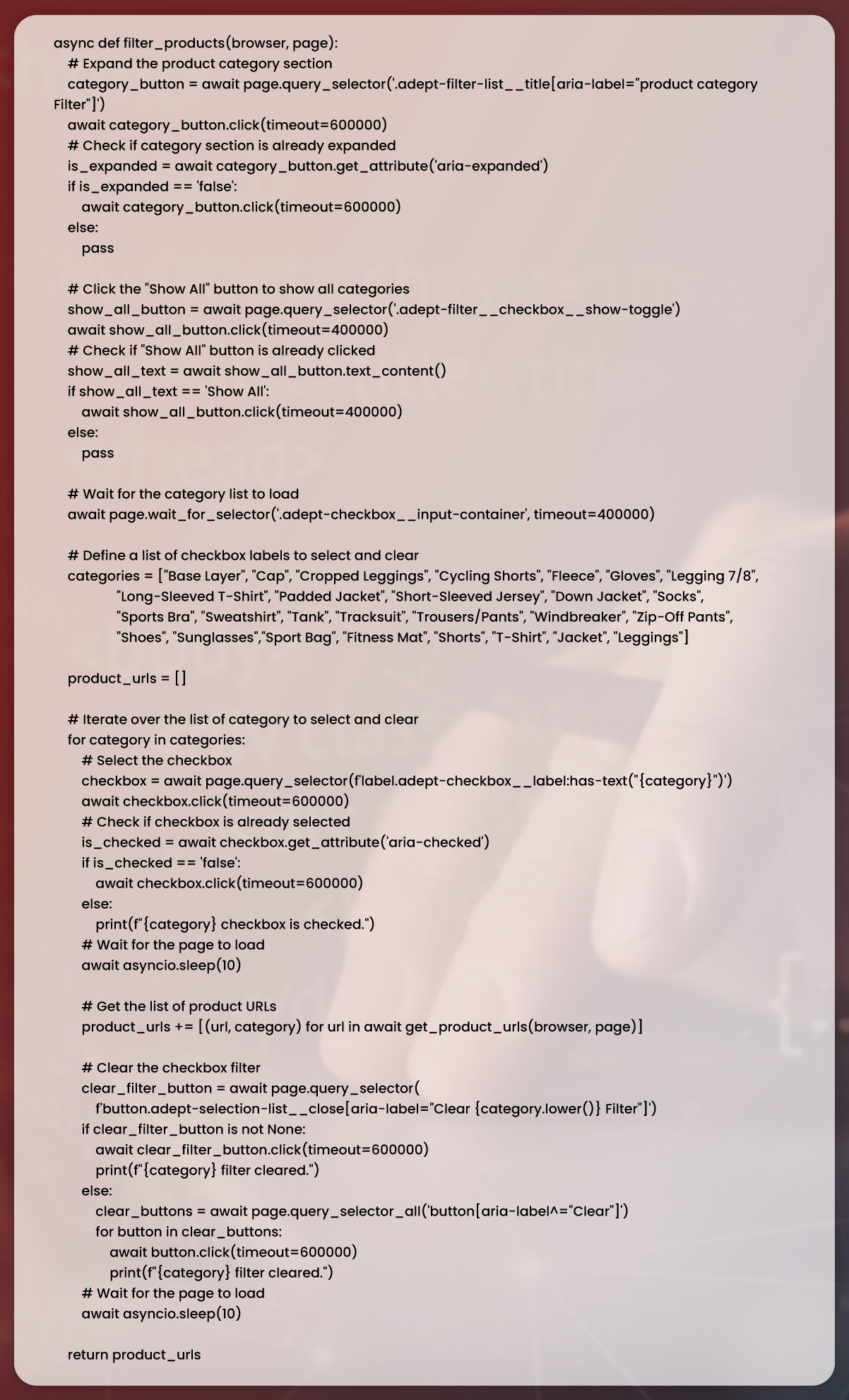

To scrape product URLs by category, we first click the product category button to expand the list of available categories. Then, we click on each category to filter the products and extract the corresponding URLs.
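As a rough illustration, the category filtering step might look like the sketch below. The function name filter_by_category and the button.category-filter selector are hypothetical; inspect the live page to find the real ones. The text= prefix is Playwright's text selector, which matches an element by its visible text.

```python
async def filter_by_category(page, category_name):
    """Expand the category list and select one category by its visible label."""
    # Hypothetical selector for the button that expands the category list.
    await page.click("button.category-filter")
    # Click the category whose visible text matches category_name.
    await page.click(f"text={category_name}")
    # Wait for the page to finish loading; a real script may need to wait
    # for a specific element instead if the filter updates the page in place.
    await page.wait_for_load_state("load")
```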

In the previous step, we filtered the products by category and obtained the respective product URLs. Now, we will proceed to extract the names of the products from the web pages.

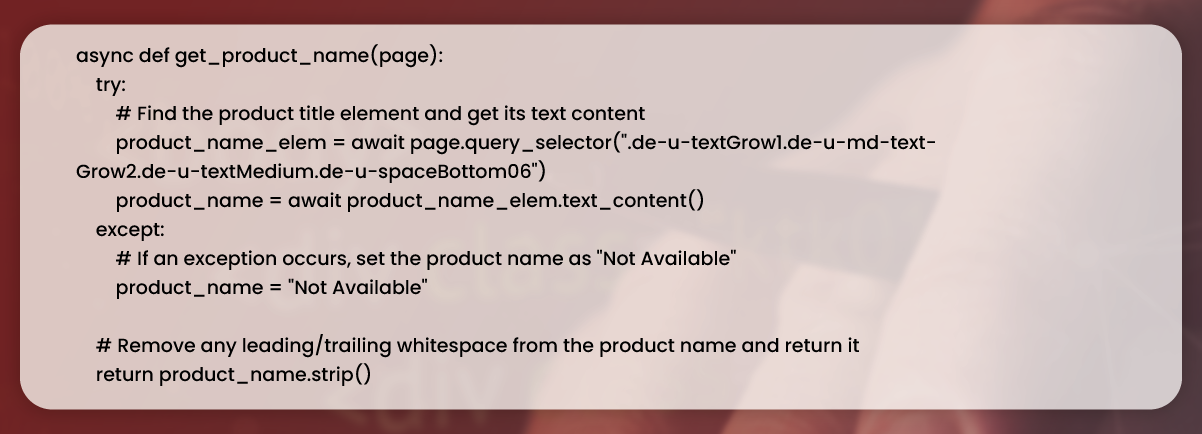

To extract the product names, we can define a function called extract_product_names. This function will take the page instance as a parameter and utilize Playwright's query selector to identify the elements containing the product names. We will then iterate through these elements and extract the text content, which represents the product names.

In this function, we start by initializing an empty list called product_names. We use page.query_selector_all with the CSS selector .product-name to find all elements on the page that contain the product names. Then, we iterate through each element and extract the text content using element.text_content(). The extracted names are appended to the product_names list.

You can call this function after navigating to a specific product page within your scraping script. By doing so, you will obtain a list of the product names from the page, which can be further processed or stored as needed.

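In the previous step, we defined a function called extract_product_names to extract the names of the products from the web pages. The same function can be written more defensively so that a missing element does not stop the script.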

In this function, we attempt to find the corresponding product name element on the page using page.query_selector and passing the appropriate CSS selector, which is .product-name. If the element is found, we retrieve the text content using name_element.text_content() and append it to the product_names list. If the element is not found or if an exception occurs during the process, we append the string "Not Available" to the product_names list.

By handling potential exceptions and setting a default value of "Not Available" when the product name element is not found, we ensure that the function runs smoothly and provides a meaningful result even in case of unexpected situations.

You can call this function within your scraping script after navigating to the product page to extract the name of the product.
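A minimal sketch of this defensive pattern is shown here. The .product-name selector comes from the description above. The helper name get_product_name and the broad except clause are assumptions made for illustration, and the function returns the name instead of appending it to a list so that it can be used for one page at a time.

```python
async def get_product_name(page):
    """Return the product name from the page, or "Not Available" as a fallback."""
    try:
        name_element = await page.query_selector(".product-name")
        if name_element is None:
            return "Not Available"
        text = await name_element.text_content()
        # text_content() can return None or whitespace only.
        name = (text or "").strip()
        return name or "Not Available"
    except Exception:
        # Broad catch for illustration; a real script might catch
        # Playwright's own error types instead.
        return "Not Available"
```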

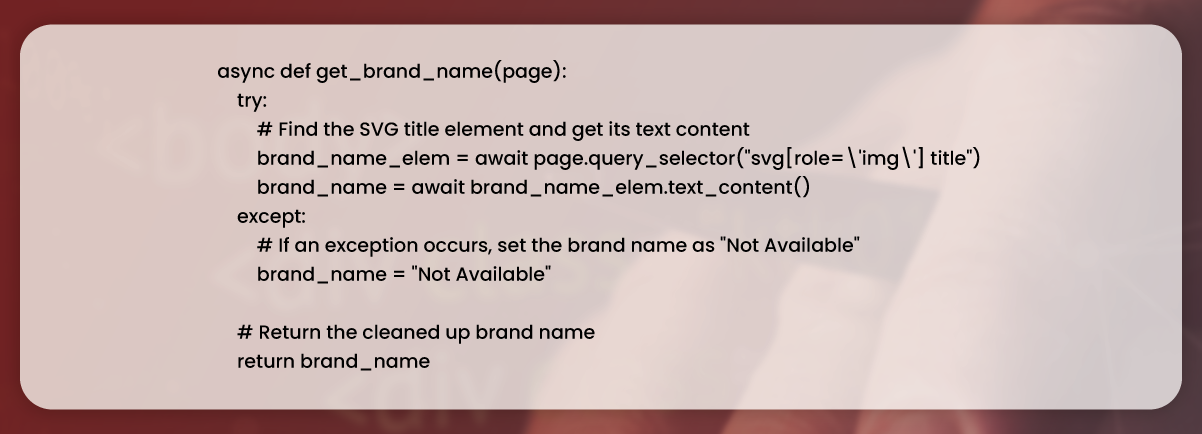

The next step is to scrape the brand of each product from its page.

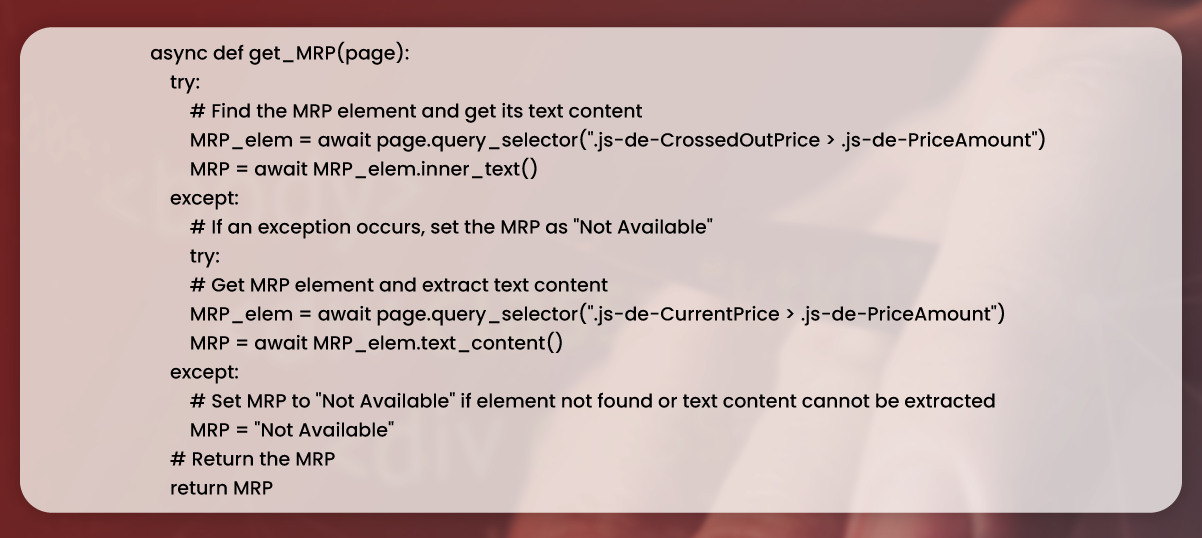

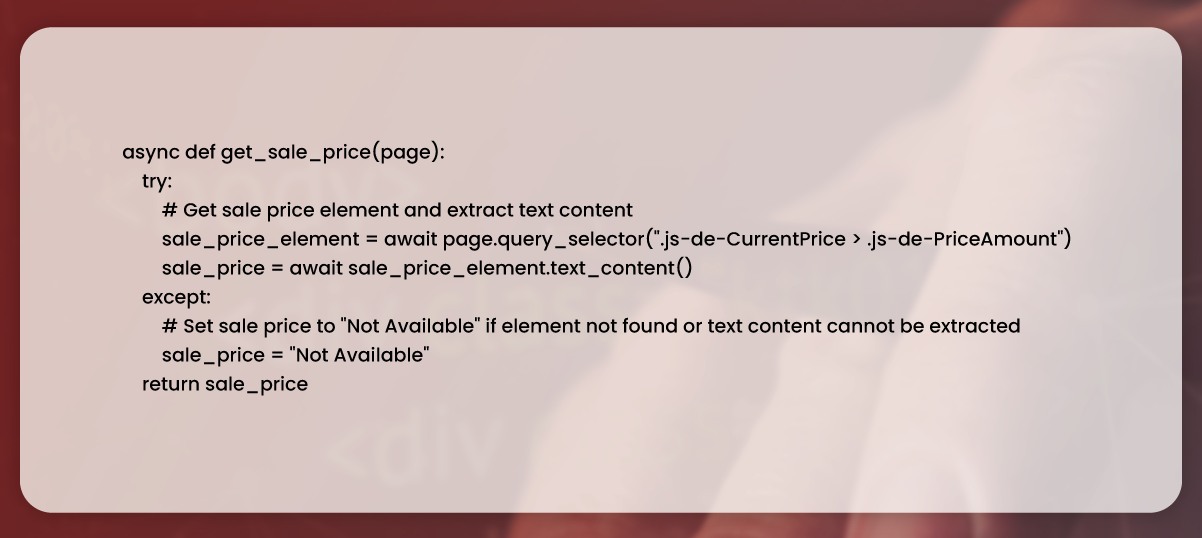

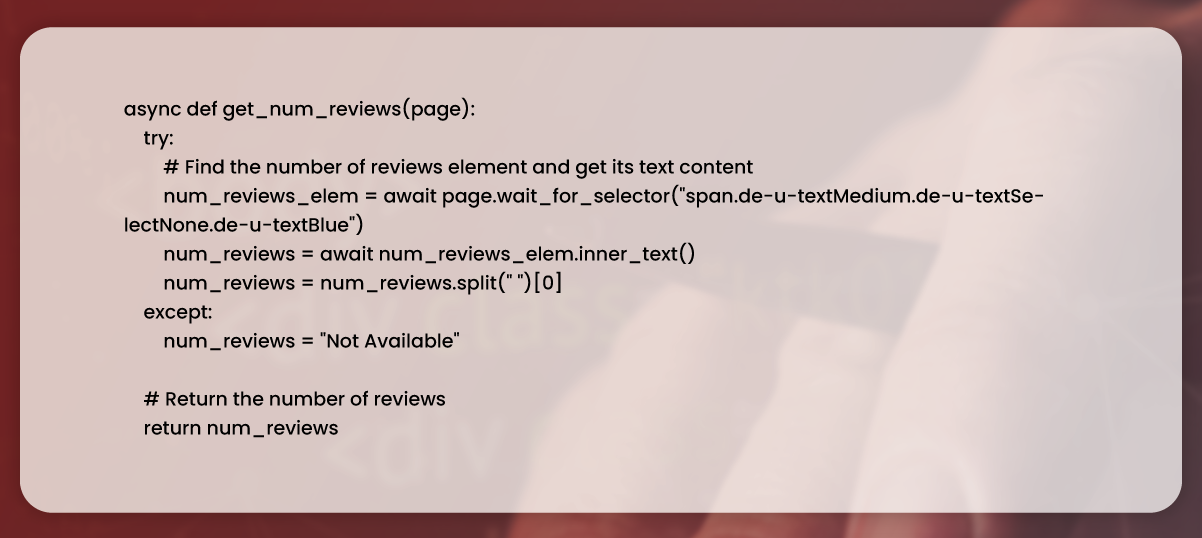

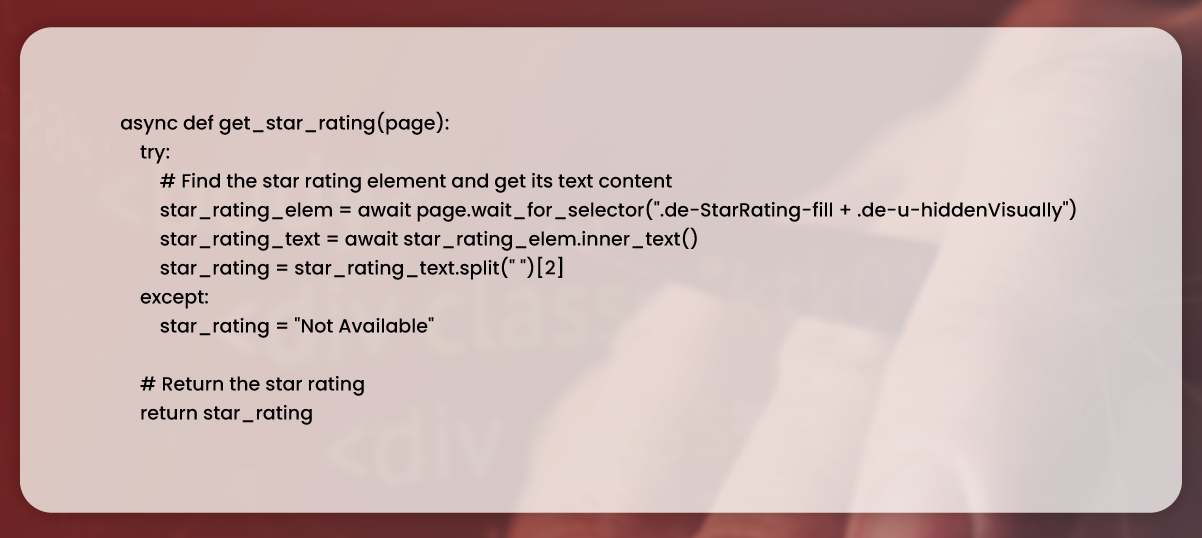

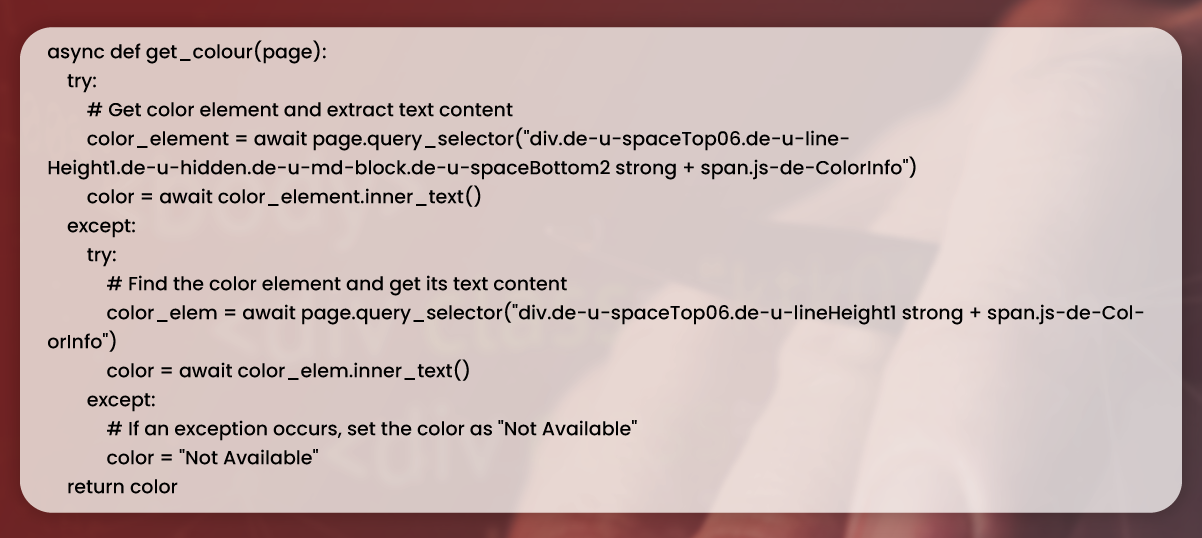

In the previous steps, we have seen how to extract the product name and brand from web pages. Now, let's move on to extracting other attributes such as sale price, MRP, ratings, color, total reviews, product information, and features.

For each of these attributes, we can define separate functions that use the query_selector method and text_content method, or similar methods, to select the relevant element on the page and extract the desired information. These functions will follow a similar structure as the extract_product_names and extract_product_brands functions.

In these functions, we attempt to locate the corresponding elements using appropriate CSS selectors for each attribute. If the element is found, we extract its text content and assign it to the respective variable. If an exception occurs during the process, we set the variable to an empty string or any default value that suits your needs.

You can call these functions within your scraping script, after navigating to the product page, to extract the desired attributes for each product.

Remember to adjust the CSS selectors used in these functions based on the structure of the web page you are scraping. Inspecting the HTML structure of the web page will help you identify the appropriate selectors for each attribute.
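Since these functions share the same structure, one way to sketch them is a single helper driven by a table of selectors. Every selector in ATTRIBUTE_SELECTORS is a hypothetical placeholder, and the names extract_text and extract_attributes are illustrative rather than taken from the original script.

```python
# Hypothetical selectors; replace them after inspecting the real page.
ATTRIBUTE_SELECTORS = {
    "sale_price": ".sale-price",
    "mrp": ".mrp",
    "rating": ".rating-value",
    "reviews": ".review-count",
    "color": ".color-name",
}


async def extract_text(page, selector, default=""):
    """Return the stripped text of the first match, or default on any failure."""
    try:
        element = await page.query_selector(selector)
        if element is None:
            return default
        text = await element.text_content()
        return text.strip() if text else default
    except Exception:
        # Broad catch for illustration only.
        return default


async def extract_attributes(page, selectors=ATTRIBUTE_SELECTORS):
    """Build a dict mapping each attribute name to its extracted text."""
    return {name: await extract_text(page, css) for name, css in selectors.items()}
```

Keeping the selectors in one dictionary means a change in the page markup only requires updating that dictionary.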

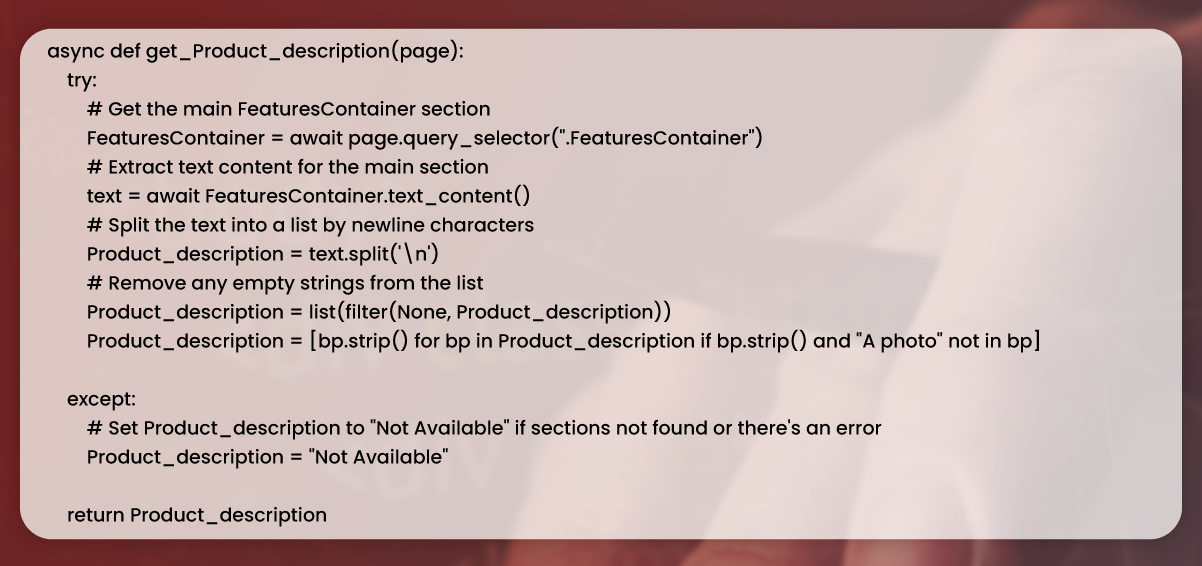

The next function extracts the product description section from a Decathlon product page.

In this function, we attempt to locate the element containing the product description using the CSS selector .product-description. If the element is found, we extract its text content and assign it to the description variable.

To remove unwanted characters, we split the description text by the newline character (\n) and use a list comprehension to filter out any elements that are empty or contain only whitespace. We then use the strip() method to remove leading and trailing spaces from each remaining element. The resulting list of strings represents the cleaned product description.

By using this function in your scraping script, you can extract the product description from each product page and store it as a list of strings for further processing or analysis.
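The cleaning step can be sketched as below. The .product-description selector is the one named above; the names clean_description and get_product_description, and the choice to return an empty list on failure, are assumptions for illustration.

```python
def clean_description(raw_text):
    """Split on newlines, drop blank lines, and strip surrounding whitespace."""
    return [line.strip() for line in raw_text.split("\n") if line.strip()]


async def get_product_description(page):
    """Return the product description as a list of cleaned lines."""
    try:
        element = await page.query_selector(".product-description")
        if element is None:
            return []
        raw_text = await element.text_content()
        return clean_description(raw_text) if raw_text else []
    except Exception:
        # Broad catch for illustration only.
        return []
```

For example, clean_description("  Breathable fabric \n\n   \nQuick drying\n") returns ["Breathable fabric", "Quick drying"].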

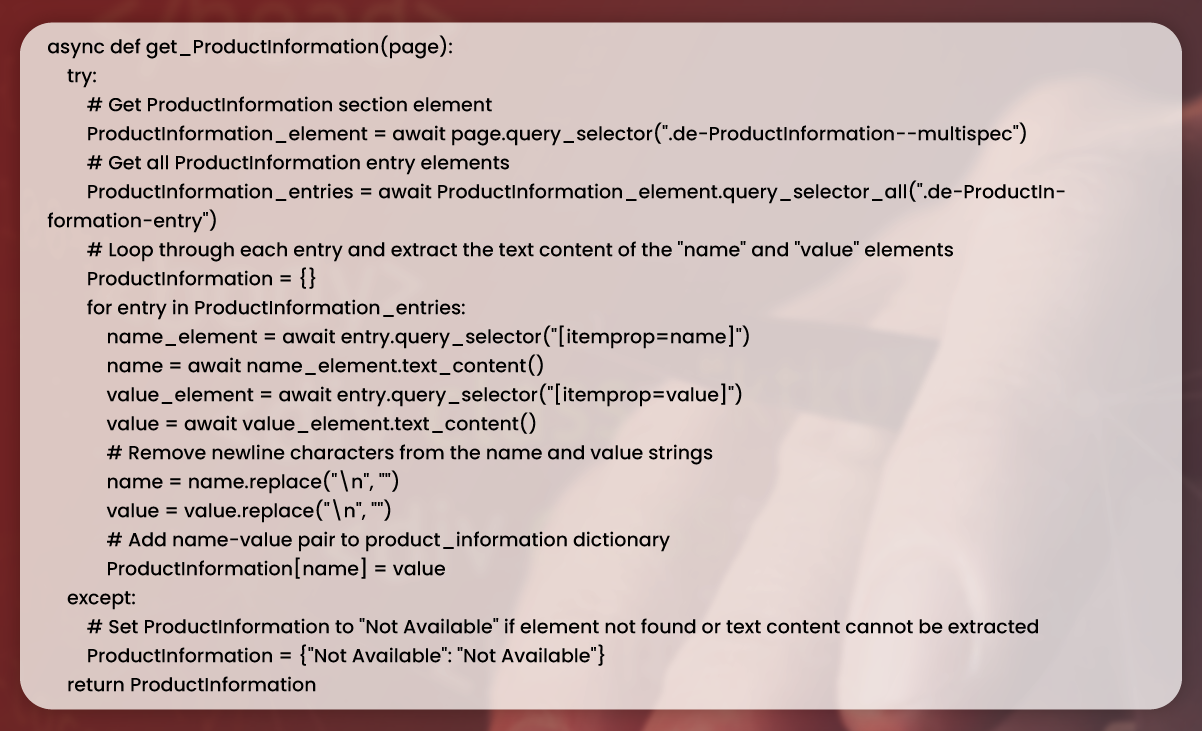

The get_product_information function is an asynchronous function that takes a page object as its parameter and scrapes the product details from a Decathlon product page.

By using this function, you can extract the product information from each product page on Decathlon's website for further analysis or processing.

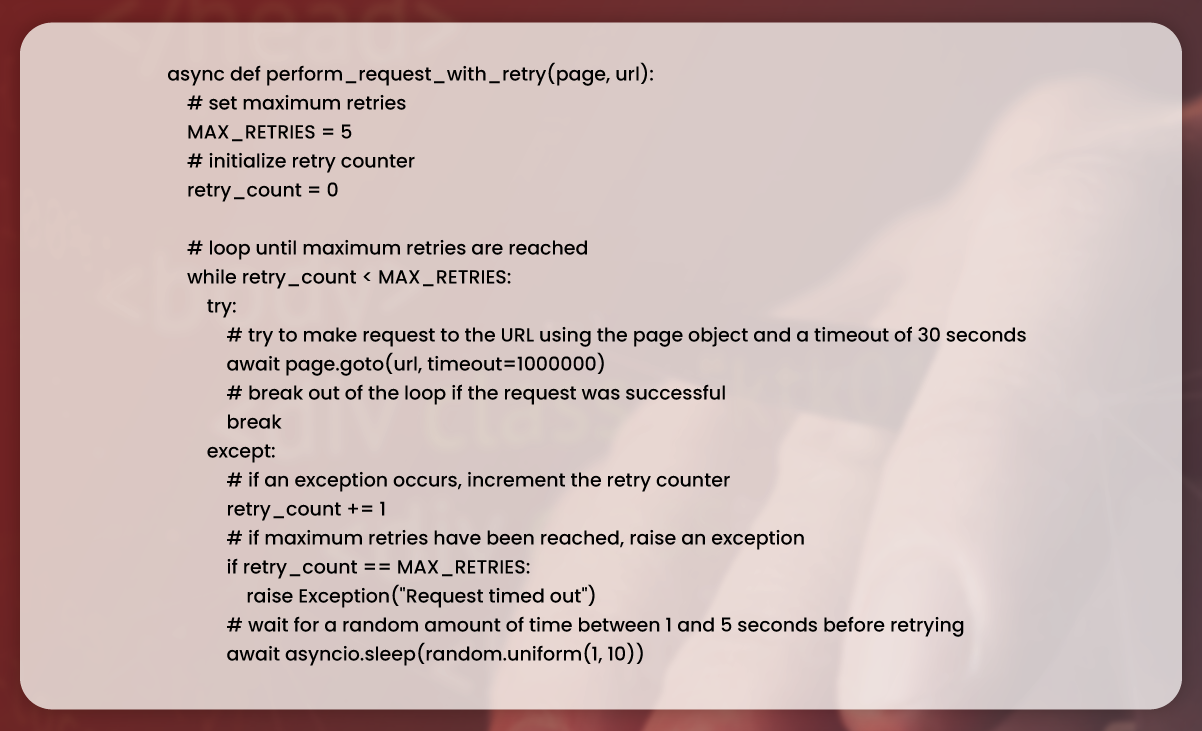

Implementing a retry mechanism is an important aspect of web scraping as it helps handle temporary network errors or unexpected responses from the website. The goal is to increase the chances of success by sending the request again if it fails initially.

In this script, a retry mechanism is applied when navigating to a URL. It uses a while loop that keeps trying to navigate to the URL until either the request succeeds or the maximum number of retries has been reached. If the maximum is reached without a successful request, the script raises an exception to signal the failure.

This function is particularly useful when scraping web pages because requests may occasionally time out or fail due to network issues. By incorporating a retry mechanism, you improve the reliability of the scraping process and increase the likelihood of obtaining the desired data.

The perform_request_with_retry function requests a specific link using the goto method of the Playwright page object. It implements a retry mechanism that allows the request to be retried up to a maximum number of times, defined by the MAX_RETRIES constant.

If the initial request fails, the function retries it inside a while loop. Between retries, the function waits for a random duration using asyncio.sleep. This wait, ranging from 1 to 5 seconds, helps prevent rapid, repeated retries that could make the request failures worse.

The function takes two arguments: link and page. The page argument represents the Playwright page object used to perform the request, while the link argument denotes the URL to which the request is made.

By utilizing this function, you can ensure that requests are retried in case of temporary failures, improving the overall reliability of your web scraping process.
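A minimal sketch of such a function follows. The value of MAX_RETRIES, the argument order, the min_wait and max_wait parameters, and the use of RuntimeError are assumptions; the 1 to 5 second wait range comes from the description above.

```python
import asyncio
import random

MAX_RETRIES = 5  # Assumed value; the text does not state the actual limit.


async def perform_request_with_retry(page, link, max_retries=MAX_RETRIES,
                                     min_wait=1.0, max_wait=5.0):
    """Navigate to link, retrying with a random pause after each failure."""
    retry_count = 0
    while retry_count < max_retries:
        try:
            await page.goto(link)
            return
        except Exception as exc:
            retry_count += 1
            if retry_count == max_retries:
                raise RuntimeError(
                    f"Request to {link} failed after {max_retries} attempts"
                ) from exc
            # Pause for a random duration before the next attempt.
            await asyncio.sleep(random.uniform(min_wait, max_wait))
```

Exposing the wait range as parameters makes it possible to shorten the pauses in tests while keeping the 1 to 5 second default for real runs.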

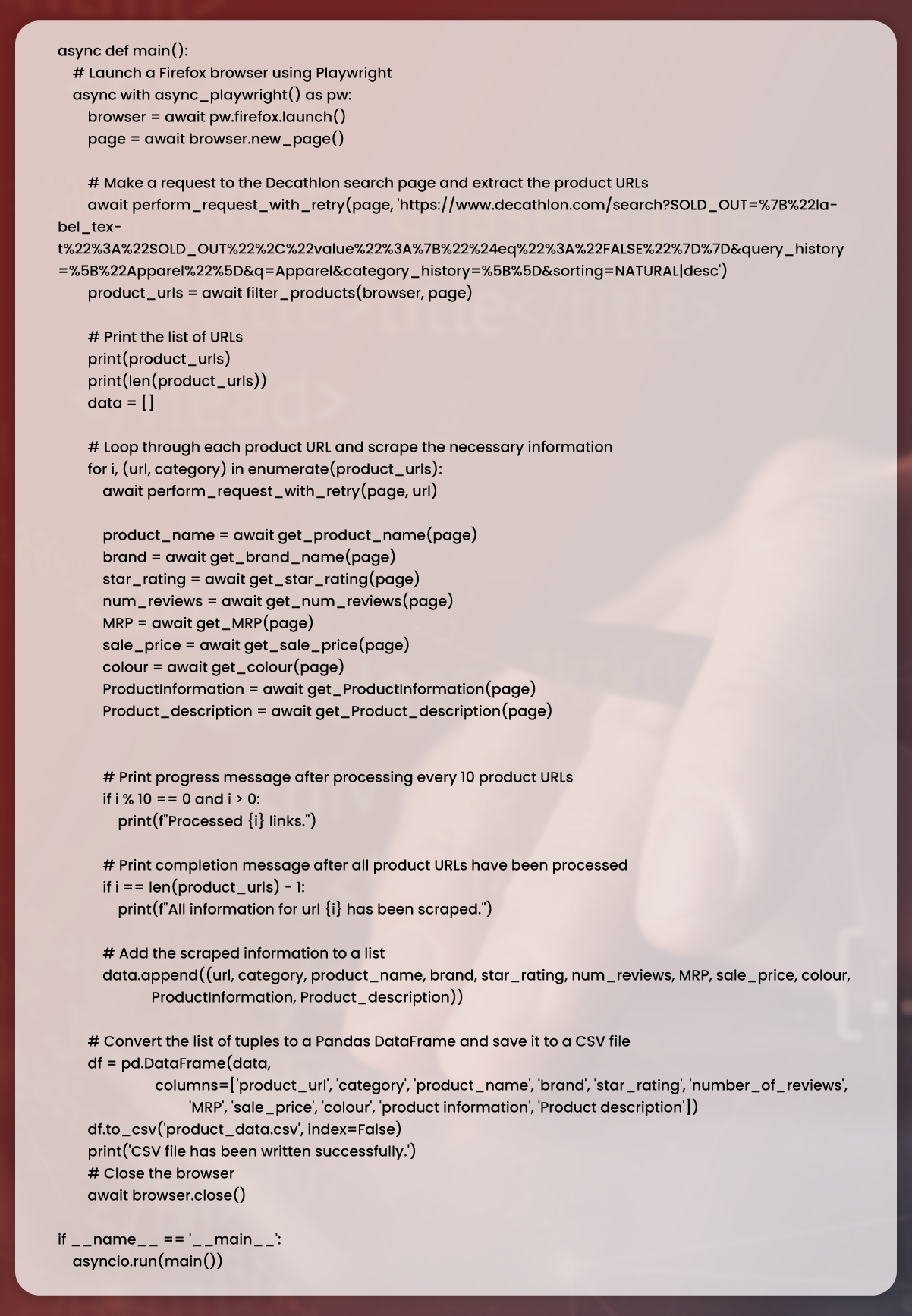

In the following step, we call these functions and save the extracted data to a list.

The provided Python script uses an asynchronous function named "main" to scrape product information from Decathlon pages. It leverages the Playwright library to launch a Firefox browser and navigate to the Decathlon page.

The "main" function follows these steps:

1. It utilizes the "extract_product_urls" function to extract the URLs of each product from the Decathlon page, storing them in a list called "product_url".

2. It then iterates through each product URL in the "product_url" list.

3. For each URL, it uses the "perform_request_with_retry" function to load the product page, making use of a retry mechanism to handle temporary failures.

4. It extracts various information such as the product name, brand, star rating, number of reviews, MRP, sale price, color, features, and product information from each product page.

5. The extracted information is stored as a tuple in a list called "data".

6. After processing every 10 product URLs, it prints a progress message.

7. Once all the product URLs have been processed, it prints a completion message.

8. The data in the "data" list is converted to a Pandas DataFrame.

9. The DataFrame is saved as a CSV file using the "to_csv" method.

10. Finally, the script closes the browser instance using the "browser.close()" statement.

To execute the script, the "main" function is called using the "asyncio.run(main())" statement, which runs the "main" function as an asynchronous coroutine.

By running this script, you can scrape product information from Decathlon pages, store it in a structured format, and save it as a CSV file for further analysis or processing.
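The overall flow can be sketched as below, under several assumptions. The helper scrape_products, the callables get_urls, load_page, and extract_row, and the output file name are hypothetical. Each row is a dict instead of the tuple described above, so that column names travel with the values. Playwright and pandas are imported inside main so that the loop helper can be exercised without either installed.

```python
import asyncio


async def scrape_products(page, product_urls, load_page, extract_row, progress_every=10):
    """Visit each product URL and collect one row of data per product."""
    data = []
    for count, url in enumerate(product_urls, start=1):
        await load_page(page, url)
        data.append(await extract_row(page, url))
        if count % progress_every == 0:
            print(f"Processed {count} of {len(product_urls)} products")
    print(f"Finished scraping {len(data)} products")
    return data


async def main(category_url, get_urls, load_page, extract_row,
               output_path="decathlon_products.csv"):
    # Imported here so scrape_products can be used without these packages.
    import pandas as pd
    from playwright.async_api import async_playwright

    async with async_playwright() as pw:
        browser = await pw.firefox.launch(headless=True)
        page = await browser.new_page()
        await load_page(page, category_url)
        product_urls = await get_urls(page)
        data = await scrape_products(page, product_urls, load_page, extract_row)
        await browser.close()

    # Each row is a dict, so the keys become the CSV column names.
    pd.DataFrame(data).to_csv(output_path, index=False)
```

To run it, pass the category URL and the three coroutines to asyncio.run(main(...)). Here load_page can be any coroutine that accepts (page, url), such as a retrying navigation function, and extract_row should return a dict of the attributes scraped from one product page.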

In today's competitive business landscape, having access to accurate and up-to-date data is crucial for making informed decisions. Web scraping offers a practical way to extract data from websites like Decathlon, providing insights into market trends, pricing, and competitor activity.

Businesses can automate collecting data from Decathlon's website using tools like Playwright and Python. This enables them to gather information such as product offerings, pricing, reviews, and more, which can be used to gain a competitive edge and drive growth.

Partnering with a reputable web scraping company like Actowiz Solutions can further enhance the benefits of web scraping. Actowiz Solutions offers tailored web scraping solutions that cater to specific business needs, providing access to comprehensive and relevant data. From product details to pricing information and customer reviews, Actowiz Solutions enables brands to understand their industry and competition deeply.

By leveraging the power of web data, businesses can make data-driven decisions, optimize their operations, and drive profitability. Actowiz Solutions can provide the necessary expertise and tools to extract valuable data, whether for product development, market research, or marketing campaigns.

If you're ready to unlock the potential of web data for your brand, reach out to Actowiz Solutions, the experts in web scraping. Contact us today to explore how web scraping can revolutionize your business and give you a competitive advantage.

For all your web scraping, mobile app scraping, or instant data scraper service needs, Actowiz Solutions is here to assist you. Our team of experts is skilled in extracting data from various sources, including websites and mobile applications. Whether you need to gather market data, competitor information, or any other specific data set, we have the expertise and tools to deliver accurate and reliable results.
