In the wake of global health concerns, data-driven insights play a crucial role in tracking and managing disease outbreaks. Monkeypox data analysis with Python lets researchers, healthcare professionals, and data analysts extract and process up-to-date information for better decision-making. By scraping Monkeypox statistics from the web, organizations can collect data from multiple sources to support accurate epidemiological tracking.

Importance of Monkeypox Data Extraction

Accurate and timely data extraction for Monkeypox is crucial in managing outbreaks and mitigating the disease’s impact. By systematically collecting and analyzing case data, healthcare professionals, researchers, and policymakers can make informed decisions to protect public health.

Key Benefits of Monkeypox Data Extraction

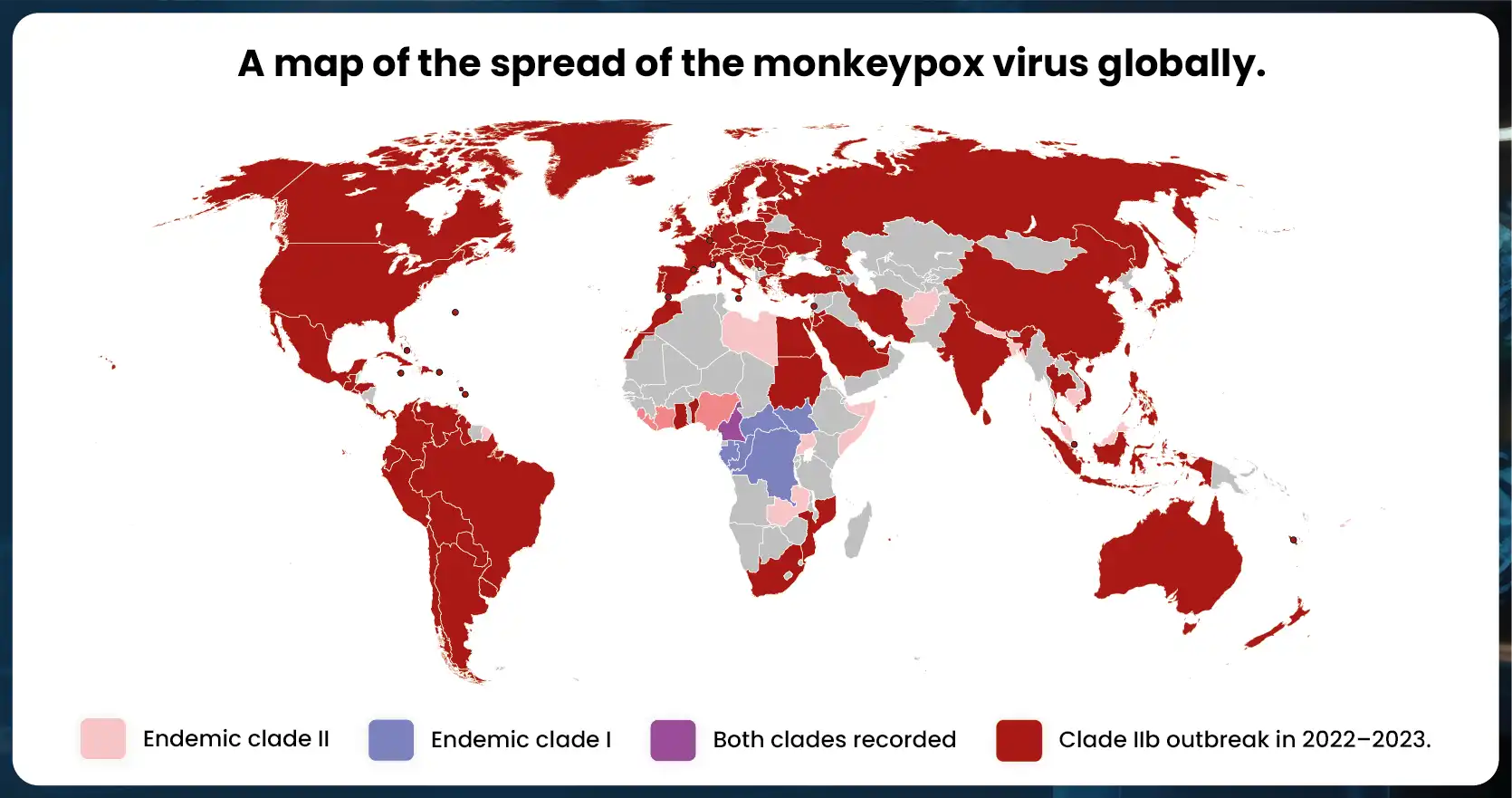

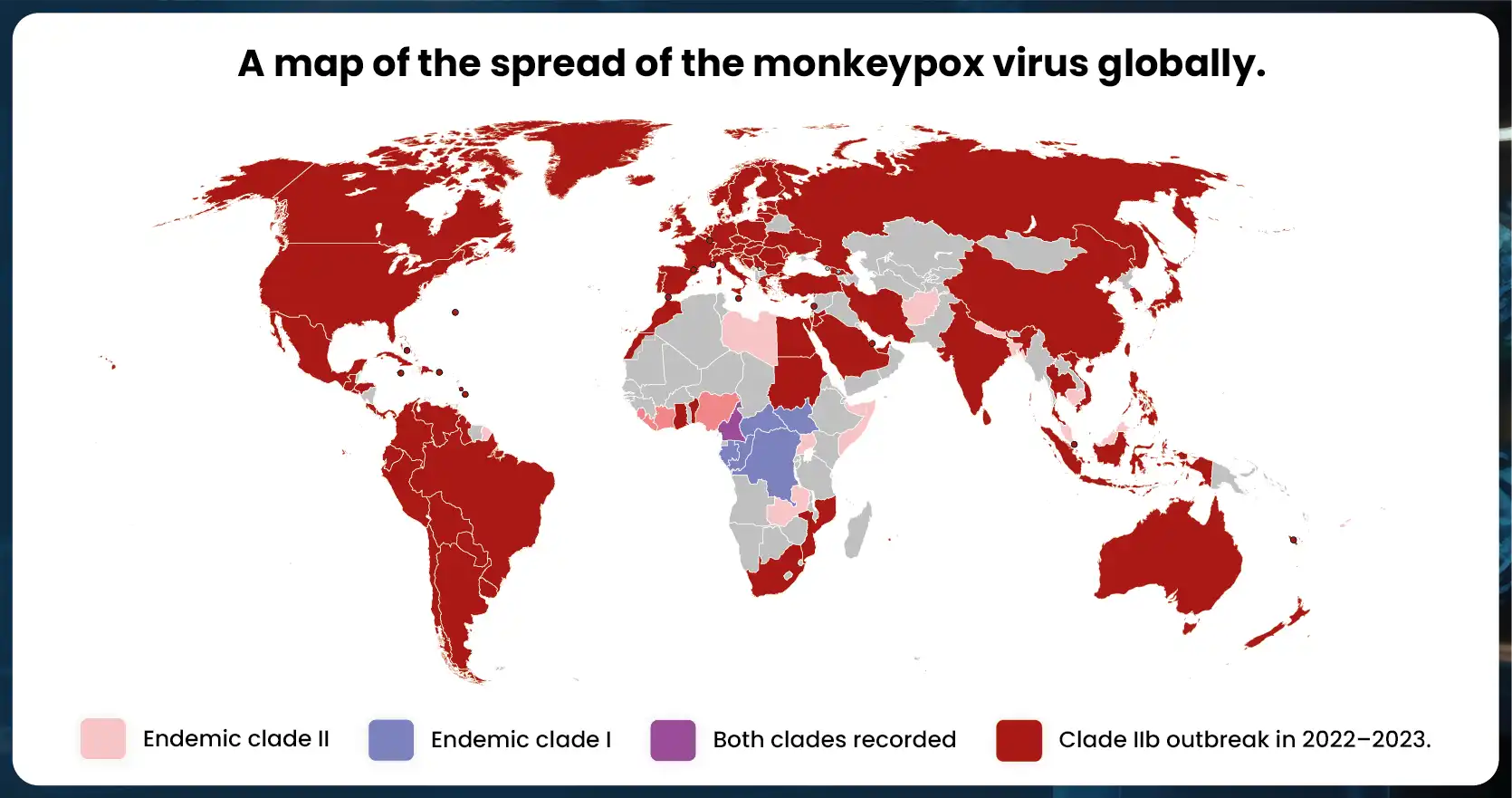

- Tracking Disease Spread: Monitoring case numbers across different regions helps identify affected areas and detect potential hotspots. This facilitates rapid intervention and containment strategies.

- Identifying High-Risk Areas: By analyzing demographic and geographical data, authorities can determine which regions and populations are most vulnerable. This aids in targeted public health measures, such as vaccination drives and awareness campaigns.

- Understanding Infection Trends and Mortality Rates: Analyzing historical and real-time data enables researchers to recognize patterns in transmission, recovery, and fatality rates. This information is vital for analyzing Monkeypox trends using Pandas, predicting future outbreaks, and refining treatment protocols.

- Assisting in Resource Allocation: Healthcare facilities rely on accurate Monkeypox data analysis with Python to plan for sufficient medical resources, including hospital beds, medications, and staff. Proper data mining enhances preparedness and response strategies.

Leveraging Pandas for Data Extraction

With Pandas, data scientists can transform raw Monkeypox datasets into structured formats suitable for analysis. This allows for:

- Efficient data cleaning and organization

- Generating insightful visualizations

- Performing statistical analysis

- Producing comprehensive reports for decision-making
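As a minimal sketch of the statistical-analysis and reporting points above, the snippet below loads a few records into a DataFrame and derives a case fatality rate per country. The country names and counts are illustrative placeholders rather than real surveillance figures, and the `CFR_pct` column name is an assumption made for this example.

```python
import pandas as pd

# Illustrative placeholder records, not real surveillance figures.
records = [
    {"Country": "Country A", "Cases": 1200, "Deaths": 6},
    {"Country": "Country B", "Cases": 300, "Deaths": 0},
    {"Country": "Country C", "Cases": 50, "Deaths": 1},
]

df = pd.DataFrame(records)

# Case fatality rate as a percentage of reported cases.
df["CFR_pct"] = (df["Deaths"] / df["Cases"] * 100).round(2)

# Overall totals and the highest-CFR country for a short summary report.
summary = {
    "total_cases": int(df["Cases"].sum()),
    "total_deaths": int(df["Deaths"].sum()),
    "highest_cfr_country": df.loc[df["CFR_pct"].idxmax(), "Country"],
}

print(df)
print(summary)
```

A summary dictionary like this can be written to a report or dashboard as-is; with real data, small case counts make the fatality rate unstable, so it is worth reporting the underlying counts alongside it.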

Web Scraping Monkeypox Data

Using Python web scraping for Monkeypox data, researchers can automate the collection of case statistics from various sources. Web scraping services and web crawling techniques extract real-time information for further analysis.

By combining web scraping of Monkeypox statistics with data extraction techniques, stakeholders can enhance their understanding of Monkeypox outbreaks and contribute to more effective disease control measures.

Tools & Technologies for Web Scraping Monkeypox Data

To effectively gather and analyze Monkeypox case statistics, developers and data scientists utilize a combination of web scraping services, data extraction techniques, and powerful Python libraries. These tools help automate data collection, ensuring accurate and real-time insights into the disease’s spread.

1. Python & Web Scraping Libraries

Python web scraping for Monkeypox data is a widely used approach for collecting information from various online sources, including government websites, research articles, and news reports. Developers rely on the following libraries:

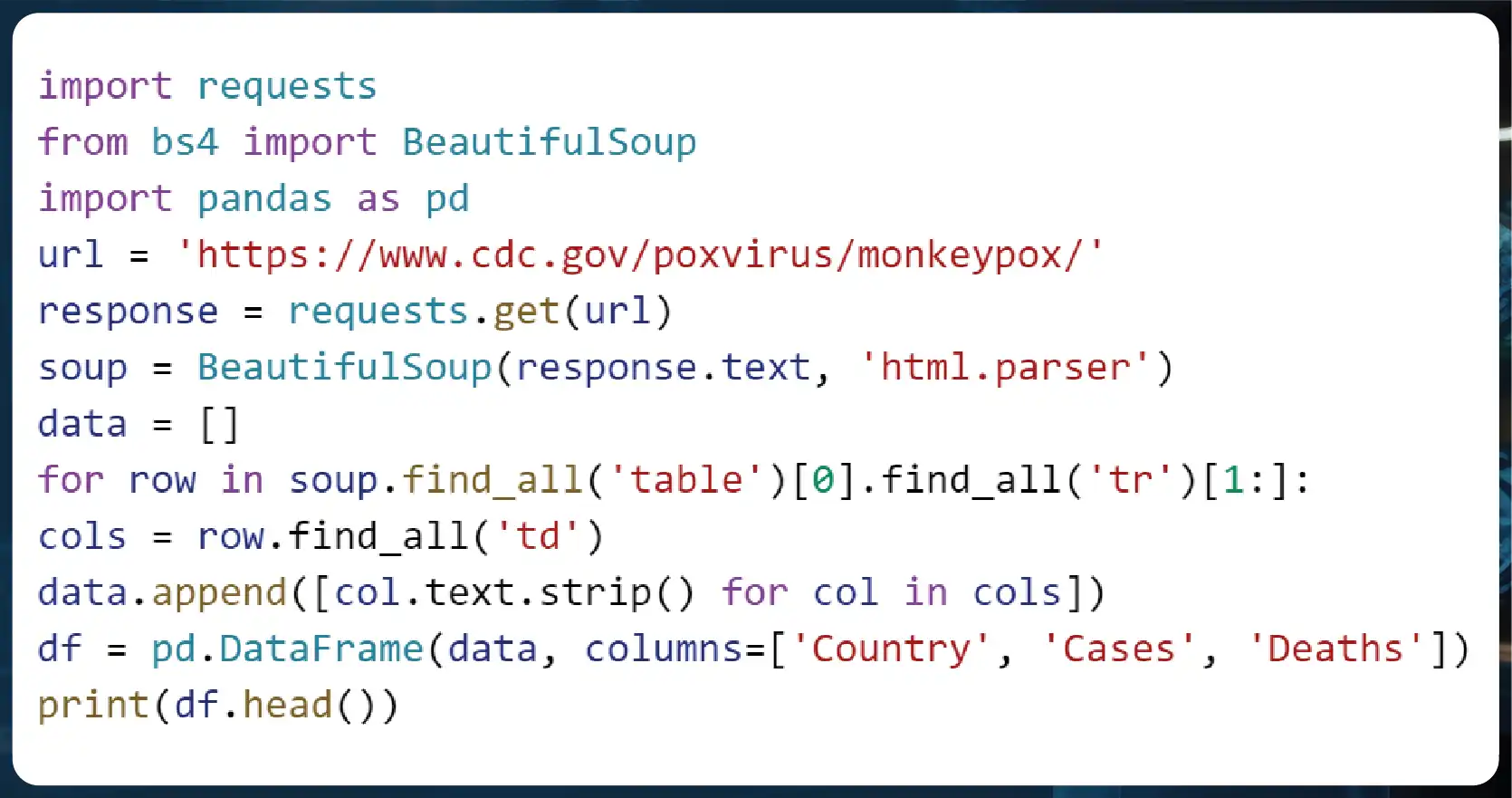

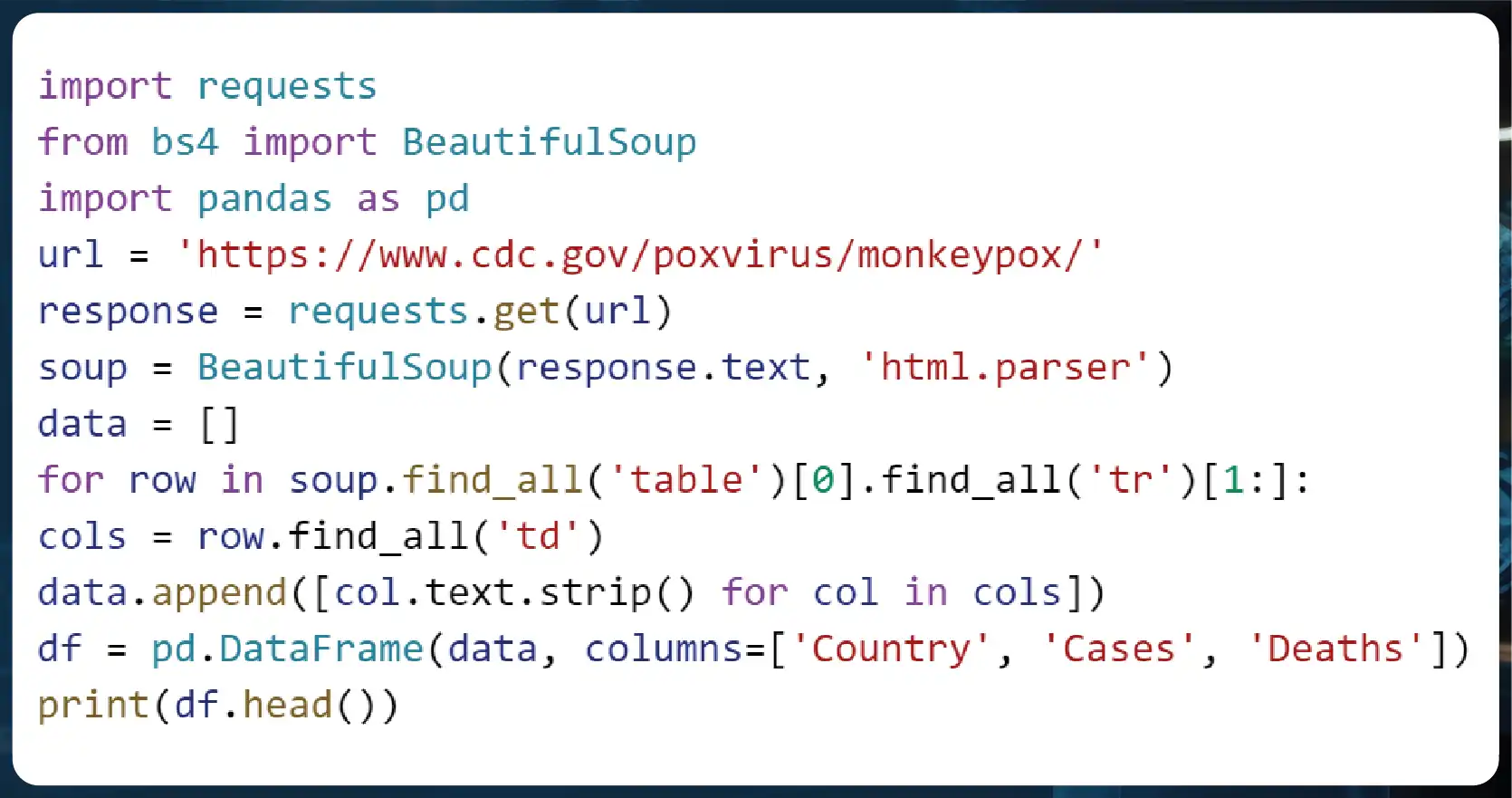

- BeautifulSoup – Parses HTML/XML documents that have already been downloaded, enabling structured data extraction.

- Scrapy – A robust web scraping framework that automates large-scale data mining tasks.

- Selenium – Ideal for scraping dynamic websites that require JavaScript execution.

- Requests – A simple library for handling HTTP requests and retrieving web content efficiently.

By leveraging BeautifulSoup to scrape Monkeypox information, researchers can extract essential details such as case numbers, reported symptoms, and region-wise statistics from multiple online sources. This facilitates data-driven decision-making and real-time monitoring of Monkeypox outbreaks.

2. Pandas for Data Analysis

Once the data is extracted, Pandas is used to process, clean, and analyze the information. A Pandas tutorial for Monkeypox data extraction can help data scientists transform raw data into meaningful insights. Key benefits include:

- Structuring extracted data into Pandas DataFrames for Monkeypox case statistics – Ensures that scraped data is organized, structured, and easily accessible for further processing.

- Performing trend analysis with Pandas – Helps researchers identify outbreak patterns, peak infection periods, and recovery trends.

- Data visualization for pattern recognition – Enables the creation of insightful graphs and charts for better interpretation of Monkeypox trends.

With Pandas, analysts can efficiently clean datasets, remove inconsistencies, and extract valuable insights. This structured approach improves the accuracy and reliability of Monkeypox data analysis with Python, allowing researchers to make informed predictions about the disease’s progression.
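The trend analysis described in this section might look like the following sketch. It assumes a daily series of new cases for a single hypothetical region; the dates, counts, and column names (`NewCases`, `Rolling3`, `Cumulative`) are invented for illustration.

```python
import pandas as pd

# Illustrative daily counts for one hypothetical region, not real data.
daily = pd.DataFrame(
    {
        "Date": pd.date_range("2024-01-01", periods=10, freq="D"),
        "NewCases": [2, 4, 3, 8, 12, 15, 11, 9, 6, 4],
    }
).set_index("Date")

# A 3-day rolling mean smooths day-to-day reporting noise.
daily["Rolling3"] = daily["NewCases"].rolling(window=3).mean()

# Running total of cases, and the day with the highest raw count.
daily["Cumulative"] = daily["NewCases"].cumsum()
peak_day = daily["NewCases"].idxmax()

print(daily)
print("Peak day:", peak_day.date())
```

The first two rolling values are empty because a 3-day window needs three observations; a 7-day window is a common choice for real surveillance data because it evens out weekly reporting cycles.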

Enhancing Web Scraping with Automation

To improve efficiency, advanced web scraping services integrate data mining techniques, automating the entire pipeline from data collection to storage and analysis. Combining web crawling with Python web scraping for Monkeypox data ensures continuous monitoring and up-to-date reporting.

By utilizing these technologies, stakeholders can enhance disease surveillance, allocate healthcare resources effectively, and contribute to global health initiatives aimed at controlling Monkeypox outbreaks.

Step-by-Step Guide to Extracting Monkeypox Data

Step 1: Identifying Data Sources

To begin scraping health data for Monkeypox analysis, researchers need to identify credible sources such as:

- World Health Organization (WHO)

- Centers for Disease Control and Prevention (CDC)

- Government health websites

- Research journals and reports

Step 2: Writing Python Scripts for Data Scraping

Developers can write Python scripts for Monkeypox data scraping using BeautifulSoup and Requests.
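One possible shape for such a script is sketched below. It is not taken from any particular source site: the URL is a hypothetical placeholder, and the parser assumes the page exposes an HTML table whose first three columns are country, cases, and deaths. Real pages will need their own selectors, and their terms of use and robots.txt should be checked before scraping.

```python
import requests
from bs4 import BeautifulSoup

# Hypothetical placeholder URL; replace with a page you are permitted to scrape.
SOURCE_URL = "https://example.org/monkeypox-cases"


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Download a page and return its HTML, raising on HTTP errors."""
    response = requests.get(url, timeout=timeout)
    response.raise_for_status()
    return response.text


def parse_case_table(html: str) -> list:
    """Extract rows from the first <table>, assuming Country/Cases/Deaths columns."""
    soup = BeautifulSoup(html, "html.parser")
    table = soup.find("table")
    if table is None:
        return []
    rows = []
    for tr in table.find_all("tr"):
        cells = [td.get_text(strip=True) for td in tr.find_all("td")]
        if len(cells) >= 3:  # header rows use <th>, so they yield no <td> cells
            rows.append({"Country": cells[0], "Cases": cells[1], "Deaths": cells[2]})
    return rows


# Inline sample with illustrative values, so the parser can be tried offline.
SAMPLE_HTML = """
<table>
  <tr><th>Country</th><th>Cases</th><th>Deaths</th></tr>
  <tr><td>Country A</td><td>1,200</td><td>6</td></tr>
  <tr><td>Country B</td><td>300</td><td>0</td></tr>
</table>
"""

rows = parse_case_table(SAMPLE_HTML)
print(rows)

# For a live page: rows = parse_case_table(fetch_html(SOURCE_URL))
```

Keeping the download and the parsing in separate functions means the parser can be tested against saved HTML without touching the network. The values come back as strings (including thousands separators), which is why a cleaning step follows later in the guide.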

Step 3: Automating Data Collection

By automating Monkeypox data collection with Python, organizations can schedule periodic scrapes to keep their databases updated. Scheduling tools such as Apache Airflow and cron can run these jobs at fixed intervals.
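A scheduled job needs somewhere to accumulate results between runs. The sketch below, which uses only the standard library, appends each scrape to a CSV file with a timestamp. The function name `append_snapshot`, the column layout, and the script path in the cron comment are assumptions for illustration, not part of any existing tool.

```python
import csv
from datetime import datetime, timezone
from pathlib import Path

FIELDNAMES = ["scraped_at", "Country", "Cases", "Deaths"]


def append_snapshot(rows, csv_path, scraped_at=None):
    """Append one timestamped line per record; write the header only once."""
    path = Path(csv_path)
    stamp = (scraped_at or datetime.now(timezone.utc)).isoformat()
    write_header = not path.exists() or path.stat().st_size == 0
    with path.open("a", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=FIELDNAMES)
        if write_header:
            writer.writeheader()
        for row in rows:
            writer.writerow({"scraped_at": stamp, **row})
    return len(rows)


# Example cron entry (hypothetical script path) to run a collector daily at 06:00:
#   0 6 * * * /usr/bin/python3 /path/to/collect_monkeypox.py
```

Appending rather than overwriting preserves a history of snapshots, which is what later trend analysis depends on. An Airflow task could call the same function from a `PythonOperator`.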

Step 4: Data Cleaning & Transformation

After extraction, Pandas helps clean and structure the data:

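```python
# Assumes df is the DataFrame of scraped rows with 'Cases' and 'Deaths' columns.
# Drop rows with missing values before converting types.
df.dropna(inplace=True)

# Store case and death counts as integers.
df['Cases'] = df['Cases'].astype(int)
df['Deaths'] = df['Deaths'].astype(int)
```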
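Scraped counts often arrive as strings with thousands separators or placeholders such as "n/a", which a direct integer conversion would reject. A more defensive variant of the cleaning step might look like this sketch; the sample values are illustrative, and `pd.to_numeric` with `errors="coerce"` is swapped in for the plain `astype(int)` call.

```python
import pandas as pd

# Illustrative scraped values as strings, not real data.
raw = pd.DataFrame(
    {
        "Country": ["Country A", "Country B", "Country C", None],
        "Cases": ["1,200", "300", "n/a", "45"],
        "Deaths": ["6", "0", "1", "0"],
    }
)

clean = raw.copy()
for column in ["Cases", "Deaths"]:
    # Strip thousands separators, then turn anything non-numeric into NaN.
    stripped = clean[column].str.replace(",", "", regex=False)
    clean[column] = pd.to_numeric(stripped, errors="coerce")

# Drop rows missing a country or a count, then store counts as integers.
clean = clean.dropna(subset=["Country", "Cases", "Deaths"])
clean = clean.astype({"Cases": int, "Deaths": int}).reset_index(drop=True)

print(clean)
```

Coercing first and dropping afterwards makes it easy to log how many rows were discarded, which is a useful data-quality signal when a source page changes its layout.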

Step 5: Data Visualization

To make insights more accessible, visualize Monkeypox cases with Pandas and Matplotlib:

```python
import matplotlib.pyplot as plt

# Bar chart of case counts per country, drawn from the cleaned DataFrame df.
df.plot(kind='bar', x='Country', y='Cases', title='Monkeypox Cases by Country')
plt.show()
```
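When the chart is produced by a scheduled job rather than in an interactive session, there is no window for `plt.show()` to open. The sketch below assumes that situation: it selects Matplotlib's non-interactive `Agg` backend and saves the figure to a file instead. The data values and the output filename are placeholders.

```python
import matplotlib

matplotlib.use("Agg")  # non-interactive backend, suitable for servers and cron jobs
import matplotlib.pyplot as plt
import pandas as pd

# Illustrative values, not real data.
df = pd.DataFrame(
    {
        "Country": ["Country B", "Country A", "Country C"],
        "Cases": [300, 1200, 50],
    }
)

output_path = "monkeypox_cases.png"  # placeholder filename

# Keep the ten countries with the most cases, largest first.
top = df.sort_values("Cases", ascending=False).head(10)

ax = top.plot(
    kind="barh", x="Country", y="Cases", legend=False,
    title="Monkeypox Cases by Country",
)
ax.invert_yaxis()  # put the largest bar at the top
ax.set_xlabel("Reported cases")

fig = ax.get_figure()
fig.tight_layout()
fig.savefig(output_path, dpi=150)
plt.close(fig)  # release memory in long-running jobs
```

Horizontal bars keep long country names readable, and limiting the chart to the top entries avoids an overcrowded axis when many countries are present.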

Future Trends in Monkeypox Data Scraping (2025-2030)


| Year | Data Sources Growth (%) | AI Adoption for Data Mining (%) |
| --- | --- | --- |
| 2025 | 20% | 30% |
| 2026 | 25% | 40% |
| 2027 | 30% | 50% |
| 2028 | 35% | 60% |
| 2029 | 40% | 70% |
| 2030 | 50% | 80% |

Why Choose Actowiz Solutions for Web Scraping Services?

At Actowiz Solutions, we specialize in Web Scraping Services tailored for healthcare and epidemiology. Our expertise includes:

- High-Quality Data Extraction – Accurate and structured datasets

- Automated Data Mining – Continuous updates for real-time insights

- Scalable Solutions – Custom data pipelines for research institutions

- Security & Compliance – Ensuring ethical data scraping

With our cutting-edge Web Scraping API Services, businesses can easily integrate real-time Monkeypox case data into their analytics platforms.

Conclusion

As healthcare challenges evolve, data-driven decision-making becomes more critical than ever. By scraping CDC Monkeypox updates with Python, organizations can stay ahead with up-to-date insights. Whether you need data extraction, data mining, or web crawling, Actowiz Solutions has the expertise to support your analytical needs.

Ready to streamline your epidemiological data collection? Contact Actowiz Solutions today for expert Web Scraping Services! You can also reach us for all your mobile app scraping, data collection, web scraping, and instant data scraper service requirements!
