In Part 1 of this two-part series on scraping e-commerce websites for price comparison, we used the Selenium package for Python to automate extracting product names and prices from the Lazada website.

In Part 2, we continue with the Shopee website. Rather than repeat the steps from Part 1, we focus on the challenges specific to scraping Shopee. We also introduce an alternative to Selenium that worked better!

So, let’s begin!

Scraping the Shopee website wasn’t easy with Selenium, and we identified four extra complexities that the Shopee website had and the Lazada website didn’t:

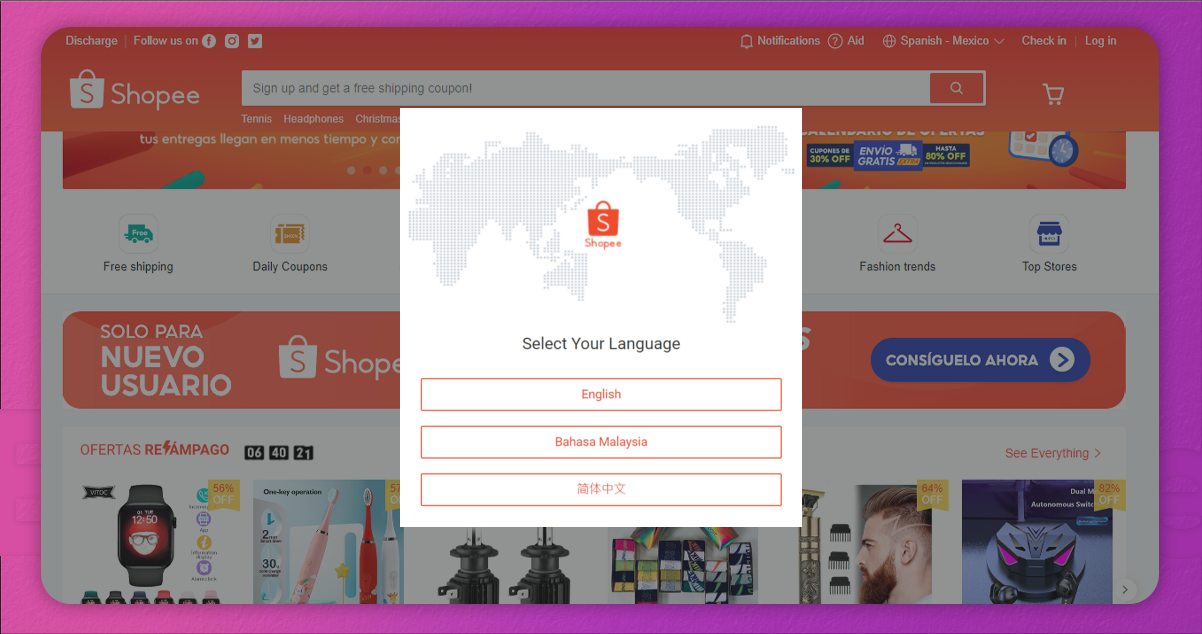

Popup Alerts (Extra Complexity = Low)

The first issue we encounter is popup alerts, which appear when you search:

We can automate clicking away the popup boxes using Selenium with the following script:

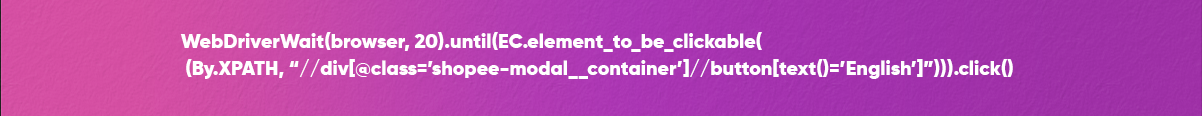
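A minimal sketch of the same idea, assuming a Selenium driver is already on the search results page. `POPUP_CLOSE_SELECTOR` is a hypothetical placeholder, not Shopee's real class name; inspect the page to find the actual close button. The helper polls for the button and clicks it once it becomes visible:

```python
import time

# Hypothetical selector: replace with the close button's real class from the inspect tool.
POPUP_CLOSE_SELECTOR = "div.popup__close-btn"


def dismiss_popup(driver, selector=POPUP_CLOSE_SELECTOR, timeout_s=5.0, poll_s=0.25):
    """Click the popup's close button if it shows up within timeout_s seconds.

    Returns True if a button was clicked, False if none appeared in time.
    """
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        # "css selector" is the value of Selenium's By.CSS_SELECTOR constant.
        for button in driver.find_elements("css selector", selector):
            if button.is_displayed():
                button.click()
                return True
        time.sleep(poll_s)  # give the popup a moment to render before checking again
    return False
```

Call `dismiss_popup(driver)` right after submitting a search. Selenium's `WebDriverWait` combined with `expected_conditions.element_to_be_clickable` provides the same polling; the hand-rolled loop only keeps the sketch free of extra imports.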

We also found that, in Shopee search results, a single item sometimes shows two different price figures under the same class name. The two figures represent a price range, for example when an item has a volume discount:

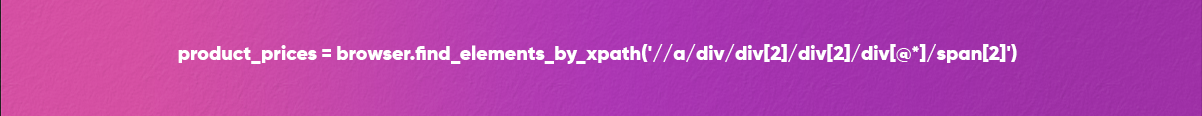

Using Selenium, we can specify the exact figure we need with an XPath selector that picks the second span element, which holds the first figure:

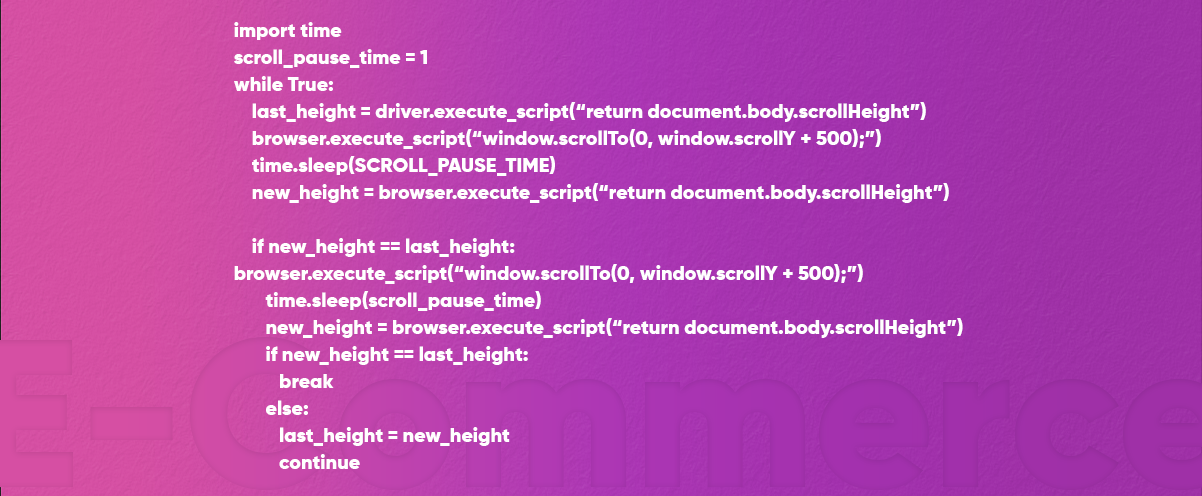
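To illustrate the positional indexing on a static snippet, here is a minimal sketch using Python's built-in ElementTree, which supports a subset of XPath. The markup and class names are hypothetical (a currency-symbol span followed by an amount span for each end of the range), not Shopee's real HTML. In Selenium, the same expression would be passed to `driver.find_element(By.XPATH, ...)`:

```python
import xml.etree.ElementTree as ET

# Hypothetical markup for one product card whose price is shown as a range.
SAMPLE_CARD = """
<div class="item">
  <div class="price">
    <span class="currency">$</span><span class="amount">12.90</span>
    <span class="sep">-</span>
    <span class="currency">$</span><span class="amount">15.90</span>
  </div>
</div>
"""


def first_price(card_xml):
    """Return the lower figure of a price range as a float."""
    root = ET.fromstring(card_xml.strip())
    # span[2] is the second <span> child of the price div (the first amount),
    # skipping the leading currency-symbol span.
    amount = root.find(".//div[@class='price']/span[2]")
    return float(amount.text)
```

Indexing by position is brittle if the layout changes, but it is the simplest way to tell apart elements that share a class name.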

Shopee is a dynamic site: page elements load only as you scroll down the page. This isn’t unusual, since it lets the page load faster without loading every element up front (Facebook works in a similar way).

However, this means we need to automate scrolling to the bottom of the page, as you would do manually, with short waits so that all page elements can appear.

Selenium can automate browser scrolling, but the script for this can get lengthy because it has to imitate the manual process: scroll a little, wait a few seconds for page elements to appear, and repeat until you reach the end of the page.

We could write the script like this:

Here we can see that the code has become much more complex, and the automation has also become slower because of the extra pauses.

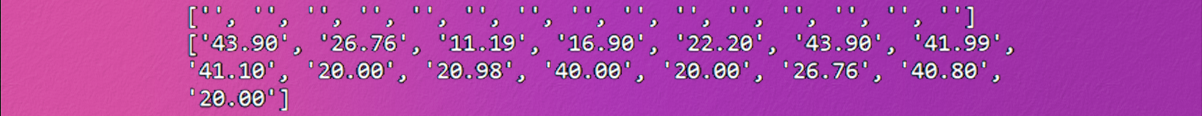
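For reference, a minimal sketch of such a scroll loop, assuming a Selenium driver. The values of `step_px`, `pause_s` and `max_rounds` are illustrative and need tuning; they are not taken from the original script. It scrolls by a fixed step with `execute_script`, waits, then asks the browser whether the viewport has reached the bottom:

```python
import time


def scroll_to_bottom(driver, step_px=800, pause_s=2.0, max_rounds=50):
    """Scroll down step_px pixels at a time, pausing so lazily loaded items can render.

    Returns True once the viewport reaches the bottom of the page, or False if
    max_rounds scrolls were not enough.
    """
    for _ in range(max_rounds):
        driver.execute_script("window.scrollBy(0, arguments[0]);", step_px)
        time.sleep(pause_s)  # wait for newly revealed elements to load
        at_bottom = driver.execute_script(
            "return window.innerHeight + window.pageYOffset "
            ">= document.body.scrollHeight;"
        )
        if at_bottom:
            return True
    return False
```

Checking the bottom only after each pause matters here: the page grows as new items load, so a check made before the wait could stop too early.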

As observed earlier, the product names can’t be extracted, even though they can be located with either XPath or class selectors and are visible in the Chrome inspect tool. As a result, running find_element doesn’t return the expected item names, only empty strings.

Working around this would require writing some JavaScript to manipulate a CSS property, a language we are not very familiar with.

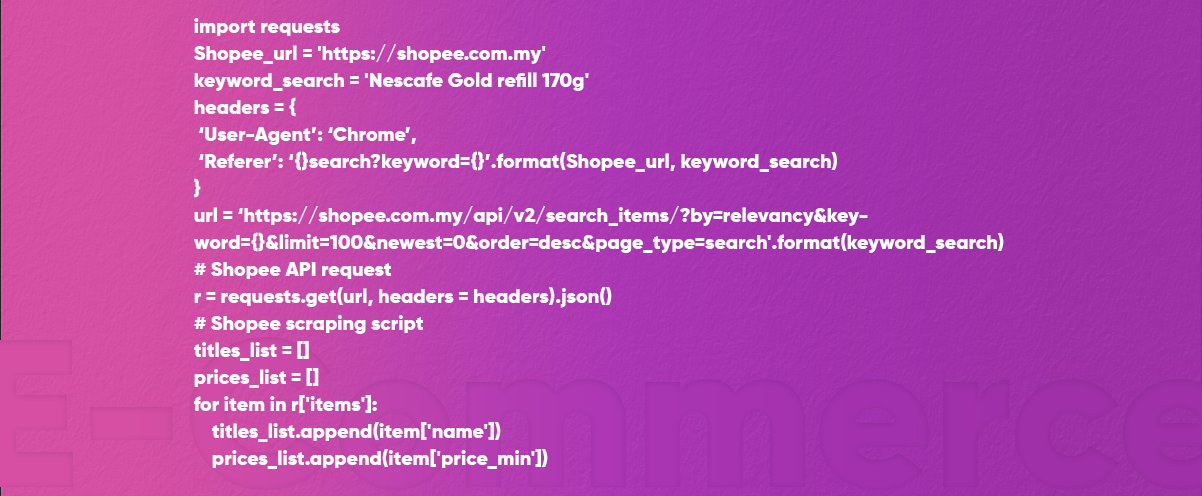
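One lighter workaround, assuming the names are present in the DOM but hidden by CSS, is to read the DOM `textContent` property instead of Selenium's `.text`. This is a swapped-in technique, not the JavaScript/CSS approach described above: `.text` returns only visibly rendered text, while `textContent` ignores CSS visibility. The selector passed to `read_names` is a hypothetical placeholder:

```python
def read_text(element):
    """Return an element's text, falling back to the DOM textContent property.

    Selenium's .text only returns text that is rendered visibly, so CSS-hidden
    text comes back as an empty string; textContent ignores CSS visibility.
    """
    text = (element.text or "").strip()
    if not text:
        text = (element.get_attribute("textContent") or "").strip()
    return text


def read_names(driver, selector):
    """Read every matching element's text; selector is a placeholder to fill in."""
    # "css selector" is the value of Selenium's By.CSS_SELECTOR constant.
    return [read_text(el) for el in driver.find_elements("css selector", selector)]
```

If the names turn out not to be in the DOM at all, this fallback also returns empty strings, and the API route below is the better option.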

Fortunately, we found an easier way to scrape Shopee data: using Shopee’s API to request search results.

We were very lucky to find this online; not every website exposes or shares an API. Because Shopee’s API lets you retrieve product information directly, it is much easier to use than automating the extraction with Selenium. We used the following code:

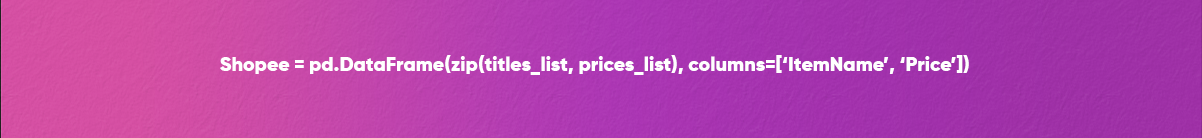
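A minimal sketch of the request, for illustration only. The endpoint, query parameters, response keys (`items`, `name`, `price`) and the price scale of 100000 are assumptions not confirmed by this article; the API is unofficial and may change or block automated clients, so check the real request in your browser’s network tab first. `fetch_json` makes a live HTTP call, while URL building and parsing work offline:

```python
import json
import urllib.parse
import urllib.request

# Assumed endpoint and parameters; verify them in the browser's network tab first.
SEARCH_URL = "https://shopee.sg/api/v2/search_items/"
PRICE_SCALE = 100_000  # assumption: prices returned as integers scaled by 100000


def build_search_url(keyword, limit=50, newest=0):
    """Build the search request URL; newest is the result offset for paging."""
    params = {
        "by": "relevancy",
        "keyword": keyword,
        "limit": limit,
        "newest": newest,
        "order": "desc",
        "page_type": "search",
    }
    return f"{SEARCH_URL}?{urllib.parse.urlencode(params)}"


def fetch_json(url, timeout_s=10):
    """GET the URL and decode the JSON body (performs a real network request)."""
    request = urllib.request.Request(url, headers={"User-Agent": "Mozilla/5.0"})
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        return json.load(response)


def parse_items(payload):
    """Turn the (assumed) response shape into a list of name/price rows."""
    rows = []
    for item in payload.get("items") or []:
        rows.append({
            "product_name": item["name"],
            "price": item["price"] / PRICE_SCALE,
        })
    return rows
```

For example, `parse_items(fetch_json(build_search_url("instant coffee")))` would return a list of dicts ready to be loaded into pandas; the keyword is illustrative.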

Next, we create a pandas DataFrame to organize all the data:

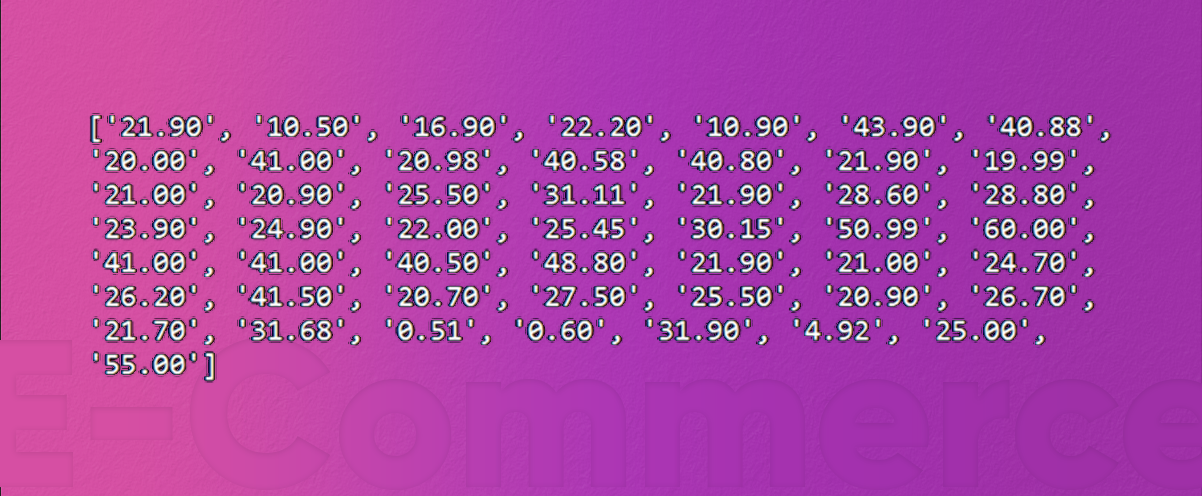

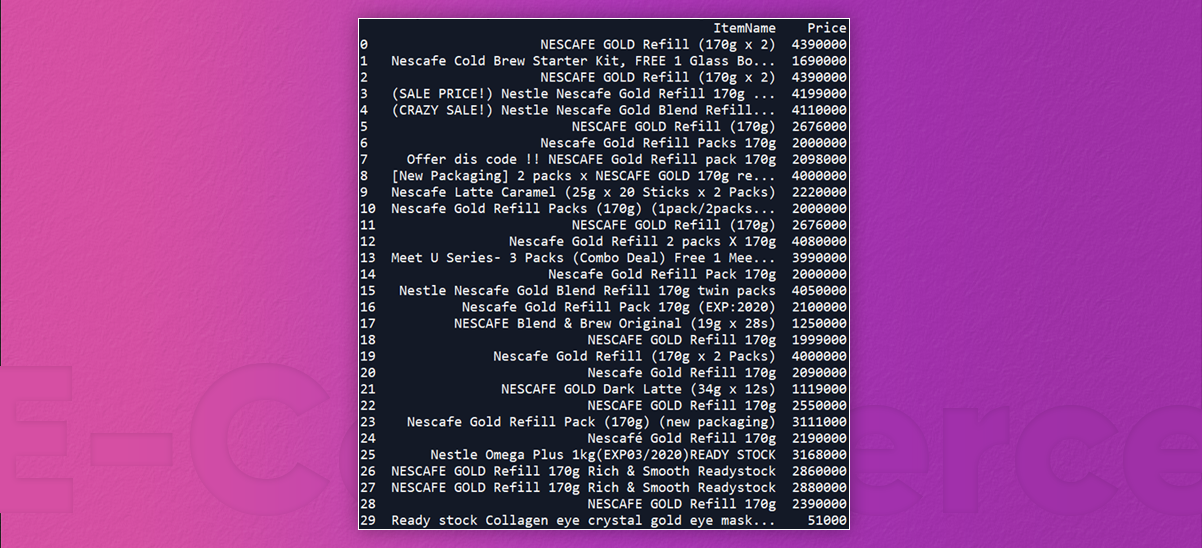

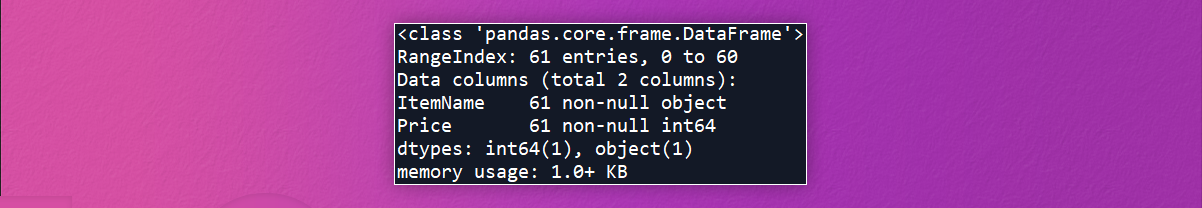
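A minimal sketch of this step, assuming each scraped result is a dict with `product_name` and `price` keys (hypothetical column names). Coercing the price column up front means a malformed value shows up as NaN instead of silently staying a string:

```python
import pandas as pd


def to_dataframe(rows):
    """Organize scraped rows (dicts with product_name and price) into a DataFrame."""
    df = pd.DataFrame(rows, columns=["product_name", "price"])
    df["price"] = pd.to_numeric(df["price"], errors="coerce")  # non-numeric prices become NaN
    return df
```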

Printing the DataFrame gives the following results:

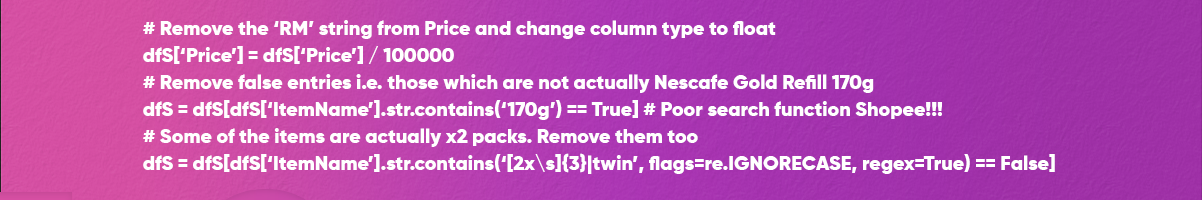

As with the Lazada dataset, we also need to clean this dataset. The key steps include:

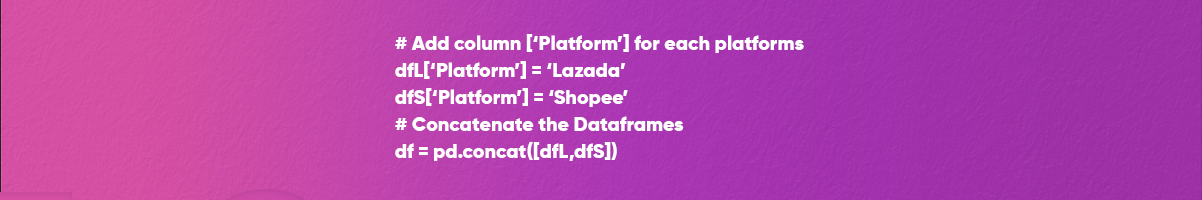
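The exact cleaning steps are in the image above; as a hypothetical example of a typical pass, here is a minimal sketch that turns price strings into numbers, keeps only listings that mention the searched product, and drops duplicates. The function name, the `must_contain` filter and the price formats it handles are assumptions for illustration:

```python
import pandas as pd


def clean_dataset(df, must_contain):
    """Hypothetical cleaning pass: numeric prices, relevant rows only, no duplicates."""
    out = df.copy()
    # Strip currency symbols and thousands separators, e.g. "S$1,299.00" -> 1299.0.
    out["price"] = pd.to_numeric(
        out["price"].astype(str).str.replace(r"[^0-9.]", "", regex=True),
        errors="coerce",
    )
    # Keep only listings whose name mentions the searched product (case-insensitive).
    relevant = out["product_name"].str.contains(must_contain, case=False, regex=False, na=False)
    out = out[relevant].dropna(subset=["price"])
    return out.drop_duplicates().reset_index(drop=True)
```

Filtering on the product name matters because search results often include accessories or unrelated items that would skew the price comparison.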

Now it’s time to combine the Shopee and Lazada datasets! We do this with pandas’ concat function:

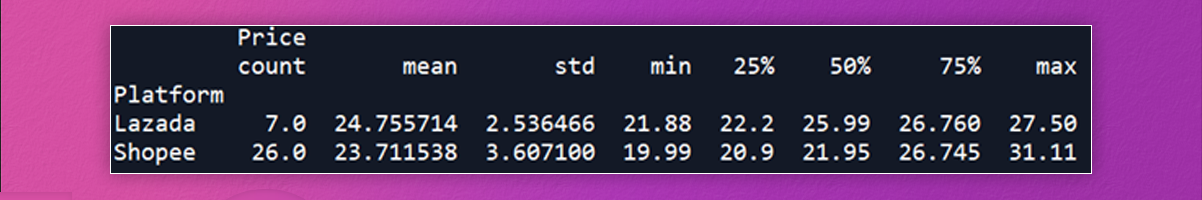
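A minimal sketch, assuming both cleaned DataFrames share the same `product_name` and `price` columns. The `platform` column is an illustrative addition so the two sources stay distinguishable after stacking:

```python
import pandas as pd


def combine_platforms(shopee_df, lazada_df):
    """Stack both datasets into one DataFrame, tagging each row with its platform."""
    return pd.concat(
        [shopee_df.assign(platform="Shopee"), lazada_df.assign(platform="Lazada")],
        ignore_index=True,  # renumber rows 0..n-1 instead of keeping both original indexes
    )
```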

Now we can compare the two platforms. We can print the DataFrame’s summary statistics using the describe method:
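Calling describe on the combined frame as a whole would mix both platforms together. A minimal sketch that groups by a `platform` column first (an assumed column name), so each platform gets its own row of statistics:

```python
import pandas as pd


def price_summary(combined):
    """Per-platform summary statistics (count, mean, std, min, quartiles, max) of price."""
    return combined.groupby("platform")["price"].describe()
```

Comparing the `mean` and `50%` (median) columns side by side is usually enough to see which platform is cheaper for the searched item.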

We can plot the data using the same box plot created in Part 1:
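The Part 1 plotting code isn’t reproduced here; as a stand-in, a minimal matplotlib sketch that draws one box per platform from the combined DataFrame (the `platform` and `price` column names are assumptions):

```python
import matplotlib

matplotlib.use("Agg")  # render off-screen; drop this line in a notebook
import matplotlib.pyplot as plt
import pandas as pd


def price_boxplot(combined):
    """Draw one price box per platform and return the figure and boxplot artists."""
    names, groups = [], []
    for platform, group in combined.groupby("platform"):
        names.append(platform)
        groups.append(group["price"].dropna().to_numpy())
    fig, ax = plt.subplots()
    artists = ax.boxplot(groups)  # boxes are drawn at x positions 1..n
    ax.set_xticks(range(1, len(names) + 1))
    ax.set_xticklabels(names)
    ax.set_ylabel("Price")
    ax.set_title("Price distribution by platform")
    return fig, artists
```

Save the figure with `fig.savefig("prices.png")`, or display it with `plt.show()` when not using the Agg backend.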

And that’s it! Based on this single-item comparison, Shopee does appear to be the cheaper platform (and it also lists more items).

A few notes before we finish:

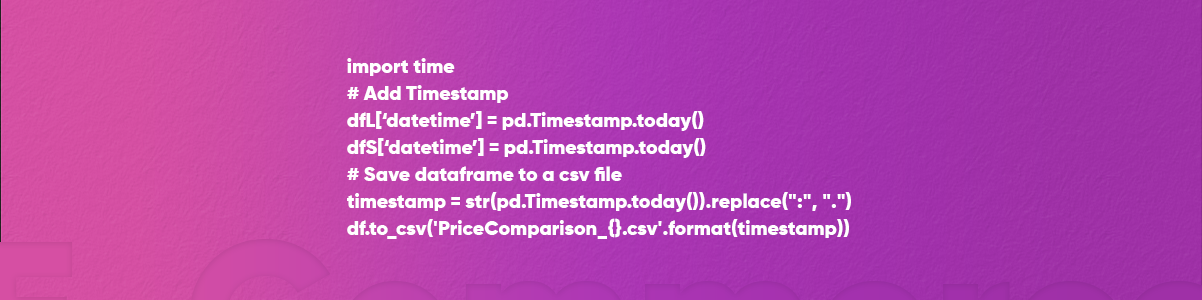

a) It’s useful to run the price comparison across different time periods to analyze an item’s pricing trend. To do that, we could add a datetime column and save the data to a CSV file.

b) Although you can scrape other items just by changing the keyword_search variable, you may need to clean the dataset differently from this example.

c) This example uses a small dataset, so the scraping and cleaning were quick.
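For note (a), a minimal sketch of stamping each scrape with a timestamp and appending it to a CSV history file. The `scraped_at` column and the file path are illustrative choices; timestamps are stored in UTC ISO 8601 format so they sort correctly as text:

```python
import os
from datetime import datetime, timezone

import pandas as pd


def append_snapshot(df, csv_path, scraped_at=None):
    """Stamp the rows with a scrape time and append them to a CSV history file."""
    scraped_at = scraped_at or datetime.now(timezone.utc)
    snapshot = df.assign(scraped_at=scraped_at.isoformat())
    # Write the header only when the file is first created.
    write_header = not os.path.exists(csv_path)
    snapshot.to_csv(csv_path, mode="a", header=write_header, index=False)
    return snapshot
```

Running this after each scrape builds a history that can be loaded with `pd.read_csv` and grouped by `scraped_at` to track how prices move over time.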

That’s it for now!

For more information about scraping e-commerce website data to compare prices using Python, contact Actowiz Solutions now!

You can also reach us for all your mobile app scraping and web scraping service requirements.

Our web scraping expertise is relied on by 4,000+ global enterprises including Zomato, Tata Consumer, Subway, and Expedia — helping them turn web data into growth.

Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

Aggregate RERA data across all 28 Indian states + UTs. Real-time project, builder, and compliance intelligence for India ?40+ trillion real estate market.

Discover how a Q-commerce startup saved ₹2.8 Cr annually by tracking Blinkit, Zepto, and Instamart in real time. Learn how data-driven pricing and inventory insights boost efficiency and profitability.

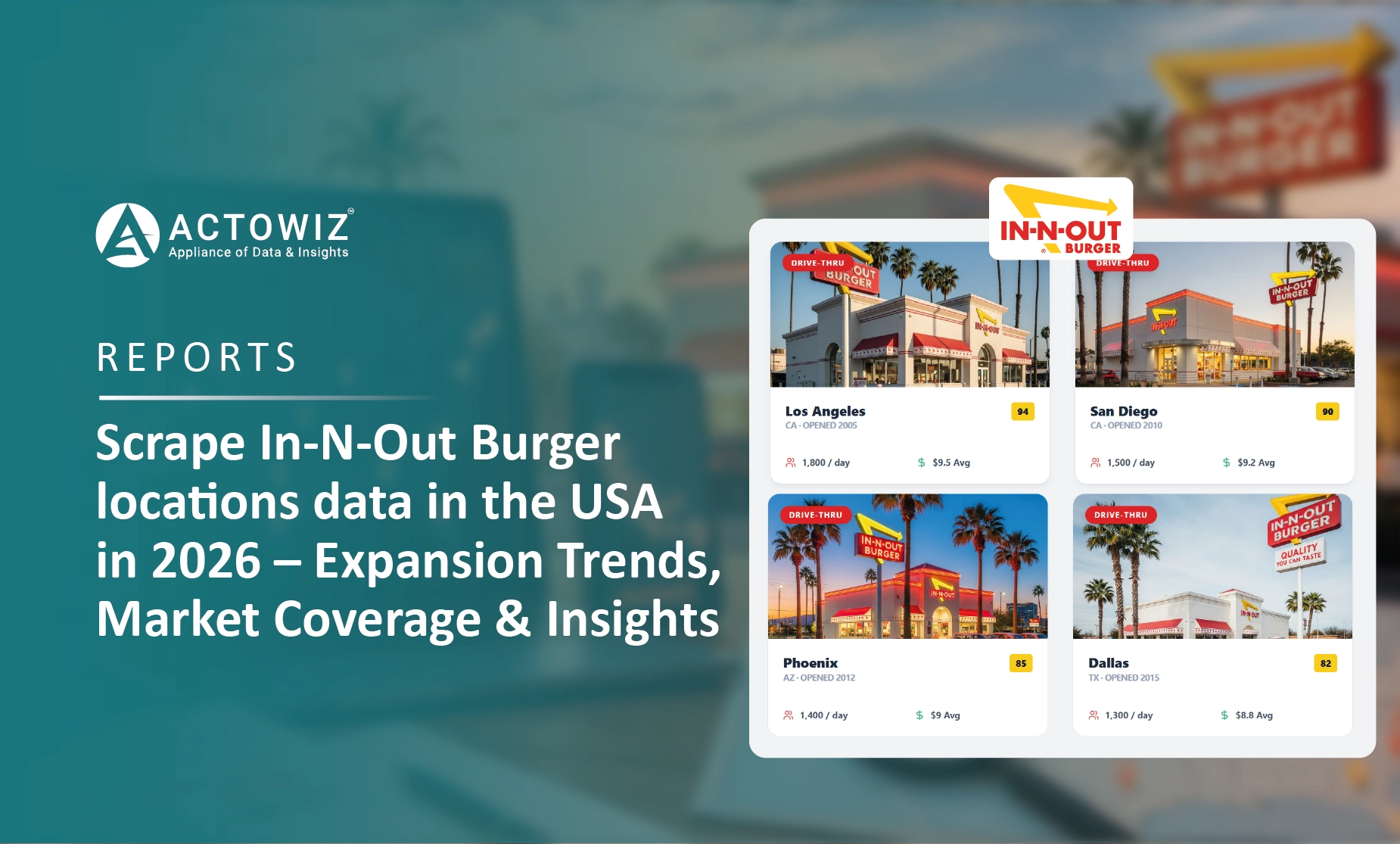

Scrape In-N-Out Burger locations data in the USA in 2026 to analyze store expansion, regional coverage, and market trends.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.