Basic knowledge of extracting data with CSS selectors

CSS selectors declare which part of the markup a style applies to, which also makes it possible to extract data from matching tags and attributes.

A virtual environment is a tool that creates an independent set of installed libraries, possibly with its own Python version, that can coexist with other environments on the same system, thus preventing library or Python version conflicts.
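From a terminal, an environment is usually created with `python -m venv env` and activated with `source env/bin/activate` (Linux/macOS) or `env\Scripts\activate` (Windows). The same standard-library module can also be driven from Python, as in this minimal sketch; the directory name scraper-env is arbitrary.

```python
import tempfile
import venv
from pathlib import Path

# Create the environment in a throwaway directory; "scraper-env" is an arbitrary name.
env_dir = Path(tempfile.mkdtemp()) / "scraper-env"

# with_pip=False keeps creation fast; use with_pip=True to bootstrap pip into the env.
venv.create(env_dir, with_pip=False)

# Every virtual environment has a pyvenv.cfg that records the base interpreter ("home").
config = (env_dir / "pyvenv.cfg").read_text()
print(config)
```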

📌Note: This is not a strict requirement for this blog post.

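Playwright needs Chromium installed in order to launch and operate a browser. After installing the libraries (for example, with `pip install playwright parsel`), download Chromium with `playwright install chromium`.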

After that, if you’re using Linux, you may also need to install extra system dependencies, for example with `playwright install-deps chromium` (Playwright will tell you in the terminal if anything is missing).

Keep in mind that a request could get blocked. See the guide on how to reduce the chance of being blocked while web scraping: it describes eleven methods for bypassing blocks on most websites, and a few of them are covered in this blog post.

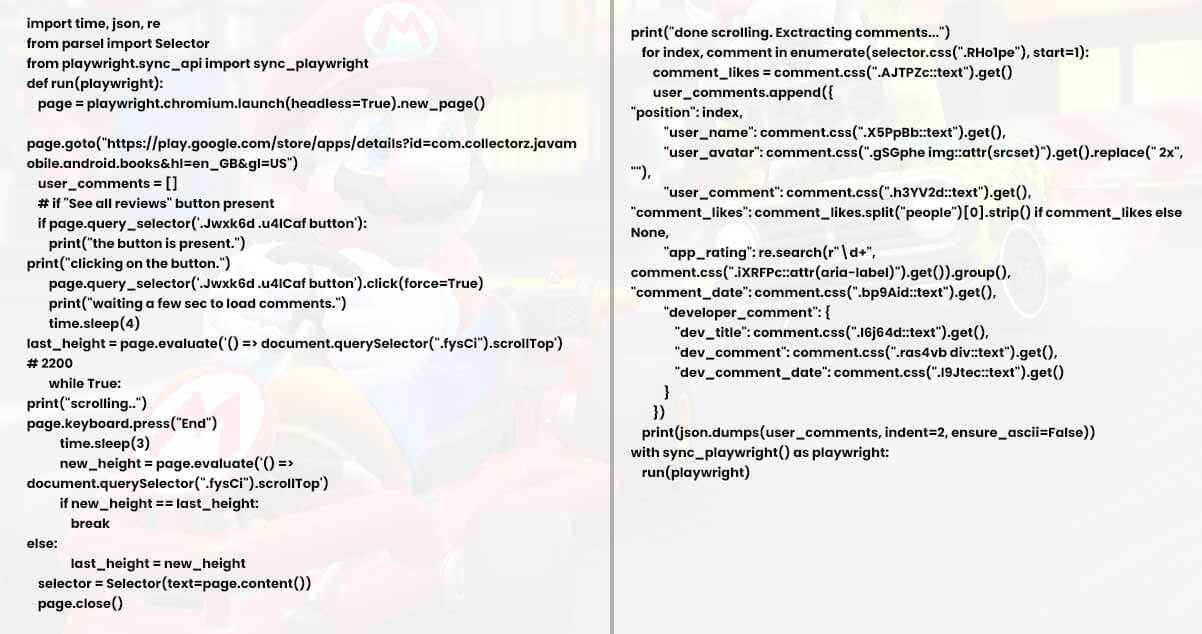

Import libraries

time to set sleep() intervals between scrolls.

json only for printing the results.

sync_playwright for the synchronous API. Playwright also provides an asynchronous API for use with the asyncio module.

Declare a function:

Initialize Playwright, connect to Chromium, launch() the browser, open a new_page(), and goto() the given URL.

playwright.chromium is the entry point for launching a Chromium browser instance. launch() launches the browser, and the headless argument runs it in headless mode. The default is True.

new_page() creates a new page in a new browser context.

page.goto("URL") makes a request to the given website.
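A minimal sketch of this step, assuming the function receives the playwright object yielded by `sync_playwright()`. The helper name open_page is hypothetical. Because it only uses the objects passed in, it does not need to import Playwright itself.

```python
def open_page(playwright, url: str, headless: bool = True):
    """Launch Chromium, open a page, navigate to url, and return (browser, page).

    open_page is a hypothetical helper name; `playwright` is the object
    yielded by sync_playwright().
    """
    # playwright.chromium is the Chromium browser type; headless=True is also the default
    browser = playwright.chromium.launch(headless=headless)
    # new_page() creates a page in a fresh browser context
    page = browser.new_page()
    # goto() requests the URL (by default it waits for the page's load event)
    page.goto(url)
    return browser, page
```

With Playwright installed, it would be called as `with sync_playwright() as p: browser, page = open_page(p, url)`.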

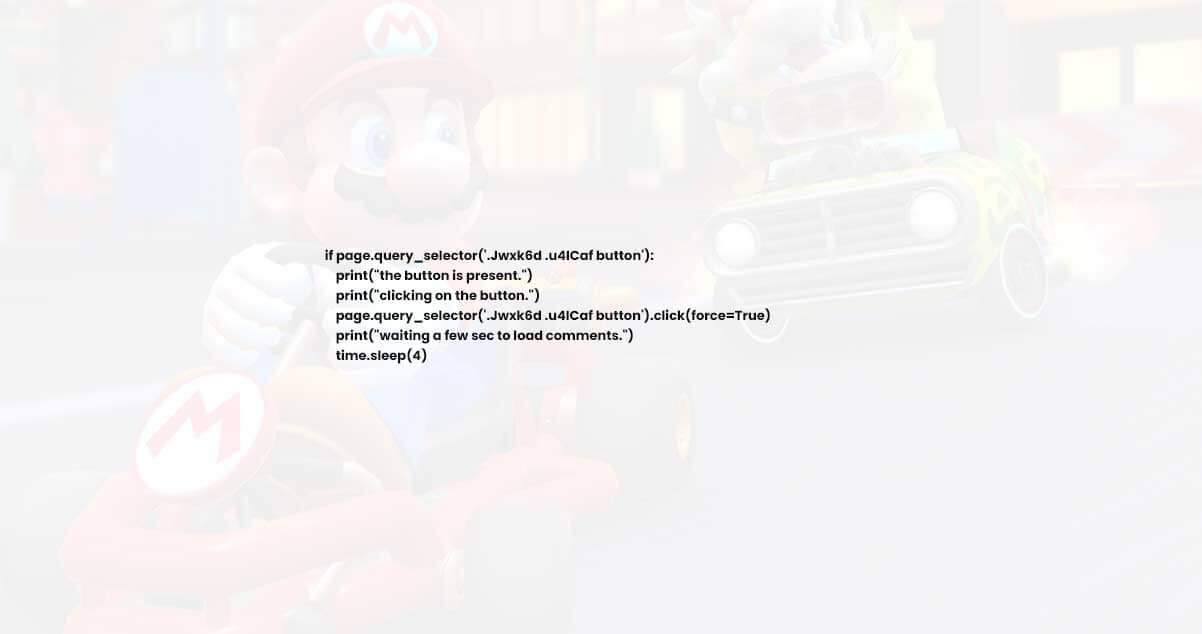

After that, check whether the button responsible for showing all reviews is present, and click it if it is.

query_selector() accepts the CSS selector to search for and returns the first matching element, or None if nothing matches.

click() clicks the button, and force=True bypasses Playwright's actionability checks (auto-waiting) and clicks directly.
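The check-and-click step could be sketched as below, assuming page is a Playwright Page. The post does not show the button's selector, so the caller has to supply it; Google Play's generated class names change often, so verify any selector in the browser's DevTools first. The name open_all_reviews is hypothetical.

```python
def open_all_reviews(page, button_selector: str) -> bool:
    """Click the 'show all reviews' button if it is on the page.

    Returns True if the button was found and clicked, False otherwise.
    open_all_reviews is a hypothetical helper name.
    """
    # query_selector() returns the first matching element handle, or None
    button = page.query_selector(button_selector)
    if button is None:
        return False
    # force=True skips Playwright's actionability checks and clicks right away
    button.click(force=True)
    return True
```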

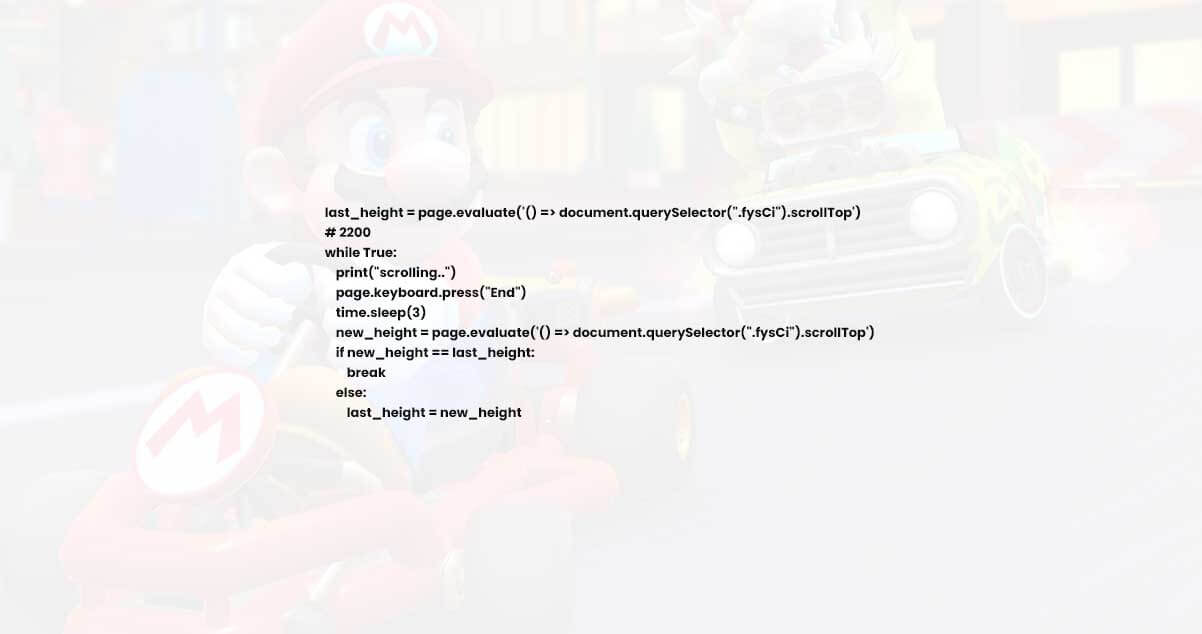

Scroll to the bottom of the comments window:

page.evaluate() runs JavaScript code in the browser context; here it measures the height of the element matched by the .fysCi selector. scrollTop returns the number of pixels by which that element's content has been scrolled vertically.

time.sleep(3) pauses execution for 3 seconds so that more comments can load.

The code then measures new_height after the scroll by running the same JavaScript measurement code.

Finally, it checks whether new_height == last_height and, if so, exits the while loop with break.

Otherwise, it sets last_height to new_height and runs another iteration (scroll).
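A sketch of the scroll loop described above, assuming page is a Playwright Page and that the .fysCi element is the scrollable reviews pane (the class name comes from the post and may have changed since). The post's exact JavaScript is not shown; here a single snippet both scrolls the pane and returns scrollTop, which is one way to do the measurement. The name scroll_to_bottom and the max_rounds cap are additions for this example; a bounded `for` loop replaces `while True` so the loop stops even if the page never settles.

```python
import time

# JavaScript run in the page: scroll the reviews pane to its bottom and report
# how far it is scrolled. Assumes an element matching `selector` exists.
SCROLL_JS = """(selector) => {
    const pane = document.querySelector(selector);
    pane.scrollTop = pane.scrollHeight;
    return pane.scrollTop;
}"""


def scroll_to_bottom(page, selector=".fysCi", pause=3.0, max_rounds=200, sleep=time.sleep):
    """Keep scrolling the reviews pane until its scroll position stops changing.

    max_rounds is an added safety cap (not in the original description);
    `sleep` is injectable so the loop can be tested without waiting.
    """
    last_height = page.evaluate(SCROLL_JS, selector)
    for _ in range(max_rounds):
        sleep(pause)  # give the page time to load more reviews
        new_height = page.evaluate(SCROLL_JS, selector)
        if new_height == last_height:
            break  # nothing new loaded: the end of the list was reached
        last_height = new_height  # otherwise scroll once more
    return last_height
```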

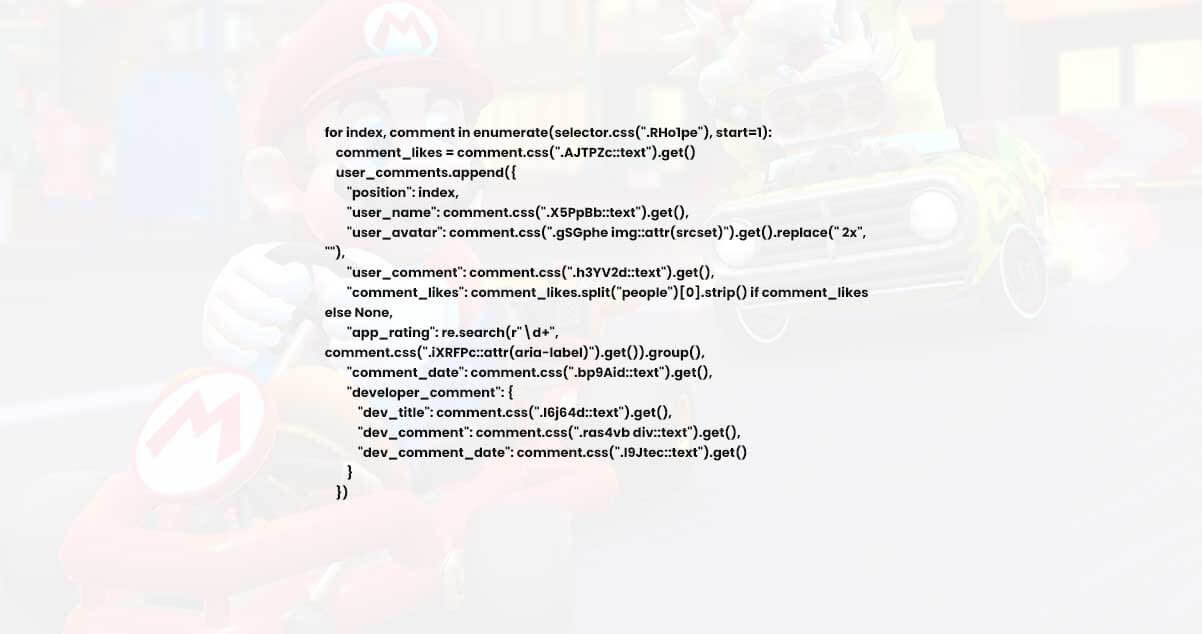

Then pass the scrolled HTML content to parsel and close the browser.

Iterate over all the results once the while loop is done.

Print this data:
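Printing is a single json.dumps() call; the sketch below shows it on a record made up for illustration. ensure_ascii=False is an optional choice here, useful because review text often contains non-English characters.

```python
import json

# Sample record made up for illustration only.
reviews = [{"author": "José", "rating": "Rated 5 stars out of five stars", "text": "Très pratique"}]

# indent=2 pretty-prints; ensure_ascii=False keeps accented letters readable
# instead of escaping them as \u00e9-style sequences.
output = json.dumps(reviews, indent=2, ensure_ascii=False)
print(output)
```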

Run the code using a context manager:

Since we support scraping review data from Google Play apps, this section compares a DIY solution with ours.

The main difference is that you don’t have to use browser automation to scrape the results, or build a parser from scratch and maintain it.

Keep in mind that requests may also get blocked by Google at some point (CAPTCHA); we handle that on the backend.

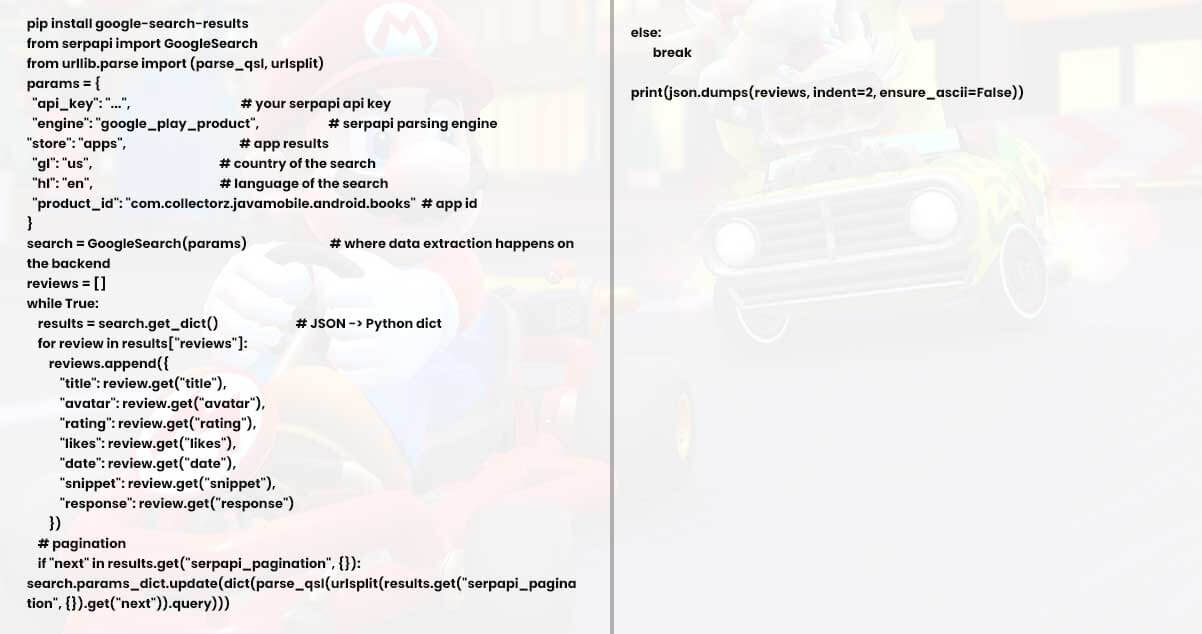

Install google-search-results from PyPI with `pip install google-search-results`:

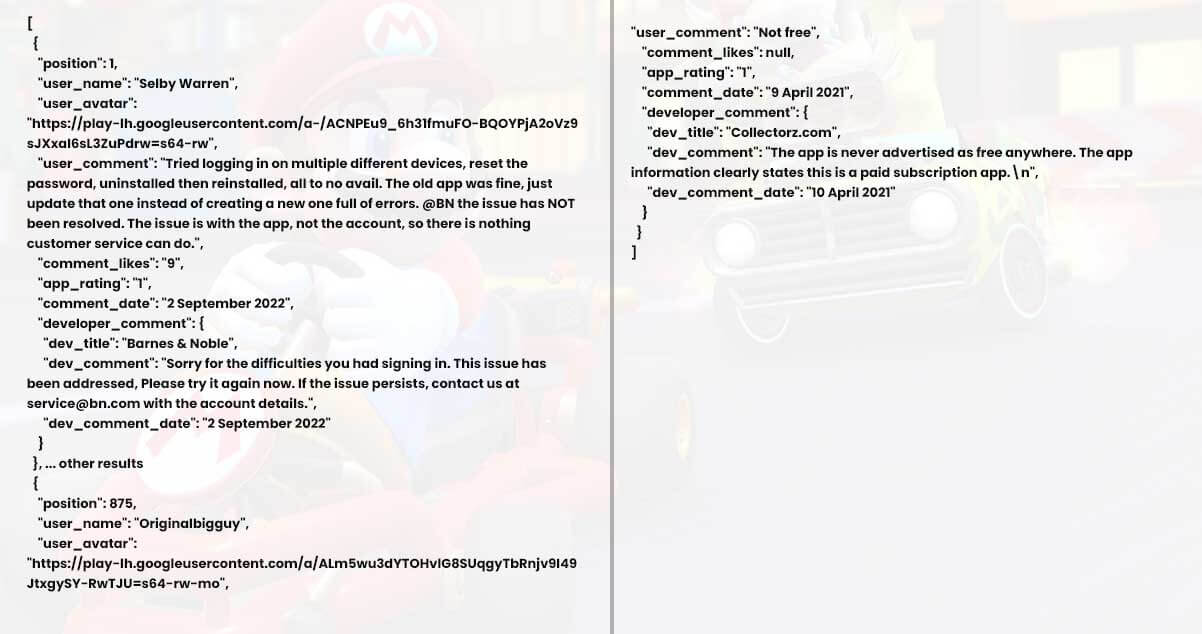

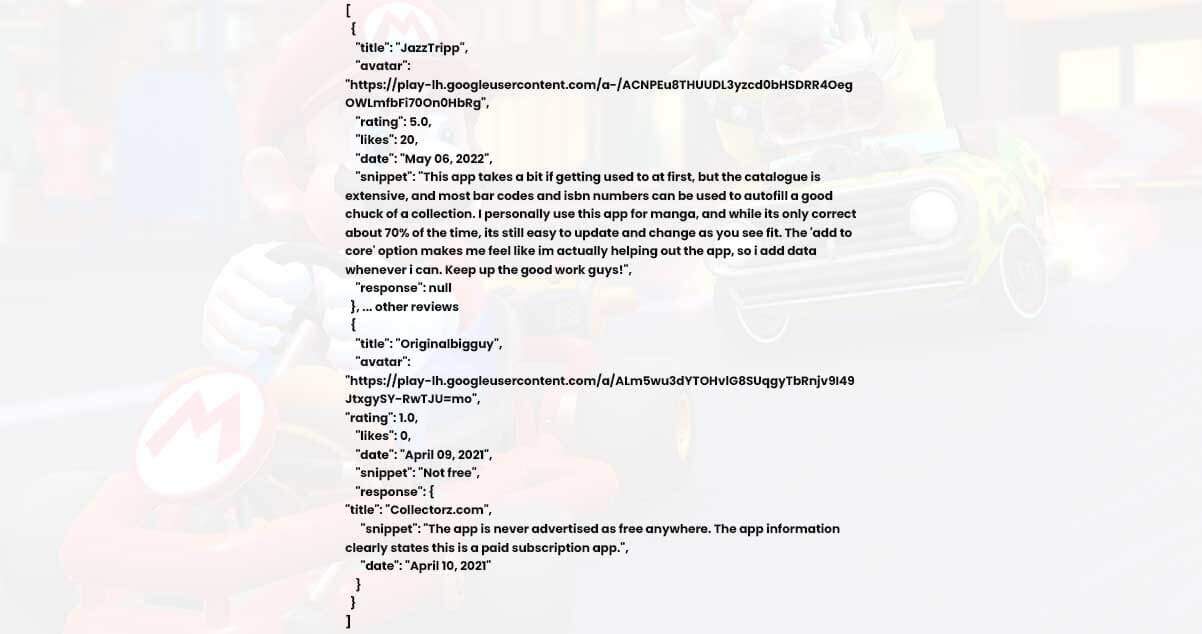

Output:

For more information, contact Actowiz Solutions! You can also request a free quote for your mobile app scraping and web scraping requirements.
