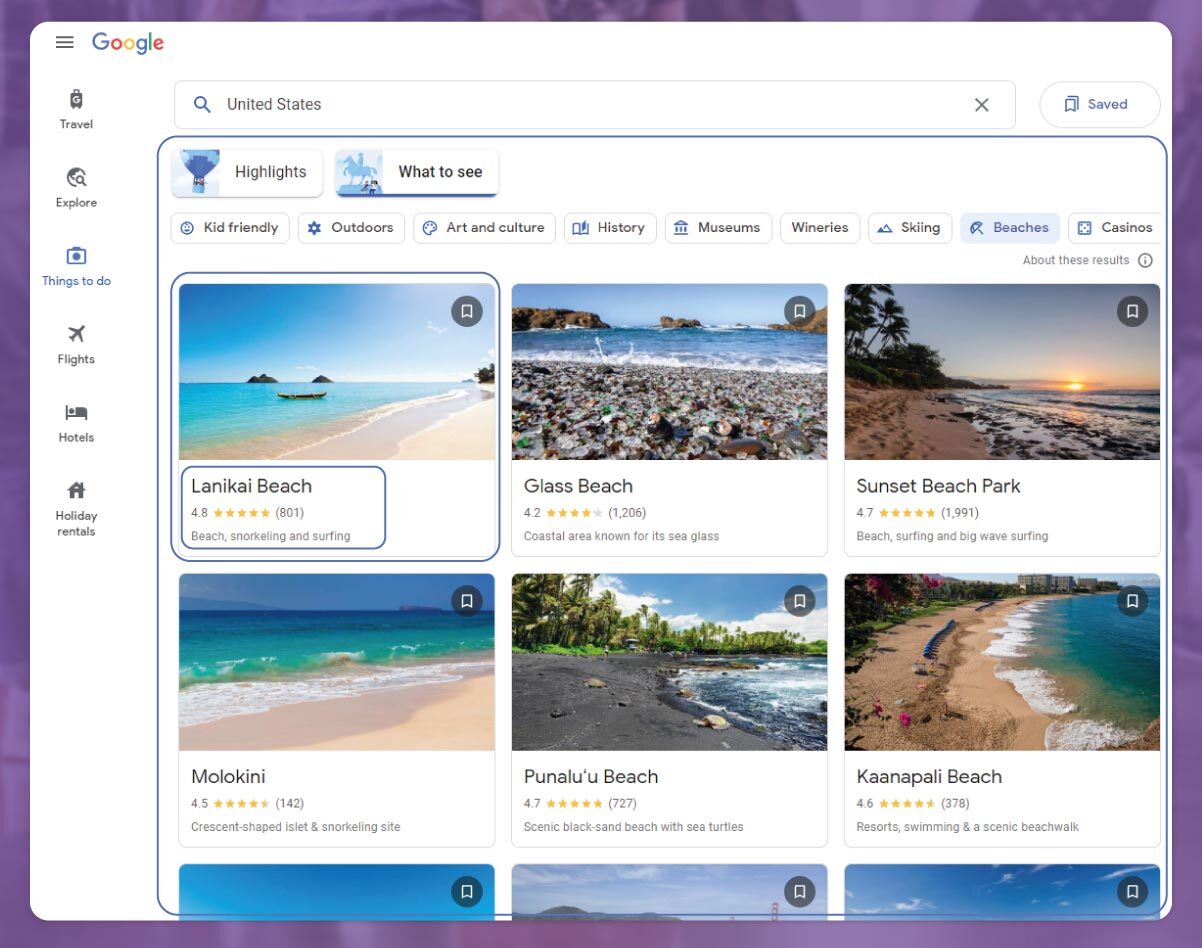

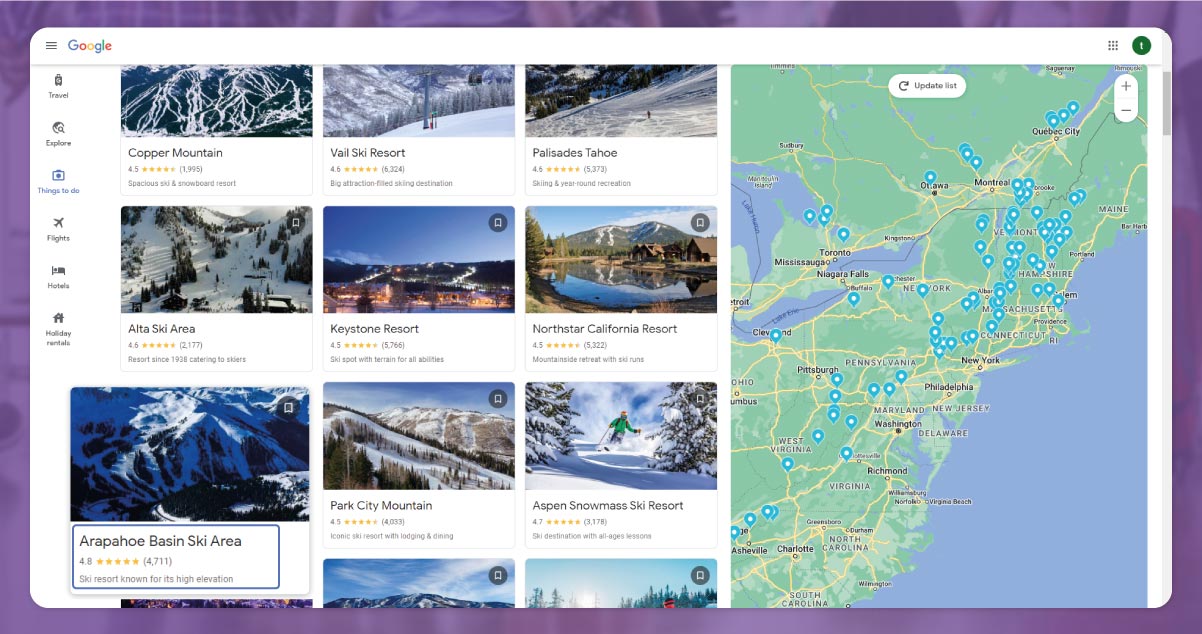

This post shows how to scrape Google Things to do with Node.js. It covers the preparation, the scraping process, a step-by-step explanation of the code, and the final output.

Here is the entire code:

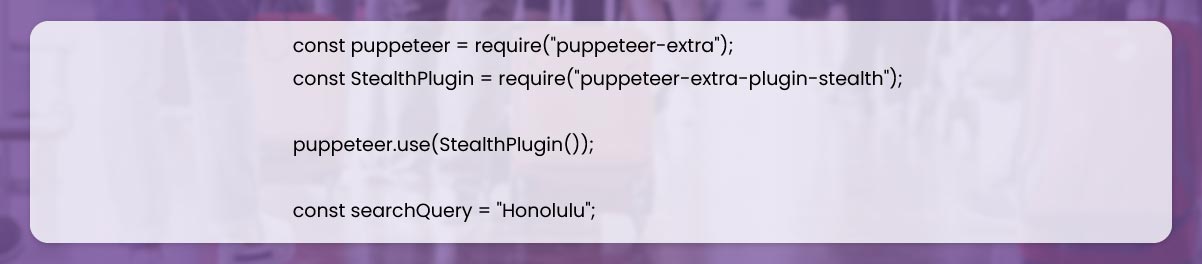

To start, create a Node.js project and add the puppeteer, puppeteer-extra, and puppeteer-extra-plugin-stealth packages. Puppeteer controls Chrome, Chromium, or Firefox over the DevTools Protocol in headless or non-headless mode. Here we work with Chromium, the default browser.

To do this, open a terminal in the project directory and run:

$ npm init -y

And then install the three packages:

$ npm i puppeteer puppeteer-extra puppeteer-extra-plugin-stealth

If you haven't installed Node.js on your device, you can go to their official website, download it, and follow the documentation to install it.

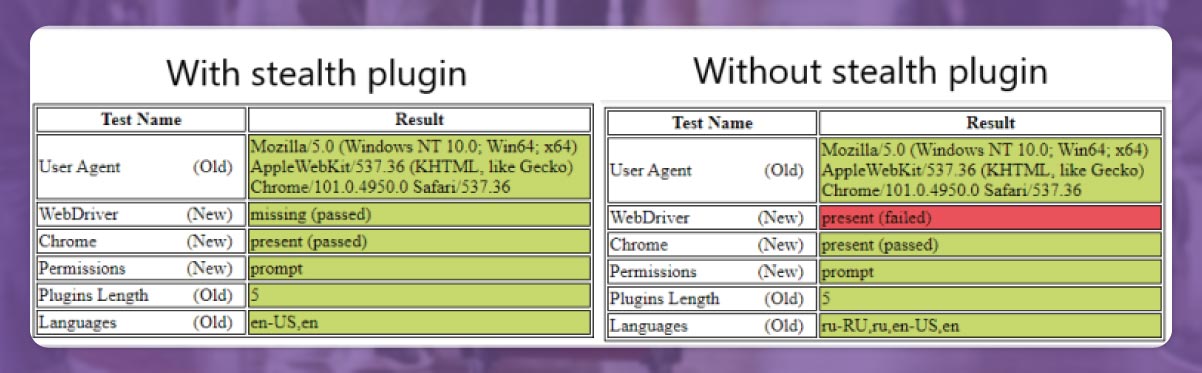

Note: you can also use Puppeteer on its own, without any extensions. However, using puppeteer-extra together with puppeteer-extra-plugin-stealth is recommended, because it helps prevent websites from detecting that you are using headless Chromium or a web driver. You can check this on the Chrome headless test website by comparing the results with and without the plugin.

That completes the setup of the Node.js environment for our project. Now let's go through the code step by step.

We will scrape the data from the HTML elements of the Google Things to do page. The SelectorGadget Chrome extension makes it easy to find the right CSS selectors: you click the element you need in the browser and it returns a matching selector. It does not always work well, however, especially when the website relies heavily on JavaScript.

The GIF below shows how to select different parts of the results with SelectorGadget.

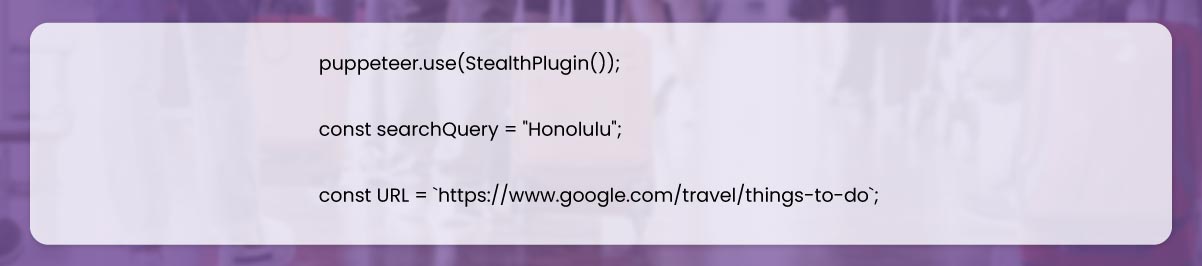

First, declare puppeteer from the puppeteer-extra library to control Chromium, and StealthPlugin from the puppeteer-extra-plugin-stealth library to avoid website detection.

Then tell puppeteer to use StealthPlugin, and define the search query and the URL to open.
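As a rough sketch of this step, assuming the three packages from the setup are installed: the helper name `loadStealthPuppeteer`, the example query, and the start URL below are illustrative assumptions, not the original code.

```javascript
// Sketch: register the stealth plugin on puppeteer-extra.
// The require calls live inside a function so that loading this file does not
// fail before the packages have been installed.
function loadStealthPuppeteer() {
  const puppeteer = require("puppeteer-extra");
  const StealthPlugin = require("puppeteer-extra-plugin-stealth");
  puppeteer.use(StealthPlugin()); // browsers launched afterwards use the plugin
  return puppeteer;
}

// Assumed example query: replace it with the place you want to search for.
const searchQuery = "Honolulu";

// Assumed entry page for Google Things to do.
const startUrl = "https://www.google.com/travel/things-to-do";
```

Calling `loadStealthPuppeteer()` returns the configured `puppeteer` object, which can then be used in place of the plain package.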

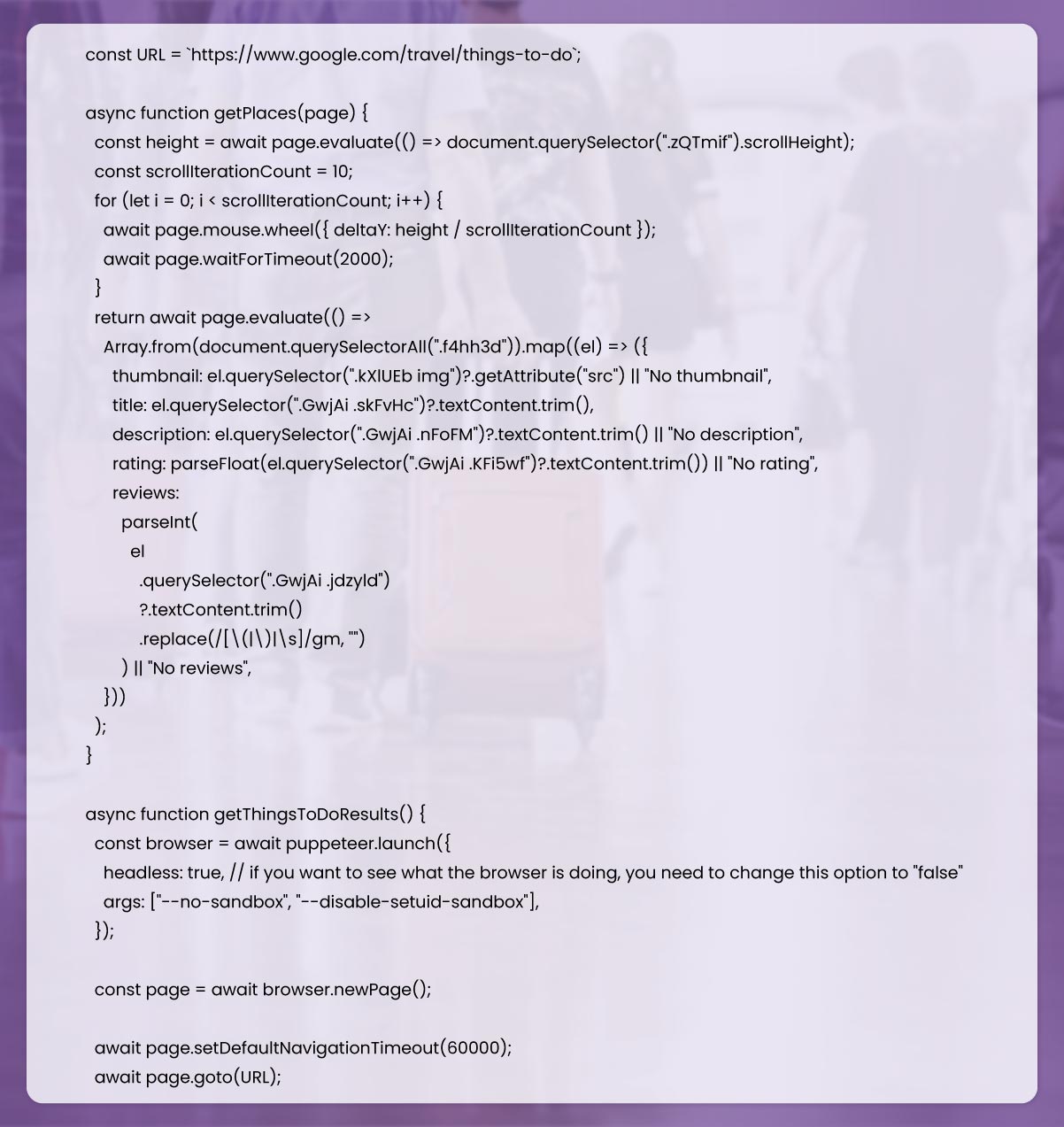

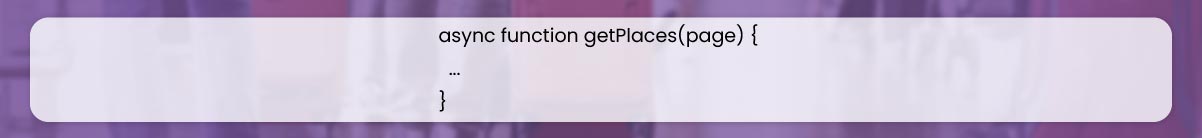

Next, write a function that gets the places from the page.

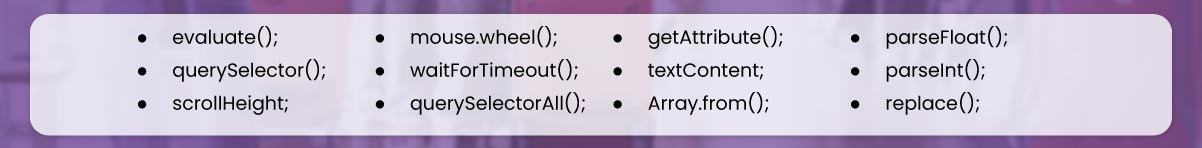

This function uses the following methods and properties to collect the required information.

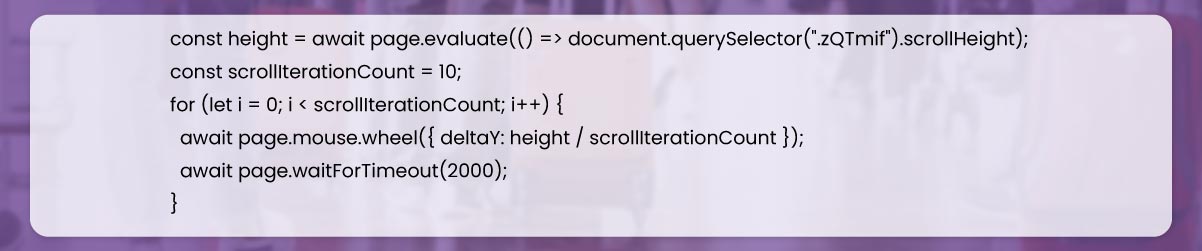

First, scroll the page so that every thumbnail loads. To do this, get the page's scrollHeight, define the number of scroll iterations, and then scroll the page in a for loop.
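The scrolling step could look roughly like this. It is a minimal sketch: `scrollResults`, `containerSelector`, and the default values are hypothetical, and using `page.mouse.wheel()` for the scrolling itself is an assumption about how the loop moves the page.

```javascript
// Promise-based pause, so the sketch does not depend on version-specific helpers.
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Scrolls in equal steps so lazily loaded thumbnails have time to appear.
// `page` is a Puppeteer Page; `containerSelector` is a hypothetical selector
// for the scrollable results container.
async function scrollResults(page, containerSelector, iterations = 10, pauseMs = 2000) {
  // Read the full scroll height inside the browser context.
  const height = await page.evaluate(
    (selector) => document.querySelector(selector).scrollHeight,
    containerSelector
  );
  for (let i = 0; i < iterations; i++) {
    await page.mouse.wheel({ deltaY: height / iterations });
    await sleep(pauseMs); // give new thumbnails time to load
  }
  return height;
}
```

Splitting the height into equal steps keeps each scroll small enough for the page to load new content before the next one.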

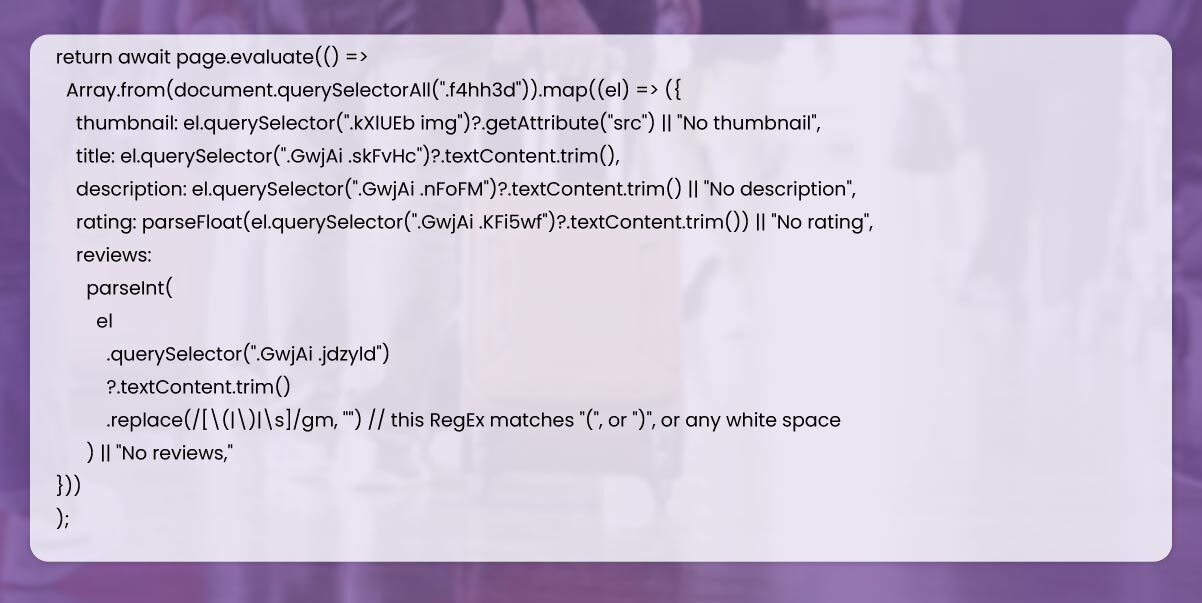

After that, collect and return the data for every place on the page with the evaluate() method.

Next, write a function that controls the browser and collects data from every category.
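A minimal sketch of the collection step follows. The field names (`title`, `thumbnail`) and the `selectors` object are assumptions for illustration; the real selectors have to be looked up on the page, for example with SelectorGadget.

```javascript
// Runs inside the browser via page.evaluate(), so it may only use its argument
// and browser globals such as `document`, never variables from the Node.js scope.
function extractPlaces(selectors) {
  return Array.from(document.querySelectorAll(selectors.card)).map((el) => ({
    // Optional chaining keeps a missing element from throwing; null marks "not found".
    title: el.querySelector(selectors.title)?.textContent.trim() ?? null,
    thumbnail: el.querySelector(selectors.thumbnail)?.getAttribute("src") ?? null,
  }));
}

// `page` is a Puppeteer Page; `selectors` holds hypothetical CSS selectors:
// { card, title, thumbnail }.
async function getPlaceData(page, selectors) {
  return page.evaluate(extractPlaces, selectors);
}
```

Passing the selectors as an argument matters because Puppeteer serializes the function before running it in the page, so outer variables are not available there.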

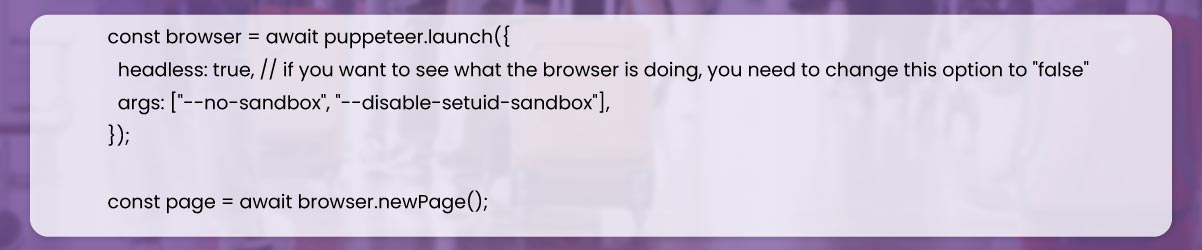

Here, we first define a browser with the puppeteer.launch() method, passing the options headless: true and args: ["--no-sandbox", "--disable-setuid-sandbox"].

These options mean that the browser runs in headless mode, and that the args array allows the browser process to launch in an online IDE. Then open a new page.
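The launch step can be sketched as below. The options come from the text; the helper name `openPage` and the choice to pass `puppeteer` in as a parameter are assumptions made so the sketch works with either puppeteer or puppeteer-extra.

```javascript
// Launch options described in the text.
const launchOptions = {
  headless: true, // no visible browser window
  args: ["--no-sandbox", "--disable-setuid-sandbox"], // lets the browser start in an online IDE
};

// `puppeteer` is whichever module you use (puppeteer or puppeteer-extra).
async function openPage(puppeteer, options = launchOptions) {
  const browser = await puppeteer.launch(options);
  const page = await browser.newPage();
  return { browser, page };
}
```

Returning both objects keeps the `browser` handle available for closing it later.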

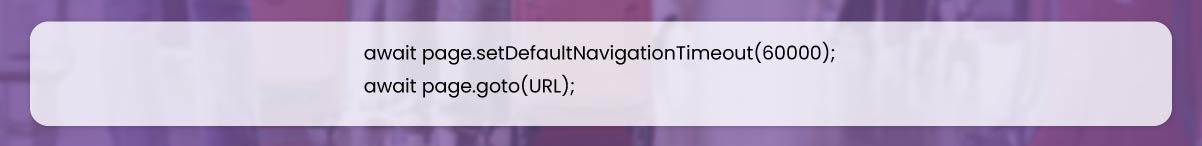

Then, raise the default navigation timeout from 30 seconds to 60 seconds with the .setDefaultNavigationTimeout() method to allow for slow connections, and open the URL with the .goto() method. Note that this setting applies to navigation only; waits such as waitForSelector() keep their own default timeout.
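In code, this step might look like the sketch below. The helper name `openUrl` and its parameters are hypothetical; the two Puppeteer calls are the ones named in the text.

```javascript
// `page` is a Puppeteer Page; `url` is the address to open.
async function openUrl(page, url, timeoutMs = 60000) {
  // Applies to navigation calls such as goto(); the Puppeteer default is 30000 ms.
  page.setDefaultNavigationTimeout(timeoutMs);
  return page.goto(url);
}
```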

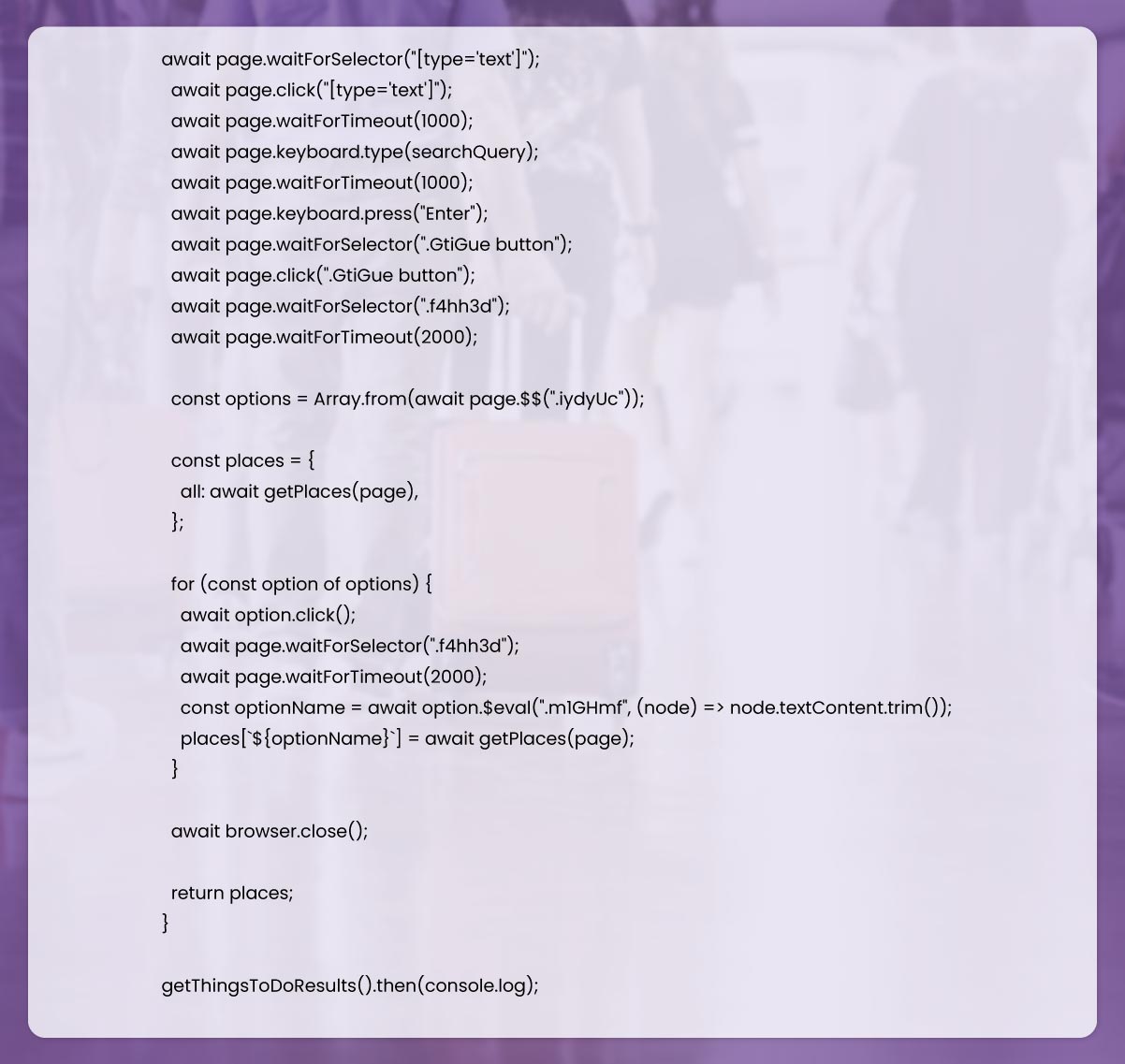

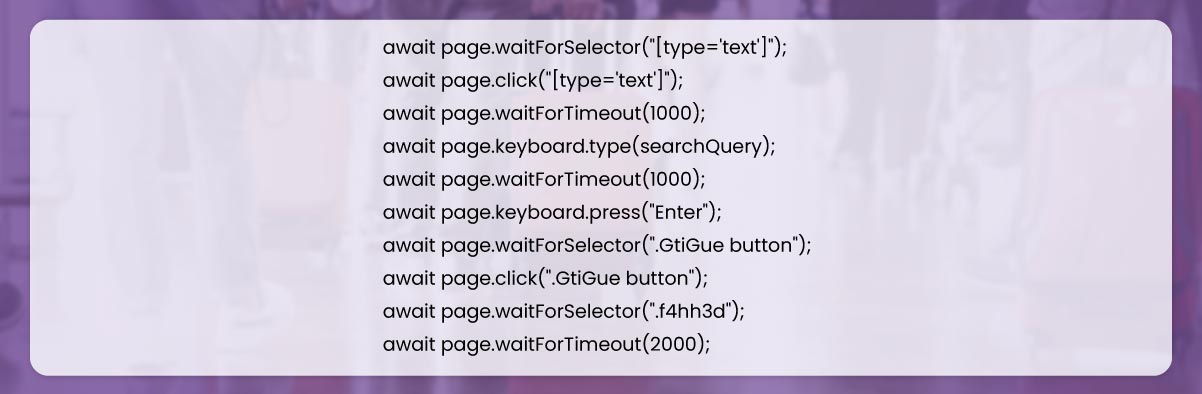

After that, wait until the [type='text'] selector has loaded with the waitForSelector() method, click the input, and type the search query with keyboard.type(). Press Enter with keyboard.press(), then click the "See all top sights" button.
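The search sequence can be sketched as follows. The `[type='text']` selector comes from the text; `runSearch` and the `seeAllButton` selector are hypothetical, and the real button selector has to be found on the page.

```javascript
// `page` is a Puppeteer Page; `selectors` is { input, seeAllButton }.
async function runSearch(page, query, selectors) {
  await page.waitForSelector(selectors.input); // wait for the search box
  await page.click(selectors.input); // focus it
  await page.keyboard.type(query); // type the search query
  await page.keyboard.press("Enter"); // submit the search
  await page.waitForSelector(selectors.seeAllButton); // wait for the "See all top sights" button
  await page.click(selectors.seeAllButton);
}
```

A call could look like `runSearch(page, "Honolulu", { input: "[type='text']", seeAllButton: "<your selector>" })`, where the second selector is a placeholder.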

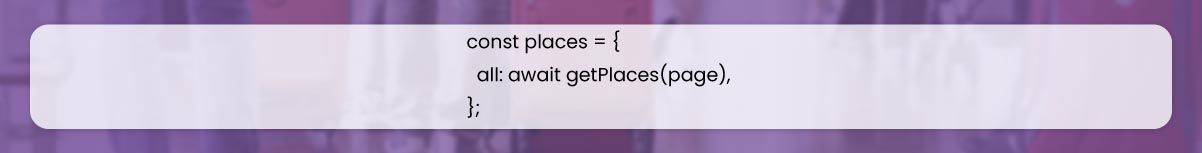

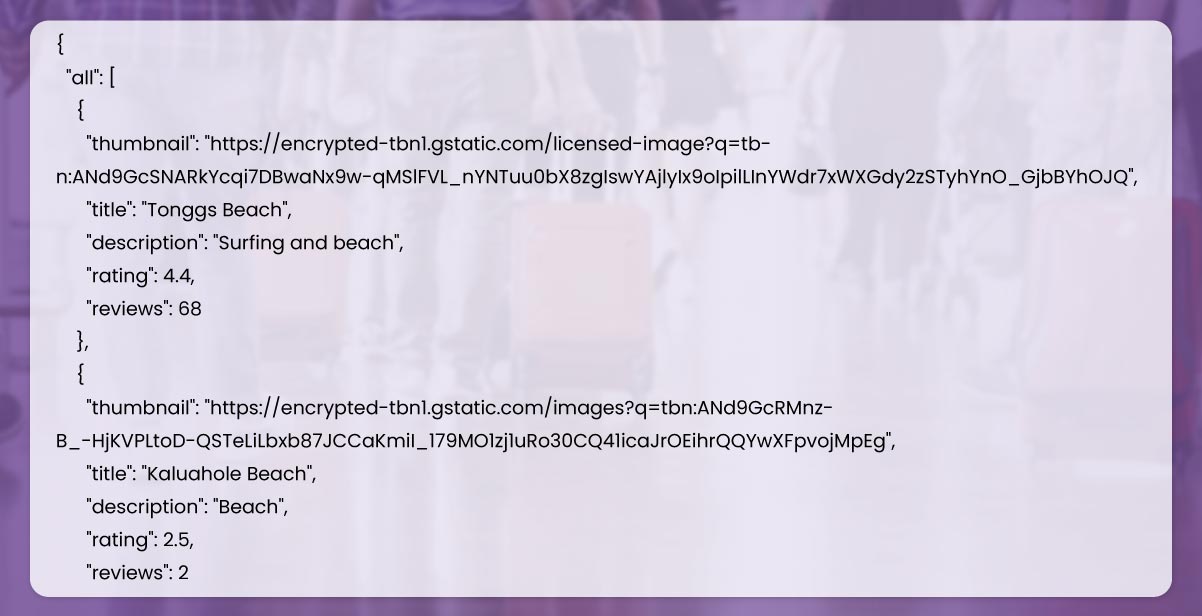

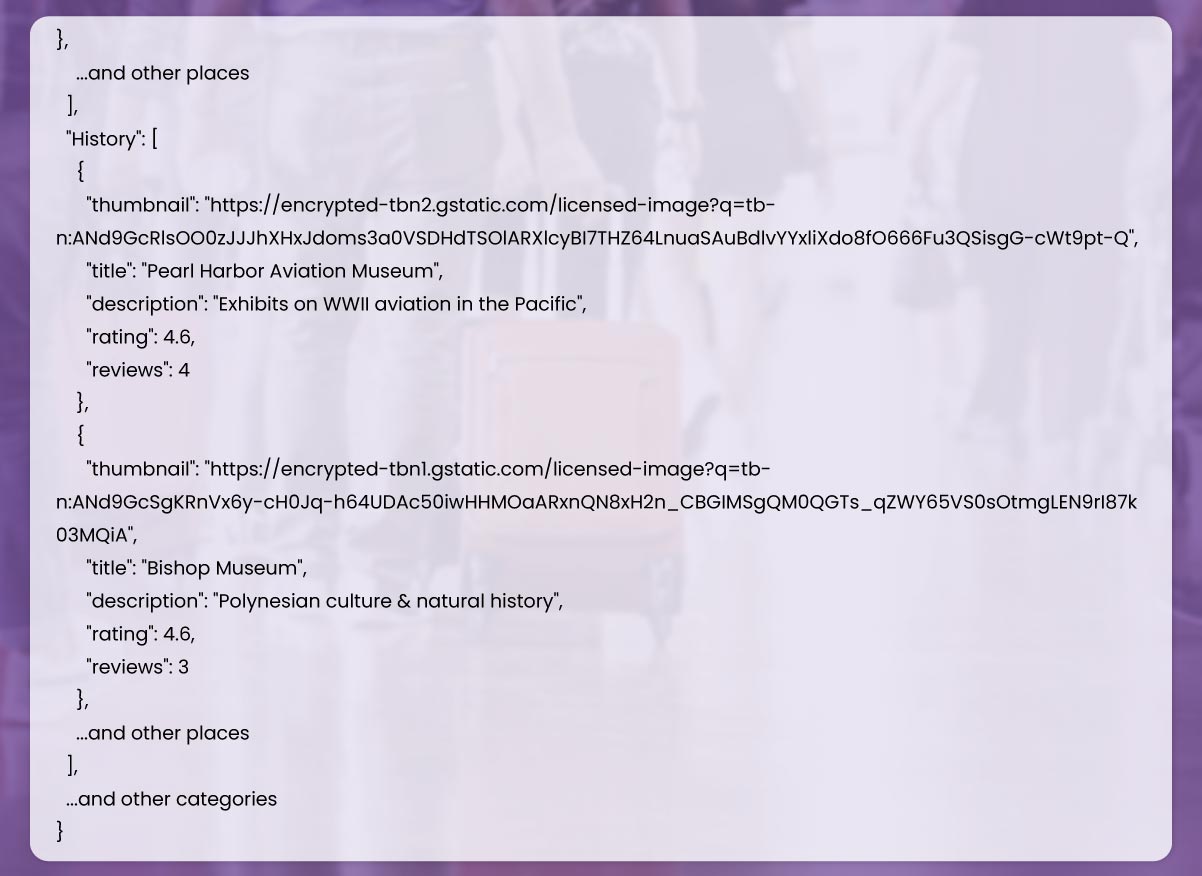

After this, define the places object and store the places collected from the page under its keys.

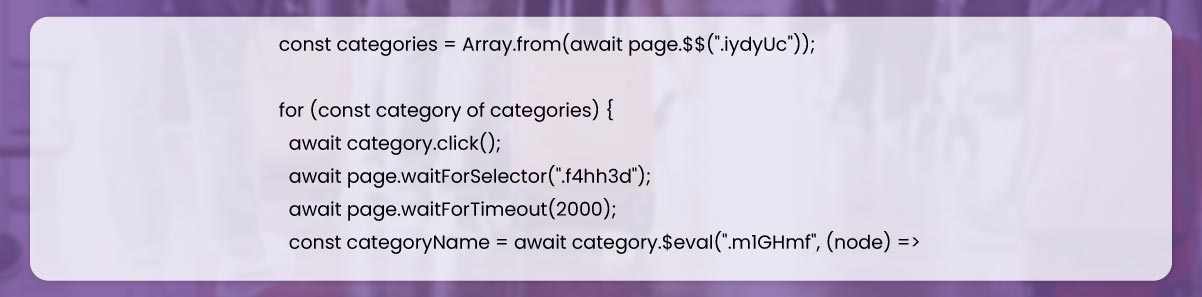

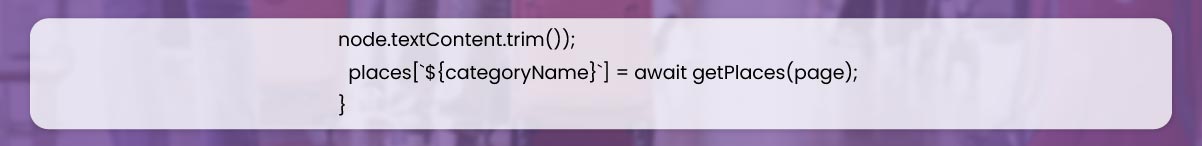

Then, get every category from the page. Click each one in turn, collect the places for that category, and store them in the places object under the category name.
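A sketch of the category loop, under a few assumptions: the first key is named `all`, the category name is the visible text of the clicked element, and `getPlaces` is whatever function returns the places for the current page state. The function name, the `selectors` object, and the pause length are hypothetical.

```javascript
// Promise-based pause between a click and the next read.
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// `page` is a Puppeteer Page; `selectors` is { category, card } (hypothetical);
// `getPlaces(page)` returns the places currently shown on the page.
async function collectByCategory(page, selectors, getPlaces, pauseMs = 2000) {
  // Assumed key name for the unfiltered list.
  const places = { all: await getPlaces(page) };
  const options = await page.$$(selectors.category); // one handle per category
  for (const option of options) {
    await option.click();
    await page.waitForSelector(selectors.card);
    await sleep(pauseMs); // let the filtered list replace the previous one
    const name = await option.evaluate((node) => node.textContent.trim());
    places[name] = await getPlaces(page);
  }
  return places;
}
```

The pause is there because cards from the previous category may still match the selector right after the click.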

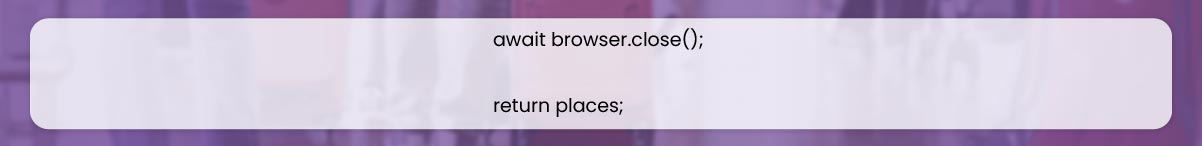

Finally, after receiving all the data, we will close the browser.

Now we can run the scraper and parse the data.
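The last two steps could be wrapped up as in this sketch. Both helper names are hypothetical, and closing the browser in a `finally` block is a design choice of this example rather than something the text prescribes.

```javascript
// Runs `scrape(browser)` and closes the browser afterwards, even if scraping fails.
async function withBrowser(browser, scrape) {
  try {
    return await scrape(browser);
  } finally {
    await browser.close();
  }
}

// Prints nested results in full instead of collapsing deep objects.
function printResults(results) {
  console.dir(results, { depth: null });
}
```

Using `finally` means a failed selector wait does not leave a headless browser process running in the background.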

Did you find it helpful? If you wish to learn more or want us to help you with web scraping services, contact Actowiz Solutions.
