Get the Sourced Data You Need to Kick-Start an App Project

- You are a developer

- You would love to create a wonderful web application

- You are completely dedicated to your project

Even if you tick all these boxes, you still need a domain-specific dataset before you write a single line of code. That is because modern applications consume large amounts of data, either in real time or in batches, to provide value for their users.

In this blog, we explain our workflow for generating such datasets. You will see how we automate the scraping of several websites with no manual intervention.

Our objective is to produce a dataset for a price comparison web app. The product category we use as an example is handbags. For this application, price and product data for handbags needs to be collected from various online sellers every day. Although some sellers offer an API to access the required details, not all of them do. Therefore, web scraping is unavoidable.

In this example, we create web spiders for 10 sellers using Scrapy and Python. Then we automate the procedure using Apache Airflow, so that the whole procedure runs periodically without manual involvement.

A Live Demo Web App with Source Code

You can get all associated source code in the GitHub repository.

Our Web Scraping Workflow

Before we start any web scraping project, we need to define which sites the project will cover. We decided to include 10 websites that are among the most visited online stores in Turkey for handbags. You can see the list in our GitHub repository.

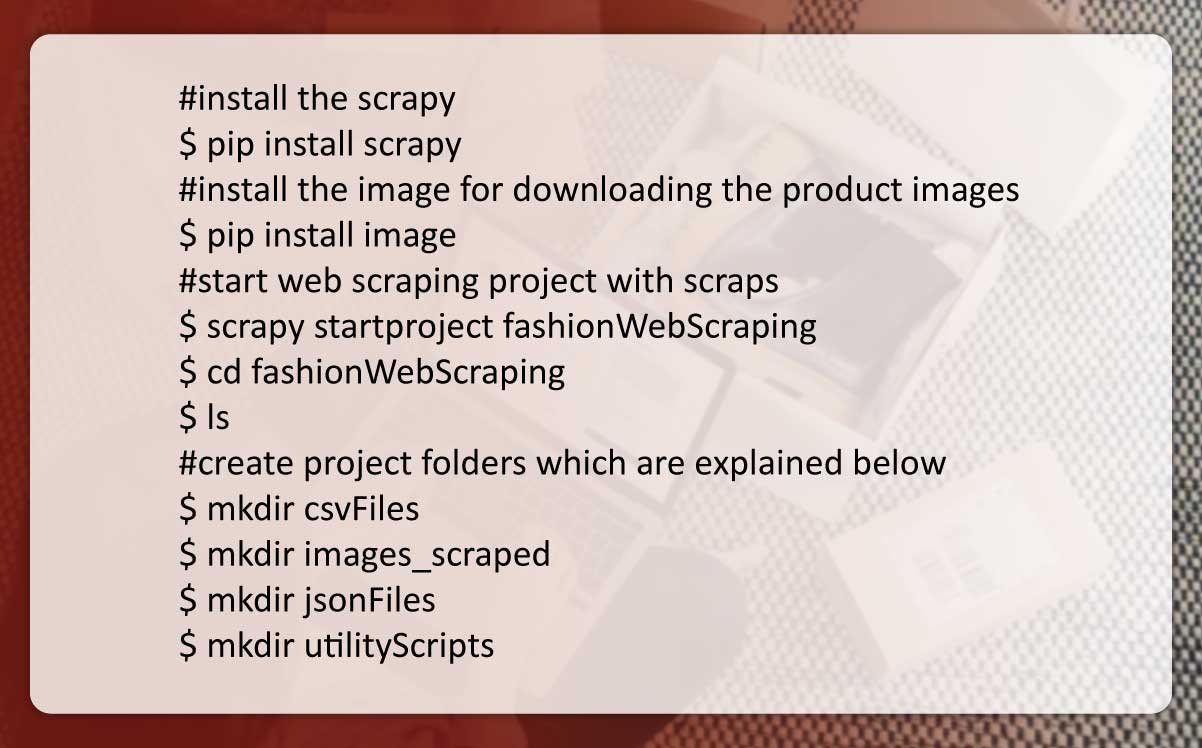

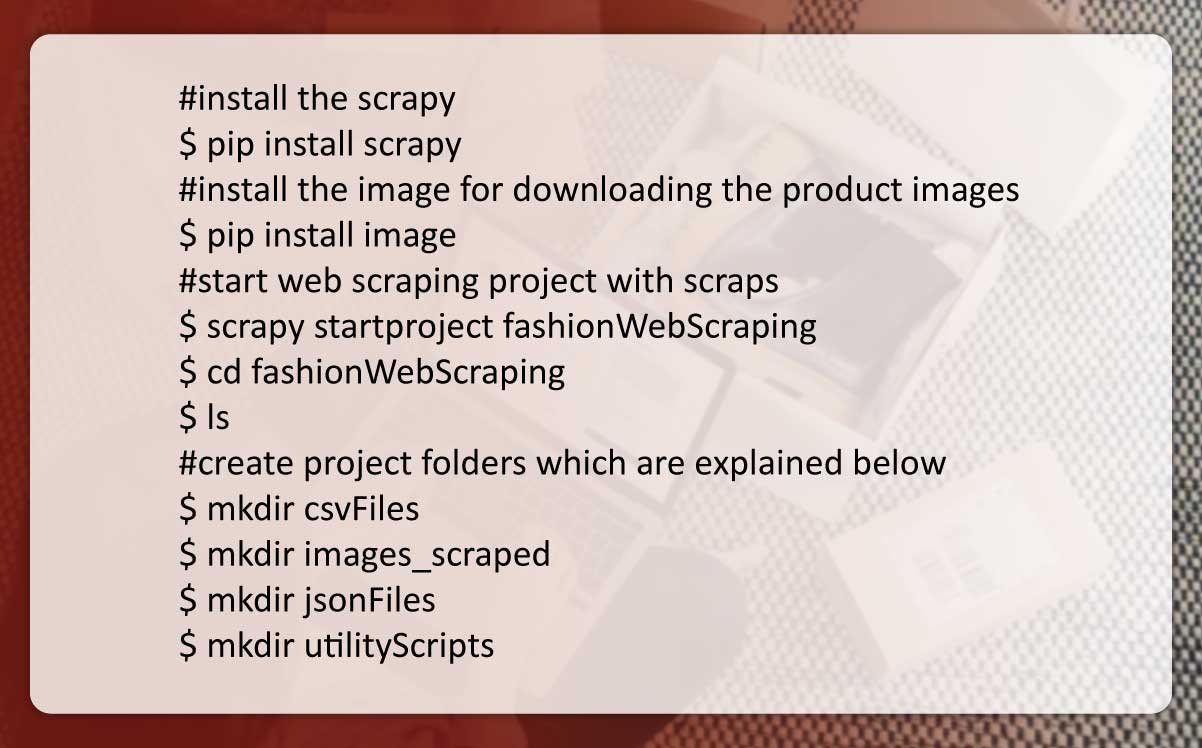

Step 1: Install Scrapy and Set Up Project Folders

You need to install Scrapy on your computer and create a Scrapy project before writing any Scrapy spiders.

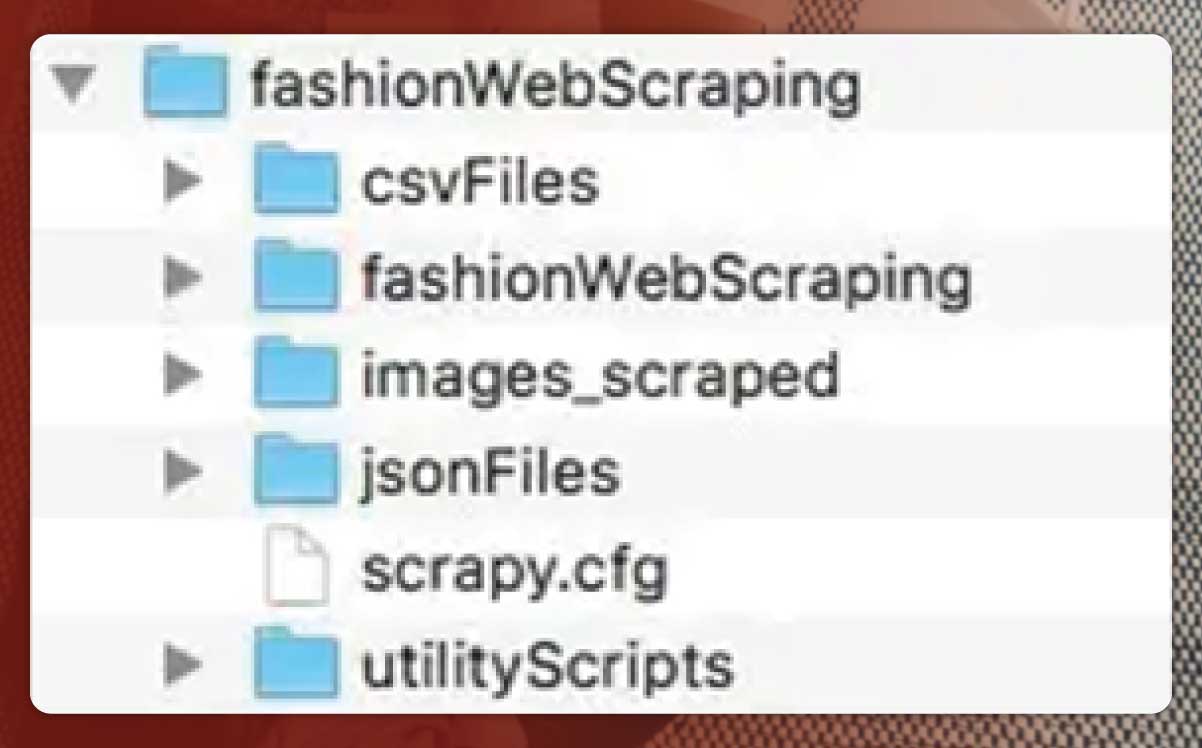

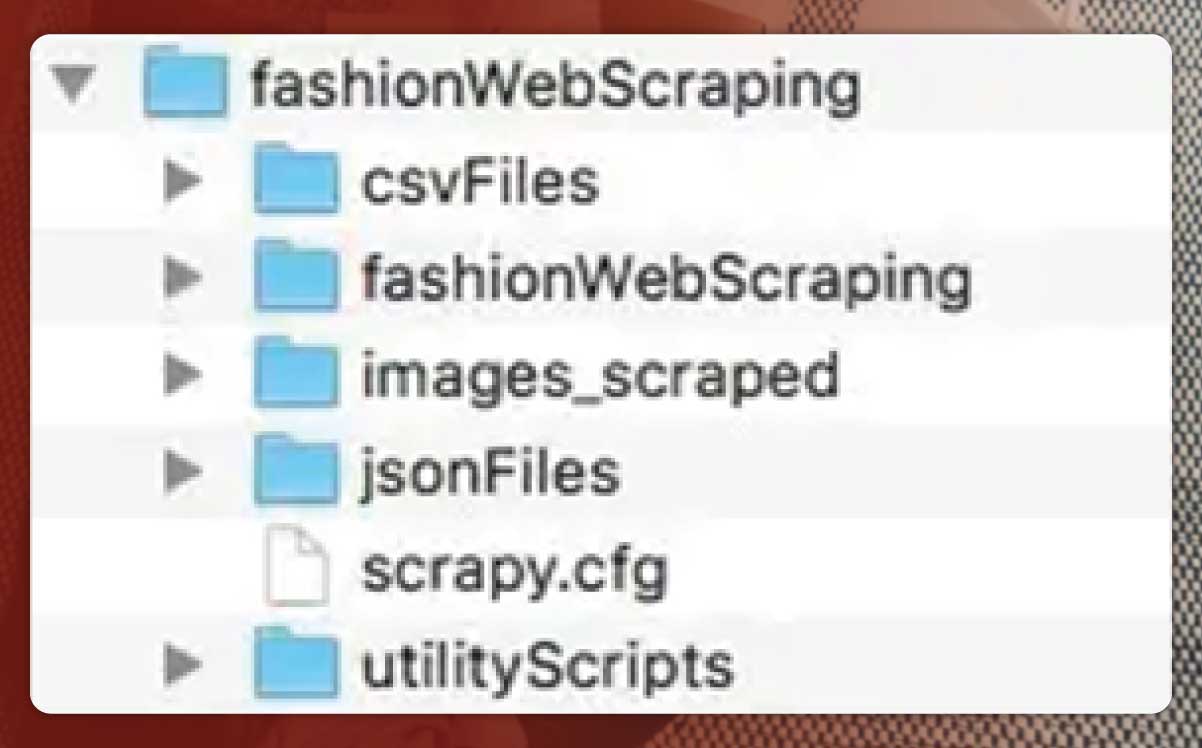

Project Files & Folders

We created a folder structure on the local computer so that the project files are neatly placed in separate folders.

The ‘csvFiles’ folder holds one CSV file for each website to be scraped. The spiders read these CSV files to find the ‘starting URLs’ for scraping, so we do not need to hard-code them in the spiders.

The ‘fashionWebScraping’ folder holds the Scrapy spiders along with helper scripts such as ‘pipelines.py’, ‘settings.py’, and ‘items.py’. We need to modify a few of these Scrapy helper scripts for the scraping procedure to run successfully.

The product images that are scraped are saved in the ‘images_scraped’ folder.

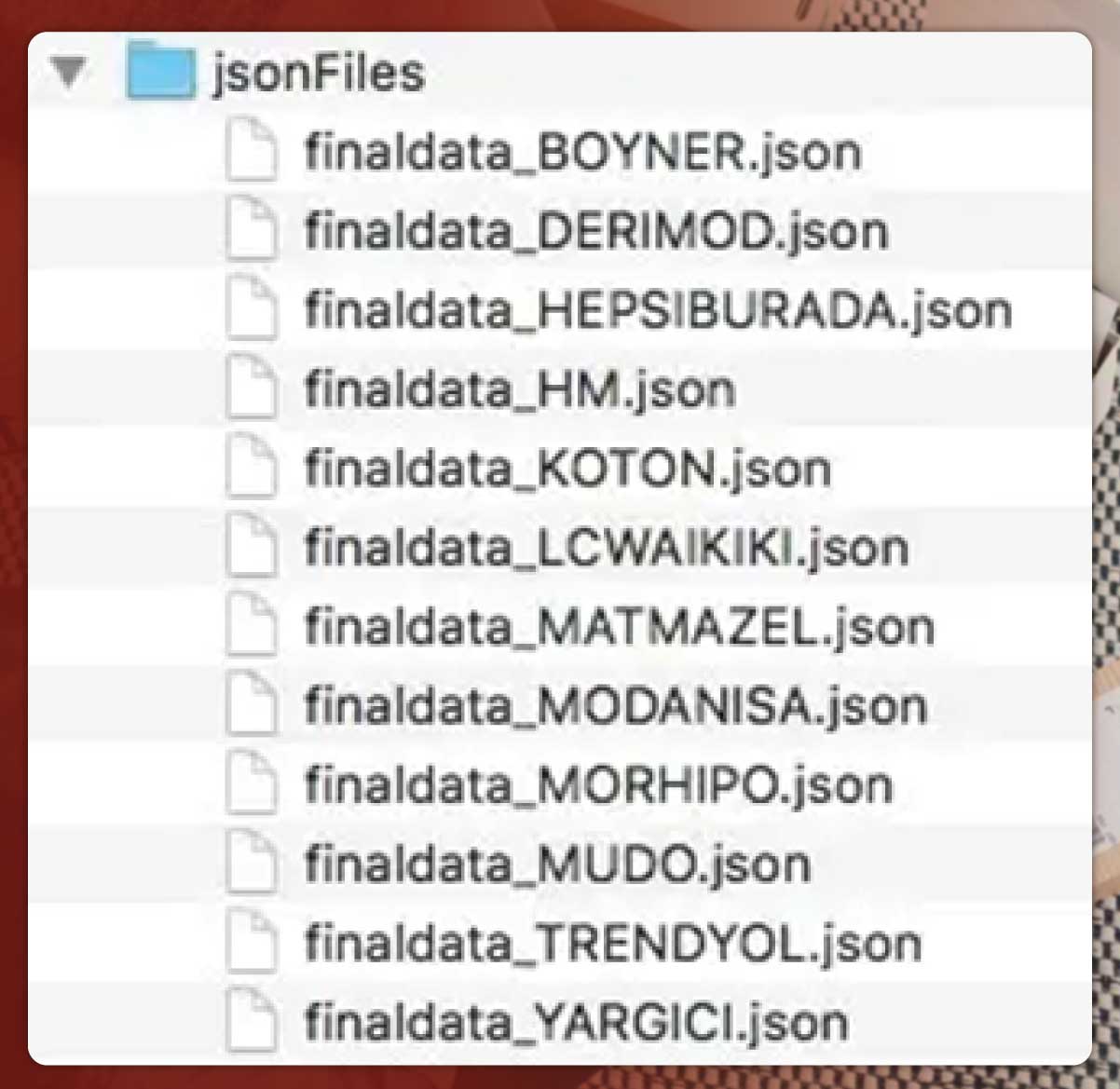

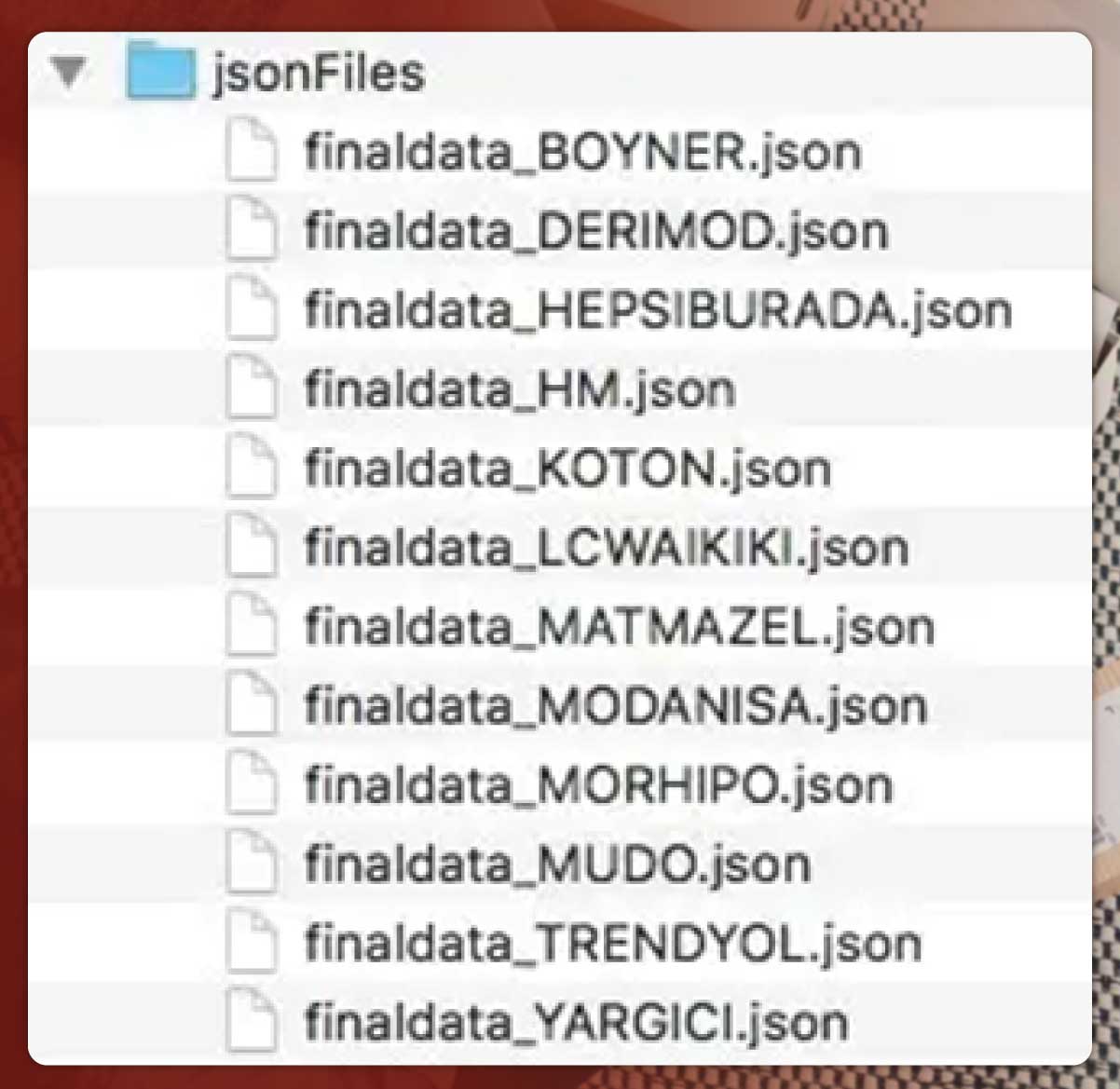

During scraping, all product data such as price, name, product link, and image link is saved in JSON files within the ‘jsonFiles’ folder.

There are also utility scripts for tasks such as:

- ‘deldub.py’ for detecting and removing duplicate product data in the JSON files after data extraction ends.

- ‘deleteFiles.py’ for deleting all the JSON files produced in the previous scraping session.

- ‘jsonPrep.py’ for detecting and deleting null line items in the JSON files after data extraction ends.

- ‘jsonToes.py’ for populating a remote Elasticsearch cluster from the JSON files. Elasticsearch provides a full-text, real-time search experience.

- ‘sitemap_gen.py’ for generating a sitemap that covers all the product links.

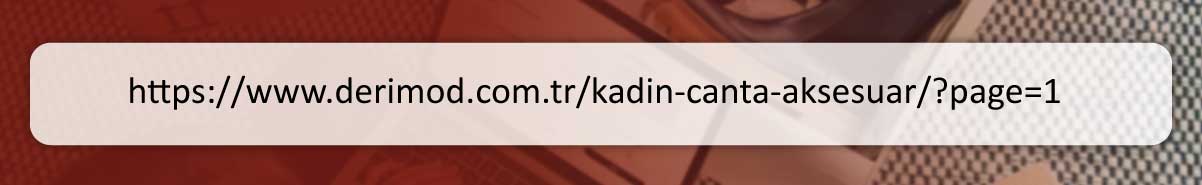

Step 2: Understand Each Site’s URL Structure and Populate the CSV Files with Starting URLs

After creating the project folders, the next step is to populate the CSV files with the starting URLs for every website we want to scrape.

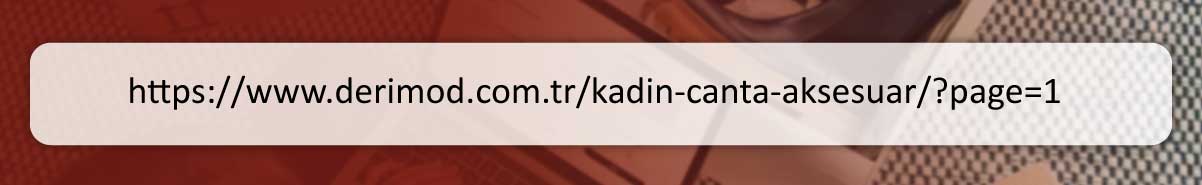

Nearly every e-commerce site uses pagination to guide users through its product lists. Each time you move to the next page, a page parameter in the URL increases. Look at the example URL below, where a ‘page’ parameter is used.

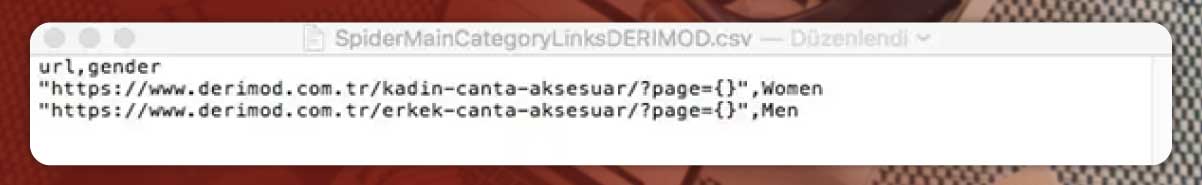

We use a {} placeholder in the URL so that we can iterate over pages by incrementing the value of ‘page’. We also use a ‘gender’ column in the CSV file to define the gender category of each URL.

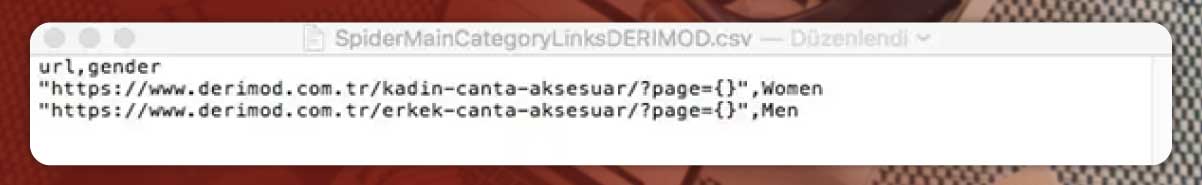

The final CSV file therefore contains one row per starting URL, with its ‘gender’ category and the {} placeholder in place of the page number.

The same principles apply to the rest of the sites in the project.
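As a rough illustration of how a spider might consume such a CSV file, the sketch below reads rows and fills the {} placeholder with successive page numbers. The column name `url`, the example.com URLs, and the page limit are assumptions made for illustration; only the {} placeholder and the ‘gender’ column come from the description above.

```python
import csv
import io

# Hypothetical CSV content; in the project this would be a file in 'csvFiles'.
SAMPLE_CSV = """gender,url
women,https://www.example.com/handbags?gender=women&page={}
men,https://www.example.com/handbags?gender=men&page={}
"""


def build_page_urls(csv_text, max_pages):
    """Return (gender, url) pairs for pages 1..max_pages of every CSV row."""
    pages = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        for page in range(1, max_pages + 1):
            # str.format fills the {} placeholder with the page number.
            pages.append((row["gender"], row["url"].format(page)))
    return pages


if __name__ == "__main__":
    for gender, url in build_page_urls(SAMPLE_CSV, max_pages=2):
        print(gender, url)
```

Keeping the URL templates in a CSV file means a new category or seller page can be added without touching the spider code.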

Step 3: Modify ‘settings.py’ and ‘items.py’


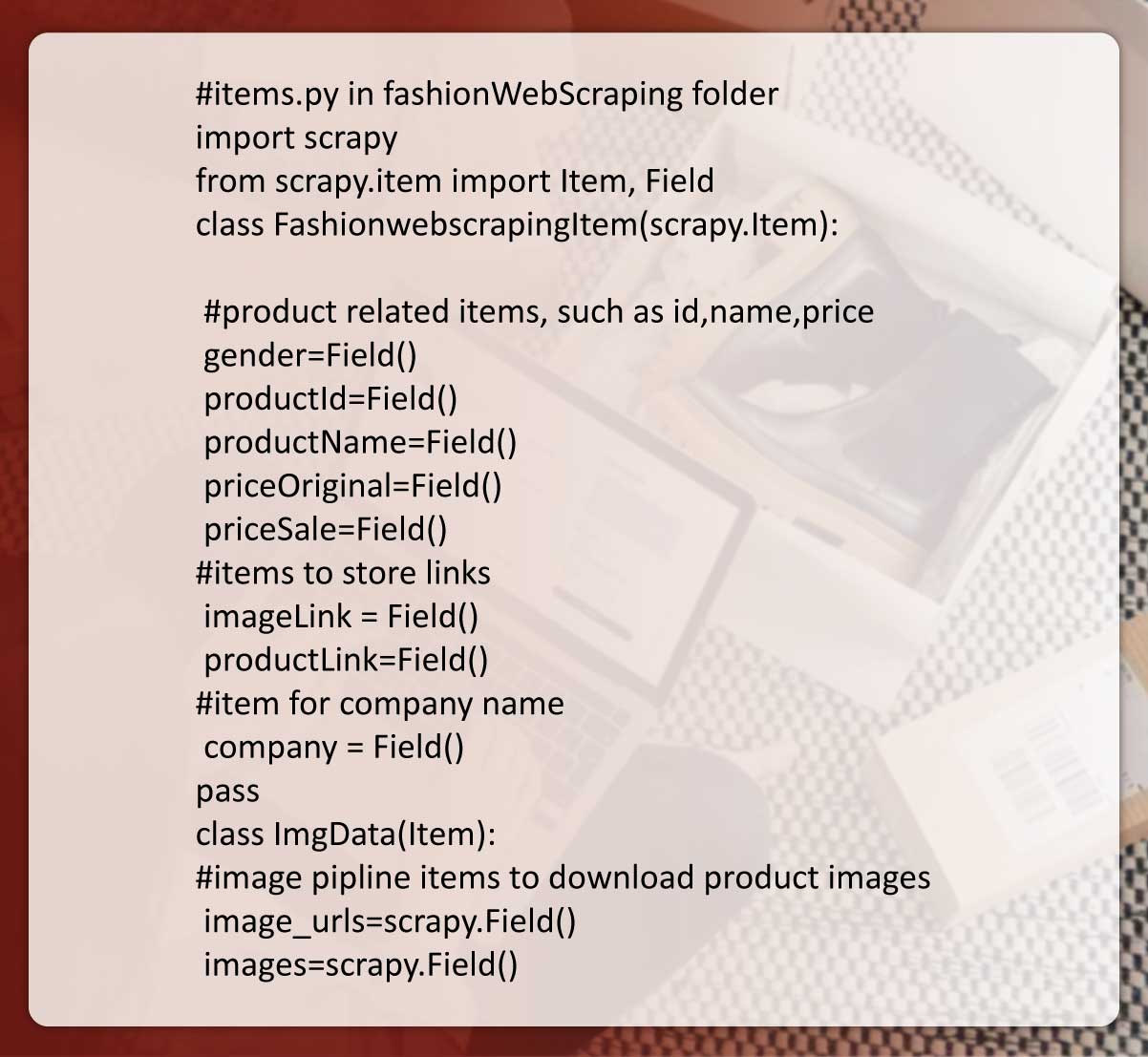

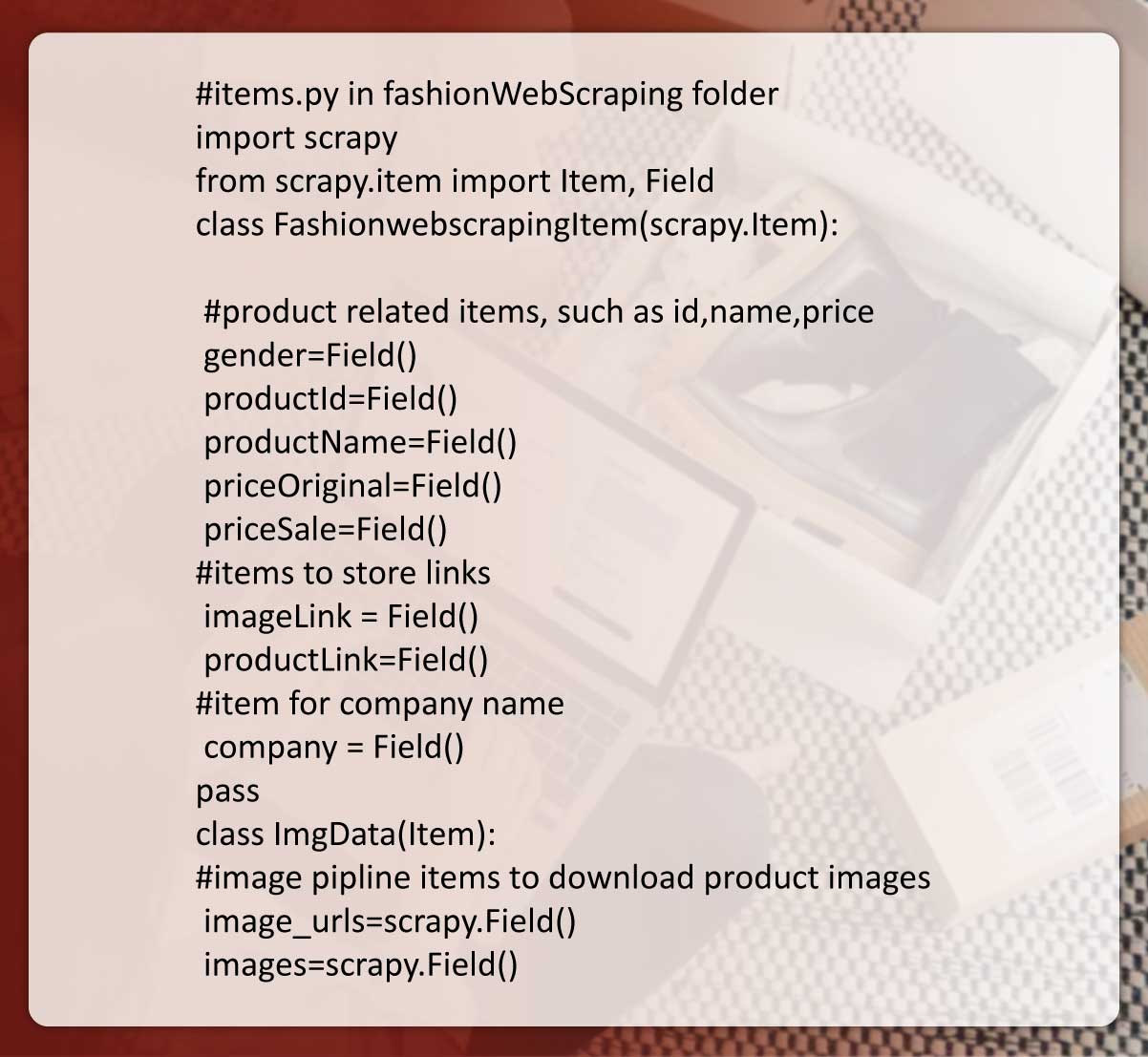

To start scraping, we need to modify ‘items.py’ to define the ‘item objects’ that store the extracted data.

To define a common output data format, Scrapy provides the Item class. Item objects are simple containers used to collect the scraped data. They provide a dictionary-like API with a convenient syntax for declaring their available fields.

Source: scrapy.org

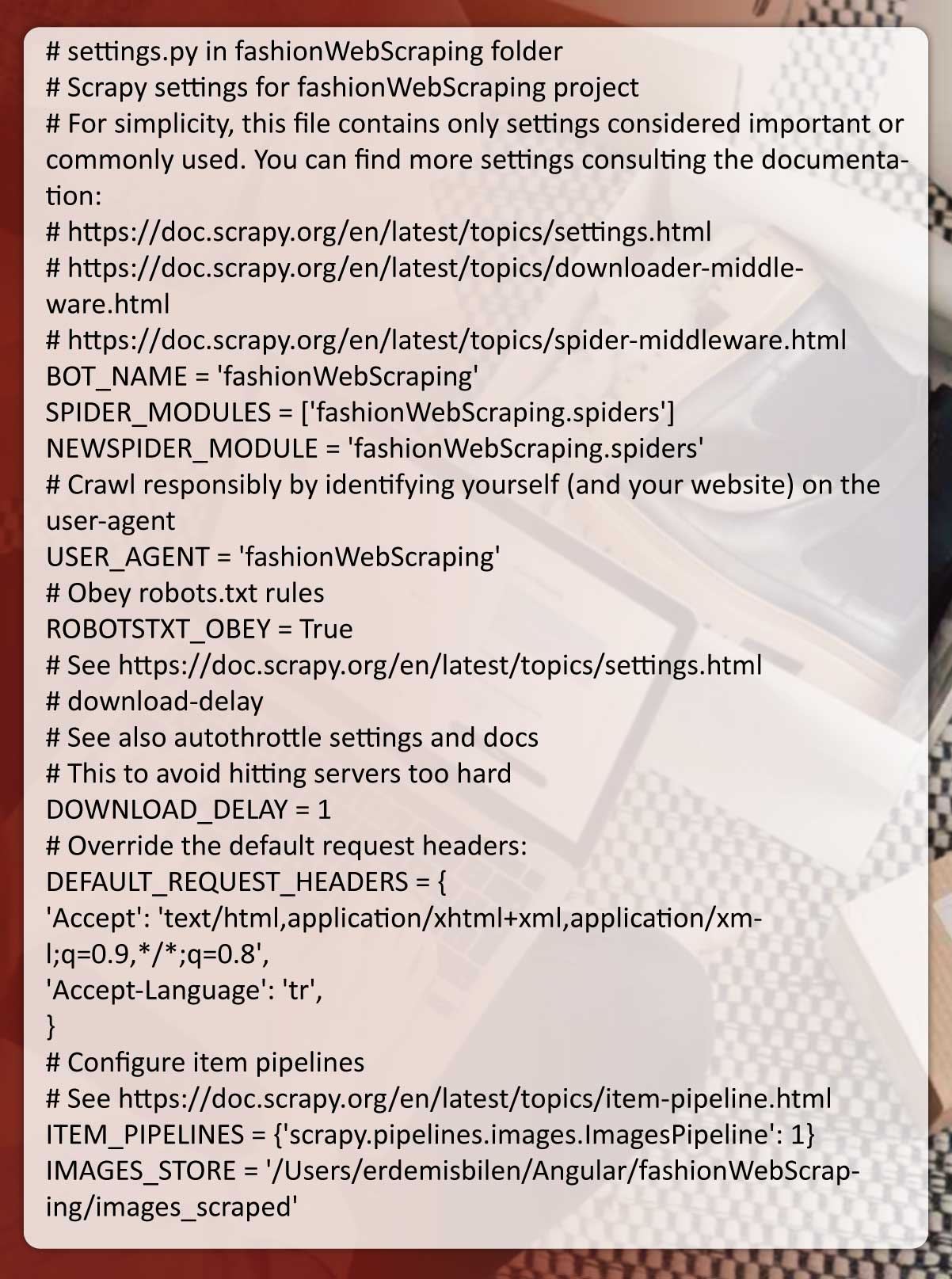

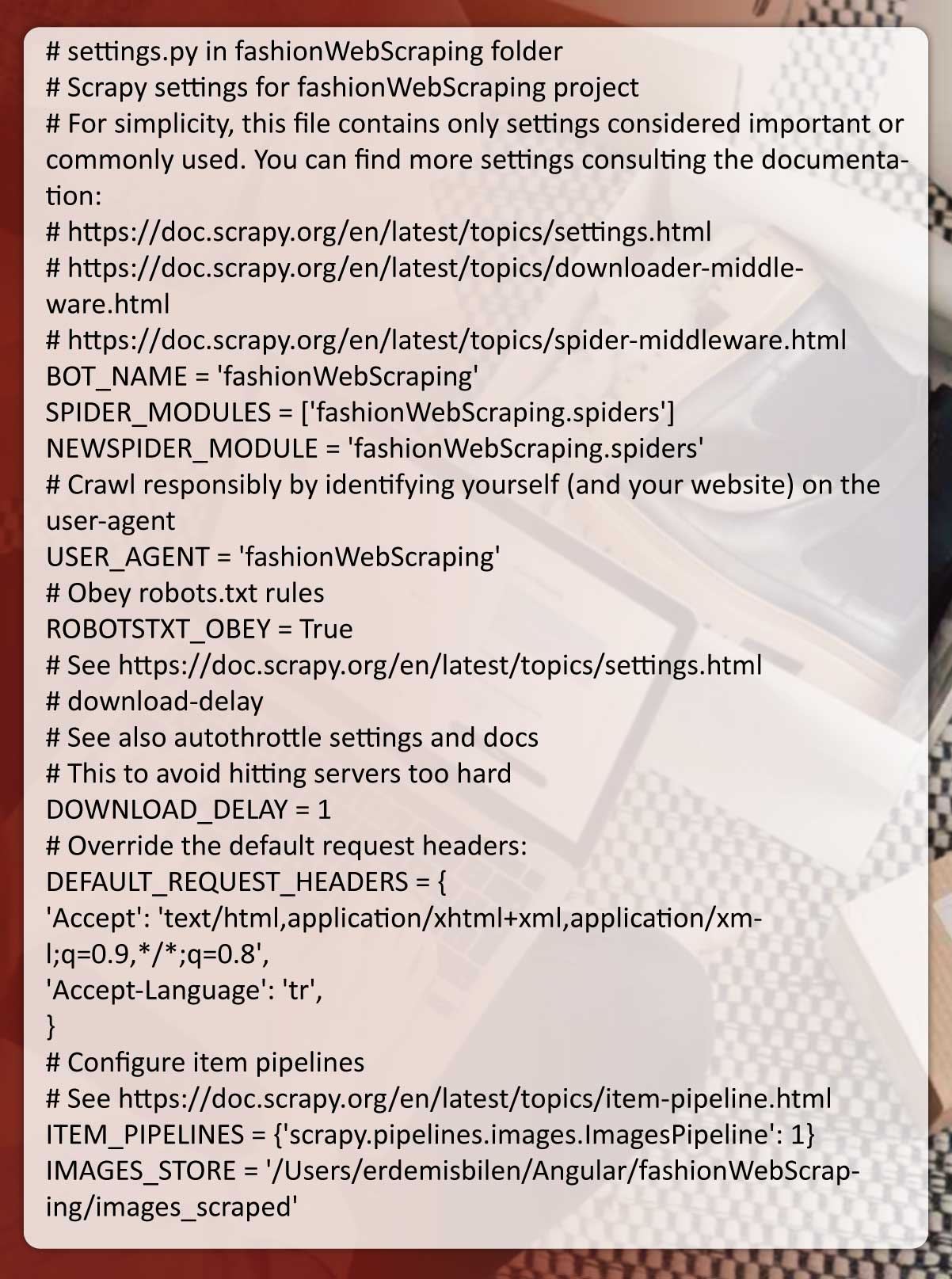

After that, we need to modify ‘settings.py’. This is necessary to customize the image pipeline and the behavior of the spiders.

The Scrapy settings allow you to customize the behavior of all Scrapy components, including the core, extensions, pipelines, and the spiders themselves.

Source: scrapy.org
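A minimal sketch of what such a ‘settings.py’ could contain is shown below. The setting names are standard Scrapy settings, but the specific values (the download delay, the pipeline priority, the spider module path) are assumptions and not necessarily the project's actual configuration.

```python
# settings.py (illustrative values only, not the project's real file)

BOT_NAME = "fashionWebScraping"

# Where Scrapy looks for spider modules (assumed default project layout).
SPIDER_MODULES = ["fashionWebScraping.spiders"]
NEWSPIDER_MODULE = "fashionWebScraping.spiders"

# Respect robots.txt and pause between requests to limit load on sellers.
ROBOTSTXT_OBEY = True
DOWNLOAD_DELAY = 1  # seconds; assumed value

# Enable Scrapy's built-in image pipeline and tell it where to save files.
ITEM_PIPELINES = {
    "scrapy.pipelines.images.ImagesPipeline": 1,
}
IMAGES_STORE = "images_scraped"
```

By default, the built-in image pipeline reads image links from an `image_urls` field on each item and requires the Pillow library to be installed.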

‘settings.py’ and ‘items.py’ are shared by all the spiders in the project.

Step 4: Making Spiders

Spiders are classes that define how a certain site (or a group of sites) will be scraped, including how to perform the crawl (i.e. follow links) and how to extract structured data from its pages (i.e. scraping items). In other words, spiders are the place where you define the custom behavior for crawling and parsing pages for a particular site (or, in some cases, a group of sites).

Source: scrapy.org

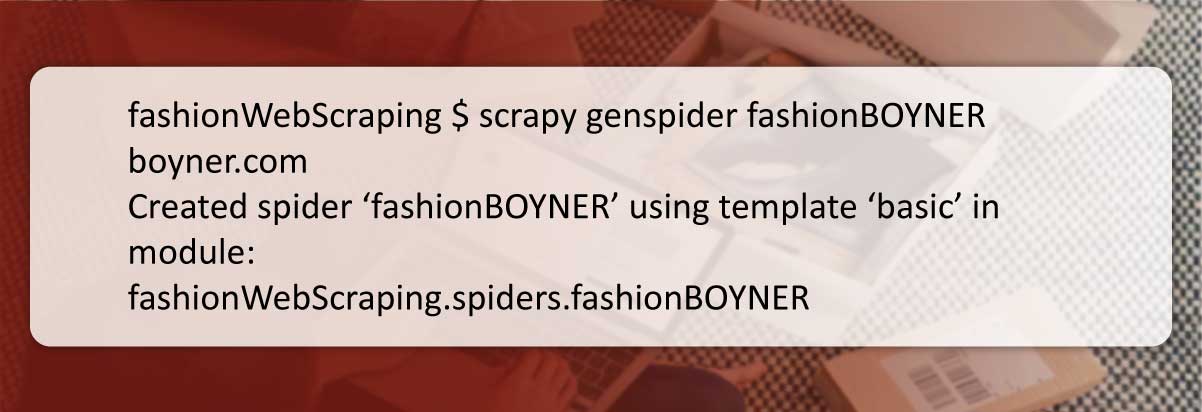

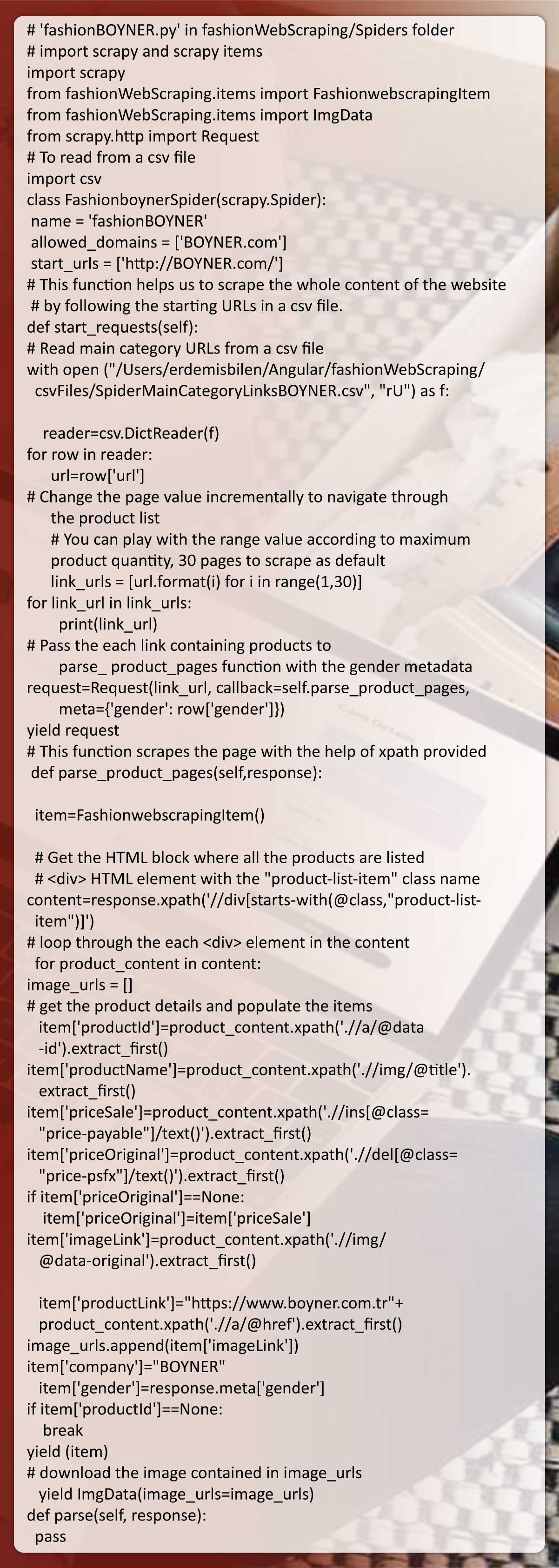

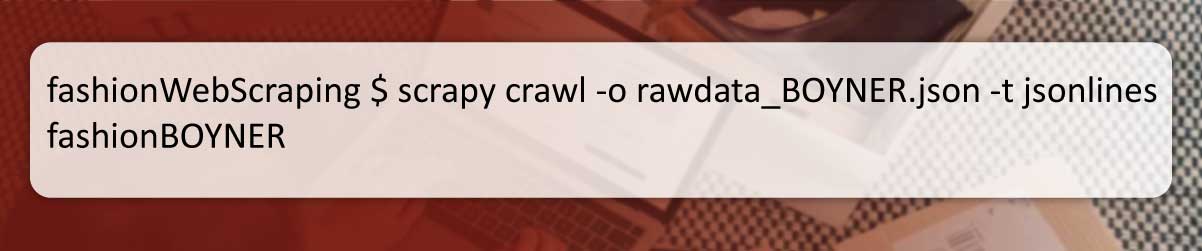

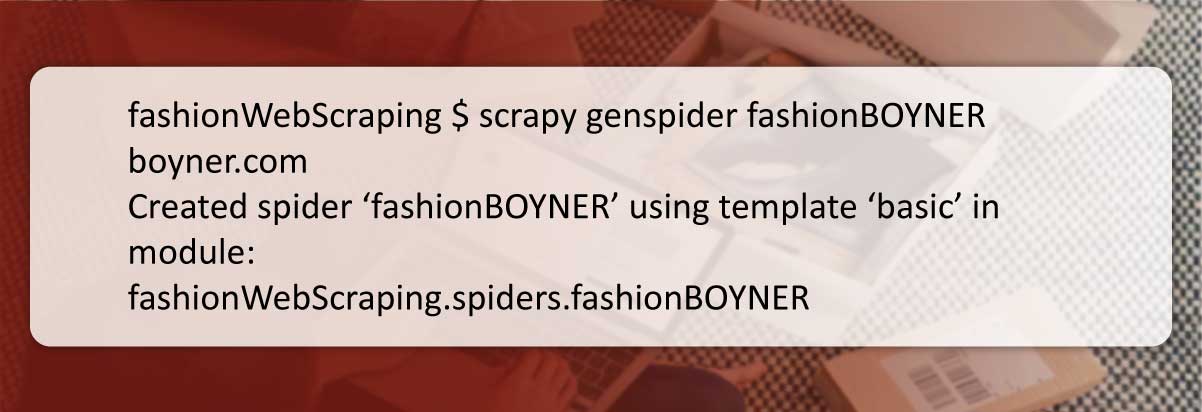

The shell command above creates an empty spider file. Now we can write the code in the fashionBOYNER.py file:

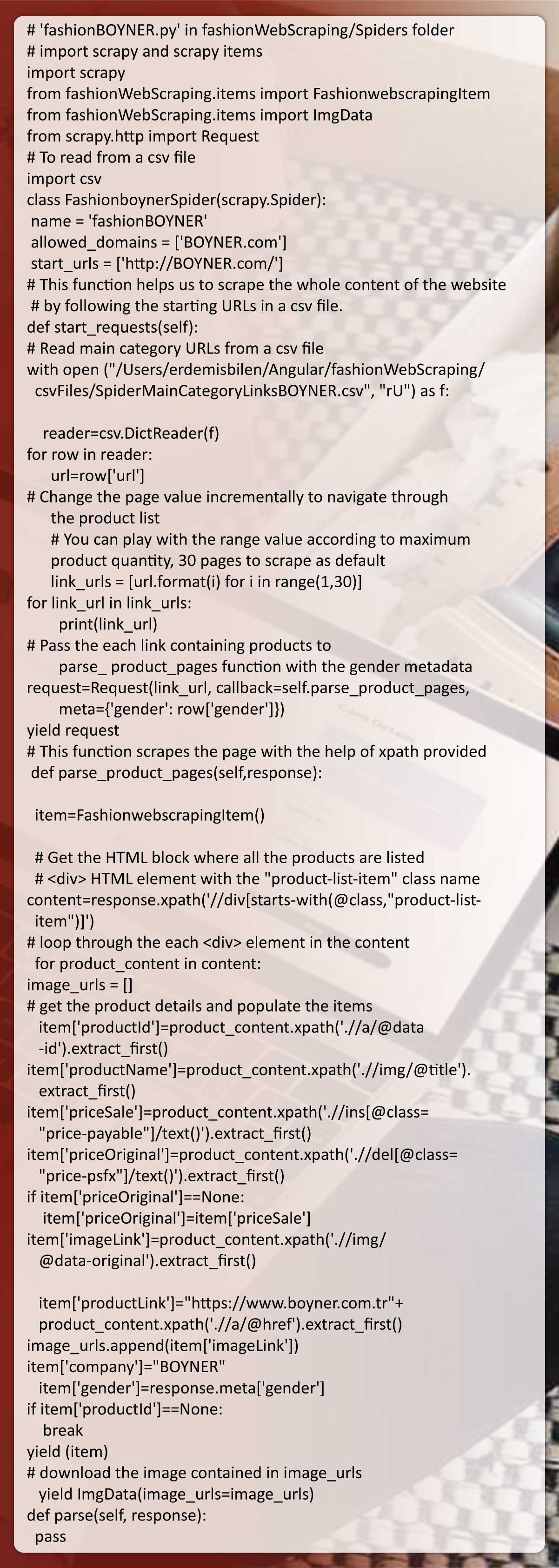

The spider class has two functions: ‘start_requests’ and ‘parse_product_pages’.

In ‘start_requests’, we read the starting URL data from the CSV file we prepared earlier. Then we iterate over the {} placeholder and pass the URLs of the product pages to the ‘parse_product_pages’ function.

We can also pass the ‘gender’ metadata to ‘parse_product_pages’ through the ‘Request’ method, using the ‘meta={‘gender’: row[‘gender’]}’ argument.

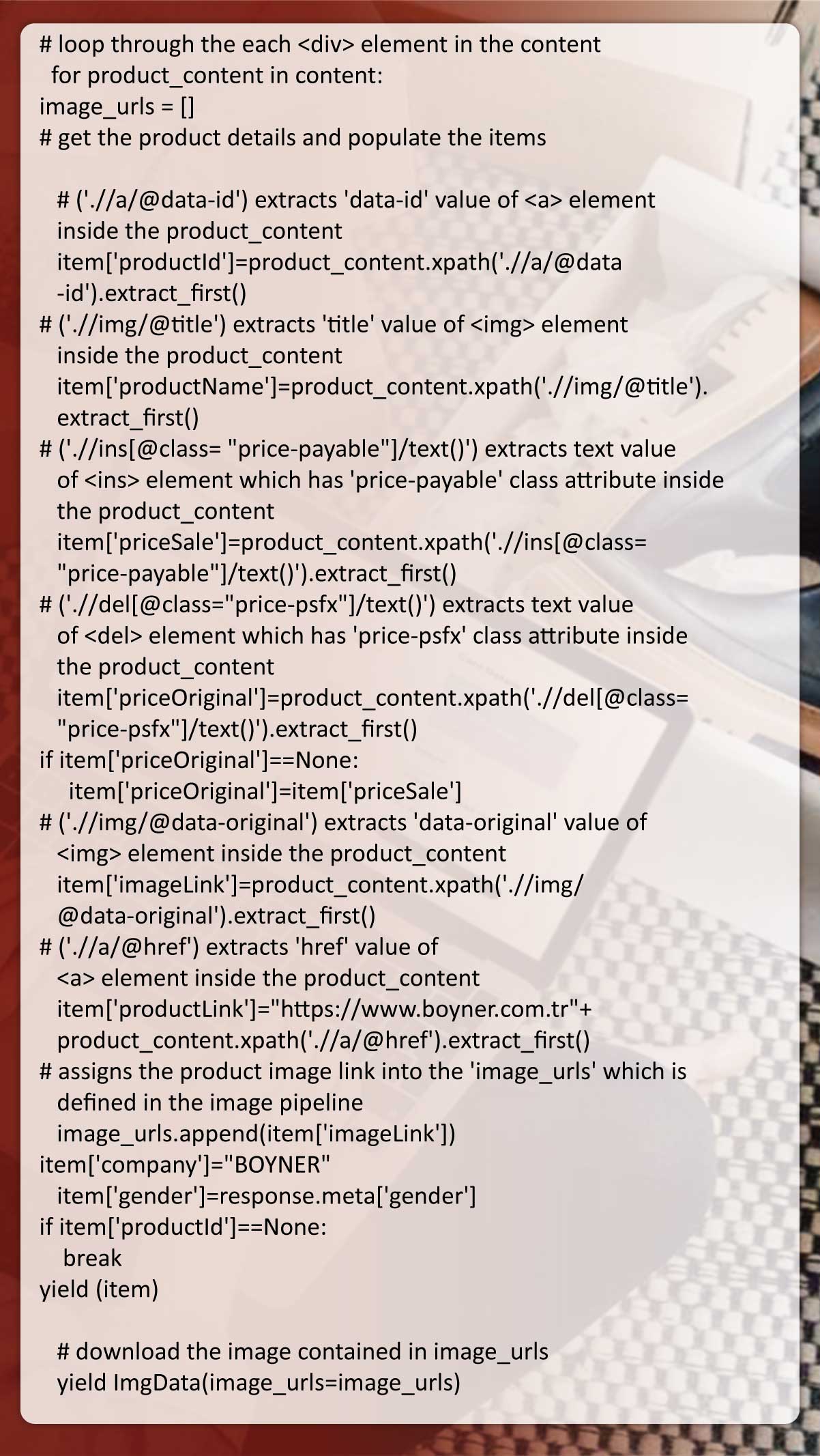

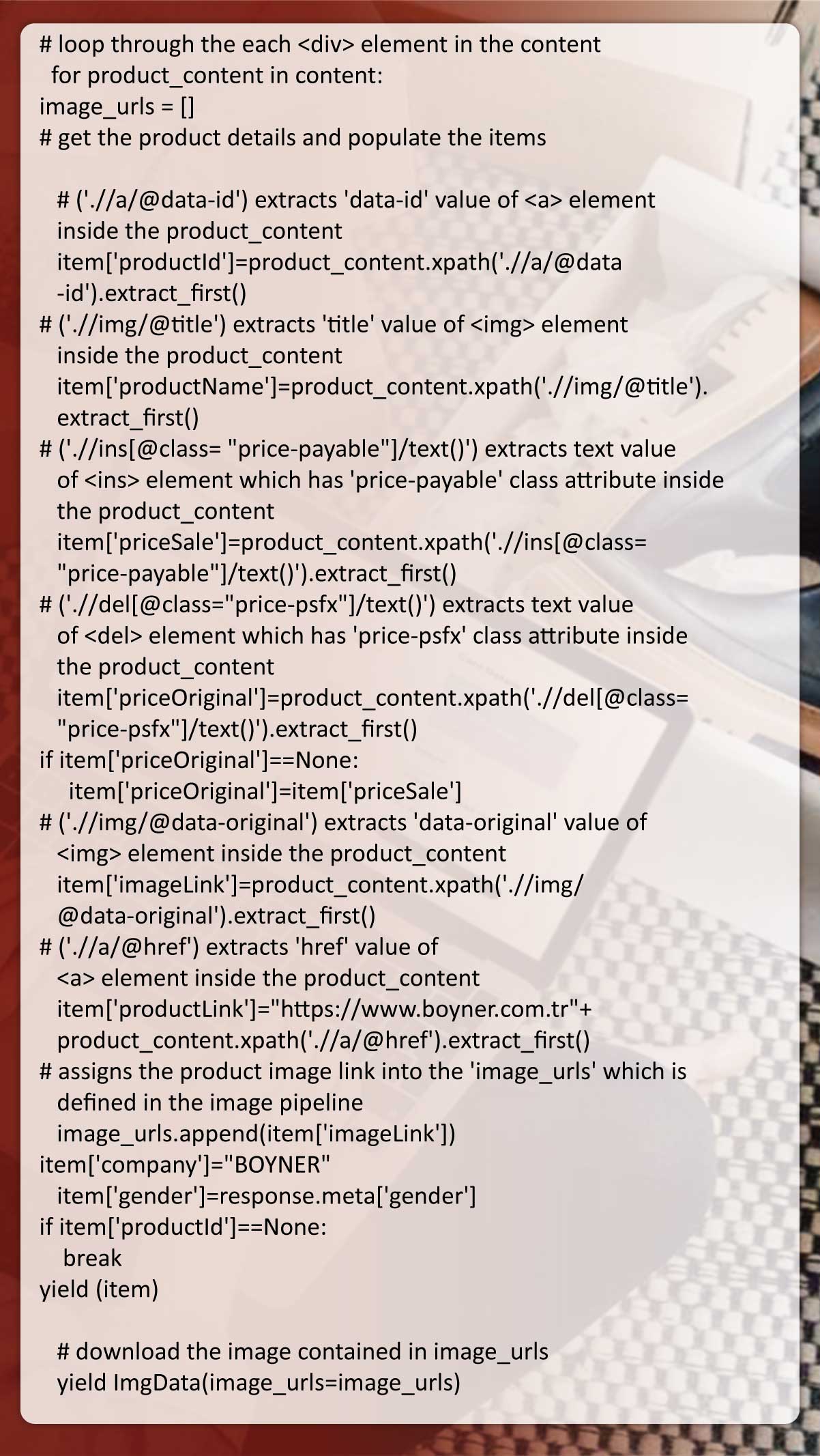

In the ‘parse_product_pages’ function, we perform the actual extraction and populate the Scrapy items with the extracted data.

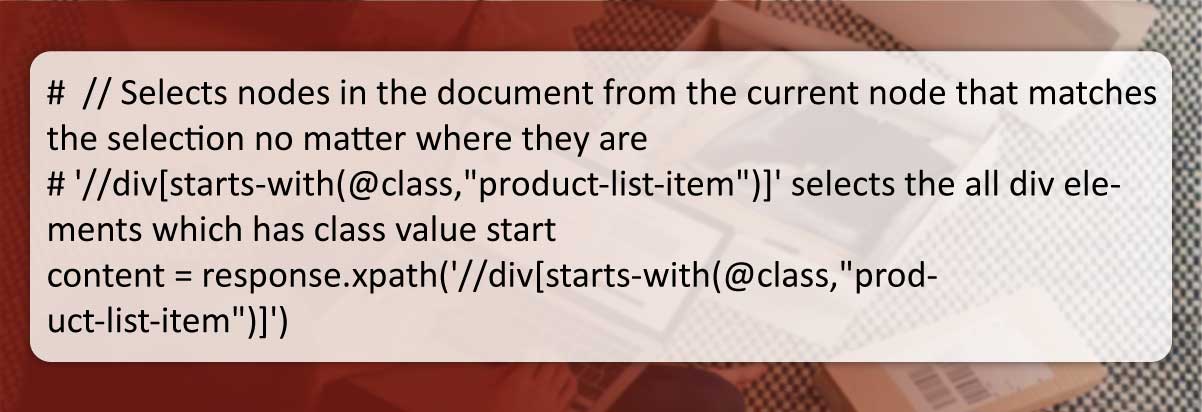

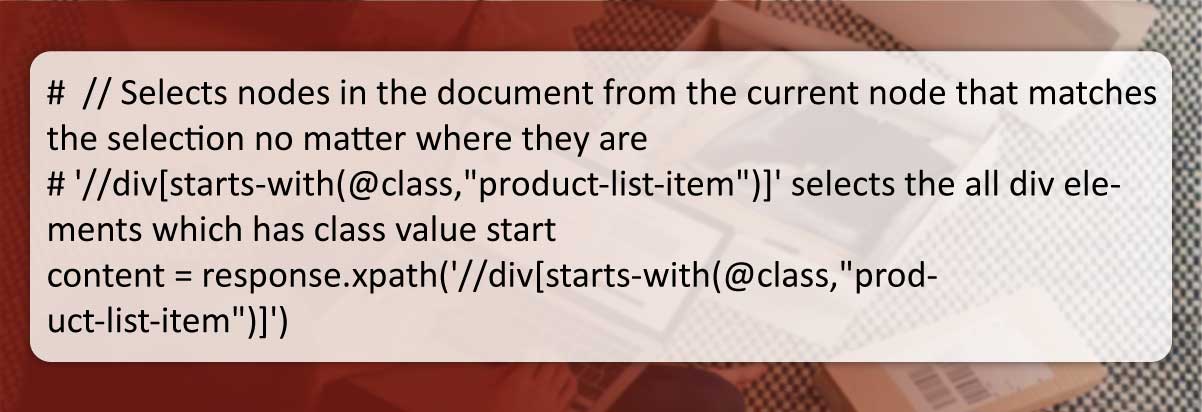

We use XPath to locate the HTML sections that contain product data on a web page.

The first XPath expression selects the entire product listing from the page currently being scraped. All the necessary product data is contained in the ‘div’ elements stored in ‘content’.

We have to loop over ‘content’ to reach the individual products and store them in Scrapy items. Using XPath expressions, we can easily find the required HTML elements inside ‘content’.
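The select-then-loop pattern described above can be sketched without Scrapy. The example below is only a stand-in: it uses the limited XPath subset of Python's standard `xml.etree.ElementTree` on a small, well-formed fragment, whereas the spiders use Scrapy selectors, which support full XPath on real-world HTML. The markup, class names, and field names are invented for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed listing fragment; real pages differ per seller.
SAMPLE_HTML = """
<html><body>
  <div class="product-list">
    <div class="product">
      <a class="name" href="/bag-1">Leather Tote</a>
      <span class="price">499.90</span>
    </div>
    <div class="product">
      <a class="name" href="/bag-2">Canvas Shoulder Bag</a>
      <span class="price">189.00</span>
    </div>
  </div>
</body></html>
"""


def parse_products(html_text, gender):
    """Extract one dict per product block found in the listing markup."""
    root = ET.fromstring(html_text)
    # First expression: select every product block on the page.
    content = root.findall(".//div[@class='product']")
    items = []
    for product in content:
        # Relative expressions: locate fields inside one product block.
        link = product.find("./a[@class='name']")
        price = product.find("./span[@class='price']")
        items.append({
            "gender": gender,
            "name": link.text.strip(),
            "link": link.get("href"),
            "price": float(price.text),
        })
    return items


if __name__ == "__main__":
    for item in parse_products(SAMPLE_HTML, gender="women"):
        print(item)
```

Selecting the product blocks first and then using relative expressions inside each block keeps the name, link, and price of one product together, even when some products are missing a field elsewhere on the page.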


Step 5: Run Spiders and Store the Extracted Data in JSON Files

During scraping, every product item is saved in a JSON file. Each website has its own JSON file, which is filled with data on every spider run.

The jsonlines format can be more memory-efficient than plain JSON, particularly if you scrape many web pages in one session.

Note that the JSON file names begin with ‘rawdata’, indicating that the next step is to check and validate the extracted raw data before using it in the application.
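To show why the jsonlines format helps with memory, here is a small sketch using only the standard library. Each item is written as one JSON object per line, so a reader can process the file line by line instead of loading one large JSON array. The file name and the sample items are hypothetical; Scrapy's feed exports can produce this format directly, so the spiders do not need code like this.

```python
import json
import os
import tempfile


def write_jsonlines(items, path):
    """Write one JSON object per line."""
    with open(path, "w", encoding="utf-8") as handle:
        for item in items:
            handle.write(json.dumps(item, ensure_ascii=False) + "\n")


def read_jsonlines(path):
    """Yield items one at a time, without loading the whole file."""
    with open(path, encoding="utf-8") as handle:
        for line in handle:
            if line.strip():
                yield json.loads(line)


if __name__ == "__main__":
    sample = [
        {"name": "Leather Tote", "price": 499.9},
        {"name": "Canvas Shoulder Bag", "price": 189.0},
    ]
    with tempfile.TemporaryDirectory() as folder:
        # Hypothetical file name following the 'rawdata' prefix convention.
        path = os.path.join(folder, "rawdata_example.jl")
        write_jsonlines(sample, path)
        for item in read_jsonlines(path):
            print(item)
```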

Step 6: Clean and Validate the Extracted Data in the JSON Files

After extraction ends, the JSON files may contain items that need to be removed before the data is used in the application, such as line items with duplicate values or null fields. Both cases need a correction step, which we handle with ‘deldub.py’ and ‘jsonPrep.py’.

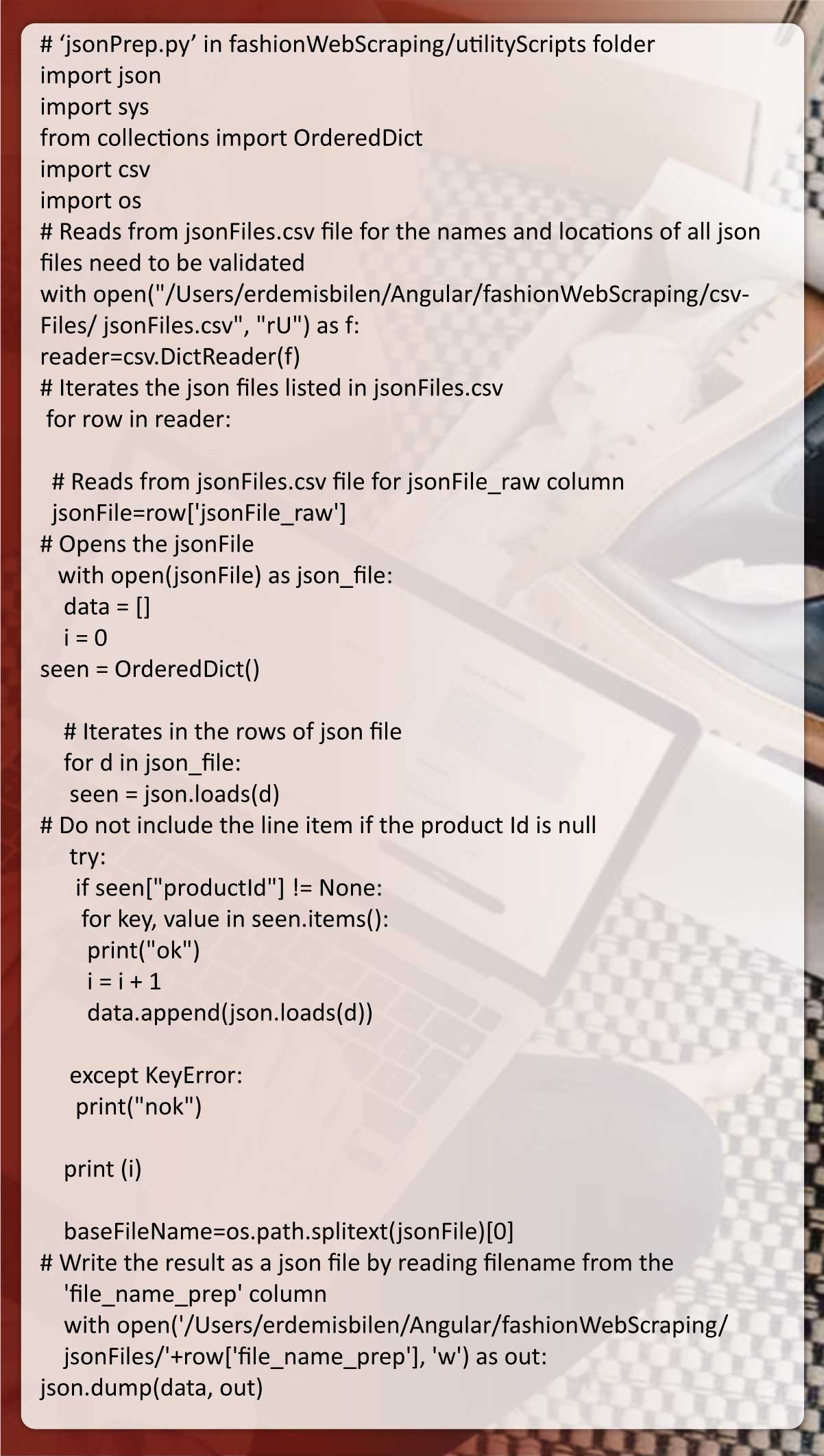

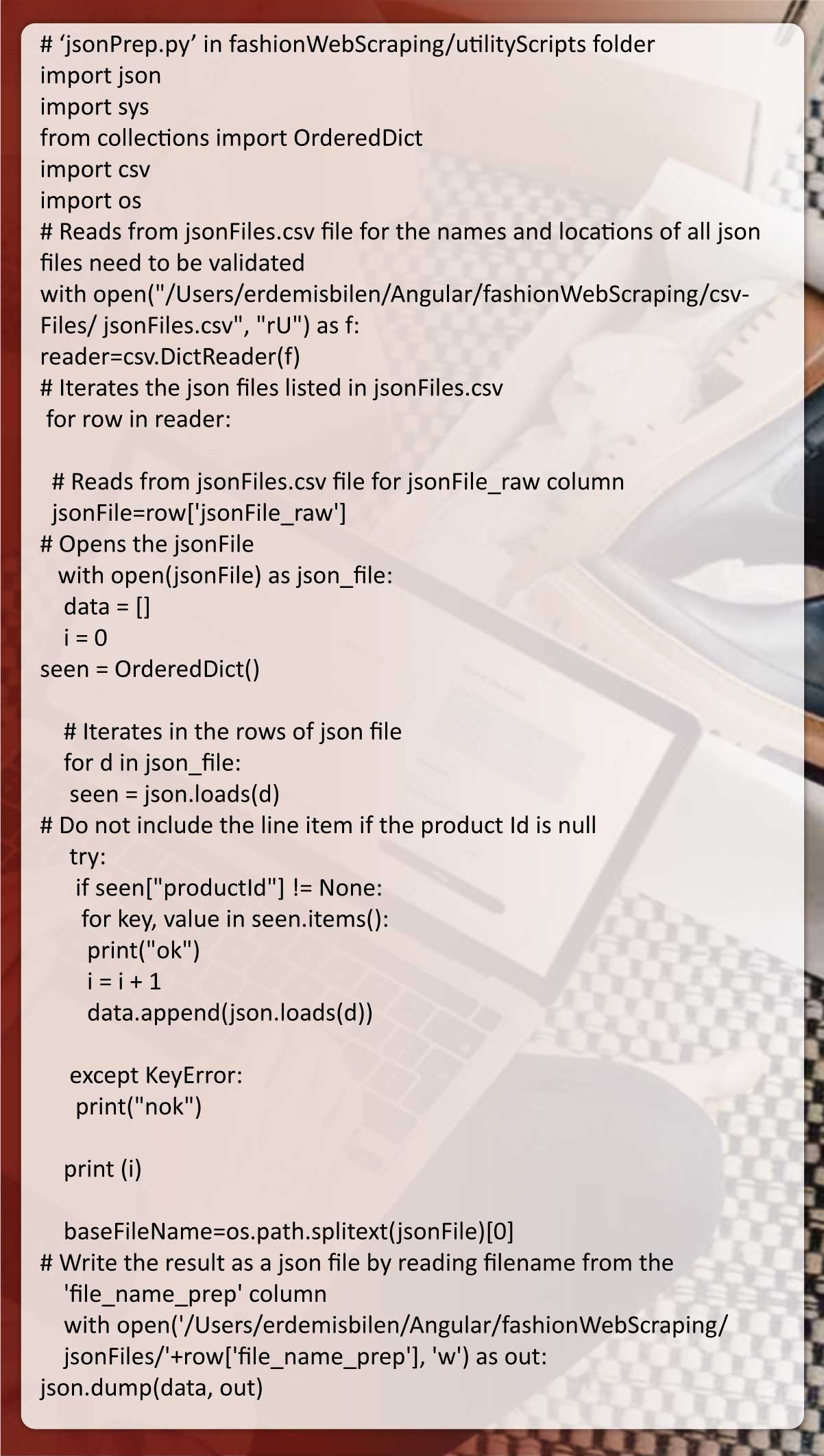

‘jsonPrep.py’ looks for line items with null values and removes them if detected. The code, with explanations, is given below:

After the null line items are removed, the results are saved in the ‘jsonFiles’ folder under a file name that begins with ‘prepdata’.
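The idea behind this null check can be sketched as follows. This is not the project's ‘jsonPrep.py’; it is a minimal illustration that assumes one JSON object per line and treats an item as null when the line itself is `null` or when any field is `null` or an empty string.

```python
import json


def drop_null_items(lines):
    """Return parsed items, skipping blank lines and items with null fields."""
    cleaned = []
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip empty lines
        item = json.loads(line)
        if not isinstance(item, dict):
            continue  # skip 'null' lines and anything that is not an object
        if any(value is None or value == "" for value in item.values()):
            continue  # skip items with a missing field
        cleaned.append(item)
    return cleaned


if __name__ == "__main__":
    raw_lines = [
        '{"name": "Leather Tote", "price": 499.9}',
        '{"name": null, "price": 10}',
        "null",
        '{"name": "Clutch", "price": 0}',
    ]
    for item in drop_null_items(raw_lines):
        print(item)
```

Note that a price of 0 is kept here, since only `null` and empty strings are treated as missing; a stricter rule may suit other datasets.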

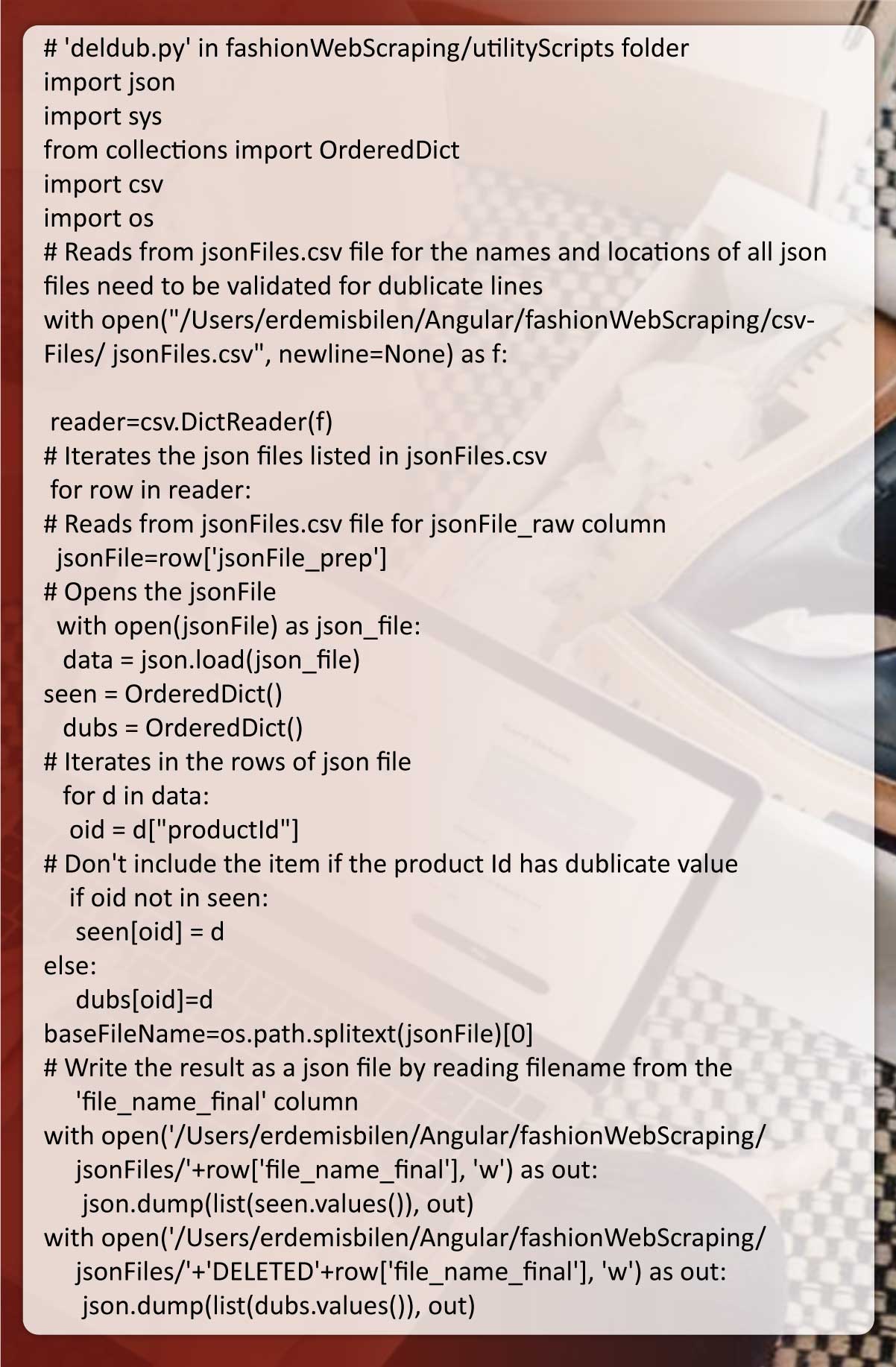

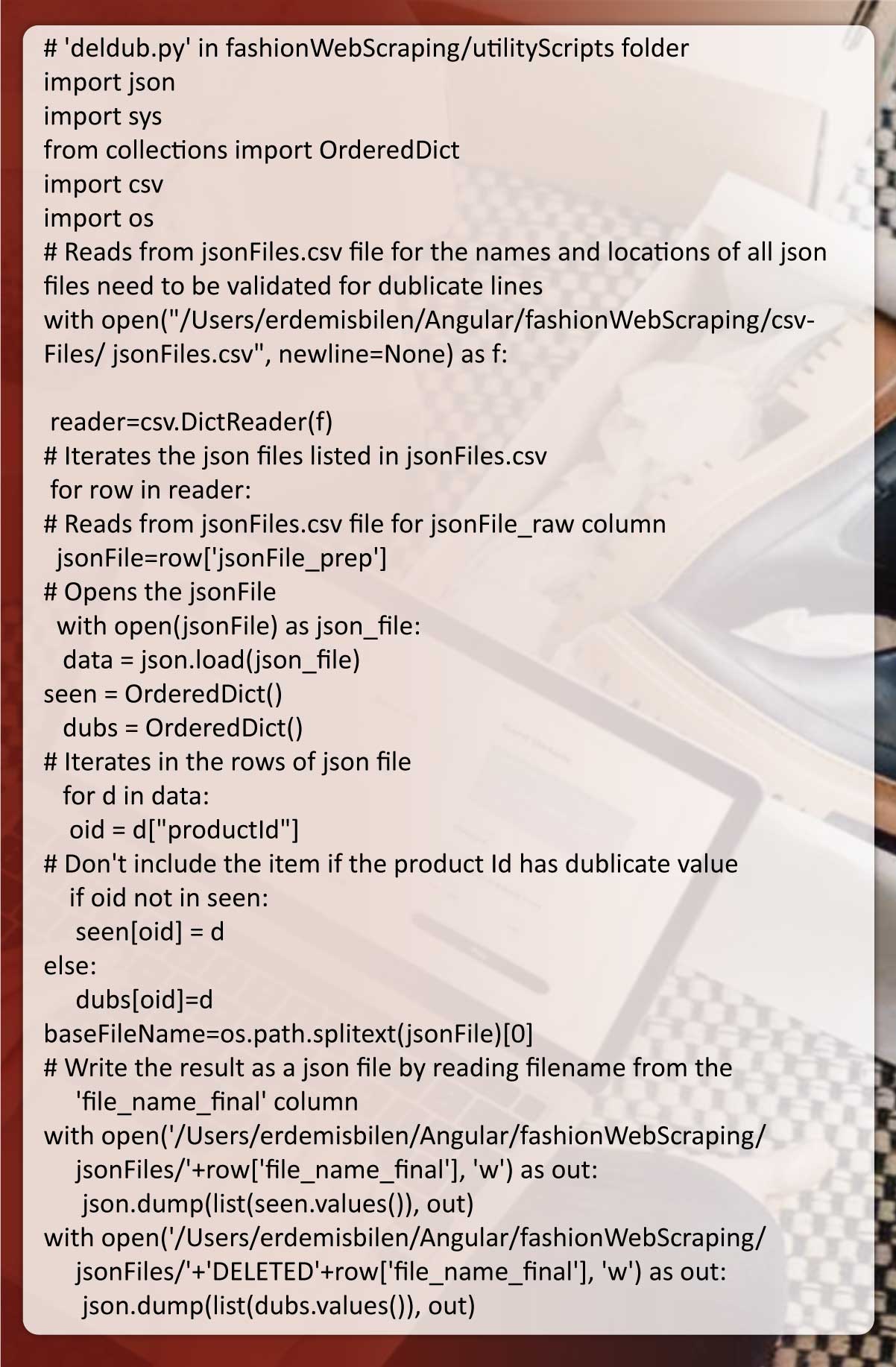

‘deldub.py’ looks for duplicate line items and removes them if detected. The code, with explanations, is given below:
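Duplicate removal of this kind can be sketched in a few lines. The example below is not the project's ‘deldub.py’; it assumes that two items are duplicates when they share the same product link, and the field name `link` is a hypothetical choice.

```python
def drop_duplicates(items, key_fields=("link",)):
    """Keep the first item seen for each combination of key fields."""
    seen = set()
    unique = []
    for item in items:
        key = tuple(item.get(field) for field in key_fields)
        if key in seen:
            continue  # an item with the same key was already kept
        seen.add(key)
        unique.append(item)
    return unique


if __name__ == "__main__":
    products = [
        {"name": "Leather Tote", "link": "/bag-1", "price": 499.9},
        {"name": "Leather Tote", "link": "/bag-1", "price": 499.9},
        {"name": "Clutch", "link": "/bag-2", "price": 120.0},
    ]
    for item in drop_duplicates(products):
        print(item)
```

Using a set of keys keeps the check fast even for large files, and the original order of the remaining items is preserved.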


Automate the Entire Scraping Workflow Using Apache Airflow

Now that we have defined the scraping procedure, we can move on to workflow automation. We use Apache Airflow, a Python-based workflow automation tool originally developed at Airbnb.

The terminal commands for installing and configuring Apache Airflow are given below.

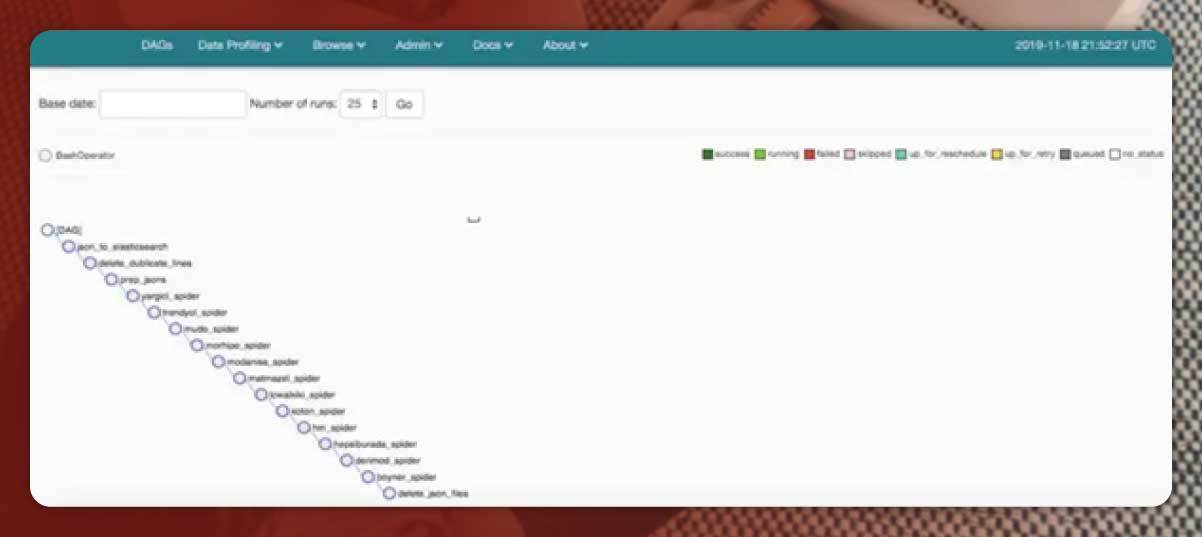

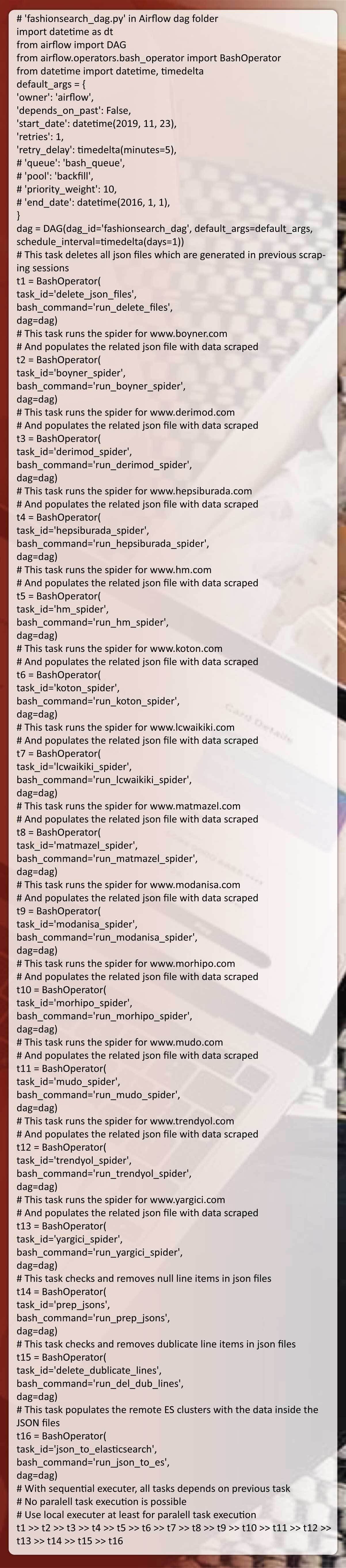

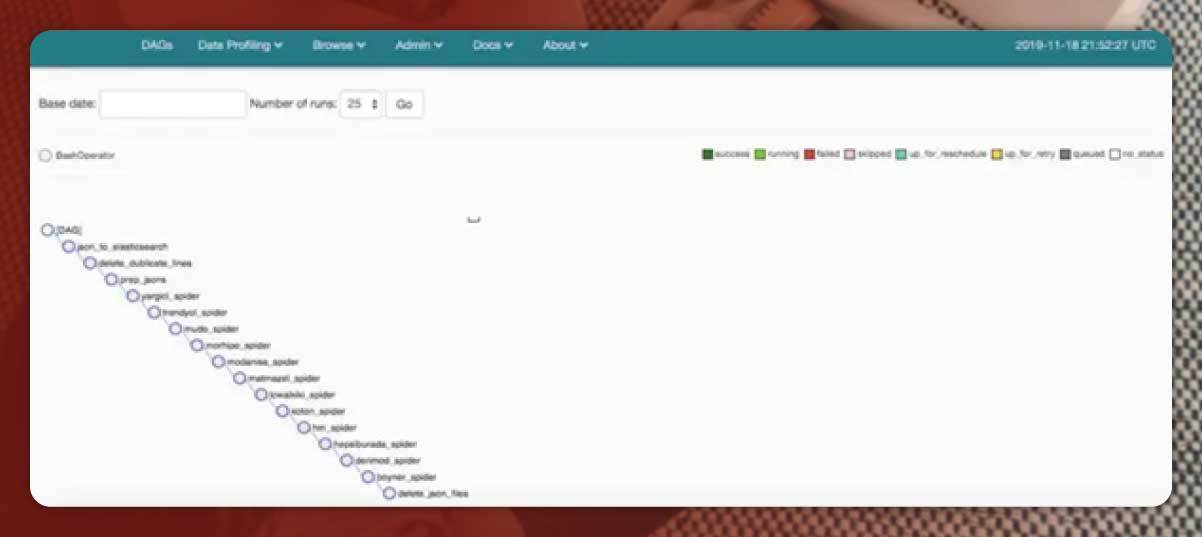

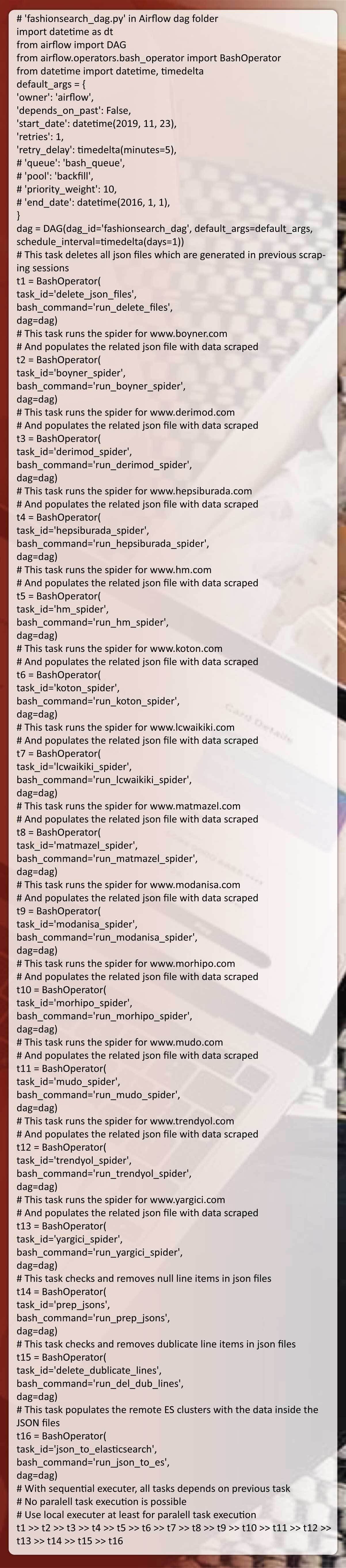

Generating a DAG file

In Airflow, a DAG (Directed Acyclic Graph) is a collection of all the tasks you want to run, organized in a way that reflects their relationships and dependencies.

For example, a simple DAG could consist of three tasks: A, B, and C. It could say that A has to run successfully before B can run, but C can run anytime. It could say that task A times out after 5 minutes, and that B can be restarted up to 5 times if it fails. It might also say that the workflow will run every night at 10 pm, but should not start until a certain date.
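The dependency part of this A, B, C example can be illustrated with Python's standard `graphlib` module (Python 3.9 or later). This is not Airflow's API and it ignores timeouts, retries, and schedules; it only shows how a directed acyclic graph determines a valid run order, and why a cycle is rejected.

```python
from graphlib import CycleError, TopologicalSorter

# Map each task to the set of tasks that must finish before it starts.
DEPENDENCIES = {
    "A": set(),
    "B": {"A"},  # B runs only after A has succeeded
    "C": set(),  # C has no dependencies and can run anytime
}


def execution_order(graph):
    """Return one valid run order; raises CycleError if the graph has a cycle."""
    return list(TopologicalSorter(graph).static_order())


if __name__ == "__main__":
    print(execution_order(DEPENDENCIES))
    try:
        # A depends on B and B depends on A: not a valid DAG.
        execution_order({"A": {"B"}, "B": {"A"}})
    except CycleError:
        print("cycle detected")
```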

A DAG is defined in a Python file and only organizes the task flow. We do not define the actual tasks within the DAG file.

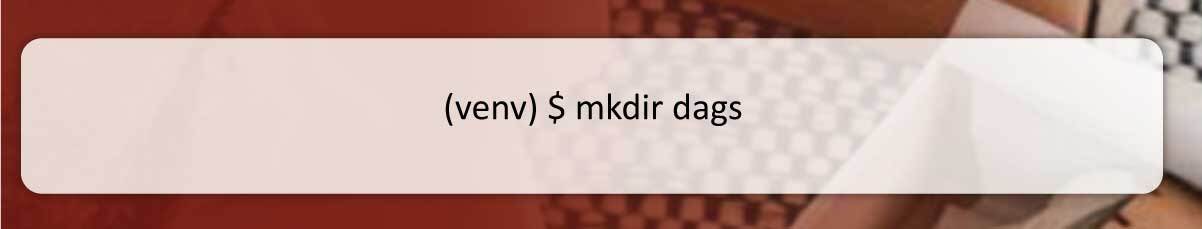

Let’s create a DAG folder with an empty Python file and start defining the workflow in Python code.

Airflow provides many operators for describing the tasks in a DAG file. The commonly used ones are listed below.

We plan to use only ‘BashOperator’, since all of our tasks are completed by running Python scripts.

Following this approach, we created a bash script for every task. You can find them in the GitHub repository.

To start a DAG workflow, we have to run the Airflow scheduler. The scheduler starts with the configuration specified in the ‘airflow.cfg’ file. It monitors every task in every DAG located in the ‘dags’ folder and triggers a task once its dependencies are met.

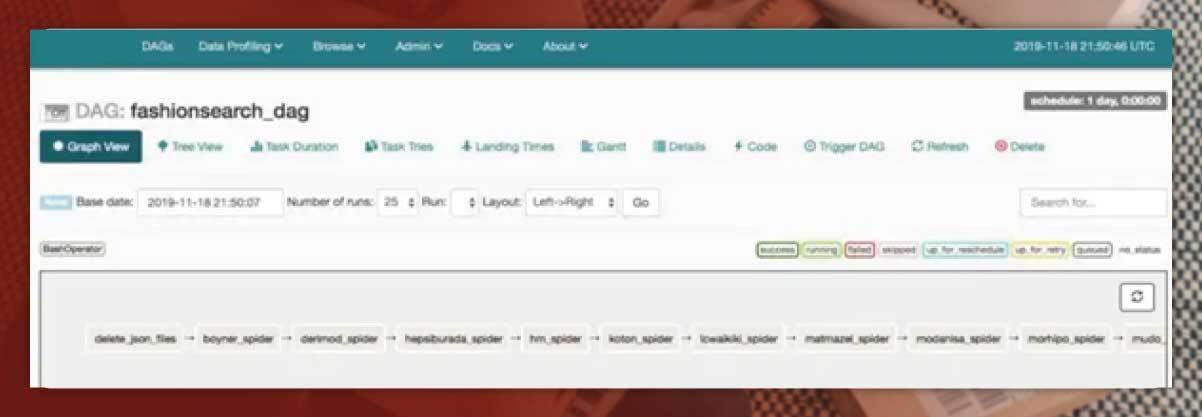

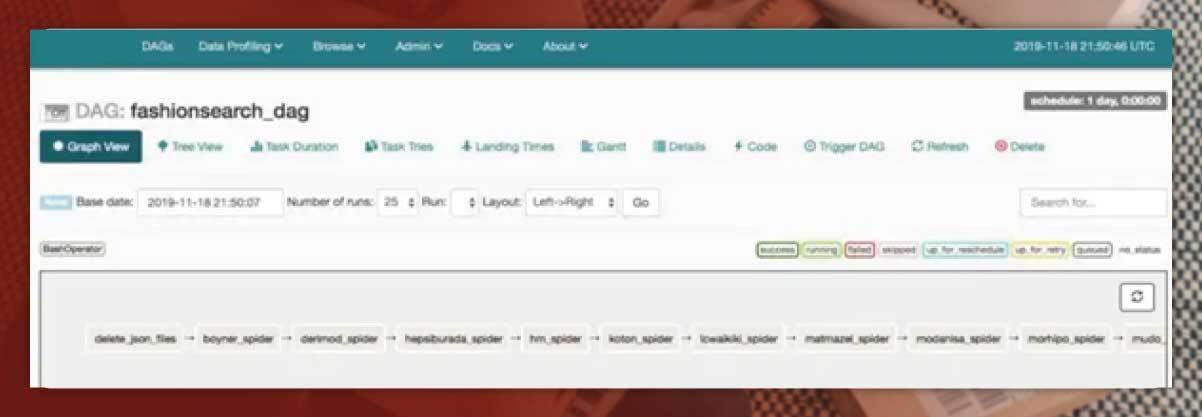

Once the Airflow scheduler is running, we can check the status of the tasks by visiting http://0.0.0.0:8080 in a browser. Airflow provides a user interface in which we can view and monitor the scheduled DAGs.

Conclusion

We have shown the web scraping workflow from start to finish. Hopefully, it helps you grasp the fundamentals of web scraping and workflow automation.

For more details, contact Actowiz Solutions. You can also reach us for all your mobile app scraping and web scraping service requirements.
