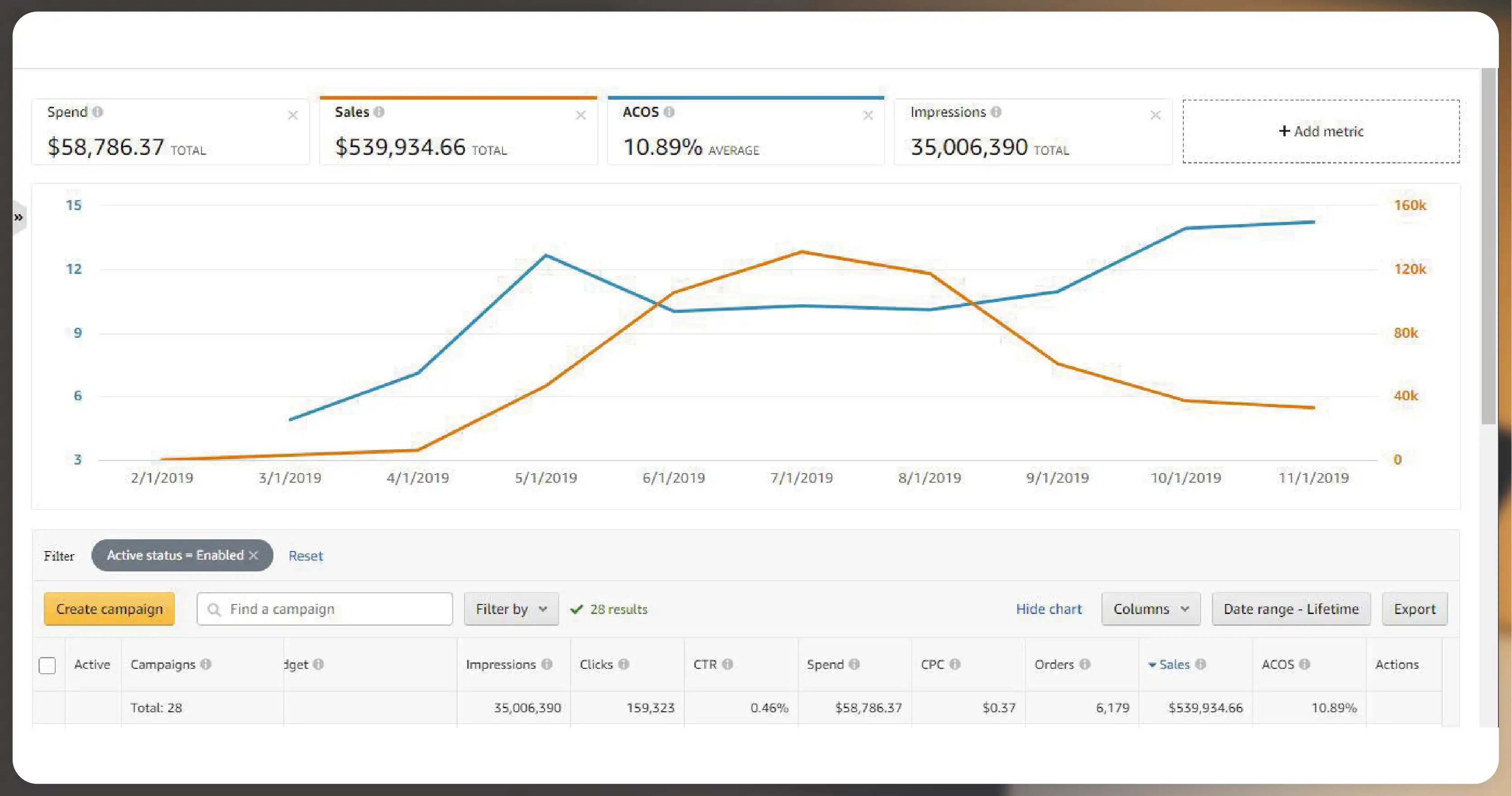

In the ever-evolving world of e-commerce, understanding and optimizing Amazon PPC (Pay-Per-Click) campaigns has become essential for businesses looking to stay ahead of the competition. With the vast amount of data generated through Amazon's advertising platform, scraping this data in real time can provide businesses with the insights they need to make informed decisions, optimize their ads, and boost sales. However, scraping Amazon PPC ad data is not a straightforward task, especially when the goal is to do it in real time and at no cost.

In this article, we will explore the main methods and tools for scraping Amazon PPC data, how to scrape Amazon advertising data effectively, and how to leverage this data to gain a competitive edge in the marketplace.

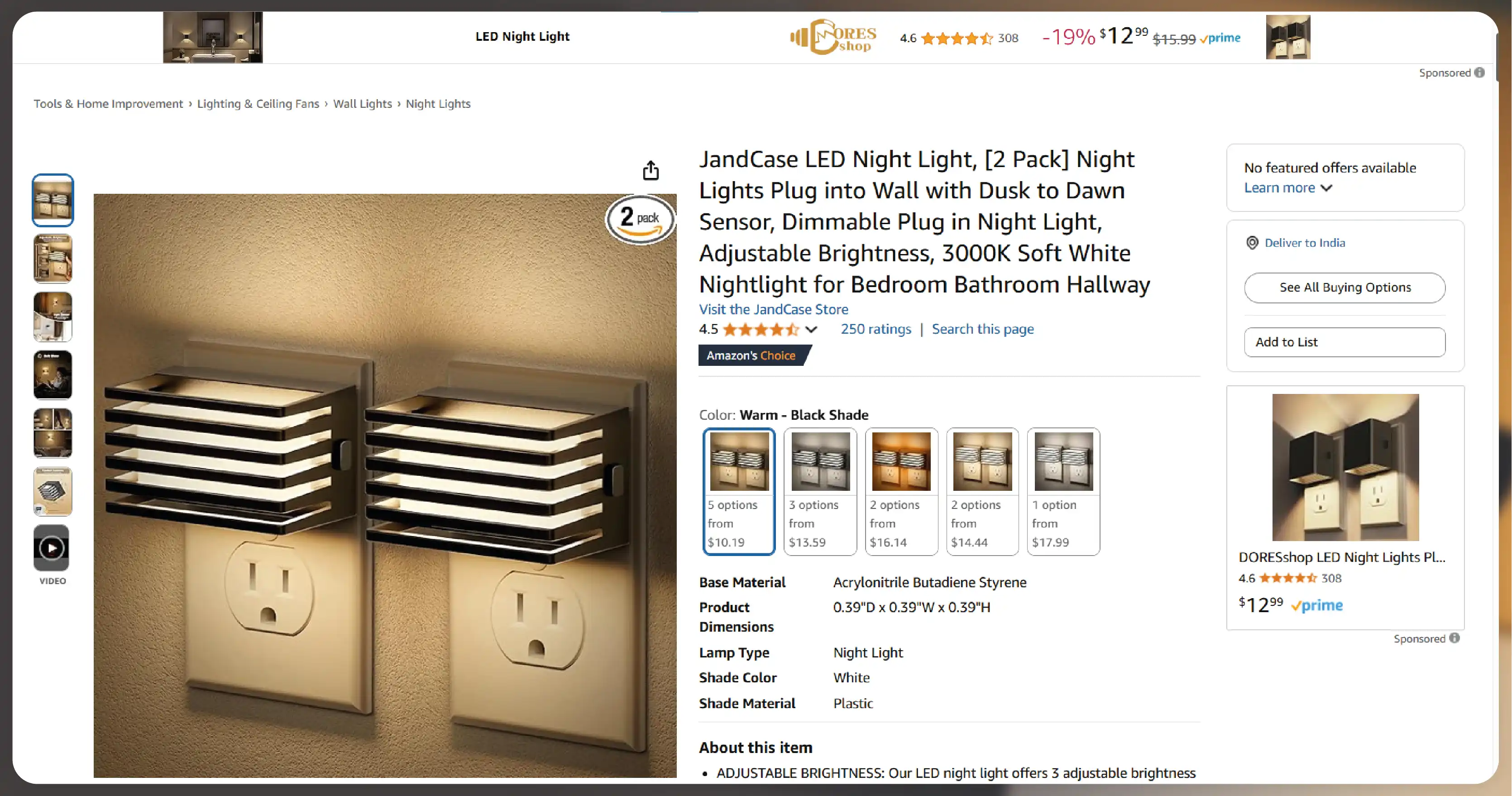

Amazon's advertising ecosystem, which includes Sponsored Products, Sponsored Brands, and Sponsored Display ads, is a rich source of data. Scraping Amazon PPC data can give you insight into your campaigns, including ad performance, keyword rankings, bids, impressions, and conversion rates. This information is vital for optimizing campaigns, adjusting bids, and identifying the keywords that drive the most sales. Scraping also helps businesses keep track of competitors' ad strategies and benchmark their own performance. With real-time data, you can adapt quickly, identify trends, and make adjustments that improve your PPC strategy.

The need to scrape Amazon advertising data is growing because Amazon is one of the largest e-commerce platforms in the world. Businesses advertising on Amazon must monitor their campaigns and track key metrics to improve their visibility, conversion rates, and return on investment (ROI). Real-time data helps you stay a step ahead of competitors by allowing you to optimize your campaigns continuously.

Scraping data from Amazon is not without its challenges. One of the biggest hurdles is Amazon's strict anti-scraping policies. To avoid getting blocked, you must use effective scraping techniques that respect Amazon's terms of service while still allowing you to gather valuable data.

Another challenge is the complexity of the data. Amazon Sponsored Ads Scraping involves gathering data points such as bid prices, impressions, click-through rates (CTR), and conversion rates. Without the right tools or infrastructure, collecting, processing, and analyzing this data can become cumbersome and time-consuming.
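As a small illustration of the metrics mentioned above, the following sketch derives click-through rate and conversion rate from raw counters. The `AdStats` class and its field names are assumptions made for this example and do not correspond to any particular Amazon report format.

```python
from dataclasses import dataclass


@dataclass
class AdStats:
    """Raw counters for one ad or keyword (field names are illustrative)."""
    impressions: int
    clicks: int
    orders: int


def click_through_rate(stats: AdStats) -> float:
    """CTR = clicks / impressions, or 0.0 when there were no impressions."""
    return stats.clicks / stats.impressions if stats.impressions else 0.0


def conversion_rate(stats: AdStats) -> float:
    """Conversion rate = orders / clicks, or 0.0 when there were no clicks."""
    return stats.orders / stats.clicks if stats.clicks else 0.0


stats = AdStats(impressions=2000, clicks=50, orders=5)
print(f"CTR: {click_through_rate(stats):.2%}")           # CTR: 2.50%
print(f"Conversion rate: {conversion_rate(stats):.2%}")  # Conversion rate: 10.00%
```

Guarding against zero impressions or zero clicks matters in practice, because new keywords often have no traffic yet.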

Additionally, obtaining accurate, real-time data is crucial. Any delay in capturing Amazon ad performance data can lead to missed opportunities or to decisions based on information that is no longer relevant. Therefore, having the right tools to scrape data in real time is essential for making timely campaign adjustments.

To scrape Amazon PPC keyword data effectively, you need the right combination of tools, techniques, and technologies. While paid solutions that automate the process are available, scraping Amazon PPC data with open-source tools and libraries is possible if you follow Amazon's terms and conditions.

Here are some of the most effective ways to scrape Amazon advertising data:

Python is one of the most popular programming languages for web scraping due to its simplicity and powerful libraries. Using Python, you can create a custom scraper tailored to your specific needs, extracting PPC campaign data from the Amazon Advertising Console or from public Amazon pages.

Libraries like BeautifulSoup and Scrapy allow you to parse HTML and extract the needed data. These tools are widely used for web scraping and can be programmed to scrape Amazon PPC data such as bids, impressions, click-through rates (CTR), and more.
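To make the parsing step concrete, here is a minimal sketch that pulls the titles of sponsored results out of an HTML snippet. It uses Python's standard-library `html.parser` as a dependency-free stand-in for BeautifulSoup or Scrapy, and the markup (`class="result"`, `data-sponsored`, `class="title"`) is invented for illustration. Real Amazon pages are structured differently and change often.

```python
from html.parser import HTMLParser

# Hypothetical markup for illustration only; real Amazon pages differ.
SAMPLE_HTML = """
<div class="result" data-sponsored="true"><span class="title">Steel Water Bottle</span></div>
<div class="result"><span class="title">Glass Water Bottle</span></div>
<div class="result" data-sponsored="true"><span class="title">Insulated Flask</span></div>
"""


class SponsoredTitleParser(HTMLParser):
    """Collects titles of results marked data-sponsored="true" (flat markup only)."""

    def __init__(self):
        super().__init__()
        self.titles = []
        self._in_sponsored = False
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "div" and "result" in (attrs.get("class") or "").split():
            # Remember whether the current result block is an ad.
            self._in_sponsored = attrs.get("data-sponsored") == "true"
        elif tag == "span" and attrs.get("class") == "title":
            self._in_title = self._in_sponsored

    def handle_endtag(self, tag):
        if tag == "span":
            self._in_title = False
        elif tag == "div":
            self._in_sponsored = False

    def handle_data(self, data):
        if self._in_title and data.strip():
            self.titles.append(data.strip())


parser = SponsoredTitleParser()
parser.feed(SAMPLE_HTML)
print(parser.titles)  # ['Steel Water Bottle', 'Insulated Flask']
```

The same selection logic would be shorter with a CSS selector in BeautifulSoup or Scrapy. The state-machine version here only handles non-nested result blocks.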

However, scraping directly from Amazon's website can be tricky, as the site uses CAPTCHAs and other anti-bot mechanisms to prevent unauthorized scraping. To reduce the chance of being blocked, scrapers typically rotate IP addresses through proxies and pace their requests so that traffic resembles human browsing. This method is effective if you are comfortable with coding and have a deep understanding of the scraping process.
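The rotation and pacing idea can be sketched as a request plan that assigns proxies in round-robin order and a randomized delay to each URL. The proxy addresses, the `request_plan` helper, and the delay bounds are all hypothetical placeholders. The sketch only builds the schedule and performs no network requests.

```python
import itertools
import random

# Placeholder proxy addresses (hypothetical, for illustration only).
PROXIES = [
    "http://proxy-a.example.com:8080",
    "http://proxy-b.example.com:8080",
    "http://proxy-c.example.com:8080",
]


def request_plan(urls, proxies, min_delay=2.0, max_delay=6.0, rng=random):
    """Yield (url, proxy, delay): proxies in round-robin order, delays randomized."""
    pool = itertools.cycle(proxies)
    for url in urls:
        yield url, next(pool), rng.uniform(min_delay, max_delay)


urls = [f"https://www.example.com/search?page={n}" for n in range(1, 5)]
for url, proxy, delay in request_plan(urls, PROXIES):
    print(f"wait {delay:.1f}s, then fetch {url} via {proxy}")
```

Separating the schedule from the fetching code keeps the pacing policy easy to test and adjust.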

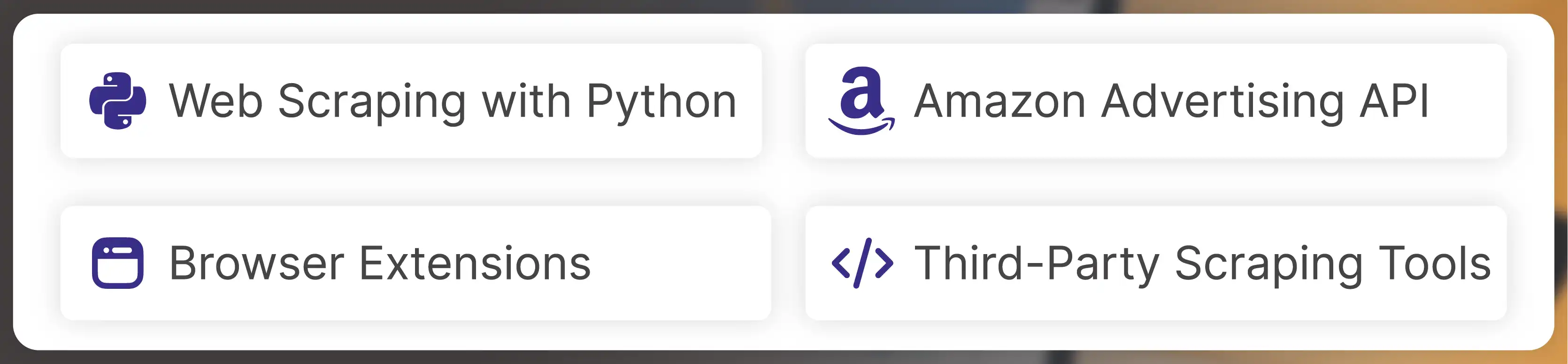

Another way to collect Amazon ad spend data is to use the Amazon Advertising API. Amazon provides a robust API that gives access to detailed reports about your advertising campaigns, including performance metrics such as impressions, clicks, spend, and sales.

To use the API, you must register for an Amazon Advertising account and obtain access credentials. Once you have access, you can retrieve Sponsored Products data directly from the API and analyze it using tools such as Excel, Power BI, or Google Data Studio.

While the Amazon Advertising API is more reliable than scraping directly from the website, it does have some limitations, such as restricted data access and rate limits. Nevertheless, it is a valuable resource for businesses needing up-to-date advertising campaign data.
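One common way to cope with rate limits is to retry throttled calls with exponential backoff. The sketch below shows the pattern in isolation. `RateLimitError`, `call_with_backoff`, and the stand-in request function are assumptions for this example and are not part of the Amazon Advertising API or any official client.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical error a client wrapper raises when the API throttles a call."""


def call_with_backoff(request, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call request(); on RateLimitError wait base_delay * 2**attempt plus jitter, then retry."""
    for attempt in range(max_attempts):
        try:
            return request()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts; let the caller decide what to do
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)


# Stand-in request that is throttled twice before succeeding.
attempts = {"count": 0}


def fake_report_request():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RateLimitError("throttled")
    return {"impressions": 1200, "clicks": 30}


waits = []  # record the delays instead of actually sleeping
result = call_with_backoff(fake_report_request, base_delay=0.01, sleep=waits.append)
print(result, f"after {len(waits)} retries")
```

Passing `sleep` as a parameter makes the retry logic testable without real waiting. In a real script, the default `time.sleep` would apply.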

Browser extensions can be a good solution for businesses looking for a simpler way to extract Amazon ads data without writing any code. Extensions like Web Scraper allow users to scrape data from Amazon pages by setting up custom scraping rules.

These tools allow you to scrape various data points from Amazon's ad campaigns, such as product rankings, reviews, and PPC data. While browser extensions are not as flexible as custom scraping solutions, they are an easy way to collect data without requiring technical expertise.

Some third-party scraping tools offer limited plans that allow you to scrape Amazon Sponsored Ads data on a smaller scale. These tools typically come with pre-built templates for specific use cases, such as scraping Amazon product data or ad performance metrics.

While these tools may not offer as much flexibility as custom scraping solutions or the Amazon Advertising API, they can still be helpful for small businesses that need basic PPC data.

Before you begin scraping Amazon ad spend data, it's crucial to understand the legal aspects involved. Amazon has strict policies regarding web scraping, and violating these policies can lead to your IP being blocked, your account being banned, or even legal action being taken against you.

To avoid legal issues, it is essential to collect Sponsored Products data in a way that adheres to Amazon's terms of service. This means using API access when possible, avoiding scraping too frequently, and ensuring that your scraping activities do not place undue stress on Amazon's servers.
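Limiting request frequency can be as simple as enforcing a minimum interval between calls. The following is a minimal sketch of such a throttle. The `Throttle` class and the 0.05-second interval are illustrative assumptions, and an appropriate interval depends on the service's own limits.

```python
import time


class Throttle:
    """Enforces a minimum interval between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous request, if any

    def wait(self):
        """Block until at least min_interval seconds have passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


throttle = Throttle(min_interval=0.05)
start = time.monotonic()
for _ in range(3):
    throttle.wait()  # a real script would issue its request right after this
elapsed = time.monotonic() - start
print(f"3 throttled calls took {elapsed:.2f}s")
```

The first call passes immediately, and each later call waits only for whatever part of the interval has not already elapsed.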

Moreover, when scraping PPC data, businesses should also be mindful of the data privacy laws in their region, such as the GDPR in Europe or the CCPA in California. These regulations govern how businesses collect and use personal data, so ensuring compliance with these laws is essential.

Once you have successfully scraped Amazon PPC data, the next step is to use that data to optimize your campaigns. Here are some key ways to leverage the data you collect:

In 2025, scraping Amazon PPC Ad data in real time will be possible with the right tools and techniques. While there are challenges, such as Amazon's anti-scraping policies and complex data formats, various methods—ranging from Python-based web scrapers to third-party scraping tools and the Amazon Advertising API—can be used to collect valuable PPC data.

By carefully analyzing scraped data, businesses can gain insights into their ad performance, optimize their campaigns, and stay ahead of the competition. However, it's essential to navigate legal and ethical considerations when scraping to avoid potential penalties and ensure compliance with Amazon's terms of service.

Ultimately, scraping Amazon advertising data opens up a world of possibilities for businesses to refine their strategies, improve their ad performance, and increase their ROI. By effectively leveraging real-time data, brands can run more targeted, cost-effective, and successful PPC campaigns on Amazon.

