Introduction

In today's fast-paced and data-centric business landscape, staying ahead of the competition demands access to accurate and up-to-date information. Supplier data collection is pivotal in this quest for knowledge, allowing businesses to make informed decisions, optimize pricing strategies, and maintain efficient inventory levels. However, manually sourcing this data from diverse supplier websites can be arduous and time-consuming.

This is where web scraping steps in. Web scraping lets businesses automate the collection of critical data from supplier websites quickly and efficiently. In this blog, we'll look at why supplier data collection matters in the contemporary business world and how web scraping automates this essential process.

Understanding Web Scraping

Web scraping is a transformative technology that has revolutionized how businesses collect data from the vast and dynamic landscape of the internet. At its core, web scraping involves automated data extraction from websites. Its role in data collection is fundamental, serving as the bridge that connects businesses with the invaluable information dispersed across the World Wide Web.

Defining Web Scraping

Web scraping, often referred to as web harvesting or web data extraction, is the automated retrieval of data from websites. This data can encompass a wide range of information, including text, images, prices, product descriptions, customer reviews, and much more. What sets web scraping apart from manual data collection is its ability to collect data from multiple websites rapidly and consistently, making it an indispensable tool for businesses seeking to stay competitive and informed.

How Web Scraping Works

Web scraping operates by simulating the actions of a human user navigating a website, but at a much faster pace and on a larger scale. Here's how it typically works:

- Request: The web scraping tool sends a request to a target website, similar to how you would enter a web address in your browser.

- HTML Retrieval: The website responds by returning the HTML code that makes up the web page's content.

- Parsing: The web scraping tool parses or processes the HTML code to identify and extract the specific data elements you're interested in. This involves locating HTML tags, attributes, and patterns corresponding to your desired data.

- Data Extraction: Once the relevant data is identified, the web scraping tool extracts it and stores it in a structured format for further use. This data can be saved in various formats, such as CSV, Excel, JSON, or databases.

- Automation: One of the critical strengths of web scraping is its automation capabilities. You can schedule web scraping tasks at specified intervals, ensuring your data remains up-to-date without manual intervention.
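The first four steps above can be sketched with nothing but Python's standard library. This is a minimal illustration, not a production scraper: the `product`, `name`, and `price` class names, the user-agent string, and the sample markup are assumptions, and a real supplier page will use different markup.

```python
import csv
import io
from html.parser import HTMLParser
from urllib.request import Request, urlopen


def fetch_html(url: str) -> str:
    """Request + HTML retrieval: send a GET request and return the page source."""
    request = Request(url, headers={"User-Agent": "supplier-data-bot/0.1"})
    with urlopen(request, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


class ProductParser(HTMLParser):
    """Parsing: collect the text of elements whose class is 'name' or 'price'."""

    def __init__(self):
        super().__init__()
        self.products = []   # one dict per product found so far
        self._field = None   # field currently being read, if any

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class", "")
        if css_class == "product":
            self.products.append({"name": "", "price": ""})
        elif css_class in ("name", "price") and self.products:
            self._field = css_class

    def handle_data(self, data):
        if self._field:
            self.products[-1][self._field] += data.strip()

    def handle_endtag(self, tag):
        self._field = None


def extract_products(html: str) -> list[dict]:
    parser = ProductParser()
    parser.feed(html)
    return parser.products


def to_csv(rows: list[dict]) -> str:
    """Data extraction: store the rows in a structured format (CSV here)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Inline sample so the sketch runs without touching the network.
SAMPLE_HTML = """
<div class="product"><span class="name">Widget</span><span class="price">$9.99</span></div>
<div class="product"><span class="name">Gadget</span><span class="price">$24.50</span></div>
"""

rows = extract_products(SAMPLE_HTML)
print(to_csv(rows))
```

Against a live page you would pass the result of `fetch_html(url)` to `extract_products` instead of the inline sample.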

Emphasizing Automation

The automation aspect of web scraping is where its true power lies. Unlike manual data collection, which is time-consuming and prone to errors, web scraping can efficiently retrieve data from multiple websites, including those with extensive product catalogs or rapidly changing content. This automation saves businesses valuable time and helps keep the collected data consistent and accurate.

Identifying Your Data Needs

Before embarking on a web scraping journey to collect data from supplier websites, it is essential to lay a solid foundation by clearly defining your data requirements. This initial step streamlines the web scraping process and ensures that you obtain the most relevant and valuable information for your business needs. Let's look at why this is crucial and at some common types of data collected from supplier websites.

The Significance of Data Requirement Definition

Precision and Relevance: Defining your data needs precisely ensures that you collect only the data that directly serves your business objectives. This prevents the accumulation of extraneous information, making your data more manageable and meaningful.

Efficiency: Knowing exactly what data you need allows you to design a focused web scraping strategy. This, in turn, optimizes the use of resources, reduces processing time, and minimizes the risk of encountering issues related to collecting irrelevant data.

Legal and Ethical Compliance: Clearly defined data requirements help ensure compliance with the terms of service of supplier websites and legal regulations. Scraping only the necessary data promotes ethical data collection practices.

Cost Savings: Efficient web scraping that targets specific data needs reduces the computational and storage costs associated with storing and managing vast datasets.

Common Types of Data from Supplier Websites

- Delivery Times: Knowing estimated delivery times helps set customer expectations and efficiently manage order fulfillment.

- Discounts and Offers: Gathering data on promotions and discounts allows businesses to adjust their pricing strategies and marketing efforts accordingly.

- Images: High-quality product images are crucial for visually appealing product listings on e-commerce platforms.

- Price: One of the most critical pieces of data, pricing information, helps businesses stay competitive and adjust their pricing strategies accordingly.

- Product Descriptions: Detailed descriptions provide essential information about the product, including specifications, features, and benefits.

- Product Reviews: Customer feedback and ratings provide insights into product quality and customer satisfaction.

- Restocking Dates: Knowing when a product will return to stock helps plan promotions and customer communications.

- Shipping Costs: Information about shipping costs is essential for calculating overall product costs.

- Stock Availability: Real-time data on product availability allows businesses to manage inventory effectively, preventing stockouts and overstock situations.

- Supplier Contact Information: Contact details are essential for establishing and maintaining supplier relationships.

- Supplier Ratings and Reviews: Customer feedback on suppliers can inform decisions regarding selecting reliable partners.

Clearly identifying your data requirements lays the groundwork for a successful web scraping project. Whether you are focused on pricing, product descriptions, images, inventory, or other details, having a precise understanding of your needs ensures that you collect the correct data to support your business objectives while adhering to ethical and legal considerations.
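One way to make data requirements concrete is to write them down as a typed record before writing any scraper. The sketch below is only an illustration: the `SupplierProduct` name and its fields are hypothetical, picked from the data types listed above, and your own project may need a different set.

```python
from dataclasses import MISSING, dataclass, fields
from typing import Optional


@dataclass
class SupplierProduct:
    """Hypothetical record listing only the fields this project needs."""
    sku: str
    name: str
    price: float
    in_stock: bool
    shipping_cost: Optional[float] = None   # optional: not every supplier lists it
    restock_date: Optional[str] = None      # ISO date string, if published


def required_fields() -> list[str]:
    """Fields without a default value are treated as required."""
    return [
        f.name
        for f in fields(SupplierProduct)
        if f.default is MISSING and f.default_factory is MISSING
    ]


def missing_fields(raw: dict) -> list[str]:
    """Return the required fields that a scraped row failed to provide."""
    return [name for name in required_fields() if raw.get(name) in (None, "")]


scraped_row = {"sku": "A1", "name": "Widget", "price": 9.99}
print(missing_fields(scraped_row))
```

A check like `missing_fields` can run on every scraped row, so gaps against the stated requirements surface early instead of during analysis.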

Selecting the Right Tools

Choosing the right web scraping tools and libraries is a pivotal decision in ensuring the success of your data collection project. The web scraping landscape offers a variety of options, each with its strengths and use cases. In this section, we'll discuss some popular web scraping tools and offer guidance on making an informed choice based on project complexity and scalability.

Popular Web Scraping Tools and Libraries

Beautiful Soup: Beautiful Soup is a Python library that excels at parsing and navigating HTML and XML documents. It's known for its simplicity and ease of use. Beautiful Soup is ideal for small to medium-scale web scraping projects where you need to extract data from relatively simple web pages.

Scrapy: Scrapy is a powerful and highly customizable Python framework for web scraping. It provides a full suite of tools for handling complex scraping tasks. Scrapy suits larger and more complex scraping projects, especially those involving multiple websites or intricate data extraction requirements.

Selenium: Selenium is a versatile tool for web automation and scraping dynamic web pages, such as those with JavaScript-driven content. It can interact with web elements like buttons and forms. Selenium is best suited for projects requiring interactivity and user-like interactions with websites.

Setting Up Your Web Scraping Environment

To begin scraping supplier websites for product information, you'll need to set up a web scraping environment. This guide will walk you through the process, including installing the necessary tools and libraries. We'll focus on Python as it is a widely used language for web scraping.

Step 1: Install Python

If you don't already have Python installed, visit the official Python website (https://www.python.org/downloads/) and download the latest version. Follow the installation instructions for your operating system.

Step 2: Install pip (Python Package Installer)

Pip is a package manager for Python that allows you to easily install libraries and packages. To ensure you have pip installed, open your terminal or command prompt and run:

python -m ensurepip --default-pip

Step 3: Create a Virtual Environment (Optional, but Recommended)

It's a good practice to create a virtual environment to isolate your web scraping project and avoid conflicts with other Python packages. Navigate to your project directory in the terminal and run:

python -m venv venv_name

Activate the virtual environment:

On Windows:

venv_name\Scripts\activate

On macOS and Linux:

source venv_name/bin/activate

Step 4: Install Required Libraries

For web scraping, you'll need libraries like Beautiful Soup and Requests. Install them using pip:

pip install beautifulsoup4 requests

Step 5: Create Your Web Scraping Script

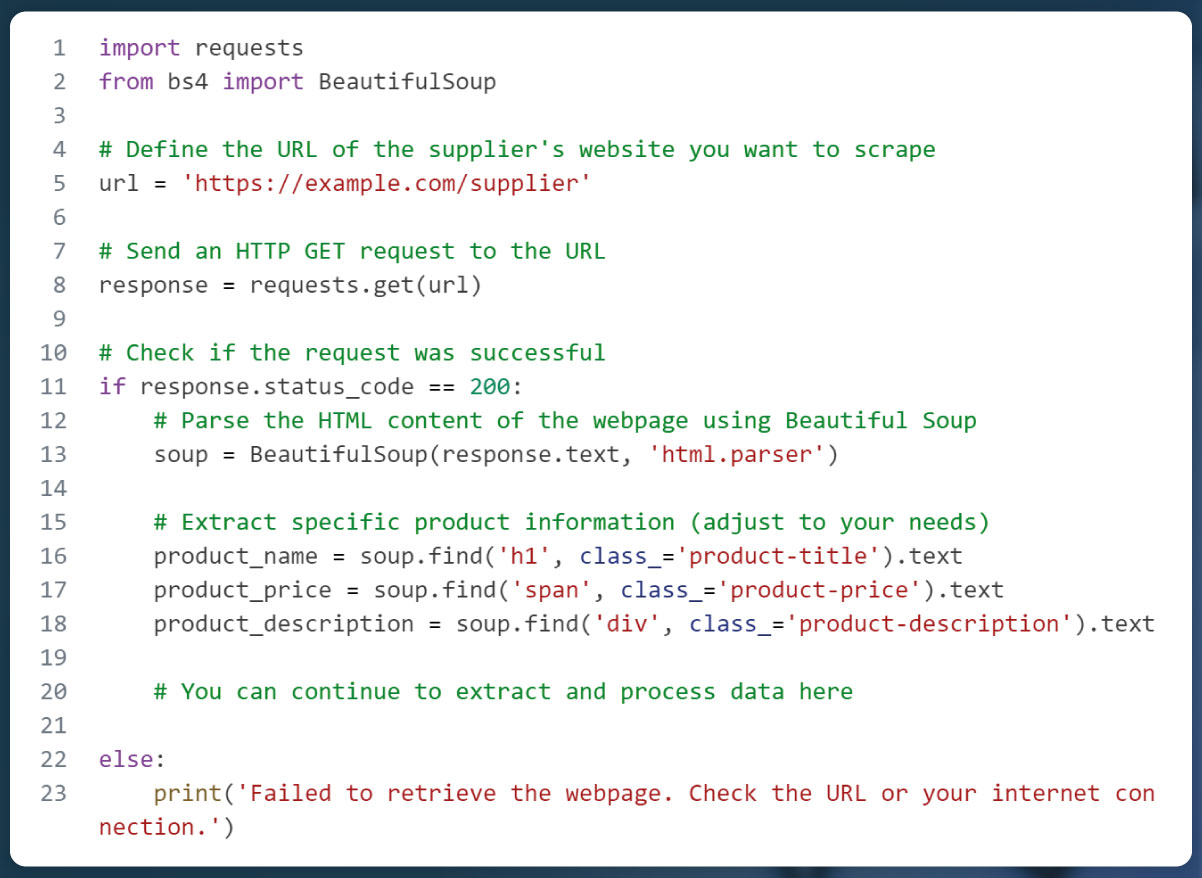

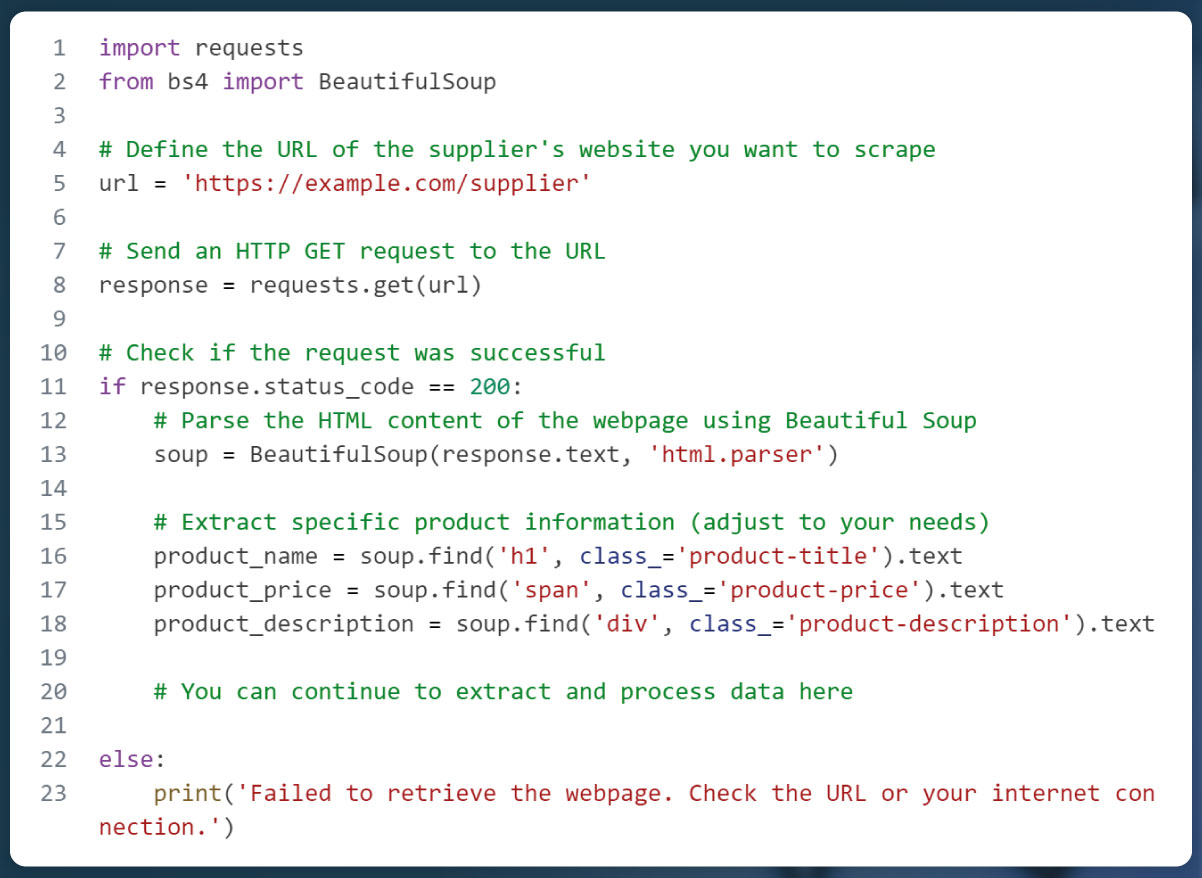

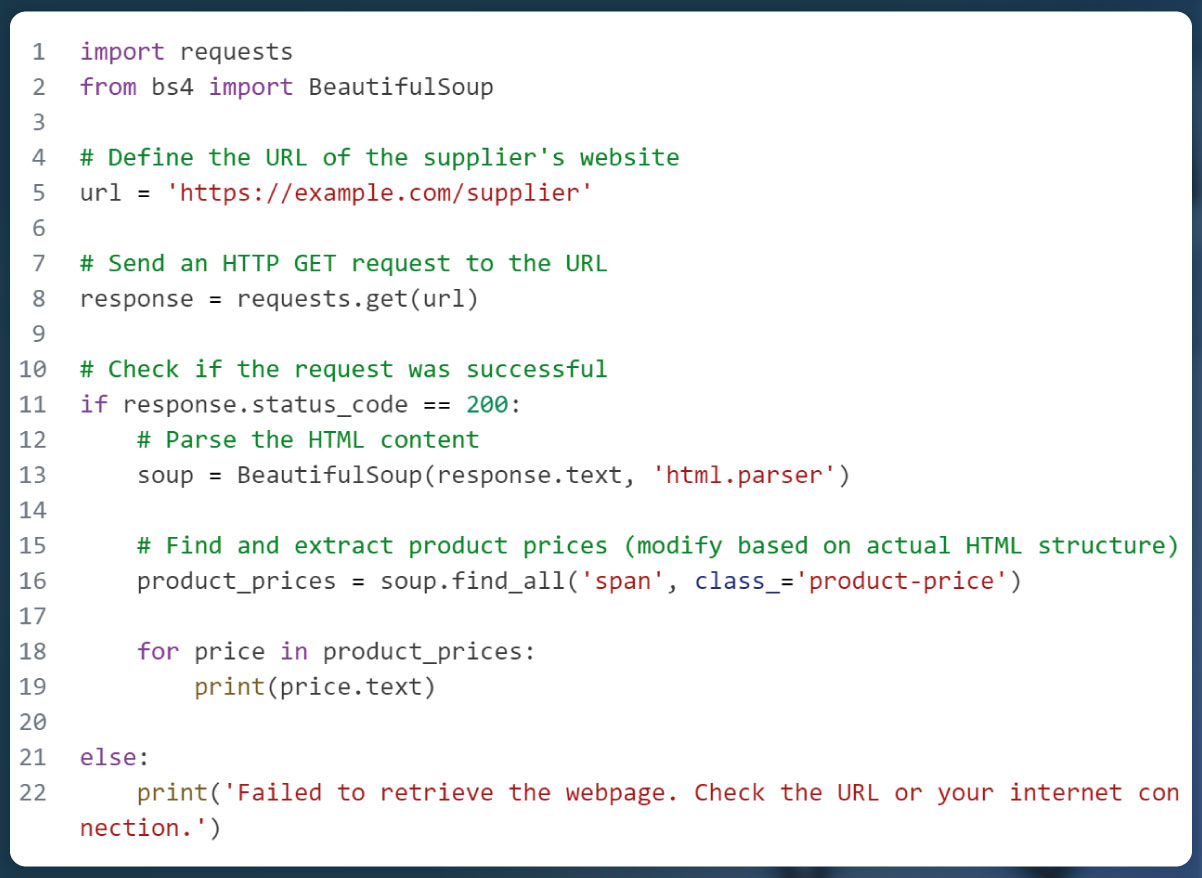

Now, create a Python script for your supplier data collection project. You can use any code editor you prefer. Below is a basic example using Beautiful Soup and Requests to scrape product information:
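A minimal sketch of such a script, using Requests and Beautiful Soup as installed in Step 4, might look like the following. The URL, the user-agent string, and the `product`, `product-name`, `product-price`, and `product-description` class names are placeholders (assumptions, not taken from any real supplier site); inspect the target page and substitute its actual markup.

```python
import requests
from bs4 import BeautifulSoup

# Hypothetical URL; replace it with the supplier's product listing page.
SUPPLIER_URL = "https://supplier.example.com/products"


def fetch_page(url: str) -> str:
    """Send an HTTP GET request and return the HTML, raising on 4xx/5xx."""
    response = requests.get(
        url, headers={"User-Agent": "supplier-data-bot/0.1"}, timeout=10
    )
    response.raise_for_status()
    return response.text


def text_or_none(element):
    """Return an element's stripped text, or None if the element is absent."""
    return element.get_text(strip=True) if element else None


def parse_products(html: str) -> list[dict]:
    """Extract name, price, and description from each product card."""
    soup = BeautifulSoup(html, "html.parser")
    products = []
    for card in soup.find_all("div", class_="product"):
        products.append({
            "name": text_or_none(card.find("h2", class_="product-name")),
            "price": text_or_none(card.find("span", class_="product-price")),
            "description": text_or_none(card.find("p", class_="product-description")),
        })
    return products


def main() -> None:
    for product in parse_products(fetch_page(SUPPLIER_URL)):
        print(product)
```

The sketch defines `main()` but does not call it, so nothing is requested until `SUPPLIER_URL` and the class names match a real page; add a `main()` call at the bottom of the file once they do. Returning `None` for a missing element keeps one incomplete product card from crashing the whole run.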

Step 6: Run Your Web Scraping Script

Save your Python script with a .py extension in your project directory. Run it from the terminal:

python your_script_name.py

Your script will send an HTTP GET request to the supplier's website, parse the HTML content, and extract the desired product information.

Note: Remember to review and comply with the terms of service and scraping policies of the supplier's website to ensure ethical and legal web scraping practices.
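Many sites publish part of their crawling policy in a `robots.txt` file, and Python's standard library can read it. The sketch below assumes a made-up `robots.txt` and a made-up bot name; it is a convenience check only and does not replace reading the supplier's terms of service.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the sketch runs offline.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /checkout/
Crawl-delay: 5
"""


def build_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a rule set that can be queried per URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = build_rules(SAMPLE_ROBOTS)
bot = "supplier-data-bot"  # assumed user-agent name

print(rules.can_fetch(bot, "https://supplier.example.com/products"))
print(rules.can_fetch(bot, "https://supplier.example.com/checkout/cart"))
print(rules.crawl_delay(bot))
```

For a live site, `RobotFileParser.set_url(...)` followed by `read()` downloads the file instead of parsing an inline string.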

With these steps, you've set up a web scraping environment and created a basic script for collecting product information from supplier websites. You can further enhance and customize your script to meet specific data collection needs.

Writing Web Scraping Code

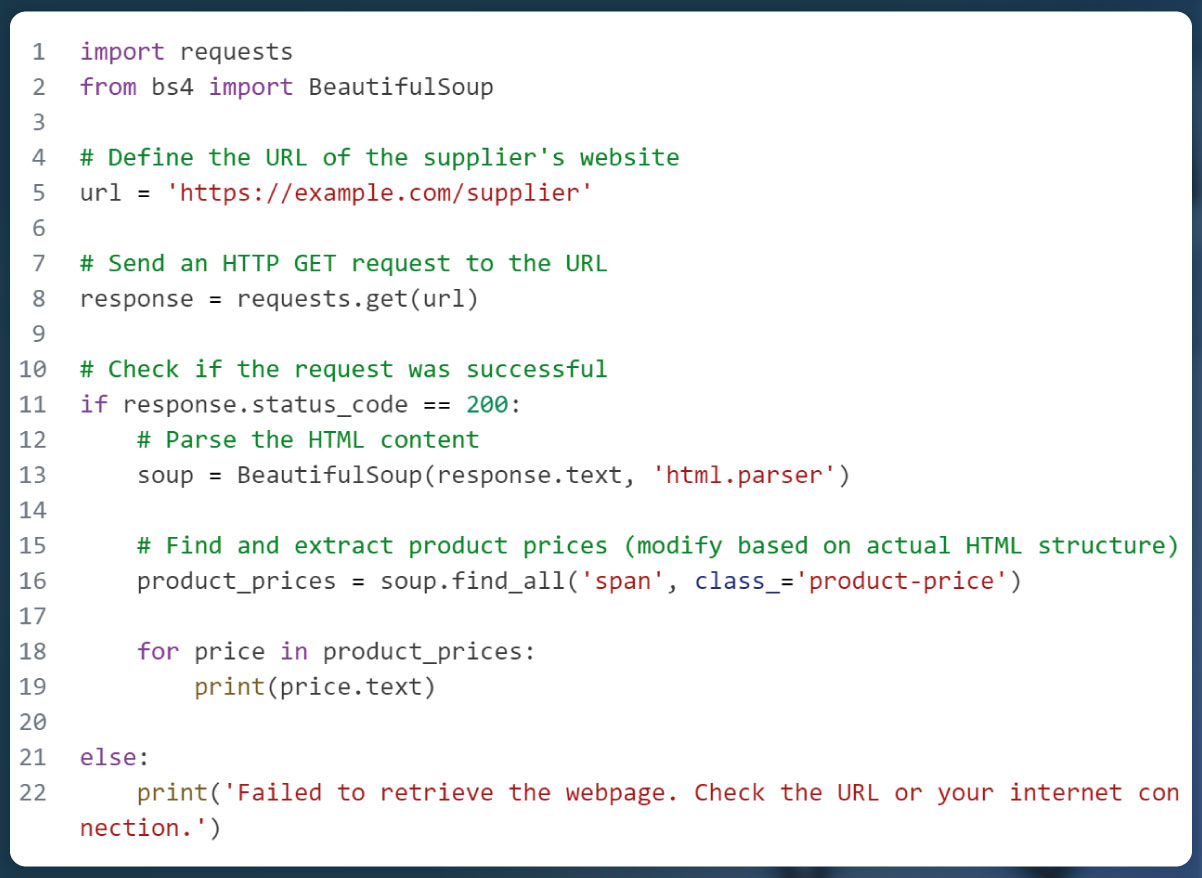

HTML elements are the building blocks of a web page. To scrape data, you'll need to identify and extract information from these elements. Common HTML elements include:

Tags: Tags represent structural elements (e.g., <div>, <span>, <table>).

Attributes: Attributes provide additional information about an element (e.g., class, id, href).

Text Content: Extract text content from elements using .text.

Here's a simplified example using Python and Beautiful Soup to scrape product prices from a supplier's website:
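The sketch below shows one way this could look, touching all three building blocks: it selects rows by tag and `class` attribute, reads an `href` attribute, and pulls text with `.text`. The table markup, class names, and the assumption that prices use `$` with comma thousands separators are all illustrative.

```python
from bs4 import BeautifulSoup

# Hypothetical supplier markup, inlined so the example runs offline.
SAMPLE_HTML = """
<table id="price-list">
  <tr class="item">
    <td class="name">Widget</td><td class="price">$9.99</td>
    <td><a href="/p/widget">details</a></td>
  </tr>
  <tr class="item">
    <td class="name">Gadget</td><td class="price">$1,249.00</td>
    <td><a href="/p/gadget">details</a></td>
  </tr>
</table>
"""


def scrape_prices(html: str) -> list[dict]:
    soup = BeautifulSoup(html, "html.parser")
    results = []
    for row in soup.find_all("tr", class_="item"):             # tag + class attribute
        name = row.find("td", class_="name").text.strip()      # text content
        raw_price = row.find("td", class_="price").text.strip()
        link = row.find("a")["href"]                           # attribute value
        # Assumes "$" prices with "," as the thousands separator.
        price = float(raw_price.replace("$", "").replace(",", ""))
        results.append({"name": name, "price": price, "url": link})
    return results


for item in scrape_prices(SAMPLE_HTML):
    print(item)
```

Converting the price to a number at extraction time makes later comparisons and sorting straightforward, but it also means a page with a different currency format needs its own parsing rule.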

Data Cleaning and Transformation: Ensuring Usable Scraped Data

Web scraping often yields raw data that requires cleaning and transformation before it can be analyzed or integrated into your systems. Here's why cleaning and transformation matter, and how to approach them.

Importance of Data Cleaning and Transformation

- Quality Assurance: Raw scraped data may contain inconsistencies, inaccuracies, or missing values. Cleaning ensures data quality.

- Consistency: Data from different sources or formats may need standardization to maintain consistency.

- Structure: Data may be unstructured or in a format unsuitable for analysis. Transformation organizes it for a more straightforward interpretation.

- Efficiency: Cleaned and structured data is more efficient for processing, analysis, and integration.

Techniques and Tools for Data Cleaning and Transformation

- Removing Duplicate Data: Identify and eliminate duplicate entries to prevent data redundancy.

- Handling Missing Values: Decide how to handle missing data, whether by imputation, deletion, or substitution.

- Standardization: Standardize data formats, such as date formats, units of measurement, and naming conventions.

- Text Processing: For textual data, use techniques like tokenization, stemming, and lemmatization to clean and normalize text.

- Data Formatting: Convert data types, ensure consistent data structures, and handle outliers.

- Scaling and Normalization: Scale numerical data to the same range or normalize it to ensure comparability.

- Data Aggregation: Aggregate data to the desired level of granularity for analysis.
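A few of the techniques above (removing duplicates, handling missing values, and standardizing formats) can be sketched in plain Python. The sample rows, the two accepted date formats, and the choice to drop rows without a usable price are assumptions for illustration; libraries such as pandas offer the same operations at larger scale.

```python
import re
from datetime import datetime

RAW_ROWS = [
    {"sku": "a1", "price": "$1,299.00", "restock": "03/15/2024"},
    {"sku": "a1", "price": "$1,299.00", "restock": "03/15/2024"},   # duplicate
    {"sku": "b2", "price": "", "restock": "2024-04-01"},            # missing price
    {"sku": "c3", "price": " 15.5 USD ", "restock": ""},            # missing date
]

DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")   # assumed input formats


def clean_price(text: str):
    """Return the first number in the text as a float, or None if there is none."""
    match = re.search(r"\d+(?:\.\d+)?", text.replace(",", ""))
    return float(match.group()) if match else None


def clean_date(text: str):
    """Standardize a date to ISO format, or return None if it cannot be parsed."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def clean_rows(rows: list[dict]) -> list[dict]:
    seen, cleaned = set(), []
    for row in rows:
        key = (row["sku"], row["price"], row["restock"])
        if key in seen:              # remove exact duplicates
            continue
        seen.add(key)
        price = clean_price(row["price"])
        if price is None:            # deletion strategy for missing prices
            continue
        cleaned.append({
            "sku": row["sku"].strip().upper(),     # standardize naming
            "price": price,
            "restock": clean_date(row["restock"]),
        })
    return cleaned


for row in clean_rows(RAW_ROWS):
    print(row)
```

Whether to delete, impute, or flag rows with missing values depends on how the data will be used; deletion is simply the shortest strategy to show.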

Automating Data Collection: Scheduling and Monitoring

Automating data collection is essential for efficiency and real-time updates. Here's how to set it up using scheduling and monitoring tools.

Scheduling:

- Cron Jobs (Linux) or Task Scheduler (Windows): Schedule your web scraping script to run at specific intervals, e.g., daily, hourly, or weekly.

- Third-party Automation Tools: Use tools like Zapier, Make (formerly Integromat), or Microsoft Power Automate to schedule and automate data collection tasks.

Monitoring:

- Status Alerts: Set up alerts to notify you of scraping failures or anomalies in the data.

- Logging: Implement logging to record scraping activities and identify issues.
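The two monitoring ideas above can be combined in a small wrapper around each scheduled run. In this sketch the `run_job` and `send_alert` names, the logger name, and the `min_rows` threshold are all hypothetical; `send_alert` only writes to the log and would be replaced by email, chat, or paging in practice.

```python
import logging

logging.basicConfig(
    level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s"
)
logger = logging.getLogger("supplier_scraper")


def send_alert(message: str) -> None:
    """Placeholder alert hook; swap in email, chat, or a pager in practice."""
    logger.error("ALERT: %s", message)


def run_job(scrape, min_rows: int = 1, alert=send_alert) -> list:
    """Run one scraping job, log the outcome, and alert on failure or thin data."""
    try:
        rows = scrape()
    except Exception as exc:
        logger.exception("Scrape failed")
        alert(f"scrape failed: {exc}")
        return []
    if len(rows) < min_rows:
        alert(f"only {len(rows)} rows scraped, expected at least {min_rows}")
    else:
        logger.info("Scraped %d rows", len(rows))
    return rows


# Example run with a stand-in scraper that returns two rows.
run_job(lambda: [{"sku": "A1"}, {"sku": "B2"}], min_rows=1)
```

A cron job or Task Scheduler entry would then invoke the script that calls `run_job`, so every scheduled run leaves a log line and unusual runs trigger an alert.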

Benefits of Real-Time Data Updates

- Competitive Advantage: Real-time updates allow you to react promptly to market changes, giving you a competitive edge.

- Inventory Management: Keep track of stock levels and product availability in real time.

- Pricing Strategy: Adjust pricing strategies based on competitors' price changes.

Data Integration: Bringing Scraped Data Into Your Systems

Once data is scraped, you need to integrate it into your existing systems or databases. Here's how:

- Data Formats: Export scraped data into standard formats like CSV, JSON, or XML.

- API Integration: If the supplier offers an API, use it to import data into your systems directly.

- Database Integration: Store scraped data in a database (e.g., MySQL, PostgreSQL) for structured storage and easy querying.

- ETL (Extract, Transform, Load): Apply ETL processes to clean, transform, and load data into your data warehouse or analytics platform.
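As a small illustration of the export and database options above, the sketch below serializes rows to JSON and loads them into SQLite. SQLite is used only because it ships with Python; it stands in for MySQL or PostgreSQL here, and the `products` table and its columns are assumptions.

```python
import json
import sqlite3

rows = [
    {"sku": "A1", "name": "Widget", "price": 9.99},
    {"sku": "B2", "name": "Gadget", "price": 24.5},
]


def to_json(rows: list[dict]) -> str:
    """Export rows to a JSON string that can be written to a file or sent on."""
    return json.dumps(rows, indent=2)


def load_into_db(rows: list[dict], connection: sqlite3.Connection) -> None:
    """Insert rows, replacing any existing row with the same SKU."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS products "
        "(sku TEXT PRIMARY KEY, name TEXT, price REAL)"
    )
    connection.executemany(
        "INSERT OR REPLACE INTO products (sku, name, price) "
        "VALUES (:sku, :name, :price)",
        rows,
    )
    connection.commit()


connection = sqlite3.connect(":memory:")   # in-memory database for the demo
load_into_db(rows, connection)

# A later scrape with a changed price updates the row instead of duplicating it.
load_into_db([{"sku": "A1", "name": "Widget", "price": 10.49}], connection)

print(connection.execute("SELECT sku, price FROM products ORDER BY sku").fetchall())
```

Keying the table on a stable identifier such as the SKU is what lets repeated scrapes refresh existing records rather than pile up copies.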

Monitoring and Maintenance: Ensuring Efficiency and Accuracy

Continuous monitoring and maintenance are vital to keep your scraping process efficient and accurate.

- Scheduled Checks: Regularly review scraped data for anomalies or inconsistencies.

- Update Scripts: Adapt your scraping scripts to accommodate website structure or content changes.

- Handling Blocks: Implement mechanisms to handle IP blocks or CAPTCHA challenges.

- Quality Control: Establish quality control procedures to ensure the reliability of scraped data.
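One common mechanism for handling temporary blocks is to retry with exponentially growing pauses. The sketch below assumes your own fetch function raises a `BlockedError` (a hypothetical exception defined here) when it sees a rate-limit response or a CAPTCHA page; it does not try to bypass CAPTCHAs, only to slow down and retry.

```python
import time


class BlockedError(Exception):
    """Hypothetical error a fetch function raises when the site blocks a request."""


def fetch_with_backoff(fetch, url, max_attempts=4, base_delay=2.0, sleep=time.sleep):
    """Call fetch(url), waiting 2s, 4s, 8s, ... between blocked attempts."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch(url)
        except BlockedError:
            if attempt == max_attempts:
                raise                      # give up and surface the block
            sleep(base_delay * 2 ** (attempt - 1))
```

The `sleep` parameter exists so the waiting behavior can be replaced in tests; in normal use it defaults to `time.sleep`. If blocks persist after backing off, that is usually a signal to lower the request rate or contact the supplier rather than to retry harder.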

Scaling Your Web Scraping Solution

As your data needs grow, you may need to scale your scraping solution. Consider these strategies:

- Distributed Scraping: Use multiple machines or proxies to scrape data in parallel, distributing the load.

- Cloud-Based Solutions: Leverage cloud platforms (e.g., AWS, Google Cloud) for scalability, storage, and processing power.

- Scalable Architecture: Design your scraping solution with scalability in mind, using frameworks like Scrapy or serverless architectures.

Scaling your web scraping solution allows you to handle larger volumes of data and tackle additional websites efficiently while maintaining data quality and reliability.
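On a single machine, a first step toward parallel scraping is a thread pool, since most scraping time is spent waiting on the network. This is a minimal sketch: `scrape_many` and the `scrape_one` callable are hypothetical names, and the worker count should stay low enough to respect each supplier's rate limits.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def scrape_many(urls, scrape_one, max_workers=4):
    """Scrape several pages in parallel; return results and errors keyed by URL."""
    results, errors = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(scrape_one, url): url for url in urls}
        for future in as_completed(futures):
            url = futures[future]
            try:
                results[url] = future.result()
            except Exception as exc:       # one failed page should not stop the rest
                errors[url] = exc
    return results, errors


# Stand-in scraper so the example runs offline.
def fake_scrape(url):
    return {"url": url, "items": 3}


results, errors = scrape_many(
    ["https://supplier-a.example.com", "https://supplier-b.example.com"],
    fake_scrape,
)
print(len(results), len(errors))
```

Collecting errors per URL instead of raising on the first failure lets a large run finish and report which suppliers need attention. Frameworks like Scrapy provide comparable concurrency controls with scheduling and throttling built in.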

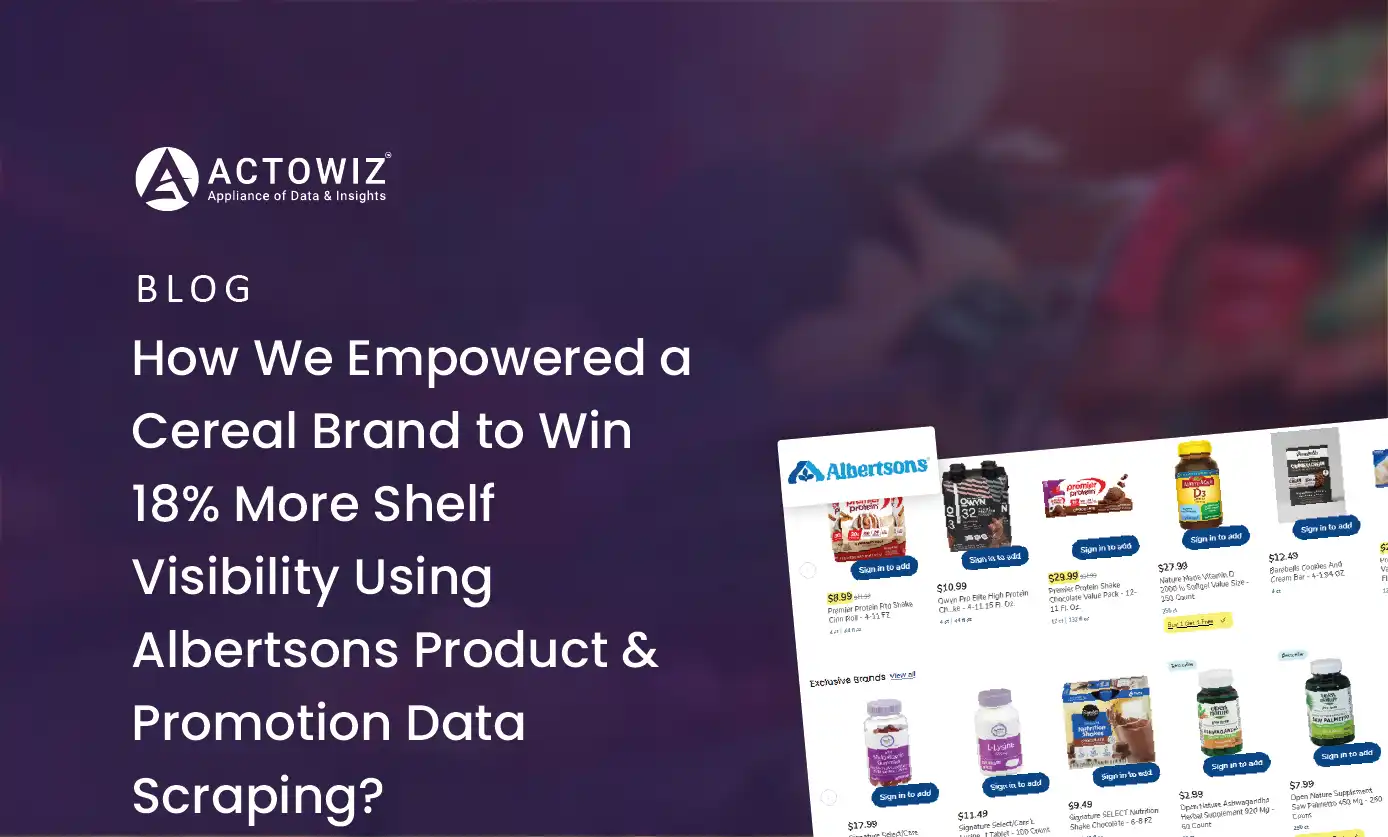

Why Choose Actowiz Solutions To Scrape Supplier Data?

Scraping supplier data, whether with Actowiz Solutions or in-house, should only be done for legitimate and ethical purposes, with proper authorization, and in compliance with all applicable laws and terms of service. With that in mind, here are some reasons to use Actowiz Solutions for supplier data:

Competitive Analysis: Scraping supplier data with Actowiz Solutions can help you gather information about suppliers' product offerings, pricing strategies, and inventory levels. This information can be invaluable for competitive analysis and benchmarking your own offerings.

Market Research: Accessing supplier data can provide insights into market trends, customer preferences, and product demand. This information can inform your business strategy and product development efforts.

Price Optimization: Scraped data can be used to monitor competitor prices in real-time. This enables you to adjust your pricing strategies and remain competitive in the market.

Inventory Management: For businesses that rely on suppliers for inventory, scraping supplier data can help you stay informed about stock availability, lead times, and restocking schedules.

Supplier Evaluation: Scraped data can be used to evaluate and compare different suppliers based on factors such as product quality, pricing, and customer reviews.

Automated Ordering: With regularly updated supplier data, you can automate the ordering process, ensuring that you restock products in a timely manner to meet customer demand.

Conclusion

In today's fast-paced business world, embracing web scraping for supplier data collection is not just an option; it's a strategic advantage. By using web scraping responsibly and ethically, businesses can gain deeper insights, make data-driven decisions, and stay ahead of the competition. Contact Actowiz Solutions today to explore how web scraping can improve your supplier data collection. You can also reach us for all your mobile app scraping, instant data scraper, and web scraping service requirements.
