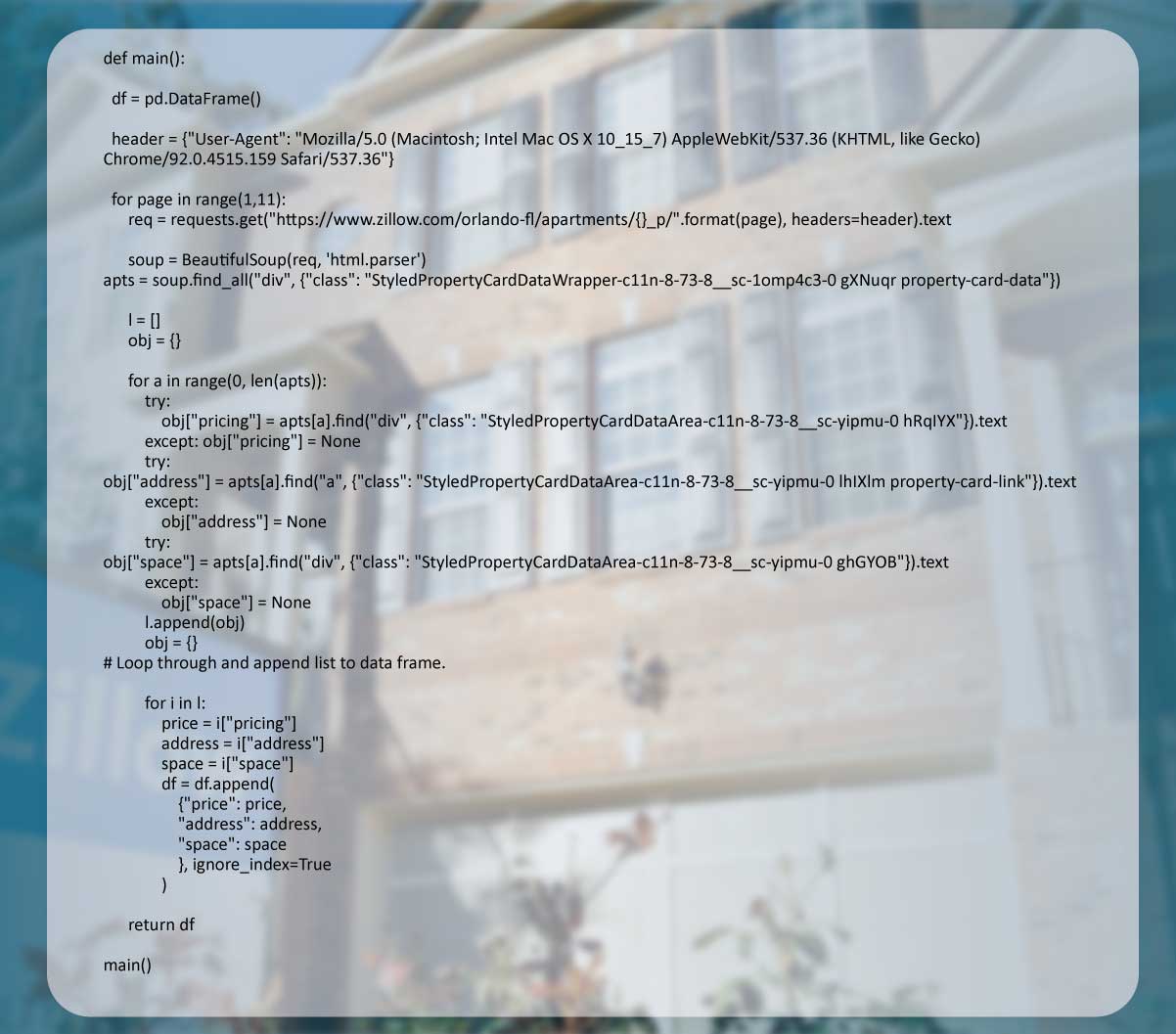

In this post, we use Python to scrape apartment data from Zillow.

Because many Zillow tutorials and projects focus on buying a home, we thought it would be interesting to scrape Zillow apartment data instead, since the data returned is less varied than home data.

We will show three critical steps associated with getting current apartment data:

We cover methods you may have encountered before, including BeautifulSoup, basic SQL, pandas operations for data frame manipulation, and the BigQuery API.

Unlike text-heavy sites such as Wikipedia, Zillow includes many dynamic and visual elements, like map applications and slide shows.

This doesn't necessarily make it harder to extract data, but you'll need to dig a bit deeper into the underlying HTML and CSS to find the particular elements you need to target.

For the initial data pull, we need to solve three problems:

Full disclosure:

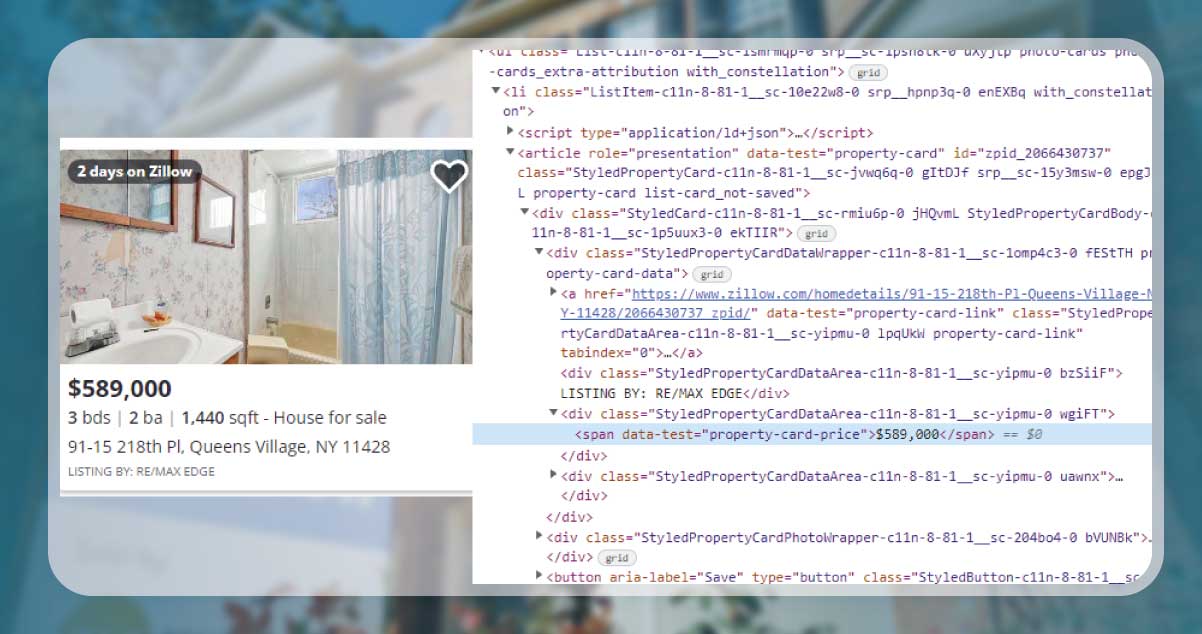

The thorniest part of web scraping is finding the elements that contain the data you wish to scrape.

If you're using Chrome, right-clicking what you want to extract and selecting "Inspect" opens the developer tools and shows you the underlying HTML.

Here, we want to focus on a class called "Styled Property Card Data."

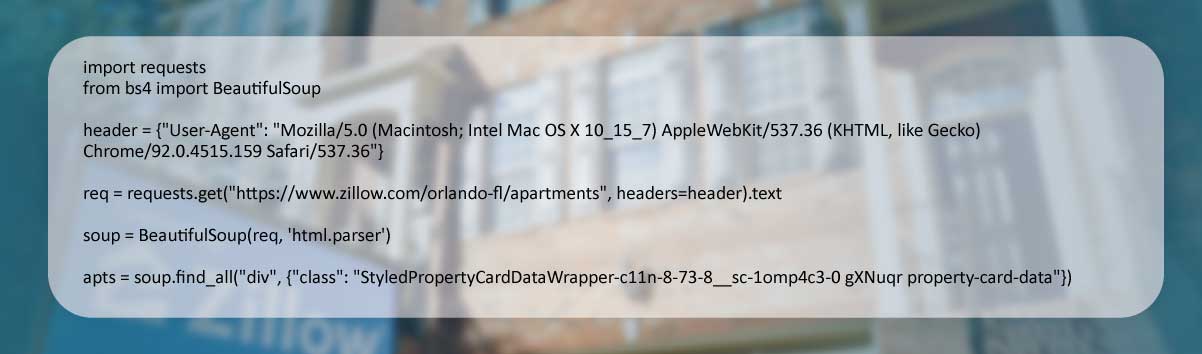

Once you're over the sticker shock of a 1-bedroom apartment at $1,800/month, you can use the requests and BeautifulSoup libraries to make a simple initial request.

Note: Plain requests to Zillow will trigger a CAPTCHA. To avoid it, use the header given in the script here.
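Since the original script is only shown as an image, here is a rough sketch of what such a request might look like. The header values, the `build_request` and `fetch` helper names, and any URL you pass in are assumptions for illustration, and headers that get past a CAPTCHA today may stop working later.

```python
import requests

# Hypothetical browser-like headers; the values are illustrative assumptions,
# not the exact ones from the original script.
HEADERS = {
    "User-Agent": (
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
        "AppleWebKit/537.36 (KHTML, like Gecko) "
        "Chrome/120.0.0.0 Safari/537.36"
    ),
    "Accept-Language": "en-US,en;q=0.9",
    "Accept": "text/html,application/xhtml+xml",
}


def build_request(url: str) -> requests.PreparedRequest:
    """Prepare a GET request carrying the headers, without sending it."""
    return requests.Request("GET", url, headers=HEADERS).prepare()


def fetch(url: str, timeout: float = 10.0) -> requests.Response:
    """Send the prepared request and return the response."""
    with requests.Session() as session:
        return session.send(build_request(url), timeout=timeout)
```

Keeping the request preparation separate from the sending makes it easy to inspect exactly which headers will go out before anything touches the network.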

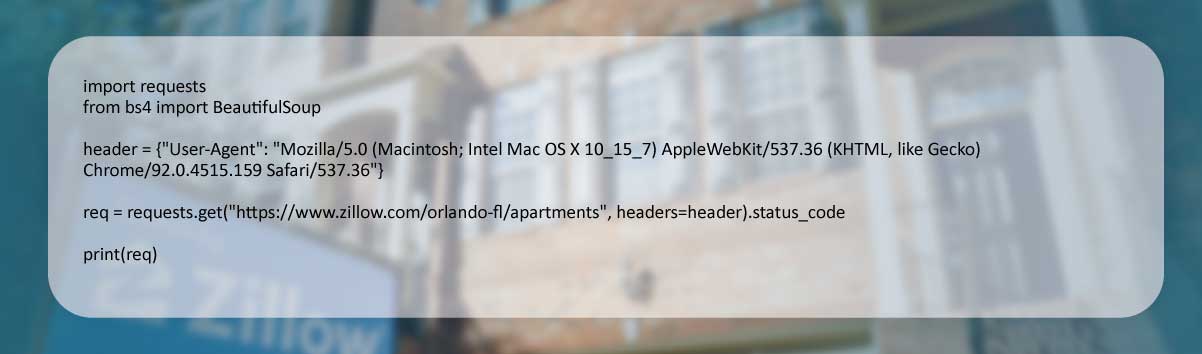

Before you return or print any output, make sure your request succeeded. If the status code is 200, we can inspect the results of "req".

Inspecting a line of raw output confirms that we're targeting the correct elements.
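A minimal sketch of that check is shown here, using an inline HTML string in place of the response text so it runs offline. The markup, the class name `property-card-data`, and the `extract_cards` helper are placeholders of our own; the real class name should be copied from the browser's developer tools.

```python
from bs4 import BeautifulSoup

# Stand-in for the response text; the markup and class name are hypothetical.
SAMPLE_HTML = """
<ul>
  <li><div class="property-card-data"><address>123 Main St</address><span>$1,800/mo</span></div></li>
  <li><div class="property-card-data"><address>456 Oak Ave</address><span>$2,100/mo</span></div></li>
</ul>
"""


def extract_cards(status_code: int, html: str, card_class: str = "property-card-data"):
    """Return the matching card elements, or an empty list on a non-200 status."""
    if status_code != 200:
        return []
    soup = BeautifulSoup(html, "html.parser")
    return soup.find_all("div", class_=card_class)


apts = extract_cards(200, SAMPLE_HTML)
print(len(apts))  # number of cards found
print(apts[0])    # one element of raw output to eyeball
```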

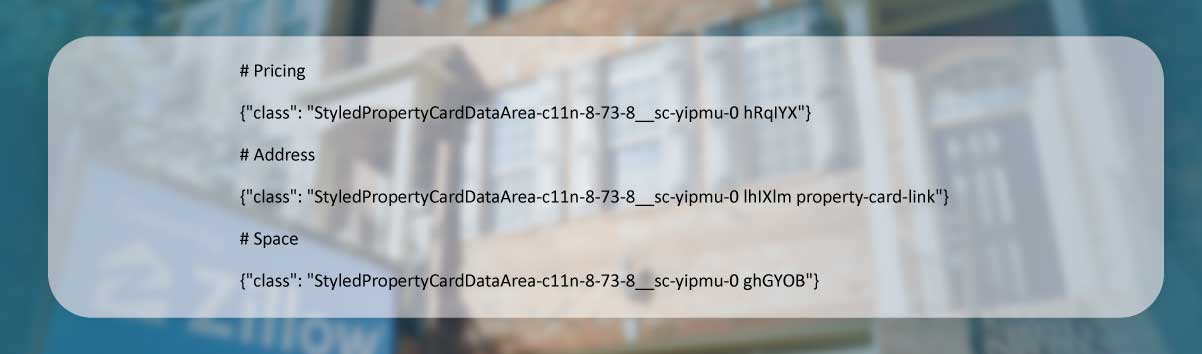

Now that we have raw data, we must determine precisely which elements to parse.

Picturing the final SQL table, we determined that we need the following fields:

After searching around, we found that this information is stored in the following elements:

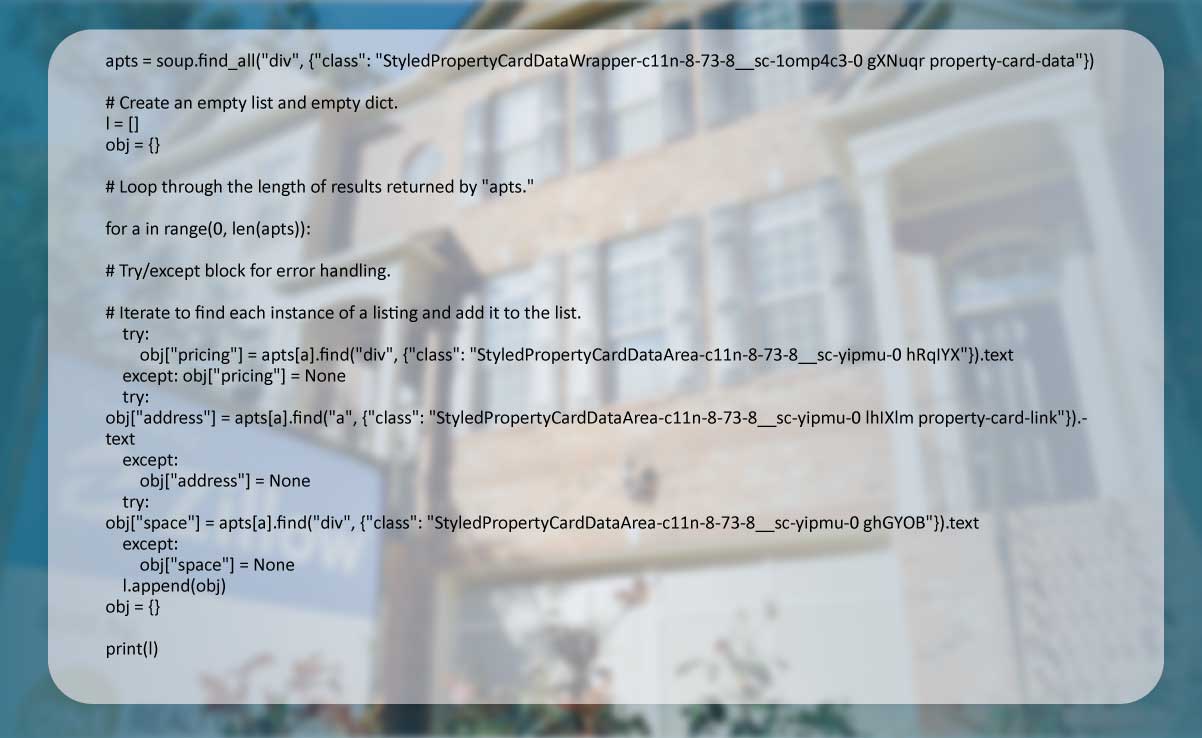

To scrape these elements, we need a loop and a data structure for storing the results; otherwise, we'll only capture a limited number of rows.

We'll make the request again, this time looping over the results saved in "apts".

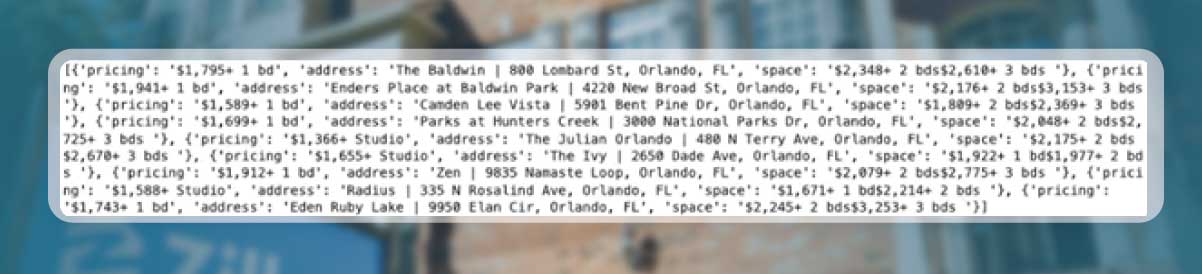

It returns a list of dictionaries, with one dict per listing.
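The loop might look something like the sketch below. Because the exact fields and elements are not spelled out in the text, the field names (`address`, `price`, `link`), the tags they are read from, and the `property-card-data` class are all assumptions made for illustration. The inline HTML stands in for a real response.

```python
from bs4 import BeautifulSoup

# Hypothetical markup; the second card has no price on purpose.
SAMPLE_HTML = """
<div class="property-card-data">
  <a href="/apartments/1"><address>123 Main St</address></a>
  <span>$1,800/mo</span>
</div>
<div class="property-card-data">
  <a href="/apartments/2"><address>456 Oak Ave</address></a>
</div>
"""


def parse_listings(html: str, card_class: str = "property-card-data") -> list:
    """Build one dict per card, using None for any element that is missing."""
    soup = BeautifulSoup(html, "html.parser")
    listings = []
    for card in soup.find_all("div", class_=card_class):
        address = card.find("address")
        price = card.find("span")
        link = card.find("a")
        listings.append({
            "address": address.get_text(strip=True) if address else None,
            "price": price.get_text(strip=True) if price else None,
            "link": link.get("href") if link else None,
        })
    return listings


for row in parse_listings(SAMPLE_HTML):
    print(row)
```

Guarding each lookup against `None` keeps a single incomplete card from stopping the whole loop.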

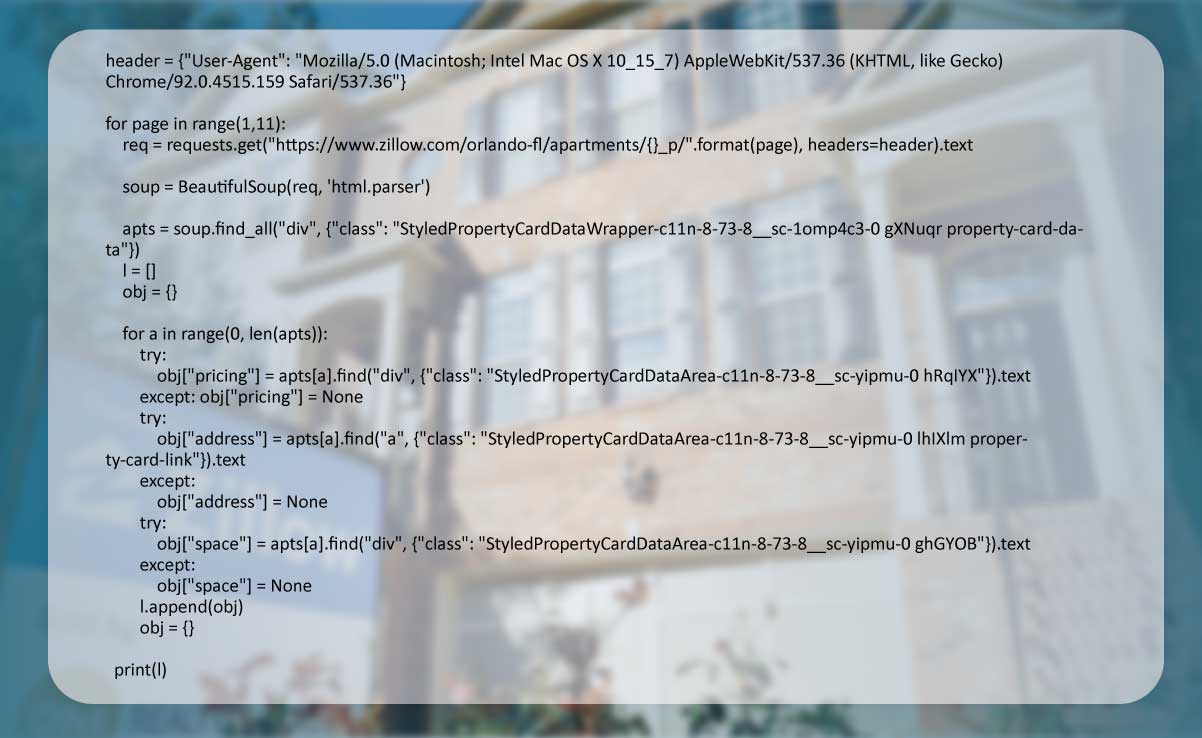

If you know the right parameters, you can treat the URL like any other f-string and insert variables that change with each pass through the loop.

We previously covered this concept when requesting data from different pages of the Rick & Morty API.

In this example, we have to append a page number variable to the original URL and loop through a range of integers.

Let's incorporate this into the larger script:
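As a small illustration of the idea, the sketch below builds one URL per page with an f-string. The base URL is a placeholder, and the `{page}_p/` suffix is an assumption about how the page number is appended; check the address bar while paging through results to confirm the real pattern.

```python
# Placeholder base URL; substitute the search URL you are actually scraping.
BASE_URL = "https://www.example.com/apartments/"


def page_urls(base_url: str, first_page: int, last_page: int) -> list:
    """Return one URL per page number, inclusive of both ends."""
    # The "_p/" suffix is an assumed pagination pattern, not a confirmed one.
    return [f"{base_url}{page}_p/" for page in range(first_page, last_page + 1)]


for url in page_urls(BASE_URL, 1, 3):
    print(url)
```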

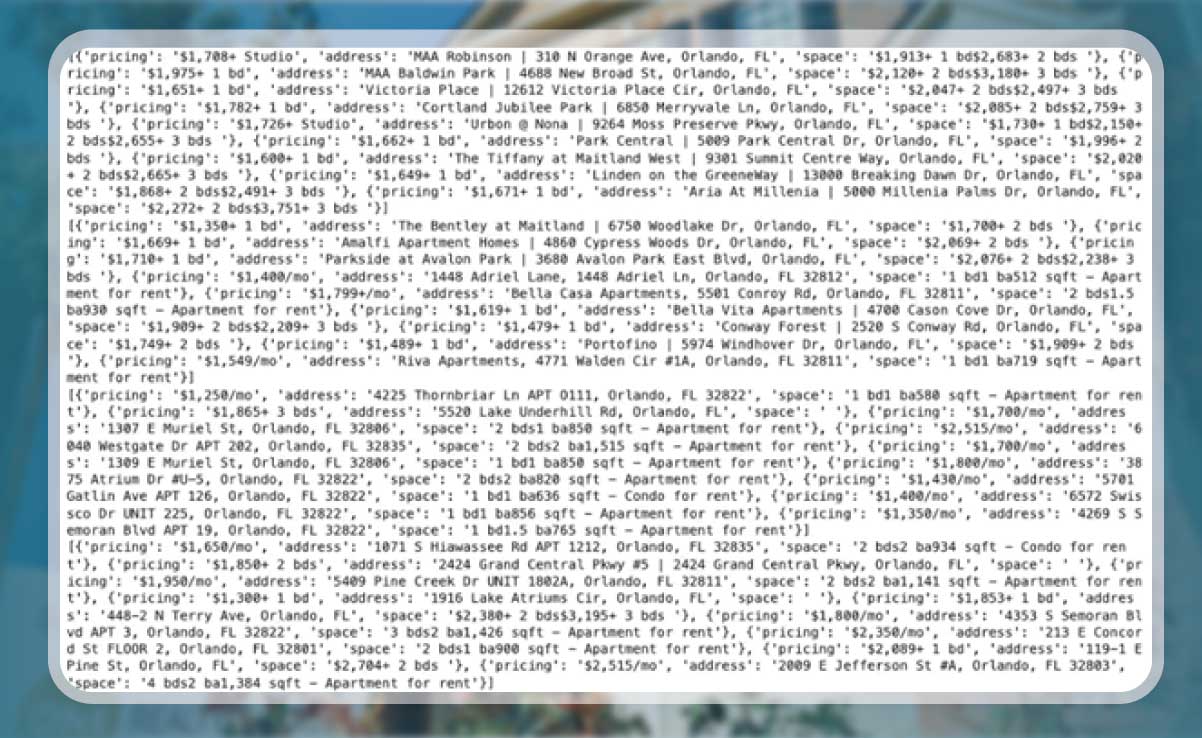

And verify the results:

Note that we have a list of dicts covering all pages specified in the range.

However, being a data scraping company, we don't like disorganized data. We will clean this up by iterating over the list and building a tidier data frame.
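One possible cleanup pass with pandas is sketched below. The sample records, the column names, and the specific cleaning rules (trim whitespace, keep only the digits of the price, drop duplicates and rows without a price) are assumptions chosen for illustration rather than the exact steps from the original script.

```python
import pandas as pd

# Hypothetical scraped records, including a duplicate and a missing price.
raw = [
    {"address": " 123 Main St ", "price": "$1,800/mo", "link": "/apartments/1"},
    {"address": "456 Oak Ave", "price": "$2,100+/mo", "link": "/apartments/2"},
    {"address": "456 Oak Ave", "price": "$2,100+/mo", "link": "/apartments/2"},
    {"address": "789 Pine Rd", "price": None, "link": "/apartments/3"},
]


def clean_listings(records: list) -> pd.DataFrame:
    """Turn a list of listing dicts into a de-duplicated, typed data frame."""
    df = pd.DataFrame(records)
    df["address"] = df["address"].str.strip()
    # Keep digits only, then convert; missing prices stay missing.
    df["price"] = pd.to_numeric(
        df["price"].str.replace(r"[^\d]", "", regex=True), errors="coerce"
    )
    return df.drop_duplicates().dropna(subset=["price"]).reset_index(drop=True)


df = clean_listings(raw)
print(df)
```

Converting the price to a numeric column at this stage makes later sorting, filtering, and loading into a SQL table more straightforward.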

Wow! The results are much better!

We have learned how to read and work with data stored in HTML code.

We have learned how to make a request and save the raw data in a list of dictionaries.

We have covered dynamic link generation for iterating through different pages.

Finally, we have converted a messy result into a moderately cleaner data frame.

For more information about Zillow data scraping services, contact Actowiz Solutions. You can also contact us for all your mobile app scraping, web scraping, and data collection service requirements.
