
Google Maps is the largest publicly accessible business directory in the world. It powers everything from:

Extracting 1,000,000+ Google Maps business listings can support:

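But scraping Google Maps at scale is extremely challenging. The main obstacles (anti-bot protection, asynchronously loaded containers, infinite scroll, inconsistent HTML, and throttling) are summarized in the challenges table later in this guide.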

This technical guide shows how Actowiz Solutions builds large-scale Google Maps scraping pipelines using:

Let’s build a workflow capable of scraping 1 million business listings.

```bash
pip install selenium
pip install undetected-chromedriver
pip install requests
pip install beautifulsoup4
pip install pandas
pip install fake-useragent
pip install lxml
```

We'll use:

Examples:

Google Maps search URL:

https://www.google.com/maps/search/
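The search path is followed by the query text, with spaces encoded as `+`. A minimal sketch of building these URLs from plain-text queries, assuming `urllib.parse.quote_plus` encoding is acceptable to Google Maps (`build_search_url` is a hypothetical helper, not part of any library):

```python
from urllib.parse import quote_plus

SEARCH_BASE = "https://www.google.com/maps/search/"

def build_search_url(query: str) -> str:
    """Turn a plain-text query into a Google Maps search URL (hypothetical helper)."""
    # quote_plus encodes spaces as "+" and escapes reserved characters such as "&"
    return SEARCH_BASE + quote_plus(query.strip())

print(build_search_url("restaurants in new york"))
# → https://www.google.com/maps/search/restaurants+in+new+york
```

Encoding the query this way keeps characters like `&` or `/` in business categories from corrupting the URL path.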

```python
import undetected_chromedriver as uc
from selenium.webdriver.common.by import By
from time import sleep

# Launch a Chrome session and open a search results page
browser = uc.Chrome()
browser.get("https://www.google.com/maps/search/restaurants+in+new+york")
sleep(5)  # give the results pane time to render
```

Google Maps loads 20–30 listings at a time inside the left-side results pane.
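Because the pane loads in batches, a fixed number of scrolls either stops before the list is exhausted or keeps scrolling long after it is. A sketch of an alternative stopping rule, assuming you can count the loaded cards after each scroll: stop once the count has not grown for a few consecutive attempts. `scroll_until_stable` and its `patience` parameter are hypothetical; the scroll and count steps are passed in as callables so the logic can be checked without a browser.

```python
from time import sleep
from typing import Callable

def scroll_until_stable(
    scroll_once: Callable[[], None],
    count_loaded: Callable[[], int],
    patience: int = 3,
    max_scrolls: int = 300,
    delay: float = 1.5,
) -> int:
    """Scroll until the loaded-item count stops growing; return the final count."""
    last_count = count_loaded()
    stalls = 0
    for _ in range(max_scrolls):
        scroll_once()
        sleep(delay)  # give the next batch time to render
        current = count_loaded()
        if current > last_count:
            last_count, stalls = current, 0  # progress: reset the stall counter
        else:
            stalls += 1
            if stalls >= patience:
                break  # no growth for `patience` scrolls in a row
    return last_count
```

With Selenium, `scroll_once` can wrap the `execute_script` call on the `scrollable` pane located below, and `count_loaded` can return the number of `Nv2PK` cards found by `browser.find_elements`.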

Detect scroll pane:

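```python
# "m6QErb" is an obfuscated class name; Google can change it without notice
scrollable = browser.find_element(By.CLASS_NAME, "m6QErb")
```

Scroll multiple times:

```python
for _ in range(300):  # raise this for longer result lists
    # Jump the results pane to its bottom to trigger loading of the next batch
    browser.execute_script("arguments[0].scrollTop = arguments[0].scrollHeight", scrollable)
    sleep(1.5)  # wait for the next batch to load
```

For 1 million listings, you'll need: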

Each listing is wrapped inside a `<div>` containing a business card.

```python
from selenium.common.exceptions import NoSuchElementException

def text_or_empty(card, by, value):
    """Return the text of a child element, or "" if the element is missing."""
    try:
        return card.find_element(by, value).text
    except NoSuchElementException:
        return ""

cards = browser.find_elements(By.XPATH, '//div[contains(@class, "Nv2PK")]')
records = []

for card in cards:
    try:
        # get_attribute can return None, so normalize to ""
        url = card.find_element(By.TAG_NAME, "a").get_attribute("href") or ""
    except NoSuchElementException:
        url = ""

    records.append({
        "name": text_or_empty(card, By.CLASS_NAME, "qBF1Pd"),
        "rating": text_or_empty(card, By.CLASS_NAME, "MW4etd"),
        "review_count": text_or_empty(card, By.CLASS_NAME, "UY7F9"),
        "category": text_or_empty(card, By.CLASS_NAME, "W4Efsd"),
        "address": text_or_empty(card, By.CLASS_NAME, "rllt__details"),
        "maps_url": url,
    })
```

Google Maps URLs contain:

.../data=!3m1!4b1!4m5!3m4!1s

Extract Place ID via regex.

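```python
import re

def extract_place_id(url):
    # Capture the token after "!1s" up to the next "!" separator (non-greedy)
    match = re.search(r"!1s([^!]+)", url or "")
    return match.group(1) if match else None

for r in records:
    r["place_id"] = extract_place_id(r["maps_url"])
```

Google also serves structured place data as JSON through an internal, undocumented endpoint: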

https://www.google.com/maps/preview/place/details?authuser=0&hl=en&gl=us&pb=!1m2!1s

We can fetch details using Requests:

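```python
import requests

def fetch_place_details(place_id):
    """Fetch the raw details payload for one place; return "{}" on failure."""
    url = f"https://www.google.com/maps/preview/place/details?authuser=0&hl=en&gl=us&pb=!1m2!1s{place_id}"
    try:
        r = requests.get(url, headers={"User-Agent": "Mozilla/5.0"}, timeout=15)
        r.raise_for_status()  # treat 4xx/5xx responses as failures
        return r.text
    except requests.RequestException:
        return "{}"
```

Next, parse the JSON-like structure behind Google's `pb`-encoded data.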

This requires custom parsers (Actowiz uses internal decoders), but simple fields like phone, hours, plus code, and website can be parsed using regex.

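```python
def extract_field(raw, key):
    """Naive lookup: return the second quoted string within 200 chars after `key`."""
    try:
        idx = raw.index(key)
        snippet = raw[idx:idx + 200]
        return re.findall(r'"([^"]+)"', snippet)[1]
    except (ValueError, IndexError):  # key absent, or too few quoted strings
        return ""
```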
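String slicing breaks easily when the payload changes shape. A sketch of an alternative, assuming the body is JSON built from nested arrays and possibly preceded by an anti-hijacking prefix such as `)]}'` (both are assumptions this guide does not confirm): parse it once, then collect every string that matches a pattern. The helper names are hypothetical, and the patterns that reliably identify a phone number or website would need to be confirmed against real responses.

```python
import json
import re
from typing import Any, Iterator

def load_payload(raw: str) -> Any:
    """Parse a JSON body, dropping an assumed ")]}'" prefix if present."""
    text = raw.strip()
    if text.startswith(")]}'"):
        text = text[4:]
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        return None

def iter_strings(node: Any) -> Iterator[str]:
    """Yield every string found anywhere in nested lists and dicts."""
    if isinstance(node, str):
        yield node
    elif isinstance(node, list):
        for item in node:
            yield from iter_strings(item)
    elif isinstance(node, dict):
        for value in node.values():
            yield from iter_strings(value)

def first_match(payload: Any, pattern: str) -> str:
    """Return the first string in the payload that fully matches `pattern`, or ""."""
    regex = re.compile(pattern)
    return next((s for s in iter_strings(payload) if regex.fullmatch(s)), "")
```

For example, `first_match(payload, r"https?://\S+")` would pick out the first URL-shaped string, which may or may not be the business website depending on the payload layout.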

Attach the extracted fields to each record:

```python
for r in records:
    # Skip the network call when no Place ID was parsed from the URL
    raw = fetch_place_details(r["place_id"]) if r["place_id"] else "{}"
    r["phone"] = extract_field(raw, "phone")
    r["website"] = extract_field(raw, "website")
    r["plus_code"] = extract_field(raw, "plus_code")
```

Coordinates appear inside listing URLs:

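```python
def extract_lat_long(url):
    # Try the "@lat,lng" viewport form first, then the "!3d<lat>!4d<lng>"
    # form that place links commonly carry in their data parameter
    for pattern in (r"@(-?\d+\.\d+),(-?\d+\.\d+)", r"!3d(-?\d+\.\d+)!4d(-?\d+\.\d+)"):
        match = re.search(pattern, url or "")
        if match:
            return float(match.group(1)), float(match.group(2))
    return None, None

for r in records:
    r["latitude"], r["longitude"] = extract_lat_long(r["maps_url"])
```

To scrape 1M records: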

Generate 500+ queries per country:
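One way to reach that volume is to cross a list of business categories with a list of locations, reusing the "restaurants in new york" phrasing from the earlier example. The category and location lists below are illustrative placeholders, not a recommended set, and `generate_queries` is a hypothetical helper.

```python
from itertools import product

# Illustrative placeholders; a real run would load much longer lists
CATEGORIES = ["restaurants", "dentists", "plumbers", "coffee shops"]
LOCATIONS = ["new york", "los angeles", "chicago"]

def generate_queries(categories, locations):
    """Return every "<category> in <location>" combination, deduplicated, in order."""
    seen = set()
    queries = []
    for category, location in product(categories, locations):
        q = f"{category.strip().lower()} in {location.strip().lower()}"
        if q not in seen:
            seen.add(q)
            queries.append(q)
    return queries

queries = generate_queries(CATEGORIES, LOCATIONS)
print(len(queries))  # 12 (4 categories x 3 locations)
print(queries[0])    # restaurants in new york
```

With 25 categories and 20 locations, the same function yields 25 × 20 = 500 queries.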

Cut the map into grids:

Search each grid:

https://www.google.com/maps/search/restaurants/@
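A sketch of the grid step, assuming a rectangular bounding box split into evenly spaced cells and a `/@<lat>,<lng>,<zoom>z` viewport suffix on the search URL. The suffix format, the zoom level of 14, and the example box in New York City are assumptions; `grid_centers` and `grid_search_urls` are hypothetical helpers.

```python
from urllib.parse import quote_plus

def grid_centers(south, west, north, east, rows, cols):
    """Split a bounding box into rows x cols cells and return each cell's center."""
    lat_step = (north - south) / rows
    lng_step = (east - west) / cols
    return [
        (round(south + (i + 0.5) * lat_step, 6), round(west + (j + 0.5) * lng_step, 6))
        for i in range(rows)
        for j in range(cols)
    ]

def grid_search_urls(keyword, centers, zoom=14):
    """Build one search URL per cell using the assumed /@lat,lng,zoomz suffix."""
    base = "https://www.google.com/maps/search/" + quote_plus(keyword)
    return [f"{base}/@{lat},{lng},{zoom}z" for lat, lng in centers]

centers = grid_centers(40.70, -74.02, 40.88, -73.91, rows=3, cols=2)
urls = grid_search_urls("restaurants", centers)
print(len(urls))  # 6
print(urls[0])
```

Smaller cells mean more queries but fewer listings cut off by the per-search result limit; the right grid size is something to tune per city.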

Use:

Run scraper on:

Avoid duplicate businesses across queries.
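Deduplicating only at the end means detail requests are still spent on repeats. A sketch of deduplicating as records stream in, assuming each record is a dict with the `place_id` and `maps_url` keys built earlier and falling back to the URL when no Place ID was parsed (`dedupe_records` is a hypothetical helper):

```python
from typing import Iterable, Iterator

def dedupe_records(records: Iterable[dict]) -> Iterator[dict]:
    """Yield each business once, keyed on place_id (or maps_url as a fallback)."""
    seen = set()
    for record in records:
        key = record.get("place_id") or record.get("maps_url")
        if not key:
            yield record  # no usable key: keep it rather than guess
            continue
        if key in seen:
            continue  # already emitted this business
        seen.add(key)
        yield record
```

Iterating over `dedupe_records(records)` in the detail-fetch loop skips repeat requests for businesses that several queries returned.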

Use Place ID as unique key:

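```python
import pandas as pd

df = pd.DataFrame(records)
# drop_duplicates treats missing place IDs as equal, so fall back to the URL
df["dedup_key"] = df["place_id"].fillna(df["maps_url"])
df = df.drop_duplicates(subset=["dedup_key"]).drop(columns=["dedup_key"])
df.to_csv("google_maps_business_listings.csv", index=False)
```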
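At the million-row scale, holding every record in memory until the end means a crash loses the whole run. A sketch of appending results to a CSV in batches instead, assuming a fixed column list that matches the record keys used above (`append_batch` and `FIELDS` are hypothetical):

```python
import csv
import os

FIELDS = ["name", "rating", "review_count", "category", "address", "maps_url",
          "place_id", "phone", "website", "plus_code", "latitude", "longitude"]

def append_batch(path, batch, fields=FIELDS):
    """Append a list of record dicts to `path`, writing the header only once."""
    write_header = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, "a", newline="", encoding="utf-8") as f:
        # Missing keys are written as "" and unexpected keys are ignored
        writer = csv.DictWriter(f, fieldnames=fields, extrasaction="ignore")
        if write_header:
            writer.writeheader()
        writer.writerows(batch)
```

Once the run finishes, the file can be loaded with `pd.read_csv` and deduplicated once as shown above.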

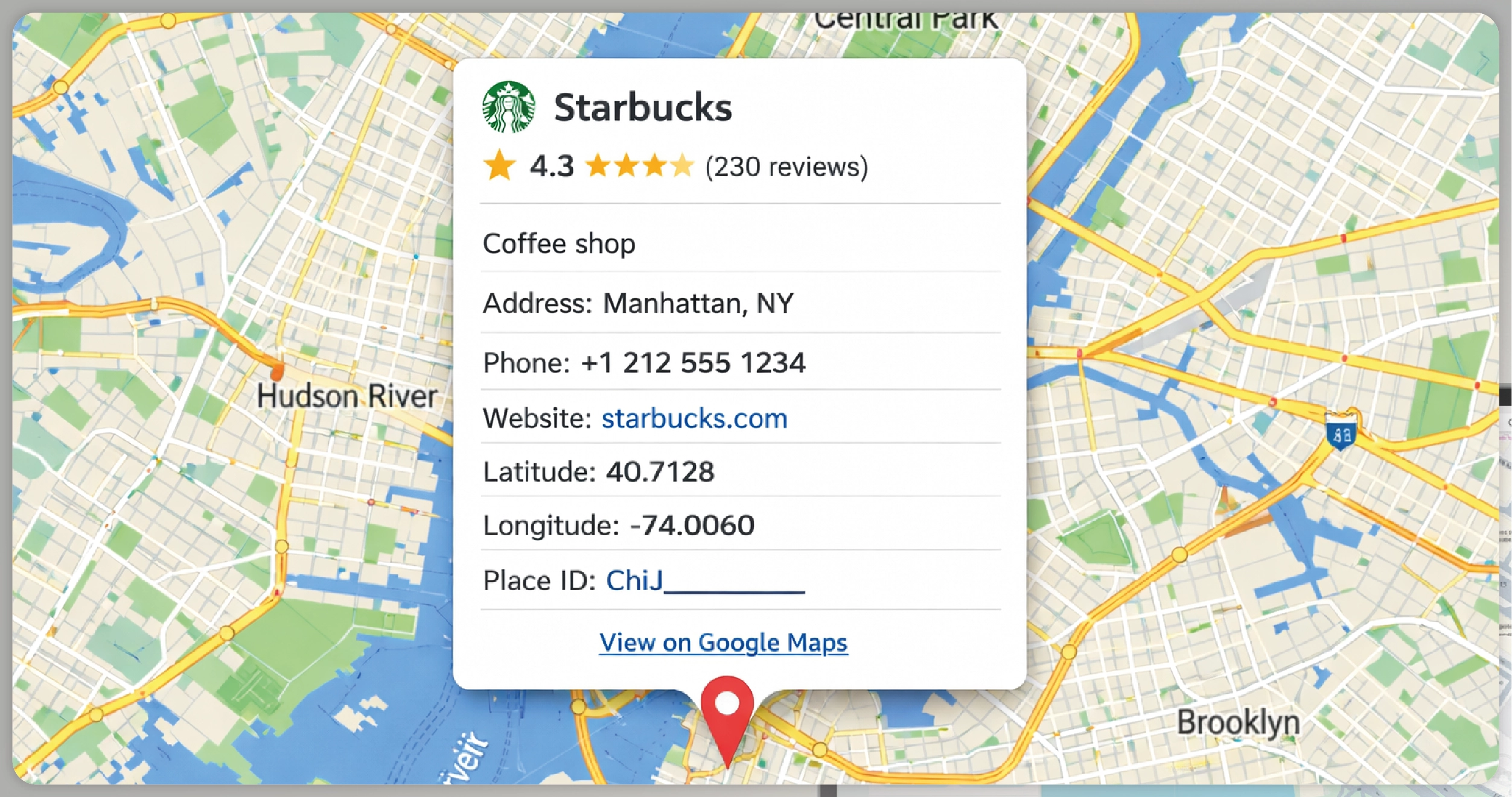

| Field | Example |
|---|---|
| name | Starbucks |
| rating | 4.3 |
| review_count | 230 |
| category | Coffee shop |
| address | Manhattan, NY |
| phone | +1 212 555 1234 |
| website | starbucks.com |
| latitude | 40.7128 |
| longitude | -74.0060 |
| place_id | ChIJ________ |
| maps_url | https://maps.google.com/... |
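The example values in the table are clean numbers, but the card text scraped earlier is a raw string, and review counts may include parentheses or thousands separators (for example `(1,234)`; the exact format is an assumption and may vary by locale). A sketch of normalizing both fields, with hypothetical helper names:

```python
import re
from typing import Optional

def parse_rating(text: str) -> Optional[float]:
    """Convert a rating string such as "4.3" (or "4,3" in some locales) to a float."""
    match = re.search(r"\d+(?:[.,]\d+)?", text or "")
    return float(match.group(0).replace(",", ".")) if match else None

def parse_review_count(text: str) -> Optional[int]:
    """Keep only digits, e.g. "(1,234)" -> 1234.

    Abbreviated counts such as "1.2K" are not handled by this sketch.
    """
    digits = re.sub(r"\D", "", text or "")
    return int(digits) if digits else None
```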

| Challenge | Explanation |
|---|---|
| Strong anti-bot protection | Causes blocks after 20–50 scrolls |
| Dynamic containers | Review and detail blocks load asynchronously |
| Infinite scroll | Results load on scroll, not through numbered pages |
| Inconsistent HTML | Markup varies by region |
| Throttling | Rapid requests trigger CAPTCHAs |
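For the throttling row, a common mitigation is to slow down and retry with exponentially increasing waits rather than retrying immediately. A sketch under the assumption that a failed fetch raises an exception (note that `fetch_place_details` as written returns `"{}"` instead of raising, so it would need to re-raise for this wrapper to see failures). `with_backoff` and its parameters are hypothetical; the random jitter keeps parallel workers from retrying in lockstep.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")

def with_backoff(fn: Callable[[], T], retries: int = 4, base_delay: float = 2.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn(); on failure wait base_delay * 2**attempt (plus jitter) and retry.

    `retries` must be >= 0. In practice, catch a narrower exception type such as
    requests.RequestException instead of Exception.
    """
    for attempt in range(retries + 1):
        try:
            return fn()
        except Exception:
            if attempt == retries:
                raise  # out of retries: surface the last error
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))
    raise RuntimeError("retries must be >= 0")
```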

Actowiz Solutions solves this using:

Choose Actowiz if you need:

We support extraction across:

In this tutorial, you learned how to:

This becomes the foundation of a full-scale location intelligence pipeline.

You can also reach us for all your mobile app scraping, data collection, web scraping, and instant data scraper service requirements!

