In the rapidly evolving real estate market, data-driven decision-making is crucial for investors, realtors, and property managers. Web scraping real estate data lets businesses extract insights from property listing websites, helping them analyze pricing trends, demand fluctuations, and the competitive landscape.

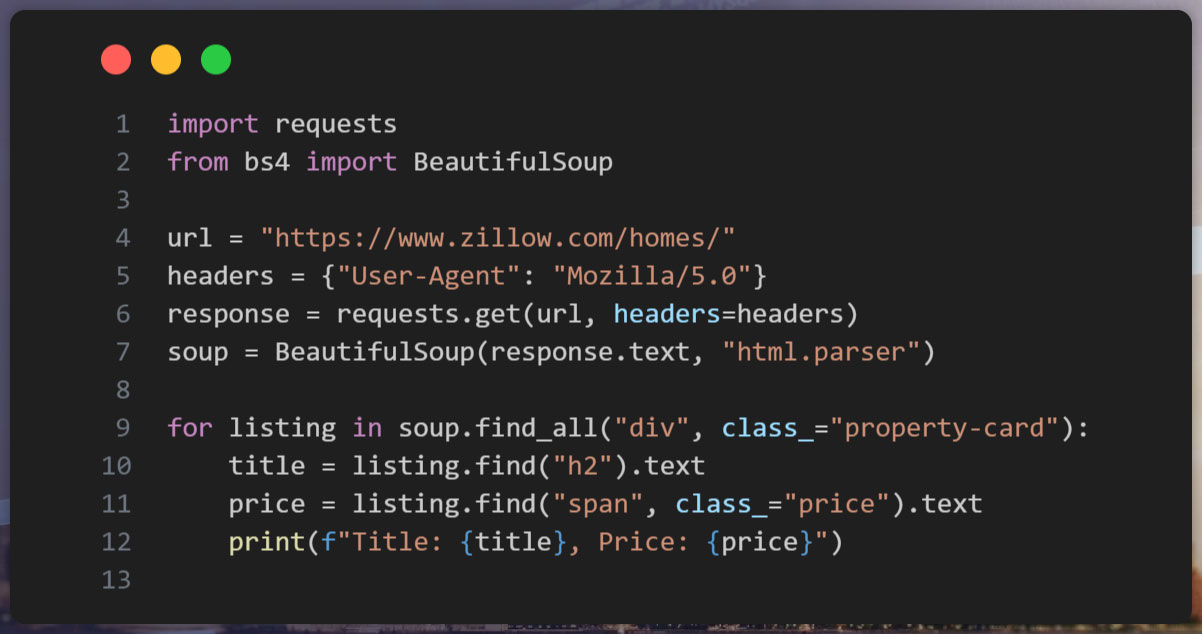

By applying Python-based scraping techniques, businesses can automate data collection from platforms such as Zillow, Realtor, and Redfin. This blog explains how to scrape property listings with Python, outlines best practices for real estate web scraping, and shows how Actowiz Solutions can simplify the process with its data extraction tools.

The real estate market depends on data such as property values, rental trends, and demand-supply ratios. With Python web scraping, businesses can automate the extraction of property data, reducing manual work and improving accuracy.
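
As a minimal sketch of what automated extraction involves, the Python example below parses listing cards out of an HTML page using only the standard library and normalizes the price text. The markup (`div.listing-card` with `address`, `price`, and `beds` spans) and all function names are hypothetical. Real listing sites such as Zillow or Redfin use different, frequently changing markup and often load data with JavaScript. Their terms of use should also be checked before scraping.

```python
from html.parser import HTMLParser


class ListingParser(HTMLParser):
    """Collect fields from hypothetical listing-card markup.

    Assumes each listing is a <div class="listing-card"> with no nested
    <div> elements, and that fields live in <span> tags whose class is
    one of FIELDS. Real sites use different markup.
    """

    FIELDS = {"address", "price", "beds"}

    def __init__(self):
        super().__init__()
        self.listings = []   # one dict of raw strings per card
        self._card = None    # card currently being parsed
        self._field = None   # field whose text is currently being read

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "div" and "listing-card" in classes:
            self._card = {}
        elif tag == "span" and self._card is not None:
            self._field = next((c for c in classes if c in self.FIELDS), None)

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None
        elif tag == "div" and self._card is not None:
            self.listings.append(self._card)
            self._card = None

    def handle_data(self, data):
        if self._card is not None and self._field:
            self._card[self._field] = data.strip()


def parse_price(text):
    """Turn '$450,000' into 450000; return None when no digits are present.

    Assumes full-dollar formats; abbreviations like '$1.2M' need extra rules.
    """
    digits = "".join(ch for ch in text if ch.isdigit())
    return int(digits) if digits else None


def parse_listings(html):
    """Parse listing cards and normalize price and bedroom count."""
    parser = ListingParser()
    parser.feed(html)
    parser.close()
    rows = []
    for card in parser.listings:
        beds = card.get("beds", "")
        rows.append({
            "address": card.get("address"),
            "price": parse_price(card.get("price", "")),
            "beds": int(beds) if beds.isdigit() else None,
        })
    return rows


SAMPLE = """
<div class="listing-card"><span class="address">12 Oak St</span>
  <span class="price">$450,000</span><span class="beds">3</span></div>
<div class="listing-card"><span class="address">9 Elm Ave</span>
  <span class="price">Contact agent</span></div>
"""

for row in parse_listings(SAMPLE):
    print(row)
# {'address': '12 Oak St', 'price': 450000, 'beds': 3}
# {'address': '9 Elm Ave', 'price': None, 'beds': None}
```

In practice the HTML string would come from an HTTP request (for example `urllib.request.urlopen`). Normalizing prices to integers at parse time turns non-numeric values such as "Contact agent" into `None`, so they do not break later analysis.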

| Benefit | Impact |
|---|---|
| Automated Real Estate Data Collection | Saves time by eliminating manual data entry. |
| Real Estate Price Scraping with Python | Tracks property prices as listings change. |
| Market Trend Analysis | Helps forecast price movements from historical data. |
| Competitive Intelligence | Monitors competitor pricing and property features. |
| Investment Decision-Making | Provides data-backed insights for real estate investors. |

| Metric | Data Point |
|---|---|
| Average Property Price Growth | 5-7% annually in major metropolitan areas. |
| Rental Yield | 3-5% per year in prime real estate locations. |
| Transactions Starting Online | Over 90% of property transactions start online. |
| Market Volatility Index | Fluctuates between 10-15% yearly. |
| Competitor Price Variance | Price differences of up to 15% between competitors. |
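
The metrics in this table are simple ratios. The sketch below shows how they could be computed from scraped prices and rents. It uses standard definitions for gross rental yield (annual rent divided by price) and compound annual growth. The post does not define "competitor price variance", so measuring it as the percentage gap between the highest and lowest asking price for the same property is an assumption. The input numbers are made up for illustration.

```python
def gross_rental_yield(monthly_rent, price):
    """Gross rental yield in percent: (12 * monthly rent) / price * 100."""
    return round(monthly_rent * 12 / price * 100, 2)


def annual_growth(start_price, end_price, years):
    """Compound annual growth rate in percent over `years` years."""
    return round(((end_price / start_price) ** (1 / years) - 1) * 100, 2)


def price_spread(prices):
    """Percent gap between the highest and lowest asking price.

    Assumption: one way to express 'competitor price variance' for the
    same property listed on several sites.
    """
    low, high = min(prices), max(prices)
    return round((high - low) / low * 100, 2)


# Made-up inputs for illustration only.
print(gross_rental_yield(1500, 450_000))            # 4.0
print(annual_growth(400_000, 450_000, years=2))     # 6.07
print(price_spread([450_000, 470_000, 495_000]))    # 10.0
```

Note that "variance" here is a loose business term, not the statistical variance of the prices.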

By extracting real estate market data, businesses gain structured, up-to-date information to support their decisions. Web scraping helps firms stay ahead of market trends, monitor competitive pricing, and make data-driven investment choices.

Python-based web scraping supports continuous data updates and better forecasting, giving real estate professionals a competitive edge in a fast-changing market.

This script helps with scraping Zillow and Realtor data, which can then be analyzed for market trends.
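
Once listings have been collected, trend analysis usually starts with aggregation. The following is a minimal sketch. It assumes each scraped record is a dict with hypothetical `zip`, `month` (YYYY-MM), and `price` keys. The code takes the median asking price per ZIP code and month, then computes the month-over-month percentage change. The sample records are invented. Medians are used because a few luxury listings can skew an average.

```python
from collections import defaultdict
from statistics import median


def median_price_by_month(listings):
    """Map (zip, month) -> median asking price, skipping unpriced listings."""
    groups = defaultdict(list)
    for row in listings:
        if row.get("price") is not None:
            groups[(row["zip"], row["month"])].append(row["price"])
    return {key: median(prices) for key, prices in groups.items()}


def month_over_month(medians, zip_code):
    """Percent change in median price between consecutive months for one ZIP."""
    months = sorted(month for (z, month) in medians if z == zip_code)
    changes = {}
    for prev, cur in zip(months, months[1:]):
        before, after = medians[(zip_code, prev)], medians[(zip_code, cur)]
        changes[cur] = round((after - before) / before * 100, 2)
    return changes


# Invented sample records in the assumed shape.
scraped = [
    {"zip": "78701", "month": "2024-01", "price": 400_000},
    {"zip": "78701", "month": "2024-01", "price": 420_000},
    {"zip": "78701", "month": "2024-02", "price": 430_000},
    {"zip": "78701", "month": "2024-02", "price": None},
    {"zip": "78702", "month": "2024-01", "price": 350_000},
]

medians = median_price_by_month(scraped)
print(medians[("78701", "2024-01")])       # 410000.0
print(month_over_month(medians, "78701"))  # {'2024-02': 4.88}
```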

As the real estate industry embraces digital transformation, web scraping is expected to play an increasingly important role in data collection, market analysis, and pricing strategy. Real estate data scraping is likely to evolve quickly over the coming years, driven by artificial intelligence, blockchain, and machine learning. The key projected trends from 2025 to 2030 are:

| Year | Predicted Market Growth (%) | Key Trend |
|---|---|---|
| 2025 | 15 | AI-driven property price predictions |
| 2026 | 18 | Increased adoption of automated real estate data collection |
| 2027 | 22 | Blockchain integration for real estate transparency |
| 2028 | 25 | Wider use of Python-based price scraping for dynamic pricing |
| 2029 | 28 | Widespread use of machine learning in real estate market data extraction |
| 2030 | 30 | Fully automated, AI-driven real estate pricing models |

AI-powered algorithms will enhance the accuracy of property price forecasts, leveraging large datasets scraped from real estate listings, historical sales, and economic indicators. These predictions will help buyers, sellers, and investors make informed decisions.
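
Production forecasting models are far more complex than anything shown here. However, the basic idea of projecting prices from scraped history can be illustrated with a simple baseline: an ordinary least-squares linear trend. This is a stand-in, not the AI approach the paragraph describes, and the quarterly median prices are made-up values.

```python
def fit_linear_trend(prices):
    """Ordinary least-squares fit of price against period index 0..n-1.

    Returns (slope, intercept). Needs at least two observations.
    """
    n = len(prices)
    if n < 2:
        raise ValueError("need at least two prices")
    x_mean = (n - 1) / 2
    y_mean = sum(prices) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(prices))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


def forecast(prices, periods_ahead=1):
    """Extend the fitted line `periods_ahead` periods past the last observation."""
    slope, intercept = fit_linear_trend(prices)
    return intercept + slope * (len(prices) - 1 + periods_ahead)


# Made-up quarterly median prices (in thousands of dollars).
history = [400, 410, 420, 430]
print(fit_linear_trend(history))   # (10.0, 400.0)
print(forecast(history))           # 440.0
```

A straight-line trend ignores seasonality and external factors such as interest rates. That limitation is exactly what richer models try to address.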

Automation will become standard in real estate data collection, with advanced scraping tools gathering and analyzing property details, rental trends, and market fluctuations in real time. This will make decision-making more efficient for real estate professionals.

Blockchain technology will revolutionize real estate transactions by ensuring transparent, immutable property records. Web scraping tools will facilitate seamless access to blockchain-based real estate data, improving trust and reducing fraud.

Python-based web scraping tools will be extensively used to track and analyze price fluctuations dynamically. This will enable real-time price adjustments for property listings, optimizing pricing strategies based on market demand.
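
Dynamic price tracking typically means comparing successive scraping runs. The following is a minimal sketch. It assumes each run is stored as a dict mapping a hypothetical listing ID to its asking price. The code reports price cuts and increases above a threshold, as well as listings that appeared or disappeared between snapshots.

```python
def price_changes(previous, current, threshold_pct=0.0):
    """Compare two {listing_id: price} snapshots.

    Returns (listing_id, old_price, new_price, pct_change) tuples for listings
    present in both snapshots whose price moved by more than threshold_pct,
    sorted from the largest cut to the largest increase.
    """
    changes = []
    for listing_id, new_price in current.items():
        old_price = previous.get(listing_id)
        if old_price is None or old_price == new_price:
            continue
        pct = (new_price - old_price) / old_price * 100
        if abs(pct) > threshold_pct:
            changes.append((listing_id, old_price, new_price, round(pct, 2)))
    return sorted(changes, key=lambda change: change[3])


def listing_churn(previous, current):
    """Return (new listing IDs, removed listing IDs) between two snapshots."""
    added = sorted(current.keys() - previous.keys())
    removed = sorted(previous.keys() - current.keys())
    return added, removed


# Hypothetical snapshots from two consecutive scraping runs.
yesterday = {"A1": 450_000, "B2": 380_000, "C3": 520_000}
today = {"A1": 435_000, "B2": 380_000, "D4": 610_000}

print(price_changes(yesterday, today, threshold_pct=1.0))  # [('A1', 450000, 435000, -3.33)]
print(listing_churn(yesterday, today))                     # (['D4'], ['C3'])
```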

Machine learning models will refine real estate web scraping by identifying market trends, consumer behavior patterns, and investment opportunities with higher precision.

By 2030, AI will achieve full automation in real estate pricing models, making real-time adjustments based on extensive data analysis. This will streamline property valuation and investment strategies, transforming the industry.

| Year | Technology Impact Level | Market Adoption (%) |
|---|---|---|
| 2025 | Moderate | 40 |
| 2026 | High | 55 |
| 2027 | Very High | 65 |
| 2028 | Extensive | 75 |
| 2029 | Transformational | 85 |
| 2030 | Industry Standard | 95 |

Real estate web scraping will continue to shape the industry, empowering businesses and individuals with actionable insights. As AI, blockchain, and machine learning mature, real estate market data extraction will become more precise, efficient, and indispensable.

Common Challenges

Best Practices

✔ Use Rotating Proxies and User Agents: Employing rotating proxies and user agents helps bypass bot detection mechanisms.

✔ Follow Legal and Ethical Guidelines: Ensure compliance with data protection laws and respect website terms when scraping public data.

✔ Store and Clean Scraped Data: Implement data validation techniques to handle missing values and inconsistencies before analysis.

✔ Use Headless Browsers and CAPTCHA Solvers: Browser automation tools such as Selenium and Puppeteer can run headless browsers that render JavaScript-heavy pages; CAPTCHA challenges usually require a separate solving service.

✔ Monitor Website Changes: Regularly update scraping scripts to adapt to website structure changes and avoid disruptions.
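
Several of these practices can be combined in a small request-planning helper. The sketch below uses only Python's standard library. It checks each URL against a site's robots.txt rules, rotates through a list of User-Agent strings, and pauses between requests. The user-agent strings, URLs, and robots.txt content are placeholders. Proxy rotation is not shown, although urllib supports it through `urllib.request.ProxyHandler`. None of this replaces checking a site's terms of service.

```python
import itertools
import time
import urllib.request
from urllib.robotparser import RobotFileParser

# Placeholder User-Agent strings; use ones that honestly identify your crawler.
USER_AGENTS = [
    "ExampleListingBot/1.0 (+https://example.com/bot)",
    "ExampleListingBot/1.1 (+https://example.com/bot)",
]


def build_robots(robots_txt):
    """Parse robots.txt content that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def plan_requests(urls, robots, delay_seconds=2.0):
    """Yield (url, Request) for URLs that robots.txt allows.

    Rotates the User-Agent header and sleeps between yielded requests.
    """
    agents = itertools.cycle(USER_AGENTS)
    for url in urls:
        agent = next(agents)
        if not robots.can_fetch(agent, url):
            continue  # respect Disallow rules
        yield url, urllib.request.Request(url, headers={"User-Agent": agent})
        time.sleep(delay_seconds)  # polite pause before the next request


ROBOTS_TXT = """\
User-agent: *
Disallow: /account/
"""

urls = [
    "https://example.com/homes/austin-tx",
    "https://example.com/account/saved-homes",
]
robots = build_robots(ROBOTS_TXT)
for url, request in plan_requests(urls, robots, delay_seconds=0):
    print(url, request.get_header("User-agent"))
```

In a live crawler, you would load robots.txt with `RobotFileParser.set_url()` and `read()`, and send each `Request` with `urllib.request.urlopen()`. Pairing this with periodic checks that expected fields still parse helps catch site layout changes early.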

By following these best practices, businesses can efficiently extract, analyze, and utilize real estate data while minimizing risks and ensuring compliance.

Actowiz Solutions specializes in Web Scraping Real Estate Data, offering tailored solutions to businesses looking to streamline data collection from real estate websites. Our services include:

With Actowiz Solutions, businesses can focus on making strategic decisions while we handle the complexities of data mining and data extraction.

As the real estate industry moves toward data-driven decision-making, Python web scraping becomes an essential tool for businesses. By scraping real estate data with Python, companies can gain insights into pricing trends, competitor strategies, and investment opportunities.

If you’re looking for a reliable partner for real estate market data extraction, scraping Zillow and Realtor data, or automated data collection, Actowiz Solutions has you covered. Contact us today to get started with a customized real estate web scraping solution. You can also reach us for mobile app scraping, data collection, web scraping, and instant data scraper services.

