In this blog, we take a comprehensive look at snscrape, a Python wrapper for scraping Twitter, with a specific focus on using it to search for tweets by location. We also look at why the wrapper may not always perform as expected. Let's dive in.

snscrape is a remarkable Python library that enables users to scrape tweets from Twitter without the need for personal API keys. With its lightning-fast performance, it can retrieve thousands of tweets within seconds. Moreover, snscrape offers powerful search capabilities, allowing for highly customizable queries. While the documentation for scraping tweets by location is currently limited, this blog aims to comprehensively introduce this topic. Let's delve into the details:

Introduction to Snscrape: Snscrape is a feature-rich Python library that simplifies scraping tweets from Twitter. Unlike traditional methods that require API keys, snscrape bypasses this requirement, making it accessible to users without prior authorization. Its speed and efficiency make it an ideal choice for various applications, from research and analysis to data collection.

The Power of Location-Based Tweet Scraping: Location-based tweet scraping allows users to filter tweets based on geographical coordinates or place names. This functionality is handy for conducting location-specific analyses, monitoring regional trends, or extracting data relevant to specific areas. By leveraging Snscrape's capabilities, users can gain valuable insights from tweets originating in their desired locations.

Exploring Snscrape's Location-Based Search Tools: Snscrape provides several powerful tools for conducting location-based tweet searches. Users can effectively narrow their search results to tweets from a particular location by utilizing specific parameters and syntax. This includes defining the search query, specifying the geographical coordinates or place names, setting search limits, and configuring the desired output format. Understanding and correctly using these tools is crucial for successful location-based tweet scraping.

Overcoming Documentation Gaps: While snscrape is a powerful library, its documentation on scraping tweets by location is currently limited. This article will provide a comprehensive introduction to the topic to bridge this gap, covering the necessary syntax, parameters, and strategies for effective location-based searches. Following the step-by-step guidelines, users can overcome the lack of documentation and successfully utilize snscrape for their location-specific scraping needs.

Best Practices and Tips: Alongside exploring Snscrape's location-based scraping capabilities, this article will also offer best practices and tips for maximizing the efficiency and reliability of your scraping tasks. This includes handling rate limits, implementing error-handling mechanisms, ensuring data consistency, and staying updated with any changes or updates in Snscrape's functionality.

In this blog, we’ll use the development version of snscrape, which can be installed with:

pip install git+https://github.com/JustAnotherArchivist/snscrape.git

Note: this requires Python 3.8 or later.

Some familiarity with the pandas module is also needed.

Three packages are needed for the examples that follow.

To get the first (i.e. most recent) 100 tweets that contain the phrase "data science", we can use the following code:
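A minimal sketch of this step, assuming the three packages are snscrape, pandas, and the standard-library itertools (the last is an assumption, as is the helper name `fetch_tweets`). It relies on `TwitterSearchScraper(...).get_items()` returning a generator of tweets, which `itertools.islice` cuts off after the requested number.

```python
import itertools


def fetch_tweets(query, limit=100):
    """Return up to `limit` tweets matching `query` as a pandas DataFrame."""
    # Third-party imports are deferred so that defining this helper does not
    # require pandas or snscrape to be installed.
    import pandas as pd
    import snscrape.modules.twitter as sntwitter

    # get_items() is a generator, so nothing is fetched until it is iterated.
    scraped_tweets = sntwitter.TwitterSearchScraper(query).get_items()

    # islice stops the generator after `limit` tweets.
    sliced_tweets = itertools.islice(scraped_tweets, limit)

    # One row per tweet, one column per tweet field.
    return pd.DataFrame(sliced_tweets)
```

Calling `fetch_tweets("data science")` would then request the 100 tweets described above. Whether the request succeeds depends on the installed snscrape version and on Twitter's current behaviour.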


This can be shortened to a single line:

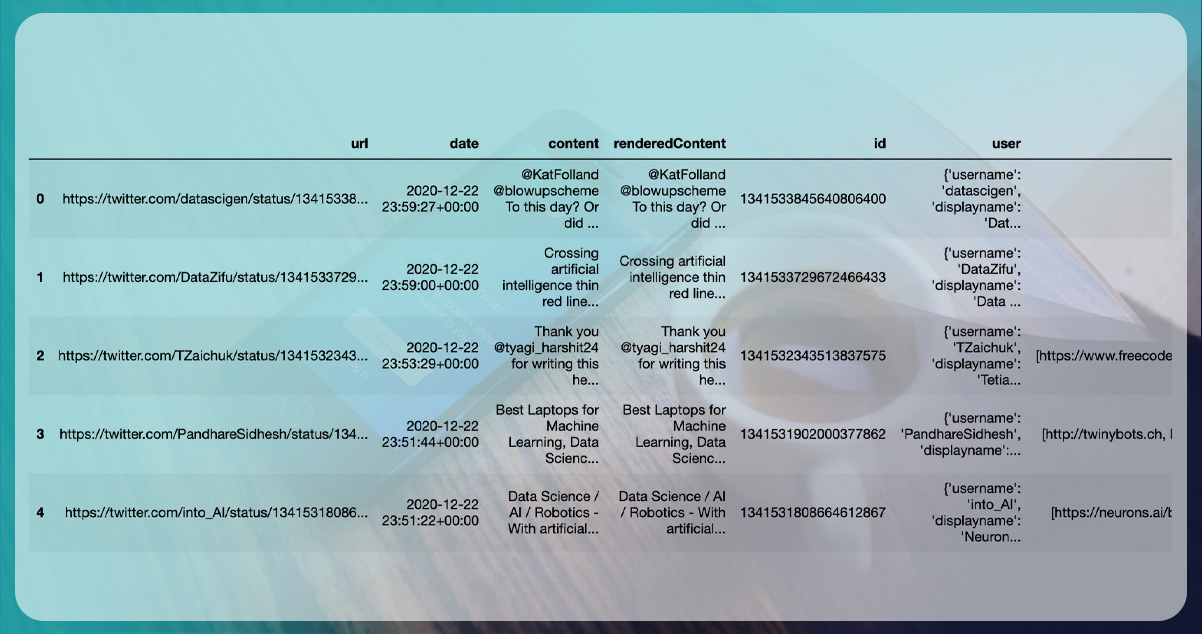

Printing the first five rows gives a first look at the information this returns.

However, that is not all: the result contains 21 columns of data.

We encourage you to explore and experiment with the various features of snscrape to better understand its capabilities. Additionally, you can refer to the mentioned article for more in-depth information on the subject. Later in this blog, we will delve deeper into the user field and its significance in tweet scraping. By gaining a deeper understanding of these concepts, you can harness the full potential of snscrape for your scraping tasks.

When it comes to scraping tweets by location with snscrape, you have two options: the "near:city" tag together with "within:radius", or "geocode:lat,long,radius". In our comparison, the two options returned identical results when the coordinates correspond to the city as Twitter interprets it.

In this code snippet, we define the search query as "pizza near:Los Angeles within:10km", which specifies that we want to search for tweets containing the word "pizza" near Los Angeles within a radius of 10 km. The TwitterSearchScraper object is created with the search query, and then we iterate over the retrieved tweets and print their content.
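A minimal sketch of such a snippet, covering both query styles. The helper names are hypothetical. Wrapping the city name in double quotes so that a multi-word name is treated as one value is an assumption, as are the approximate Los Angeles coordinates used in the usage note. The tweet text attribute is read defensively because its name (`rawContent` or `content`) is assumed to vary between snscrape versions.

```python
import itertools


def near_query(term, city, radius="10km"):
    """Build a query using the near:/within: operators."""
    # Quoting the city keeps a multi-word name together (assumption).
    return f'{term} near:"{city}" within:{radius}'


def geocode_query(term, lat, lon, radius="10km"):
    """Build a query using the geocode:lat,long,radius operator."""
    return f"{term} geocode:{lat},{lon},{radius}"


def print_tweets(query, limit=50):
    """Print the text of up to `limit` tweets matching `query`."""
    # Deferred import: the query builders above work without snscrape.
    import snscrape.modules.twitter as sntwitter

    scraper = sntwitter.TwitterSearchScraper(query)
    for tweet in itertools.islice(scraper.get_items(), limit):
        # Attribute name assumed to differ by version; try both.
        text = getattr(tweet, "rawContent", None) or getattr(tweet, "content", "")
        print(text)
```

For example, `print_tweets(near_query("pizza", "Los Angeles"))` and `print_tweets(geocode_query("pizza", 34.0522, -118.2437))` would run the two variants of the Los Angeles search.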

Feel free to adjust the search query and radius per your specific requirements.

To compare the results, we can perform an inner merge on the two DataFrames (df_coord from the geocode: search and df_city from the near: search):

common_rows = df_coord.merge(df_city, how='inner')

The merged DataFrame has 50 rows, i.e. both searches returned the same 50 tweets.
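A slightly more defensive variant of this comparison, offered as a sketch rather than the method used above: it merges on a single key column instead of on every shared column. Columns holding nested objects (such as the user) may not merge cleanly. It assumes both DataFrames have an `id` column with one row per tweet; the helper name is hypothetical.

```python
def count_common_rows(df_a, df_b, key="id"):
    """Count rows whose `key` value appears in both DataFrames."""
    # An inner merge keeps only the keys present in both frames.
    common = df_a.merge(df_b, on=key, how="inner")
    return len(common)
```

With the two frames from the searches above, `count_common_rows(df_coord, df_city)` equal to `len(df_coord)` and `len(df_city)` would indicate that both searches returned the same tweets.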

When determining the location of tweets on Twitter, there are two primary sources: the geo-tag associated with a specific tweet and the user's location mentioned in their profile. However, it's important to note that only a small percentage of tweets (approximately 1-2%) are geo-tagged, making it an unreliable metric for location-based searches. On the other hand, many users include a location in their profile, but it's worth noting that these locations can be arbitrary and inaccurate. Some users provide helpful information like "London, England," while others might use humorous or irrelevant descriptions like "My Parents' Basement."

Despite the limited availability and potential inaccuracies of geo-tagged tweets and user profile locations, Twitter employs algorithms as part of its advanced search functionality to interpret a user's location based on their profile. This means that when you look for tweets through coordinates or city names, the search results will include tweets geotagged from the location and tweets posted by users who have that location (or a location nearby) mentioned in their profile.

Twitter's advanced search algorithms consider geo-tagged tweets and user profile locations to provide a broader set of tweets when performing location-based searches.

To illustrate the usage of location-based searching on Twitter, let's consider an example. Suppose we perform a search for tweets near "London." Here are two examples of tweets that were found using different methods:

The first tweet is geo-tagged, which means it contains specific geographic coordinates indicating its location. In this case, the tweet was found because of its geo-tag, regardless of whether the user has a location mentioned in their profile or not.

The second tweet is not geo-tagged, meaning it has no explicit geographic coordinates associated with it. However, it was still included in the search results because the user has given a location in their profile that matches or is closely associated with London.

When performing a location-based search on Twitter, you can come across tweets that are either geo-tagged or have users with matching or relevant locations mentioned in their profiles. This allows for a more comprehensive search, capturing tweets from specific geographic locations and users who have declared their association with those locations.

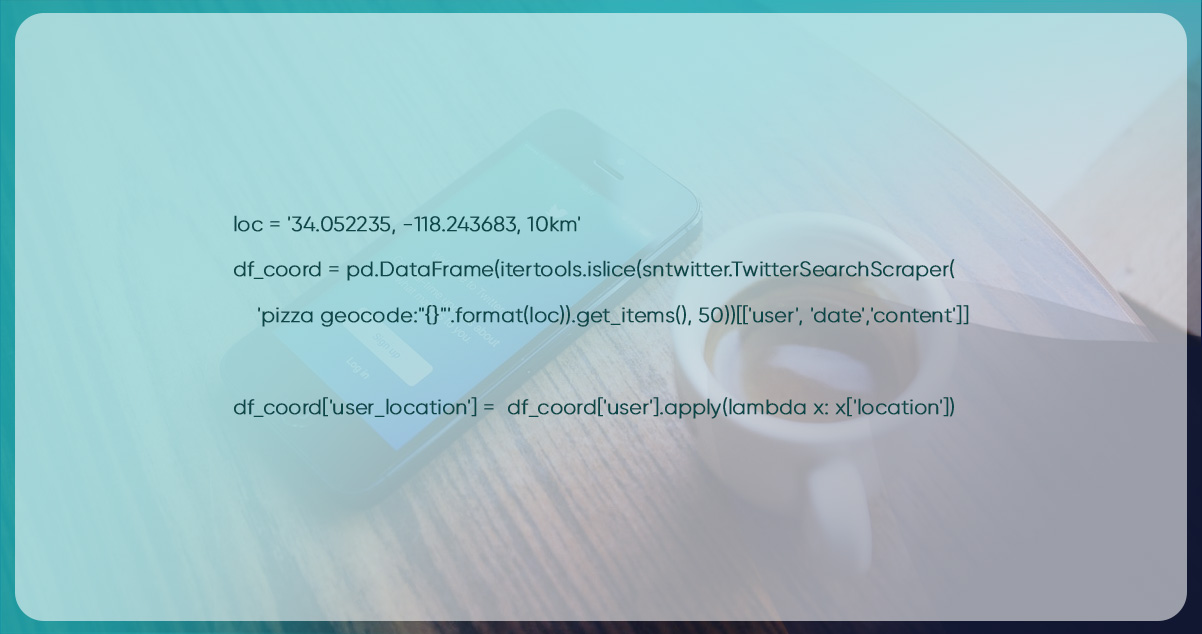

If you're using snscrape to scrape tweets and want to extract the user's location from the scraped data, you can do so by following these steps. In the example below, we scrape 50 tweets within a 10km radius of Los Angeles, store the data in a DataFrame, and then create a new column to capture the user's location.

You can customize the code further to suit your needs, such as extracting additional tweet data or analyzing the scraped tweets and user locations. By iterating over the scraped tweets, you can access the user.location attribute to retrieve the user's location information. This value is then stored in a new column called "user_location" in the DataFrame.
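The iteration described above can be sketched as follows. The helper name is hypothetical, and the sketch assumes only that each tweet object exposes `id` and `user.location`; a missing user or location is recorded as `None` rather than raising an error.

```python
def user_location_rows(tweets):
    """Collect the tweet id and the author's profile location for each tweet."""
    rows = []
    for tweet in tweets:
        user = getattr(tweet, "user", None)
        rows.append(
            {
                "id": getattr(tweet, "id", None),
                # Free-text profile location; may be empty or not a real place.
                "user_location": getattr(user, "location", None),
            }
        )
    return rows
```

Passing the result to `pd.DataFrame(...)` gives a frame with a `user_location` column that can be joined back to the full tweet data on `id`.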

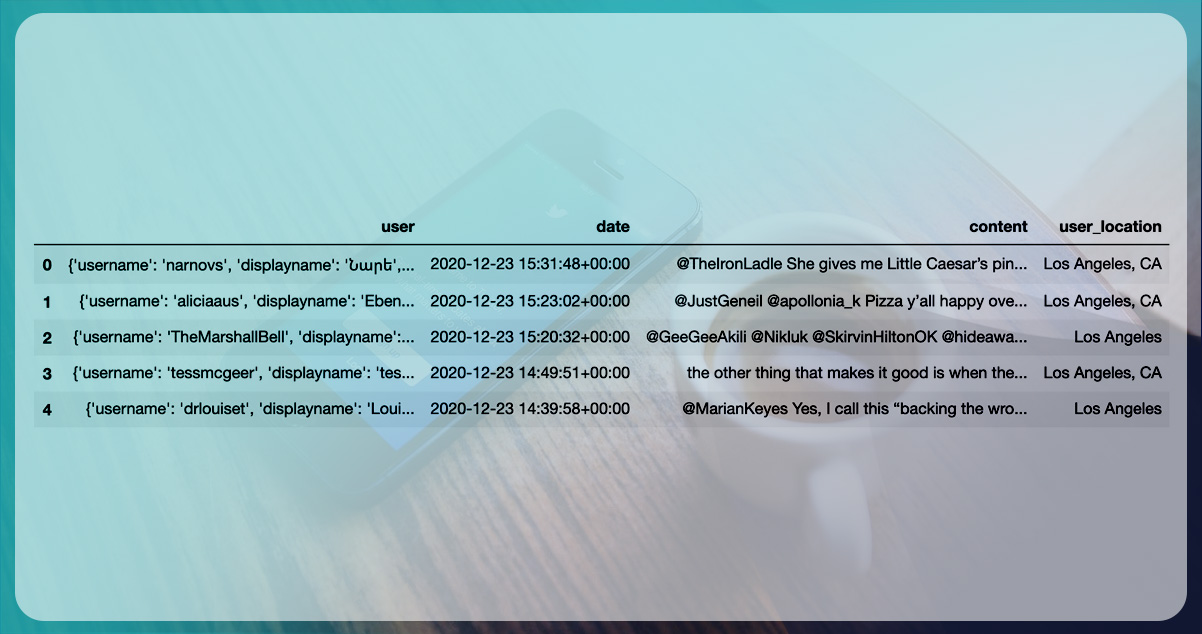

Upon inspecting the first 5 rows of the DataFrame, it is evident that while the location formats vary, they can all be interpreted as referring to Los Angeles.

The near: and geocode: tags in Twitter's advanced search can sometimes yield inconsistent results, especially when searching for specific towns, villages, or countries. For instance, a search for tweets near Lewisham may return tweets from a completely different location, such as Hobart, Australia, which is over 17,000 km away.

To get more accurate results when scraping tweets by location with snscrape, it is recommended to use the geocode: tag with latitude and longitude coordinates, along with a specified radius, to narrow down the search area. This approach gives more reliable and precise results than a place name.
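Because the geocode: tag expects latitude first and longitude second, a small range check can catch some swapped coordinates before a search is run. The sketch below is an illustrative addition: the helper name is hypothetical, and the validation is not something snscrape or Twitter performs.

```python
def geocode_operator(lat, lon, radius_km):
    """Return a 'geocode:lat,long,radius' operator after basic range checks."""
    if not -90 <= lat <= 90:
        raise ValueError(f"latitude {lat} is outside [-90, 90]")
    if not -180 <= lon <= 180:
        raise ValueError(f"longitude {lon} is outside [-180, 180]")
    if radius_km <= 0:
        raise ValueError("radius must be positive")
    return f"geocode:{lat},{lon},{radius_km}km"
```

Using approximate Los Angeles coordinates, `geocode_operator(34.0522, -118.2437, 10)` builds the operator, while the swapped call `geocode_operator(-118.2437, 34.0522, 10)` raises `ValueError` because -118.2437 is not a valid latitude. A swap where both values fall within ±90 would not be caught.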

In conclusion, the snscrape Python module is a valuable tool for conducting specific and powerful searches on Twitter. By leveraging its capabilities, users can extract relevant information from tweets based on various criteria. Twitter, for its part, has made significant efforts to convert user-entered locations into real places, which makes searching by name or coordinates easy.

For research, analysis, or other purposes, snscrape empowers users to extract valuable insights from Twitter data. Tweets serve as a valuable source of information. When combined with the capabilities of snscrape, even individuals with limited experience in Data Science or subject knowledge can undertake exciting projects.

Happy scraping!

For more details, you can contact Actowiz Solutions anytime! Call us for all your mobile app scraping and web scraping services requirements.
