Puppeteer and Cheerio are two widely used Node.js libraries for extracting data from web pages programmatically. Developers often choose one of them when building a web scraper in Node.js.

To compare Puppeteer and Cheerio, we will demonstrate the process of building a web scraper using each library. This example will focus on extracting blog links from In Plain English, a renowned programming platform.

Let's start with Cheerio, whose jQuery-like syntax lets us parse and manipulate HTML. We fetch the webpage's HTML with an HTTP request library, then use Cheerio to extract the blog links by targeting specific elements and attributes.

Puppeteer, on the other hand, takes a more comprehensive approach. It launches a headless browser instance, which lets us automate interactions with web pages: we can navigate to a page, evaluate and manipulate the DOM, and extract the information we need. Here, we will use Puppeteer to navigate to the In Plain English website, locate the blog links with CSS selectors, and retrieve them.

By implementing the web scraper with Puppeteer and Cheerio, we can compare their functionalities, ease of use, and performance in extracting blog links from In Plain English. This comparison will provide insights into the strengths and trade-offs of each library for web scraping tasks.

Cheerio and Puppeteer are powerful libraries with distinct features that can be leveraged for web scraping purposes, offering unique advantages in their own right.

Cheerio is primarily a parser for HTML and XML documents. It implements a lightweight, efficient subset of core jQuery designed for use on the server. When scraping with Cheerio, you typically pair it with a Node.js HTTP client such as Axios, which downloads the page's HTML for Cheerio to parse.

Unlike Puppeteer, Cheerio does not render websites the way a browser does: it does not apply CSS, load external resources, or execute JavaScript. It also cannot interact with a website by clicking buttons, and it cannot see content that scripts add to the page after it loads. As a result, scraping single-page applications (SPAs) built with front-end frameworks like React can be challenging.

One of Cheerio's notable advantages is its gentle learning curve, especially for developers familiar with jQuery, whose simple and intuitive syntax it mirrors. Cheerio is also generally faster and lighter than Puppeteer, since it only parses HTML rather than running a full browser.

Puppeteer is a powerful browser automation tool that provides access to the entire browser engine, usually based on Chromium. Compared to Cheerio, it offers more versatility and functionality.

A key advantage of Puppeteer is that it executes JavaScript, which makes it suitable for scraping dynamic web pages, including single-page applications (SPAs). Puppeteer can also interact with websites by simulating user actions such as clicking buttons and filling in login forms.

However, Puppeteer has a steeper learning curve than Cheerio due to its extensive capabilities and the need to work with asynchronous code using promises/async-await.

Puppeteer is generally slower than Cheerio, as it involves launching a browser instance and executing actions within it.

To build a web scraper with Cheerio, you can create a folder named "scraper" for your code. Inside the "scraper" folder, initialize the "package.json" file by running the command "npm init -y" or "yarn init -y," depending on your package manager preference.

Once the folder and package.json file are set up, you can install the necessary packages. For a more detailed guide on web scraping with Cheerio and Axios, refer to our comprehensive Node.js web scraping guide.

To install Cheerio, run `npm install cheerio` (or `yarn add cheerio`) in the terminal.

To install Axios, a popular library for making HTTP requests in Node.js, run `npm install axios` (or `yarn add axios`).

We use Axios to make HTTP requests to the website we want to scrape. The response we get from the website is HTML, which we can then parse and extract the information we need using Cheerio.

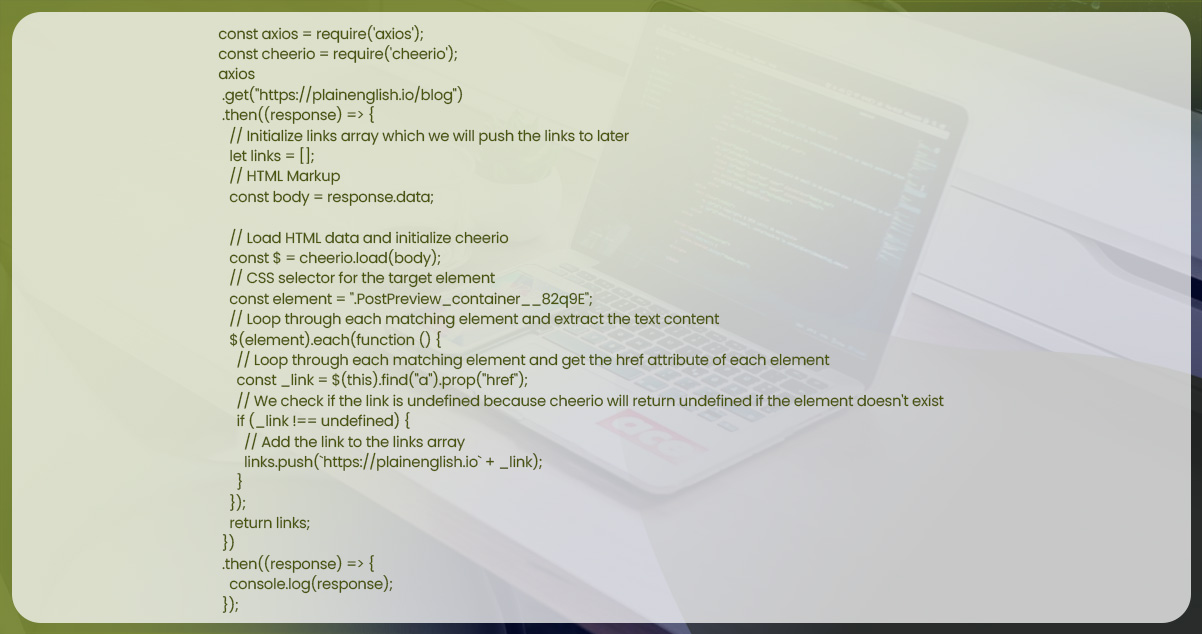

To begin web scraping with Cheerio and Axios, create a file called cheerio.js in the scraper folder. We'll build up its code one step at a time.

The code starts by requiring the Cheerio and Axios libraries.

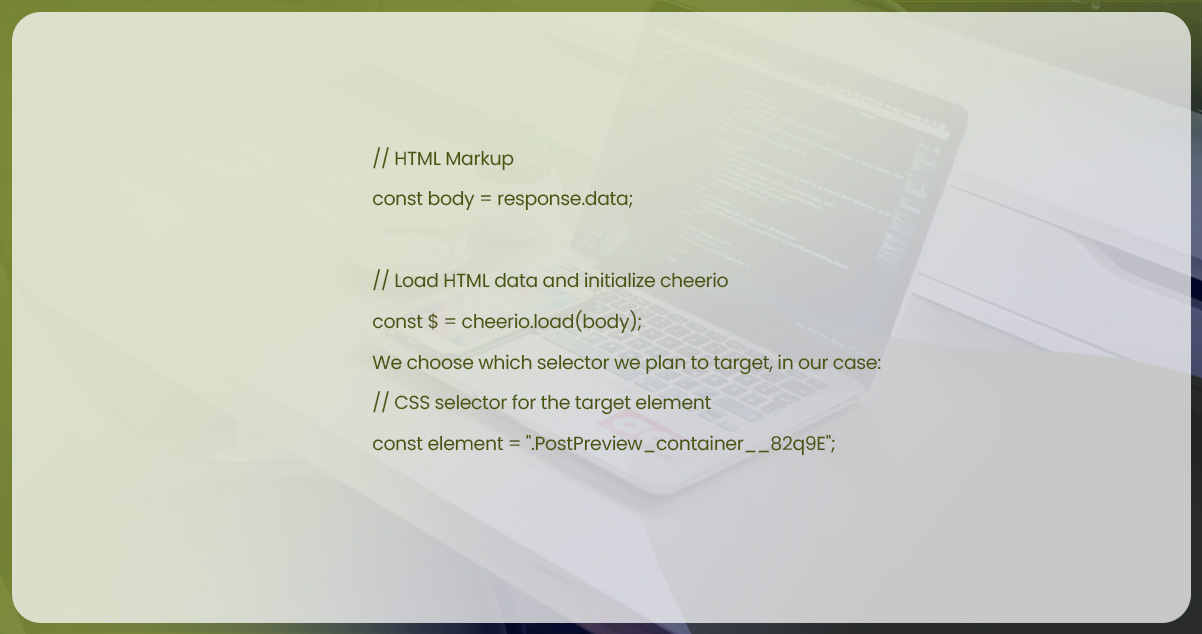

Next, we send a GET request to "https://plainenglish.io/blog" with Axios. Since axios.get() returns a promise, we chain then() onto it to handle the response. Inside that callback, we create an empty array called links to store the links we intend to scrape, and we pass response.data (the page's HTML) to cheerio.load() so we can query it with Cheerio.

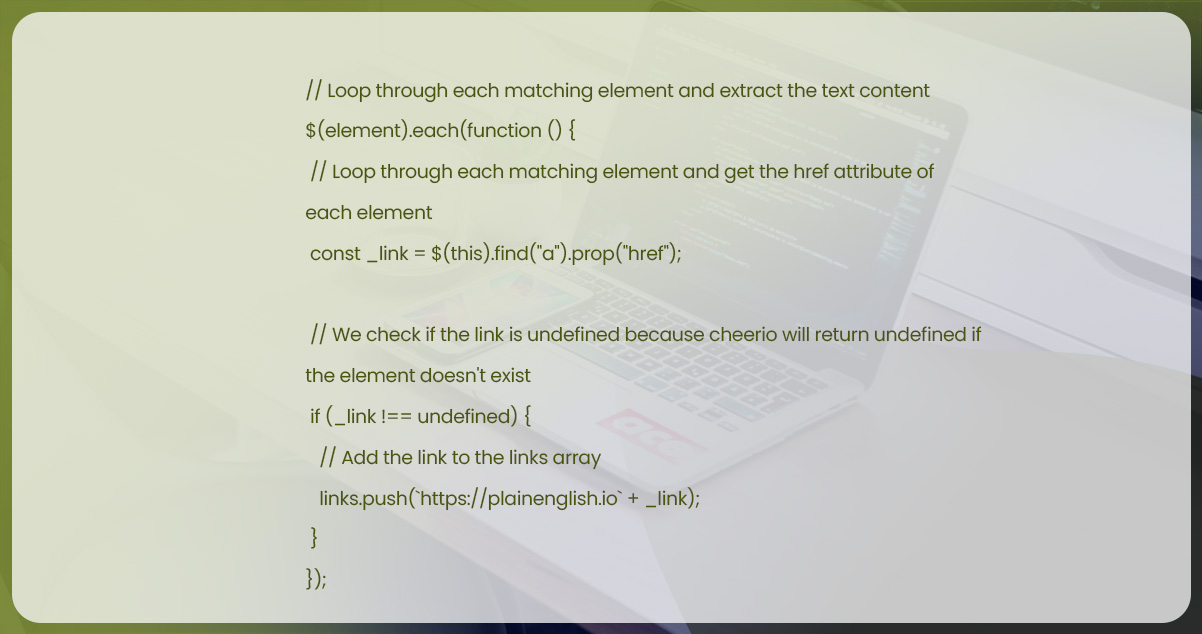

We then iterate over each matching element, locate the anchor (`<a>`) tag inside it, and read the value of its href attribute. Each value is added to the links array.

Finally, we return links from the callback, chain another then(), and print the array with console.log().

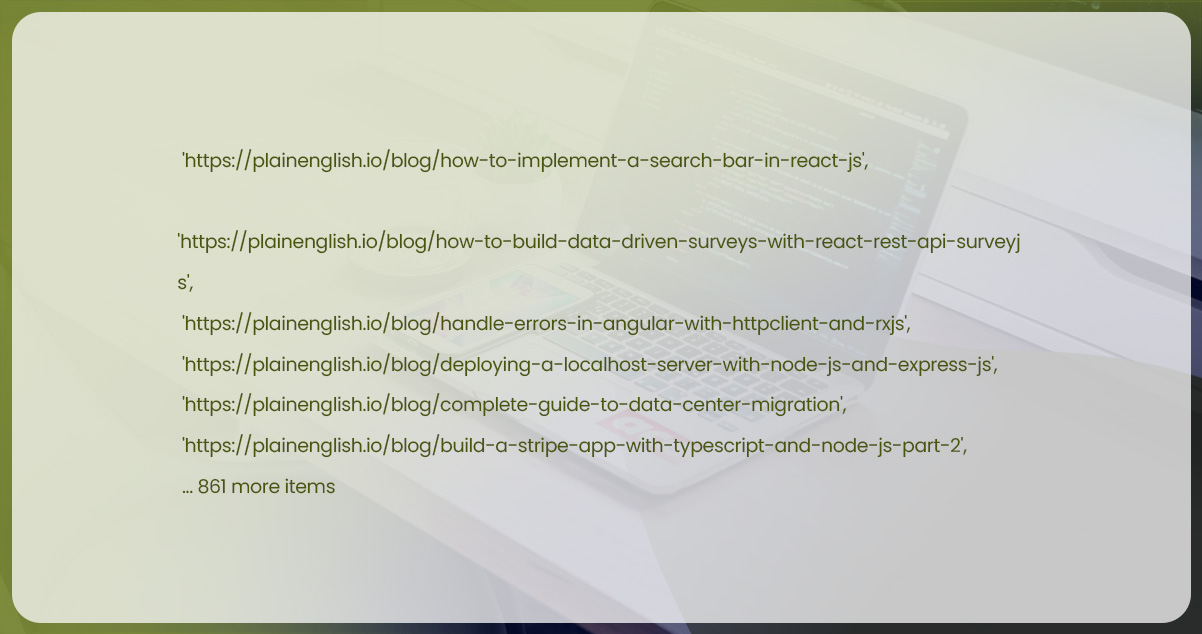

With the scraper in place, open a terminal in the scraper folder and run `node cheerio.js`. Node.js executes the file, and the URLs collected in the links array are printed to the console.

We have successfully scraped the In Plain English website! As a next step, we could save the extracted data to a file instead of printing it to the console. Cheerio and Axios keep web scraping in Node.js straightforward: with just a few lines of code, we can extract valuable data from websites and use it for many purposes.

For the Puppeteer version, go back to the scraper folder. If you haven't initialized the package.json file yet, do that first. Then install Puppeteer by running `npm install puppeteer` (or `yarn add puppeteer`) in the terminal. Installing the package also downloads a compatible browser for Puppeteer to control.

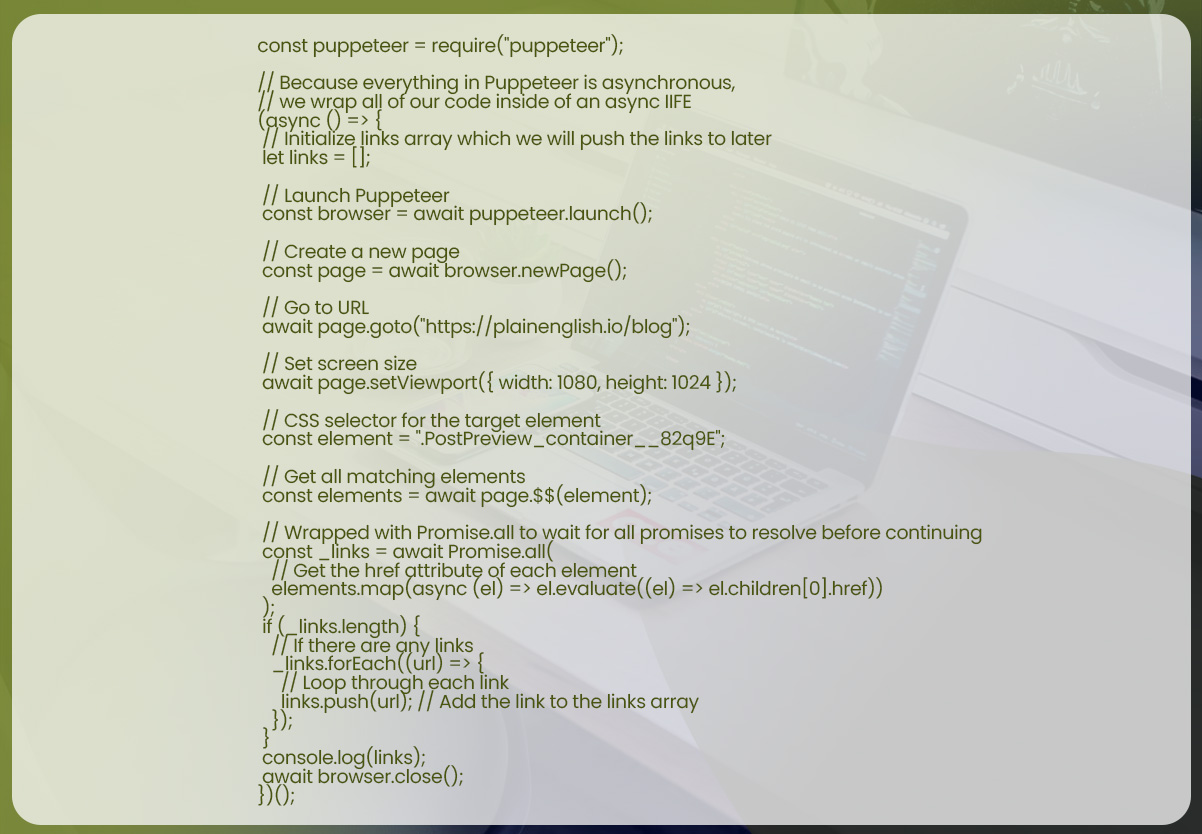

To start the Puppeteer scraper, create a file named puppeteer.js inside the scraper folder. As with Cheerio, we'll build up the code one step at a time.

The file starts by requiring the Puppeteer library.

Next, we create an immediately invoked function expression (IIFE) to handle Puppeteer's asynchronous API. Marking the function with the async keyword lets us use await inside it.

Inside our async IIFE, we initialize an empty array called links. This array will be used to store the links we extract from the blog we are scraping.

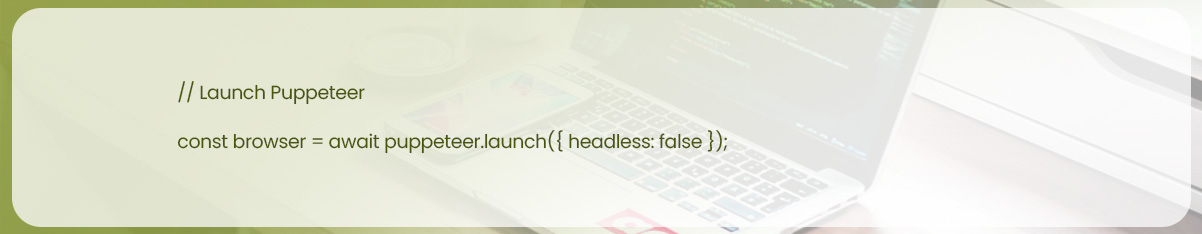

We then launch Puppeteer, open a new page, navigate to the blog URL, and set the page's viewport (that is, the screen size).

By default, Puppeteer runs in headless mode, without opening a visible browser window. The page still has a viewport, though, and setting its size makes Puppeteer render the site at a specific width and height, which matters for sites whose layout changes with screen size.

If you'd rather watch what Puppeteer is doing in real time, disable headless mode by passing `headless: false` to `puppeteer.launch()`.

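From here, we choose the CSS selector that matches the elements holding the blog links and pass it to `page.$$()`, which is roughly Puppeteer's equivalent of `querySelectorAll()`.

Note: `page.$$()` is not the same as `querySelectorAll()`, so don't expect access to the same things. It returns `ElementHandle` objects that point to elements inside the browser rather than the DOM elements themselves, so properties like href can't be read from them directly.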

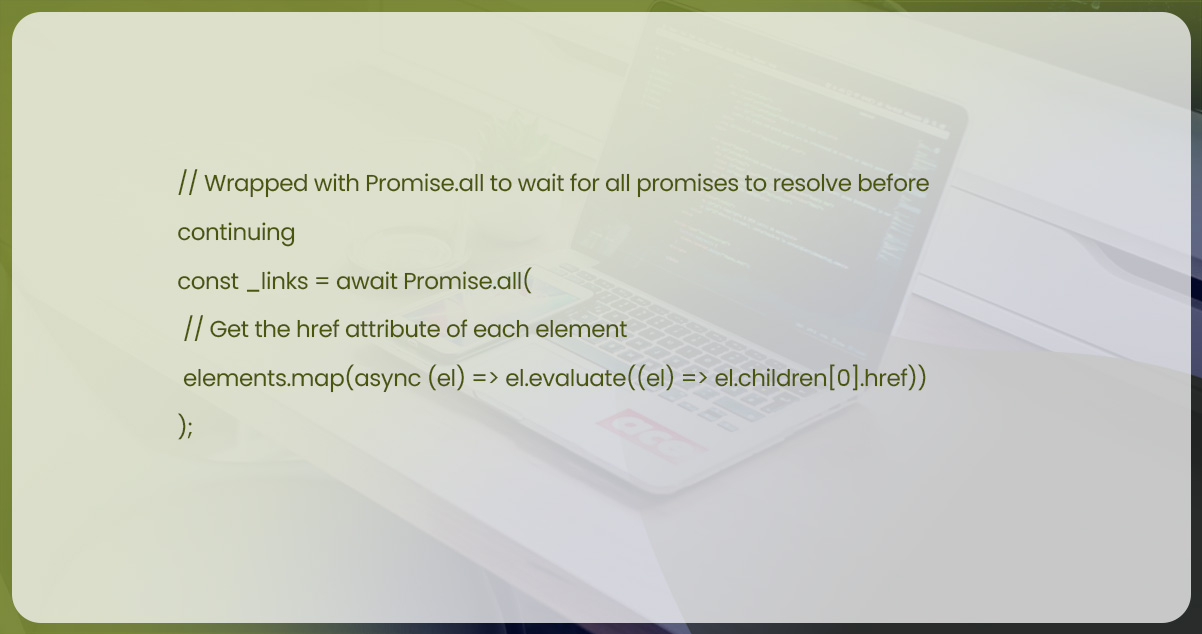

With our elements stored in the elements variable, we can use the map() method to extract the href property from each one. Because each lookup runs inside the browser, map() gives us an array of promises rather than plain strings.

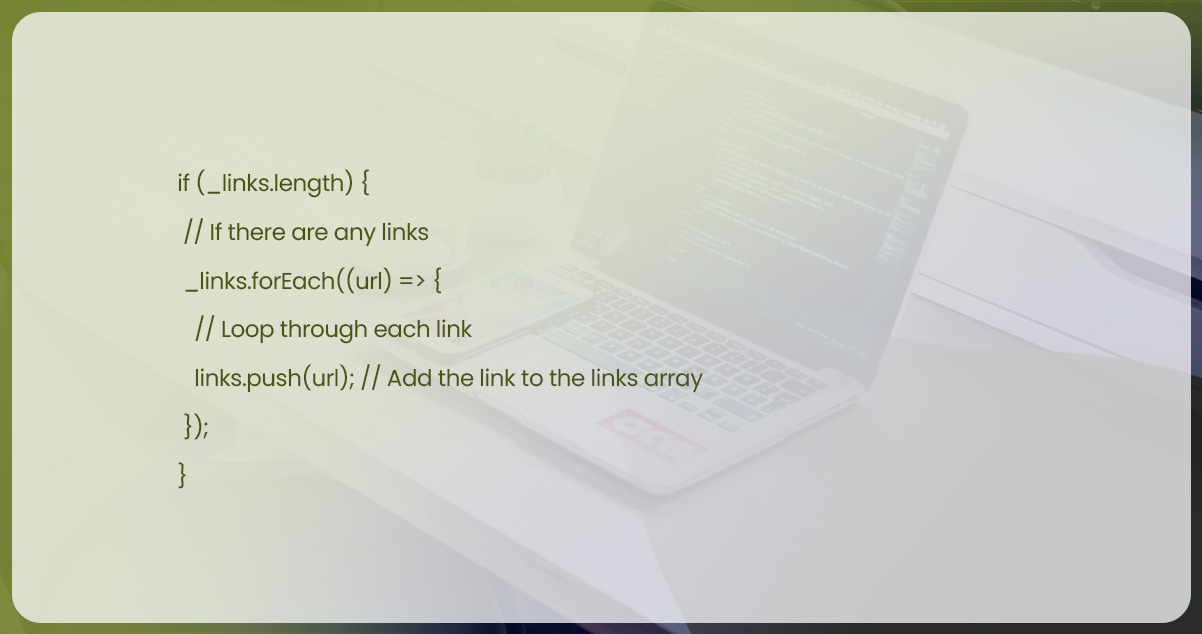

After that, we loop over the mapped results and push each value into the links array.

Finally, we log links with console.log() and close the browser.

Note: If you don't close the browser, it stays open and the script never exits, so the terminal hangs.

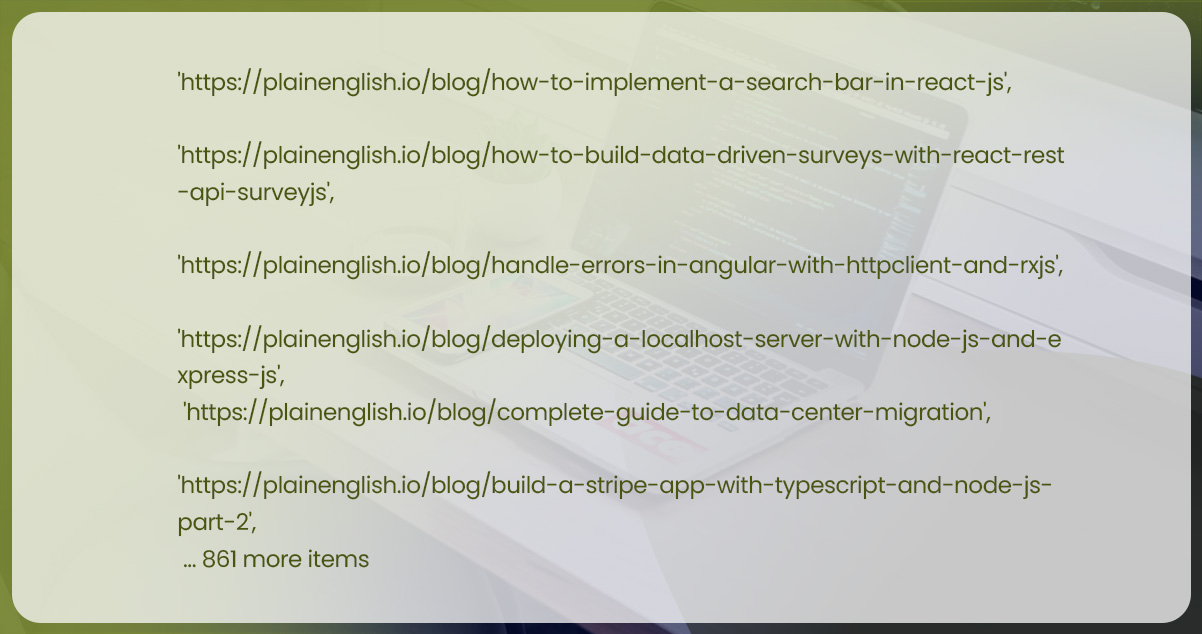

Run the file with `node puppeteer.js`, and the URLs from the links array are printed to the console.

Puppeteer is a powerful tool for web scraping and browser automation. Beyond extracting information from websites, its API can take screenshots, generate PDFs, and automate many other browser tasks. With it, we have successfully scraped the website!

When scraping major websites, it's advisable to route Puppeteer through a proxy to reduce the risk of being blocked by anti-scraping measures.

Please note that alternative web scraping tools, such as Selenium or the Web Scraper IDE, are available. Alternatively, if time is a constraint, you can explore ready-made datasets that eliminate the need for the entire web scraping process.

Always adhere to the website's terms of service and respect legal and ethical considerations when performing web scraping activities.

Cheerio is an excellent choice when scraping static pages that don't require interaction or event handling. It provides a straightforward solution for parsing and manipulating HTML content.

On the other hand, if the website relies on JavaScript for content injection or requires interaction, Puppeteer becomes essential. It lets you automate browser tasks, handle events, and scrape dynamic websites effectively.

It's important to note that the use case discussed here was relatively simple. Scraping more complex websites, such as YouTube, Twitter, or Facebook, can be more challenging and may require advanced techniques.

If you're looking for a quicker, more comprehensive solution than spending weeks piecing together your own scraper, consider an off-the-shelf option such as Actowiz Solutions' Web Scraper IDE. It offers pre-made scraping functions, built-in proxy infrastructure for unblocking, JavaScript browser scripting, debugging capabilities, and ready-to-use scraping templates for popular websites.

These tools can provide a more efficient and convenient way to scrape websites while offering additional features to streamline the process. However, reviewing the terms of service and legal considerations before engaging in any web scraping activities is essential.

For more information, contact Actowiz Solutions now! You can also call us for all your mobile app scraping or web scraping service requirements.
