In this guide, you will learn how to harness the power of Node.js to perform web scraping on the Apple App Store, extracting valuable product information and user reviews. Utilizing popular Node.js libraries such as axios, cheerio, and node-fetch, we will walk you through the step-by-step process of searching for specific apps, retrieving essential details like app name, developer, price, and ratings, and fetching user reviews with their respective ratings and comments. By the end of this tutorial, you'll be equipped with the knowledge to create a robust web scraper, enabling you to gather valuable insights from the vast pool of data in the Apple App Store.

What to Scrape:

Discover how to scrape Apple App Store product information and reviews using Node.js with a comprehensive code example in the online IDE. The example demonstrates step-by-step implementation, including installing essential packages, searching for specific apps, extracting crucial details like app name, developer, price, and ratings, and fetching user reviews along with their ratings and comments. By following this hands-on code, you'll gain practical experience building a powerful web scraper to access valuable insights from the vast Apple App Store data. Start exploring the code example now and unlock the potential of web scraping with Node.js!

Leveraging SerpApi's Apple Product Page Scraper and Apple App Store Reviews Scraper APIs offers numerous advantages for web scraping tasks. Using these APIs, you can effortlessly overcome common challenges while creating your custom parsers or crawlers. SerpApi handles CAPTCHA and IP blocks, eliminating the need to worry about bypassing them. With ready-to-use APIs, you avoid the hassle of building and maintaining parsers from scratch, saving valuable time and effort. Additionally, you no longer need to invest in proxies or CAPTCHA solvers. Most importantly, SerpApi enables high-speed data extraction in large volumes without the complexity of browser automation. Embrace the simplicity and efficiency of SerpApi APIs for seamless web scraping experiences.

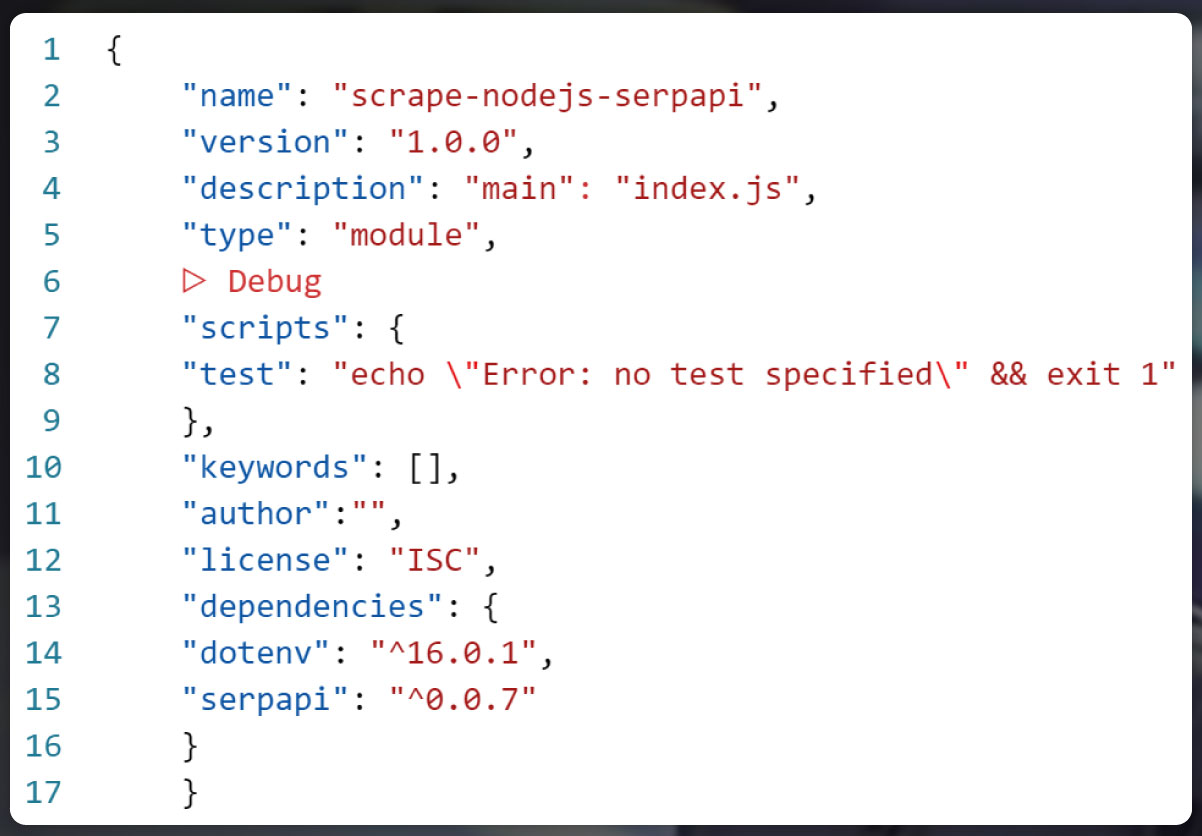

To begin, let's set up a Node.js* project and add the necessary npm packages, serpapi, and dotenv. Follow these steps:

$ npm init -y

And after that:

$ npm i serpapi dotenv

If you do not have Node.js installed, you can download it from nodejs.org and follow the installation documentation.

To proceed with the web scraping project, we'll use two essential npm packages:

SerpApi: This package enables the scraping and parsing of search engine outcomes with SerpApi. It provides access to search results from Bing, Baidu, Google, eBay, Yandex, Home Depot, Yahoo, and more.

dotenv: This package is a zero-dependency component which loads environment variable quantity from the .env file to process.env.

To utilize ES6 modules in Node.js, add a top-level "type" field with a value of "module" in your package.json file:

With the Node.js environment successfully set up for our project, let's now dive into the step-by-step explanation of the code. We'll walk you through the process of utilizing the SerpApi package for web scraping and the dotenv package for handling environment variables. Follow along to understand how to extract and parse search engine results efficiently

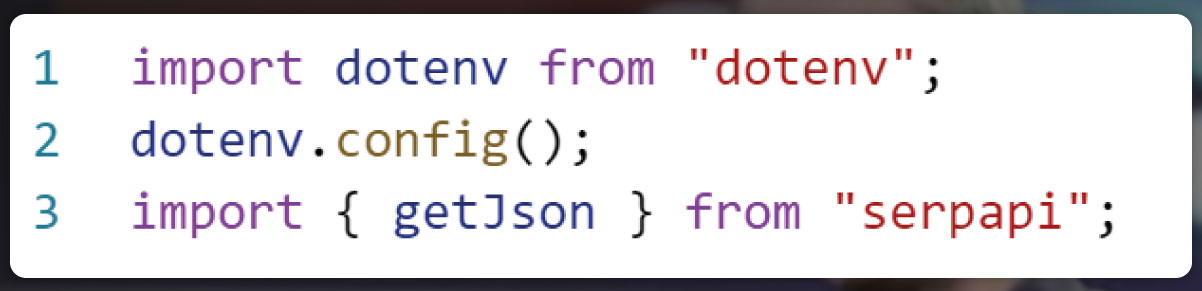

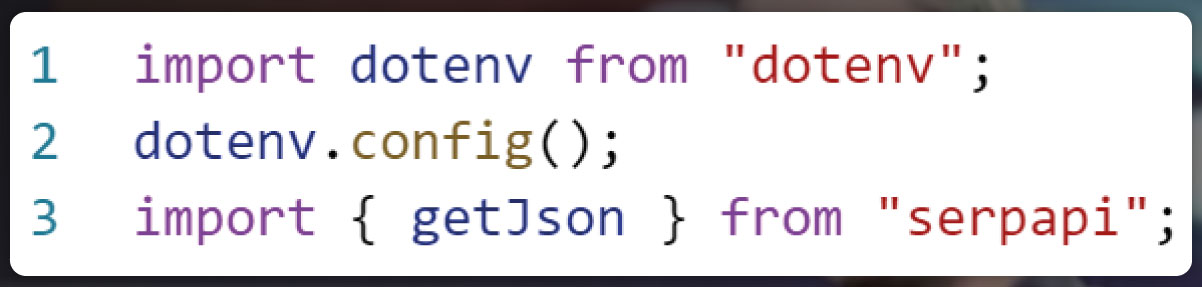

In the code explanation, our first step is to import the dotenv library and call the config() method to load environment variables from the .env file:

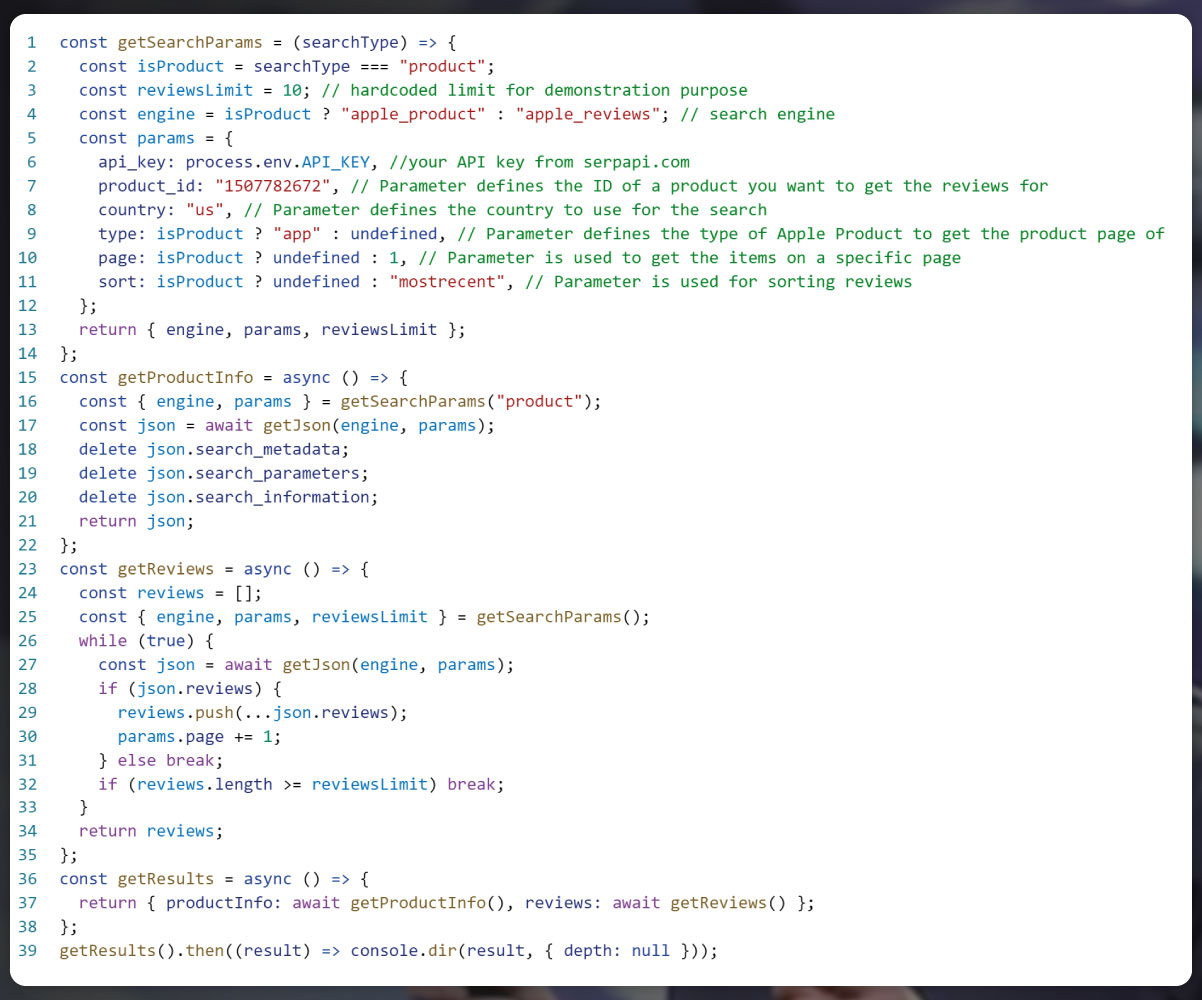

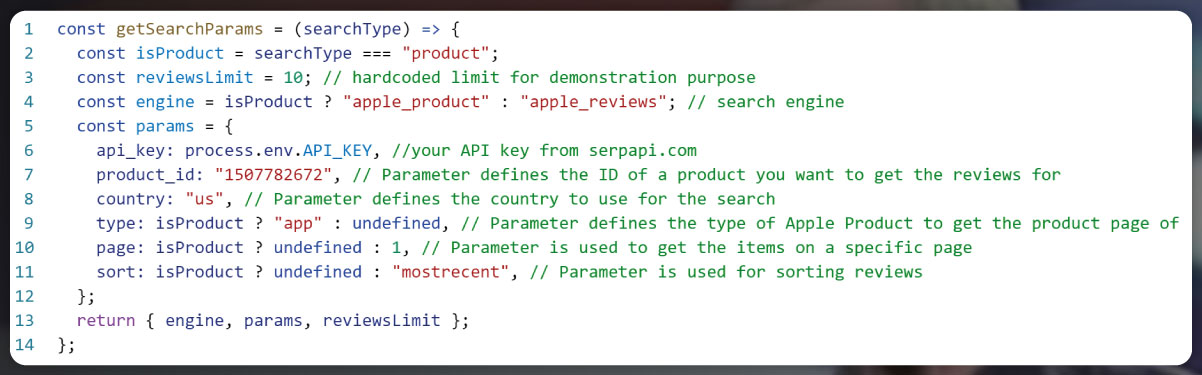

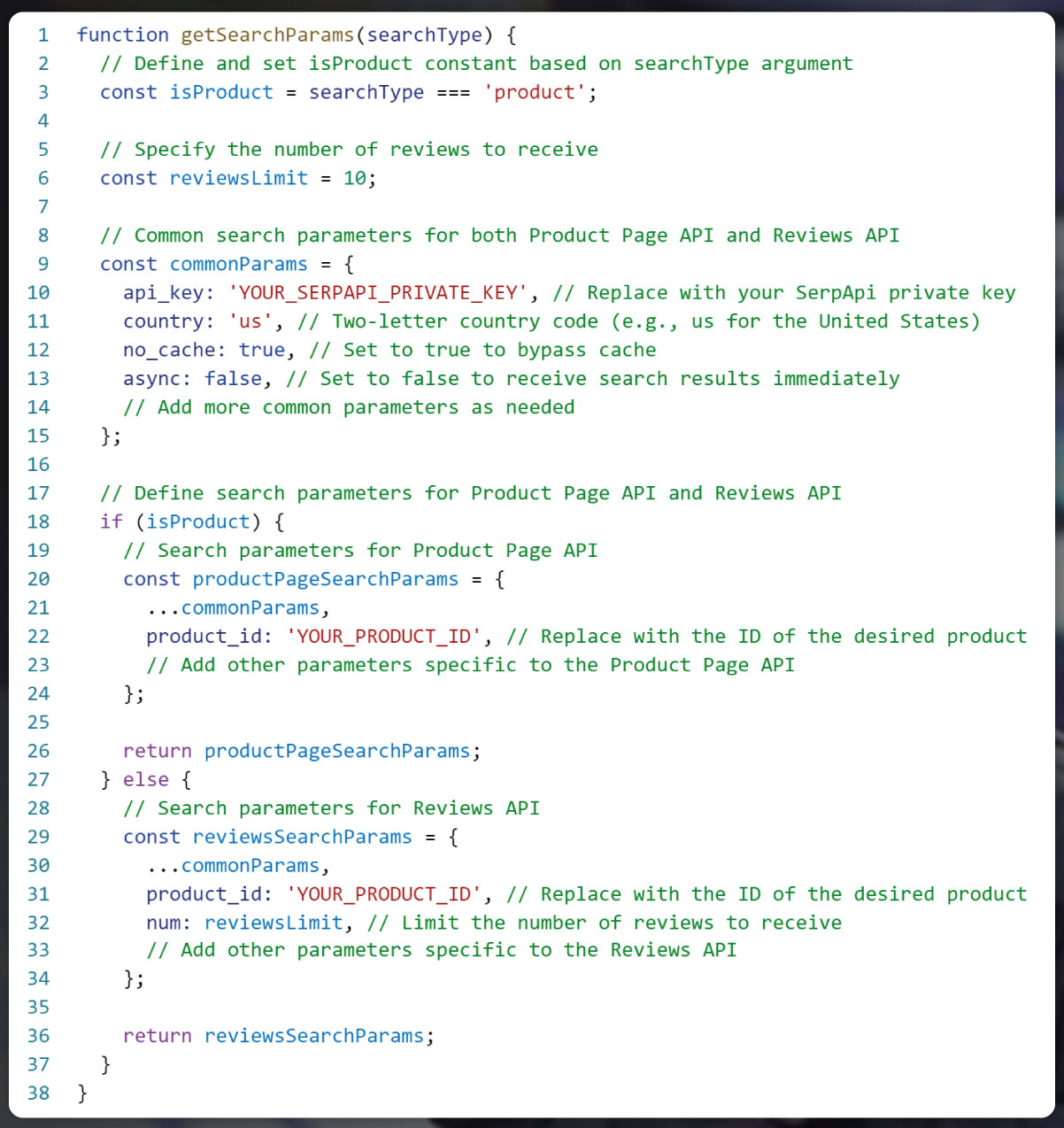

Let's create the getSearchParams function to generate the required search parameters for two different APIs. We'll use the isProduct constant, which depends on the searchType argument, to differentiate between the two APIs. Additionally, we'll define the reviewsLimit constant to specify how many reviews we want to receive.

With the getSearchParams function, we can now dynamically generate the appropriate search parameters based on the searchType. If the searchType is 'product', the function will return search parameters suitable for the Product Page API, and if it is anything else, it will return parameters for the Reviews API. This allows us to customize the API requests according to our requirements.

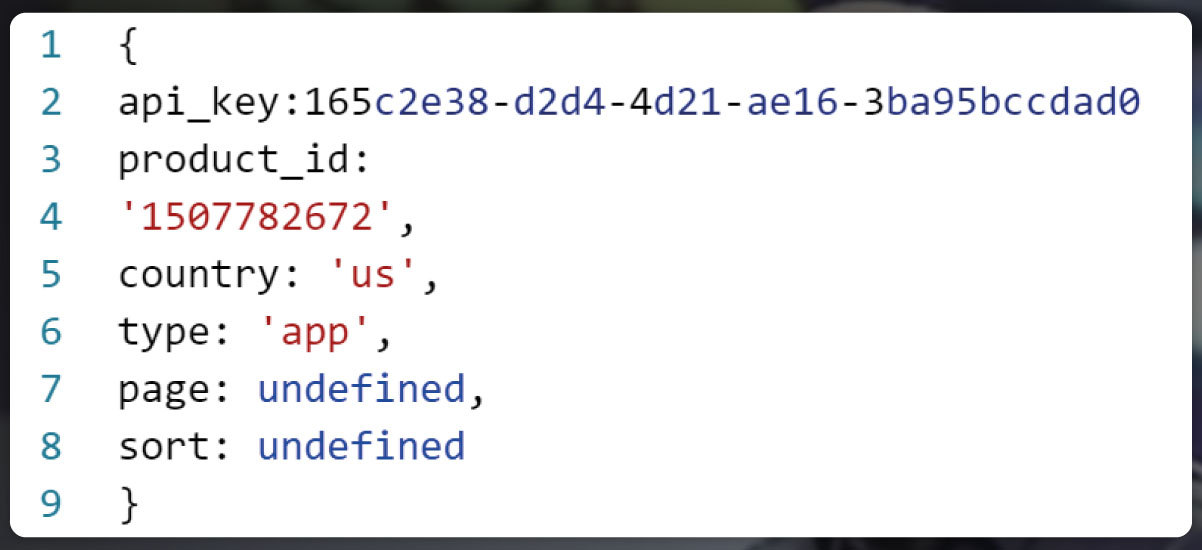

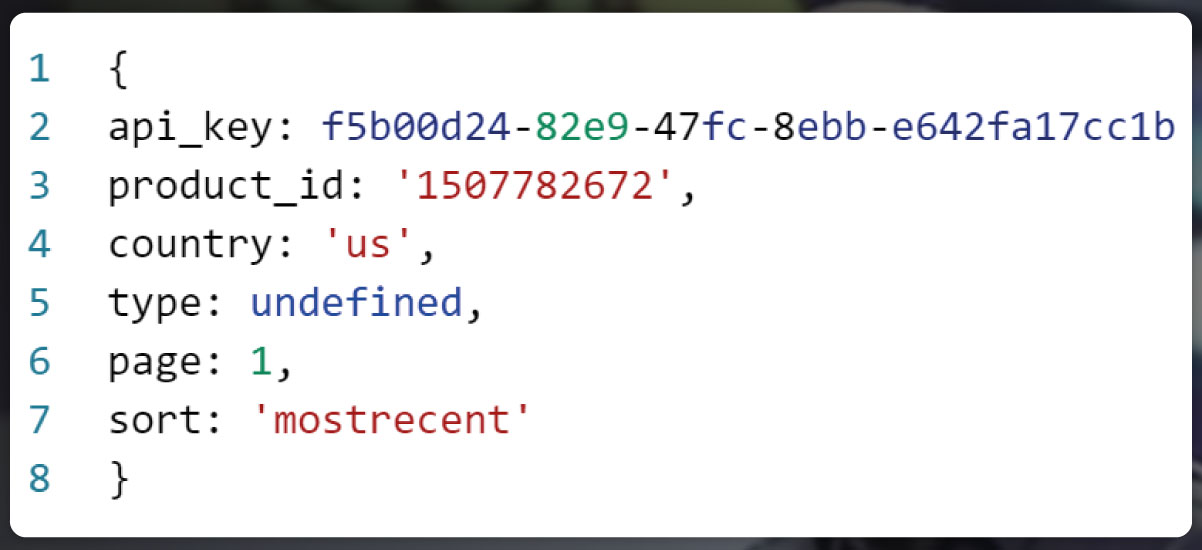

When we run the getSearchParams function, we receive different search parameters depending on the value of the searchType argument:

Product Page API:

Reviews API:

Certainly! Here are the common parameters that you can use in the getSearchParams function for the SerpApi requests:

Make sure to replace 'YOUR_SERPAPI_PRIVATE_KEY' with your actual SerpApi private key and 'YOUR_PRODUCT_ID' with the ID of the product you want to get reviews for. These parameters will allow you to customize your API requests accordingly, whether you are using the Product Page API or the Reviews API.

Product Page params:

Here's the updated getSearchParams function with the additional parameter type for the Product Page API, which defines the type of Apple product to retrieve the product page for (defaulting to "app"):

Reviews params:

Here's the updated getSearchParams function with the additional parameters page and sort for the Reviews API:

Please note that you need to replace 'YOUR_SERPAPI_PRIVATE_KEY' with your actual SerpApi private key, and 'YOUR_PRODUCT_ID' with the ID of the product you want to get reviews for.

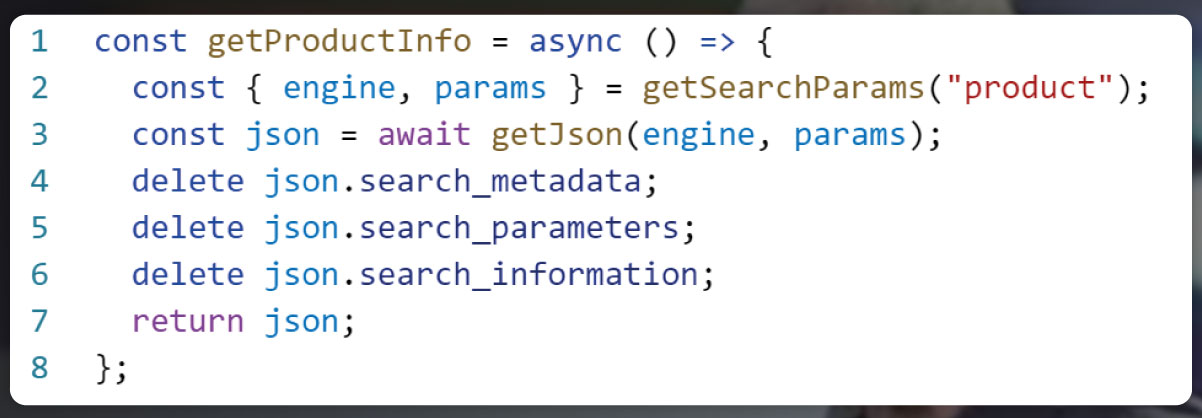

Now, let's create the getProductInfo function to retrieve all product information from the page:

In the getProductInfo function, we destructure engine and params from the getSearchParams function with the argument 'product'. We then get the JSON results from the API, remove any unnecessary keys, and return the product information accordingly.

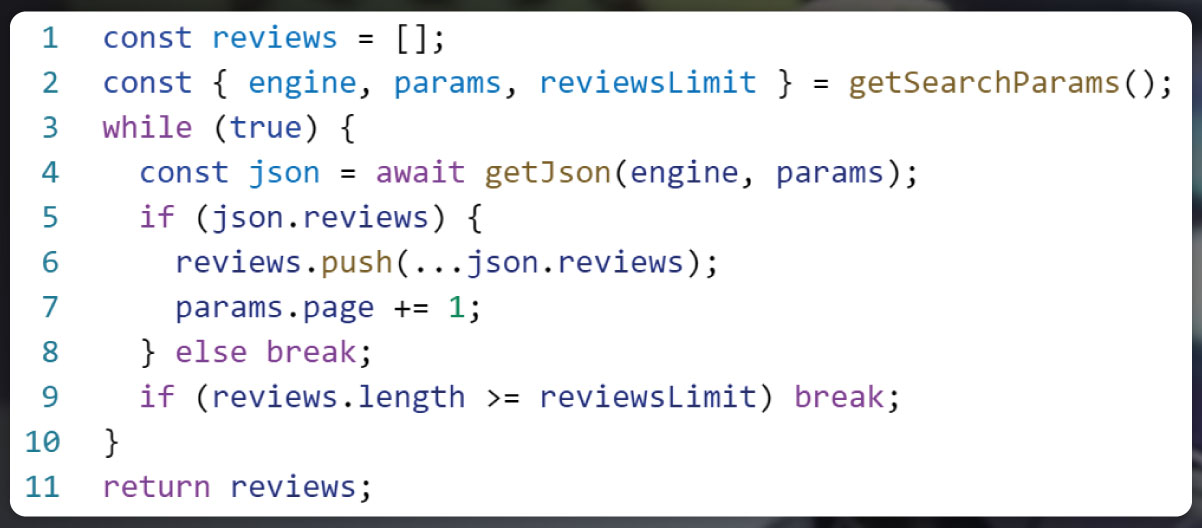

Let's create the getReviews function to retrieve review results from all pages (using pagination) and return them:

const getReviews = async () => {

...

};

In the getReviews function, we initialize an empty reviews array and destructure the engine, params, and reviewsLimit variables from the getSearchParams function without any arguments. Using a while loop, we fetch JSON results from each page and append the reviews to the reviews array

The loop continues until either there are no more results on the page or the number of received results reaches or exceeds the reviewsLimit.

When either of these conditions is met, the loop stops using break, and the function returns the array with the accumulated review results.

Please note that we have assumed that the reviewsLimit is included in the parameters returned by the getSearchParams function. If it's not there, you may need to adjust the logic accordingly.

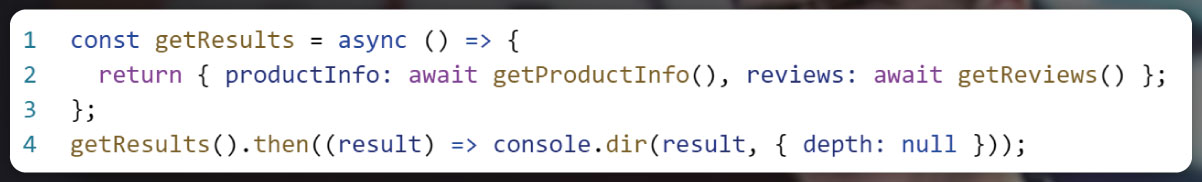

In the getResults function, we await the results from getProductInfo and getReviews functions. Then, we create an object named results that contains the product information and reviews.

Finally, we use console.dir to print the results object to the console. The { depth: null, colors: true } option allows us to display the entire object (no depth limit) and apply color highlighting to the output for better readability.

Now, when you run the getResults function, you should see the product information and reviews printed in the console.

Still if you need more information about this, contact Actowiz Solutions now! You can also reach us for all your mobile app scraping, instant data scraper, web scraping service requirements.

Our web scraping expertise is relied on by 3,000+ global enterprises including Zomato, Tata Consumer, Subway, and Expedia — helping them turn web data into growth.

Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

How IHG Hotels & Resorts data scraping enables real-time rate tracking, improves availability monitoring, and boosts revenue decisions.

How a top-10 UK grocery retailer used Actowiz grocery price scraping to achieve 300% promotional ROI and reduce competitive response time from 5 days to same-day.

Track UK Grocery Products Daily Using Automated Data Scraping across Morrisons, Asda, Tesco, Sainsbury’s, Iceland, Co-op, Waitrose, and Ocado for insights.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.