In the dynamic realm of real estate, staying abreast of precise and current property data is paramount for informed decision-making. StreetEasy, a prominent real estate marketplace, is a comprehensive resource offering many insights into properties, neighborhoods, and market dynamics. While the platform provides a wealth of information, users may desire additional data beyond the readily available features. This is where web scraping emerges as a potent solution, enabling data extraction from websites, StreetEasy included.

StreetEasy data scraping services empower users to delve deeper into the details of property listings, prices, and other relevant information that might not be easily accessible through conventional means. By leveraging this technique responsibly and ethically, real estate professionals, investors, and enthusiasts can gain a competitive edge in understanding market trends and making well-informed decisions. As with any powerful tool, it is crucial to approach web scraping with a sense of responsibility, ensuring compliance with the terms of service of the target website and being mindful of ethical considerations to extract valuable insights while respecting the integrity of online platforms.

Web scraping is a technique for extracting data from websites, allowing users to gather information beyond what is readily available through traditional means. The process typically begins with sending HTTP requests to the target website's servers, which respond by delivering the HTML content of the requested web pages.

Once the HTML content is obtained, the next step is parsing—breaking down the structure of the HTML document. This is where parsing libraries like Beautiful Soup come into play, facilitating the extraction of specific data points by navigating the HTML tree. Extracted information may include text, images, links, or any other content embedded in the HTML.
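To make the parsing step concrete, here is a minimal sketch that runs Beautiful Soup over a small HTML fragment. The markup, class name, and paths are invented for illustration and do not reflect StreetEasy's actual pages. The point is how text, link targets, and image URLs are read from the parsed tree.

```python
from bs4 import BeautifulSoup

# Invented HTML fragment standing in for a downloaded page (not StreetEasy's markup).
html = """
<div class="listing">
  <h2>Sample Listing Title</h2>
  <a href="/rental/123">View details</a>
  <img src="/photos/123.jpg" alt="Living room">
</div>
"""

# "html.parser" is Python's built-in parser, so no extra dependency is needed.
soup = BeautifulSoup(html, "html.parser")

title = soup.find("h2").get_text(strip=True)  # text content of the <h2>
link = soup.find("a")["href"]                 # link target from the href attribute
image = soup.find("img").get("src")           # image URL from the src attribute

print(title, link, image)
```

Note that find() returns the first matching element or None, so code working against real pages should check for None before reading attributes.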

While web scraping can be a powerful tool for data collection and analysis, it must be approached with a strong sense of responsibility and ethics. Respecting the policies of the website being scraped is essential for legal compliance and for maintaining the integrity of online platforms. Many websites explicitly outline their terms of service, and violating those terms could lead to legal consequences. Web scraping practitioners should therefore exercise caution, transparency, and adherence to ethical standards so that this valuable data-gathering technique remains sustainable and respectful.

When embarking on a web scraping project, selecting an appropriate programming language is a critical decision that significantly influences the ease and efficiency of the process. Python stands out as one of the most popular and versatile choices for web scraping, and its widespread adoption in the data science and web development communities makes it a go-to language for many developers.

Python's popularity in web scraping is attributed to its rich ecosystem of libraries that streamline the entire scraping workflow. Beautiful Soup, a Python library, excels at parsing HTML and XML documents, making it straightforward to extract data from web pages. Its intuitive syntax lets developers navigate the document structure and quickly identify and extract specific elements.

In addition to Beautiful Soup, Python offers another powerful tool for web scraping: Scrapy, a robust and extensible framework designed specifically for scraping large-scale websites.

The combination of Python, Beautiful Soup, and Scrapy creates a formidable toolkit for web scraping, enabling developers to execute scraping tasks with precision and efficiency. The language's readability, extensive documentation, and a supportive community further contribute to its status as the language of choice for those seeking to harness the power of web scraping in a reliable and accessible manner.

Before diving into the exciting world of web scraping, it's essential to equip your programming environment with the necessary tools. Python's package manager, pip, is a convenient tool for installing libraries that streamline the scraping process. For scraping property data from StreetEasy, two indispensable libraries are Requests and Beautiful Soup.

To begin, open your terminal or command prompt and enter the following command:

```shell
pip install requests beautifulsoup4
```

The first library, requests, is crucial for sending HTTP requests to StreetEasy's servers and fetching the HTML content of the web pages you intend to scrape. It simplifies interacting with web servers, handling cookies, and managing sessions.

The second library, Beautiful Soup (often imported as bs4), plays a pivotal role in parsing the HTML retrieved from StreetEasy. It transforms the raw HTML into a navigable Python object, making it easy to extract specific elements like property prices, addresses, and descriptions from the HTML structure.

Once these libraries are installed, you can begin scraping property data from StreetEasy. Check the official documentation for requests and Beautiful Soup to make full use of their features and streamline your web scraping workflow. With these tools in your arsenal, you're ready to explore the real estate data available on StreetEasy.

Scraping property data from StreetEasy involves a systematic approach, starting with inspecting the website's structure to identify the HTML elements housing the desired information. Follow these steps to initiate the scraping process:

Open StreetEasy's webpage in your web browser and right-click on the element you want to extract information from. Select "Inspect" to open the browser's developer tools. This allows you to examine the HTML structure of the page. Identify key HTML elements associated with the data you wish to scrape, such as property prices, addresses, and descriptions.

Navigate through the HTML structure using the developer tools to pinpoint the specific tags, classes, or IDs that contain the data of interest. For example, property prices might be contained within `<span>` tags with a class like 'price', while addresses could be found within `<div>` tags with a class like 'address'.

Once you've identified the relevant HTML elements, construct CSS or XPath selectors that precisely target those elements. These selectors will serve as your guide to programmatically locate and extract the desired data during the scraping process.
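A sketch of selector-based extraction, assuming the placeholder class names 'listing', 'price', and 'address' (hypothetical, in the spirit of the examples above, not StreetEasy's real class names). Beautiful Soup accepts CSS selectors through select() and select_one(). It does not evaluate XPath, so XPath selectors would need a different library such as lxml.

```python
from bs4 import BeautifulSoup

# Invented markup; "listing", "price", and "address" are placeholder class names.
html = """
<ul>
  <li class="listing"><span class="price">$3,200</span><div class="address">1 Example St</div></li>
  <li class="listing"><span class="price">$4,750</span><div class="address">2 Sample Ave</div></li>
</ul>
"""

soup = BeautifulSoup(html, "html.parser")

# "tag.class" CSS selectors match elements by tag name and class.
# Each listing card is selected first, then searched for its own price and address.
rows = [
    (
        card.select_one("span.price").get_text(strip=True),
        card.select_one("div.address").get_text(strip=True),
    )
    for card in soup.select("li.listing")
]

print(rows)
```

Scoping each lookup to its listing card keeps a price paired with its own address, rather than matching two independent lists by position.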

By thoroughly inspecting the page and understanding its structure, you gain valuable insights into navigating and extracting information. This foundational step ensures that your web scraper is tailored to the specific layout of StreetEasy's pages, allowing for accurate and efficient extraction of property data and enhancing the effectiveness of your scraping endeavor.

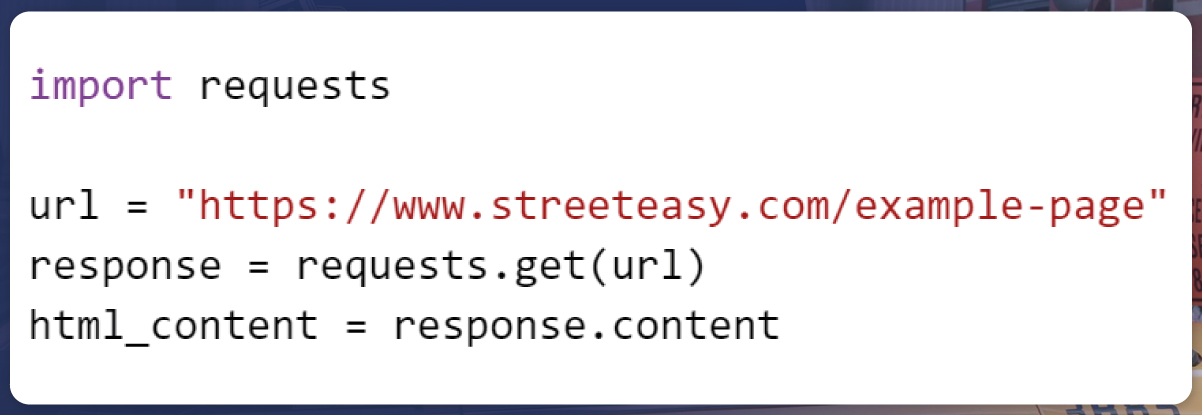

With the insights gained from inspecting StreetEasy's page structure, the next step is to use the requests library to send HTTP requests and retrieve the HTML content of the pages targeted for scraping. In Python, the requests.get() function sends a GET request to a specified URL, and the HTML content of the response (available as response.text) can be stored in a variable such as html_content. This content is then parsed in subsequent steps to extract the desired property data from StreetEasy's pages.
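A minimal sketch of the fetch step as a reusable helper. The SEARCH_URL value is a hypothetical placeholder rather than a documented StreetEasy endpoint, and the 10-second timeout is an arbitrary choice. Without a timeout, requests can wait indefinitely on an unresponsive server.

```python
from typing import Optional

import requests

# Hypothetical placeholder URL; confirm StreetEasy's real URL structure and terms first.
SEARCH_URL = "https://streeteasy.com/for-rent/nyc"


def fetch_html(url: str, session: Optional[requests.Session] = None, timeout: float = 10.0) -> str:
    """Send a GET request to url and return the response body as text."""
    client = session if session is not None else requests.Session()
    response = client.get(url, timeout=timeout)
    # Raise requests.HTTPError on 4xx/5xx status codes instead of parsing an error page.
    response.raise_for_status()
    return response.text


# Usage (performs a live network request):
# html_content = fetch_html(SEARCH_URL)
```

Accepting an optional session lets several calls reuse one connection pool and one set of headers.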

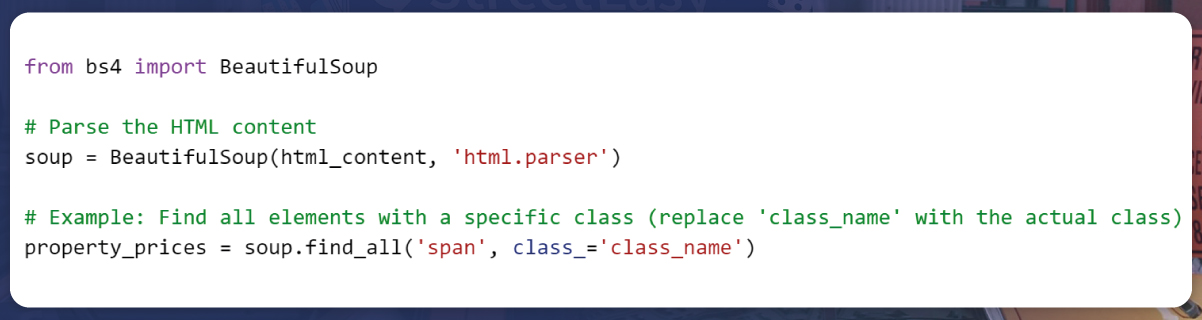

After obtaining the HTML content from StreetEasy using the requests library, the next step is to utilize Beautiful Soup to parse the HTML and navigate through the document structure. Beautiful Soup makes it easier to extract relevant information from the HTML by providing methods to search, filter, and navigate the parsed HTML tree.

For example, soup = BeautifulSoup(html_content, 'html.parser') parses the fetched HTML, and soup.find_all('span', class_='class_name') then locates all HTML elements with a specific tag (span) and class (class_name). Replace 'class_name' with the actual class name associated with the property prices on the StreetEasy page.
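The find_all() call can be tried on a small stand-in for html_content. The 'class_name' value is the same placeholder used in the text, and the HTML is invented for illustration.

```python
from bs4 import BeautifulSoup

# Invented stand-in for the HTML fetched from StreetEasy.
html_content = """
<span class="class_name">$2,950</span>
<span class="other">Not a price</span>
<span class="class_name">$5,100</span>
"""

soup = BeautifulSoup(html_content, "html.parser")

# class_ has a trailing underscore because "class" is a reserved word in Python.
prices = soup.find_all("span", class_="class_name")
print(len(prices))  # 2
```

find_all() returns a list-like ResultSet that is empty (not None) when nothing matches, so it is safe to loop over directly.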

Once the HTML is parsed, you can navigate through the document structure and extract the desired property data using Beautiful Soup's methods.

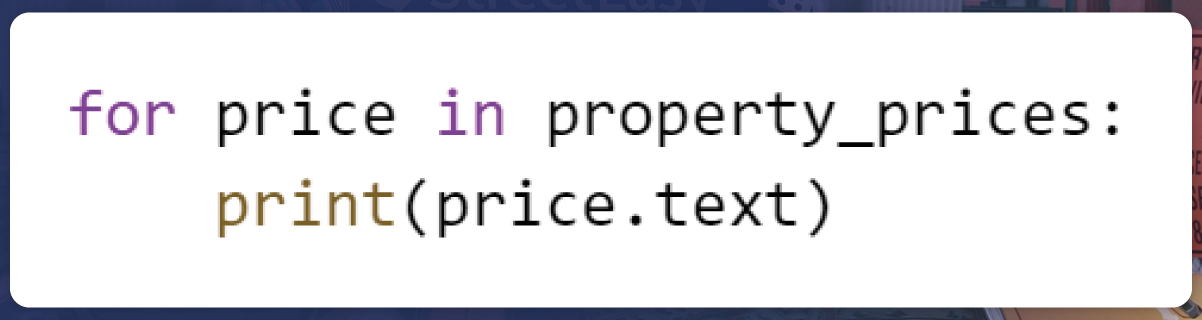

Having located the relevant HTML elements using Beautiful Soup, the next step is to extract the desired information from them. For property prices, you can iterate through the list returned by find_all() and read price.text for each element, which retrieves that element's text content. Depending on your requirements, you can print these values, store them in variables or a data structure, or perform additional processing steps.

This iterative process allows you to capture and display the property prices obtained from StreetEasy's HTML structure, providing a glimpse into how data extraction is achieved using Beautiful Soup in the context of web scraping.
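Extending the loop, the sketch below stores each price as a number instead of printing the raw text. The parse_price helper is hypothetical and assumes prices are displayed as whole dollars with optional commas and trailing text such as '/mo'. Any cents would be dropped, and text without digits becomes None.

```python
import re
from typing import Optional

from bs4 import BeautifulSoup

# Invented sample markup; "price" is a placeholder class name.
html_content = (
    '<span class="price">$3,450</span>'
    '<span class="price">$12,000</span>'
    '<span class="price">Contact for price</span>'
)
soup = BeautifulSoup(html_content, "html.parser")
prices = soup.find_all("span", class_="price")


def parse_price(text: str) -> Optional[int]:
    """Convert a display string like '$3,450' to 3450; return None if it has no digits."""
    match = re.search(r"\d[\d,]*", text)
    return int(match.group().replace(",", "")) if match else None


parsed = []
for price in prices:
    raw = price.text.strip()  # text content of the element, e.g. '$3,450'
    parsed.append(parse_price(raw))

print(parsed)  # [3450, 12000, None]
```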

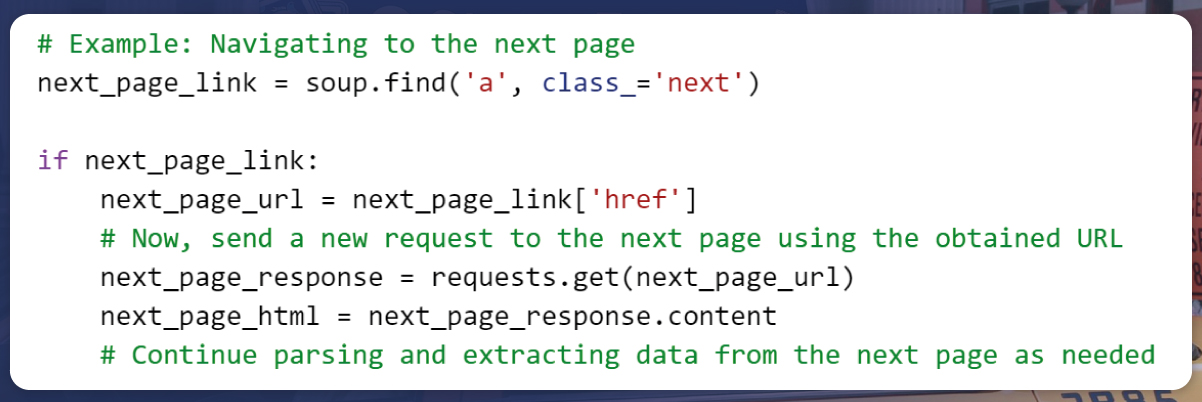

Handling pagination is crucial when scraping data from websites with multiple pages. If StreetEasy's results span several pages, you need logic that moves through them systematically. One approach is to call soup.find('a', class_='next') to locate the anchor (a) element with a class such as 'next', which is typically associated with the link to the next page. If such a link exists, extract its href attribute and use that URL to send a new request for the next page, repeating until no next link is found.
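A sketch of a complete pagination loop built on the soup.find('a', class_='next') lookup. The 'next' class is a placeholder, and max_pages and delay are hypothetical safety limits. urljoin() is used because href values are frequently relative (for example '?page=2') and must be resolved against the current page URL before they can be requested.

```python
import time
from typing import Iterator, Optional
from urllib.parse import urljoin

import requests
from bs4 import BeautifulSoup


def next_page_url(soup: BeautifulSoup, current_url: str) -> Optional[str]:
    """Return the absolute URL of the 'next' link, or None when there is no next page."""
    link = soup.find("a", class_="next")  # "next" is a placeholder class name
    if link is None or not link.get("href"):
        return None
    # Resolve relative hrefs such as '?page=2' against the page they came from.
    return urljoin(current_url, link["href"])


def iterate_pages(
    start_url: str,
    session: requests.Session,
    max_pages: int = 5,
    delay: float = 2.0,
) -> Iterator[BeautifulSoup]:
    """Yield a parsed page for each result page, following 'next' links."""
    url: Optional[str] = start_url
    for _ in range(max_pages):  # hard cap so a wrong selector cannot loop forever
        response = session.get(url, timeout=10)
        response.raise_for_status()
        soup = BeautifulSoup(response.text, "html.parser")
        yield soup
        url = next_page_url(soup, url)
        if url is None:
            break
        time.sleep(delay)  # pause between pages to limit load on the server
```

In use, each yielded page can be passed to the same find_all() extraction shown for a single page, for example by looping over iterate_pages(start_url, requests.Session()).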

By incorporating pagination handling into your web scraping logic, you can ensure a comprehensive extraction of property data from all relevant pages on StreetEasy.

Ethical considerations play a vital role in the practice of StreetEasy data scraping services. As you harness the power of this technique to extract valuable insights from StreetEasy or any other website, it's crucial to be aware of the ethical implications and legal boundaries. Here are some key points to keep in mind:

Before engaging in StreetEasy data scraping services, carefully review and adhere to the terms of service of the target website, in this case, StreetEasy. Websites often explicitly outline their policies regarding data usage, scraping, and user behavior. Violating these terms can lead to legal consequences.

Avoid aggressive scraping practices that could strain the servers or negatively impact the user experience on StreetEasy. Be considerate of the website's resources and implement appropriate throttling mechanisms to prevent overwhelming their servers with requests.
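One simple throttling mechanism is to enforce a minimum interval between requests. The Throttle class below is a hypothetical helper, and the three-second interval in the usage comment is an illustrative value, not a limit published by StreetEasy.

```python
import time
from typing import Optional


class Throttle:
    """Enforce a minimum interval, in seconds, between consecutive requests (hypothetical helper)."""

    def __init__(self, min_interval: float) -> None:
        self.min_interval = min_interval
        self._last: Optional[float] = None  # monotonic timestamp of the previous request

    def wait(self) -> None:
        """Sleep just long enough that min_interval has passed since the last call."""
        now = time.monotonic()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                time.sleep(remaining)
        self._last = time.monotonic()


# Usage (illustrative interval; call wait() immediately before each request):
# throttle = Throttle(3.0)
# for url in urls:
#     throttle.wait()
#     session.get(url, timeout=10)
```

time.monotonic() is used because, unlike wall-clock time, it cannot jump backwards when the system clock is adjusted.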

Moderate the frequency and volume of your scraping activities. Excessive and frequent requests can be interpreted as a denial-of-service attack and may result in IP bans or other restrictive measures.

Check for the presence of a robots.txt file on StreetEasy's domain. This file provides guidelines to web crawlers and scrapers about which areas of the site are off-limits. Adhering to the directives in this file demonstrates a commitment to responsible scraping.
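Python's standard library can evaluate robots.txt rules through urllib.robotparser. The sketch parses an invented rule set so it runs offline, and the comment shows how the live file would be loaded instead. The user-agent name 'ExampleResearchBot' and the sample rules are hypothetical and are not StreetEasy's actual directives.

```python
from urllib.robotparser import RobotFileParser

# Invented robots.txt content for illustration; StreetEasy's real file may differ.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

parser = RobotFileParser()
parser.parse(SAMPLE_ROBOTS_TXT.splitlines())

# To check the live file instead (network request):
# parser.set_url("https://streeteasy.com/robots.txt")
# parser.read()

AGENT = "ExampleResearchBot"  # hypothetical user-agent name

allowed = parser.can_fetch(AGENT, "https://example.com/for-rent/nyc")
blocked = parser.can_fetch(AGENT, "https://example.com/private/page")
delay = parser.crawl_delay(AGENT)  # None when the file sets no Crawl-delay

print(allowed, blocked, delay)  # True False 5
```

A Crawl-delay value, when present, is a natural input for the minimum interval between requests.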

Include a proper User-Agent header in your HTTP requests to identify your scraper and its purpose. This helps websites differentiate between legitimate scrapers and potentially malicious automated activities.
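Setting the header once on a requests.Session sends it with every request made through that session. The identifier string below is a hypothetical example. A descriptive name plus a contact address lets site operators see who is crawling and how to reach them.

```python
import requests

# Hypothetical identifier: substitute your project's name and a real contact address.
USER_AGENT = "ExampleResearchBot/1.0 (+mailto:you@example.com)"


def make_session(user_agent: str = USER_AGENT) -> requests.Session:
    """Create a session whose requests all carry the given User-Agent header."""
    session = requests.Session()
    # Session-level headers are merged into every request the session sends.
    session.headers.update({"User-Agent": user_agent})
    return session


session = make_session()
# Preparing a request (no network traffic) shows the header that would be sent.
prepared = session.prepare_request(requests.Request("GET", "https://example.com/"))
print(prepared.headers["User-Agent"])
```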

Be conscious of data privacy laws and regulations, especially if the scraped data includes personally identifiable information. Avoid collecting or using sensitive information without proper consent.

By approaching StreetEasy data scraping services with a sense of responsibility, transparency, and adherence to ethical standards, you not only safeguard yourself from legal repercussions but also contribute to maintaining a healthy online ecosystem. Responsible scraping ensures that the practice remains a valuable and sustainable tool for gathering insights without negatively impacting the targeted websites or their users.

Leveraging web scraping to scrape property data from StreetEasy presents a valuable opportunity for real estate professionals, investors, and enthusiasts seeking a comprehensive understanding of the market. The ability to gain insights beyond the platform's standard offerings can provide a competitive edge in decision-making processes.

However, it is imperative to approach web scraping responsibly. Adhering to ethical standards and respecting StreetEasy's terms of service ensures a sustainable and respectful use of this powerful technique. Actowiz Solutions understands the importance of ethical StreetEasy data scraping services and is committed to helping clients navigate the complexities of data extraction within legal and ethical boundaries.

To harness the full potential of web scraping for your real estate endeavors, partner with Actowiz Solutions. Our expertise in ethical and compliant web scraping, coupled with a commitment to client success, positions us as your trusted ally in unlocking valuable insights from StreetEasy and enhancing your strategic decision-making in the dynamic real estate market. Contact Actowiz Solutions today to explore how we can empower your data-driven initiatives while maintaining the highest ethical standards. You can also reach us for all your mobile app scraping, instant data scraper and web scraping service requirements.
