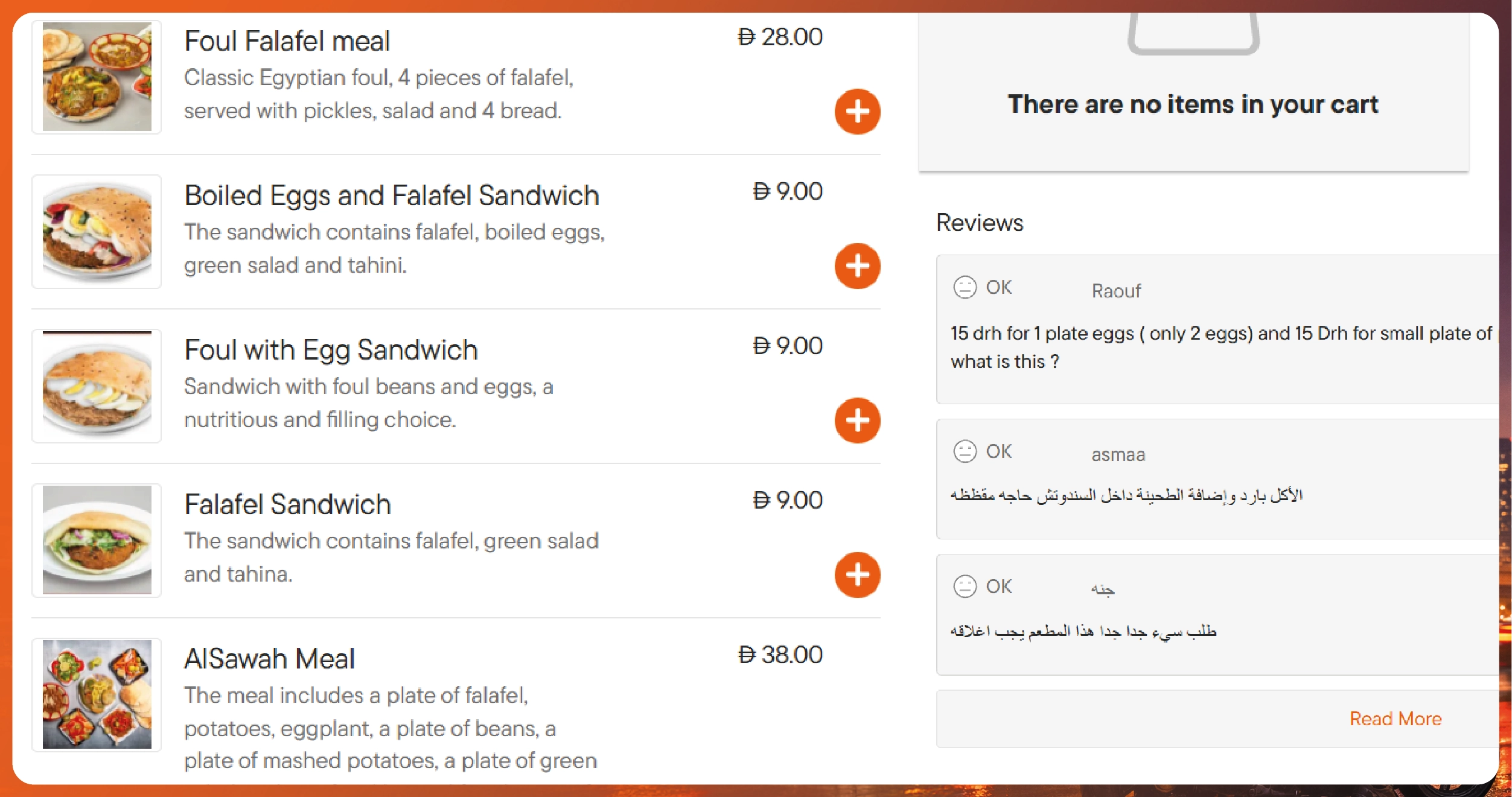

A Technical Guide to Extracting Food & Restaurant Data