Walgreens is a cornerstone of the American pharmacy landscape, and its website offers more than health essentials: it is also a rich source of data. For professionals studying online retail or tracking how consumer healthcare habits change, web scraping Walgreens is a valuable strategy.

Web scraping, the automated extraction of information from websites, makes it practical to collect healthcare data from online marketplaces like Walgreens. Automating this collection opens the way to deeper analysis and new solutions.

This guide focuses on extracting children's and babies' healthcare products from Walgreens using the renowned Python library Beautiful Soup.

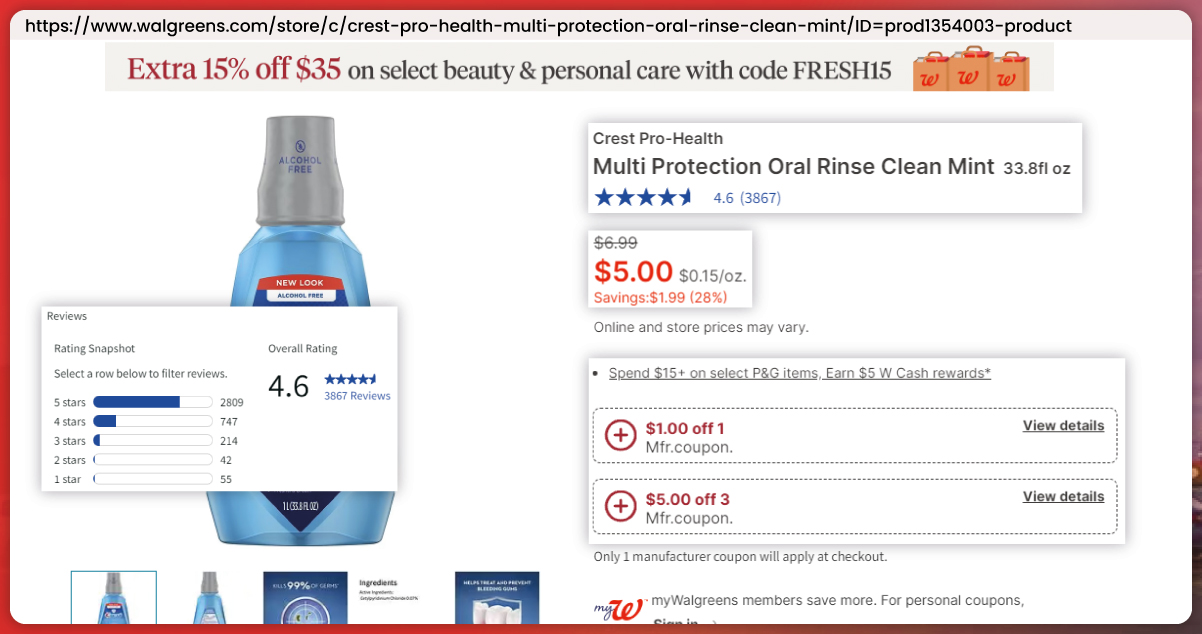

Our goal? To capture key details such as product name, brand, price, ratings, review count, size, and stock availability. We'll also extract offers, descriptions, and specifications, and check for any listed warnings or ingredients. From setting up the scraping environment to writing precise extraction code, this guide shows how Beautiful Soup handles healthcare data retrieval.

In this guide, we'll delve into extracting key attributes from Walgreens' product pages, including:

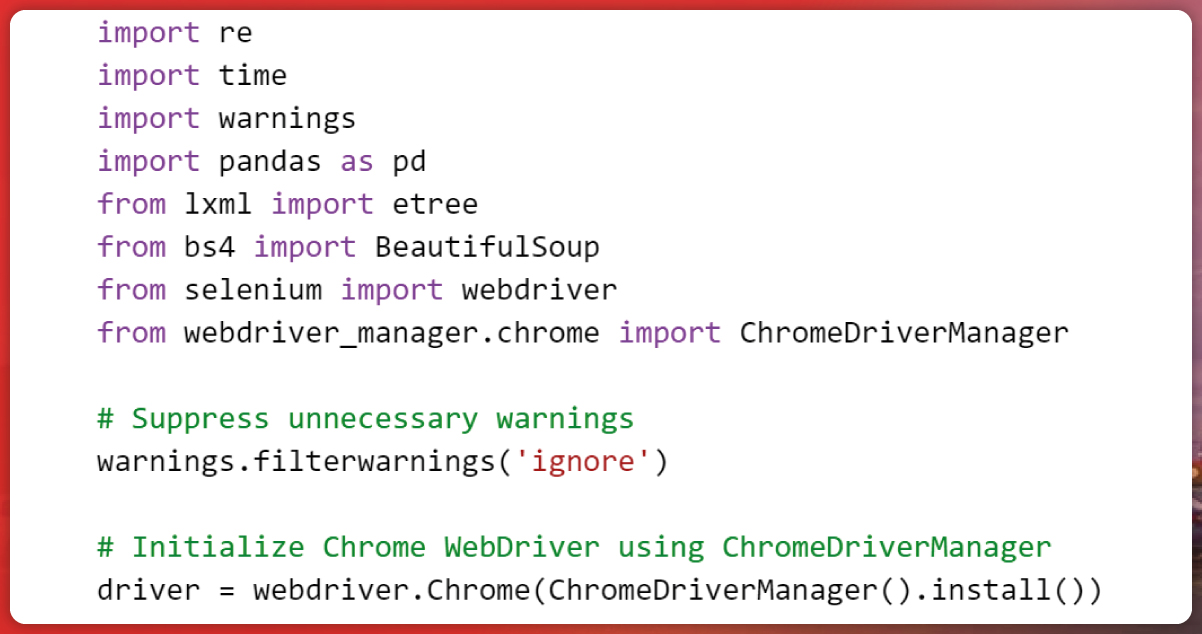

To get started, import the necessary libraries: Beautiful Soup for parsing HTML, lxml's etree for XPath queries, a Selenium web driver for loading pages, time for adding delays, and pandas for exporting the results.

With these libraries imported and the web driver configured, you're ready to scrape Children & Baby's Health Care data from the Walgreens website using Beautiful Soup.

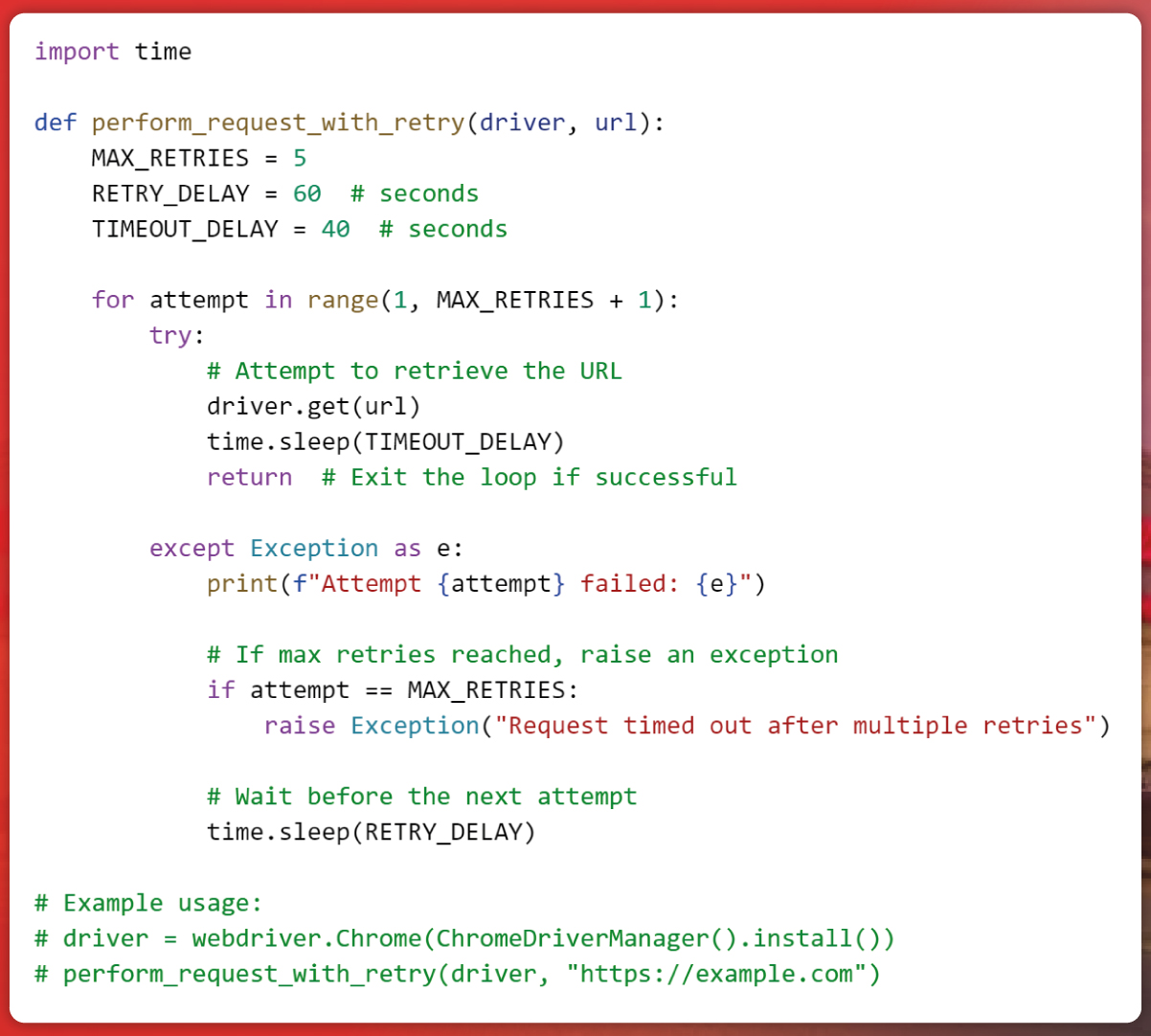

A "request retry with a maximum retry limit" is an important safeguard in web scraping. It lets the scraper keep trying after timeouts or brief network failures, while the retry cap stops it from looping forever on a page that will never load. The result is more consistent, reliable data collection.

The perform_request_with_retry function makes the scraper robust to request failures. It takes two parameters: driver, the active web driver instance, and url, the page to load.

Here's a breakdown of its functionality:

Initialization: A counter named retry_count starts at 0 to monitor retry attempts.

Retries Loop: Inside a loop set to a maximum of 5 retries, the function tries to fetch the webpage using driver.get(url).

Success Path: If the request succeeds (no exception is raised), the function pauses for 40 seconds to let the page load, then exits the loop.

Error Handling: If any exception arises during the request (indicating potential issues like timeouts), the except block is triggered.

The retry_count increments by 1, marking an unsuccessful attempt.

If retry_count reaches 5 (MAX_RETRIES), an exception is raised to prevent infinite retries.

If not at the max limit, the function waits for 60 seconds before the next attempt, allowing time for transient issues to potentially resolve.

This structured approach ensures that web scraping processes remain resilient, adapting to occasional hiccups in web page access.
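The steps above can be sketched as follows. This is a minimal version under a few assumptions: driver is taken to be a Selenium WebDriver (any object with a get(url) method works), and the load_wait and retry_wait parameters are our own addition so the 40- and 60-second pauses can be tuned or shortened for testing.

```python
import time

MAX_RETRIES = 5  # give up after this many failed attempts


def perform_request_with_retry(driver, url, load_wait=40, retry_wait=60):
    """Load url with driver, retrying on failure up to MAX_RETRIES times.

    load_wait and retry_wait default to the 40 s / 60 s pauses described above.
    """
    retry_count = 0
    while True:
        try:
            driver.get(url)
            time.sleep(load_wait)  # give the page time to finish rendering
            return
        except Exception as exc:  # e.g. timeouts or dropped connections
            retry_count += 1
            if retry_count >= MAX_RETRIES:
                # Stop instead of retrying forever.
                raise RuntimeError(
                    f"Failed to load {url} after {MAX_RETRIES} attempts"
                ) from exc
            time.sleep(retry_wait)  # let transient problems clear before retrying
```

Catching Exception mirrors the broad handling described above. In practice, catching Selenium's WebDriverException (from selenium.common.exceptions) is safer, because genuine bugs in your own code then fail immediately instead of being retried.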

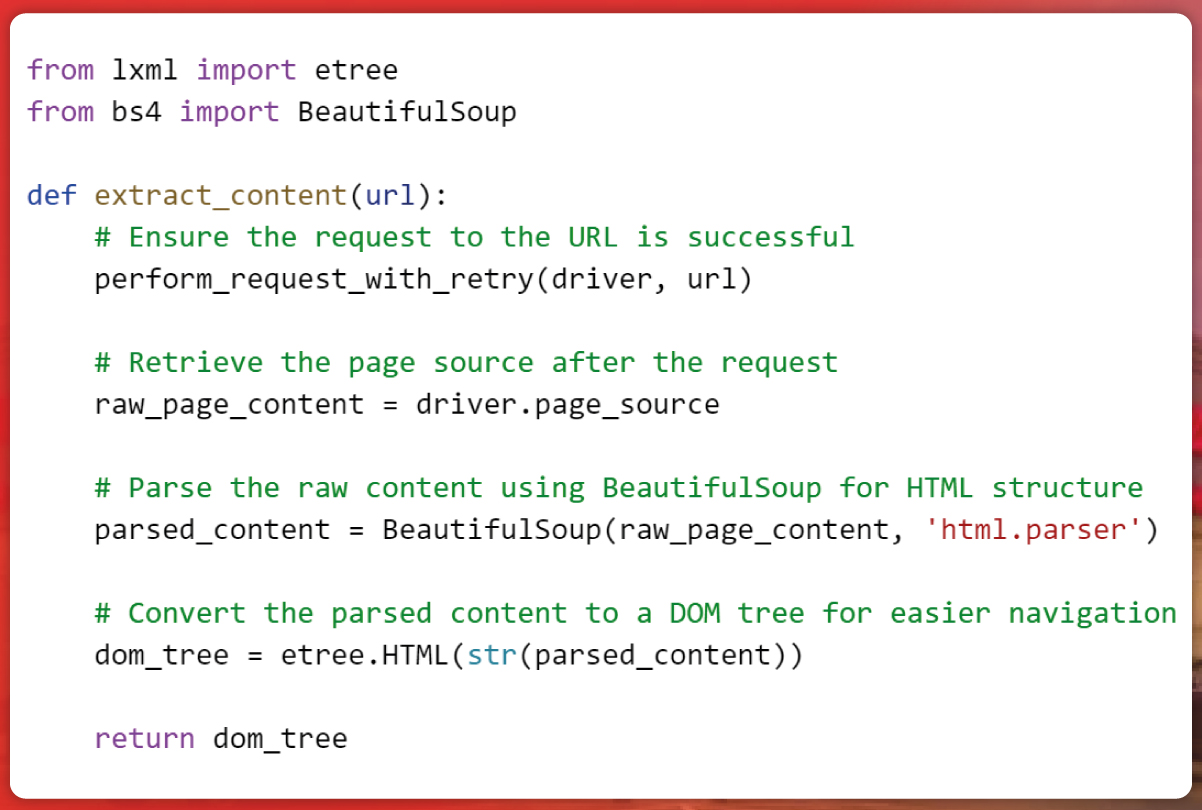

The 'Content Extraction and DOM Parsing' step retrieves each page's HTML and turns it into a structured form. Converting raw markup into a navigable tree is what makes the later extraction and analysis steps possible.

The 'extract_content' function loads the target page through 'perform_request_with_retry', which handles connection problems. Once the page has loaded, it reads the raw HTML from 'driver.page_source' and parses it with Beautiful Soup using 'html.parser'. The parsed result is stored in the 'product_soup' variable.

Next, 'etree.HTML' from lxml converts the Beautiful Soup output into an lxml element tree. This step matters because Beautiful Soup does not support XPath, while the resulting 'dom' object does, so every later function can locate fields on the Walgreens page with XPath queries.
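A minimal sketch of this function, assuming driver is a Selenium WebDriver. To keep the example self-contained it calls driver.get() directly; in the full script that line would be replaced by perform_request_with_retry(driver, url). The Beautiful Soup output is serialized with str() so lxml can re-parse it into an XPath-capable tree.

```python
from bs4 import BeautifulSoup
from lxml import etree


def extract_content(driver, url):
    """Load a page and return an lxml DOM that supports XPath queries."""
    # In the full script, call perform_request_with_retry(driver, url) here instead.
    driver.get(url)
    page_content = driver.page_source  # raw HTML as rendered by the browser
    product_soup = BeautifulSoup(page_content, "html.parser")
    # Beautiful Soup has no XPath engine, so re-parse its output with lxml.
    dom = etree.HTML(str(product_soup))
    return dom
```

If you only need XPath, etree.HTML(driver.page_source) alone would also work; routing through Beautiful Soup first is useful when you want to clean or inspect the markup with Beautiful Soup's API before querying it.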

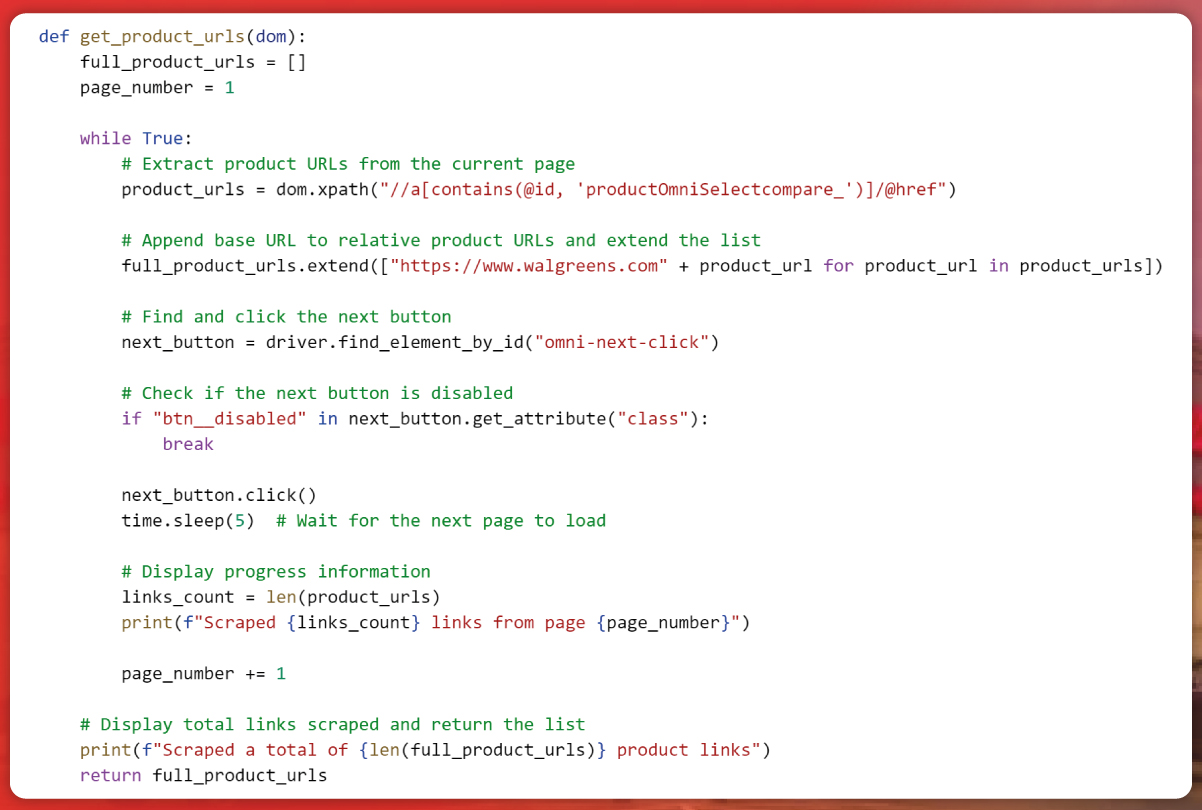

The next step is collecting product URLs from Walgreens, gathering one link for each product in the category's listings.

Because Walgreens spreads its assortment across several pages, the scraper simulates clicking the "next page" button to move from one page to the next and collect every product URL. We'll then visit each link to extract product details and build a complete view of the Children & Baby's Health Care category.

The function get_product_urls receives a parsed DOM object (dom) that represents the structure of a web page. Within a loop, the function uses XPath, a language for querying XML documents, to pull out partial product URLs based on specific attributes from the DOM. To form complete URLs, these partial URLs are combined with the base URL of the Walgreens website.

For pagination, the loop simulates clicking the "next page" button to reach more listings. Before clicking, it checks whether the button is inactive, which signals the last page. After each click, time.sleep() adds a short delay so the new page can load before extraction. When the loop ends, the function prints the total number of product URLs collected.

The URLs are accumulated in the full_product_urls list, which is then returned as the function's output for use in subsequent scraping tasks.
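The pagination loop might look like the sketch below. Several details are assumptions: both XPaths are hypothetical placeholders (inspect the live listing page for the real ones), the 5-second page_delay and the max_pages cap are our own choices, and unlike the version described above this one takes the driver rather than a dom, because clicking requires the driver; it re-parses driver.page_source with etree.HTML on every page.

```python
import time
from urllib.parse import urljoin

from lxml import etree

BASE_URL = "https://www.walgreens.com"
# Hypothetical selectors: replace them with the ones on the live page.
PRODUCT_LINK_XPATH = '//div[@class="card__product"]//a/@href'
NEXT_BUTTON_XPATH = '//button[@aria-label="next page"]'


def extract_product_urls(dom, base_url=BASE_URL):
    """Turn the partial product links on one listing page into full URLs."""
    return [urljoin(base_url, href) for href in dom.xpath(PRODUCT_LINK_XPATH)]


def get_product_urls(driver, page_delay=5, max_pages=100):
    """Collect product URLs from every listing page by clicking "next"."""
    full_product_urls = []
    for _ in range(max_pages):  # hard cap in case the button never becomes inactive
        dom = etree.HTML(driver.page_source)
        full_product_urls.extend(extract_product_urls(dom))
        # "xpath" is the string value of selenium's By.XPATH.
        next_buttons = driver.find_elements("xpath", NEXT_BUTTON_XPATH)
        # No button, or a disabled one, means this is the last page.
        if not next_buttons or not next_buttons[0].is_enabled():
            break
        next_buttons[0].click()
        time.sleep(page_delay)  # let the next page load before parsing it
    print(f"Collected {len(full_product_urls)} product URLs")
    return full_product_urls
```

urljoin is used instead of plain string concatenation so that links which are already absolute, or which lack a leading slash, still produce valid URLs.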

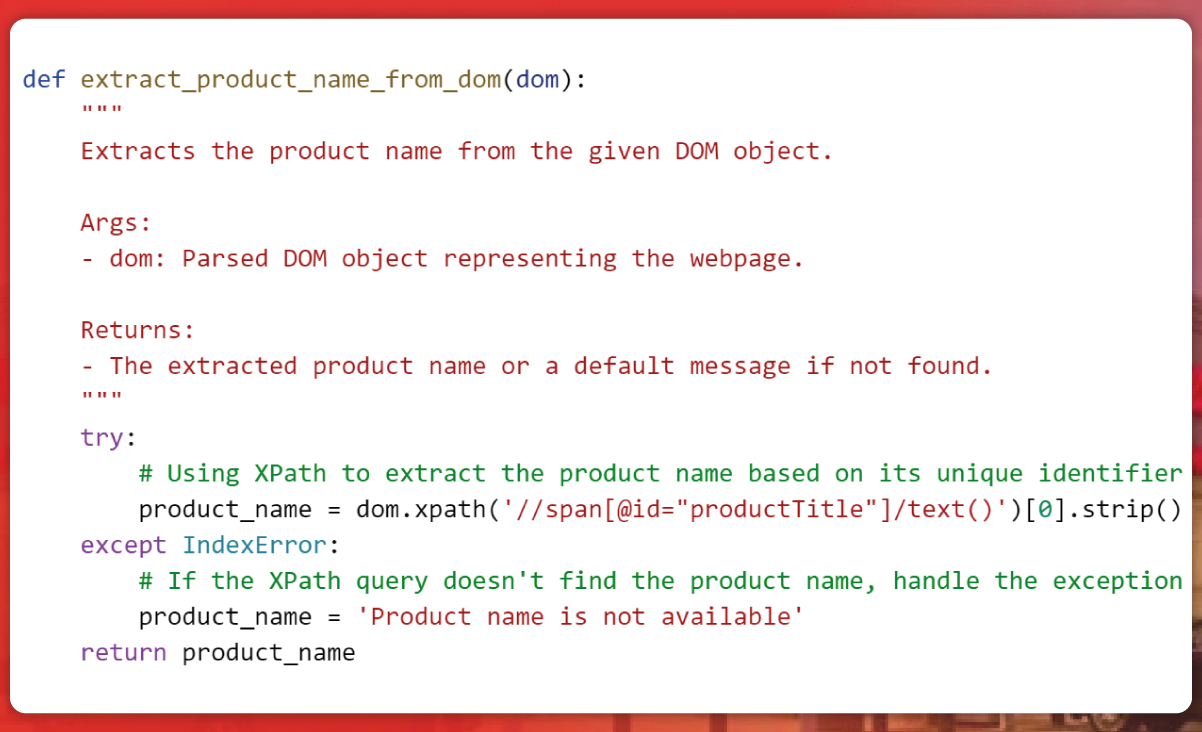

Next, we extract product names, the most basic piece of information identifying each product on offer.

The function, named get_product_name, accepts a parameter dom, which is the parsed DOM of the webpage. Within the function, there's a try-except structure—the try block attempts to locate the HTML element containing the product name using an XPath query. If successful, the product name is stored in the product_name variable.

However, if there's an issue with the XPath query or the extraction fails, the code within the except block is triggered. In such scenarios, it sets the product_name variable to the default value, 'Product name is unavailable.' Ultimately, the function returns the successfully extracted product name or the default value if the extraction process encounters an error.
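Here is a minimal sketch of the try-except pattern just described, using lxml. The XPath is a hypothetical placeholder, since the real Walgreens selector appears only in the screenshot. Instead of a bare except, it catches the two failures that can actually occur: IndexError when the query matches nothing, and lxml's XPathError when the expression itself is invalid.

```python
from lxml import etree

# Hypothetical selector: inspect a live product page and replace it.
PRODUCT_NAME_XPATH = '//h1[@id="productTitle"]/text()'


def get_product_name(dom):
    """Return the product name, or a placeholder when it cannot be found."""
    try:
        # xpath() returns a list; [0] raises IndexError when nothing matches.
        product_name = dom.xpath(PRODUCT_NAME_XPATH)[0].strip()
    except (IndexError, etree.XPathError):
        product_name = "Product name is unavailable"
    return product_name
```

Narrow exception handling keeps the default value for missing data while still surfacing unrelated bugs, such as passing None instead of a parsed page.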

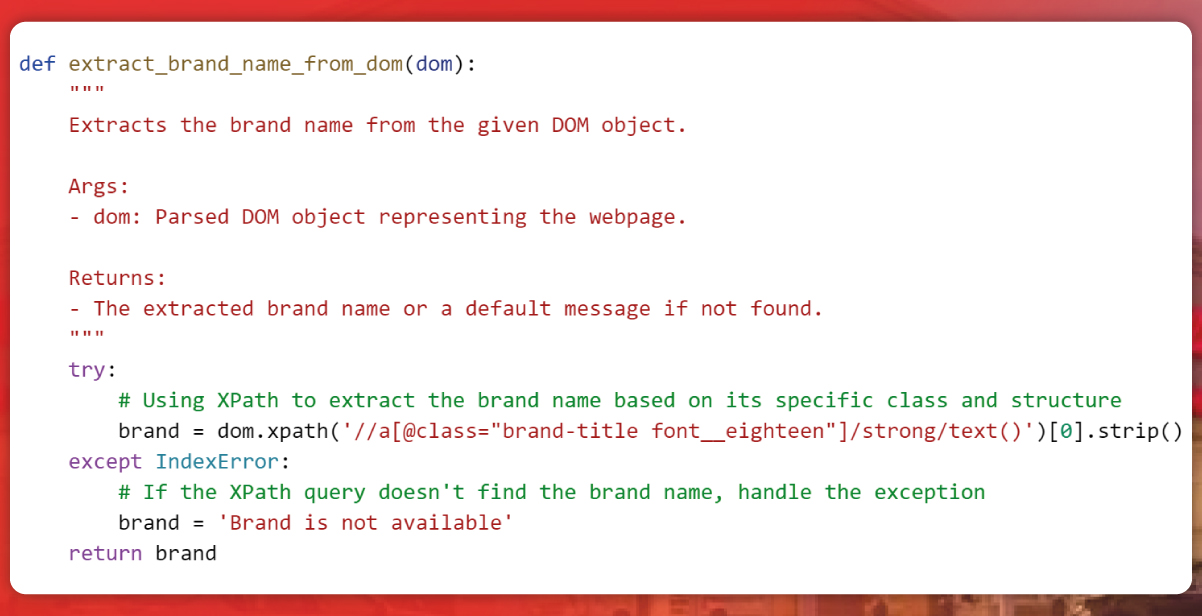

Brand names help gauge product quality and trust. Brand data scraped from Walgreens shows which brands lead the Children & Baby's Health Care segment, which supports competitor analysis and pricing decisions.

The get_brand function accepts a parameter dom, the parsed Document Object Model of a webpage. Inside a try-except block, it uses an XPath query to find the HTML element whose class attribute is "brand-title font__eighteen" and retrieves the text of the strong tag inside it, which holds the brand name.

If successful, the brand name is stored in the brand variable. However, any challenges in executing the XPath query or during data extraction trigger the except block, which defaults the brand variable to 'Brand is not available.'
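A sketch of get_brand using the class string given above. One caveat worth knowing: @class="..." is an exact string comparison, so it stops matching if the site adds a class or reorders the two names. The second XPath below is an alternative we added that matches the brand-title class as a whole token instead.

```python
from lxml import etree

# Exact match on the class attribute named in the text above.
BRAND_XPATH = '//*[@class="brand-title font__eighteen"]//strong/text()'
# Token match: still works if other classes are added or the order changes.
BRAND_XPATH_TOKEN = (
    '//*[contains(concat(" ", normalize-space(@class), " "), " brand-title ")]'
    '//strong/text()'
)


def get_brand(dom, xpath=BRAND_XPATH):
    """Return the brand shown in the <strong> tag, or a placeholder."""
    try:
        brand = dom.xpath(xpath)[0].strip()
    except (IndexError, etree.XPathError):
        brand = "Brand is not available"
    return brand
```

The padded concat(" ", ..., " ") trick keeps the token match from accidentally hitting longer class names such as brand-title-large.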

Other attributes, including Number of Reviews, Ratings, Price, Unit Price, Offer, Stock Status, Description, Warnings, and Ingredients, can be extracted the same way, giving a complete picture for decisions such as product pricing.

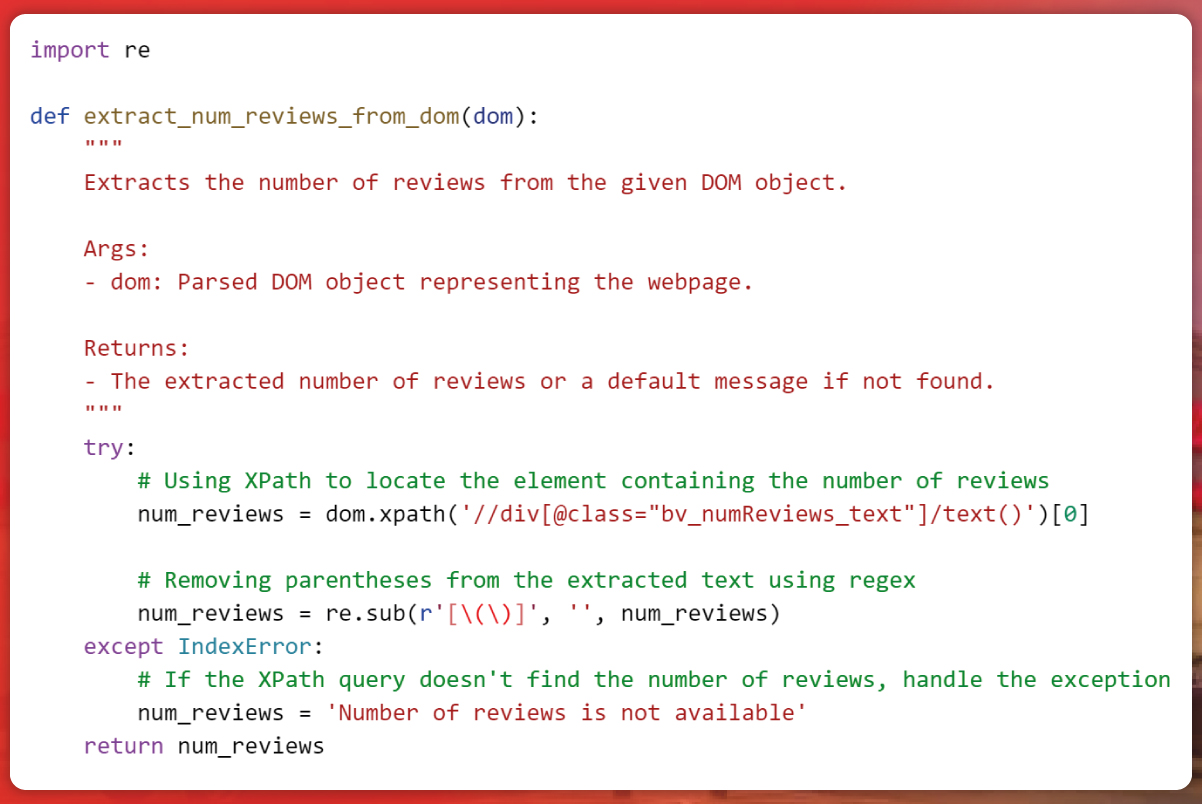

Customer feedback is a strong signal: review counts show how popular a product is and how satisfied buyers are, particularly in the Children & Baby's Health Care category. Understanding these numbers gives a clearer view of customer preferences.

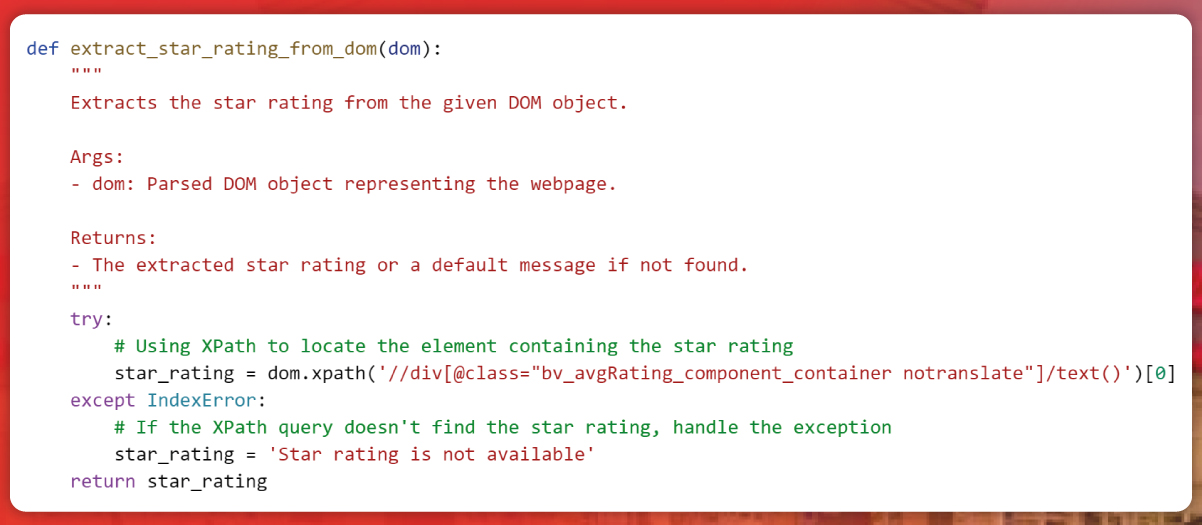

Product ratings steer shoppers toward top-rated, trustworthy products. A star rating offers a quick summary of customer satisfaction and product quality.
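Ratings and review counts usually arrive as display text, so it helps to turn them into numbers. The sketch below uses the function names get_star_rating and get_num_reviews from the main() description later in this guide, but the selectors and text formats ("4.6 out of 5", "(1,284 reviews)") are hypothetical. Returning None for a missing value, rather than a text placeholder, is our choice so the CSV column stays numeric and sortable.

```python
import re

from lxml import etree

# Hypothetical selectors and text formats; check them against a live product page.
RATING_XPATH = 'normalize-space(//*[@class="review__rating"])'
REVIEWS_XPATH = 'normalize-space(//*[@class="review__count"])'


def get_star_rating(dom):
    """Parse text such as '4.6 out of 5' into 4.6; None if no number is found."""
    match = re.search(r"\d+(?:\.\d+)?", dom.xpath(RATING_XPATH))
    return float(match.group()) if match else None


def get_num_reviews(dom):
    """Parse text such as '(1,284 reviews)' into 1284; None if absent."""
    match = re.search(r"\d[\d,]*", dom.xpath(REVIEWS_XPATH))
    return int(match.group().replace(",", "")) if match else None
```

Wrapping the XPath in normalize-space() makes lxml return a plain string (empty when nothing matches), so no try-except is needed here.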

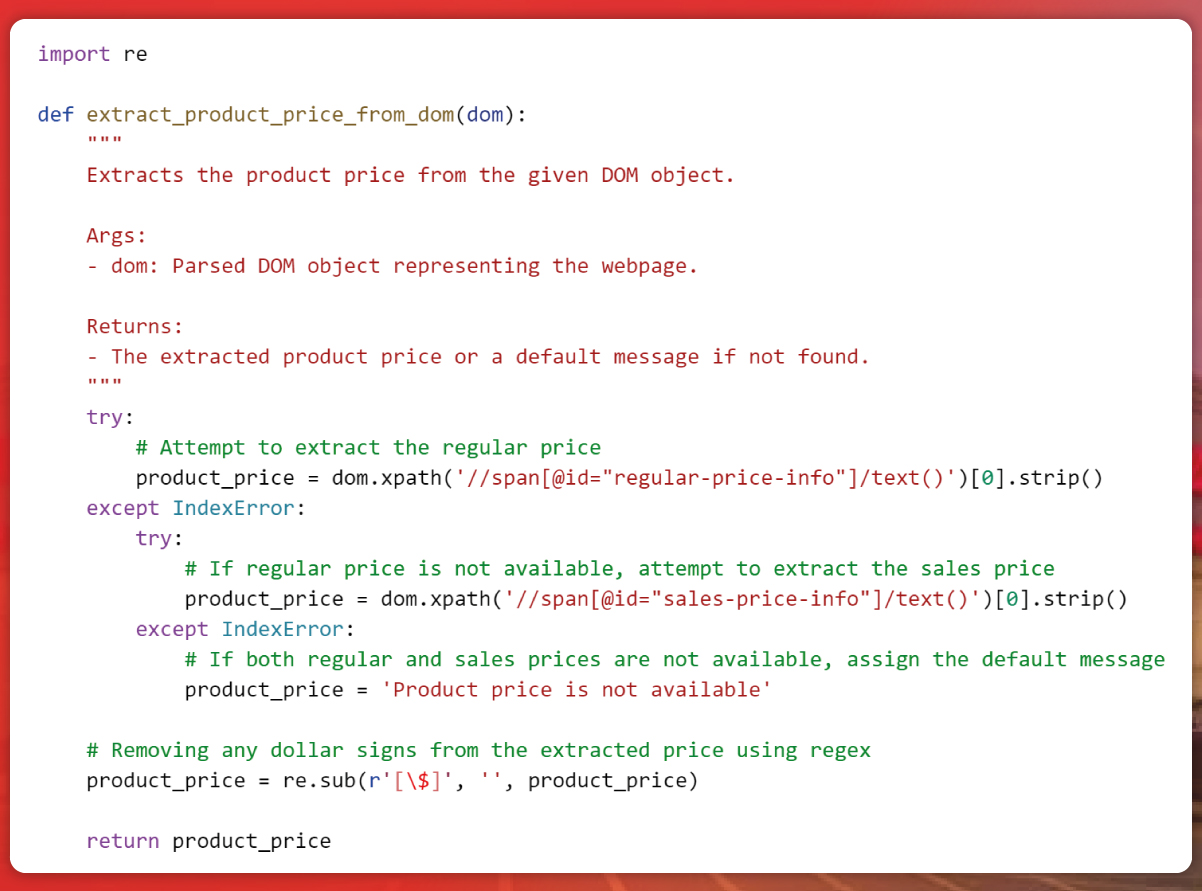

Extracting prices lets us track discounts and deals and spot potential savings.

In this function, the initial step is to retrieve the product price from the "regular-price-info" element. If unsuccessful, it then enters a nested try-except block to fetch the price from the "sales-price-info" element. If both extraction attempts falter, the function defaults the product_price variable to signify that the price information is unavailable.
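The same fallback logic can be written as a loop over candidate XPaths, which behaves like the nested try-except but makes adding a third source a one-line change. Assumptions: the function name get_product_price, the placeholder string, and matching "regular-price-info" and "sales-price-info" as id attributes are all ours; if they are class names on the live page, adjust the XPaths.

```python
from lxml import etree

# Assumption: the two price containers named above are matched by their id.
PRICE_XPATHS = (
    '//*[@id="regular-price-info"]',  # tried first
    '//*[@id="sales-price-info"]',    # fallback
)


def get_product_price(dom):
    """Try the regular price, then the sale price, then return a placeholder."""
    for xpath in PRICE_XPATHS:
        elements = dom.xpath(xpath)
        if elements:
            # Join all text inside the element and collapse runs of whitespace.
            text = " ".join("".join(elements[0].itertext()).split())
            if text:
                return text
    return "Price is not available"
```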

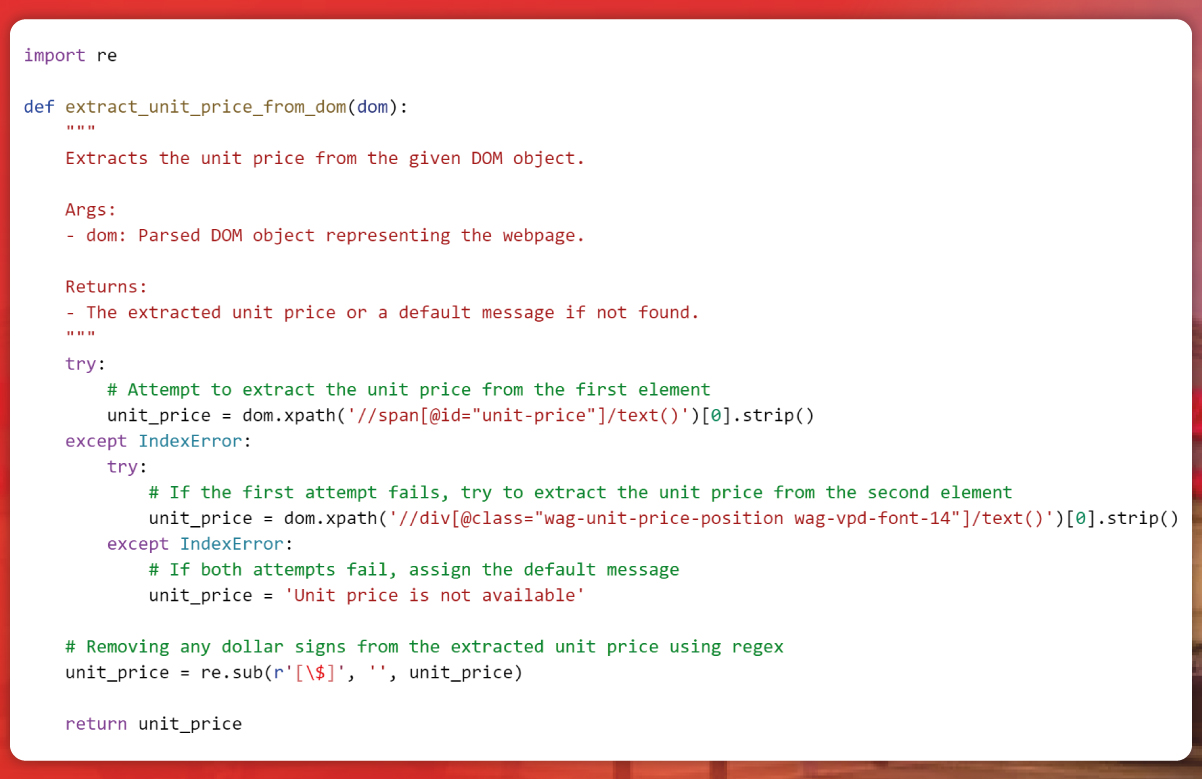

Unit prices help shoppers compare value across package sizes and find the most cost-effective option.

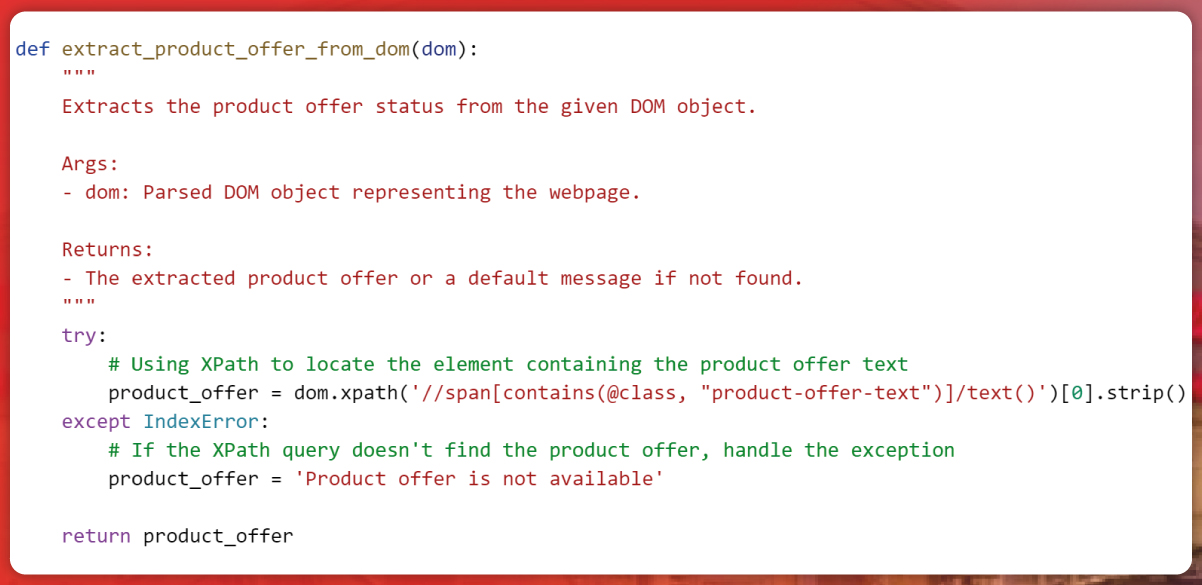

Extracting offers reveals the current discounts, promotions, and time-sensitive deals.

Knowing a product's exact weight, dimensions, size, or quantity helps ensure it matches our needs and preferences.

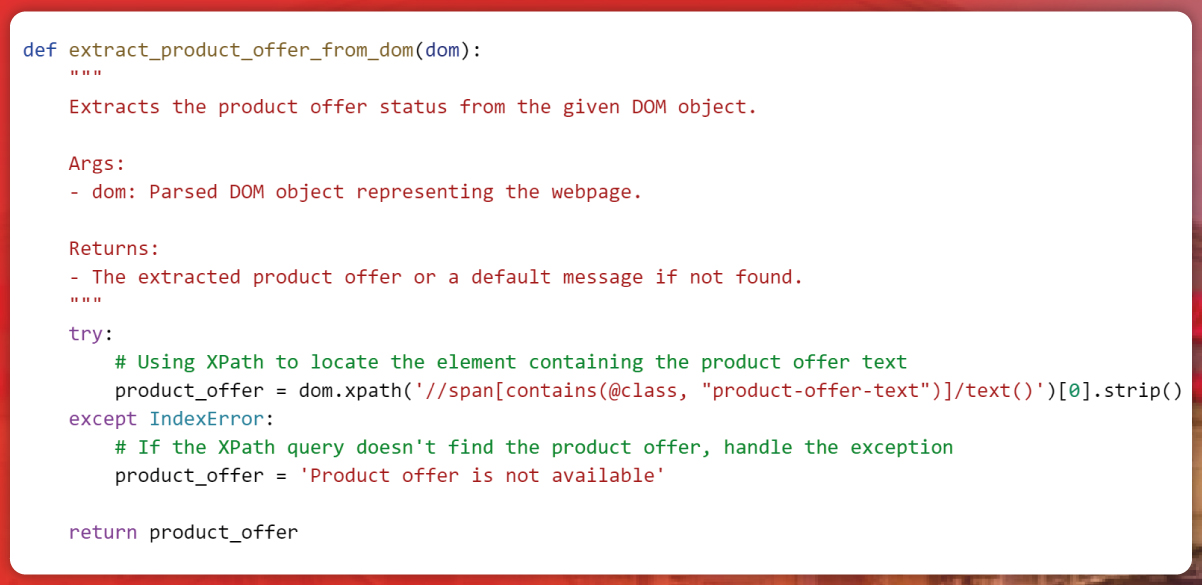

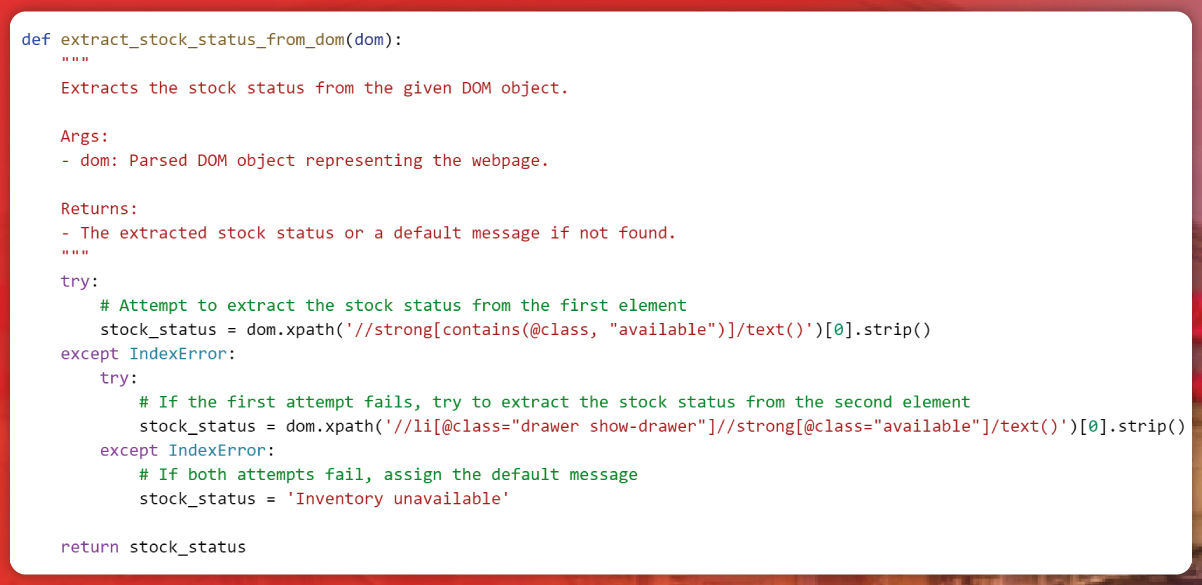

Stock status tells us whether an item is currently available to buy.
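Stock status can often be read from the presence or absence of a notice rather than from a dedicated field. The sketch below assumes, hypothetically, that unavailable products show an "out of stock" message; the function name get_stock_status is also ours. XPath 1.0 has no lower-case() function, so translate() maps the relevant capital letters to make the match case-insensitive.

```python
from lxml import etree

# Hypothetical marker: assumes unavailable items show an "out of stock" notice.
OUT_OF_STOCK_XPATH = (
    '//*[contains(translate(text(), "OUTFSCK", "outfsck"), "out of stock")]'
)


def get_stock_status(dom):
    """Return 'Out of stock' if the notice is present, else 'In stock'."""
    # boolean(...) makes lxml return a Python bool instead of a node list.
    out_of_stock = dom.xpath(f"boolean({OUT_OF_STOCK_XPATH})")
    return "Out of stock" if out_of_stock else "In stock"
```

One trade-off of a presence check: if the page failed to load, the notice is also missing and the item is reported as in stock, so it is worth pairing this with a check that the product name was found.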

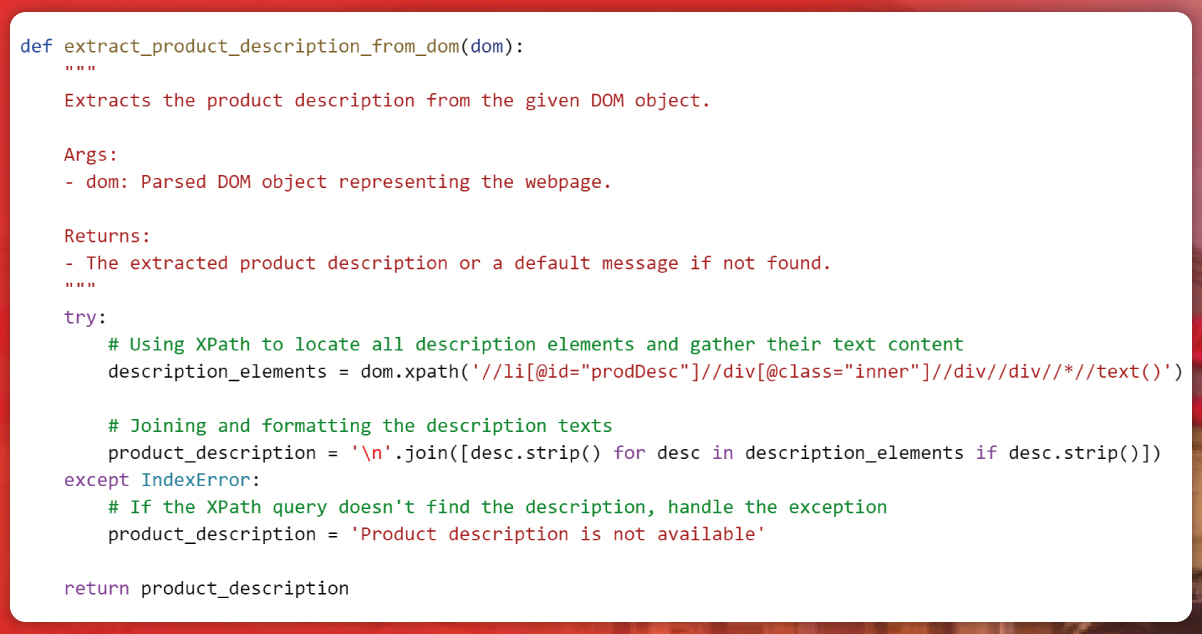

Uncovering descriptions reveals the core essence of products, providing valuable insights that empower informed decisions.

In this code segment, product_description is built from the list of text nodes returned by the XPath expression. A list comprehension strips leading and trailing whitespace from each text node and drops any empty strings. The cleaned pieces are then joined with newline characters ('\n') into a single, readable product description.
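The list-comprehension step just described can be sketched like this. The container XPath and the function name get_product_description are hypothetical; only the strip, filter, and newline-join logic comes from the description above.

```python
from lxml import etree

# Hypothetical container for the description; replace with the real selector.
DESCRIPTION_XPATH = '//*[@id="prodDesc"]//text()'


def get_product_description(dom):
    """Join the non-empty, stripped text nodes of the description with newlines."""
    text_nodes = dom.xpath(DESCRIPTION_XPATH)
    # Strip each node and drop the ones that were only whitespace.
    lines = [text.strip() for text in text_nodes if text.strip()]
    return "\n".join(lines)
```

Using //text() rather than /text() collects text from nested tags such as list items and bold phrases, which is why the empty-string filter is needed.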

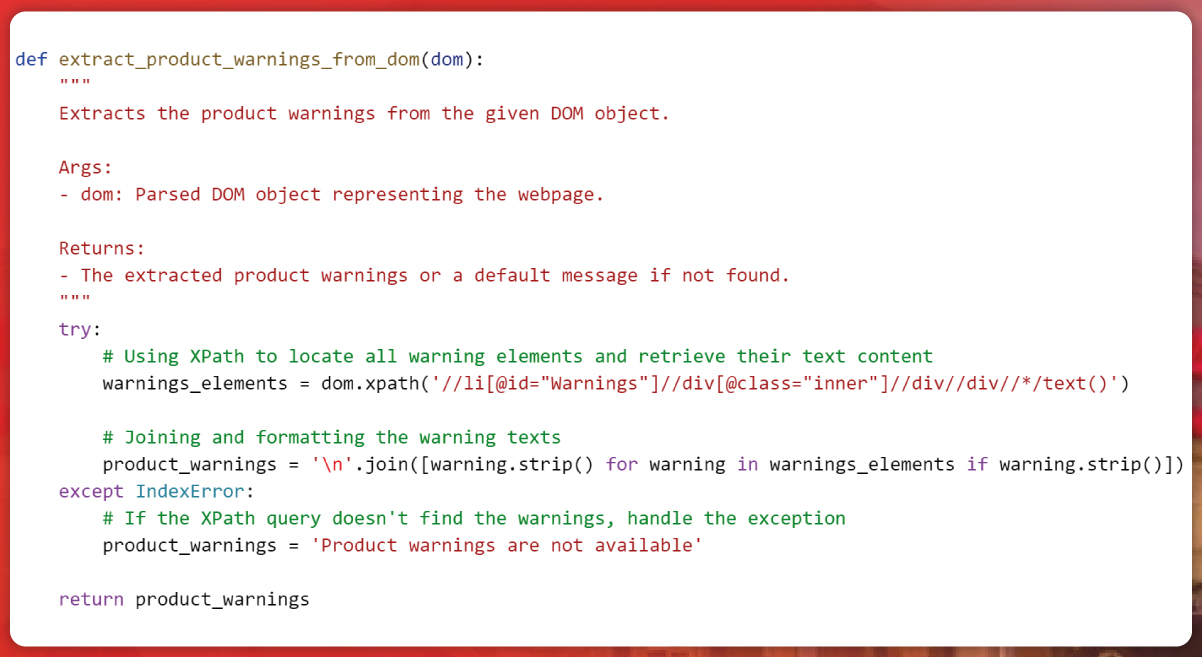

Warnings highlight safety information and other considerations consumers should know before buying.

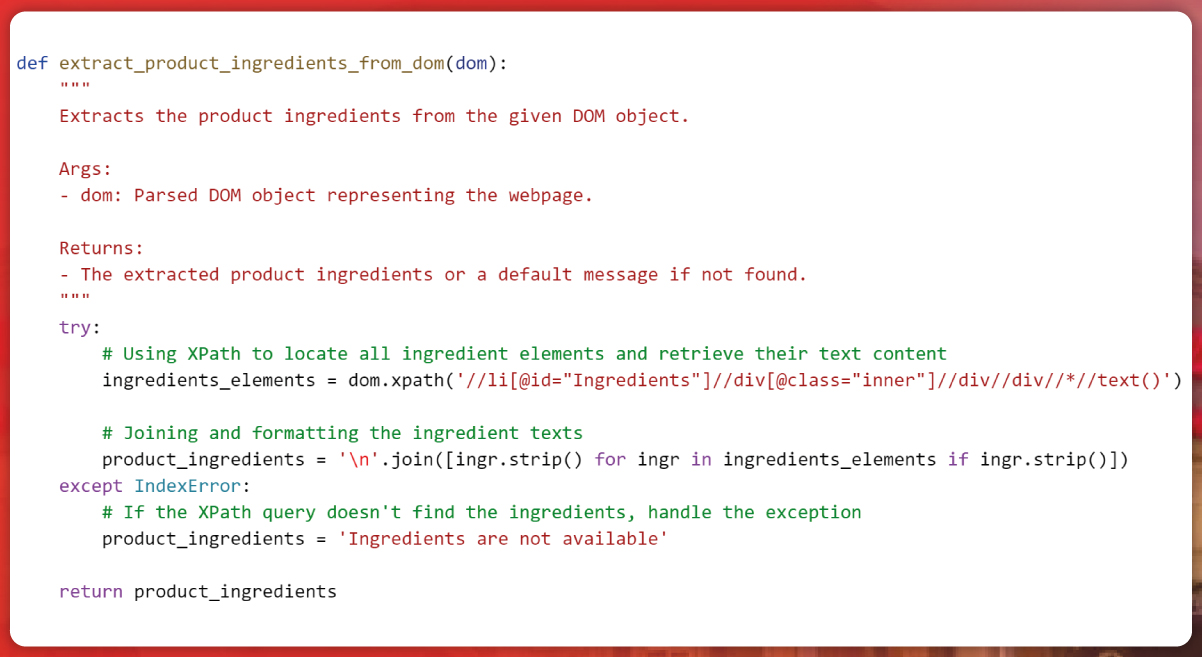

Extracting ingredients reveals a product's formulation and potential benefits, supporting informed decisions.

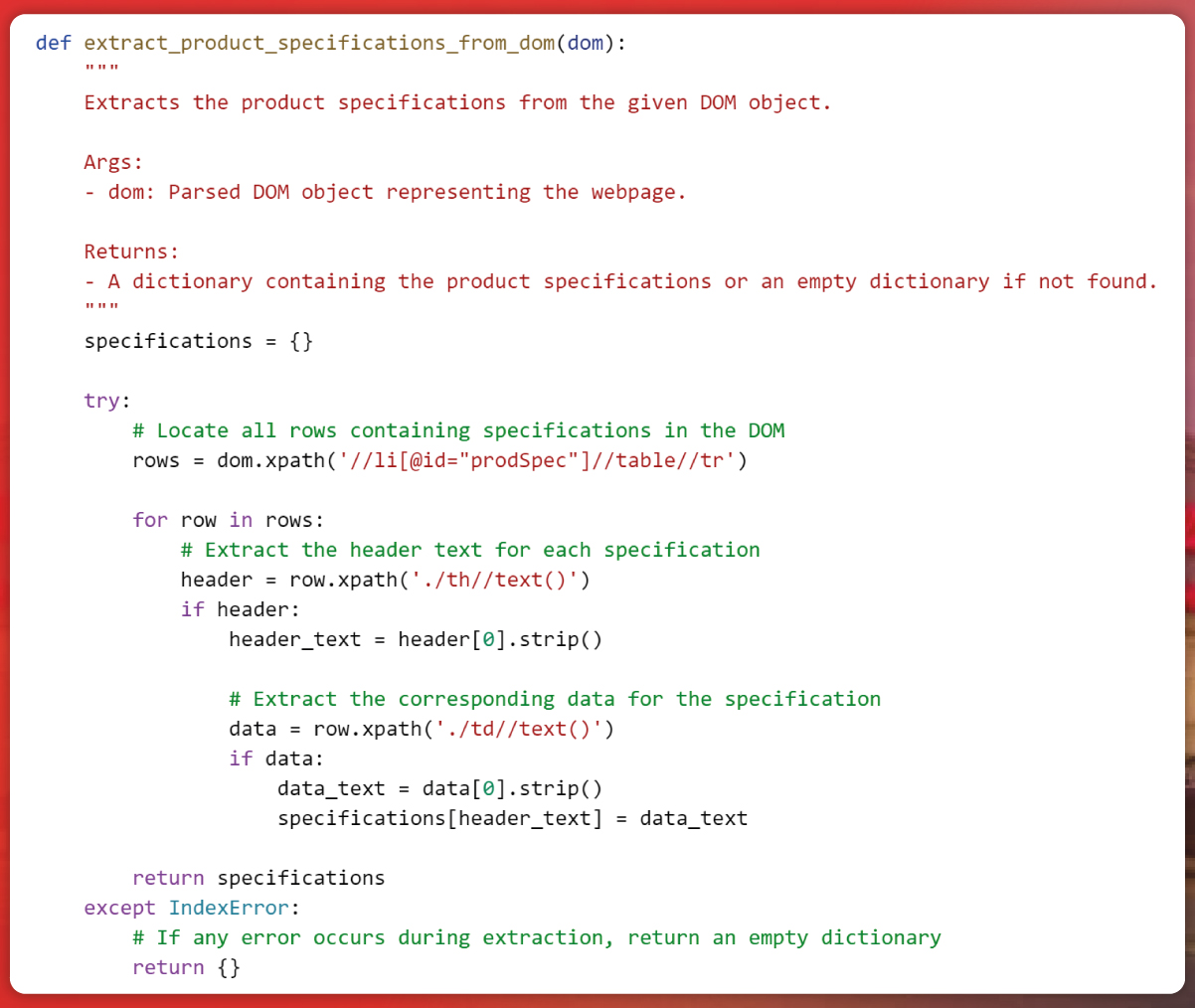

Specifications are the foundation for informed online shopping, presenting a guide to product attributes aligned with our preferences. These details, encompassing product type, brand, FSA eligibility, size/count, item code, and UPC, offer a holistic view of each item.

The get_product_specifications function takes dom, the parsed Document Object Model of a webpage. Inside a try block, an XPath query targets HTML elements with the class attribute "prospect". The function walks the table rows in that section, reads the text of each header cell and data cell, and removes redundant whitespace. Each header becomes a key in the specifications dictionary, with the matching data cell as its value. If anything goes wrong during the XPath query or extraction, the except block returns an empty dictionary.
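A sketch of the row-to-dictionary logic, using the "prospect" class named above (verify it on the live page). Assumptions: each specification row is a tr with one th header and one td value. Calling normalize-space() on each cell both extracts the text and collapses redundant whitespace in one step.

```python
from lxml import etree

# Class name taken from the description above; verify it on the live page.
SPEC_ROWS_XPATH = '//*[@class="prospect"]//tr'


def get_product_specifications(dom):
    """Map each specification row's header cell to its data cell."""
    specifications = {}
    try:
        for row in dom.xpath(SPEC_ROWS_XPATH):
            header = row.xpath("normalize-space(./th)")
            value = row.xpath("normalize-space(./td)")
            if header:  # skip rows without a header cell
                specifications[header] = value
    except etree.XPathError:
        return {}  # signal that extraction failed
    return specifications
```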

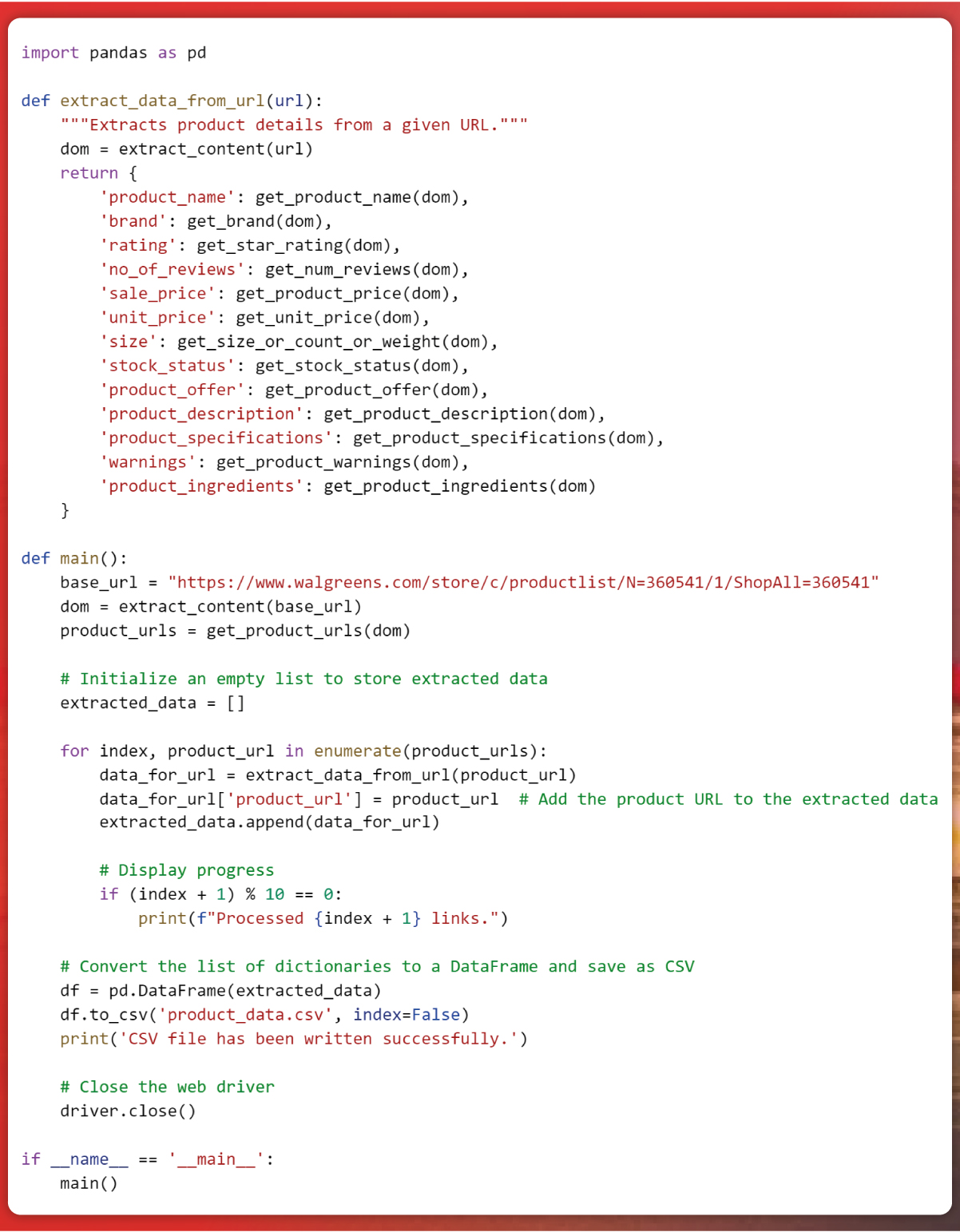

Next, we call these functions, collect the extracted data in a list that starts out empty, and export it to a CSV file.

The main() function drives the whole process of scraping healthcare data from the Walgreens website with Beautiful Soup. It starts by defining the target URL, calls extract_content to capture the page's DOM, and uses get_product_urls to build the list of product URLs.

It then loops over each product URL, calling get_product_name, get_brand, get_star_rating, get_num_reviews, and the other functions to extract the product name, brand, star rating, review count, price, dimensions, availability, description, specifications, warnings, and ingredients. Each product's attributes are assembled into a dictionary and appended to the data list.

Conditional statements inside the loop print progress messages when specific thresholds are reached. Once every URL has been processed, the collected data is converted into a pandas DataFrame and saved as a CSV file named 'product_data.csv'. Finally, the browser session is closed.

The if __name__ == '__main__': guard ensures that main() runs only when the script is executed directly, not when it is imported as a module. Together, these pieces form a complete blueprint for retrieving and structuring product information from Walgreens' website using Beautiful Soup and pandas.
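The core of main() can be condensed into a reusable loop, sketched below. The names scrape_products, fetch_dom, and extractors are hypothetical and introduced only for this example: fetch_dom would be something like lambda url: extract_content(driver, url), and extractors maps each CSV column to one of the get_* functions. The every-10-products progress interval is also our assumption.

```python
import pandas as pd


def scrape_products(product_urls, fetch_dom, extractors,
                    output_path="product_data.csv", report_every=10):
    """Apply every extractor to every product page and save the rows as CSV.

    fetch_dom:  callable(url) -> parsed DOM
    extractors: dict of column name -> callable(dom), e.g. {"brand": get_brand}
    """
    data = []
    for index, url in enumerate(product_urls, start=1):
        dom = fetch_dom(url)
        row = {"product_url": url}
        for column, extractor in extractors.items():
            row[column] = extractor(dom)
        data.append(row)
        if index % report_every == 0:  # periodic progress message
            print(f"Scraped {index} of {len(product_urls)} products")
    product_df = pd.DataFrame(data)
    product_df.to_csv(output_path, index=False)
    return product_df
```

In the real script, calling this from main() inside a try block with driver.quit() in the matching finally clause ensures the browser closes even if a page fails partway through the run.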

Beautiful Soup simplifies scraping even complex websites like Walgreens. By following this guide, you can extract detailed information on Children's and Babies' Health Care products, including key data points like price, and turn Walgreens healthcare data into insights that give you a competitive edge.

However, always scrape ethically and comply with the website's terms and guidelines. Done responsibly, web scraping can uncover significant insights and trends.

Are you interested in expanding your competitive intelligence further? Connect with Actowiz Solutions for top-tier web data extraction services today! You can also reach us for all your mobile app scraping, instant data scraper and web scraping service requirements.

Our web scraping expertise is relied on by 4,000+ global enterprises including Zomato, Tata Consumer, Subway, and Expedia — helping them turn web data into growth.

Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

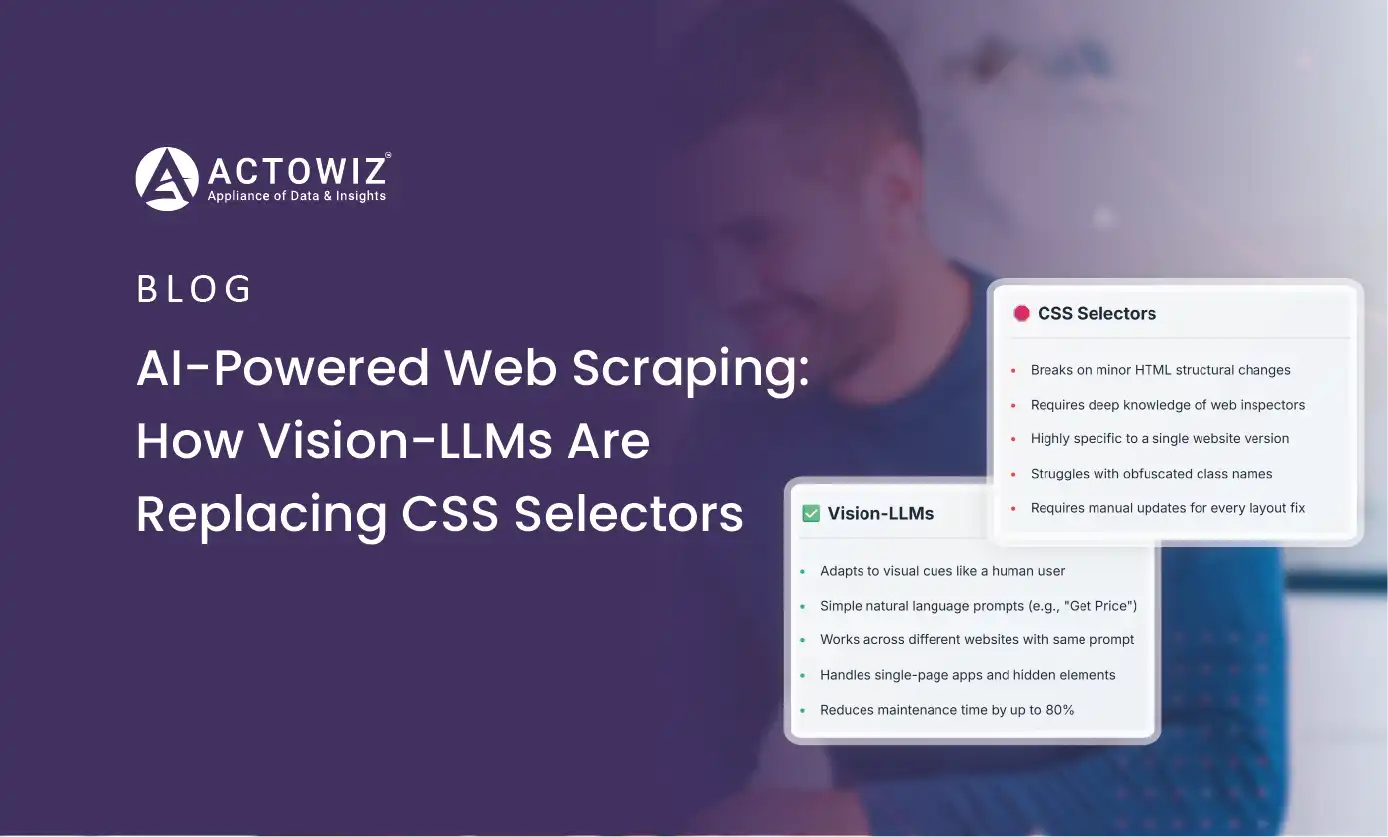

How AI and Vision-LLMs are revolutionizing web scraping in 2026. Self-healing scrapers, visual parsing, and zero-maintenance data extraction explained.

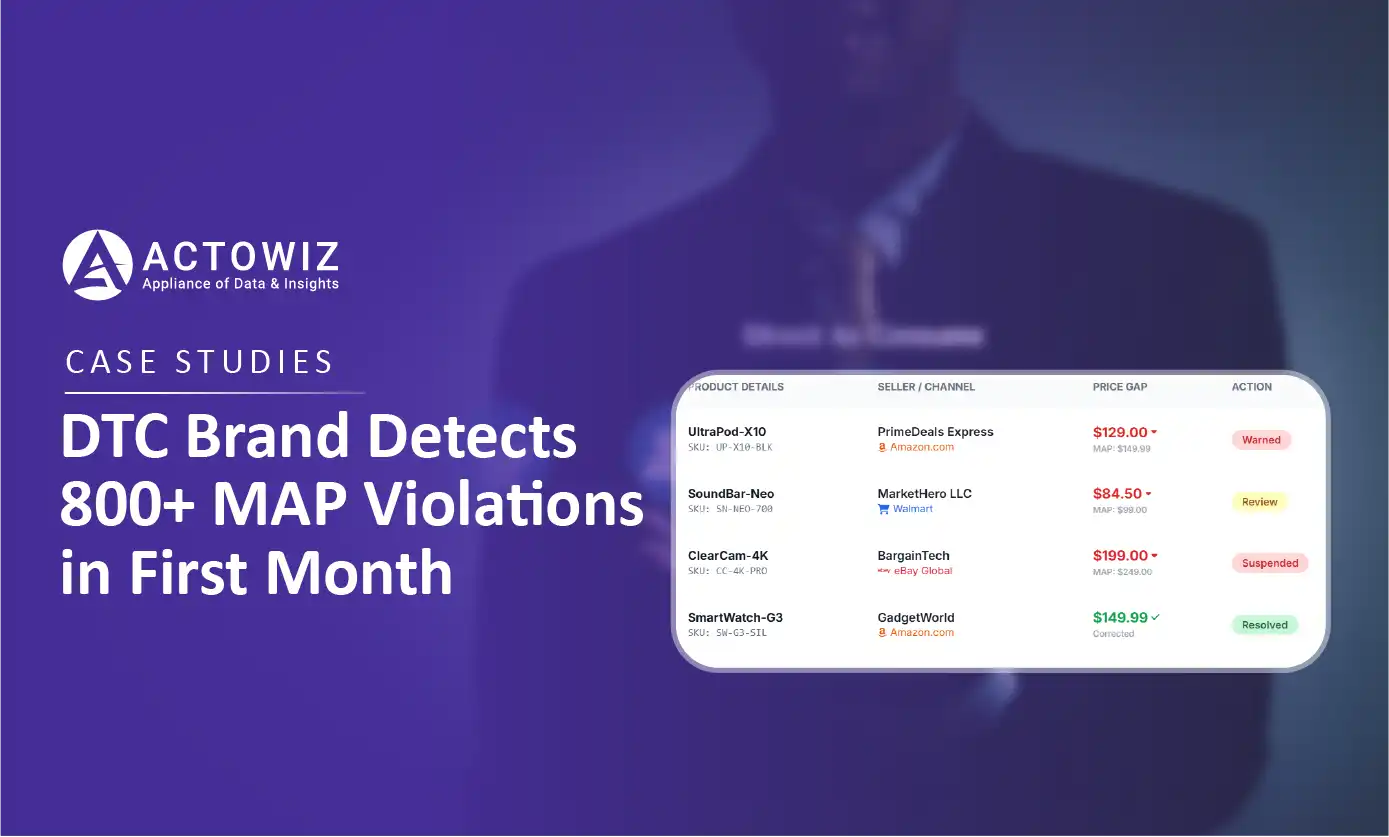

How a $50M+ consumer electronics brand used Actowiz MAP monitoring to detect 800+ violations in 30 days, achieving 92% resolution rate and improving retailer satisfaction by 40%.

Track UK Grocery Products Daily Using Automated Data Scraping across Morrisons, Asda, Tesco, Sainsbury’s, Iceland, Co-op, Waitrose, and Ocado for insights.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.